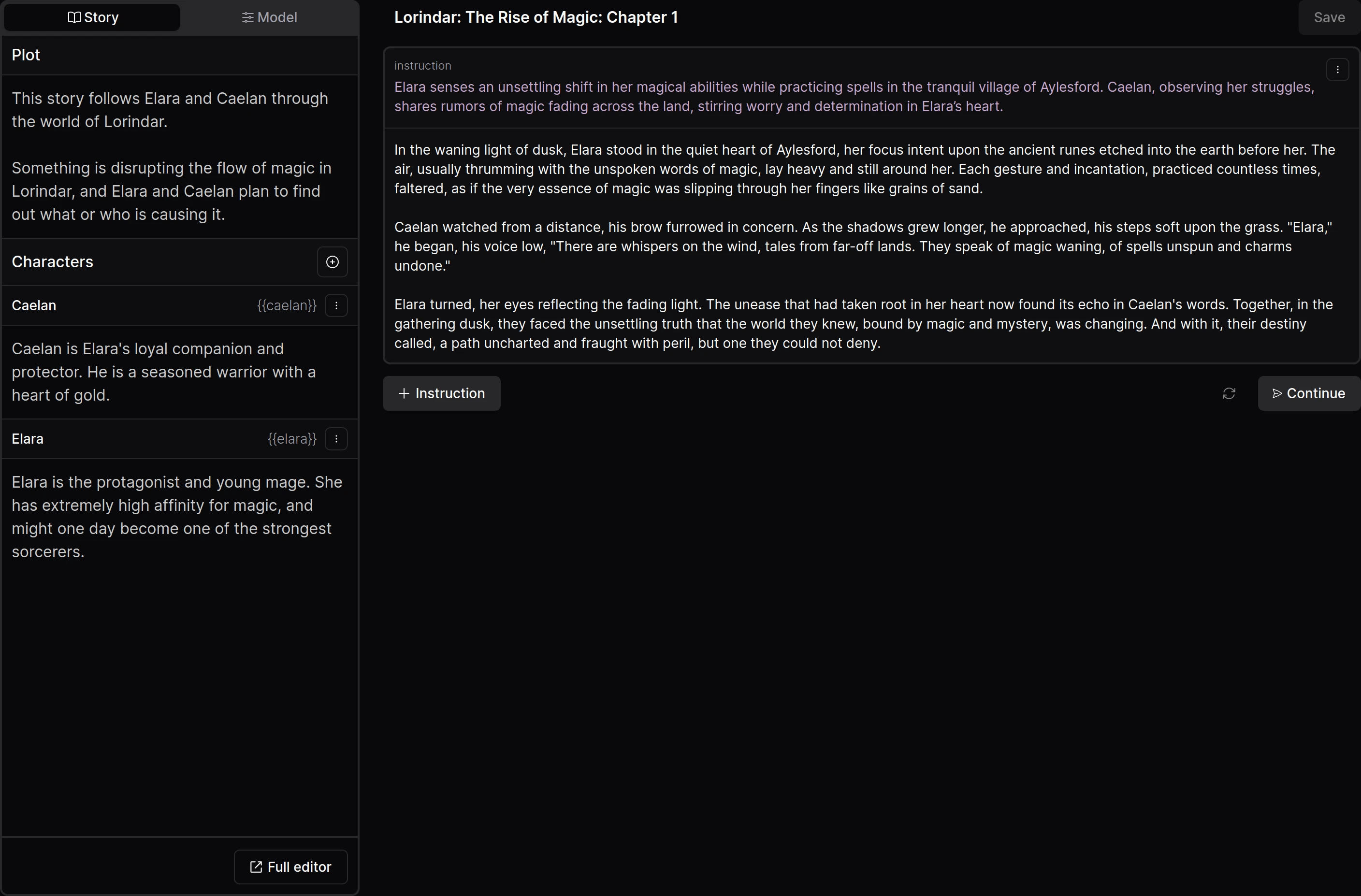

Models for **(steerable) story-writing and role-playing**.

[All Opus V1 models, including quants](https://huggingface.co/collections/dreamgen/opus-v1-65d092a6f8ab7fc669111b31).

## Resources

- [**Opus V1 prompting guide**](https://dreamgen.com/docs/models/opus/v1) with many (interactive) examples and prompts that you can copy.

- [**Google Colab**](https://colab.research.google.com/drive/1J178fH6IdQOXNi-Njgdacf5QgAxsdT20?usp=sharing) for interactive role-play using `opus-v1.2-7b`.

- [Python code](example/prompt/format.py) to format the prompt correctly.

- Join the community on [**Discord**](https://dreamgen.com/discord) to get early access to new models.

Models for **(steerable) story-writing and role-playing**.

Models for **(steerable) story-writing and role-playing**.

Models for **(steerable) story-writing and role-playing**.

Models for **(steerable) story-writing and role-playing**.

## Prompting

## Prompting