BRIA 2.3 ControlNet Depth Model Card

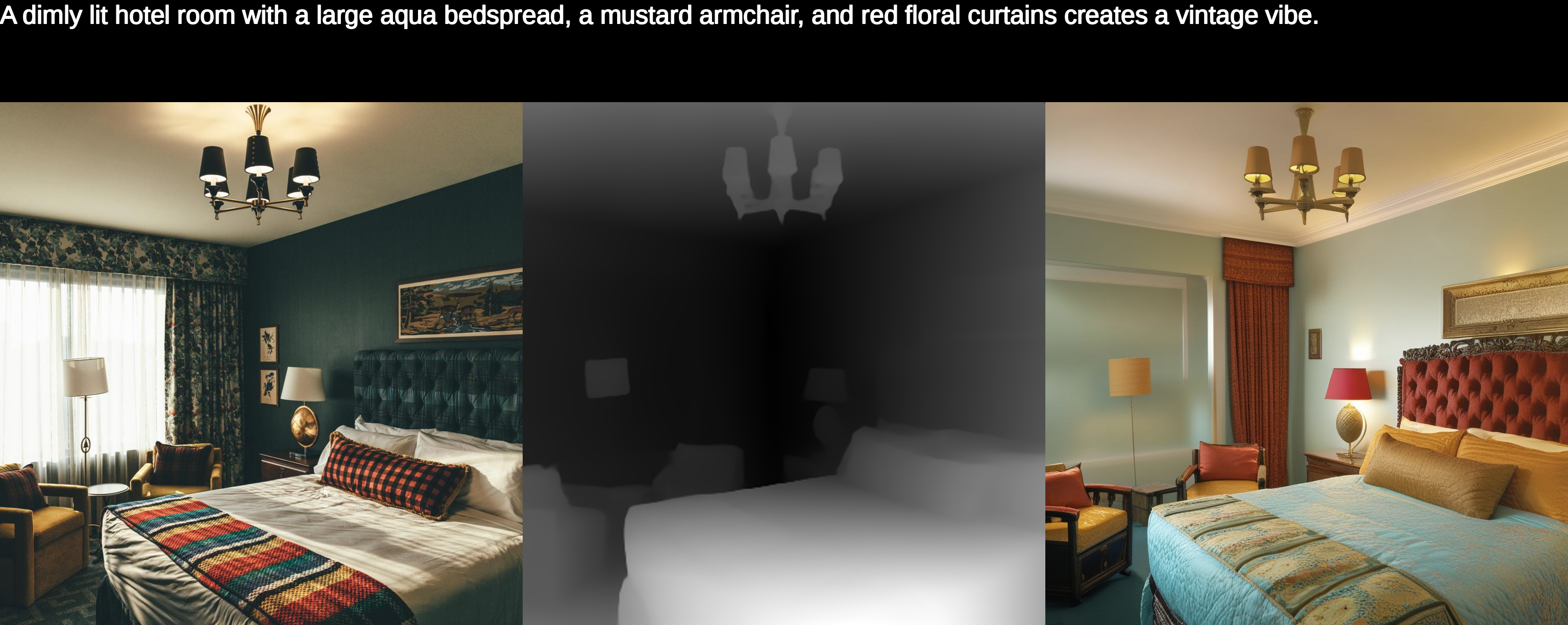

BRIA 2.3 ControlNet-Depth, trained on the foundation of BRIA 2.3 Text-to-Image, enables the generation of high-quality images guided by a textual prompt and the extracted monocular depth estimation from an input image. This allows for the creation of different variations of an image, all sharing the same geometry.

BRIA 2.3 was trained from scratch exclusively on licensed data from our esteemed data partners. Therefore, they are safe for commercial use and provide full legal liability coverage for copyright and privacy infringement, as well as harmful content mitigation. That is, our dataset does not contain copyrighted materials, such as fictional characters, logos, trademarks, public figures, harmful content, or privacy-infringing content.

Join our Discord community for more information, tutorials, tools, and to connect with other users!

Model Description

Developed by: BRIA AI

Model type: ControlNet for Latent diffusion

License: bria-2.3

Model Description: ControlNet Depth for BRIA 2.3 Text-to-Image model. The model generates images guided by text and the monocular depth estimation of the conditioned image.

Resources for more information: BRIA AI

Get Access

BRIA 2.3 ControlNet-Depth requires access to BRIA 2.3 Text-to-Image. For more information, click here.

Code example using Diffusers

pip install diffusers

from diffusers import ControlNetModel, StableDiffusionXLControlNetPipeline

import torch

from transformers import DPTFeatureExtractor, DPTForDepthEstimation

depth_estimator = DPTForDepthEstimation.from_pretrained("Intel/dpt-hybrid-midas").to("cuda")

feature_extractor = DPTFeatureExtractor.from_pretrained("Intel/dpt-hybrid-midas")

def get_depth_map(image):

image = feature_extractor(images=image, return_tensors="pt").pixel_values.to("cuda")

with torch.no_grad(), torch.autocast("cuda"):

depth_map = depth_estimator(image).predicted_depth

image = transforms.functional.center_crop(image, min(image.shape[-2:]))

depth_map = torch.nn.functional.interpolate(

depth_map.unsqueeze(1),

size=(1024, 1024),

mode="bicubic",

align_corners=False,

)

depth_min = torch.amin(depth_map, dim=[1, 2, 3], keepdim=True)

depth_max = torch.amax(depth_map, dim=[1, 2, 3], keepdim=True)

depth_map = (depth_map - depth_min) / (depth_max - depth_min)

image = torch.cat([depth_map] * 3, dim=1)

image = image.permute(0, 2, 3, 1).cpu().numpy()[0]

image = Image.fromarray((image * 255.0).clip(0, 255).astype(np.uint8))

return image

controlnet = ControlNetModel.from_pretrained(

"briaai/BRIA-2.3-ControlNet-Depth",

torch_dtype=torch.float16

)

pipe = StableDiffusionXLControlNetPipeline.from_pretrained(

"briaai/BRIA-2.3",

controlnet=controlnet,

torch_dtype=torch.float16,

)

pipe.to("cuda")

prompt = "A portrait of a Beautiful and playful ethereal singer, golden designs, highly detailed, blurry background"

negative_prompt = "Logo,Watermark,Text,Ugly,Morbid,Extra fingers,Poorly drawn hands,Mutation,Blurry,Extra limbs,Gross proportions,Missing arms,Mutated hands,Long neck,Duplicate,Mutilated,Mutilated hands,Poorly drawn face,Deformed,Bad anatomy,Cloned face,Malformed limbs,Missing legs,Too many fingers"

# Calculate Depth image

input_image = cv2.imread('pics/singer.png')

depth_image = get_depth_map(input_image)

image = pipe(prompt=prompt, negative_prompt=negative_prompt, image=depth_image, controlnet_conditioning_scale=1.0, height=1024, width=1024).images[0]

- Downloads last month

- 115