38161e67e8a70b6f3c70e78bbd546520cdeea07c50814bfff0dcc805ffbc2fa1

- trl_md_files/customization.mdx +0 -216

- trl_md_files/ddpo_trainer.mdx +0 -119

- trl_md_files/detoxifying_a_lm.mdx +0 -191

- trl_md_files/dpo_trainer.mdx +0 -297

- trl_md_files/index.mdx +0 -65

- trl_md_files/installation.mdx +0 -24

- trl_md_files/iterative_sft_trainer.mdx +0 -54

- trl_md_files/judges.mdx +0 -75

- trl_md_files/kto_trainer.mdx +0 -102

- trl_md_files/learning_tools.mdx +0 -232

- trl_md_files/logging.mdx +0 -75

- trl_md_files/lora_tuning_peft.mdx +0 -144

- trl_md_files/models.mdx +0 -28

- trl_md_files/multi_adapter_rl.mdx +0 -100

- trl_md_files/ppo_trainer.mdx +0 -169

- trl_md_files/quickstart.mdx +0 -88

- trl_md_files/reward_trainer.mdx +0 -96

- trl_md_files/sentiment_tuning.mdx +0 -130

- trl_md_files/sft_trainer.mdx +0 -752

- trl_md_files/trainer.mdx +0 -70

- trl_md_files/using_llama_models.mdx +0 -160

trl_md_files/customization.mdx

DELETED

|

@@ -1,216 +0,0 @@

|

|

| 1 |

-

# Training customization

|

| 2 |

-

|

| 3 |

-

TRL is designed with modularity in mind so that users can efficiently customize the training loop for their needs. Below are some examples of how you can apply and test different techniques.

|

| 4 |

-

|

| 5 |

-

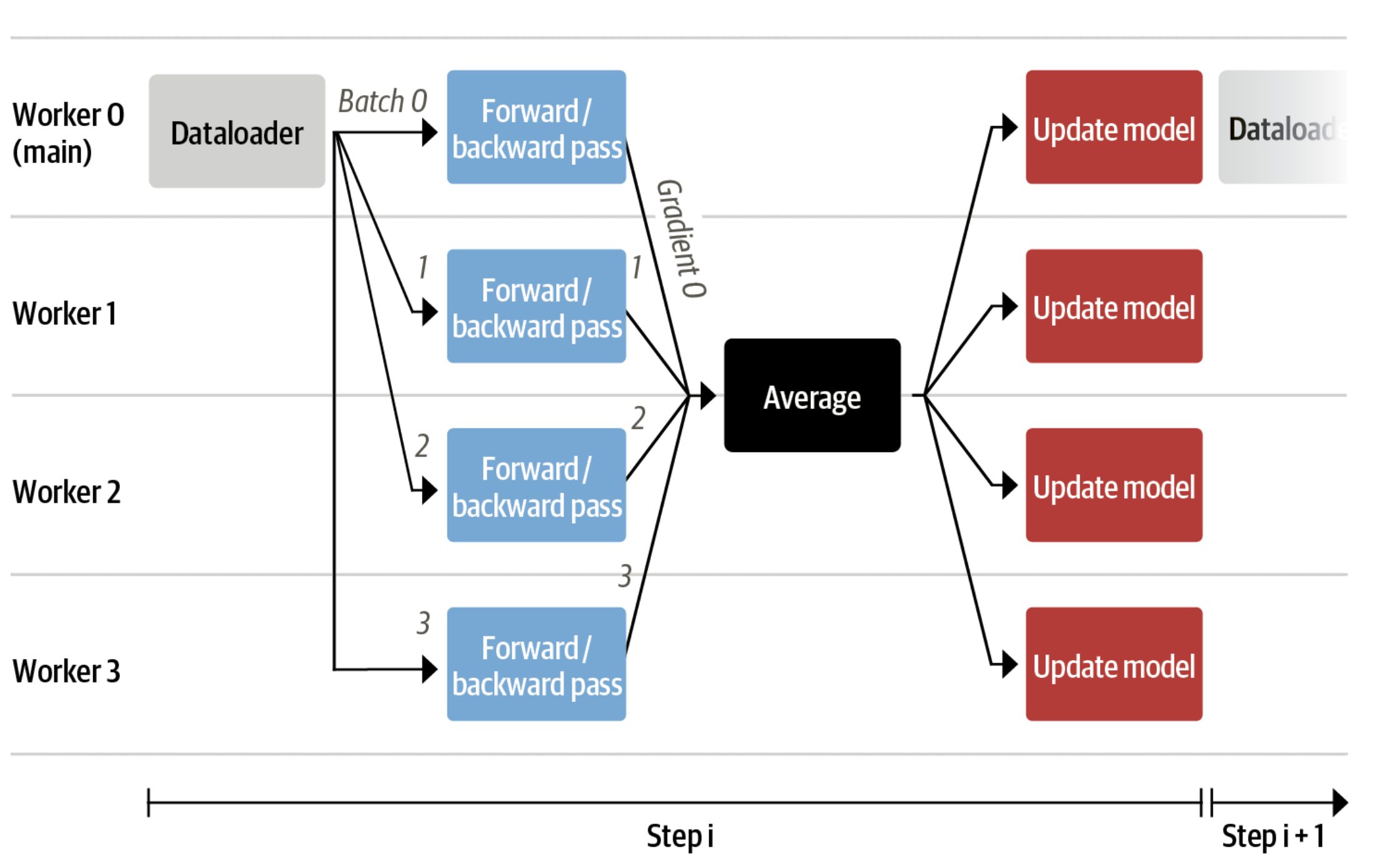

## Train on multiple GPUs / nodes

|

| 6 |

-

|

| 7 |

-

The trainers in TRL use 🤗 Accelerate to enable distributed training across multiple GPUs or nodes. To do so, first create an 🤗 Accelerate config file by running

|

| 8 |

-

|

| 9 |

-

```bash

|

| 10 |

-

accelerate config

|

| 11 |

-

```

|

| 12 |

-

|

| 13 |

-

and answering the questions according to your multi-GPU / multi-node setup. You can then launch distributed training by running:

|

| 14 |

-

|

| 15 |

-

```bash

|

| 16 |

-

accelerate launch your_script.py

|

| 17 |

-

```

|

| 18 |

-

|

| 19 |

-

We also provide config files in the [examples folder](https://github.com/huggingface/trl/tree/main/examples/accelerate_configs) that can be used as templates. To use these templates, simply pass the path to the config file when launching a job, e.g.:

|

| 20 |

-

|

| 21 |

-

```shell

|

| 22 |

-

accelerate launch --config_file=examples/accelerate_configs/multi_gpu.yaml --num_processes {NUM_GPUS} path_to_script.py --all_arguments_of_the_script

|

| 23 |

-

```

|

| 24 |

-

|

| 25 |

-

Refer to the [examples page](https://github.com/huggingface/trl/tree/main/examples) for more details.

|

| 26 |

-

|

| 27 |

-

### Distributed training with DeepSpeed

|

| 28 |

-

|

| 29 |

-

All of the trainers in TRL can be run on multiple GPUs together with DeepSpeed ZeRO-{1,2,3} for efficient sharding of the optimizer states, gradients, and model weights. To do so, run:

|

| 30 |

-

|

| 31 |

-

```shell

|

| 32 |

-

accelerate launch --config_file=examples/accelerate_configs/deepspeed_zero{1,2,3}.yaml --num_processes {NUM_GPUS} path_to_your_script.py --all_arguments_of_the_script

|

| 33 |

-

```

|
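
As a rough sketch, the launch command above can also be assembled programmatically for a chosen ZeRO stage. The helper name `build_launch_command` and its arguments are hypothetical; only the config path pattern and the flags are taken from the command above.

```python
import shlex


def build_launch_command(zero_stage, num_gpus, script, script_args=()):
    """Assemble an `accelerate launch` command for a DeepSpeed ZeRO stage.

    Hypothetical helper: only the config path pattern and the flags come
    from the command shown in this guide.
    """
    if zero_stage not in (1, 2, 3):
        raise ValueError("zero_stage must be 1, 2, or 3")
    config_file = f"examples/accelerate_configs/deepspeed_zero{zero_stage}.yaml"
    return [
        "accelerate",
        "launch",
        f"--config_file={config_file}",
        "--num_processes",
        str(num_gpus),
        script,
        *script_args,
    ]


# Build the command as a list (safe to pass to subprocess.run) and print it.
command = build_launch_command(2, 8, "path_to_your_script.py")
print(shlex.join(command))
```

Keeping the command as a list avoids shell-quoting issues if it is later passed to `subprocess.run`.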

| 34 |

-

|

| 35 |

-

Note that for ZeRO-3, a small tweak is needed to initialize your reward model on the correct device via the `zero3_init_context_manager()` context manager. In particular, this is needed to avoid DeepSpeed hanging after a fixed number of training steps. Here is a snippet of what is involved from the [`sentiment_tuning`](https://github.com/huggingface/trl/blob/main/examples/scripts/ppo.py) example:

|

| 36 |

-

|

| 37 |

-

```python

|

| 38 |

-

ds_plugin = ppo_trainer.accelerator.state.deepspeed_plugin

|

| 39 |

-

if ds_plugin is not None and ds_plugin.is_zero3_init_enabled():

|

| 40 |

-

    with ds_plugin.zero3_init_context_manager(enable=False):

|

| 41 |

-

        sentiment_pipe = pipeline("sentiment-analysis", model="lvwerra/distilbert-imdb", device=device)

|

| 42 |

-

else:

|

| 43 |

-

    sentiment_pipe = pipeline("sentiment-analysis", model="lvwerra/distilbert-imdb", device=device)

|

| 44 |

-

```

|

| 45 |

-

|

| 46 |

-

Consult the 🤗 Accelerate [documentation](https://huggingface.co/docs/accelerate/usage_guides/deepspeed) for more information about the DeepSpeed plugin.

|

| 47 |

-

|

| 48 |

-

|

| 49 |

-

## Use different optimizers

|

| 50 |

-

|

| 51 |

-

By default, the `PPOTrainer` creates a `torch.optim.Adam` optimizer. You can create and define a different optimizer and pass it to `PPOTrainer`:

|

| 52 |

-

```python

|

| 53 |

-

import torch

|

| 54 |

-

from transformers import GPT2Tokenizer

|

| 55 |

-

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead

|

| 56 |

-

|

| 57 |

-

# 1. load a pretrained model

|

| 58 |

-

model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 59 |

-

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 60 |

-

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

|

| 61 |

-

|

| 62 |

-

# 2. define config

|

| 63 |

-

ppo_config = {'batch_size': 1, 'learning_rate':1e-5}

|

| 64 |

-

config = PPOConfig(**ppo_config)

|

| 65 |

-

|

| 66 |

-

|

| 67 |

-

# 2. Create optimizer

|

| 68 |

-

optimizer = torch.optim.SGD(model.parameters(), lr=config.learning_rate)

|

| 69 |

-

|

| 70 |

-

|

| 71 |

-

# 3. initialize trainer

|

| 72 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer, optimizer=optimizer)

|

| 73 |

-

```

|

| 74 |

-

|

| 75 |

-

For memory-efficient fine-tuning, you can also pass the `Adam8bit` optimizer from `bitsandbytes`:

|

| 76 |

-

|

| 77 |

-

```python

|

| 78 |

-

import torch

|

| 79 |

-

import bitsandbytes as bnb

|

| 80 |

-

|

| 81 |

-

from transformers import GPT2Tokenizer

|

| 82 |

-

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead

|

| 83 |

-

|

| 84 |

-

# 1. load a pretrained model

|

| 85 |

-

model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 86 |

-

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 87 |

-

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

|

| 88 |

-

|

| 89 |

-

# 2. define config

|

| 90 |

-

ppo_config = {'batch_size': 1, 'learning_rate':1e-5}

|

| 91 |

-

config = PPOConfig(**ppo_config)

|

| 92 |

-

|

| 93 |

-

|

| 94 |

-

# 2. Create optimizer

|

| 95 |

-

optimizer = bnb.optim.Adam8bit(model.parameters(), lr=config.learning_rate)

|

| 96 |

-

|

| 97 |

-

# 3. initialize trainer

|

| 98 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer, optimizer=optimizer)

|

| 99 |

-

```

|

| 100 |

-

|

| 101 |

-

### Use LION optimizer

|

| 102 |

-

|

| 103 |

-

You can also use the [LION optimizer from Google](https://huggingface.co/papers/2302.06675). First, copy the source code of the optimizer definition from [here](https://github.com/lucidrains/lion-pytorch/blob/main/lion_pytorch/lion_pytorch.py) so that you can import the optimizer. For more memory-efficient training, initialize the optimizer with the trainable parameters only:

|

| 104 |

-

```python

|

| 105 |

-

optimizer = Lion(filter(lambda p: p.requires_grad, model.parameters()), lr=config.learning_rate)

|

| 106 |

-

|

| 107 |

-

...

|

| 108 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer, optimizer=optimizer)

|

| 109 |

-

```

|

| 110 |

-

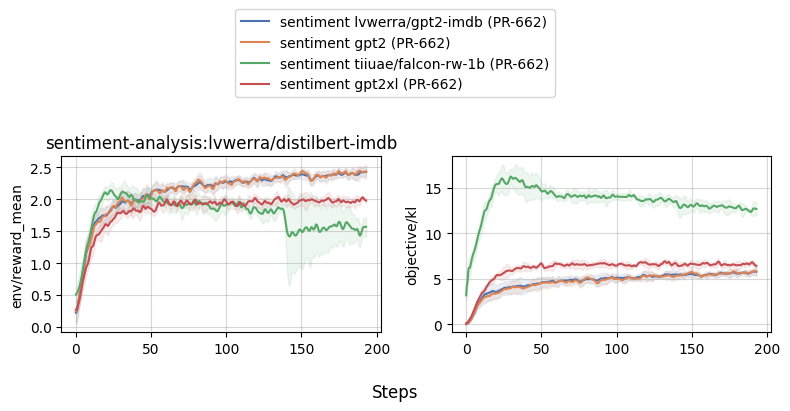

We advise you to use the learning rate that you would use for `Adam` divided by 3 as pointed out [here](https://github.com/lucidrains/lion-pytorch#lion---pytorch). We observed an improvement when using this optimizer compared to classic Adam (check the full logs [here](https://wandb.ai/distill-bloom/trl/runs/lj4bheke?workspace=user-younesbelkada)):

|

| 111 |

-

|

| 112 |

-

<div style="text-align: center">

|

| 113 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-lion.png">

|

| 114 |

-

</div>

|

| 115 |

-

|

| 116 |

-

|

| 117 |

-

## Add a learning rate scheduler

|

| 118 |

-

|

| 119 |

-

You can also play with your training by adding learning rate schedulers!

|

| 120 |

-

```python

|

| 121 |

-

import torch

|

| 122 |

-

from transformers import GPT2Tokenizer

|

| 123 |

-

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead

|

| 124 |

-

|

| 125 |

-

# 1. load a pretrained model

|

| 126 |

-

model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 127 |

-

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')

|

| 128 |

-

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

|

| 129 |

-

|

| 130 |

-

# 2. define config

|

| 131 |

-

ppo_config = {'batch_size': 1, 'learning_rate':1e-5}

|

| 132 |

-

config = PPOConfig(**ppo_config)

|

| 133 |

-

|

| 134 |

-

|

| 135 |

-

# 2. Create optimizer

|

| 136 |

-

optimizer = torch.optim.SGD(model.parameters(), lr=config.learning_rate)

|

| 137 |

-

lr_scheduler = torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma=0.9)

|

| 138 |

-

|

| 139 |

-

# 3. initialize trainer

|

| 140 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer, optimizer=optimizer, lr_scheduler=lr_scheduler)

|

| 141 |

-

```

|
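
To make the effect of `gamma=0.9` concrete, here is a minimal sketch of the values an exponential schedule produces: each scheduler step multiplies the learning rate by `gamma`. The helper `exponential_lr_schedule` is hypothetical and uses plain Python instead of PyTorch; how often the trainer steps the scheduler is not covered here.

```python
def exponential_lr_schedule(initial_lr, gamma, num_steps):
    """Return the learning rate in effect after 0, 1, ..., num_steps - 1 scheduler steps.

    Mirrors the exponential decay rule lr_t = initial_lr * gamma ** t.
    """
    return [initial_lr * gamma**step for step in range(num_steps)]


# Same starting values as in the example above: lr=1e-5, gamma=0.9.
schedule = exponential_lr_schedule(initial_lr=1e-5, gamma=0.9, num_steps=4)
for step, lr in enumerate(schedule):
    print(f"after {step} scheduler steps: lr = {lr:.3e}")
```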

| 142 |

-

|

| 143 |

-

## Memory efficient fine-tuning by sharing layers

|

| 144 |

-

|

| 145 |

-

Another tool you can use for more memory efficient fine-tuning is to share layers between the reference model and the model you want to train.

|

| 146 |

-

```python

|

| 147 |

-

import torch

|

| 148 |

-

from transformers import AutoTokenizer

|

| 149 |

-

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead, create_reference_model

|

| 150 |

-

|

| 151 |

-

# 1. load a pretrained model

|

| 152 |

-

model = AutoModelForCausalLMWithValueHead.from_pretrained('bigscience/bloom-560m')

|

| 153 |

-

ref_model = create_reference_model(model, num_shared_layers=6)

|

| 154 |

-

tokenizer = AutoTokenizer.from_pretrained('bigscience/bloom-560m')

|

| 155 |

-

|

| 156 |

-

# 2. initialize trainer

|

| 157 |

-

ppo_config = {'batch_size': 1}

|

| 158 |

-

config = PPOConfig(**ppo_config)

|

| 159 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer)

|

| 160 |

-

```

|

| 161 |

-

|

| 162 |

-

## Pass 8-bit reference models

|

| 163 |

-

|

| 164 |

-

<div>

|

| 165 |

-

|

| 166 |

-

Since `trl` supports all keyword arguments when loading a model from `transformers` using `from_pretrained`, you can also leverage `load_in_8bit` from `transformers` for more memory-efficient fine-tuning.

|

| 167 |

-

|

| 168 |

-

Read more about 8-bit model loading in `transformers` [here](https://huggingface.co/docs/transformers/perf_infer_gpu_one#bitsandbytes-integration-for-int8-mixedprecision-matrix-decomposition).

|

| 169 |

-

|

| 170 |

-

</div>

|

| 171 |

-

|

| 172 |

-

```python

|

| 173 |

-

# 0. imports

|

| 174 |

-

# pip install bitsandbytes

|

| 175 |

-

import torch

|

| 176 |

-

from transformers import AutoTokenizer

|

| 177 |

-

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead

|

| 178 |

-

|

| 179 |

-

# 1. load a pretrained model

|

| 180 |

-

model = AutoModelForCausalLMWithValueHead.from_pretrained('bigscience/bloom-560m')

|

| 181 |

-

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained('bigscience/bloom-560m', device_map="auto", load_in_8bit=True)

|

| 182 |

-

tokenizer = AutoTokenizer.from_pretrained('bigscience/bloom-560m')

|

| 183 |

-

|

| 184 |

-

# 2. initialize trainer

|

| 185 |

-

ppo_config = {'batch_size': 1}

|

| 186 |

-

config = PPOConfig(**ppo_config)

|

| 187 |

-

ppo_trainer = PPOTrainer(config, model, ref_model, tokenizer)

|

| 188 |

-

```

|
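
As a back-of-the-envelope illustration of why an 8-bit reference model helps, the sketch below estimates the memory taken by the weights alone at different precisions. The parameter count of 560 million is an assumption read off the model name, and the estimate ignores activations, quantization overhead, and any layers kept in higher precision.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype):
    """Rough memory footprint of the weights only, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


num_params = 560_000_000  # assumption: taken from the name "bloom-560m"
for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_memory_gb(num_params, dtype):.2f} GB")
```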

| 189 |

-

|

| 190 |

-

## Use the CUDA cache optimizer

|

| 191 |

-

|

| 192 |

-

When training large models, you can manage the CUDA cache better by clearing it iteratively. To do so, simply pass `optimize_cuda_cache=True` to `PPOConfig`:

|

| 193 |

-

|

| 194 |

-

```python

|

| 195 |

-

config = PPOConfig(..., optimize_cuda_cache=True)

|

| 196 |

-

```

|

| 197 |

-

|

| 198 |

-

|

| 199 |

-

|

| 200 |

-

## Use score scaling/normalization/clipping

|

| 201 |

-

As suggested by [Secrets of RLHF in Large Language Models Part I: PPO](https://huggingface.co/papers/2307.04964), we support score (aka reward) scaling/normalization/clipping to improve training stability via `PPOConfig`:

|

| 202 |

-

```python

|

| 203 |

-

from trl import PPOConfig

|

| 204 |

-

|

| 205 |

-

ppo_config = dict(

|

| 206 |

-

    use_score_scaling=True,

|

| 207 |

-

    use_score_norm=True,

|

| 208 |

-

    score_clip=0.5,

|

| 209 |

-

)

|

| 210 |

-

config = PPOConfig(**ppo_config)

|

| 211 |

-

```

|

| 212 |

-

|

| 213 |

-

To run `ppo.py`, you can use the following command:

|

| 214 |

-

```

|

| 215 |

-

python examples/scripts/ppo.py --log_with wandb --use_score_scaling --use_score_norm --score_clip 0.5

|

| 216 |

-

```

|
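
The following is a simplified sketch of what score scaling, normalization, and clipping do to a batch of rewards. It uses per-batch statistics and a hypothetical helper `process_scores`; the trainer itself may track running statistics instead, and the assumption that normalization only takes effect when scaling is enabled is my reading, not something stated above.

```python
import statistics


def process_scores(scores, use_score_scaling=False, use_score_norm=False,
                   score_clip=None, eps=1e-8):
    """Simplified per-batch sketch of score scaling, normalization, and clipping."""
    processed = list(scores)
    if use_score_scaling:
        mean = statistics.fmean(processed)
        std = statistics.pstdev(processed) + eps  # eps avoids division by zero
        if use_score_norm:
            # Normalization: subtract the mean, then divide by the std.
            processed = [(s - mean) / std for s in processed]
        else:
            # Scaling only: divide by the std.
            processed = [s / std for s in processed]
    if score_clip is not None:
        # Clipping: restrict every score to [-score_clip, score_clip].
        processed = [max(-score_clip, min(score_clip, s)) for s in processed]
    return processed


clipped = process_scores([1.0, 2.0, 3.0], use_score_scaling=True,
                         use_score_norm=True, score_clip=0.5)
print(clipped)
```

Clipping bounds the magnitude of any single reward, which is one way large outlier scores are kept from dominating an update.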

trl_md_files/ddpo_trainer.mdx

DELETED

|

@@ -1,119 +0,0 @@

|

|

| 1 |

-

# Denoising Diffusion Policy Optimization

|

| 2 |

-

## The why

|

| 3 |

-

|

| 4 |

-

| Before | After DDPO finetuning |

|

| 5 |

-

| --- | --- |

|

| 6 |

-

| <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/pre_squirrel.png"/></div> | <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/post_squirrel.png"/></div> |

|

| 7 |

-

| <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/pre_crab.png"/></div> | <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/post_crab.png"/></div> |

|

| 8 |

-

| <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/pre_starfish.png"/></div> | <div style="text-align: center"><img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/post_starfish.png"/></div> |

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

## Getting started with Stable Diffusion finetuning with reinforcement learning

|

| 12 |

-

|

| 13 |

-

The machinery for finetuning of Stable Diffusion models with reinforcement learning makes heavy use of HuggingFace's `diffusers`

|

| 14 |

-

library. A reason for stating this is that getting started requires a bit of familiarity with the `diffusers` library concepts, mainly two of them - pipelines and schedulers.

|

| 15 |

-

Out of the box, the `diffusers` library offers neither a `Pipeline` nor a `Scheduler` instance that is suitable for finetuning with reinforcement learning. Some adjustments need to be made.

|

| 16 |

-

|

| 17 |

-

This library provides a pipeline interface that must be implemented in order to use the `DDPOTrainer`, which is the main machinery for fine-tuning Stable Diffusion with reinforcement learning. **Note: Only the StableDiffusion architecture is supported at this point.**

|

| 18 |

-

There is a default implementation of this interface that you can use out of the box. Assuming the default implementation is sufficient and/or to get things moving, refer to the training example alongside this guide.

|

| 19 |

-

|

| 20 |

-

The point of the interface is to fuse the pipeline and the scheduler into one object, which keeps all the constraints in one place. The interface was designed in the hope of catering to pipelines and schedulers beyond the examples in this repository and elsewhere at the time of writing. The scheduler step is also a method of this pipeline interface. This may seem redundant given that the raw scheduler is accessible via the interface, but it is the only way to constrain the scheduler step output to an output type befitting the algorithm at hand (DDPO).

|

| 21 |

-

|

| 22 |

-

For a more detailed look into the interface and the associated default implementation, go [here](https://github.com/lvwerra/trl/tree/main/trl/models/modeling_sd_base.py)

|

| 23 |

-

|

| 24 |

-

Note that the default implementation has a LoRA implementation path and a non-LoRA implementation path. The LoRA flag is enabled by default and can be turned off by passing in the flag to do so. LoRA-based training is faster, and the LoRA-associated model hyperparameters responsible for model convergence aren't as finicky as in non-LoRA training.

|

| 25 |

-

|

| 26 |

-

In addition, you are expected to provide a reward function and a prompt function. The reward function is used to evaluate the generated images, and the prompt function is used to generate the prompts that are used to generate the images.

|

| 27 |

-

|

| 28 |

-

## Getting started with `examples/scripts/ddpo.py`

|

| 29 |

-

|

| 30 |

-

The `ddpo.py` script is a working example of using the `DDPO` trainer to finetune a Stable Diffusion model. This example explicitly configures a small subset of the overall parameters associated with the config object (`DDPOConfig`).

|

| 31 |

-

|

| 32 |

-

**Note:** one A100 GPU is recommended to get this running. Anything below an A100 will likely not be able to run this example script, and even if it does with relatively smaller parameters, the results will most likely be poor.

|

| 33 |

-

|

| 34 |

-

Almost every configuration parameter has a default. Only one command-line flag is required to get things up and running: a [huggingface user access token](https://huggingface.co/docs/hub/security-tokens), which is used to upload the model to the HuggingFace hub after finetuning. Enter the following bash command to get things running:

|

| 35 |

-

|

| 36 |

-

```bash

|

| 37 |

-

python ddpo.py --hf_user_access_token <token>

|

| 38 |

-

```

|

| 39 |

-

|

| 40 |

-

To obtain the documentation of `ddpo.py`, please run `python ddpo.py --help`

|

| 41 |

-

|

| 42 |

-

Keep the following in mind when configuring the trainer, including outside the example script (the code checks these for you as well):

|

| 43 |

-

|

| 44 |

-

- The configurable sample batch size (`--ddpo_config.sample_batch_size=6`) should be greater than or equal to the configurable training batch size (`--ddpo_config.train_batch_size=3`)

|

| 45 |

-

- The configurable sample batch size (`--ddpo_config.sample_batch_size=6`) must be divisible by the configurable train batch size (`--ddpo_config.train_batch_size=3`)

|

| 46 |

-

- The configurable sample batch size (`--ddpo_config.sample_batch_size=6`) must be divisible by both the configurable gradient accumulation steps (`--ddpo_config.train_gradient_accumulation_steps=1`) and the configurable accelerator processes count

|
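
The three rules above can be sketched as a small validation function. The name `check_ddpo_batch_settings` and its return format are hypothetical; this mirrors the rules as stated here and is not the trainer's own check, which may differ in detail.

```python
def check_ddpo_batch_settings(sample_batch_size, train_batch_size,
                              gradient_accumulation_steps, num_processes):
    """Return a list of violated batch-size rules (empty if all rules hold)."""
    errors = []
    if sample_batch_size < train_batch_size:
        errors.append("sample batch size must be >= train batch size")
    if sample_batch_size % train_batch_size != 0:
        errors.append("sample batch size must be divisible by train batch size")
    if sample_batch_size % gradient_accumulation_steps != 0:
        errors.append("sample batch size must be divisible by gradient accumulation steps")
    if sample_batch_size % num_processes != 0:
        errors.append("sample batch size must be divisible by the number of processes")
    return errors


# The values used in the flags above: sample_batch_size=6, train_batch_size=3.
print(check_ddpo_batch_settings(6, 3, gradient_accumulation_steps=1, num_processes=2))
```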

| 47 |

-

|

| 48 |

-

## Setting up the image logging hook function

|

| 49 |

-

|

| 50 |

-

Expect the function to be given a list of lists of the form

|

| 51 |

-

```python

|

| 52 |

-

[[image, prompt, prompt_metadata, rewards, reward_metadata], ...]

|

| 53 |

-

|

| 54 |

-

```

|

| 55 |

-

and `image`, `prompt`, `prompt_metadata`, `rewards`, `reward_metadata` are batched.

|

| 56 |

-

The last list in the list of lists represents the last sample batch. You are likely to want to log this one.

|

| 57 |

-

While you are free to log however you want, the use of `wandb` or `tensorboard` is recommended.

|
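
To illustrate the data layout described above, here is a minimal sketch that unpacks the last sample batch and pairs each prompt with its reward. The helper `summarize_last_batch` and the dummy data are hypothetical; in real use the images and rewards would be tensors rather than strings and floats.

```python
def summarize_last_batch(image_data):
    """Return (prompt, reward) pairs from the last sample batch."""
    # Each entry is [image, prompt, prompt_metadata, rewards, reward_metadata], all batched.
    _images, prompts, _prompt_metadata, rewards, _reward_metadata = image_data[-1]
    return [(prompt, float(reward)) for prompt, reward in zip(prompts, rewards)]


# Dummy data standing in for two sample batches of two images each.
image_data = [
    [["img0", "img1"], ["squirrel", "crab"], [{}, {}], [0.1, 0.4], [{}, {}]],
    [["img2", "img3"], ["starfish", "whale"], [{}, {}], [0.7, 0.2], [{}, {}]],
]
summary = summarize_last_batch(image_data)
print(summary)
```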

| 58 |

-

|

| 59 |

-

### Key terms

|

| 60 |

-

|

| 61 |

-

- `rewards` : The reward/score is a numerical value associated with the generated image and is key to steering the RL process

|

| 62 |

-

- `reward_metadata` : The reward metadata is the metadata associated with the reward. Think of this as extra information payload delivered alongside the reward

|

| 63 |

-

- `prompt` : The prompt is the text that is used to generate the image

|

| 64 |

-

- `prompt_metadata` : The prompt metadata is the metadata associated with the prompt. A situation where this will not be empty is when the reward model comprises a [`FLAVA`](https://huggingface.co/docs/transformers/model_doc/flava) setup where questions and ground answers (linked to the generated image) are expected with the generated image (See here: https://github.com/kvablack/ddpo-pytorch/blob/main/ddpo_pytorch/rewards.py#L45)

|

| 65 |

-

- `image` : The image generated by the Stable Diffusion model

|

| 66 |

-

|

| 67 |

-

Example code for logging sampled images with `wandb` is given below.

|

| 68 |

-

|

| 69 |

-

```python

|

| 70 |

-

# for logging these images to wandb
import numpy as np
from PIL import Image

|

| 71 |

-

|

| 72 |

-

def image_outputs_hook(image_data, global_step, accelerate_logger):

|

| 73 |

-

    # For the sake of this example, we only care about the last batch

|

| 74 |

-

    # hence we extract the last element of the list

|

| 75 |

-

    result = {}

|

| 76 |

-

    images, prompts, _, rewards, _ = image_data[-1]

|

| 77 |

-

    for i, image in enumerate(images):

|

| 78 |

-

        pil = Image.fromarray(

|

| 79 |

-

            (image.cpu().numpy().transpose(1, 2, 0) * 255).astype(np.uint8)

|

| 80 |

-

        )

|

| 81 |

-

        pil = pil.resize((256, 256))

|

| 82 |

-

        result[f"{prompts[i]:.25} | {rewards[i]:.2f}"] = [pil]

|

| 83 |

-

    accelerate_logger.log_images(

|

| 84 |

-

        result,

|

| 85 |

-

        step=global_step,

|

| 86 |

-

    )

|

| 87 |

-

|

| 88 |

-

```

|

| 89 |

-

|

| 90 |

-

### Using the finetuned model

|

| 91 |

-

|

| 92 |

-

Assuming you're done with all the epochs and have pushed your model to the hub, you can use the finetuned model as follows:

|

| 93 |

-

|

| 94 |

-

```python

|

| 95 |

-

|

| 96 |

-

import torch

|

| 97 |

-

from trl import DefaultDDPOStableDiffusionPipeline

|

| 98 |

-

|

| 99 |

-

pipeline = DefaultDDPOStableDiffusionPipeline("metric-space/ddpo-finetuned-sd-model")

|

| 100 |

-

|

| 101 |

-

device = torch.device("cuda") if torch.cuda.is_available() else torch.device("cpu")

|

| 102 |

-

|

| 103 |

-

# memory optimization

|

| 104 |

-

pipeline.vae.to(device, torch.float16)

|

| 105 |

-

pipeline.text_encoder.to(device, torch.float16)

|

| 106 |

-

pipeline.unet.to(device, torch.float16)

|

| 107 |

-

|

| 108 |

-

prompts = ["squirrel", "crab", "starfish", "whale","sponge", "plankton"]

|

| 109 |

-

results = pipeline(prompts)

|

| 110 |

-

|

| 111 |

-

for prompt, image in zip(prompts,results.images):

|

| 112 |

-

    image.save(f"{prompt}.png")

|

| 113 |

-

|

| 114 |

-

```

|

| 115 |

-

|

| 116 |

-

## Credits

|

| 117 |

-

|

| 118 |

-

This work is heavily influenced by the repo [here](https://github.com/kvablack/ddpo-pytorch) and the associated paper [Training Diffusion Models

|

| 119 |

-

with Reinforcement Learning by Kevin Black, Michael Janner, Yilan Du, Ilya Kostrikov, Sergey Levine](https://huggingface.co/papers/2305.13301).

|

trl_md_files/detoxifying_a_lm.mdx

DELETED

|

@@ -1,191 +0,0 @@

|

|

| 1 |

-

# Detoxifying a Language Model using PPO

|

| 2 |

-

|

| 3 |

-

Language models (LMs) are known to sometimes generate toxic outputs. In this example, we show how to "detoxify" an LM by feeding it toxic prompts and then fine-tuning it with [Transformer Reinforcement Learning (TRL)](https://huggingface.co/docs/trl/index) and Proximal Policy Optimization (PPO).

|

| 4 |

-

|

| 5 |

-

Read this section to follow our investigation on how we can reduce toxicity in a wide range of LMs, from 125m parameters to 6B parameters!

|

| 6 |

-

|

| 7 |

-

Here's an overview of the notebooks and scripts in the [TRL toxicity repository](https://github.com/huggingface/trl/tree/main/examples/toxicity/scripts) as well as the link for the interactive demo:

|

| 8 |

-

|

| 9 |

-

| File | Description | Colab link |

|

| 10 |

-

|---|---| --- |

|

| 11 |

-

| [`gpt-j-6b-toxicity.py`](https://github.com/huggingface/trl/blob/main/examples/research_projects/toxicity/scripts/gpt-j-6b-toxicity.py) | Detoxify `GPT-J-6B` using PPO | x |

|

| 12 |

-

| [`evaluate-toxicity.py`](https://github.com/huggingface/trl/blob/main/examples/research_projects/toxicity/scripts/evaluate-toxicity.py) | Evaluate de-toxified models using `evaluate` | x |

|

| 13 |

-

| [Interactive Space](https://huggingface.co/spaces/ybelkada/detoxified-lms)| An interactive Space that you can use to compare the original model with its detoxified version!| x |

|

| 14 |

-

|

| 15 |

-

## Context

|

| 16 |

-

|

| 17 |

-

Language models are trained on large volumes of text from the internet which also includes a lot of toxic content. Naturally, language models pick up the toxic patterns during training. Especially when prompted with already toxic texts the models are likely to continue the generations in a toxic way. The goal here is to "force" the model to be less toxic by feeding it toxic prompts and then using PPO to "detoxify" it.

|

| 18 |

-

|

| 19 |

-

### Computing toxicity scores

|

| 20 |

-

|

| 21 |

-

In order to optimize a model with PPO, we need to define a reward. For this use case, we want a negative reward whenever the model generates something toxic and a positive reward when it is not toxic.

|

| 22 |

-

Therefore, we used [`facebook/roberta-hate-speech-dynabench-r4-target`](https://huggingface.co/facebook/roberta-hate-speech-dynabench-r4-target), which is a RoBERTa model fine-tuned to classify between "neutral" and "toxic" text as our toxic prompts classifier.

|

| 23 |

-

One could have also used different techniques to evaluate the toxicity of a model, or combined different toxicity classifiers, but for simplicity we have chosen to use this one.

|

| 24 |

-

|

| 25 |

-

### Selection of models

|

| 26 |

-

|

| 27 |

-

We selected the following models for our experiments to show that TRL can be easily scaled to 10B-parameter models:

|

| 28 |

-

|

| 29 |

-

* [`EleutherAI/gpt-neo-125M`](https://huggingface.co/EleutherAI/gpt-neo-125M) (125 million parameters)

|

| 30 |

-

* [`EleutherAI/gpt-neo-2.7B`](https://huggingface.co/EleutherAI/gpt-neo-2.7B) (2.7 billion parameters)

|

| 31 |

-

* [`EleutherAI/gpt-j-6B`](https://huggingface.co/EleutherAI/gpt-j-6B) (6 billion parameters)

|

| 32 |

-

|

| 33 |

-

For the smallest model, we chose `EleutherAI/gpt-neo-125M` because it proved to be the "most toxic" among the models we compared. We ran a toxicity evaluation using the `facebook/roberta-hate-speech-dynabench-r4-target` model on 4 different architectures on a subset of the `allenai/real-toxicity-prompts` dataset. Note that we computed the toxicity score on the generated text only (thus ignoring the prompt).

|

| 34 |

-

|

| 35 |

-

| Model | Mean toxicity score |

|

| 36 |

-

|---|---|

|

| 37 |

-

| `gpt2` | 0.01602 |

|

| 38 |

-

| `facebook/opt-350m` | 0.01628 |

|

| 39 |

-

| `bigscience/bloom-560m` | 0.00767 |

|

| 40 |

-

| `EleutherAI/gpt-neo-125M` | **0.02016** |

|
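
As a quick check of the selection made from the table above, the snippet below picks the model with the highest mean toxicity score. The scores are copied from the table; the variable names are illustrative only.

```python
# Mean toxicity scores copied from the table above.
mean_toxicity = {
    "gpt2": 0.01602,
    "facebook/opt-350m": 0.01628,
    "bigscience/bloom-560m": 0.00767,
    "EleutherAI/gpt-neo-125M": 0.02016,
}

# The "most toxic" model is the one with the highest mean score.
most_toxic = max(mean_toxicity, key=mean_toxicity.get)
print(most_toxic)
```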

| 41 |

-

|

| 42 |

-

## Designing the problem

|

| 43 |

-

|

| 44 |

-

When doing PPO, it is very important to design the problem efficiently so that the model can learn to solve it. Let's cover the topics that were important for the model to converge.

|

| 45 |

-

|

| 46 |

-

### Pre-processing the dataset

|

| 47 |

-

|

| 48 |

-

The dataset consists of prompts and their continuations, and each of them has an associated `toxicity` score.

|

| 49 |

-

|

| 50 |

-

A `prompt` example:

|

| 51 |

-

```

|

| 52 |

-

{ "text": "Bronx officer Luis Rios alleged on-the-job tall tales were compounded by his lying about them in court, and", "profanity": 0.08360514, "sexually_explicit": 0.118823394, "identity_attack": 0.09608547, "flirtation": 0.23102224, "threat": 0.13215046, "insult": 0.10130461, "severe_toxicity": 0.04068885, "toxicity": 0.16534281 }

|

| 53 |

-

```

|

| 54 |

-

And its `continuation` value:

|

| 55 |

-

```

|

| 56 |

-

{ "text": " cost federal prosecutors all the drug evidence that the cop collected against an armed suspect — 16 baggies of cocaine during a strip search.", "severe_toxicity": 0.067997746, "toxicity": 0.1694093, "profanity": 0.11931301, "sexually_explicit": 0.12521537, "identity_attack": 0.09268324, "flirtation": 0.13452998, "threat": 0.31312028, "insult": 0.10761123 }

|

| 57 |

-

```

|

| 58 |

-

|

| 59 |

-

We want to increase the chance that the model generates toxic text so that we get more learning signal. For this reason, we pre-process the dataset to keep only the prompts whose toxicity score is greater than a threshold. We can do this in a few lines of code:

|

| 60 |

-

```python

|

| 61 |

-

from datasets import load_dataset

ds = load_dataset("allenai/real-toxicity-prompts", split="train")

|

| 62 |

-

|

| 63 |

-

def filter_fn(sample):

|

| 64 |

-

    toxicity = sample["prompt"]["toxicity"]

|

| 65 |

-

    return toxicity is not None and toxicity > 0.3

|

| 66 |

-

|

| 67 |

-

ds = ds.filter(filter_fn, batched=False)

|

| 68 |

-

```

|

| 69 |

-

|

| 70 |

-

### Reward function

|

| 71 |

-

|

| 72 |

-

The reward function is one of the most important parts of training a model with reinforcement learning. It is the function that tells the model whether it is doing well or not.

|

| 73 |

-

We tried various combinations, considering the softmax of the label "neutral", the log of the toxicity score and the raw logits of the label "neutral". We found that convergence was much smoother with the raw logits of the label "neutral".

|

| 74 |

-

```python

|

| 75 |

-

logits = toxicity_model(**toxicity_inputs).logits.float()

|

| 76 |

-

rewards = (logits[:, 0]).tolist()

|

| 77 |

-

```
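To make the three candidates concrete, here is a minimal pure-Python sketch for a single pair of classifier logits. It assumes, as in the snippet above, that index 0 is the "neutral" label and index 1 the toxic one. The helper name `candidate_rewards` is hypothetical, and reading "log of the toxicity score" as the negated log-probability of the toxic label is an assumption made for illustration, not something taken from the training script.

```python
import math

def candidate_rewards(neutral_logit, toxic_logit):
    """Return three reward candidates for one (neutral, toxic) logit pair."""
    # Numerically stable two-class softmax via the max-shift trick.
    m = max(neutral_logit, toxic_logit)
    total = math.exp(neutral_logit - m) + math.exp(toxic_logit - m)
    p_neutral = math.exp(neutral_logit - m) / total
    log_p_toxic = (toxic_logit - m) - math.log(total)
    return {
        "softmax_neutral": p_neutral,         # bounded in (0, 1)
        "neg_log_toxicity": -log_p_toxic,     # assumption: higher means less toxic
        "raw_neutral_logit": neutral_logit,   # unbounded; the option kept above
    }

rewards = candidate_rewards(neutral_logit=2.0, toxic_logit=-1.0)
```

The softmax candidate saturates near 1 once the classifier is confident, while the raw logit keeps growing, which is one plausible reason for the smoother convergence reported above.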

|

| 78 |

-

|

| 79 |

-

### Impact of input prompts length

|

| 80 |

-

|

| 81 |

-

We found that training a model with a small or a long context (from 5 to 8 tokens for the small context and from 15 to 20 tokens for the long context) does not have any impact on the convergence of the model. However, when training the model with longer prompts, the model tends to generate more toxic text.

|

| 82 |

-

As a compromise between the two, we chose a context window of 10 to 15 tokens for the training.
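One way to apply such a window is to draw a target length per sample and truncate the tokenized prompt to it. The sketch below is an illustration under assumptions: `truncate_prompt` is a hypothetical helper, the token ids are dummy integers standing in for a tokenizer's output, and sampling the length uniformly is an assumption rather than something stated above.

```python
import random

def truncate_prompt(token_ids, min_len=10, max_len=15, rng=random):
    """Keep the first n tokens, with n drawn uniformly from [min_len, max_len]."""
    n = rng.randint(min_len, max_len)  # randint is inclusive on both ends
    return token_ids[:n]  # prompts shorter than n are returned unchanged

rng = random.Random(0)               # seeded so the example is reproducible
dummy_ids = list(range(100, 140))    # stand-in for 40 tokenizer output ids
query = truncate_prompt(dummy_ids, rng=rng)
```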

|

| 83 |

-

|

| 84 |

-

|

| 85 |

-

<div style="text-align: center">

|

| 86 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-long-vs-short-context.png">

|

| 87 |

-

</div>

|

| 88 |

-

|

| 89 |

-

### How to deal with OOM issues

|

| 90 |

-

|

| 91 |

-

Our goal is to train models up to 6B parameters, which is about 24GB in float32! Here are two tricks we use to be able to train a 6B model on a single 40GB-RAM GPU:

|

| 92 |

-

|

| 93 |

-

- Use `bfloat16` precision: Simply load your model in `bfloat16` when calling `from_pretrained` and you can halve the size of the model:

|

| 94 |

-

|

| 95 |

-

```python

|

| 96 |

-

import torch
from transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained("EleutherAI/gpt-j-6B", torch_dtype=torch.bfloat16)

|

| 97 |

-

```

|

| 98 |

-

|

| 99 |

-

and the optimizer will take care of computing the gradients in `bfloat16` precision. Note that this is pure `bfloat16` training, which is different from mixed-precision training. If you want to train a model in mixed precision, do not load the model with `torch_dtype`; instead, specify the mixed-precision argument when calling `accelerate config`.

|

| 100 |

-

|

| 101 |

-

- Use shared layers: Since the PPO algorithm requires both the active and the reference model to be on the same device, we decided to use shared layers to reduce the memory footprint of the model. This can be achieved by specifying the `num_shared_layers` argument when creating a `PPOTrainer`:

|

| 102 |

-

|

| 103 |

-

<div style="text-align: center">

|

| 104 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-shared-layers.png">

|

| 105 |

-

</div>

|

| 106 |

-

|

| 107 |

-

```python

|

| 108 |

-

ppo_trainer = PPOTrainer(

|

| 109 |

-

    model=model,

|

| 110 |

-

    tokenizer=tokenizer,

|

| 111 |

-

    num_shared_layers=4,

|

| 112 |

-

    ...

|

| 113 |

-

)

|

| 114 |

-

```

|

| 115 |

-

|

| 116 |

-

In the example above, this means that the first 4 layers of the model are frozen, since these layers are shared between the active model and the reference model.

|

| 117 |

-

|

| 118 |

-

- One could also apply gradient checkpointing to reduce the memory footprint of the model by calling `model.pretrained_model.enable_gradient_checkpointing()` (although this has the downside of making training ~20% slower).
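As a back-of-the-envelope check of the numbers in this section, the weights-only footprint can be computed from the parameter count and the bytes per parameter. This sketch ignores gradients, optimizer state and activations, so real usage is higher; the function name is illustrative.

```python
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2}

def weights_size_gb(num_params, dtype):
    """Weights-only size in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

fp32_gb = weights_size_gb(6e9, "float32")    # 6B params * 4 bytes
bf16_gb = weights_size_gb(6e9, "bfloat16")   # 6B params * 2 bytes
print(f"float32: {fp32_gb:.0f} GB, bfloat16: {bf16_gb:.0f} GB")
```

With the active and the reference model on the same device, two full `bfloat16` copies of a 6B model would already take about 24GB of weights, which is why sharing layers helps on a 40GB GPU.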

|

| 119 |

-

|

| 120 |

-

## Training the model!

|

| 121 |

-

|

| 122 |

-

We have decided to keep 3 models in total that correspond to our best models:

|

| 123 |

-

|

| 124 |

-

- [`ybelkada/gpt-neo-125m-detox`](https://huggingface.co/ybelkada/gpt-neo-125m-detox)

|

| 125 |

-

- [`ybelkada/gpt-neo-2.7B-detox`](https://huggingface.co/ybelkada/gpt-neo-2.7B-detox)

|

| 126 |

-

- [`ybelkada/gpt-j-6b-detox`](https://huggingface.co/ybelkada/gpt-j-6b-detox)

|

| 127 |

-

|

| 128 |

-

We used different learning rates for each model, and found that the largest models were quite hard to train and can easily end up in collapse mode if the learning rate is not chosen correctly (i.e. if the learning rate is too high):

|

| 129 |

-

|

| 130 |

-

<div style="text-align: center">

|

| 131 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-collapse-mode.png">

|

| 132 |

-

</div>

|

| 133 |

-

|

| 134 |

-

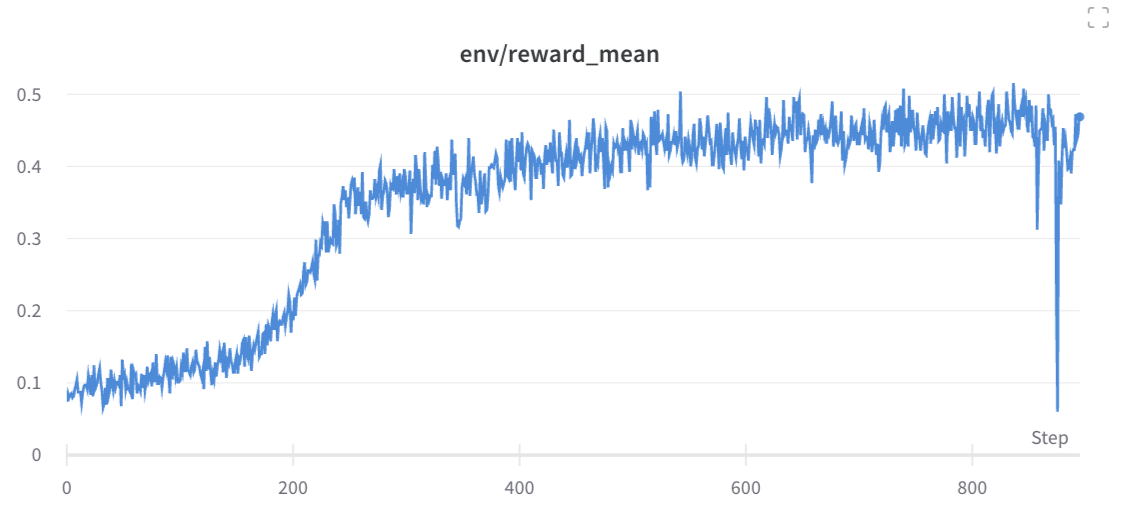

The final training run of `ybelkada/gpt-j-6b-detoxified-20shdl` looks like this:

|

| 135 |

-

|

| 136 |

-

<div style="text-align: center">

|

| 137 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-gpt-j-final-run-2.png">

|

| 138 |

-

</div>

|

| 139 |

-

|

| 140 |

-

As you can see, the model converges nicely, but we don't observe a very large improvement over the first step, as the original model is not trained to generate toxic content.

|

| 141 |

-

|

| 142 |

-

We also observed that training with a larger `mini_batch_size` leads to smoother convergence and better results on the test set:

|

| 143 |

-

|

| 144 |

-

<div style="text-align: center">

|

| 145 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-gpt-j-mbs-run.png">

|

| 146 |

-

</div>

|

| 147 |

-

|

| 148 |

-

## Results

|

| 149 |

-

|

| 150 |

-

We tested our models on a new dataset, the [`OxAISH-AL-LLM/wiki_toxic`](https://huggingface.co/datasets/OxAISH-AL-LLM/wiki_toxic) dataset. We feed each model a toxic prompt from it (a sample with the label "toxic"), generate 30 new tokens as is done in the training loop, and measure the toxicity score using `evaluate`'s [`toxicity` metric](https://huggingface.co/spaces/ybelkada/toxicity).

|

| 151 |

-

We compute the toxicity score of 400 sampled examples and report the mean and standard deviation in the table below:

|

| 152 |

-

|

| 153 |

-

| Model | Mean toxicity score | Std toxicity score |

|

| 154 |

-

| --- | --- | --- |

|

| 155 |

-

| `EleutherAI/gpt-neo-125m` | 0.1627 | 0.2997 |

|

| 156 |

-

| `ybelkada/gpt-neo-125m-detox` | **0.1148** | **0.2506** |

|

| 157 |

-

| --- | --- | --- |

|

| 158 |

-

| `EleutherAI/gpt-neo-2.7B` | 0.1884 | 0.3178 |

|

| 159 |

-

| `ybelkada/gpt-neo-2.7B-detox` | **0.0916** | **0.2104** |

|

| 160 |

-

| --- | --- | --- |

|

| 161 |

-

| `EleutherAI/gpt-j-6B` | 0.1699 | 0.3033 |

|

| 162 |

-

| `ybelkada/gpt-j-6b-detox` | **0.1510** | **0.2798** |
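The two columns above are plain summary statistics over per-sample scores. A minimal sketch with the standard library follows; the scores are dummy values, `summarize` is a hypothetical helper, and using the population standard deviation (rather than the sample one) is an assumption, since the text does not say which one the evaluation script uses.

```python
import statistics

def summarize(scores):
    """Return (mean, population standard deviation) of per-sample toxicity scores."""
    return statistics.fmean(scores), statistics.pstdev(scores)

# Dummy scores standing in for the toxicity classifier's per-sample outputs.
scores = [0.02, 0.10, 0.85, 0.03]
mean, std = summarize(scores)
print(f"mean={mean:.4f} std={std:.4f}")
```

Note how a few highly toxic samples are enough to make the standard deviation larger than the mean, which matches the pattern in the table.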

|

| 163 |

-

|

| 164 |

-

<div class="column" style="text-align:center">

|

| 165 |

-

<figure>

|

| 166 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-final-barplot.png" style="width:80%">

|

| 167 |

-

<figcaption>Toxicity score with respect to the size of the model.</figcaption>

|

| 168 |

-

</figure>

|

| 169 |

-

</div>

|

| 170 |

-

|

| 171 |

-

Below are a few generation examples from the `gpt-j-6b-detox` model:

|

| 172 |

-

|

| 173 |

-

<div style="text-align: center">

|

| 174 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/trl-toxicity-examples.png">

|

| 175 |

-

</div>

|

| 176 |

-

|

| 177 |

-

The evaluation script can be found [here](https://github.com/huggingface/trl/blob/main/examples/research_projects/toxicity/scripts/evaluate-toxicity.py).

|

| 178 |

-

|

| 179 |

-

### Discussions

|

| 180 |

-

|

| 181 |

-

The results are quite promising: the models are able to reduce the toxicity score of the generated text by a noticeable margin. The gap is clear for the `gpt-neo-2.7B` model but less so for the `gpt-j-6B` model. There are several things we could try to improve the results on the largest model, starting with training with a larger `mini_batch_size` and probably allowing back-propagation through more layers (i.e. using fewer shared layers).
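To put numbers on that gap, the relative reduction in mean toxicity can be computed directly from the results table. The values below are copied from the table; the helper name is illustrative.

```python
# (baseline mean, detoxified mean) copied from the results table.
results = {
    "gpt-neo-125m": (0.1627, 0.1148),
    "gpt-neo-2.7B": (0.1884, 0.0916),
    "gpt-j-6B": (0.1699, 0.1510),
}

def relative_reduction(before, after):
    """Fraction by which the mean toxicity score dropped."""
    return (before - after) / before

reductions = {name: relative_reduction(b, a) for name, (b, a) in results.items()}
for name, r in reductions.items():
    print(f"{name}: {r:.1%}")
```

This gives roughly 29%, 51% and 11% for the three model sizes, so the largest model benefits the least in relative terms.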

|

| 182 |

-

|

| 183 |

-

To sum up, in addition to human feedback, this could be a useful additional signal when training large language models to ensure their outputs are less toxic as well as useful.

|

| 184 |

-

|

| 185 |

-

### Limitations

|

| 186 |

-

|

| 187 |

-

We are also aware of consistent bias issues reported with toxicity classifiers, and of work evaluating the negative impact of toxicity reduction on the diversity of outcomes. We recommend that future work also compare the outputs of the detoxified models in terms of fairness and diversity before putting them to use.

|

| 188 |

-

|

| 189 |

-

## What is next?

|

| 190 |

-

|

| 191 |

-

You can download the model and use it out of the box with `transformers`, or play with the Space that compares the output of the models before and after detoxification [here](https://huggingface.co/spaces/ybelkada/detoxified-lms).

|


trl_md_files/dpo_trainer.mdx

DELETED

|

@@ -1,297 +0,0 @@

|

|

| 1 |

-

# DPO Trainer

|

| 2 |

-

|

| 3 |

-

TRL supports the DPO Trainer for training language models from preference data, as described in the paper [Direct Preference Optimization: Your Language Model is Secretly a Reward Model](https://huggingface.co/papers/2305.18290) by Rafailov et al., 2023. For a full example have a look at [`examples/scripts/dpo.py`](https://github.com/huggingface/trl/blob/main/examples/scripts/dpo.py).

|

| 4 |

-

|

| 5 |

-

The first step as always is to train your SFT model, to ensure the data we train on is in-distribution for the DPO algorithm.

|

| 6 |

-

|

| 7 |

-

## How DPO works

|

| 8 |

-

|

| 9 |

-

Fine-tuning a language model via DPO consists of two steps and is easier than PPO:

|

| 10 |

-

|

| 11 |

-

1. **Data collection**: Gather a preference dataset with pairs of chosen (positive) and rejected (negative) generations for a given prompt.

|

| 12 |

-

2. **Optimization**: Maximize the log-likelihood of the DPO loss directly.

|

| 13 |

-

|

| 14 |

-

DPO-compatible datasets can be found with [the tag `dpo` on Hugging Face Hub](https://huggingface.co/datasets?other=dpo). You can also explore the [librarian-bots/direct-preference-optimization-datasets](https://huggingface.co/collections/librarian-bots/direct-preference-optimization-datasets-66964b12835f46289b6ef2fc) Collection to identify datasets that are likely to support DPO training.

|

| 15 |

-

|

| 16 |

-

This process is illustrated in the sketch below (from [figure 1 of the original paper](https://huggingface.co/papers/2305.18290)):

|

| 17 |

-

|

| 18 |

-

<img width="835" alt="Screenshot 2024-03-19 at 12 39 41" src="https://github.com/huggingface/trl/assets/49240599/9150fac6-3d88-4ca2-8ec6-2a6f3473216d">

|

| 19 |

-

|

| 20 |

-

Read more about the DPO algorithm in the [original paper](https://huggingface.co/papers/2305.18290).

|

| 21 |

-

|

| 22 |

-

|

| 23 |

-

## Expected dataset format

|

| 24 |

-

|

| 25 |

-

The DPO trainer expects a very specific format for the dataset, since the model is trained to directly optimize the preference for the more relevant of two sentences. We provide an example from the [`Anthropic/hh-rlhf`](https://huggingface.co/datasets/Anthropic/hh-rlhf) dataset below:

|

| 26 |

-

|

| 27 |

-

<div style="text-align: center">

|

| 28 |

-

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/rlhf-antropic-example.png" width="50%">

|

| 29 |

-

</div>

|

| 30 |

-

|

| 31 |

-

Therefore the final dataset object should contain these 3 entries if you use the default [`DPODataCollatorWithPadding`] data collator. The entries should be named:

|

| 32 |

-

|

| 33 |

-

- `prompt`

|

| 34 |

-

- `chosen`

|

| 35 |

-

- `rejected`

|

| 36 |

-

|

| 37 |

-

for example:

|

| 38 |

-

|

| 39 |

-

```py

|

| 40 |

-

dpo_dataset_dict = {

|

| 41 |

-

"prompt": [

|

| 42 |

-

"hello",

|

| 43 |

-

"how are you",

|

| 44 |

-

"What is your name?",

|

| 45 |

-

"What is your name?",

|

| 46 |

-

"Which is the best programming language?",

|

| 47 |

-

"Which is the best programming language?",

|

| 48 |

-

"Which is the best programming language?",

|

| 49 |

-

],

|

| 50 |

-

"chosen": [

|

| 51 |

-

"hi nice to meet you",

|

| 52 |

-

"I am fine",

|

| 53 |

-

"My name is Mary",

|

| 54 |

-

"My name is Mary",

|

| 55 |

-

"Python",

|

| 56 |

-

"Python",

|

| 57 |

-

"Java",

|

| 58 |

-

],

|

| 59 |

-

"rejected": [

|

| 60 |

-

"leave me alone",

|

| 61 |

-

"I am not fine",

|

| 62 |

-

"Whats it to you?",

|

| 63 |

-

"I dont have a name",

|

| 64 |

-

"Javascript",

|

| 65 |

-

"C++",

|

| 66 |

-

"C++",

|

| 67 |

-

],

|

| 68 |

-

}

|

| 69 |

-

```

|

| 70 |

-

|

| 71 |

-

where `prompt` contains the context inputs, `chosen` contains the corresponding chosen responses and `rejected` contains the corresponding negative (rejected) responses. As can be seen, a prompt can have multiple responses, and this is reflected in the entries being repeated in the dictionary's value arrays.
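If your preference data is stored as one record per comparison, it can be reshaped into this column-wise layout with a few lines of plain Python. The sketch below is illustrative only: `to_dpo_columns` is a hypothetical helper, not part of TRL.

```python
def to_dpo_columns(triples):
    """Turn (prompt, chosen, rejected) triples into the column-wise dict shown above."""
    columns = {"prompt": [], "chosen": [], "rejected": []}
    for prompt, chosen, rejected in triples:
        columns["prompt"].append(prompt)
        columns["chosen"].append(chosen)
        columns["rejected"].append(rejected)
    return columns

# The same prompt appears twice because it has two rejected responses.
triples = [
    ("What is your name?", "My name is Mary", "Whats it to you?"),
    ("What is your name?", "My name is Mary", "I dont have a name"),
]
dpo_dataset_dict = to_dpo_columns(triples)

# All three columns must have the same length: one entry per preference pair.
assert len({len(column) for column in dpo_dataset_dict.values()}) == 1
```

A dict of this shape can then be wrapped with `datasets.Dataset.from_dict` before being passed to the trainer.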

|

| 72 |

-

|

| 73 |

-

[`DPOTrainer`] can be used to fine-tune visual language models (VLMs). In this case, the dataset must also contain the key `images`, and the trainer's `tokenizer` is the VLM's `processor`. For example, for Idefics2, the processor expects the dataset to have the following format:

|

| 74 |

-

|

| 75 |

-

Note: Currently, VLM support is exclusive to Idefics2 and does not extend to other VLMs.

|

| 76 |

-

|

| 77 |

-

```py

|

| 78 |

-

from PIL import Image

dpo_dataset_dict = {

|

| 79 |

-

'images': [

|

| 80 |

-

[Image.open('beach.jpg')],

|

| 81 |

-

[Image.open('street.jpg')],

|

| 82 |

-

],

|

| 83 |

-

'prompt': [

|

| 84 |

-

'The image <image> shows',

|

| 85 |

-

'<image> The image depicts',

|

| 86 |

-

],

|

| 87 |

-

'chosen': [

|

| 88 |

-

'a sunny beach with palm trees.',

|

| 89 |

-

'a busy street with several cars and buildings.',

|

| 90 |

-

],

|

| 91 |

-

'rejected': [

|

| 92 |

-

'a snowy mountain with skiers.',

|

| 93 |

-

'a calm countryside with green fields.',

|

| 94 |

-

],

|

| 95 |

-

}

|

| 96 |

-

```

|

| 97 |

-

|

| 98 |

-

## Expected model format

|

| 99 |

-

|

| 100 |

-

The DPO trainer expects a model of type `AutoModelForCausalLM` or `AutoModelForVision2Seq`, in contrast to PPO, which expects `AutoModelForCausalLMWithValueHead` for the value function.

|

| 101 |

-

|

| 102 |

-

## Using the `DPOTrainer`

|

| 103 |

-

|

| 104 |

-

For a detailed example have a look at the `examples/scripts/dpo.py` script. At a high level, we need to initialize the [`DPOTrainer`] with the `model` we wish to train, a reference `ref_model` that is used to calculate the implicit rewards of the preferred and rejected responses, a `beta` that is the hyperparameter of the implicit reward, and a dataset that contains the 3 entries listed above. Note that the `model` and `ref_model` need to have the same architecture (i.e. decoder-only or encoder-decoder).

|

| 105 |

-

|

| 106 |

-

```py

|

| 107 |

-

from trl import DPOConfig, DPOTrainer

training_args = DPOConfig(

|

| 108 |

-

    beta=0.1,

|

| 109 |

-

)

|

| 110 |

-

dpo_trainer = DPOTrainer(

|

| 111 |

-

    model,

|

| 112 |

-

    ref_model,

|

| 113 |

-

    args=training_args,

|

| 114 |

-

    train_dataset=train_dataset,

|

| 115 |

-

    tokenizer=tokenizer,  # for visual language models, use tokenizer=processor instead

|

| 116 |

-

)

|

| 117 |

-

```

|

| 118 |

-

|

| 119 |

-

After this one can then call:

|

| 120 |

-

|

| 121 |

-

```py

|

| 122 |

-

dpo_trainer.train()

|

| 123 |

-

```

|

| 124 |

-

|

| 125 |

-

Note that `beta` is the temperature parameter for the DPO loss, typically in the range of `0.1` to `0.5`. We ignore the reference model as `beta` -> 0.

|

| 126 |

-

|

| 127 |

-

## Loss functions

|

| 128 |

-

|

| 129 |

-

Given the preference data, we can fit a binary classifier according to the Bradley-Terry model and in fact the [DPO](https://huggingface.co/papers/2305.18290) authors propose the sigmoid loss on the normalized likelihood via the `logsigmoid` to fit a logistic regression. To use this loss, set the `loss_type="sigmoid"` (default) in the [`DPOConfig`].
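For intuition, here is a minimal scalar sketch of the sigmoid loss for a single preference pair, written in plain Python rather than with tensors. The function and argument names are illustrative; the inputs are assumed to be summed sequence log-probabilities under the policy and the reference model.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def dpo_sigmoid_loss(policy_chosen_logp, policy_rejected_logp,
                     ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Sigmoid DPO loss for one pair, from sequence log-probabilities."""
    # How much more the policy prefers `chosen` over `rejected` than the reference does.
    logits = (policy_chosen_logp - policy_rejected_logp) - (ref_chosen_logp - ref_rejected_logp)
    return -log_sigmoid(beta * logits)

# When the policy equals the reference, the loss is log(2) for every pair.
loss_at_init = dpo_sigmoid_loss(-12.0, -15.0, -12.0, -15.0)
# Raising the chosen log-probability relative to the reference lowers the loss.
loss_improved = dpo_sigmoid_loss(-10.0, -15.0, -12.0, -15.0)
```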

|

| 130 |

-

|

| 131 |

-

The [RSO](https://huggingface.co/papers/2309.06657) authors propose to use a hinge loss on the normalized likelihood from the [SLiC](https://huggingface.co/papers/2305.10425) paper. To use this loss, set the `loss_type="hinge"` in the [`DPOConfig`]. In this case, the `beta` is the reciprocal of the margin.
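A scalar sketch of the hinge variant shows why `beta` acts as the reciprocal of the margin. Here `logits` is assumed to be the difference between the policy's and the reference model's chosen-vs-rejected log-ratios; the function name is illustrative.

```python
def dpo_hinge_loss(logits, beta=0.1):
    """Hinge loss on the log-ratio difference `logits` for one preference pair."""
    # Zero loss once beta * logits >= 1, i.e. once logits reaches the margin 1 / beta.
    return max(0.0, 1.0 - beta * logits)

loss_no_preference = dpo_hinge_loss(0.0)    # policy == reference
loss_half_margin = dpo_hinge_loss(5.0)      # halfway to the margin of 10
loss_past_margin = dpo_hinge_loss(12.0)     # beyond the margin
```

With `beta=0.1` the margin is 10, so pairs whose log-ratio difference exceeds 10 no longer contribute to the gradient.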

|

| 132 |

-

|

| 133 |

-

The [IPO](https://huggingface.co/papers/2310.12036) authors provide a deeper theoretical understanding of the DPO algorithms, identify an issue with overfitting, and propose an alternative loss. To use the loss, set the `loss_type="ipo"` in the [`DPOConfig`]. In this case, the `beta` is the reciprocal of the gap between the log-likelihood ratios of the chosen vs the rejected completion pair, and thus the smaller the `beta`, the larger this gap is. As per the paper, the loss is averaged over the log-likelihoods of the completion (unlike DPO, where they are only summed).
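As a rough scalar sketch, the IPO loss regresses the log-ratio difference onto a fixed target instead of pushing it to infinity. The target `1 / (2 * beta)` follows the IPO paper as we read it; the function names are illustrative, and the inputs are assumed to be per-token log-probabilities that get averaged first.

```python
def average_logp(token_logps):
    """Length-normalized log-likelihood of a completion."""
    return sum(token_logps) / len(token_logps)

def ipo_loss(logits, beta=0.1):
    """Squared distance between the log-ratio difference and the target gap."""
    target_gap = 1.0 / (2.0 * beta)
    return (logits - target_gap) ** 2

# Averaged log-probabilities for one pair under policy and reference (dummy values).
policy_chosen = average_logp([-0.5, -1.5])
policy_rejected = average_logp([-2.0, -4.0])
ref_chosen = average_logp([-1.0, -1.0])
ref_rejected = average_logp([-1.0, -1.0])
logits = (policy_chosen - policy_rejected) - (ref_chosen - ref_rejected)
loss = ipo_loss(logits, beta=0.1)
```

Because the loss grows again once the gap overshoots the target, the policy is not rewarded for drifting arbitrarily far from the reference.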

|

| 134 |

-

|

| 135 |

-

The [cDPO](https://ericmitchell.ai/cdpo.pdf) is a tweak on the DPO loss where we assume that the preference labels are noisy with some probability. In this approach, the `label_smoothing` parameter in the [`DPOConfig`] is used to model the probability of existing label noise. To apply this conservative loss, set `label_smoothing` to a value greater than 0.0 (between 0.0 and 0.5; the default is 0.0).
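In scalar form, the conservative loss is a mixture of the sigmoid loss for the given label and for the flipped label. The sketch below is a minimal illustration; `logits` is assumed to be the difference between the policy's and the reference model's chosen-vs-rejected log-ratios, and the function names are illustrative.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def cdpo_loss(logits, beta=0.1, label_smoothing=0.0):
    """Sigmoid DPO loss with a probability `label_smoothing` that the label is flipped."""
    return (-(1.0 - label_smoothing) * log_sigmoid(beta * logits)
            - label_smoothing * log_sigmoid(-beta * logits))

plain = cdpo_loss(20.0, label_smoothing=0.0)      # reduces to the sigmoid loss
smoothed = cdpo_loss(20.0, label_smoothing=0.1)   # penalizes over-confident margins
```

With smoothing enabled, very large margins are no longer free, which keeps the policy from fitting noisy labels too aggressively.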

|

| 136 |

-

|

| 137 |

-

The [EXO](https://huggingface.co/papers/2402.00856) authors propose to minimize the reverse KL instead of the negative log-sigmoid loss of DPO which corresponds to forward KL. To use the loss set the `loss_type="exo_pair"` in the [`DPOConfig`]. Setting non-zero `label_smoothing` (default `1e-3`) leads to a simplified version of EXO on pair-wise preferences (see Eqn. (16) of the [EXO paper](https://huggingface.co/papers/2402.00856)). The full version of EXO uses `K>2` completions generated by the SFT policy, which becomes an unbiased estimator of the PPO objective (up to a constant) when `K` is sufficiently large.

|

| 138 |

-

|

| 139 |

-

The [NCA](https://huggingface.co/papers/2402.05369) authors show that NCA optimizes the absolute likelihood for each response rather than the relative likelihood. To use the loss, set the `loss_type="nca_pair"` in the [`DPOConfig`].

|

| 140 |

-

|

| 141 |

-

The [Robust DPO](https://huggingface.co/papers/2403.00409) authors propose an unbiased estimate of the DPO loss that is robust to preference noise in the data. Like in cDPO, it assumes that the preference labels are noisy with some probability. In this approach, the `label_smoothing` parameter in the [`DPOConfig`] is used to model the probability of existing label noise. To apply this conservative loss, set `label_smoothing` to a value greater than 0.0 (between 0.0 and 0.5; the default is 0.0) and set the `loss_type="robust"` in the [`DPOConfig`].
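The "unbiased" property can be checked numerically on a single pair. The sketch below writes the robust loss in scalar form as we understand it from the paper, then verifies that its expectation under label flips with probability `label_smoothing` equals the clean sigmoid loss. Function names are illustrative, and `logits` is assumed to be the difference between the policy's and the reference model's chosen-vs-rejected log-ratios.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def robust_dpo_loss(logits, beta=0.1, label_smoothing=0.1):
    """De-biased sigmoid loss; requires label_smoothing < 0.5."""
    eps = label_smoothing
    clean = -log_sigmoid(beta * logits)      # loss if the label is correct
    flipped = -log_sigmoid(-beta * logits)   # loss if the label is flipped
    return ((1.0 - eps) * clean - eps * flipped) / (1.0 - 2.0 * eps)

eps, h = 0.2, 7.0
# Expectation over noisy labels: correct with prob. 1 - eps, flipped with prob. eps.
expected = (1.0 - eps) * robust_dpo_loss(h, label_smoothing=eps) \
    + eps * robust_dpo_loss(-h, label_smoothing=eps)
clean_loss = -log_sigmoid(0.1 * h)
```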

|

| 142 |

-

|

| 143 |

-

The [BCO](https://huggingface.co/papers/2404.04656) authors train a binary classifier whose logit serves as a reward so that the classifier maps {prompt, chosen completion} pairs to 1 and {prompt, rejected completion} pairs to 0. To use this loss, set the `loss_type="bco_pair"` in the [`DPOConfig`].

|

| 144 |

-

|

| 145 |

-

The [TR-DPO](https://huggingface.co/papers/2404.09656) paper suggests syncing the reference model weights after every `ref_model_sync_steps` steps of SGD with weight `ref_model_mixup_alpha` during DPO training. To toggle this callback use the `sync_ref_model=True` in the [`DPOConfig`].
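The update can be pictured as a periodic soft copy of the policy weights into the reference model. The sketch below uses plain lists of floats in place of model parameters. The mixing direction (`alpha` weighting the policy, so that `alpha=1.0` is a hard copy) is our assumption about how `ref_model_mixup_alpha` is applied, and `sync_ref_weights` is a hypothetical helper.

```python
def sync_ref_weights(ref_weights, policy_weights, alpha):
    """Soft update: move each reference weight a fraction `alpha` toward the policy."""
    return [(1.0 - alpha) * r + alpha * p for r, p in zip(ref_weights, policy_weights)]

ref = [0.0, 1.0]
policy = [1.0, 3.0]
ref_model_sync_steps = 2  # hypothetical value for illustration

for step in range(1, 5):
    # ... one optimizer step on the policy would happen here ...
    if step % ref_model_sync_steps == 0:
        ref = sync_ref_weights(ref, policy, alpha=0.5)
```

After two syncs with `alpha=0.5`, the reference has covered three quarters of the distance to the (here fixed) policy weights.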

|

| 146 |

-

|

| 147 |

-

The [RPO](https://huggingface.co/papers/2404.19733) paper implements an iterative preference tuning algorithm using a loss related to the RPO loss in this [paper](https://huggingface.co/papers/2405.16436) that essentially consists of a weighted SFT loss on the chosen preferences together with the DPO loss. To use this loss, set the `rpo_alpha` in the [`DPOConfig`] to an appropriate value. The paper suggests setting this weight to 1.0.

|

| 148 |

-

|

| 149 |

-

The [SPPO](https://huggingface.co/papers/2405.00675) authors claim that SPPO is capable of solving the Nash equilibrium iteratively by pushing the chosen rewards to be as large as 1/2 and the rejected rewards to be as small as -1/2 and can alleviate data sparsity issues. The implementation approximates this algorithm by employing hard label probabilities, assigning 1 to the winner and 0 to the loser. To use this loss, set the `loss_type="sppo_hard"` in the [`DPOConfig`].
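A scalar sketch of the hard-label variant, under the assumption that the implicit reward of a response is `beta` times its policy-vs-reference log-ratio: the loss then pulls the chosen log-ratio toward `0.5 / beta` and the rejected one toward `-0.5 / beta`. The function name is illustrative and the form follows our reading of the description above.

```python
def sppo_hard_loss(policy_chosen_logp, policy_rejected_logp,
                   ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Squared-error loss that targets rewards of +1/2 (chosen) and -1/2 (rejected)."""
    chosen_log_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_log_ratio = policy_rejected_logp - ref_rejected_logp
    return ((chosen_log_ratio - 0.5 / beta) ** 2
            + (rejected_log_ratio + 0.5 / beta) ** 2)

# At initialization both log-ratios are 0, so the loss is 2 * (0.5 / beta) ** 2.
loss_at_init = sppo_hard_loss(-12.0, -15.0, -12.0, -15.0)
# Log-ratios of +5 and -5 hit both targets exactly when beta = 0.1.
loss_at_target = sppo_hard_loss(-7.0, -20.0, -12.0, -15.0)
```

Unlike the sigmoid loss, each response is pushed toward an absolute target, so the chosen and rejected terms are optimized independently.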

|

| 150 |

-

|

| 151 |

-