马 仕镕

committed on

Commit

•

807017b

1

Parent(s):

634e29e

Update README

Browse files- README.md +179 -2

- figures/arena1.png +0 -0

- figures/arena2.png +0 -0

README.md

CHANGED

|

@@ -1,5 +1,182 @@

|

|

| 1 |

---

|

| 2 |

license: other

|

| 3 |

-

license_name: deepseek

|

| 4 |

-

license_link: LICENSE

|

| 5 |

---


| 1 |

---

|

| 2 |

license: other

|

| 3 |

+

license_name: deepseek

|

| 4 |

+

license_link: https://github.com/deepseek-ai/DeepSeek-V2/blob/main/LICENSE-MODEL

|

| 5 |

---

|

| 6 |

+

|

| 7 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 8 |

+

<!-- markdownlint-disable html -->

|

| 9 |

+

<!-- markdownlint-disable no-duplicate-header -->

|

| 10 |

+

|

| 11 |

+

<div align="center">

|

| 12 |

+

<img src="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/logo.svg?raw=true" width="60%" alt="DeepSeek-V2" />

|

| 13 |

+

</div>

|

| 14 |

+

<hr>

|

| 15 |

+

<div align="center" style="line-height: 1;">

|

| 16 |

+

<a href="https://www.deepseek.com/" target="_blank" style="margin: 2px;">

|

| 17 |

+

<img alt="Homepage" src="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/badge.svg?raw=true" style="display: inline-block; vertical-align: middle;"/>

|

| 18 |

+

</a>

|

| 19 |

+

<a href="https://chat.deepseek.com/" target="_blank" style="margin: 2px;">

|

| 20 |

+

<img alt="Chat" src="https://img.shields.io/badge/🤖%20Chat-DeepSeek%20V2-536af5?color=536af5&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 21 |

+

</a>

|

| 22 |

+

<a href="https://huggingface.co/deepseek-ai" target="_blank" style="margin: 2px;">

|

| 23 |

+

<img alt="Hugging Face" src="https://img.shields.io/badge/%F0%9F%A4%97%20Hugging%20Face-DeepSeek%20AI-ffc107?color=ffc107&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 24 |

+

</a>

|

| 25 |

+

</div>

|

| 26 |

+

|

| 27 |

+

<div align="center" style="line-height: 1;">

|

| 28 |

+

<a href="https://discord.gg/Tc7c45Zzu5" target="_blank" style="margin: 2px;">

|

| 29 |

+

<img alt="Discord" src="https://img.shields.io/badge/Discord-DeepSeek%20AI-7289da?logo=discord&logoColor=white&color=7289da" style="display: inline-block; vertical-align: middle;"/>

|

| 30 |

+

</a>

|

| 31 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/qr.jpeg?raw=true" target="_blank" style="margin: 2px;">

|

| 32 |

+

<img alt="Wechat" src="https://img.shields.io/badge/WeChat-DeepSeek%20AI-brightgreen?logo=wechat&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 33 |

+

</a>

|

| 34 |

+

<a href="https://twitter.com/deepseek_ai" target="_blank" style="margin: 2px;">

|

| 35 |

+

<img alt="Twitter Follow" src="https://img.shields.io/badge/Twitter-deepseek_ai-white?logo=x&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 36 |

+

</a>

|

| 37 |

+

</div>

|

| 38 |

+

|

| 39 |

+

<div align="center" style="line-height: 1;">

|

| 40 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/LICENSE-CODE" style="margin: 2px;">

|

| 41 |

+

<img alt="Code License" src="https://img.shields.io/badge/Code_License-MIT-f5de53?&color=f5de53" style="display: inline-block; vertical-align: middle;"/>

|

| 42 |

+

</a>

|

| 43 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/LICENSE-MODEL" style="margin: 2px;">

|

| 44 |

+

<img alt="Model License" src="https://img.shields.io/badge/Model_License-Model_Agreement-f5de53?&color=f5de53" style="display: inline-block; vertical-align: middle;"/>

|

| 45 |

+

</a>

|

| 46 |

+

</div>

|

| 47 |

+

|

| 48 |

+

<p align="center">

|

| 49 |

+

<a href="#2-model-downloads">Model Download</a> |

|

| 50 |

+

<a href="#3-evaluation-results">Evaluation Results</a> |

|

| 51 |

+

<a href="#4-model-architecture">Model Architecture</a> |

|

| 52 |

+

<a href="#6-api-platform">API Platform</a> |

|

| 53 |

+

<a href="#8-license">License</a> |

|

| 54 |

+

<a href="#9-citation">Citation</a>

|

| 55 |

+

</p>

|

| 56 |

+

|

| 57 |

+

<p align="center">

|

| 58 |

+

<a href="https://arxiv.org/abs/2405.04434"><b>Paper Link</b>👁️</a>

|

| 59 |

+

</p>

|

| 60 |

+

|

| 61 |

+

# DeepSeek-V2-Chat-0628

|

| 62 |

+

|

| 63 |

+

## 1. Introduction

|

| 64 |

+

|

| 65 |

+

DeepSeek-V2-Chat-0628 is an improved version of DeepSeek-V2-Chat. For model details, see the [DeepSeek-V2 page](https://huggingface.co/deepseek-ai/DeepSeek-V2-Chat).

|

| 66 |

+

|

| 67 |

+

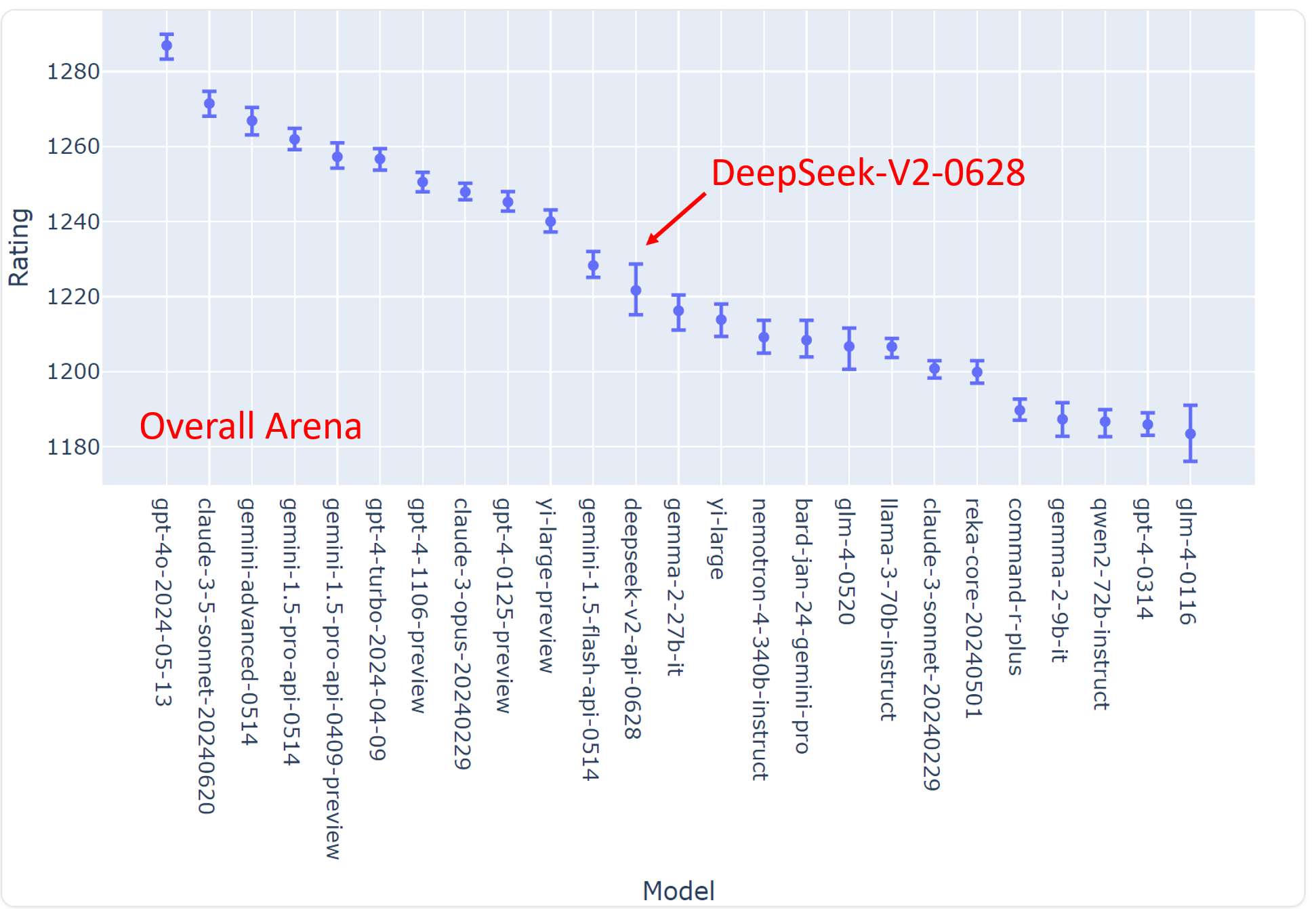

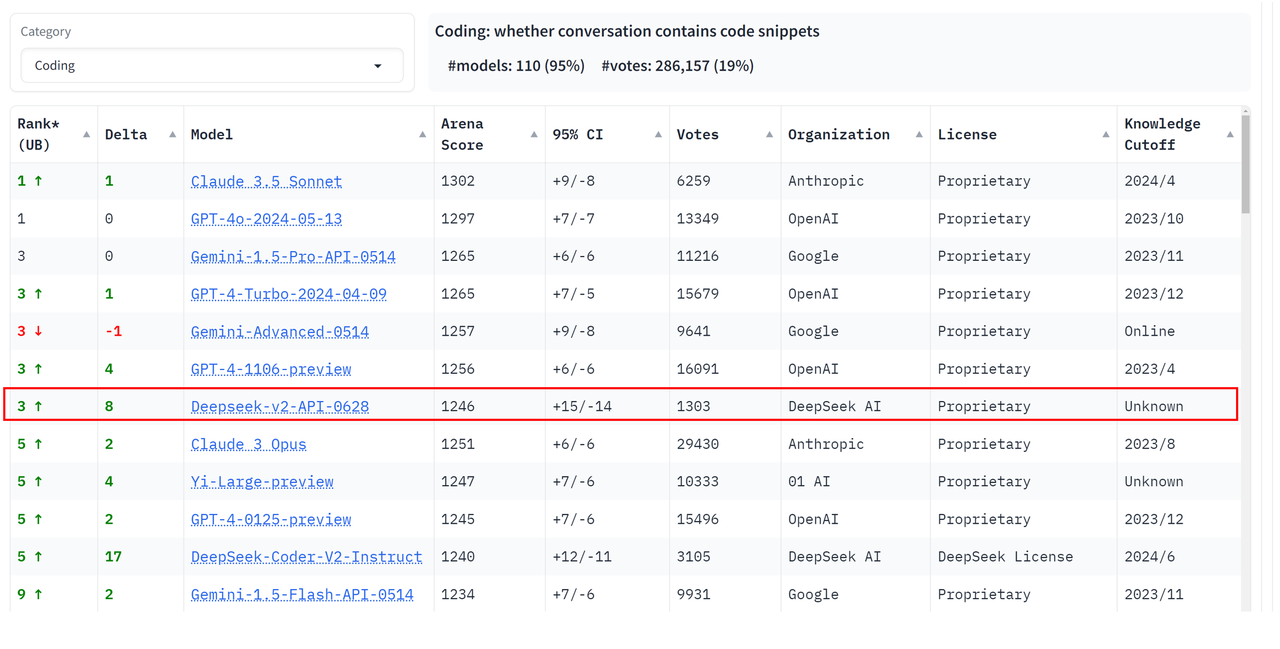

DeepSeek-V2-Chat-0628 holds the following positions on the LMSYS Chatbot Arena Leaderboard:

|

| 68 |

+

|

| 69 |

+

- Overall Ranking: #11, outperforming all other open-source models.

|

| 70 |

+

- Coding Arena Ranking: #3, showcasing exceptional capabilities in coding tasks.

|

| 71 |

+

- Hard Prompts Arena Ranking: #3, demonstrating strong performance on challenging prompts.

|

| 72 |

+

|

| 73 |

+

<p align="center">

|

| 74 |

+

<img width="90%" src="figures/arena1.png" />

|

| 75 |

+

</p>

|

| 76 |

+

|

| 77 |

+

<p align="center">

|

| 78 |

+

<img width="90%" src="figures/arena2.png" />

|

| 79 |

+

</p>

|

| 80 |

+

|

| 81 |

+

## 2. Improvement

|

| 82 |

+

|

| 83 |

+

Compared with the previous DeepSeek-V2-Chat release, this version improves in the following areas:

|

| 84 |

+

|

| 85 |

+

- Code: HumanEval Pass@1 increased from 79.88% to 84.76%.

|

| 86 |

+

- Mathematics: MATH ACC@1 improved from 55.02% to 71.02%.

|

| 87 |

+

- Reasoning: Big-Bench-Hard (BBH) improved from 78.56% to 83.40%.

|

| 88 |

+

- Instruction Following: IFEval Benchmark Prompt-Level accuracy improved from 63.9% to 77.6%.

|

| 89 |

+

- JSON Format Output: Internal test set performance increased from 78% to 85%.

|

| 90 |

+

- Additionally, in the Arena-Hard evaluation, the win rate against GPT-4-0314 increased from 41.6% to 68.3%. Instruction following for content placed in the "system" message has also been optimized, which noticeably improves the user experience for immersive translation, RAG, and similar tasks.

|

| 91 |

+

|

| 92 |

+

## 3. How to run locally

|

| 93 |

+

|

| 94 |

+

**Running inference with DeepSeek-V2-Chat-0628 in BF16 format requires eight 80 GB GPUs.**
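
As a rough sanity check on this requirement, the sketch below estimates the memory needed for the weights alone. It assumes the 236B total parameter count reported for DeepSeek-V2 (a figure not stated in this README) and 2 bytes per BF16 parameter. It ignores the KV cache, activations, and framework overhead, so real usage will be higher.

```python
# Back-of-the-envelope estimate. The parameter count is an assumption
# taken from the DeepSeek-V2 paper, not from this README.
TOTAL_PARAMS = 236e9      # assumed total parameters (all experts included)
BYTES_PER_PARAM = 2       # BF16 stores each parameter in 2 bytes
NUM_GPUS = 8
GPU_MEMORY_GB = 80

weights_gb = TOTAL_PARAMS * BYTES_PER_PARAM / 1e9  # decimal gigabytes
total_gpu_gb = NUM_GPUS * GPU_MEMORY_GB
headroom_gb = total_gpu_gb - weights_gb            # left for KV cache, activations, overhead

print(f"weights: {weights_gb:.0f} GB")
print(f"available: {total_gpu_gb} GB")
print(f"headroom: {headroom_gb:.0f} GB")
```

Under these assumptions the weights alone would not fit on fewer than six 80 GB devices, which is consistent with the eight-GPU requirement once runtime memory is accounted for.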

|

| 95 |

+

### Inference with Hugging Face Transformers

|

| 96 |

+

You can use [Hugging Face Transformers](https://github.com/huggingface/transformers) directly for model inference.

|

| 97 |

+

|

| 98 |

+

```python

|

| 99 |

+

import torch

|

| 100 |

+

from transformers import AutoTokenizer, AutoModelForCausalLM, GenerationConfig

|

| 101 |

+

|

| 102 |

+

model_name = "deepseek-ai/DeepSeek-V2-Chat-0628"

|

| 103 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)

|

| 104 |

+

# `max_memory` should be set based on your devices

|

| 105 |

+

max_memory = {i: "75GB" for i in range(8)}

|

| 106 |

+

# `device_map` cannot be set to `auto`

|

| 107 |

+

model = AutoModelForCausalLM.from_pretrained(model_name, trust_remote_code=True, device_map="sequential", torch_dtype=torch.bfloat16, max_memory=max_memory, attn_implementation="eager")

|

| 108 |

+

model.generation_config = GenerationConfig.from_pretrained(model_name)

|

| 109 |

+

model.generation_config.pad_token_id = model.generation_config.eos_token_id

|

| 110 |

+

|

| 111 |

+

messages = [

|

| 112 |

+

{"role": "user", "content": "Write a piece of quicksort code in C++"}

|

| 113 |

+

]

|

| 114 |

+

input_tensor = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt")

|

| 115 |

+

outputs = model.generate(input_tensor.to(model.device), max_new_tokens=100)

|

| 116 |

+

|

| 117 |

+

result = tokenizer.decode(outputs[0][input_tensor.shape[1]:], skip_special_tokens=True)

|

| 118 |

+

print(result)

|

| 119 |

+

```

|

| 120 |

+

|

| 121 |

+

The complete chat template can be found in `tokenizer_config.json` in the Hugging Face model repository.

|

| 122 |

+

|

| 123 |

+

**Note: The chat template has been updated compared to the previous DeepSeek-V2-Chat version.**

|

| 124 |

+

|

| 125 |

+

An example of the chat template is shown below:

|

| 126 |

+

|

| 127 |

+

```bash

|

| 128 |

+

<|begin▁of▁sentence|><|User|>{user_message_1}<|Assistant|>{assistant_message_1}<|end▁of▁sentence|><|User|>{user_message_2}<|Assistant|>

|

| 129 |

+

```

|

| 130 |

+

|

| 131 |

+

You can also add an optional system message:

|

| 132 |

+

|

| 133 |

+

```bash

|

| 134 |

+

<|begin▁of▁sentence|>{system_message}

|

| 135 |

+

|

| 136 |

+

<|User|>{user_message_1}<|Assistant|>{assistant_message_1}<|end▁of▁sentence|><|User|>{user_message_2}<|Assistant|>

|

| 137 |

+

```
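
To make the layout of the two templates above concrete, here is a minimal sketch that assembles the same string by hand. The helper `render_prompt` and its constants are hypothetical and written only for illustration. The special-token strings are copied from the examples above and should be checked against `tokenizer_config.json`. In practice `tokenizer.apply_chat_template` remains the authoritative way to build prompts.

```python
# Hypothetical helper that mirrors the template examples above.
# Token strings are copied from this README; verify them against
# tokenizer_config.json before relying on them.
BOS = "<|begin▁of▁sentence|>"
EOS = "<|end▁of▁sentence|>"
USER = "<|User|>"
ASSISTANT = "<|Assistant|>"


def render_prompt(messages, add_generation_prompt=True):
    """Format a list of {"role", "content"} dicts as one prompt string."""
    parts = [BOS]
    for message in messages:
        role, content = message["role"], message["content"]
        if role == "system":
            # The optional system message is followed by a blank line.
            parts.append(content + "\n\n")
        elif role == "user":
            parts.append(USER + content)
        elif role == "assistant":
            # Each completed assistant turn is closed with the EOS token.
            parts.append(ASSISTANT + content + EOS)
        else:
            raise ValueError(f"unsupported role: {role!r}")
    if add_generation_prompt:
        # Leave the prompt open so the model writes the next assistant turn.
        parts.append(ASSISTANT)
    return "".join(parts)


example = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello!"},
    {"role": "user", "content": "Who are you?"},
]
print(render_prompt(example))
```

Comparing this output with `tokenizer.apply_chat_template(example, tokenize=False, add_generation_prompt=True)` is a quick way to confirm that a hand-built prompt matches the updated template.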

|

| 138 |

+

|

| 139 |

+

### Inference with vLLM (recommended)

|

| 140 |

+

To use [vLLM](https://github.com/vllm-project/vllm) for model inference, merge this pull request into your vLLM codebase: https://github.com/vllm-project/vllm/pull/4650.

|

| 141 |

+

|

| 142 |

+

```python

|

| 143 |

+

from transformers import AutoTokenizer

|

| 144 |

+

from vllm import LLM, SamplingParams

|

| 145 |

+

|

| 146 |

+

max_model_len, tp_size = 8192, 8

|

| 147 |

+

model_name = "deepseek-ai/DeepSeek-V2-Chat-0628"

|

| 148 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 149 |

+

llm = LLM(model=model_name, tensor_parallel_size=tp_size, max_model_len=max_model_len, trust_remote_code=True, enforce_eager=True)

|

| 150 |

+

sampling_params = SamplingParams(temperature=0.3, max_tokens=256, stop_token_ids=[tokenizer.eos_token_id])

|

| 151 |

+

|

| 152 |

+

messages_list = [

|

| 153 |

+

[{"role": "user", "content": "Who are you?"}],

|

| 154 |

+

[{"role": "user", "content": "Translate the following content into Chinese directly: DeepSeek-V2 adopts innovative architectures to guarantee economical training and efficient inference."}],

|

| 155 |

+

[{"role": "user", "content": "Write a piece of quicksort code in C++."}],

|

| 156 |

+

]

|

| 157 |

+

|

| 158 |

+

prompt_token_ids = [tokenizer.apply_chat_template(messages, add_generation_prompt=True) for messages in messages_list]

|

| 159 |

+

|

| 160 |

+

outputs = llm.generate(prompt_token_ids=prompt_token_ids, sampling_params=sampling_params)

|

| 161 |

+

|

| 162 |

+

generated_text = [output.outputs[0].text for output in outputs]

|

| 163 |

+

print(generated_text)

|

| 164 |

+

```

|

| 165 |

+

|

| 166 |

+

## 4. License

|

| 167 |

+

This code repository is licensed under [the MIT License](LICENSE-CODE). The use of DeepSeek-V2 Base/Chat models is subject to [the Model License](LICENSE-MODEL). DeepSeek-V2 series (including Base and Chat) supports commercial use.

|

| 168 |

+

|

| 169 |

+

## 5. Citation

|

| 170 |

+

```

|

| 171 |

+

@misc{deepseekv2,

|

| 172 |

+

title={DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model},

|

| 173 |

+

author={DeepSeek-AI},

|

| 174 |

+

year={2024},

|

| 175 |

+

eprint={2405.04434},

|

| 176 |

+

archivePrefix={arXiv},

|

| 177 |

+

primaryClass={cs.CL}

|

| 178 |

+

}

|

| 179 |

+

```

|

| 180 |

+

|

| 181 |

+

## 6. Contact

|

| 182 |

+

If you have any questions, please raise an issue or contact us at [[email protected]]([email protected]).

|

figures/arena1.png

ADDED

|

figures/arena2.png

ADDED

|