Commit

•

f5b866b

1

Parent(s):

de30f79

Upload 26 files

- .gitattributes +17 -0

- README.md +263 -3

- assets/comparisons/bear.jpg +0 -0

- assets/comparisons/lady.jpg +3 -0

- assets/comparisons/lime.jpg +3 -0

- assets/comparisons/moon.jpg +0 -0

- assets/comparisons/scars.jpg +0 -0

- assets/comparisons/selfie.jpg +0 -0

- assets/comparisons/teal_woman.jpg +3 -0

- assets/comparisons/witch.jpg +3 -0

- assets/comparisons_full/comparison_0.jpg +3 -0

- assets/comparisons_full/comparison_1.jpg +3 -0

- assets/comparisons_full/comparison_10.jpg +3 -0

- assets/comparisons_full/comparison_11.jpg +3 -0

- assets/comparisons_full/comparison_12.jpg +3 -0

- assets/comparisons_full/comparison_2.jpg +3 -0

- assets/comparisons_full/comparison_3.jpg +3 -0

- assets/comparisons_full/comparison_4.jpg +3 -0

- assets/comparisons_full/comparison_5.jpg +3 -0

- assets/comparisons_full/comparison_6.jpg +3 -0

- assets/comparisons_full/comparison_7.jpg +3 -0

- assets/comparisons_full/comparison_8.jpg +3 -0

- assets/comparisons_full/comparison_9.jpg +3 -0

- assets/comparisons_full/prompts.py +54 -0

- assets/science.png +0 -0

- assets/splash.jpg +0 -0

- pipeline.py +1813 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,20 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|


| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/comparisons_full/comparison_0.jpg filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

assets/comparisons_full/comparison_1.jpg filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

assets/comparisons_full/comparison_10.jpg filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

assets/comparisons_full/comparison_11.jpg filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

assets/comparisons_full/comparison_12.jpg filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

assets/comparisons_full/comparison_2.jpg filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

assets/comparisons_full/comparison_3.jpg filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

assets/comparisons_full/comparison_4.jpg filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

assets/comparisons_full/comparison_5.jpg filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

assets/comparisons_full/comparison_6.jpg filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

assets/comparisons_full/comparison_7.jpg filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

assets/comparisons_full/comparison_8.jpg filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

assets/comparisons_full/comparison_9.jpg filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

assets/comparisons/lady.jpg filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

assets/comparisons/lime.jpg filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

assets/comparisons/teal_woman.jpg filter=lfs diff=lfs merge=lfs -text

|

| 52 |

+

assets/comparisons/witch.jpg filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,3 +1,263 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|


| 1 |

+

# LibreFLUX: A free, de-distilled FLUX model

|

| 2 |

+

|

| 3 |

+

LibreFLUX is an Apache 2.0 version of [FLUX.1-schnell](https://huggingface.co/black-forest-labs/FLUX.1-schnell) that provides a full T5 context length, uses attention masking, has classifier-free guidance restored, and has had most of the FLUX aesthetic finetuning/DPO removed. That means it's a lot uglier than base FLUX, but it has the potential to be more easily finetuned to any new distribution. It keeps in mind the core tenets of open source software: that it should be difficult to use, slower and clunkier than a proprietary solution, and have an aesthetic trapped somewhere inside the early 2000s.

|

| 4 |

+

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

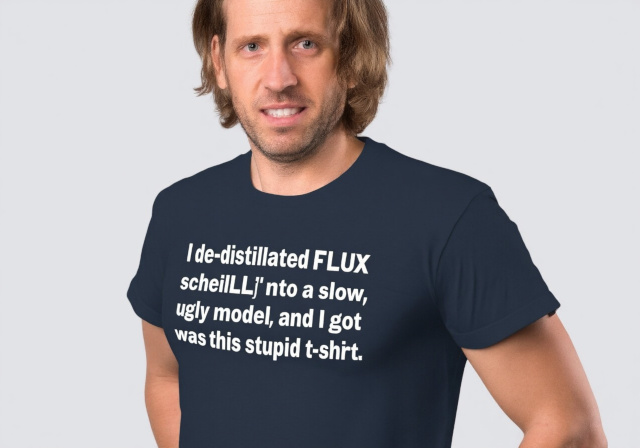

> The image features a man standing confidently, wearing a simple t-shirt with a humorous and quirky message printed across the front. The t-shirt reads: "I de-distilled FLUX into a slow, ugly model and all I got was this stupid t-shirt." The man’s expression suggests a mix of pride and irony, as if he's aware of the complexity behind the statement, yet amused by the underwhelming reward. The background is neutral, keeping the focus on the man and his t-shirt, which pokes fun at the frustrating and often anticlimactic nature of technical processes or complex problem-solving, distilled into a comically understated punchline.

|

| 8 |

+

|

| 9 |

+

## Table of Contents

|

| 10 |

+

|

| 11 |

+

- [LibreFLUX: A free, de-distilled FLUX model](#libreflux-a-free-de-distilled-flux-model)

|

| 12 |

+

- [Usage](#usage)

|

| 13 |

+

- [Non-technical Report on Schnell De-distillation](#non-technical-report-on-schnell-de-distillation)

|

| 14 |

+

- [Why](#why)

|

| 15 |

+

- [Restoring the Original Training Objective](#restoring-the-original-training-objective)

|

| 16 |

+

- [FLUX and Attention Masking](#flux-and-attention-masking)

|

| 17 |

+

- [Make De-distillation Go Fast and Fit in Small GPUs](#make-de-distillation-go-fast-and-fit-in-small-gpus)

|

| 18 |

+

- [Selecting Better Layers to Train with LoKr](#selecting-better-layers-to-train-with-lokr)

|

| 19 |

+

- [Beta Timestep Scheduling and Timestep Stratification](#beta-timestep-scheduling-and-timestep-stratification)

|

| 20 |

+

- [Datasets](#datasets)

|

| 21 |

+

- [Training](#training)

|

| 22 |

+

- [Post-hoc "EMA"](#post-hoc-ema)

|

| 23 |

+

- [Results](#results)

|

| 24 |

+

- [Closing Thoughts](#closing-thoughts)

|

| 25 |

+

- [Contacting Me and Grants](#contacting-me-and-grants)

|

| 26 |

+

- [Citation](#citation)

|

| 27 |

+

|

| 28 |

+

# Usage

|

| 29 |

+

|

| 30 |

+

To use the model, just call the custom pipeline using [diffusers](https://github.com/huggingface/diffusers).

|

| 31 |

+

|

| 32 |

+

```py

|

| 33 |

+

from diffusers import DiffusionPipeline

|

| 34 |

+

pipeline = DiffusionPipeline.from_pretrained(

|

| 35 |

+

"jimmycarter/LibreFLUX",

|

| 36 |

+

custom_pipeline="jimmycarter/LibreFLUX",

|

| 37 |

+

use_safetensors=True,

|

| 38 |

+

)

|

| 39 |

+

|

| 40 |

+

# High VRAM

|

| 41 |

+

prompt = "Photograph of a chalk board on which is written: 'I thought what I'd do was, I'd pretend I was one of those deaf-mutes.'"

|

| 42 |

+

negative_prompt = "blurry"

|

| 43 |

+

images = pipeline(

|

| 44 |

+

prompt=prompt,

|

| 45 |

+

negative_prompt=negative_prompt,

|

| 46 |

+

)

|

| 47 |

+

images[0].save('chalkboard.png')

|

| 48 |

+

|

| 49 |

+

# If you have <=24 GB VRAM, try:

|

| 50 |

+

# ! pip install optimum-quanto

|

| 51 |

+

# Then

|

| 52 |

+

from optimum.quanto import freeze, quantize, qint8

|

| 53 |

+

quantize(

|

| 54 |

+

    pipeline.transformer,

|

| 55 |

+

    weights=qint8,

|

| 56 |

+

    exclude=[

|

| 57 |

+

        "*.norm", "*.norm1", "*.norm2", "*.norm2_context",

|

| 58 |

+

        "proj_out", "x_embedder", "norm_out", "context_embedder",

|

| 59 |

+

    ],

|

| 60 |

+

)

|

| 61 |

+

freeze(pipeline.transformer)

|

| 62 |

+

pipeline.enable_model_cpu_offload()

|

| 63 |

+

images = pipeline(

|

| 64 |

+

prompt=prompt,

|

| 65 |

+

negative_prompt=negative_prompt,

|

| 66 |

+

device=None,

|

| 67 |

+

)

|

| 68 |

+

images[0].save('chalkboard.png')

|

| 69 |

+

```

|

| 70 |

+

|

| 71 |

+

# Non-technical Report on Schnell De-distillation

|

| 72 |

+

|

| 73 |

+

Welcome to my non-technical report on de-distilling FLUX.1-schnell in the most un-scientific way possible with extremely limited resources. I'm not going to claim I made a good model, but I did make a model. It was trained on about 1,500 H100 hour equivalents.

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

**Everyone is ~~an artist~~ a machine learning researcher.**

|

| 78 |

+

|

| 79 |

+

## Why

|

| 80 |

+

|

| 81 |

+

FLUX is a good text-to-image model, but the only versions of it that are out are distilled. FLUX.1-dev is distilled so that you don't need to use CFG (classifier-free guidance): instead of making one sample each for the conditional (your prompt) and the unconditional (negative prompt), you only have to make the conditional one. This means that FLUX.1-dev is about twice as fast as the model without distillation.

|

| 82 |

+

|

| 83 |

+

FLUX.1-schnell (German for "fast") is further distilled so that you only need 4 steps of conditional generation to get an image. Importantly, FLUX.1-schnell has an Apache-2.0 license, so you can use it freely without having to obtain a commercial license from Black Forest Labs. Out of the box, schnell is pretty bad when you use CFG unless you skip the first couple of steps.

|

| 84 |

+

|

| 85 |

+

The FLUX distilled models are created from their base, non-distilled models by [training a student model (distilled) on output from the teacher model (non-distilled), along with some tricks like an adversarial network](https://arxiv.org/abs/2403.12015).

|

| 86 |

+

|

| 87 |

+

For de-distilled models, image generation takes a little less than twice as long because you need to compute a sample for both conditional and unconditional images at each step. The benefit is you can use them commercially for free, training is a little easier, and they may be more creative.
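To make the cost of restored CFG concrete, here is a minimal sketch of the guidance step, assuming the common formulation in which the guided prediction extrapolates from the unconditional prediction towards the conditional one. The name `apply_cfg` and the toy values are hypothetical and are not taken from the LibreFLUX pipeline.

```python
def apply_cfg(cond_pred, uncond_pred, guidance_scale):
    """Combine two model predictions with classifier-free guidance.

    Assumes the common formulation:
        guided = uncond + scale * (cond - uncond)
    """
    return [u + guidance_scale * (c - u) for c, u in zip(cond_pred, uncond_pred)]


# Toy predictions standing in for the two forward passes done at each step
cond = [1.0, 2.0, 3.0]
uncond = [0.5, 2.0, 2.0]

# A scale of 1.0 returns the conditional prediction unchanged
guided = apply_cfg(cond, uncond, guidance_scale=1.0)
```

Both predictions have to be computed at every sampling step, which is where the extra inference time comes from.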

|

| 88 |

+

|

| 89 |

+

## Restoring the original training objective

|

| 90 |

+

|

| 91 |

+

This part is actually really easy. You just train it on the normal flow-matching objective with MSE loss and the model starts learning how to do it again. That being said, I don't think either LibreFLUX or [OpenFLUX.1](https://huggingface.co/ostris/OpenFLUX.1) managed to fully de-distill the model. The evidence I see for that is that both models will either get strange shadows that overwhelm the image or blurriness when using CFG scale values greater than 4.0. Neither of us trained very long in comparison to the training for the original model (assumed to be around 0.5-2.0 million H100 hours), so it's not particularly surprising.
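For readers who have not seen the objective written out, this is a rough sketch of a flow-matching MSE loss for a single sample. It assumes the rectified-flow convention (linear interpolation between data and noise, with the velocity as the target); the exact convention used in training is not stated here, and `flow_matching_loss` and `zero_model` are hypothetical names.

```python
import random


def flow_matching_loss(model, x0, sigma, rng):
    """MSE between predicted and target velocity for one sample.

    Assumes the rectified-flow convention:
        x_t    = (1 - sigma) * x0 + sigma * noise
        target = noise - x0
    """
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [(1.0 - sigma) * a + sigma * n for a, n in zip(x0, noise)]
    target = [n - a for a, n in zip(x0, noise)]
    pred = model(x_t, sigma)
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(x0)


def zero_model(x_t, sigma):
    # Hypothetical stand-in for the transformer: always predicts zero velocity
    return [0.0 for _ in x_t]


rng = random.Random(0)
loss = flow_matching_loss(zero_model, x0=[0.1, -0.3, 0.7, 0.2], sigma=0.5, rng=rng)
```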

|

| 92 |

+

|

| 93 |

+

## FLUX and attention masking

|

| 94 |

+

|

| 95 |

+

FLUX models use a text model called T5-XXL to get most of their conditioning for the text-to-image task. Importantly, they pad the text out to either 256 (schnell) or 512 (dev) tokens. 512 tokens is the maximum trained length for the model. By padding, I mean they append padding tokens after the end of the text until the sequence reaches this length.

|

| 96 |

+

|

| 97 |

+

This results in the model using these padding tokens to [store information](https://arxiv.org/abs/2309.16588). When you [visualize the attention maps of the tokens in the padding segment of the text encoder](https://github.com/kaibioinfo/FluxAttentionMap/blob/main/attentionmap.ipynb), you can see that about 10-40 tokens shortly after the last token of the text and about 10-40 tokens at the end of the padding contain information which the model uses to make images. Because these are normally used to store information, it means that any prompt long enough to not have some of these padding tokens will end up with degraded performance.

|

| 98 |

+

|

| 99 |

+

It's easy to prevent this by masking out these padding tokens during attention. BFL and their engineers know this, but they probably decided against it because the model works as is, and because most fast implementations of attention only support causal (LLM-style) masking, so skipping the padding mask let them train faster.
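As an illustration of what masking padding tokens means, here is a small sketch of a softmax over attention scores for one query in which padded key positions get zero weight. It is a toy version in plain Python, not the SimpleTuner implementation; `masked_attention_weights` is a hypothetical name, and the sketch assumes at least one key is a real token.

```python
import math


def masked_attention_weights(scores, key_is_real):
    """Softmax over attention scores, ignoring padded key positions.

    scores: raw query-key scores for one query, one float per key
    key_is_real: True for real tokens, False for padding
    """
    masked = [s if keep else float("-inf") for s, keep in zip(scores, key_is_real)]
    peak = max(masked)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]


# Three real tokens followed by two padding tokens with large raw scores
weights = masked_attention_weights(
    scores=[0.2, 1.5, -0.3, 4.0, 4.0],
    key_is_real=[True, True, True, False, False],
)
```

Without the mask, the two padding positions would receive most of the attention here, which is the kind of information storage in padding described above.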

|

| 100 |

+

|

| 101 |

+

I already [implemented attention masking](https://github.com/bghira/SimpleTuner/blob/main/helpers/models/flux/transformer.py#L404-L406) and I would like to be able to use all 512 tokens without degradation, so I did my finetune with it on. Small-scale finetunes with it on tend to damage the model, but since I needed to train schnell a lot to get it out of distillation anyway, I figured adding it probably didn't matter.

|

| 102 |

+

|

| 103 |

+

Note that FLUX.1-schnell was only trained on 256 tokens, so my finetune allows users to use the whole 512 token sequence length.

|

| 104 |

+

|

| 105 |

+

## Make de-distillation go fast and fit in small GPUs

|

| 106 |

+

|

| 107 |

+

I avoided doing any full-rank (normal, all parameters) finetuning at all, since FLUX is big. I trained initially with the model in int8 precision using [quanto](https://github.com/huggingface/optimum-quanto). I started with a 600 million parameter [LoKr](https://arxiv.org/abs/2309.14859), since LoKr tends to approximate full-rank finetuning better than LoRA. The loss was really slow to go down when I began, so after poking around the code that initializes the LoKr matrices I settled on this function, which injects noise at a fraction of the magnitude of the layers it is applied to.

|

| 108 |

+

|

| 109 |

+

```py

|

| 110 |

+

def approximate_normal_tensor(inp, target, scale=1.0):

|

| 111 |

+

    tensor = torch.randn_like(target)

|

| 112 |

+

    desired_norm = inp.norm()

|

| 113 |

+

    desired_mean = inp.mean()

|

| 114 |

+

    desired_std = inp.std()

|

| 115 |

+

|

| 116 |

+

    current_norm = tensor.norm()

|

| 117 |

+

    tensor = tensor * (desired_norm / current_norm)

|

| 118 |

+

    current_std = tensor.std()

|

| 119 |

+

    tensor = tensor * (desired_std / current_std)

|

| 120 |

+

    tensor = tensor - tensor.mean() + desired_mean

|

| 121 |

+

    tensor.mul_(scale)

|

| 122 |

+

|

| 123 |

+

    target.copy_(tensor)

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

def init_lokr_network_with_perturbed_normal(lycoris, scale=1e-3):

|

| 127 |

+

    with torch.no_grad():

|

| 128 |

+

        for lora in lycoris.loras:

|

| 129 |

+

            lora.lokr_w1.fill_(1.0)

|

| 130 |

+

            approximate_normal_tensor(lora.org_weight, lora.lokr_w2, scale=scale)

|

| 131 |

+

```

|

| 132 |

+

|

| 133 |

+

This isn't normal PEFT (parameter efficient fine-tuning) anymore, because this will perturb all the weights of the model slightly in the beginning. It doesn't seem to cause any performance degradation in the model after testing and it made the loss fall for my LoKr twice as fast, so I used it with `scale=1e-3`. The LoKr weights I trained in bfloat16, with the `adamw_bf16` optimizer that I ~~plagiarized~~ wrote with the magic of open source software.
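To see why this initialization perturbs every weight, here is a toy sketch that assumes the standard LoKr parameterization, where the weight update is the Kronecker product of two factors. Any extra scaling that LyCORIS may apply is left out, and `kron` plus the tiny matrices are purely illustrative.

```python
def kron(a, b):
    """Kronecker product of two matrices given as lists of rows."""
    return [
        [a_val * b_val for a_val in a_row for b_val in b_row]
        for a_row in a
        for b_row in b
    ]


# First factor filled with ones, second factor holding small noise
w1 = [[1.0, 1.0], [1.0, 1.0]]
w2 = [[0.001, -0.002]]

# The update tiles w2 across the whole weight, so every entry is non-zero
delta_w = kron(w1, w2)
```

With a zero-initialized factor the update would start at exactly zero, which is the usual PEFT behaviour that this trick gives up.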

|

| 134 |

+

|

| 135 |

+

## Selecting better layers to train with LoKr

|

| 136 |

+

|

| 137 |

+

FLUX is a pretty standard transformer model aside from some peculiarities. One of these peculiarities is in their "norm" layers, which contain non-linearities so they don't act like norms except for a single normalization that is applied in the layer without any weights (LayerNorm with `elementwise_affine=False`). When you fine-tune and look at what changes, these layers are among the ones that change the most.

|

| 138 |

+

|

| 139 |

+

The other thing about transformers is that [all the heavy lifting is most often done at the start and end layers of the network](https://arxiv.org/abs/2403.17887), so you may as well fine-tune those more than other layers. When I looked at the cosine similarity of the hidden states between each block in diffusion transformers, it more or less reflected what was observed with LLMs. So I made a pull-request to the LyCORIS repository (that maintains a LoKr implementation) that lets you more easily pick individual layers and set different factors on them, then focused my LoKr on these layers.
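A rough sketch of the kind of measurement described above: the cosine similarity between the hidden state entering a block and the one leaving it, where a low value suggests the block changes the representation a lot. The hidden states are made-up numbers, and `block_similarities` is a hypothetical helper rather than anything from LyCORIS or SimpleTuner.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def block_similarities(hidden_states):
    """Cosine similarity between the input and output of each block.

    hidden_states[i] is the flattened hidden state entering block i,
    and hidden_states[-1] is the output of the last block.
    """
    return [
        cosine_similarity(before, after)
        for before, after in zip(hidden_states, hidden_states[1:])
    ]


# Made-up states in which the first and last blocks change the most
states = [
    [1.0, 0.0, 0.0],
    [0.6, 0.8, 0.0],
    [0.6, 0.8, 0.1],
    [0.6, 0.8, 0.2],
    [0.0, 0.6, 0.8],
]
sims = block_similarities(states)
```

In this toy example the first and last entries of `sims` are the lowest, which corresponds to the start and end blocks being the ones worth giving more trainable capacity.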

|

| 140 |

+

|

| 141 |

+

## Beta timestep scheduling and timestep stratification

|

| 142 |

+

|

| 143 |

+

One problem with diffusion models is that they are [multi-task](https://arxiv.org/abs/2211.01324) (different timesteps are considered different tasks) and the tasks all tend to be associated with differently shaped and sized gradients and different magnitudes of loss. This is very much not a big deal when you have a huge batch size, so the timesteps of the model all get more or less sampled evenly and the gradients are smoothed out and have less variance. I also knew that the schnell model had more problems with image distortions caused by sampling at the high-noise timesteps, so I did two things:

|

| 144 |

+

|

| 145 |

+

1. Implemented a Beta schedule that approximates the original sigmoid sampling, to let me shift the sampled timesteps towards the high-noise steps, similar to but less extreme than some of the alternative sampling methods in the SD3 research paper.

|

| 146 |

+

2. Implemented multi-rank stratified sampling, so that at each training step the timesteps for the batch were drawn from evenly sized regions of the distribution, which normalizes the gradients significantly, like using a higher batch size would.

|

| 147 |

+

|

| 148 |

+

```py

|

| 149 |

+

alpha = 2.0

|

| 150 |

+

beta = 2.0

|

| 151 |

+

num_processes = self.accelerator.num_processes

|

| 152 |

+

process_index = self.accelerator.process_index

|

| 153 |

+

total_bsz = num_processes * bsz

|

| 154 |

+

start_idx = process_index * bsz

|

| 155 |

+

end_idx = (process_index + 1) * bsz

|

| 156 |

+

indices = torch.arange(start_idx, end_idx, dtype=torch.float64)

|

| 157 |

+

u = torch.rand(bsz)

|

| 158 |

+

p = (indices + u) / total_bsz

|

| 159 |

+

sigmas = torch.from_numpy(

|

| 160 |

+

sp_beta.ppf(p.numpy(), a=alpha, b=beta)

|

| 161 |

+

).to(device=self.accelerator.device)

|

| 162 |

+

```
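The snippet above depends on the trainer state and on SciPy, so here is a self-contained sketch of the same idea that can be run on its own. It assumes `alpha = beta = 2.0` as above and inverts the Beta(2, 2) CDF by bisection instead of calling SciPy; `beta22_ppf` and `stratified_sigmas` are hypothetical names, and a single shared random generator stands in for the per-rank draws.

```python
import random


def beta22_ppf(p, tol=1e-12):
    """Inverse CDF of Beta(2, 2) by bisection.

    The CDF of Beta(2, 2) on [0, 1] is 3x^2 - 2x^3.
    """
    lo, hi = 0.0, 1.0
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if 3.0 * mid**2 - 2.0 * mid**3 < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def stratified_sigmas(process_index, num_processes, bsz, rng):
    """One sigma per sample, each drawn from its own slice of [0, 1)."""
    total_bsz = num_processes * bsz
    start_idx = process_index * bsz
    sigmas = []
    for i in range(start_idx, start_idx + bsz):
        p = (i + rng.random()) / total_bsz  # uniform within stratum i
        sigmas.append(beta22_ppf(p))
    return sigmas


rng = random.Random(0)
# Two ranks with batch size 2: four strata cover [0, 1) between them
all_sigmas = [
    s
    for rank in range(2)
    for s in stratified_sigmas(rank, num_processes=2, bsz=2, rng=rng)
]
```

Because every sample in the global batch gets its own equal-probability slice, each step sees timesteps spread across the whole range instead of whatever a plain random draw happens to produce.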

|

| 163 |

+

|

| 164 |

+

## Datasets

|

| 165 |

+

|

| 166 |

+

No one talks about what datasets they train on anymore, but I used open ones from the web, captioned with VLMs, with 2-3 captions per image. There was at least one short and one long caption for every image. The datasets were diverse and most of them did not have aesthetic selection, which helped direct the model away from the traditional hyper-optimized image generation of text-to-image models. Many people think that looks worse, but I like that it can make a diverse pile of images. The model was trained on about 0.5 million high resolution images in both random square crops and random aspect ratio crops.

|

| 167 |

+

|

| 168 |

+

## Training

|

| 169 |

+

|

| 170 |

+

I started by training for over a month on 5x 3090s with about 500,000 images. I used a 600m LoKr for this. The model looked okay afterwards. Then, I [unexpectedly gained access to 7x H100s for compute resources](https://rundiffusion.com), so I merged my PEFT model in and began training a new LoKr with 3.2b parameters.

|

| 171 |

+

|

| 172 |

+

## Post-hoc "EMA"

|

| 173 |

+

|

| 174 |

+

I've been too lazy to implement real [post-hoc EMA like from EDM2](https://github.com/lucidrains/ema-pytorch/blob/main/ema_pytorch/post_hoc_ema.py), but to approximate it I saved all the checkpoints from the H100 runs and then LERPed them iteratively with different alpha values. I evaluated those checkpoints at different CFG scales to see if any of them were superior to the last checkpoint.

|

| 175 |

+

|

| 176 |

+

```py

|

| 177 |

+

first_checkpoint_file = checkpoint_files[0]

|

| 178 |

+

ema_state_dict = load_file(first_checkpoint_file)

|

| 179 |

+

for checkpoint_file in checkpoint_files[1:]:

|

| 180 |

+

    new_state_dict = load_file(checkpoint_file)

|

| 181 |

+

    for k in ema_state_dict.keys():

|

| 182 |

+

        ema_state_dict[k] = torch.lerp(

|

| 183 |

+

            ema_state_dict[k],

|

| 184 |

+

            new_state_dict[k],

|

| 185 |

+

            alpha,

|

| 186 |

+

        )

|

| 187 |

+

|

| 188 |

+

output_file = os.path.join(output_folder, f"alpha_linear_{alpha}.safetensors")

|

| 189 |

+

save_file(ema_state_dict, output_file)

|

| 190 |

+

```

|

| 191 |

+

|

| 192 |

+

After looking at all models in alphas `[0.2, 0.4, 0.6, 0.8, 0.9, 0.95, 0.975, 0.99, 0.995, 0.999]`, I ended up settling on alpha 0.9 using the power of my eyeballs. If I am being frank, many of the EMA models looked remarkably similar and had the same kind of "rolling around various minima" qualities that training does in general.
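To get a feel for what a given alpha does, this small sketch computes how much each checkpoint contributes to the final average. It mirrors the update `ema = lerp(ema, new, alpha)`, which `torch.lerp` defines as `ema + alpha * (new - ema)`; the helper `checkpoint_weights` and the checkpoint count of four are hypothetical.

```python
def checkpoint_weights(num_checkpoints, alpha):
    """Effective weight of each checkpoint after iterated lerp.

    Mirrors ema = lerp(ema, new, alpha), i.e. ema + alpha * (new - ema),
    starting from the first checkpoint.
    """
    weights = [1.0]  # the first checkpoint starts with full weight
    for _ in range(num_checkpoints - 1):
        # Older checkpoints are scaled down, the new one enters with weight alpha
        weights = [w * (1.0 - alpha) for w in weights] + [alpha]
    return weights


weights = checkpoint_weights(num_checkpoints=4, alpha=0.9)
```

Under this convention a larger alpha leans more heavily on the newest checkpoint, so alpha 0.9 stays close to the last checkpoint while still mixing in a little of the earlier ones.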

|

| 193 |

+

|

| 194 |

+

## Results

|

| 195 |

+

|

| 196 |

+

I will go over the results briefly, but I'll start with the images.

|

| 197 |

+

|

| 198 |

+

**Figure 1.** Some side-by-side images of LibreFLUX and [OpenFLUX.1](https://huggingface.co/ostris/OpenFLUX.1). They were made using diffusers, with 512-token maximum length text embeddings for LibreFLUX and 256-token maximum length for OpenFLUX.1. LibreFLUX had attention masking on while OpenFLUX did not. The models were sampled with 35 steps at various resolutions. The negative prompt for both was simply "blurry". All inference was done with the transformer quantized to int8 by quanto.

|

| 199 |

+

|

| 200 |

+

|

| 201 |

+

|

| 202 |

+

> A cinematic style shot of a polar bear standing confidently in the center of a vibrant nightclub. The bear is holding a large sign that reads 'Open Source! Apache 2.0' in one arm and giving a thumbs up with the other arm. Around him, the club is alive with energy as colorful lasers and disco lights illuminate the scene. People are dancing all around him, wearing glowsticks and candy bracelets, adding to the fun and electric atmosphere. The polar bear's white fur contrasts against the dark, neon-lit background, and the entire scene has a surreal, festive vibe, blending technology activism with a lively party environment.

|

| 203 |

+

|

| 204 |

+

|

| 205 |

+

|

| 206 |

+

> widescreen, vintage style from 1970s, Extreme realism in a complex, highly detailed composition featuring a woman with extremely long flowing rainbow-colored hair. The glowing background, with its vibrant colors, exaggerated details, intricate textures, and dynamic lighting, creates a whimsical, dreamy atmosphere in photorealistic quality. Threads of light that float and weave through the air, adding movement and intrigue. Patterns on the ground or in the background that glow subtly, adding a layer of complexity.Rainbows that appear faintly in the background, adding a touch of color and wonder.Butterfly wings that shimmer in the light, adding life and movement to the scene.Beams of light that radiate softly through the scene, adding focus and direction. The woman looks away from the camera, with a soft, wistful expression, her hair framing her face.

|

| 207 |

+

|

| 208 |

+

|

| 209 |

+

|

| 210 |

+

> a highly detailed and atmospheric, painted western movie poster with the title text "Once Upon a Lime in the West" in a dark red western-style font and the tagline text "There were three men ... and one very sour twist", with movie credits at the bottom, featuring small white text detailing actor and director names and production company logos, inspired by classic western movie posters from the 1960s, an oversized lime is the central element in the middle ground of a rugged, sun-scorched desert landscape typical of a western, the vast expanse of dry, cracked earth stretches toward the horizon, framed by towering red rock formations, the absurdity of the lime is juxtaposed with the intense gravitas of the stoic, iconic gunfighters, as if the lime were as formidable an adversary as any seasoned gunslinger, in the foreground, the silhouettes of two iconic gunfighters stand poised, facing the lime and away from the viewer, the lime looms in the distance like a final showdown in the classic western tradition, in the foreground, the gunfighters stand with long duster coats flowing in the wind, and wide-brimmed hats tilted to cast shadows over their faces, their stances are tense, as if ready for the inevitable draw, and the weapons they carry glint, the background consists of the distant town, where the sun is casting a golden glow, old wooden buildings line the sides, with horses tied to posts and a weathered saloon sign swinging gently in the wind, in this poster, the lime plays the role of the silent villain, an almost mythical object that the gunfighters are preparing to confront, the tension of the scene is palpable, the gunfighters in the foreground have faces marked by dust and sweat, their eyes narrowed against the bright sunlight, their expressions are serious and resolute, as if they have come a long way for this final duel, the absurdity of the lime is in stark contrast with their stoic demeanor, a wide, panoramic shot captures the entire scene, with the 
gunfighters in the foreground, the lime in the mid-ground, and the town on the horizon, the framing emphasizes the scale of the desert and the dramatic standoff taking place, while subtly highlighting the oversized lime, the camera is positioned low, angled upward from the dusty ground toward the gunfighters, with the distant lime looming ahead, this angle lends the figures an imposing presence, while still giving the lime an absurd grandeur in the distance, the perspective draws the viewer’s eye across the desert, from the silhouettes of the gunfighters to the bizarre focal point of the lime, amplifying the tension, the lighting is harsh and unforgiving, typical of a desert setting, with the evening sun casting deep shadows across the ground, dust clouds drift subtly across the ground, creating a hazy effect, while the sky above is a vast expanse of pale blue, fading into golden hues near the horizon where the sun begins to set, the poster is shot as if using classic anamorphic lenses to capture the wide, epic scale of the desert, the color palette is warm and saturated, evoking the look of a classic spaghetti western, the lime looms unnaturally in the distance, as if conjured from the land itself, casting an absurdly grand shadow across the rugged landscape, the texture and detail evoke hand-painted, weathered posters from the golden age of westerns, with slightly frayed edges and faint creases mimicking the wear of vintage classics

|

| 211 |

+

|

| 212 |

+

|

| 213 |

+

|

| 214 |

+

> A boxed action figure of a beautiful elf girl witch wearing a skimpy black leotard, black thigh highs, black armlets, and a short black cloak. Her hair is pink and shoulder-length. Her eyes are green. She is a slim and attractive elf with small breasts. The accessories include an apple, magic wand, potion bottle, black cat, jack o lantern, and a book. The box is orange and black with a logo near the bottom of it that says "BAD WITCH". The box is on a shelf on the toy aisle.

|

| 215 |

+

|

| 216 |

+

|

| 217 |

+

|

| 218 |

+

> A cute blonde woman in bikini and her doge are sitting on a couch cuddling and the expressive, stylish living room scene with a playful twist. The room is painted in a soothing turquoise color scheme, stylish living room scene bathed in a cool, textured turquoise blanket and adorned with several matching turquoise throw pillows. The room's color scheme is predominantly turquoise, relaxed demeanor. The couch is covered in a soft, reflecting light and adding to the vibrant blue hue., dark room with a sleek, spherical gold decorations, This photograph captures a scene that is whimsically styled in a vibrant, reflective cyan sunglasses. The dog's expression is cheerful, metallic fabric sofa. The dog, soothing atmosphere.

|

| 219 |

+

|

| 220 |

+

|

| 221 |

+

|

| 222 |

+

> Selfie of a woman in front of the eiffel tower, a man is standing next to her and giving a thumbs up

|

| 223 |

+

|

| 224 |

+

|

| 225 |

+

|

| 226 |

+

> An image contains three motivational phrases, all in capitalized stylized text on a colorful background: 1. At the top: "PAIN HEALS" 2. In the middle, bold and slightly larger: "CHICKS DIG SCARS" 3. At the bottom: "GLORY LASTS FOREVER"

|

| 227 |

+

|

| 228 |

+

|

| 229 |

+

|

| 230 |

+

> An illustration featuring a McDonald's on the moon. An anthropomorphic cat in a pink top and blue jeans is ordering McDonald's, while a zebra cashier stands behind the counter. The moon's surface is visible outside the windows, with craters and a distant view of Earth. The interior of the McDonald's is similar to those on Earth but adapted to the lunar environment, with vibrant colors and futuristic design elements. The overall scene is whimsical and imaginative, blending everyday life with a fantastical setting.

|

| 231 |

+

|

| 232 |

+

LibreFLUX and OpenFLUX have their strengths and weaknesses. OpenFLUX was de-distilled using the outputs of FLUX.1-schnell, which might explain why it's worse at text but also has the FLUX hyperaesthetics. Text-to-image models [don't have any good metrics](https://arxiv.org/abs/2306.04675) so past a point of "soupiness" and single digit FID you just need to look at the model and see if it fits what you think nice pictures are.

|

| 233 |

+

|

| 234 |

+

Both models appear to be terrible at making drawings. Because people are probably curious to see the non-cherry picks, [I've included CFG sweep comparisons of both LibreFLUX and OpenFLUX.1 here](https://huggingface.co/jimmycarter/LibreFLUX/blob/main/assets/comparisons_full/). I'm not going to say this is the best model ever, but it might be a springboard for people wanting to finetune better models from.

|

| 235 |

+

|

| 236 |

+

## Closing thoughts

|

| 237 |

+

|

| 238 |

+

If I had to do it again, I'd probably raise the learning rate more on the H100 run. There was a [bug in SimpleTuner](https://github.com/bghira/SimpleTuner/issues/1064) that caused me to not use the [initialization trick](#make-de-distillation-go-fast-and-fit-in-small-gpus) when on the H100s, then [timestep stratification](#beta-timestep-scheduling-and-timestep-stratification) ended up quieting down the gradient magnitudes even more and caused the model to learn very slowly at `1e-5`. I realized this when looking at the results of EMA on the final FLUX.1-dev. The H100s really came out of nowhere as I just got an IP address to shell into late one night around 10PM and ended up staying up all night to get everything running, so in the future I'm sure I would be more prepared.


For de-distillation of schnell I think you probably need a lot more than 1500 H100-equivalent hours. I am very tired of training FLUX and am looking forward to a better model with fewer parameters. The model learns new concepts slowly, even when given piles of well-labeled data. Given the history of LLMs, where we now have models like LLaMA 3.1 8B that trade blows with GPT-3.5 175B, I am hopeful that the future holds [smaller, faster models that look better](https://openreview.net/pdf?id=jQP5o1VAVc).


As for what I think of FLUX's "open source": many models being trained and released today are attempts at raising VC cash, and I have noticed a mountain of them being promoted on Twitter. Since [a16z poached the entire SD3 dev team from Stability.ai](https://siliconcanals.com/black-forest-labs-secures-28m/), the field feels more toxic than ever, but I am hopeful that individuals and research labs will selflessly lead the path forward for open weights. I made zero dollars on this and have made zero dollars on ML to date, but I try to make contributions where I can.


I would like to thank [RunDiffusion](https://rundiffusion.com) for the H100 access.


## Contacting me and grants


You can contact me by opening an issue on the discussion page of this model. If you want to speak privately about grants, either so that I can continue training this or to give me a means to conduct reproducible research, leave an email address too.


## Citation


```
@misc{libreflux,
  author = {James Carter},
  title = {LibreFLUX: A free, de-distilled FLUX model},
  year = {2024},
  publisher = {Huggingface},
  journal = {Huggingface repository},
  howpublished = {\url{https://huggingface.co/datasets/jimmycarter/libreflux}},
}
```

assets/comparisons/bear.jpg ADDED

assets/comparisons/lady.jpg ADDED (Git LFS)

assets/comparisons/lime.jpg ADDED (Git LFS)

assets/comparisons/moon.jpg ADDED

assets/comparisons/scars.jpg ADDED

assets/comparisons/selfie.jpg ADDED

assets/comparisons/teal_woman.jpg ADDED (Git LFS)

assets/comparisons/witch.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_0.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_1.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_10.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_11.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_12.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_2.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_3.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_4.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_5.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_6.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_7.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_8.jpg ADDED (Git LFS)

assets/comparisons_full/comparison_9.jpg ADDED (Git LFS)

assets/comparisons_full/prompts.py ADDED

@@ -0,0 +1,54 @@


prompts = [


( # 0


"A wide format poster featuring George Washington atop a glorious bald eagle with its wings spread flying through the sky, background is the American flag and fireworks. Huge shiny Red white and blue, bold, gradient letters at the bottom spelling out \"WTF IS A KILOMETER\" in flaming text. 4k, masterpiece.",


(1536, 1024),


),


( # 1


"widescreen, vintage style from 1970s, Extreme realism in a complex, highly detailed composition featuring a woman with extremely long flowing rainbow-colored hair. The glowing background, with its vibrant colors, exaggerated details, intricate textures, and dynamic lighting, creates a whimsical, dreamy atmosphere in photorealistic quality. Threads of light that float and weave through the air, adding movement and intrigue. Patterns on the ground or in the background that glow subtly, adding a layer of complexity.Rainbows that appear faintly in the background, adding a touch of color and wonder.Butterfly wings that shimmer in the light, adding life and movement to the scene.Beams of light that radiate softly through the scene, adding focus and direction. The woman looks away from the camera, with a soft, wistful expression, her hair framing her face. ",


(1536, 1024),


),


( # 2


'A cinematic style shot of a polar bear standing confidently in the center of a vibrant nightclub. The bear is holding a large sign that reads \'Open Source! Apache 2.0\' in one arm and giving a thumbs up with the other arm. Around him, the club is alive with energy as colorful lasers and disco lights illuminate the scene. People are dancing all around him, wearing glowsticks and candy bracelets, adding to the fun and electric atmosphere. The polar bear\'s white fur contrasts against the dark, neon-lit background, and the entire scene has a surreal, festive vibe, blending technology activism with a lively party environment.',


(1536, 1024),


),


( # 3


'A boxed action figure of a beautiful elf girl witch wearing a skimpy black leotard, black thigh highs, black armlets, and a short black cloak. Her hair is pink and shoulder-length. Her eyes are green. She is a slim and attractive elf with small breasts. The accessories include an apple, magic wand, potion bottle, black cat, jack o lantern, and a book. The box is orange and black with a logo near the bottom of it that says "BAD WITCH". The box is on a shelf on the toy aisle.',


(1024, 1536),


),


( # 4


"A cute blonde woman in bikini and her doge are sitting on a couch cuddling and the expressive, stylish living room scene with a playful twist. The room is painted in a soothing turquoise color scheme, stylish living room scene bathed in a cool, textured turquoise blanket and adorned with several matching turquoise throw pillows. The room's color scheme is predominantly turquoise, relaxed demeanor. The couch is covered in a soft, reflecting light and adding to the vibrant blue hue., dark room with a sleek, spherical gold decorations, This photograph captures a scene that is whimsically styled in a vibrant, reflective cyan sunglasses. The dog's expression is cheerful, metallic fabric sofa. The dog, soothing atmosphere.",


(1536, 1024),


),


( # 5


"Bioluminescent, A hyperrealistic depiction of a surreal scene: a piano keyboard morphs into a spiral staircase, ascending into a swirling vortex of golden, autumnal hues. A figure with a porcelain mask, reminiscent of commedia dell'arte, emerges from beneath the keys, their hand extended towards a lone female figure in a flowing gown at the apex of the staircase. Emphasize the juxtaposition of the organic and geometric, the tangible and ethereal, with a chiaroscuro lighting style. Capture the melancholic beauty and enigmatic narrative inherent in the scene.",


(1024, 1536),


),


( # 6


"highly detailed cinematic movie poster with the text \"PACIFIST RIM\" in a bold, vibrant sci-fi-style font at the top and a tagline reading \"Saving the world, one bouquet at a time\" below it, with the movie credits at the bottom, in the foreground, a gigantic tailless humanoid bipedal mecha-robot and an equally massive kaiju with blue-green iridescent scales and bioluminescent accents stand face-to-face, the enormous mecha on the left is clad in battle-worn yet gleaming metallic armor plates, holding out a large bouquet of exotic, colorful flowers to the kaiju on the right, the kaiju looks surprised by the gesture, its grotesque, otherworldly, surreal form equipped with a row of cyan glowing crystalline spikes along its back, the setting is an urban waterfront, framed by towering skyscrapers and a shimmering ocean with soft waves behind them, the background is bathed in the glow of moonlight and flickering neon billboards with messages like \"HARMONY\" and \"PEACE,\" tiny people on the ground below snap photos with their phones, while some onlookers stare in disbelief, behind the two titanic figures, the calm ocean glistens under the moonlight as distant ships drift by, the robot's posture is calm and serene, its large claw-like hands extended as it presents the bouquet, both figures express an aura of peace and harmony despite their intimidating size, the monstrous kaiju, though menacing, is curious and seemingly receptive to the offering, the overall mood is tranquil, a wide-angle shot captures both the robot and kaiju in their full, towering forms, emphasizing their colossal scale against the peaceful cityscape backdrop, the waterfront and moonlight add depth, while a low-angle shot looking up at the robot and kaiju further enhances their imposing size, the lighting is vibrant and saturated, with soft moonlight reflecting off the robot’s metallic surface, neon city lights providing colorful accents, lens flare, dappled lighting",


(1024, 1536),


),


( # 7


'a highly detailed and atmospheric, painted western movie poster with the title text "Once Upon a Lime in the West" in a dark red western-style font and the tagline text "There were three men ... and one very sour twist", with movie credits at the bottom, featuring small white text detailing actor and director names and production company logos, inspired by classic western movie posters from the 1960s, an oversized lime is the central element in the middle ground of a rugged, sun-scorched desert landscape typical of a western, the vast expanse of dry, cracked earth stretches toward the horizon, framed by towering red rock formations, the absurdity of the lime is juxtaposed with the intense gravitas of the stoic, iconic gunfighters, as if the lime were as formidable an adversary as any seasoned gunslinger, in the foreground, the silhouettes of two iconic gunfighters stand poised, facing the lime and away from the viewer, the lime looms in the distance like a final showdown in the classic western tradition, in the foreground, the gunfighters stand with long duster coats flowing in the wind, and wide-brimmed hats tilted to cast shadows over their faces, their stances are tense, as if ready for the inevitable draw, and the weapons they carry glint, the background consists of the distant town, where the sun is casting a golden glow, old wooden buildings line the sides, with horses tied to posts and a weathered saloon sign swinging gently in the wind, in this poster, the lime plays the role of the silent villain, an almost mythical object that the gunfighters are preparing to confront, the tension of the scene is palpable, the gunfighters in the foreground have faces marked by dust and sweat, their eyes narrowed against the bright sunlight, their expressions are serious and resolute, as if they have come a long way for this final duel, the absurdity of the lime is in stark contrast with their stoic demeanor, a wide, panoramic shot captures the entire scene, with the gunfighters in the foreground, the lime in the mid-ground, and the town on the horizon, the framing emphasizes the scale of the desert and the dramatic standoff taking place, while subtly highlighting the oversized lime, the camera is positioned low, angled upward from the dusty ground toward the gunfighters, with the distant lime looming ahead, this angle lends the figures an imposing presence, while still giving the lime an absurd grandeur in the distance, the perspective draws the viewer’s eye across the desert, from the silhouettes of the gunfighters to the bizarre focal point of the lime, amplifying the tension, the lighting is harsh and unforgiving, typical of a desert setting, with the evening sun casting deep shadows across the ground, dust clouds drift subtly across the ground, creating a hazy effect, while the sky above is a vast expanse of pale blue, fading into golden hues near the horizon where the sun begins to set, the poster is shot as if using classic anamorphic lenses to capture the wide, epic scale of the desert, the color palette is warm and saturated, evoking the look of a classic spaghetti western, the lime looms unnaturally in the distance, as if conjured from the land itself, casting an absurdly grand shadow across the rugged landscape, the texture and detail evoke hand-painted, weathered posters from the golden age of westerns, with slightly frayed edges and faint creases mimicking the wear of vintage classics',


(1024, 1536),


),


( # 8


'Anime illustration of a man standing next to a cat',


(1024, 1024),


),


( # 9


'Selfie of a woman in front of the eiffel tower, a man is standing next to her and giving a thumbs up',


(1024, 1024),


),


( # 10


"a life-sized, clear plastic action figure box with a real woman trapped inside. The box has vibrant, eye-catching colors, featuring bold logos and text reminiscent of classic action figure packaging. The woman stands stiffly in the middle, her pose rigid like a doll, her facial expression conveying a mixture of confusion and surprise. She wears a brightly colored outfit that matches the action figure aesthetic, with exaggerated accessories like a toy sword or futuristic helmet strapped to her side. The box\’s background features bold comic-book-like artwork, framing the woman with dynamic lines and cartoonish explosions, emphasizing the \"action\" theme. The plastic window on the front covers the woman\’s entire body, while the sides display branding and promotional text, like \“Superhero Edition\” or \“Ultimate Collector\’s Item!\” Around the box, toy-like details abound: barcodes, toy company logos, and descriptions of her \“powers\” or \“abilities\” written in comic-style font.",


(1024, 1536),


),


( # 11


'An image contains three motivational phrases, all in capitalized stylized text on a colorful background: 1. At the top: "PAIN HEALS" 2. In the middle, bold and slightly larger: "CHICKS DIG SCARS" 3. At the bottom: "GLORY LASTS FOREVER"',


(1024, 1024),


),


( # 12


'An illustration featuring a McDonald\'s on the moon. An anthropomorphic cat in a pink top and blue jeans is ordering McDonald\'s, while a zebra cashier stands behind the counter. The moon\'s surface is visible outside the windows, with craters and a distant view of Earth. The interior of the McDonald\'s is similar to those on Earth but adapted to the lunar environment, with vibrant colors and futuristic design elements. The overall scene is whimsical and imaginative, blending everyday life with a fantastical setting.',


(1024, 1024),


),


]
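A minimal sketch of how a list shaped like `prompts` could be turned into generation jobs. It assumes each size tuple is `(width, height)`, which matches the "wide format" and "widescreen" prompts being paired with `(1536, 1024)`, but the file itself does not say so. The helper name `to_jobs` and the two-entry stand-in list are hypothetical.

```python
# Stand-in with the same shape as `prompts` in prompts.py:
# a list of (prompt text, size) tuples.
prompts = [
    ("Anime illustration of a man standing next to a cat", (1024, 1024)),
    ("A wide format poster featuring George Washington", (1536, 1024)),
]


def to_jobs(entries):
    """Flatten (text, size) tuples into dicts that are easier to pass around."""
    jobs = []
    for index, (text, (width, height)) in enumerate(entries):
        # Assumption: the size tuple is ordered (width, height).
        jobs.append(
            {"index": index, "prompt": text, "width": width, "height": height}
        )
    return jobs


for job in to_jobs(prompts):
    print(job["index"], f'{job["width"]}x{job["height"]}', job["prompt"][:40])
```

Each job dict can then be handed to whatever generation call is in use, once per CFG value in a sweep.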

assets/science.png ADDED

assets/splash.jpg ADDED

pipeline.py ADDED

@@ -0,0 +1,1813 @@
