
Upload 80 files

This view is limited to 50 files because it contains too many changes.

- .gitattributes +3 -0

- GroundingDINO/.asset/COCO.png +0 -0

- GroundingDINO/.asset/GD_GLIGEN.png +3 -0

- GroundingDINO/.asset/GD_SD.png +3 -0

- GroundingDINO/.asset/ODinW.png +0 -0

- GroundingDINO/.asset/arch.png +0 -0

- GroundingDINO/.asset/cats.png +0 -0

- GroundingDINO/.asset/hero_figure.png +3 -0

- GroundingDINO/LICENSE +201 -0

- GroundingDINO/README.md +163 -0

- GroundingDINO/demo/gradio_app.py +125 -0

- GroundingDINO/demo/inference_on_a_image.py +172 -0

- GroundingDINO/groundingdino/_C.cp38-win_amd64.pyd +0 -0

- GroundingDINO/groundingdino/__init__.py +0 -0

- GroundingDINO/groundingdino/__pycache__/__init__.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/config/GroundingDINO_SwinB.py +43 -0

- GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py +43 -0

- GroundingDINO/groundingdino/datasets/__init__.py +0 -0

- GroundingDINO/groundingdino/datasets/__pycache__/__init__.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/datasets/__pycache__/transforms.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/datasets/transforms.py +311 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__init__.py +15 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/__init__.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/bertwarper.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/fuse_modules.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/groundingdino.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/ms_deform_attn.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/transformer.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/transformer_vanilla.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/__pycache__/utils.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/__init__.py +1 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/__pycache__/__init__.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/__pycache__/backbone.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/__pycache__/position_encoding.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/__pycache__/swin_transformer.cpython-38.pyc +0 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/backbone.py +221 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/position_encoding.py +186 -0

- GroundingDINO/groundingdino/models/GroundingDINO/backbone/swin_transformer.py +802 -0

- GroundingDINO/groundingdino/models/GroundingDINO/bertwarper.py +273 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_attn.h +64 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_attn_cpu.cpp +43 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_attn_cpu.h +35 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_attn_cuda.cu +156 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_attn_cuda.h +33 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/MsDeformAttn/ms_deform_im2col_cuda.cuh +1327 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/cuda_version.cu +7 -0

- GroundingDINO/groundingdino/models/GroundingDINO/csrc/vision.cpp +58 -0

- GroundingDINO/groundingdino/models/GroundingDINO/fuse_modules.py +297 -0

- GroundingDINO/groundingdino/models/GroundingDINO/groundingdino.py +395 -0

- GroundingDINO/groundingdino/models/GroundingDINO/ms_deform_attn.py +413 -0

.gitattributes CHANGED

@@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+GroundingDINO/.asset/GD_GLIGEN.png filter=lfs diff=lfs merge=lfs -text
+GroundingDINO/.asset/GD_SD.png filter=lfs diff=lfs merge=lfs -text
+GroundingDINO/.asset/hero_figure.png filter=lfs diff=lfs merge=lfs -text
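The hunk above adds three path-specific rules so that the large README figures are stored through Git LFS. This matches the asset list that follows, where only GD_GLIGEN.png, GD_SD.png and hero_figure.png are LFS objects. The rough Python sketch below checks which paths a rule set would send to LFS. It simplifies Git's real matcher. It assumes that a pattern without a slash is tested against the basename and a pattern with a slash against the full repository-relative path. It ignores `**` semantics, negated attributes, and later lines overriding earlier ones. The function names are hypothetical. For an authoritative answer, run `git check-attr filter -- <path>` to ask Git directly.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import List


def lfs_patterns(gitattributes_text: str) -> List[str]:
    """Collect patterns whose attribute list contains filter=lfs."""
    patterns = []
    for raw in gitattributes_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        pattern, *attrs = line.split()
        if "filter=lfs" in attrs:
            patterns.append(pattern)
    return patterns


def is_lfs_tracked(path: str, patterns: List[str]) -> bool:
    """Simplified check (an assumption, not Git's exact rules):
    slash-free patterns match the basename, patterns containing '/'
    match the full repo-relative path. fnmatch's '*' also matches '/',
    unlike Git's matcher."""
    basename = PurePosixPath(path).name
    for pattern in patterns:
        target = path if "/" in pattern else basename
        if fnmatchcase(target, pattern):
            return True
    return False


if __name__ == "__main__":
    rules = "GroundingDINO/.asset/GD_SD.png filter=lfs diff=lfs merge=lfs -text\n"
    pats = lfs_patterns(rules)
    print(is_lfs_tracked("GroundingDINO/.asset/GD_SD.png", pats))
    print(is_lfs_tracked("GroundingDINO/.asset/COCO.png", pats))
```

With the three new rules, COCO.png is not matched and stays a regular Git blob. The matcher returns on the first hit. This is enough for a yes/no check but does not model attribute precedence.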

GroundingDINO/.asset/COCO.png ADDED

GroundingDINO/.asset/GD_GLIGEN.png ADDED (stored with Git LFS)

GroundingDINO/.asset/GD_SD.png ADDED (stored with Git LFS)

GroundingDINO/.asset/ODinW.png ADDED

GroundingDINO/.asset/arch.png ADDED

GroundingDINO/.asset/cats.png ADDED

GroundingDINO/.asset/hero_figure.png ADDED (stored with Git LFS)

GroundingDINO/LICENSE ADDED

@@ -0,0 +1,201 @@

+                                 Apache License
+                           Version 2.0, January 2004
+                        http://www.apache.org/licenses/
+
+   TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION
+
+   1. Definitions.
+
+      "License" shall mean the terms and conditions for use, reproduction,
+      and distribution as defined by Sections 1 through 9 of this document.
+
+      "Licensor" shall mean the copyright owner or entity authorized by
+      the copyright owner that is granting the License.
+
+      "Legal Entity" shall mean the union of the acting entity and all
+      other entities that control, are controlled by, or are under common
+      control with that entity. For the purposes of this definition,
+      "control" means (i) the power, direct or indirect, to cause the
+      direction or management of such entity, whether by contract or
+      otherwise, or (ii) ownership of fifty percent (50%) or more of the
+      outstanding shares, or (iii) beneficial ownership of such entity.
+
+      "You" (or "Your") shall mean an individual or Legal Entity
+      exercising permissions granted by this License.
+
+      "Source" form shall mean the preferred form for making modifications,
+      including but not limited to software source code, documentation
+      source, and configuration files.
+
+      "Object" form shall mean any form resulting from mechanical
+      transformation or translation of a Source form, including but
+      not limited to compiled object code, generated documentation,
+      and conversions to other media types.
+
+      "Work" shall mean the work of authorship, whether in Source or
+      Object form, made available under the License, as indicated by a
+      copyright notice that is included in or attached to the work
+      (an example is provided in the Appendix below).
+
+      "Derivative Works" shall mean any work, whether in Source or Object
+      form, that is based on (or derived from) the Work and for which the
+      editorial revisions, annotations, elaborations, or other modifications
+      represent, as a whole, an original work of authorship. For the purposes
+      of this License, Derivative Works shall not include works that remain
+      separable from, or merely link (or bind by name) to the interfaces of,
+      the Work and Derivative Works thereof.
+
+      "Contribution" shall mean any work of authorship, including
+      the original version of the Work and any modifications or additions
+      to that Work or Derivative Works thereof, that is intentionally
+      submitted to Licensor for inclusion in the Work by the copyright owner
+      or by an individual or Legal Entity authorized to submit on behalf of
+      the copyright owner. For the purposes of this definition, "submitted"
+      means any form of electronic, verbal, or written communication sent
+      to the Licensor or its representatives, including but not limited to
+      communication on electronic mailing lists, source code control systems,
+      and issue tracking systems that are managed by, or on behalf of, the
+      Licensor for the purpose of discussing and improving the Work, but
+      excluding communication that is conspicuously marked or otherwise
+      designated in writing by the copyright owner as "Not a Contribution."
+
+      "Contributor" shall mean Licensor and any individual or Legal Entity
+      on behalf of whom a Contribution has been received by Licensor and
+      subsequently incorporated within the Work.
+
+   2. Grant of Copyright License. Subject to the terms and conditions of
+      this License, each Contributor hereby grants to You a perpetual,
+      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+      copyright license to reproduce, prepare Derivative Works of,
+      publicly display, publicly perform, sublicense, and distribute the
+      Work and such Derivative Works in Source or Object form.
+
+   3. Grant of Patent License. Subject to the terms and conditions of
+      this License, each Contributor hereby grants to You a perpetual,
+      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+      (except as stated in this section) patent license to make, have made,
+      use, offer to sell, sell, import, and otherwise transfer the Work,
+      where such license applies only to those patent claims licensable
+      by such Contributor that are necessarily infringed by their
+      Contribution(s) alone or by combination of their Contribution(s)
+      with the Work to which such Contribution(s) was submitted. If You
+      institute patent litigation against any entity (including a
+      cross-claim or counterclaim in a lawsuit) alleging that the Work
+      or a Contribution incorporated within the Work constitutes direct
+      or contributory patent infringement, then any patent licenses
+      granted to You under this License for that Work shall terminate
+      as of the date such litigation is filed.
+
+   4. Redistribution. You may reproduce and distribute copies of the
+      Work or Derivative Works thereof in any medium, with or without
+      modifications, and in Source or Object form, provided that You
+      meet the following conditions:
+
+      (a) You must give any other recipients of the Work or
+          Derivative Works a copy of this License; and
+
+      (b) You must cause any modified files to carry prominent notices
+          stating that You changed the files; and
+
+      (c) You must retain, in the Source form of any Derivative Works
+          that You distribute, all copyright, patent, trademark, and
+          attribution notices from the Source form of the Work,
+          excluding those notices that do not pertain to any part of
+          the Derivative Works; and
+
+      (d) If the Work includes a "NOTICE" text file as part of its
+          distribution, then any Derivative Works that You distribute must
+          include a readable copy of the attribution notices contained
+          within such NOTICE file, excluding those notices that do not
+          pertain to any part of the Derivative Works, in at least one
+          of the following places: within a NOTICE text file distributed
+          as part of the Derivative Works; within the Source form or
+          documentation, if provided along with the Derivative Works; or,
+          within a display generated by the Derivative Works, if and
+          wherever such third-party notices normally appear. The contents
+          of the NOTICE file are for informational purposes only and
+          do not modify the License. You may add Your own attribution
+          notices within Derivative Works that You distribute, alongside
+          or as an addendum to the NOTICE text from the Work, provided
+          that such additional attribution notices cannot be construed
+          as modifying the License.
+
+      You may add Your own copyright statement to Your modifications and
+      may provide additional or different license terms and conditions
+      for use, reproduction, or distribution of Your modifications, or
+      for any such Derivative Works as a whole, provided Your use,
+      reproduction, and distribution of the Work otherwise complies with
+      the conditions stated in this License.
+
+   5. Submission of Contributions. Unless You explicitly state otherwise,
+      any Contribution intentionally submitted for inclusion in the Work
+      by You to the Licensor shall be under the terms and conditions of
+      this License, without any additional terms or conditions.
+      Notwithstanding the above, nothing herein shall supersede or modify
+      the terms of any separate license agreement you may have executed
+      with Licensor regarding such Contributions.
+
+   6. Trademarks. This License does not grant permission to use the trade
+      names, trademarks, service marks, or product names of the Licensor,
+      except as required for reasonable and customary use in describing the
+      origin of the Work and reproducing the content of the NOTICE file.
+
+   7. Disclaimer of Warranty. Unless required by applicable law or
+      agreed to in writing, Licensor provides the Work (and each
+      Contributor provides its Contributions) on an "AS IS" BASIS,
+      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
+      implied, including, without limitation, any warranties or conditions
+      of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
+      PARTICULAR PURPOSE. You are solely responsible for determining the
+      appropriateness of using or redistributing the Work and assume any
+      risks associated with Your exercise of permissions under this License.
+
+   8. Limitation of Liability. In no event and under no legal theory,
+      whether in tort (including negligence), contract, or otherwise,
+      unless required by applicable law (such as deliberate and grossly
+      negligent acts) or agreed to in writing, shall any Contributor be
+      liable to You for damages, including any direct, indirect, special,
+      incidental, or consequential damages of any character arising as a
+      result of this License or out of the use or inability to use the
+      Work (including but not limited to damages for loss of goodwill,
+      work stoppage, computer failure or malfunction, or any and all
+      other commercial damages or losses), even if such Contributor
+      has been advised of the possibility of such damages.
+
+   9. Accepting Warranty or Additional Liability. While redistributing
+      the Work or Derivative Works thereof, You may choose to offer,
+      and charge a fee for, acceptance of support, warranty, indemnity,
+      or other liability obligations and/or rights consistent with this
+      License. However, in accepting such obligations, You may act only
+      on Your own behalf and on Your sole responsibility, not on behalf
+      of any other Contributor, and only if You agree to indemnify,
+      defend, and hold each Contributor harmless for any liability
+      incurred by, or claims asserted against, such Contributor by reason
+      of your accepting any such warranty or additional liability.
+
+   END OF TERMS AND CONDITIONS
+
+   APPENDIX: How to apply the Apache License to your work.
+
+      To apply the Apache License to your work, attach the following
+      boilerplate notice, with the fields enclosed by brackets "[]"
+      replaced with your own identifying information. (Don't include
+      the brackets!) The text should be enclosed in the appropriate
+      comment syntax for the file format. We also recommend that a
+      file or class name and description of purpose be included on the
+      same "printed page" as the copyright notice for easier
+      identification within third-party archives.
+
+   Copyright 2020 - present, Facebook, Inc
+
+   Licensed under the Apache License, Version 2.0 (the "License");
+   you may not use this file except in compliance with the License.
+   You may obtain a copy of the License at
+
+       http://www.apache.org/licenses/LICENSE-2.0
+
+   Unless required by applicable law or agreed to in writing, software
+   distributed under the License is distributed on an "AS IS" BASIS,
+   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+   See the License for the specific language governing permissions and
+   limitations under the License.
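The appendix above asks for the boilerplate notice to be placed in the comment syntax of the target file. The small Python helper below is only an illustration. It renders the notice, using the copyright line from this LICENSE, as a `#`-comment header for a Python source file. The helper name `license_header` and its `comment_prefix` parameter are hypothetical and are not part of the repository.

```python
# Hypothetical helper: renders the Apache-2.0 boilerplate notice from the
# LICENSE appendix as a comment block for a source file.
NOTICE_TEMPLATE = """Copyright {holder}

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License."""


def license_header(holder: str, comment_prefix: str = "# ") -> str:
    """Return the notice with every line prefixed by comment_prefix."""
    lines = NOTICE_TEMPLATE.format(holder=holder).splitlines()
    # rstrip() keeps blank notice lines free of trailing whitespace ("#").
    return "\n".join((comment_prefix + line).rstrip() for line in lines) + "\n"


if __name__ == "__main__":
    print(license_header("2020 - present, Facebook, Inc"), end="")
```

For other languages, pass a different prefix, for example `"// "` for C++ or CUDA sources.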

GroundingDINO/README.md ADDED

@@ -0,0 +1,163 @@

| 1 |

+

# Grounding DINO

|

| 2 |

+

|

| 3 |

+

---

|

| 4 |

+

|

| 5 |

+

[](https://arxiv.org/abs/2303.05499)

|

| 6 |

+

[](https://youtu.be/wxWDt5UiwY8)

|

| 7 |

+

[](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/zero-shot-object-detection-with-grounding-dino.ipynb)

|

| 8 |

+

[](https://youtu.be/cMa77r3YrDk)

|

| 9 |

+

[](https://huggingface.co/spaces/ShilongLiu/Grounding_DINO_demo)

|

| 10 |

+

|

| 11 |

+

[](https://paperswithcode.com/sota/zero-shot-object-detection-on-mscoco?p=grounding-dino-marrying-dino-with-grounded) \

|

| 12 |

+

[](https://paperswithcode.com/sota/zero-shot-object-detection-on-odinw?p=grounding-dino-marrying-dino-with-grounded) \

|

| 13 |

+

[](https://paperswithcode.com/sota/object-detection-on-coco-minival?p=grounding-dino-marrying-dino-with-grounded) \

|

| 14 |

+

[](https://paperswithcode.com/sota/object-detection-on-coco?p=grounding-dino-marrying-dino-with-grounded)

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

Official PyTorch implementation of [Grounding DINO](https://arxiv.org/abs/2303.05499), a stronger open-set object detector. Code is available now!

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

## Highlight

|

| 22 |

+

|

| 23 |

+

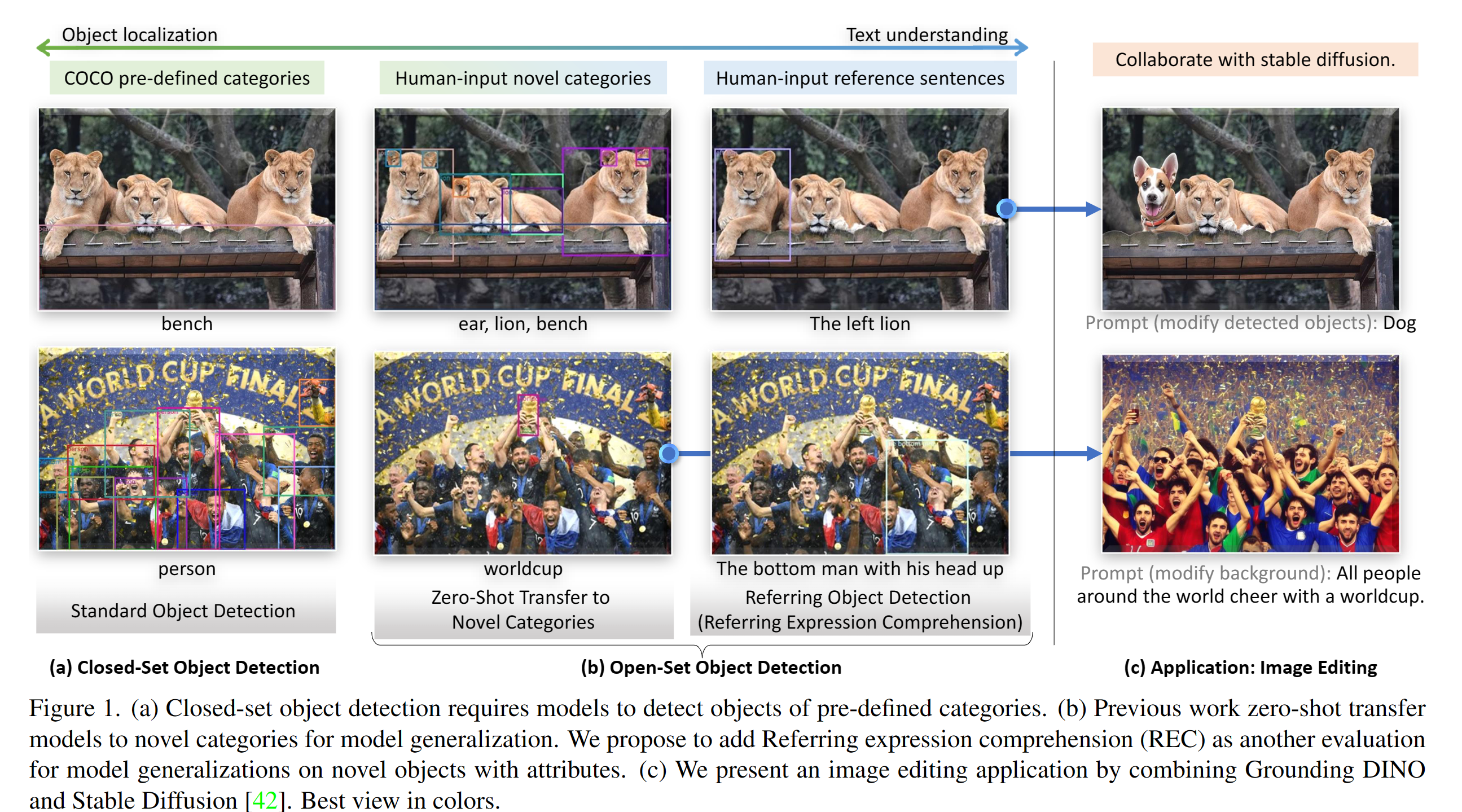

- **Open-Set Detection.** Detect **everything** with language!

|

| 24 |

+

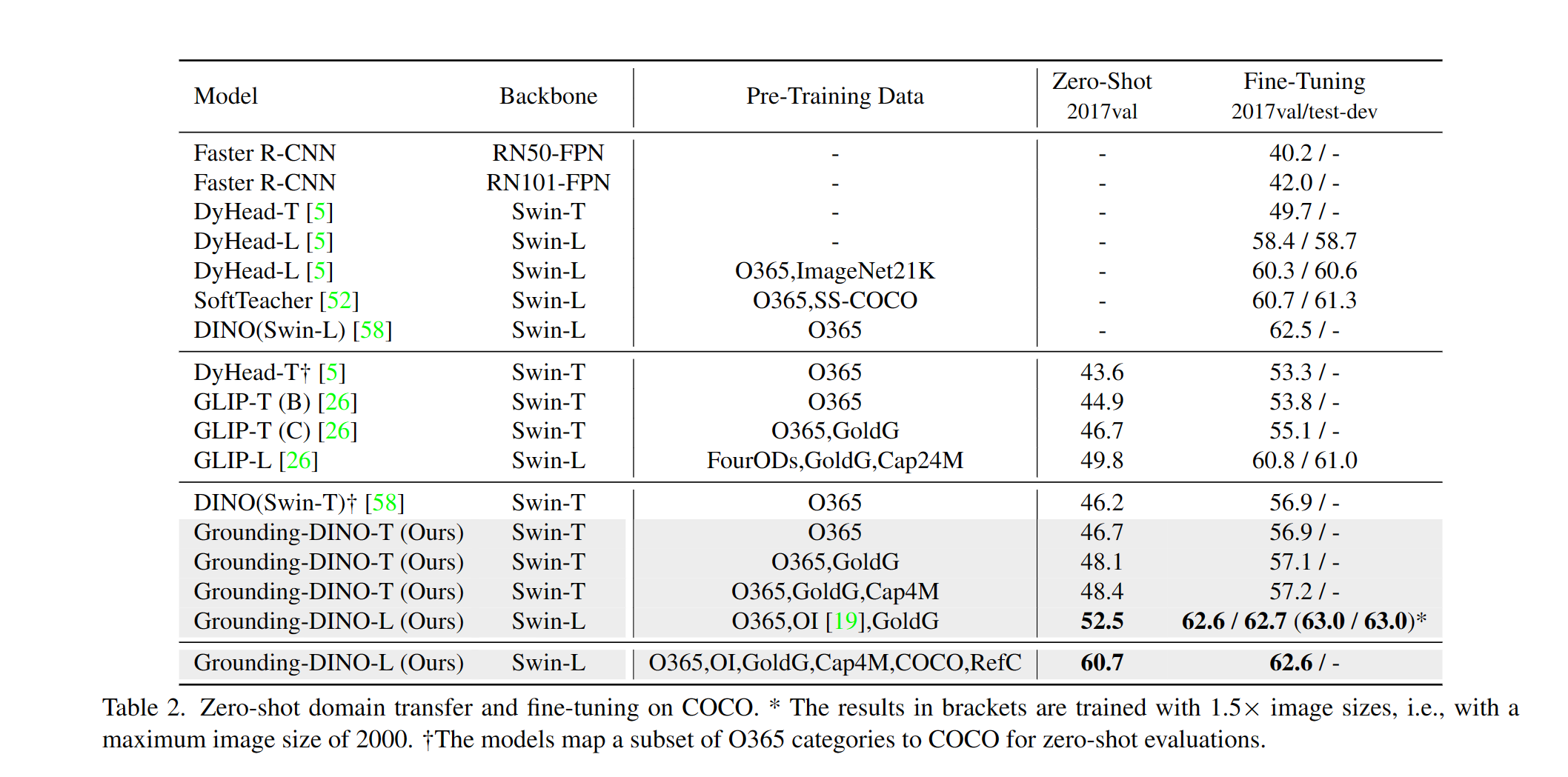

- **High Performancce.** COCO zero-shot **52.5 AP** (training without COCO data!). COCO fine-tune **63.0 AP**.

|

| 25 |

+

- **Flexible.** Collaboration with Stable Diffusion for Image Editting.

|

| 26 |

+

|

| 27 |

+

## News

|

| 28 |

+

[2023/03/28] A YouTube [video](https://youtu.be/cMa77r3YrDk) about Grounding DINO and basic object detection prompt engineering. [[SkalskiP](https://github.com/SkalskiP)] \

|

| 29 |

+

[2023/03/28] Add a [demo](https://huggingface.co/spaces/ShilongLiu/Grounding_DINO_demo) on Hugging Face Space! \

|

| 30 |

+

[2023/03/27] Support CPU-only mode. Now the model can run on machines without GPUs.\

|

| 31 |

+

[2023/03/25] A [demo](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/zero-shot-object-detection-with-grounding-dino.ipynb) for Grounding DINO is available at Colab. [[SkalskiP](https://github.com/SkalskiP)] \

|

| 32 |

+

[2023/03/22] Code is available Now!

|

| 33 |

+

|

| 34 |

+

<details open>

|

| 35 |

+

<summary><font size="4">

|

| 36 |

+

Description

|

| 37 |

+

</font></summary>

|

| 38 |

+

<img src=".asset/hero_figure.png" alt="ODinW" width="100%">

|

| 39 |

+

</details>

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

## TODO

|

| 44 |

+

|

| 45 |

+

- [x] Release inference code and demo.

|

| 46 |

+

- [x] Release checkpoints.

|

| 47 |

+

- [ ] Grounding DINO with Stable Diffusion and GLIGEN demos.

|

| 48 |

+

- [ ] Release training codes.

|

| 49 |

+

|

| 50 |

+

## Install

|

| 51 |

+

|

| 52 |

+

If you have a CUDA environment, please make sure the environment variable `CUDA_HOME` is set. It will be compiled under CPU-only mode if no CUDA available.

|

| 53 |

+

|

| 54 |

+

```bash

|

| 55 |

+

pip install -e .

|

| 56 |

+

```

|

| 57 |

+

|

| 58 |

+

## Demo

|

| 59 |

+

|

| 60 |

+

```bash

|

| 61 |

+

CUDA_VISIBLE_DEVICES=6 python demo/inference_on_a_image.py \

|

| 62 |

+

-c /path/to/config \

|

| 63 |

+

-p /path/to/checkpoint \

|

| 64 |

+

-i .asset/cats.png \

|

| 65 |

+

-o "outputs/0" \

|

| 66 |

+

-t "cat ear." \

|

| 67 |

+

[--cpu-only] # open it for cpu mode

|

| 68 |

+

```

|

| 69 |

+

See the `demo/inference_on_a_image.py` for more details.

|

| 70 |

+

|

| 71 |

+

**Web UI**

|

| 72 |

+

|

| 73 |

+

We also provide a demo code to integrate Grounding DINO with Gradio Web UI. See the file `demo/gradio_app.py` for more details.

|

| 74 |

+

|

| 75 |

+

## Checkpoints

|

| 76 |

+

|

| 77 |

+

<!-- insert a table -->

|

| 78 |

+

<table>

|

| 79 |

+

<thead>

|

| 80 |

+

<tr style="text-align: right;">

|

| 81 |

+

<th></th>

|

| 82 |

+

<th>name</th>

|

| 83 |

+

<th>backbone</th>

|

| 84 |

+

<th>Data</th>

|

| 85 |

+

<th>box AP on COCO</th>

|

| 86 |

+

<th>Checkpoint</th>

|

| 87 |

+

<th>Config</th>

|

| 88 |

+

</tr>

|

| 89 |

+

</thead>

|

| 90 |

+

<tbody>

|

| 91 |

+

<tr>

|

| 92 |

+

<th>1</th>

|

| 93 |

+

<td>GroundingDINO-T</td>

|

| 94 |

+

<td>Swin-T</td>

|

| 95 |

+

<td>O365,GoldG,Cap4M</td>

|

| 96 |

+

<td>48.4 (zero-shot) / 57.2 (fine-tune)</td>

|

| 97 |

+

<td><a href="https://github.com/IDEA-Research/GroundingDINO/releases/download/v0.1.0-alpha/groundingdino_swint_ogc.pth">Github link</a> | <a href="https://huggingface.co/ShilongLiu/GroundingDINO/resolve/main/groundingdino_swint_ogc.pth">HF link</a></td>

|

| 98 |

+

<td><a href="https://github.com/IDEA-Research/GroundingDINO/blob/main/groundingdino/config/GroundingDINO_SwinT_OGC.py">link</a></td>

|

| 99 |

+

</tr>

|

| 100 |

+

</tbody>

|

| 101 |

+

</table>

|

| 102 |

+

|

| 103 |

+

## Results

|

| 104 |

+

|

| 105 |

+

<details open>

|

| 106 |

+

<summary><font size="4">

|

| 107 |

+

COCO Object Detection Results

|

| 108 |

+

</font></summary>

|

| 109 |

+

<img src=".asset/COCO.png" alt="COCO" width="100%">

|

| 110 |

+

</details>

|

| 111 |

+

|

| 112 |

+

<details open>

|

| 113 |

+

<summary><font size="4">

|

| 114 |

+

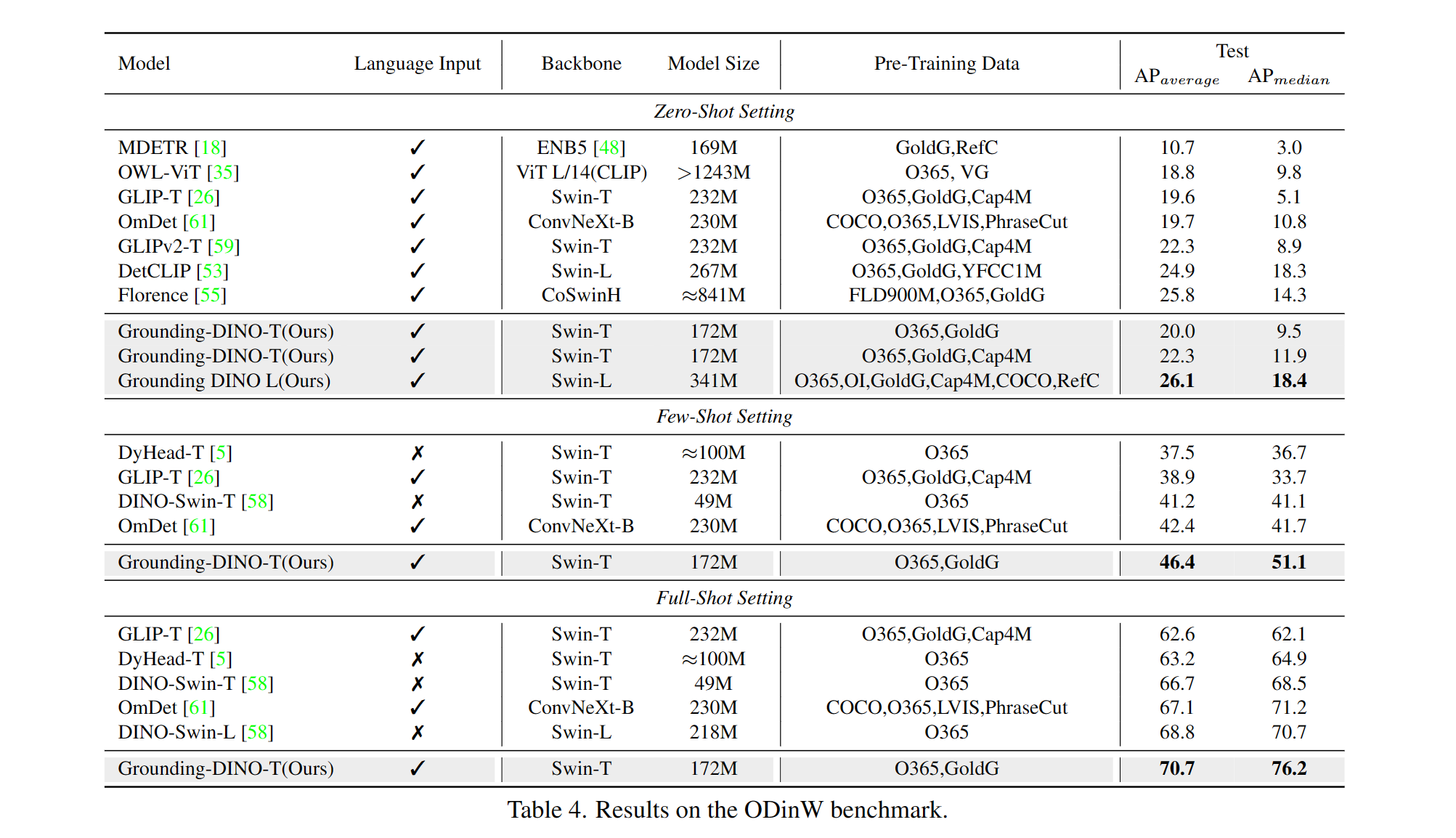

ODinW Object Detection Results

|

| 115 |

+

</font></summary>

|

| 116 |

+

<img src=".asset/ODinW.png" alt="ODinW" width="100%">

|

| 117 |

+

</details>

|

| 118 |

+

|

| 119 |

+

<details open>

|

| 120 |

+

<summary><font size="4">

|

| 121 |

+

Marrying Grounding DINO with <a href="https://github.com/Stability-AI/StableDiffusion">Stable Diffusion</a> for Image Editing

|

| 122 |

+

</font></summary>

|

| 123 |

+

<img src=".asset/GD_SD.png" alt="GD_SD" width="100%">

|

| 124 |

+

</details>

|

| 125 |

+

|

| 126 |

+

<details open>

|

| 127 |

+

<summary><font size="4">

|

| 128 |

+

Marrying Grounding DINO with <a href="https://github.com/gligen/GLIGEN">GLIGEN</a> for more Detailed Image Editing

|

| 129 |

+

</font></summary>

|

| 130 |

+

<img src=".asset/GD_GLIGEN.png" alt="GD_GLIGEN" width="100%">

|

| 131 |

+

</details>

|

| 132 |

+

|

| 133 |

+

## Model

|

| 134 |

+

|

| 135 |

+

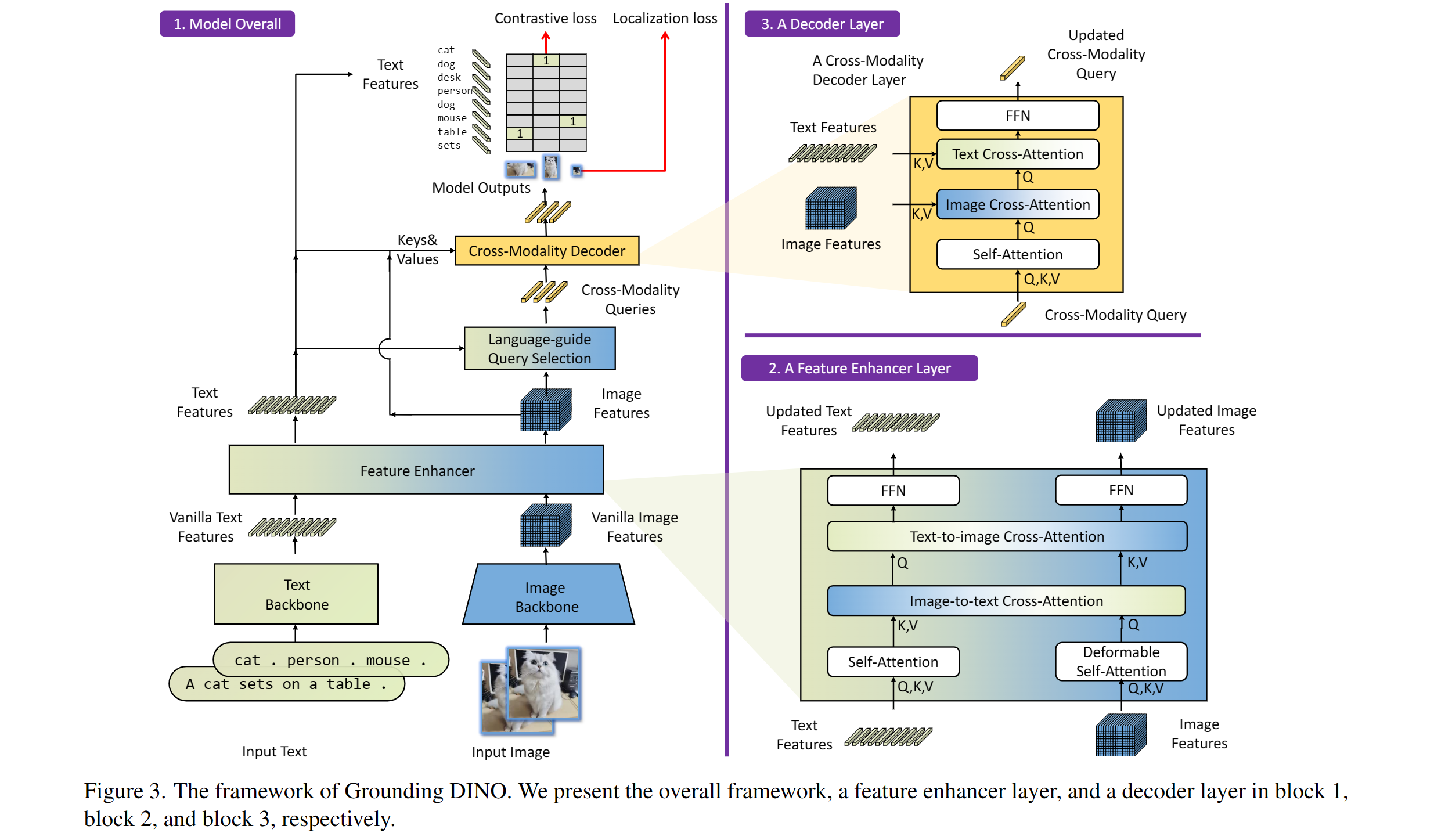

Includes: a text backbone, an image backbone, a feature enhancer, a language-guided query selection, and a cross-modality decoder.

|

| 136 |

+

|

| 137 |

+

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

## Acknowledgement

|

| 141 |

+

|

| 142 |

+

Our model is related to [DINO](https://github.com/IDEA-Research/DINO) and [GLIP](https://github.com/microsoft/GLIP). Thanks for their great work!

|

| 143 |

+

|

| 144 |

+

We also thank great previous work including DETR, Deformable DETR, SMCA, Conditional DETR, Anchor DETR, Dynamic DETR, DAB-DETR, DN-DETR, etc. More related work are available at [Awesome Detection Transformer](https://github.com/IDEACVR/awesome-detection-transformer). A new toolbox [detrex](https://github.com/IDEA-Research/detrex) is available as well.

|

| 145 |

+

|

| 146 |

+

Thanks [Stable Diffusion](https://github.com/Stability-AI/StableDiffusion) and [GLIGEN](https://github.com/gligen/GLIGEN) for their awesome models.

|

| 147 |

+

|

| 148 |

+

|

| 149 |

+

## Citation

|

| 150 |

+

|

| 151 |

+

If you find our work helpful for your research, please consider citing the following BibTeX entry.

|

| 152 |

+

|

| 153 |

+

```bibtex

|

| 154 |

+

@inproceedings{ShilongLiu2023GroundingDM,

|

| 155 |

+

title={Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection},

|

| 156 |

+

author={Shilong Liu and Zhaoyang Zeng and Tianhe Ren and Feng Li and Hao Zhang and Jie Yang and Chunyuan Li and Jianwei Yang and Hang Su and Jun Zhu and Lei Zhang},

|

| 157 |

+

year={2023}

|

| 158 |

+

}

|

| 159 |

+

```

|

| 160 |

+

|

| 161 |

+

|

| 162 |

+

|

| 163 |

+

|
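The README's Model section lists a language-guided query selection step. The Grounding DINO paper describes it as follows: each image token is scored by its highest similarity with any text token, and the top-scoring image tokens become the decoder queries. The NumPy sketch below shows only that selection rule. The function name, the plain dot-product similarity and the array shapes are assumptions made for illustration. The repository's PyTorch code in `groundingdino.py` may differ in its details.

```python
import numpy as np


def language_guided_query_selection(image_tokens: np.ndarray,
                                    text_tokens: np.ndarray,
                                    num_queries: int) -> np.ndarray:
    """Return indices of the image tokens that best match any text token.

    image_tokens: (N_I, d) image features after the feature enhancer
    text_tokens:  (N_T, d) text features after the feature enhancer
    """
    similarity = image_tokens @ text_tokens.T   # (N_I, N_T) dot products
    scores = similarity.max(axis=-1)            # best text match per image token
    # Sort by descending score; a stable sort keeps ties in index order.
    order = np.argsort(-scores, kind="stable")
    return order[:num_queries]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image_tokens = rng.normal(size=(100, 256))
    text_tokens = rng.normal(size=(4, 256))
    print(language_guided_query_selection(image_tokens, text_tokens, 10))
```

Taking the maximum over the text axis means an image token is selected if it matches any phrase in the prompt strongly. For a prompt such as "cat ear.", this keeps regions relevant to either concept.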

GroundingDINO/demo/gradio_app.py ADDED

@@ -0,0 +1,125 @@

import argparse
import os
import warnings

import cv2
import numpy as np
import torch
from PIL import Image

# prepare the environment: build the groundingdino extension and install demo dependencies
os.system("python setup.py build develop --user")
os.system("pip install packaging==21.3")
os.system("pip install gradio")

warnings.filterwarnings("ignore")

import gradio as gr

from groundingdino.models import build_model
from groundingdino.util.slconfig import SLConfig
from groundingdino.util.utils import clean_state_dict
from groundingdino.util.inference import annotate, predict
import groundingdino.datasets.transforms as T

from huggingface_hub import hf_hub_download


# Config and checkpoint for the Grounding DINO Swin-T (OGC) model
config_file = "groundingdino/config/GroundingDINO_SwinT_OGC.py"
ckpt_repo_id = "ShilongLiu/GroundingDINO"
ckpt_filename = "groundingdino_swint_ogc.pth"


def load_model_hf(model_config_path, repo_id, filename, device='cpu'):
    args = SLConfig.fromfile(model_config_path)
    args.device = device  # set before build_model, as load_model in inference_on_a_image.py does
    model = build_model(args)

    # download the checkpoint from the Hugging Face Hub (or reuse the local cache)
    cache_file = hf_hub_download(repo_id=repo_id, filename=filename)
    checkpoint = torch.load(cache_file, map_location='cpu')
    log = model.load_state_dict(clean_state_dict(checkpoint['model']), strict=False)
    print("Model loaded from {} \n => {}".format(cache_file, log))
    _ = model.eval()
    return model


def image_transform_grounding(init_image):
    # resize (shorter side 800, longer side at most 1333), convert to a tensor, normalize with ImageNet statistics
    transform = T.Compose([
        T.RandomResize([800], max_size=1333),
        T.ToTensor(),
        T.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ])
    image, _ = transform(init_image, None)  # 3, h, w
    return init_image, image


def image_transform_grounding_for_vis(init_image):
    # same resize as above, but keep a PIL image to draw the boxes on
    transform = T.Compose([
        T.RandomResize([800], max_size=1333),
    ])
    image, _ = transform(init_image, None)  # resized PIL image
    return image


model = load_model_hf(config_file, ckpt_repo_id, ckpt_filename)


def run_grounding(input_image, grounding_caption, box_threshold, text_threshold):
    init_image = input_image.convert("RGB")

    _, image_tensor = image_transform_grounding(init_image)
    image_pil: Image.Image = image_transform_grounding_for_vis(init_image)

    # run grounding
    boxes, logits, phrases = predict(model, image_tensor, grounding_caption, box_threshold, text_threshold, device='cpu')
    annotated_frame = annotate(image_source=np.asarray(image_pil), boxes=boxes, logits=logits, phrases=phrases)
    # annotate returns a BGR array; convert back to RGB for PIL
    image_with_box = Image.fromarray(cv2.cvtColor(annotated_frame, cv2.COLOR_BGR2RGB))

    return image_with_box


if __name__ == "__main__":

    parser = argparse.ArgumentParser("Grounding DINO demo", add_help=True)
    parser.add_argument("--debug", action="store_true", help="run in debug mode")
    parser.add_argument("--share", action="store_true", help="create a public share link")
    args = parser.parse_args()

    block = gr.Blocks().queue()
    with block:
        gr.Markdown("# [Grounding DINO](https://github.com/IDEA-Research/GroundingDINO)")
        gr.Markdown("### Open-World Detection with Grounding DINO")

        with gr.Row():
            with gr.Column():
                # gr.Image / gr.Button("Run") instead of source='upload', label="Run",
                # gr.outputs.Image and .style(), which Gradio 4 removed
                input_image = gr.Image(type="pil")
                grounding_caption = gr.Textbox(label="Detection Prompt")
                run_button = gr.Button("Run")
                with gr.Accordion("Advanced options", open=False):
                    box_threshold = gr.Slider(
                        label="Box Threshold", minimum=0.0, maximum=1.0, value=0.25, step=0.001
                    )
                    text_threshold = gr.Slider(
                        label="Text Threshold", minimum=0.0, maximum=1.0, value=0.25, step=0.001
                    )

            with gr.Column():
                gallery = gr.Image(
                    type="pil",
                    # label="grounding results"
                )
                # gallery = gr.Gallery(label="Generated images", show_label=False).style(
                #         grid=[1], height="auto", container=True, full_width=True, full_height=True)

        run_button.click(fn=run_grounding, inputs=[
                         input_image, grounding_caption, box_threshold, text_threshold], outputs=[gallery])

    block.launch(server_name='0.0.0.0', server_port=7579, debug=args.debug, share=args.share)
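Both demo scripts resize inputs with `T.RandomResize([800], max_size=1333)` before normalizing. The transform's source is not part of this diff; assuming it follows the DETR-style rule (scale the shorter side to 800 unless that would push the longer side past 1333, in which case shrink the target so the longer side fits), the resulting size can be sketched as below. `target_size` is a hypothetical helper, not a function from `groundingdino.datasets.transforms`.

```python
def target_size(width, height, size=800, max_size=1333):
    """Return (new_height, new_width) under an assumed DETR-style resize rule.

    The shorter side is scaled to `size`; if that would make the longer side
    exceed `max_size`, `size` is reduced so the longer side fits instead.
    """
    short_side = float(min(width, height))
    long_side = float(max(width, height))
    if long_side / short_side * size > max_size:
        size = int(round(max_size * short_side / long_side))
    if width < height:
        # portrait: width is the short side
        return int(size * height / width), size
    # landscape or square: height is the short side
    return size, int(size * width / height)


if __name__ == "__main__":
    print(target_size(640, 480))   # typical landscape photo
    print(target_size(2000, 500))  # very wide panorama, limited by max_size
```

Under this rule a 640x480 image becomes 800x1066 (height x width), while a 2000x500 panorama is capped at 333x1332 so its long side stays under 1333.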

GroundingDINO/demo/inference_on_a_image.py

ADDED


@@ -0,0 +1,172 @@


import argparse
import os

import numpy as np
import torch
from PIL import Image, ImageDraw, ImageFont

import groundingdino.datasets.transforms as T
from groundingdino.models import build_model
from groundingdino.util.slconfig import SLConfig
from groundingdino.util.utils import clean_state_dict, get_phrases_from_posmap


def plot_boxes_to_image(image_pil, tgt):
    H, W = tgt["size"]
    boxes = tgt["boxes"]
    labels = tgt["labels"]
    assert len(boxes) == len(labels), "boxes and labels must have same length"

    draw = ImageDraw.Draw(image_pil)
    mask = Image.new("L", image_pil.size, 0)
    mask_draw = ImageDraw.Draw(mask)
    font = ImageFont.load_default()

    # draw boxes and masks
    for box, label in zip(boxes, labels):
        # from 0..1 to 0..W, 0..H
        box = box * torch.Tensor([W, H, W, H])
        # from cxcywh (center, width, height) to xyxy (corners)
        box[:2] -= box[2:] / 2
        box[2:] += box[:2]
        # random color
        color = tuple(np.random.randint(0, 255, size=3).tolist())
        # draw
        x0, y0, x1, y1 = box
        x0, y0, x1, y1 = int(x0), int(y0), int(x1), int(y1)

        draw.rectangle([x0, y0, x1, y1], outline=color, width=6)

        # label background: textbbox on newer Pillow, textsize on older releases
        if hasattr(font, "getbbox"):
            bbox = draw.textbbox((x0, y0), str(label), font)
        else:
            w, h = draw.textsize(str(label), font)
            bbox = (x0, y0, w + x0, y0 + h)
        draw.rectangle(bbox, fill=color)
        draw.text((x0, y0), str(label), fill="white", font=font)

        mask_draw.rectangle([x0, y0, x1, y1], fill=255, width=6)

    return image_pil, mask


def load_image(image_path):
    # load image
    image_pil = Image.open(image_path).convert("RGB")

    transform = T.Compose(
        [
            T.RandomResize([800], max_size=1333),
            T.ToTensor(),
            T.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
        ]
    )
    image, _ = transform(image_pil, None)  # 3, h, w
    return image_pil, image


def load_model(model_config_path, model_checkpoint_path, cpu_only=False):
    args = SLConfig.fromfile(model_config_path)
    args.device = "cuda" if not cpu_only else "cpu"
    model = build_model(args)
    checkpoint = torch.load(model_checkpoint_path, map_location="cpu")
    load_res = model.load_state_dict(clean_state_dict(checkpoint["model"]), strict=False)
    print(load_res)
    _ = model.eval()
    return model


def get_grounding_output(model, image, caption, box_threshold, text_threshold, with_logits=True, cpu_only=False):
    # normalize the caption: lowercase, strip whitespace, end with "."
    caption = caption.lower()
    caption = caption.strip()
    if not caption.endswith("."):
        caption = caption + "."
    device = "cuda" if not cpu_only else "cpu"
    model = model.to(device)
    image = image.to(device)
    with torch.no_grad():
        outputs = model(image[None], captions=[caption])
    logits = outputs["pred_logits"].cpu().sigmoid()[0]  # (nq, 256)
    boxes = outputs["pred_boxes"].cpu()[0]  # (nq, 4)

    # filter output: keep queries whose best token score exceeds box_threshold
    logits_filt = logits.clone()
    boxes_filt = boxes.clone()
    filt_mask = logits_filt.max(dim=1)[0] > box_threshold
    logits_filt = logits_filt[filt_mask]  # num_filt, 256
    boxes_filt = boxes_filt[filt_mask]  # num_filt, 4

    # get phrase
    tokenizer = model.tokenizer
    tokenized = tokenizer(caption)
    # build pred: tokens scoring above text_threshold form each box's phrase
    pred_phrases = []
    for logit, box in zip(logits_filt, boxes_filt):
        pred_phrase = get_phrases_from_posmap(logit > text_threshold, tokenized, tokenizer)
        if with_logits:
            pred_phrases.append(pred_phrase + f"({str(logit.max().item())[:4]})")
        else:
            pred_phrases.append(pred_phrase)

    return boxes_filt, pred_phrases


if __name__ == "__main__":

    parser = argparse.ArgumentParser("Grounding DINO example", add_help=True)
    parser.add_argument("--config_file", "-c", type=str, required=True, help="path to config file")
    parser.add_argument(
        "--checkpoint_path", "-p", type=str, required=True, help="path to checkpoint file"
    )
    parser.add_argument("--image_path", "-i", type=str, required=True, help="path to image file")
    parser.add_argument("--text_prompt", "-t", type=str, required=True, help="text prompt")
    parser.add_argument(
        "--output_dir", "-o", type=str, default="outputs", help="output directory"
    )

    parser.add_argument("--box_threshold", type=float, default=0.3, help="box threshold")
    parser.add_argument("--text_threshold", type=float, default=0.25, help="text threshold")

    parser.add_argument("--cpu-only", action="store_true", help="run on CPU only (default: False)")
    args = parser.parse_args()

    # cfg
    config_file = args.config_file  # path to the model config file
    checkpoint_path = args.checkpoint_path  # path to the model checkpoint
    image_path = args.image_path
    text_prompt = args.text_prompt
    output_dir = args.output_dir
    box_threshold = args.box_threshold
    text_threshold = args.text_threshold

    # make dir
    os.makedirs(output_dir, exist_ok=True)
    # load image
    image_pil, image = load_image(image_path)
    # load model
    model = load_model(config_file, checkpoint_path, cpu_only=args.cpu_only)

    # visualize raw image
    image_pil.save(os.path.join(output_dir, "raw_image.jpg"))

    # run model
    boxes_filt, pred_phrases = get_grounding_output(
        model, image, text_prompt, box_threshold, text_threshold, cpu_only=args.cpu_only
    )

    # visualize pred
    size = image_pil.size
    pred_dict = {
        "boxes": boxes_filt,
        "size": [size[1], size[0]],  # H,W
        "labels": pred_phrases,
    }
    image_with_box = plot_boxes_to_image(image_pil, pred_dict)[0]
    image_with_box.save(os.path.join(output_dir, "pred.jpg"))
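`get_grounding_output` applies two thresholds: a query survives only if its highest token probability exceeds `box_threshold`, and its phrase is then assembled from the tokens whose probability exceeds `text_threshold`. The sketch below mirrors that logic with plain lists instead of tensors. It is a simplified stand-in: `filter_predictions` is hypothetical, and joining whole-word tokens with spaces replaces `get_phrases_from_posmap`, which works on the BERT tokenizer's subword ids.

```python
def filter_predictions(probs, boxes, tokens, box_threshold=0.3, text_threshold=0.25, with_logits=True):
    """List-based sketch of the two-threshold filtering in get_grounding_output.

    probs:  one list per query of per-token probabilities (already sigmoid-ed)
    boxes:  one normalized (cx, cy, w, h) tuple per query
    tokens: caption tokens aligned with each probability list (simplified:
            whole words, not the subword tokens the real model uses)
    """
    kept = []
    for query_probs, box in zip(probs, boxes):
        best = max(query_probs)
        # box threshold: drop queries whose strongest token match is weak
        if best <= box_threshold:
            continue
        # text threshold: the phrase is built from every token above it
        phrase = " ".join(tok for tok, p in zip(tokens, query_probs) if p > text_threshold)
        # mirror the script's "phrase(score)" label, score truncated to 4 characters
        label = f"{phrase}({str(best)[:4]})" if with_logits else phrase
        kept.append((box, label))
    return kept


if __name__ == "__main__":
    tokens = ["black", "cat", "dog"]
    probs = [[0.6, 0.9, 0.1], [0.2, 0.28, 0.1], [0.1, 0.2, 0.45]]
    boxes = [(0.5, 0.5, 0.2, 0.2), (0.1, 0.1, 0.1, 0.1), (0.3, 0.3, 0.1, 0.2)]
    for box, label in filter_predictions(probs, boxes, tokens):
        print(box, label)
```

With the default thresholds the second query is dropped (its best score, 0.28, is below 0.3), and the first query's label combines both tokens above 0.25. Raising `box_threshold` trades recall for fewer spurious boxes; `text_threshold` only changes which words appear in each label.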

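The model returns boxes as normalized (center x, center y, width, height); `plot_boxes_to_image` scales them by `[W, H, W, H]` and shifts them to corner form before drawing. The same conversion for a single box, written without torch as a hypothetical helper `cxcywh_norm_to_xyxy_pixels`, is sketched below.

```python
def cxcywh_norm_to_xyxy_pixels(box, width, height):
    """Convert one normalized (cx, cy, w, h) box to integer pixel corners (x0, y0, x1, y1)."""
    cx, cy, w, h = box
    # scale from [0, 1] to pixel units, like multiplying by [W, H, W, H]
    cx, w = cx * width, w * width
    cy, h = cy * height, h * height
    # center/size -> top-left corner, then bottom-right = top-left + size
    x0, y0 = cx - w / 2, cy - h / 2
    x1, y1 = x0 + w, y0 + h
    # int() truncates toward zero, as the script's int() calls do
    return int(x0), int(y0), int(x1), int(y1)


if __name__ == "__main__":
    print(cxcywh_norm_to_xyxy_pixels((0.5, 0.5, 0.25, 0.5), 640, 480))
```

A centered box covering a quarter of the width and half the height of a 640x480 image maps to corners (240, 120) and (400, 360). Note that the script does not clip, so a box centered at the image origin yields negative coordinates.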

GroundingDINO/groundingdino/_C.cp38-win_amd64.pyd

ADDED

Binary file (666 kB).

GroundingDINO/groundingdino/__init__.py

ADDED

File without changes

GroundingDINO/groundingdino/__pycache__/__init__.cpython-38.pyc

ADDED

Binary file (155 Bytes).

GroundingDINO/groundingdino/config/GroundingDINO_SwinB.py

ADDED


@@ -0,0 +1,43 @@


batch_size = 1
modelname = "groundingdino"
backbone = "swin_B_384_22k"
position_embedding = "sine"
pe_temperatureH = 20
pe_temperatureW = 20
return_interm_indices = [1, 2, 3]
backbone_freeze_keywords = None
enc_layers = 6
dec_layers = 6
pre_norm = False
dim_feedforward = 2048
hidden_dim = 256
dropout = 0.0
nheads = 8
num_queries = 900
query_dim = 4
num_patterns = 0
num_feature_levels = 4
enc_n_points = 4
dec_n_points = 4
two_stage_type = "standard"
two_stage_bbox_embed_share = False
two_stage_class_embed_share = False
transformer_activation = "relu"
dec_pred_bbox_embed_share = True
dn_box_noise_scale = 1.0
dn_label_noise_ratio = 0.5
dn_label_coef = 1.0
dn_bbox_coef = 1.0
embed_init_tgt = True
dn_labelbook_size = 2000
max_text_len = 256
text_encoder_type = "bert-base-uncased"
use_text_enhancer = True
use_fusion_layer = True
use_checkpoint = True
use_transformer_ckpt = True
use_text_cross_attention = True
text_dropout = 0.0
fusion_dropout = 0.0
fusion_droppath = 0.1
sub_sentence_present = True
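The config files are plain Python modules whose top-level assignments define the model's hyperparameters. How `SLConfig.fromfile` parses them is not shown in this diff; as a stand-in, the standard library's `runpy.run_path` can execute such a file and collect its public names into a dict, which is enough to compare two configs. `load_py_config` and `diff_configs` are hypothetical helpers, not part of `groundingdino`.

```python
import runpy

_MISSING = object()  # marks a key present in only one of the two configs


def load_py_config(path):
    """Execute a Python config file and return its public top-level names as a dict."""
    namespace = runpy.run_path(path)
    # drop dunder entries such as __name__, __file__ and __builtins__
    return {k: v for k, v in namespace.items() if not k.startswith("_")}


def diff_configs(a, b):
    """Return {key: (value_in_a, value_in_b)} for every key whose values differ."""
    keys = sorted(set(a) | set(b))
    return {
        k: (a.get(k, _MISSING), b.get(k, _MISSING))
        for k in keys
        if a.get(k, _MISSING) != b.get(k, _MISSING)
    }
```

Calling `diff_configs(load_py_config("groundingdino/config/GroundingDINO_SwinB.py"), load_py_config("groundingdino/config/GroundingDINO_SwinT_OGC.py"))` would report at least `backbone`, since that line differs between the two files in this upload.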

GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py

ADDED


@@ -0,0 +1,43 @@


batch_size = 1
modelname = "groundingdino"
backbone = "swin_T_224_1k"
position_embedding = "sine"
pe_temperatureH = 20
pe_temperatureW = 20
return_interm_indices = [1, 2, 3]
backbone_freeze_keywords = None
enc_layers = 6
dec_layers = 6
pre_norm = False
dim_feedforward = 2048
hidden_dim = 256
dropout = 0.0
nheads = 8
num_queries = 900
query_dim = 4
num_patterns = 0
num_feature_levels = 4
enc_n_points = 4
dec_n_points = 4
two_stage_type = "standard"