Commit 830d0b4

Parent(s): 611a9c6

Upload 4 files

- app.py +232 -0

- doge.png +0 -0

- equation.png +0 -0

- requirements.txt +8 -0

app.py

ADDED

@@ -0,0 +1,232 @@

+import gradio as gr
+import torch
+from transformers import AutoConfig, AutoModelForCausalLM
+from janus.models import MultiModalityCausalLM, VLChatProcessor
+from janus.utils.io import load_pil_images
+from PIL import Image
+
+import numpy as np
+import os
+import spaces  # Import spaces for ZeroGPU compatibility
+
+
+# Load model and processor
+model_path = "deepseek-ai/Janus-1.3B"
+config = AutoConfig.from_pretrained(model_path)
+language_config = config.language_config
+language_config._attn_implementation = 'eager'
+vl_gpt = AutoModelForCausalLM.from_pretrained(model_path,
+                                              language_config=language_config,
+                                              trust_remote_code=True)
+if torch.cuda.is_available():
+    vl_gpt = vl_gpt.to(torch.bfloat16).cuda()
+else:
+    vl_gpt = vl_gpt.to(torch.float16)
+
+vl_chat_processor = VLChatProcessor.from_pretrained(model_path)
+tokenizer = vl_chat_processor.tokenizer
+cuda_device = 'cuda' if torch.cuda.is_available() else 'cpu'

+# Multimodal Understanding function
+@torch.inference_mode()
+@spaces.GPU(duration=120)
+# Multimodal Understanding function
+def multimodal_understanding(image, question, seed, top_p, temperature):
+    # Clear CUDA cache before generating
+    torch.cuda.empty_cache()
+
+    # set seed
+    torch.manual_seed(seed)
+    np.random.seed(seed)
+    torch.cuda.manual_seed(seed)
+
+    conversation = [
+        {
+            "role": "User",
+            "content": f"<image_placeholder>\n{question}",
+            "images": [image],
+        },
+        {"role": "Assistant", "content": ""},
+    ]
+
+    pil_images = [Image.fromarray(image)]
+    prepare_inputs = vl_chat_processor(
+        conversations=conversation, images=pil_images, force_batchify=True
+    ).to(cuda_device, dtype=torch.bfloat16 if torch.cuda.is_available() else torch.float16)
+
+
+    inputs_embeds = vl_gpt.prepare_inputs_embeds(**prepare_inputs)
+
+    outputs = vl_gpt.language_model.generate(
+        inputs_embeds=inputs_embeds,
+        attention_mask=prepare_inputs.attention_mask,
+        pad_token_id=tokenizer.eos_token_id,
+        bos_token_id=tokenizer.bos_token_id,
+        eos_token_id=tokenizer.eos_token_id,
+        max_new_tokens=512,
+        do_sample=False if temperature == 0 else True,
+        use_cache=True,
+        temperature=temperature,
+        top_p=top_p,
+    )
+
+    answer = tokenizer.decode(outputs[0].cpu().tolist(), skip_special_tokens=True)
+    return answer
+
+

+def generate(input_ids,
+             width,
+             height,
+             temperature: float = 1,
+             parallel_size: int = 5,
+             cfg_weight: float = 5,
+             image_token_num_per_image: int = 576,
+             patch_size: int = 16):
+    # Clear CUDA cache before generating
+    torch.cuda.empty_cache()
+
+    tokens = torch.zeros((parallel_size * 2, len(input_ids)), dtype=torch.int).to(cuda_device)
+    for i in range(parallel_size * 2):
+        tokens[i, :] = input_ids
+        if i % 2 != 0:
+            tokens[i, 1:-1] = vl_chat_processor.pad_id
+    inputs_embeds = vl_gpt.language_model.get_input_embeddings()(tokens)
+    generated_tokens = torch.zeros((parallel_size, image_token_num_per_image), dtype=torch.int).to(cuda_device)
+
+    pkv = None
+    for i in range(image_token_num_per_image):
+        outputs = vl_gpt.language_model.model(inputs_embeds=inputs_embeds,
+                                              use_cache=True,
+                                              past_key_values=pkv)
+        pkv = outputs.past_key_values
+        hidden_states = outputs.last_hidden_state
+        logits = vl_gpt.gen_head(hidden_states[:, -1, :])
+        logit_cond = logits[0::2, :]
+        logit_uncond = logits[1::2, :]
+        logits = logit_uncond + cfg_weight * (logit_cond - logit_uncond)
+        probs = torch.softmax(logits / temperature, dim=-1)
+        next_token = torch.multinomial(probs, num_samples=1)
+        generated_tokens[:, i] = next_token.squeeze(dim=-1)
+        next_token = torch.cat([next_token.unsqueeze(dim=1), next_token.unsqueeze(dim=1)], dim=1).view(-1)
+        img_embeds = vl_gpt.prepare_gen_img_embeds(next_token)
+        inputs_embeds = img_embeds.unsqueeze(dim=1)
+    patches = vl_gpt.gen_vision_model.decode_code(generated_tokens.to(dtype=torch.int),
+                                                  shape=[parallel_size, 8, width // patch_size, height // patch_size])
+
+    return generated_tokens.to(dtype=torch.int), patches
+
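Review note: `generate` builds a batch in which even rows keep the prompt and odd rows have it replaced by `pad_id`, then mixes the two sets of logits before sampling. The arithmetic of that mixing step can be sketched in plain Python, with no model or tensors involved. The helper names `cfg_logits` and `softmax` and the sample values are illustrative assumptions, not part of app.py.

```python
import math


def cfg_logits(cond, uncond, cfg_weight):
    """Mix conditional and unconditional logits, as in
    logit_uncond + cfg_weight * (logit_cond - logit_uncond)."""
    return [u + cfg_weight * (c - u) for c, u in zip(cond, uncond)]


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a flat list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for a three-token vocabulary.
cond = [2.0, 0.5, -1.0]    # row that saw the prompt
uncond = [1.0, 1.0, 1.0]   # row whose prompt was padded out

mixed = cfg_logits(cond, uncond, cfg_weight=5.0)
probs = softmax(mixed, temperature=1.0)
```

With `cfg_weight=1` the mix reduces to the conditional logits; larger weights push the sampling distribution further toward tokens that the prompt favours.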

+def unpack(dec, width, height, parallel_size=5):
+    dec = dec.to(torch.float32).cpu().numpy().transpose(0, 2, 3, 1)
+    dec = np.clip((dec + 1) / 2 * 255, 0, 255)
+
+    visual_img = np.zeros((parallel_size, width, height, 3), dtype=np.uint8)
+    visual_img[:, :, :] = dec
+
+    return visual_img
+
+
+
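Review note: `unpack` rescales decoder output from the range [-1, 1] to 8-bit pixel values. The per-value arithmetic can be sketched without NumPy. `to_uint8` is a hypothetical helper, and the sketch assumes that assigning floats into the `uint8` array truncates toward zero.

```python
def to_uint8(value):
    """Map a decoder output in [-1, 1] to an integer pixel value in [0, 255]."""
    scaled = (value + 1) / 2 * 255       # same affine rescale as in unpack
    clipped = min(max(scaled, 0), 255)   # plays the role of np.clip
    return int(clipped)                  # truncation, assumed to match the uint8 cast


# Values outside [-1, 1] are clamped rather than wrapped around.
pixels = [to_uint8(v) for v in (-1.0, 0.0, 1.0, 1.5)]
```

Clipping before the integer conversion matters here: without it, an out-of-range value could wrap around when stored as an unsigned byte.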

+@torch.inference_mode()
+@spaces.GPU(duration=120)  # Specify a duration to avoid timeout
+def generate_image(prompt,
+                   seed=None,
+                   guidance=5):
+    # Clear CUDA cache and avoid tracking gradients
+    torch.cuda.empty_cache()
+    # Set the seed for reproducible results
+    if seed is not None:
+        torch.manual_seed(seed)
+        torch.cuda.manual_seed(seed)
+        np.random.seed(seed)
+    width = 384
+    height = 384
+    parallel_size = 5
+
+    with torch.no_grad():
+        messages = [{'role': 'User', 'content': prompt},
+                    {'role': 'Assistant', 'content': ''}]
+        text = vl_chat_processor.apply_sft_template_for_multi_turn_prompts(conversations=messages,
+                                                                           sft_format=vl_chat_processor.sft_format,
+                                                                           system_prompt='')
+        text = text + vl_chat_processor.image_start_tag
+        input_ids = torch.LongTensor(tokenizer.encode(text))
+        output, patches = generate(input_ids,
+                                   width // 16 * 16,
+                                   height // 16 * 16,
+                                   cfg_weight=guidance,
+                                   parallel_size=parallel_size)
+        images = unpack(patches,
+                        width // 16 * 16,
+                        height // 16 * 16)
+
+        return [Image.fromarray(images[i]).resize((1024, 1024), Image.LANCZOS) for i in range(parallel_size)]
+
+
+
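Review note: `generate_image` rounds the 384 by 384 target down to a multiple of 16, while `generate` defaults to `patch_size=16` and `image_token_num_per_image=576`. The sketch below shows how those numbers appear to fit together, assuming one generated token per 16 by 16 patch. `token_grid` is a hypothetical helper, and the relationship is inferred from the defaults rather than stated in the code.

```python
def token_grid(width, height, patch_size=16):
    """Round each side down to a multiple of patch_size and count patches per side."""
    w = width // patch_size * patch_size    # same rounding as width // 16 * 16
    h = height // patch_size * patch_size
    return w // patch_size, h // patch_size


cols, rows = token_grid(384, 384)
tokens_per_image = cols * rows  # compare with image_token_num_per_image
```

If the width or height were changed without also updating `image_token_num_per_image`, the `shape` passed to `decode_code` would presumably no longer match the number of generated tokens.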

| 165 |

+

# Gradio interface

|

| 166 |

+

with gr.Blocks() as demo:

|

| 167 |

+

gr.Markdown(value="# Multimodal Understanding")

|

| 168 |

+

# with gr.Row():

|

| 169 |

+

with gr.Row():

|

| 170 |

+

image_input = gr.Image()

|

| 171 |

+

with gr.Column():

|

| 172 |

+

question_input = gr.Textbox(label="Question")

|

| 173 |

+

und_seed_input = gr.Number(label="Seed", precision=0, value=42)

|

| 174 |

+

top_p = gr.Slider(minimum=0, maximum=1, value=0.95, step=0.05, label="top_p")

|

| 175 |

+

temperature = gr.Slider(minimum=0, maximum=1, value=0.1, step=0.05, label="temperature")

|

| 176 |

+

|

| 177 |

+

understanding_button = gr.Button("Chat")

|

| 178 |

+

understanding_output = gr.Textbox(label="Response")

|

| 179 |

+

|

| 180 |

+

examples_inpainting = gr.Examples(

|

| 181 |

+

label="Multimodal Understanding examples",

|

| 182 |

+

examples=[

|

| 183 |

+

[

|

| 184 |

+

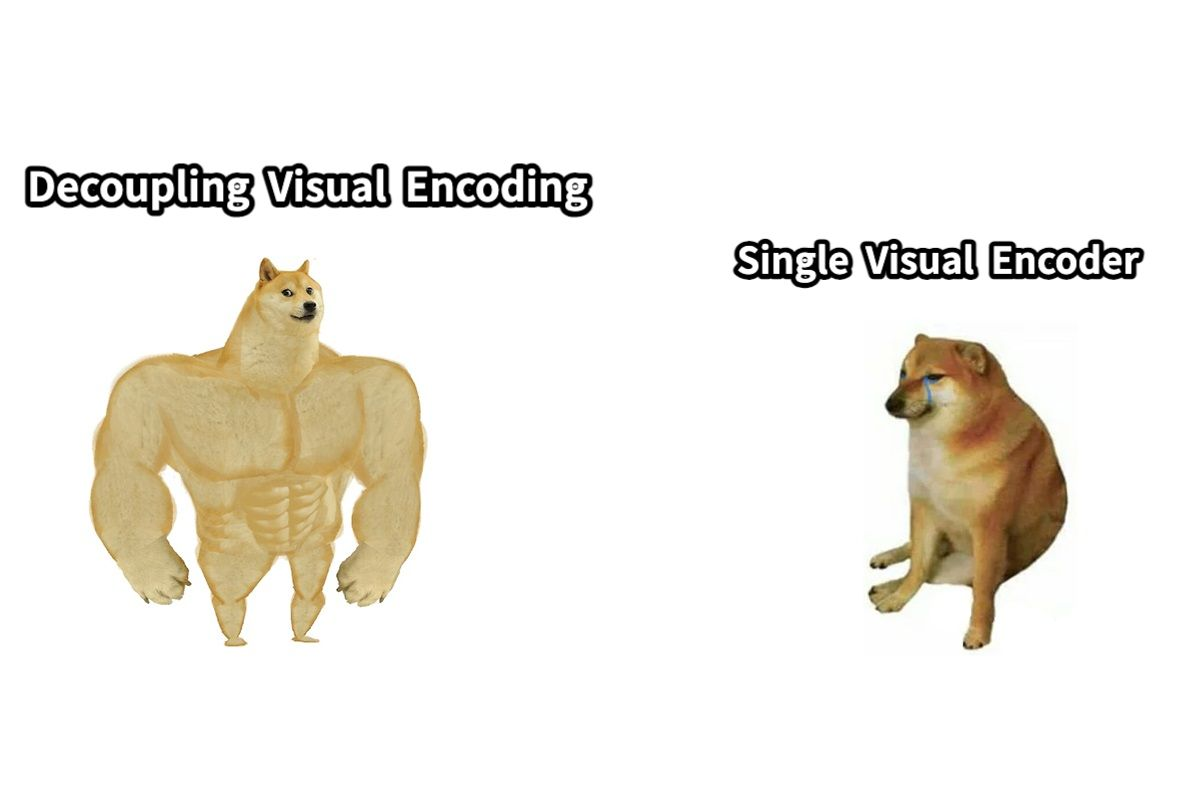

"explain this meme",

|

| 185 |

+

"doge.png",

|

| 186 |

+

],

|

| 187 |

+

[

|

| 188 |

+

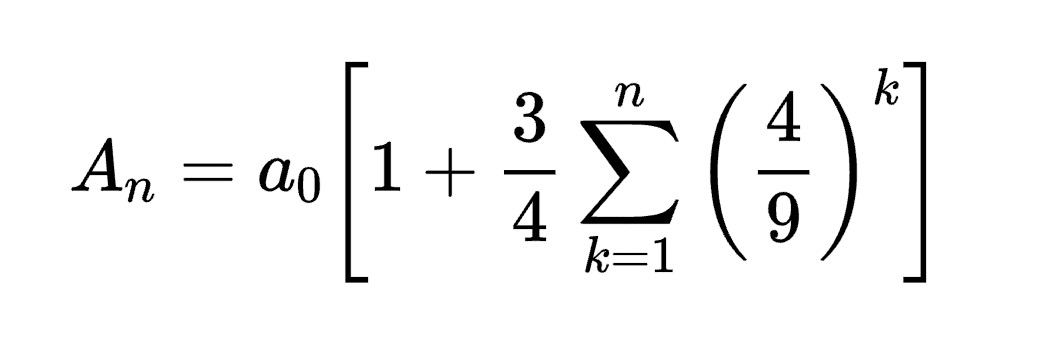

"Convert the formula into latex code.",

|

| 189 |

+

"equation.png",

|

| 190 |

+

],

|

| 191 |

+

],

|

| 192 |

+

inputs=[question_input, image_input],

|

| 193 |

+

)

|

| 194 |

+

|

| 195 |

+

|

| 196 |

+

gr.Markdown(value="# Text-to-Image Generation")

|

| 197 |

+

|

| 198 |

+

|

| 199 |

+

|

| 200 |

+

with gr.Row():

|

| 201 |

+

cfg_weight_input = gr.Slider(minimum=1, maximum=10, value=5, step=0.5, label="CFG Weight")

|

| 202 |

+

|

| 203 |

+

prompt_input = gr.Textbox(label="Prompt")

|

| 204 |

+

seed_input = gr.Number(label="Seed (Optional)", precision=0, value=12345)

|

| 205 |

+

|

| 206 |

+

generation_button = gr.Button("Generate Images")

|

| 207 |

+

|

| 208 |

+

image_output = gr.Gallery(label="Generated Images", columns=2, rows=2, height=300)

|

| 209 |

+

|

| 210 |

+

examples_t2i = gr.Examples(

|

| 211 |

+

label="Text to image generation examples. (Tips for designing prompts: Adding description like 'digital art' at the end of the prompt or writing the prompt in more detail can help produce better images!)",

|

| 212 |

+

examples=[

|

| 213 |

+

"Master shifu racoon wearing drip attire as a street gangster.",

|

| 214 |

+

"A cute and adorable baby fox with big brown eyes, autumn leaves in the background enchanting,immortal,fluffy, shiny mane,Petals,fairyism,unreal engine 5 and Octane Render,highly detailed, photorealistic, cinematic, natural colors.",

|

| 215 |

+

"The image features an intricately designed eye set against a circular backdrop adorned with ornate swirl patterns that evoke both realism and surrealism. At the center of attention is a strikingly vivid blue iris surrounded by delicate veins radiating outward from the pupil to create depth and intensity. The eyelashes are long and dark, casting subtle shadows on the skin around them which appears smooth yet slightly textured as if aged or weathered over time.\n\nAbove the eye, there's a stone-like structure resembling part of classical architecture, adding layers of mystery and timeless elegance to the composition. This architectural element contrasts sharply but harmoniously with the organic curves surrounding it. Below the eye lies another decorative motif reminiscent of baroque artistry, further enhancing the overall sense of eternity encapsulated within each meticulously crafted detail. \n\nOverall, the atmosphere exudes a mysterious aura intertwined seamlessly with elements suggesting timelessness, achieved through the juxtaposition of realistic textures and surreal artistic flourishes. Each component\u2014from the intricate designs framing the eye to the ancient-looking stone piece above\u2014contributes uniquely towards creating a visually captivating tableau imbued with enigmatic allure.",

|

| 216 |

+

],

|

| 217 |

+

inputs=prompt_input,

|

| 218 |

+

)

|

| 219 |

+

|

| 220 |

+

understanding_button.click(

|

| 221 |

+

multimodal_understanding,

|

| 222 |

+

inputs=[image_input, question_input, und_seed_input, top_p, temperature],

|

| 223 |

+

outputs=understanding_output

|

| 224 |

+

)

|

| 225 |

+

|

| 226 |

+

generation_button.click(

|

| 227 |

+

fn=generate_image,

|

| 228 |

+

inputs=[prompt_input, seed_input, cfg_weight_input],

|

| 229 |

+

outputs=image_output

|

| 230 |

+

)

|

| 231 |

+

|

| 232 |

+

demo.launch(share=True)

|

doge.png

ADDED

equation.png

ADDED

requirements.txt

ADDED

@@ -0,0 +1,8 @@

+accelerate
+diffusers
+gradio
+numpy
+torch
+safetensors
+transformers
+git+https://github.com/deepseek-ai/Janus