Clémentine

committed on

Commit

•

7c5b7bd

1

Parent(s):

a146f0f

init

This view is limited to 50 files because it contains too many changes.

- .gitattributes +1 -14

- README.md +11 -20

- assets/images/ifeval_score_per_model_type.png +0 -0

- assets/images/math_fn_gsm8k.png +0 -0

- assets/images/math_score_per_model_type.png +0 -0

- assets/images/normalized_vs_raw_scores.png +0 -0

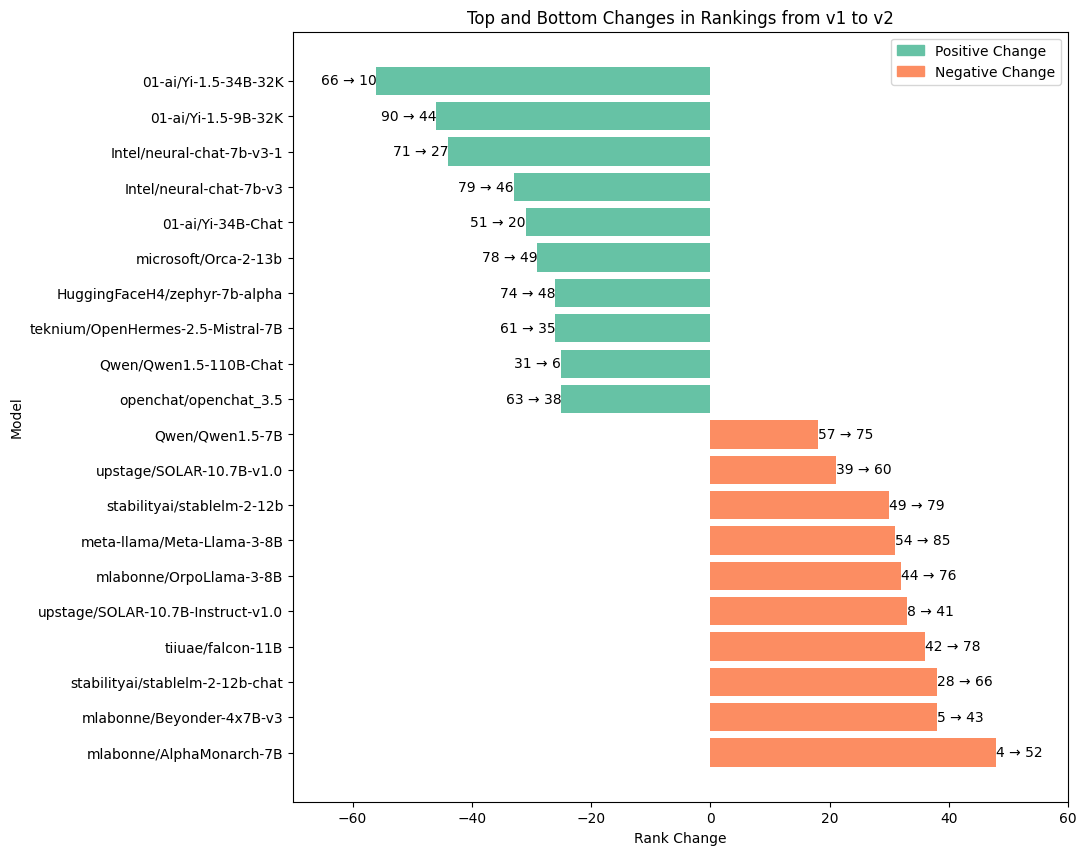

- assets/images/ranking_top10_bottom10.png +0 -0

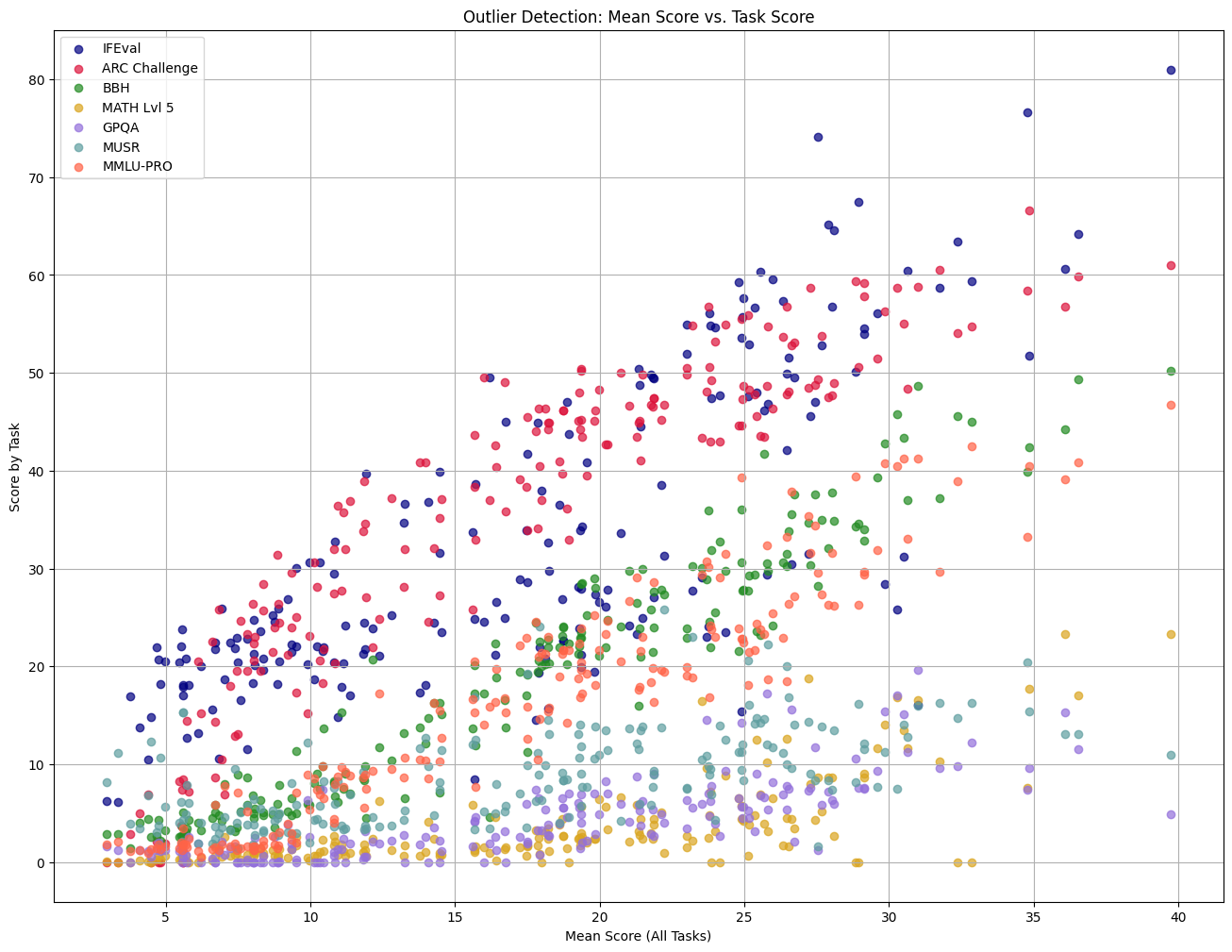

- assets/images/task_vs_mean.png +0 -0

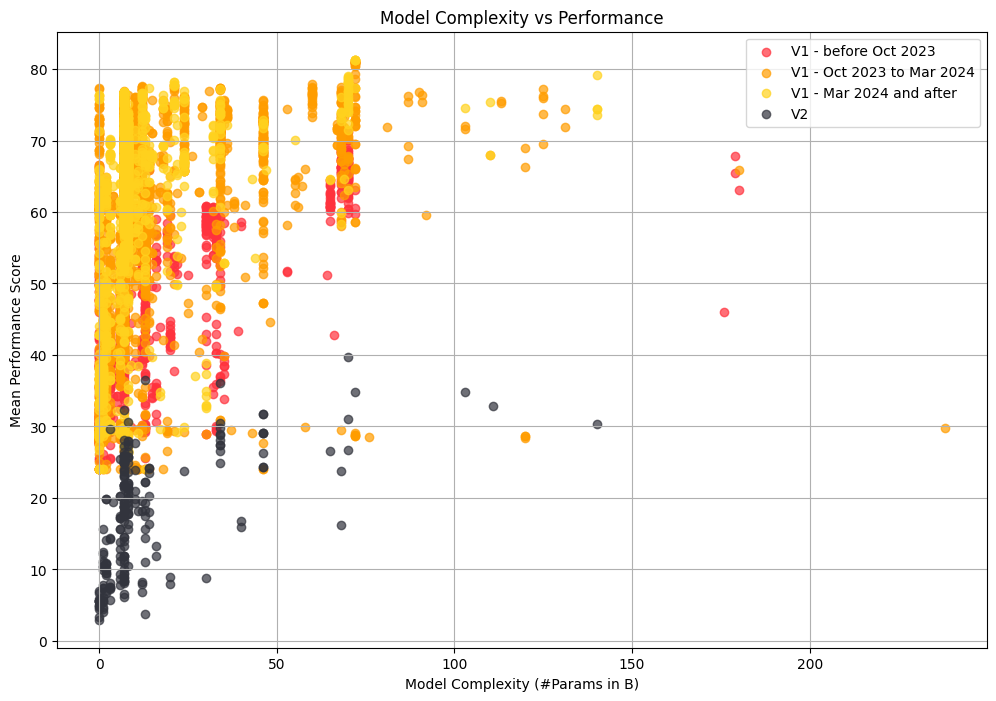

- assets/images/timewise_analysis_full.png +0 -0

- assets/images/timewise_analysis_light.png +0 -0

- assets/images/v2_correlation_heatmap.png +0 -0

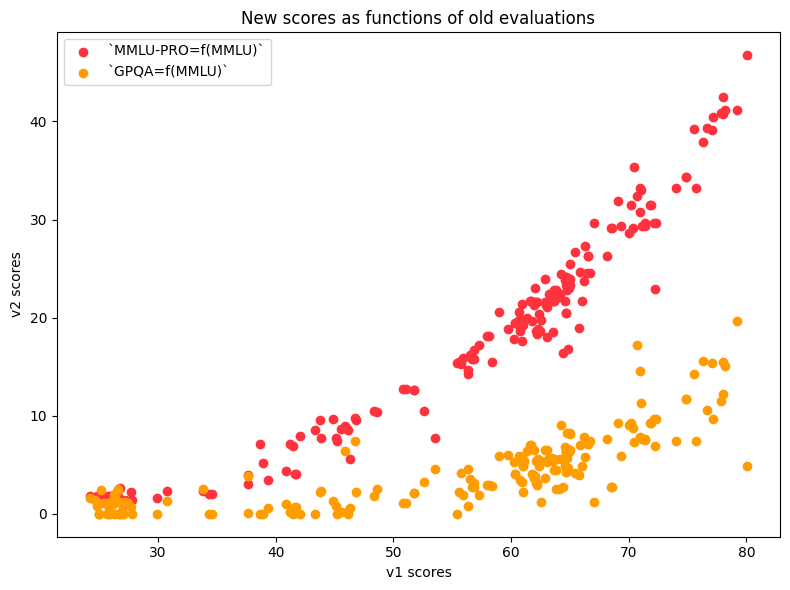

- assets/images/v2_fn_of_mmlu.png +0 -0

- dist/assets/images/ifeval_score_per_model_type.png +0 -0

- dist/assets/images/math_fn_gsm8k.png +0 -0

- dist/assets/images/math_score_per_model_type.png +0 -0

- dist/assets/images/normalized_vs_raw_scores.png +0 -0

- dist/assets/images/ranking_top10_bottom10.png +0 -0

- dist/assets/images/task_vs_mean.png +0 -0

- dist/assets/images/timewise_analysis_full.png +0 -0

- dist/assets/images/timewise_analysis_light.png +0 -0

- dist/assets/images/v2_correlation_heatmap.png +0 -0

- dist/assets/images/v2_fn_of_mmlu.png +0 -0

- dist/bibliography.bib +334 -0

- dist/distill.bundle.js +0 -0

- dist/distill.bundle.js.map +0 -0

- dist/index.html +166 -0

- dist/main.bundle.js +2 -0

- dist/main.bundle.js.map +1 -0

- dist/style.css +259 -0

- index.html +0 -435

- node_modules/.bin/acorn +1 -0

- node_modules/.bin/ansi-html +1 -0

- node_modules/.bin/browserslist +1 -0

- node_modules/.bin/cssesc +1 -0

- node_modules/.bin/envinfo +1 -0

- node_modules/.bin/flat +1 -0

- node_modules/.bin/glob +1 -0

- node_modules/.bin/he +1 -0

- node_modules/.bin/html-minifier-terser +1 -0

- node_modules/.bin/import-local-fixture +1 -0

- node_modules/.bin/is-docker +1 -0

- node_modules/.bin/is-inside-container +1 -0

- node_modules/.bin/jsesc +1 -0

- node_modules/.bin/json5 +1 -0

- node_modules/.bin/mime +1 -0

- node_modules/.bin/multicast-dns +1 -0

- node_modules/.bin/nanoid +1 -0

- node_modules/.bin/opener +1 -0

- node_modules/.bin/parser +1 -0

- node_modules/.bin/regjsparser +1 -0

.gitattributes

CHANGED

|

@@ -32,17 +32,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 32 |

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

-

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

-

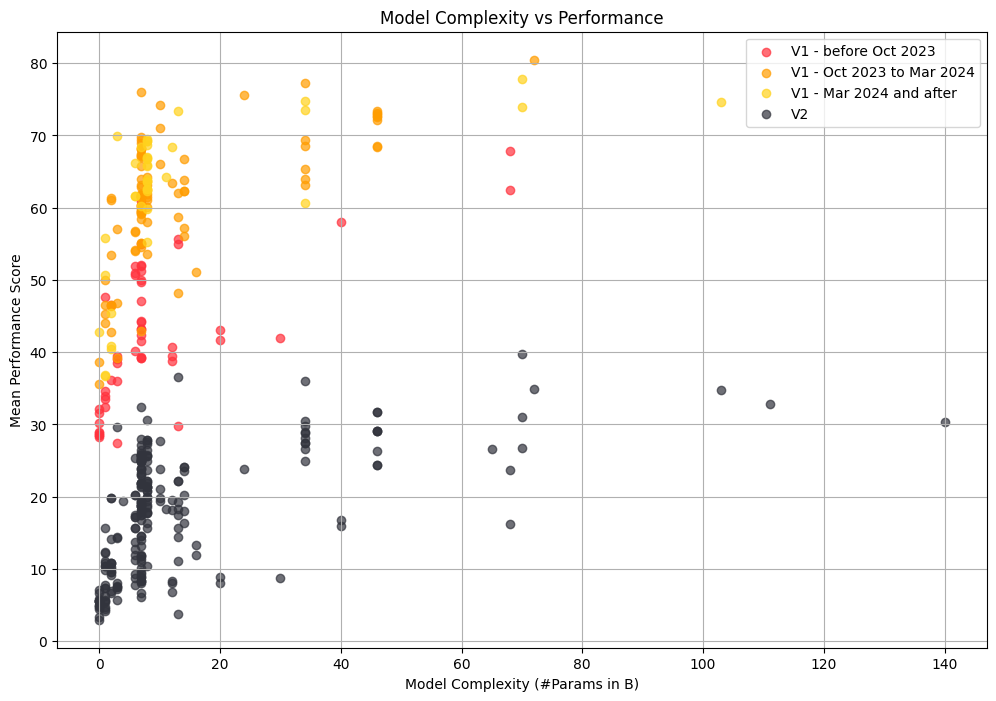

static/images/steve.webm filter=lfs diff=lfs merge=lfs -text

|

| 37 |

-

static/videos/blueshirt.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 38 |

-

static/videos/chair-tp.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 39 |

-

static/videos/coffee.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 40 |

-

static/videos/dollyzoom-depth.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 41 |

-

static/videos/dollyzoom.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 42 |

-

static/videos/mask.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 43 |

-

static/videos/matting.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 44 |

-

static/videos/replay.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 45 |

-

static/videos/shiba.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 46 |

-

static/videos/steve.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 47 |

-

static/videos/teaser.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 48 |

-

static/videos/toby.mp4 filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 32 |

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|
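As a rough illustration of what the rules visible in this hunk do, the sketch below approximates gitattributes pattern matching with Python's `fnmatch`. This is an assumption made for illustration: Git's real matcher follows gitignore-style rules that differ in details (for example `**` and directory handling), and the file contains further rules above line 32 that are not shown here. The helper name `is_lfs_tracked` and the sample paths are hypothetical.

```python
from fnmatch import fnmatch

# Patterns on new-side lines 32-35 of .gitattributes in this hunk.
# Rules above line 32 are not visible in the diff and are NOT modelled here.
LFS_PATTERNS = ["*.xz", "*.zip", "*.zst", "*tfevents*"]


def is_lfs_tracked(path: str) -> bool:
    """Approximate check: does the file's base name match any listed pattern?"""
    name = path.rsplit("/", 1)[-1]
    return any(fnmatch(name, pattern) for pattern in LFS_PATTERNS)


if __name__ == "__main__":
    samples = [
        "archive.zip",
        "logs/events.out.tfevents.123",
        "static/videos/coffee.mp4",
    ]
    for sample in samples:
        print(sample, is_lfs_tracked(sample))
```

Under these four patterns alone, the explicit `static/videos/*.mp4` entries removed by this commit would no longer match anything; whether an earlier rule in the file still covers them cannot be told from this hunk.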

README.md

CHANGED

|

@@ -1,25 +1,16 @@

|

|

| 1 |

---

|

| 2 |

-

title:

|

| 3 |

-

emoji:

|

| 4 |

-

colorFrom:

|

| 5 |

-

colorTo:

|

| 6 |

sdk: static

|

| 7 |

pinned: false

|

| 8 |

---

|

| 9 |

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

| 14 |

-

If you find Nerfies useful for your work please cite:

|

| 15 |

-

```

|

| 16 |

-

@article{park2021nerfies

|

| 17 |

-

author = {Park, Keunhong and Sinha, Utkarsh and Barron, Jonathan T. and Bouaziz, Sofien and Goldman, Dan B and Seitz, Steven M. and Martin-Brualla, Ricardo},

|

| 18 |

-

title = {Nerfies: Deformable Neural Radiance Fields},

|

| 19 |

-

journal = {ICCV},

|

| 20 |

-

year = {2021},

|

| 21 |

-

}

|

| 22 |

-

```

|

| 23 |

-

|

| 24 |

-

# Website License

|

| 25 |

-

<a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by-sa/4.0/88x31.png" /></a><br />This work is licensed under a <a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/">Creative Commons Attribution-ShareAlike 4.0 International License</a>.

|

|

|

|

| 1 |

---

|

| 2 |

+

title: 'Open LLM Leaderboard v2: '

|

| 3 |

+

emoji: 🍷

|

| 4 |

+

colorFrom: pink

|

| 5 |

+

colorTo: red

|

| 6 |

sdk: static

|

| 7 |

pinned: false

|

| 8 |

+

header: mini

|

| 9 |

+

app_file: dist/index.html

|

| 10 |

+

#thumbnail: https://huggingface.co/spaces/open-llm-leaderboard/blogpost-v2/resolve/main/screenshot.jpeg

|

| 11 |

---

|

| 12 |

|

| 13 |

+

- `npm install`

|

| 14 |

+

- `npm run dev`

|

| 15 |

+

- change in src, then `npm run build`

|

| 16 |

+

- commit dist

|
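The four README steps above can be sketched as a small dry-run helper. This is only an illustration under stated assumptions: the `git add dist` and `git commit` commands are a guess at what "commit dist" means, `npm run dev` is left out because it is presumably a long-running development server, and the names `release_steps` and `run_steps` are hypothetical.

```python
import subprocess
from typing import List


def release_steps(commit_message: str = "rebuild dist") -> List[List[str]]:
    """Commands for the README workflow: install, build, then commit dist."""
    return [
        ["npm", "install"],
        ["npm", "run", "build"],        # run after editing files under src
        ["git", "add", "dist"],         # assumption: "commit dist" means staging dist/
        ["git", "commit", "-m", commit_message],
    ]


def run_steps(steps: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for step in steps:
        print("$", " ".join(step))
        if not dry_run:
            subprocess.run(step, check=True)


if __name__ == "__main__":
    run_steps(release_steps())  # dry run: prints the commands without executing
```

Keeping the default as a dry run means the script can be inspected safely before it touches the working tree.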

assets/images/ifeval_score_per_model_type.png

ADDED

|

assets/images/math_fn_gsm8k.png

ADDED

|

assets/images/math_score_per_model_type.png

ADDED

|

assets/images/normalized_vs_raw_scores.png

ADDED

|

assets/images/ranking_top10_bottom10.png

ADDED

|

assets/images/task_vs_mean.png

ADDED

|

assets/images/timewise_analysis_full.png

ADDED

|

assets/images/timewise_analysis_light.png

ADDED

|

assets/images/v2_correlation_heatmap.png

ADDED

|

assets/images/v2_fn_of_mmlu.png

ADDED

|

dist/assets/images/ifeval_score_per_model_type.png

ADDED

|

dist/assets/images/math_fn_gsm8k.png

ADDED

|

dist/assets/images/math_score_per_model_type.png

ADDED

|

dist/assets/images/normalized_vs_raw_scores.png

ADDED

|

dist/assets/images/ranking_top10_bottom10.png

ADDED

|

dist/assets/images/task_vs_mean.png

ADDED

|

dist/assets/images/timewise_analysis_full.png

ADDED

|

dist/assets/images/timewise_analysis_light.png

ADDED

|

dist/assets/images/v2_correlation_heatmap.png

ADDED

|

dist/assets/images/v2_fn_of_mmlu.png

ADDED

|

dist/bibliography.bib

ADDED

|

@@ -0,0 +1,334 @@

| 1 |

+

@article{radford2019language,

|

| 2 |

+

title={Language Models are Unsupervised Multitask Learners},

|

| 3 |

+

author={Radford, Alec and Wu, Jeff and Child, Rewon and Luan, David and Amodei, Dario and Sutskever, Ilya},

|

| 4 |

+

year={2019}

|

| 5 |

+

}

|

| 6 |

+

@inproceedings{barbaresi-2021-trafilatura,

|

| 7 |

+

title = {Trafilatura: A Web Scraping Library and Command-Line Tool for Text Discovery and Extraction},

|

| 8 |

+

author = "Barbaresi, Adrien",

|

| 9 |

+

booktitle = "Proceedings of the Joint Conference of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing: System Demonstrations",

|

| 10 |

+

pages = "122--131",

|

| 11 |

+

publisher = "Association for Computational Linguistics",

|

| 12 |

+

url = "https://aclanthology.org/2021.acl-demo.15",

|

| 13 |

+

year = 2021,

|

| 14 |

+

}

|

| 15 |

+

@misc{penedo2023refinedweb,

|

| 16 |

+

title={The RefinedWeb Dataset for Falcon LLM: Outperforming Curated Corpora with Web Data, and Web Data Only},

|

| 17 |

+

author={Guilherme Penedo and Quentin Malartic and Daniel Hesslow and Ruxandra Cojocaru and Alessandro Cappelli and Hamza Alobeidli and Baptiste Pannier and Ebtesam Almazrouei and Julien Launay},

|

| 18 |

+

year={2023},

|

| 19 |

+

eprint={2306.01116},

|

| 20 |

+

archivePrefix={arXiv},

|

| 21 |

+

primaryClass={cs.CL}

|

| 22 |

+

}

|

| 23 |

+

@article{joulin2016fasttext,

|

| 24 |

+

title={FastText.zip: Compressing text classification models},

|

| 25 |

+

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

|

| 26 |

+

journal={arXiv preprint arXiv:1612.03651},

|

| 27 |

+

year={2016}

|

| 28 |

+

}

|

| 29 |

+

@article{joulin2016bag,

|

| 30 |

+

title={Bag of Tricks for Efficient Text Classification},

|

| 31 |

+

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

|

| 32 |

+

journal={arXiv preprint arXiv:1607.01759},

|

| 33 |

+

year={2016}

|

| 34 |

+

}

|

| 35 |

+

@misc{penedo2024datatrove,

|

| 36 |

+

author = {Penedo, Guilherme and Kydlíček, Hynek and Cappelli, Alessandro and Sasko, Mario and Wolf, Thomas},

|

| 37 |

+

title = {DataTrove: large scale data processing},

|

| 38 |

+

year = {2024},

|

| 39 |

+

publisher = {GitHub},

|

| 40 |

+

journal = {GitHub repository},

|

| 41 |

+

url = {https://github.com/huggingface/datatrove}

|

| 42 |

+

}

|

| 43 |

+

@misc{chiang2024chatbot,

|

| 44 |

+

title={Chatbot Arena: An Open Platform for Evaluating LLMs by Human Preference},

|

| 45 |

+

author={Wei-Lin Chiang and Lianmin Zheng and Ying Sheng and Anastasios Nikolas Angelopoulos and Tianle Li and Dacheng Li and Hao Zhang and Banghua Zhu and Michael Jordan and Joseph E. Gonzalez and Ion Stoica},

|

| 46 |

+

year={2024},

|

| 47 |

+

eprint={2403.04132},

|

| 48 |

+

archivePrefix={arXiv},

|

| 49 |

+

primaryClass={cs.AI}

|

| 50 |

+

}

|

| 51 |

+

@misc{rae2022scaling,

|

| 52 |

+

title={Scaling Language Models: Methods, Analysis & Insights from Training Gopher},

|

| 53 |

+

author={Jack W. Rae and Sebastian Borgeaud and Trevor Cai and Katie Millican and Jordan Hoffmann and Francis Song and John Aslanides and Sarah Henderson and Roman Ring and Susannah Young and Eliza Rutherford and Tom Hennigan and Jacob Menick and Albin Cassirer and Richard Powell and George van den Driessche and Lisa Anne Hendricks and Maribeth Rauh and Po-Sen Huang and Amelia Glaese and Johannes Welbl and Sumanth Dathathri and Saffron Huang and Jonathan Uesato and John Mellor and Irina Higgins and Antonia Creswell and Nat McAleese and Amy Wu and Erich Elsen and Siddhant Jayakumar and Elena Buchatskaya and David Budden and Esme Sutherland and Karen Simonyan and Michela Paganini and Laurent Sifre and Lena Martens and Xiang Lorraine Li and Adhiguna Kuncoro and Aida Nematzadeh and Elena Gribovskaya and Domenic Donato and Angeliki Lazaridou and Arthur Mensch and Jean-Baptiste Lespiau and Maria Tsimpoukelli and Nikolai Grigorev and Doug Fritz and Thibault Sottiaux and Mantas Pajarskas and Toby Pohlen and Zhitao Gong and Daniel Toyama and Cyprien de Masson d'Autume and Yujia Li and Tayfun Terzi and Vladimir Mikulik and Igor Babuschkin and Aidan Clark and Diego de Las Casas and Aurelia Guy and Chris Jones and James Bradbury and Matthew Johnson and Blake Hechtman and Laura Weidinger and Iason Gabriel and William Isaac and Ed Lockhart and Simon Osindero and Laura Rimell and Chris Dyer and Oriol Vinyals and Kareem Ayoub and Jeff Stanway and Lorrayne Bennett and Demis Hassabis and Koray Kavukcuoglu and Geoffrey Irving},

|

| 54 |

+

year={2022},

|

| 55 |

+

eprint={2112.11446},

|

| 56 |

+

archivePrefix={arXiv},

|

| 57 |

+

primaryClass={cs.CL}

|

| 58 |

+

}

|

| 59 |

+

@misc{lee2022deduplicating,

|

| 60 |

+

title={Deduplicating Training Data Makes Language Models Better},

|

| 61 |

+

author={Katherine Lee and Daphne Ippolito and Andrew Nystrom and Chiyuan Zhang and Douglas Eck and Chris Callison-Burch and Nicholas Carlini},

|

| 62 |

+

year={2022},

|

| 63 |

+

eprint={2107.06499},

|

| 64 |

+

archivePrefix={arXiv},

|

| 65 |

+

primaryClass={cs.CL}

|

| 66 |

+

}

|

| 67 |

+

@misc{carlini2023quantifying,

|

| 68 |

+

title={Quantifying Memorization Across Neural Language Models},

|

| 69 |

+

author={Nicholas Carlini and Daphne Ippolito and Matthew Jagielski and Katherine Lee and Florian Tramer and Chiyuan Zhang},

|

| 70 |

+

year={2023},

|

| 71 |

+

eprint={2202.07646},

|

| 72 |

+

archivePrefix={arXiv},

|

| 73 |

+

primaryClass={cs.LG}

|

| 74 |

+

}

|

| 75 |

+

@misc{raffel2023exploring,

|

| 76 |

+

title={Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer},

|

| 77 |

+

author={Colin Raffel and Noam Shazeer and Adam Roberts and Katherine Lee and Sharan Narang and Michael Matena and Yanqi Zhou and Wei Li and Peter J. Liu},

|

| 78 |

+

year={2023},

|

| 79 |

+

eprint={1910.10683},

|

| 80 |

+

archivePrefix={arXiv},

|

| 81 |

+

primaryClass={cs.LG}

|

| 82 |

+

}

|

| 83 |

+

@misc{touvron2023llama,

|

| 84 |

+

title={LLaMA: Open and Efficient Foundation Language Models},

|

| 85 |

+

author={Hugo Touvron and Thibaut Lavril and Gautier Izacard and Xavier Martinet and Marie-Anne Lachaux and Timothée Lacroix and Baptiste Rozière and Naman Goyal and Eric Hambro and Faisal Azhar and Aurelien Rodriguez and Armand Joulin and Edouard Grave and Guillaume Lample},

|

| 86 |

+

year={2023},

|

| 87 |

+

eprint={2302.13971},

|

| 88 |

+

archivePrefix={arXiv},

|

| 89 |

+

primaryClass={cs.CL}

|

| 90 |

+

}

|

| 91 |

+

@article{dolma,

|

| 92 |

+

title = {Dolma: an Open Corpus of Three Trillion Tokens for Language Model Pretraining Research},

|

| 93 |

+

author={

|

| 94 |

+

Luca Soldaini and Rodney Kinney and Akshita Bhagia and Dustin Schwenk and David Atkinson and

|

| 95 |

+

Russell Authur and Ben Bogin and Khyathi Chandu and Jennifer Dumas and Yanai Elazar and

|

| 96 |

+

Valentin Hofmann and Ananya Harsh Jha and Sachin Kumar and Li Lucy and Xinxi Lyu and

|

| 97 |

+

Nathan Lambert and Ian Magnusson and Jacob Morrison and Niklas Muennighoff and Aakanksha Naik and

|

| 98 |

+

Crystal Nam and Matthew E. Peters and Abhilasha Ravichander and Kyle Richardson and Zejiang Shen and

|

| 99 |

+

Emma Strubell and Nishant Subramani and Oyvind Tafjord and Pete Walsh and Luke Zettlemoyer and

|

| 100 |

+

Noah A. Smith and Hannaneh Hajishirzi and Iz Beltagy and Dirk Groeneveld and Jesse Dodge and Kyle Lo

|

| 101 |

+

},

|

| 102 |

+

year = {2024},

|

| 103 |

+

journal={arXiv preprint},

|

| 104 |

+

}

|

| 105 |

+

@article{gao2020pile,

|

| 106 |

+

title={The {P}ile: An 800{GB} dataset of diverse text for language modeling},

|

| 107 |

+

author={Gao, Leo and Biderman, Stella and Black, Sid and Golding, Laurence and Hoppe, Travis and Foster, Charles and Phang, Jason and He, Horace and Thite, Anish and Nabeshima, Noa and others},

|

| 108 |

+

journal={arXiv preprint arXiv:2101.00027},

|

| 109 |

+

year={2020}

|

| 110 |

+

}

|

| 111 |

+

@misc{cerebras2023slimpajama,

|

| 112 |

+

author = {Soboleva, Daria and Al-Khateeb, Faisal and Myers, Robert and Steeves, Jacob R and Hestness, Joel and Dey, Nolan},

|

| 113 |

+

title = {SlimPajama: A 627B token cleaned and deduplicated version of RedPajama},

|

| 114 |

+

month = {June},

|

| 115 |

+

year = 2023,

|

| 116 |

+

url = {https://huggingface.co/datasets/cerebras/SlimPajama-627B},

|

| 117 |

+

}

|

| 118 |

+

@software{together2023redpajama,

|

| 119 |

+

author = {Together Computer},

|

| 120 |

+

title = {RedPajama: an Open Dataset for Training Large Language Models},

|

| 121 |

+

month = {October},

|

| 122 |

+

year = 2023,

|

| 123 |

+

url = {https://github.com/togethercomputer/RedPajama-Data}

|

| 124 |

+

}

|

| 125 |

+

@article{jaccard1912distribution,

|

| 126 |

+

title={The distribution of the flora in the alpine zone. 1},

|

| 127 |

+

author={Jaccard, Paul},

|

| 128 |

+

journal={New phytologist},

|

| 129 |

+

volume={11},

|

| 130 |

+

number={2},

|

| 131 |

+

pages={37--50},

|

| 132 |

+

year={1912},

|

| 133 |

+

publisher={Wiley Online Library}

|

| 134 |

+

}

|

| 135 |

+

@misc{albalak2024survey,

|

| 136 |

+

title={A Survey on Data Selection for Language Models},

|

| 137 |

+

author={Alon Albalak and Yanai Elazar and Sang Michael Xie and Shayne Longpre and Nathan Lambert and Xinyi Wang and Niklas Muennighoff and Bairu Hou and Liangming Pan and Haewon Jeong and Colin Raffel and Shiyu Chang and Tatsunori Hashimoto and William Yang Wang},

|

| 138 |

+

year={2024},

|

| 139 |

+

eprint={2402.16827},

|

| 140 |

+

archivePrefix={arXiv},

|

| 141 |

+

primaryClass={cs.CL}

|

| 142 |

+

}

|

| 143 |

+

@misc{longpre2023pretrainers,

|

| 144 |

+

title={A Pretrainer's Guide to Training Data: Measuring the Effects of Data Age, Domain Coverage, Quality, & Toxicity},

|

| 145 |

+

author={Shayne Longpre and Gregory Yauney and Emily Reif and Katherine Lee and Adam Roberts and Barret Zoph and Denny Zhou and Jason Wei and Kevin Robinson and David Mimno and Daphne Ippolito},

|

| 146 |

+

year={2023},

|

| 147 |

+

eprint={2305.13169},

|

| 148 |

+

archivePrefix={arXiv},

|

| 149 |

+

primaryClass={cs.CL}

|

| 150 |

+

}

|

| 151 |

+

@misc{wenzek2019ccnet,

|

| 152 |

+

title={CCNet: Extracting High Quality Monolingual Datasets from Web Crawl Data},

|

| 153 |

+

author={Guillaume Wenzek and Marie-Anne Lachaux and Alexis Conneau and Vishrav Chaudhary and Francisco Guzmán and Armand Joulin and Edouard Grave},

|

| 154 |

+

year={2019},

|

| 155 |

+

eprint={1911.00359},

|

| 156 |

+

archivePrefix={arXiv},

|

| 157 |

+

primaryClass={cs.CL}

|

| 158 |

+

}

|

| 159 |

+

@misc{soldaini2024dolma,

|

| 160 |

+

title={Dolma: an Open Corpus of Three Trillion Tokens for Language Model Pretraining Research},

|

| 161 |

+

author={Luca Soldaini and Rodney Kinney and Akshita Bhagia and Dustin Schwenk and David Atkinson and Russell Authur and Ben Bogin and Khyathi Chandu and Jennifer Dumas and Yanai Elazar and Valentin Hofmann and Ananya Harsh Jha and Sachin Kumar and Li Lucy and Xinxi Lyu and Nathan Lambert and Ian Magnusson and Jacob Morrison and Niklas Muennighoff and Aakanksha Naik and Crystal Nam and Matthew E. Peters and Abhilasha Ravichander and Kyle Richardson and Zejiang Shen and Emma Strubell and Nishant Subramani and Oyvind Tafjord and Pete Walsh and Luke Zettlemoyer and Noah A. Smith and Hannaneh Hajishirzi and Iz Beltagy and Dirk Groeneveld and Jesse Dodge and Kyle Lo},

|

| 162 |

+

year={2024},

|

| 163 |

+

eprint={2402.00159},

|

| 164 |

+

archivePrefix={arXiv},

|

| 165 |

+

primaryClass={cs.CL}

|

| 166 |

+

}

|

| 167 |

+

@misc{ouyang2022training,

|

| 168 |

+

title={Training language models to follow instructions with human feedback},

|

| 169 |

+

author={Long Ouyang and Jeff Wu and Xu Jiang and Diogo Almeida and Carroll L. Wainwright and Pamela Mishkin and Chong Zhang and Sandhini Agarwal and Katarina Slama and Alex Ray and John Schulman and Jacob Hilton and Fraser Kelton and Luke Miller and Maddie Simens and Amanda Askell and Peter Welinder and Paul Christiano and Jan Leike and Ryan Lowe},

|

| 170 |

+

year={2022},

|

| 171 |

+

eprint={2203.02155},

|

| 172 |

+

archivePrefix={arXiv},

|

| 173 |

+

primaryClass={cs.CL}

|

| 174 |

+

}

|

| 175 |

+

@misc{hoffmann2022training,

|

| 176 |

+

title={Training Compute-Optimal Large Language Models},

|

| 177 |

+

author={Jordan Hoffmann and Sebastian Borgeaud and Arthur Mensch and Elena Buchatskaya and Trevor Cai and Eliza Rutherford and Diego de Las Casas and Lisa Anne Hendricks and Johannes Welbl and Aidan Clark and Tom Hennigan and Eric Noland and Katie Millican and George van den Driessche and Bogdan Damoc and Aurelia Guy and Simon Osindero and Karen Simonyan and Erich Elsen and Jack W. Rae and Oriol Vinyals and Laurent Sifre},

|

| 178 |

+

year={2022},

|

| 179 |

+

eprint={2203.15556},

|

| 180 |

+

archivePrefix={arXiv},

|

| 181 |

+

primaryClass={cs.CL}

|

| 182 |

+

}

|

| 183 |

+

@misc{muennighoff2023scaling,

|

| 184 |

+

title={Scaling Data-Constrained Language Models},

|

| 185 |

+

author={Niklas Muennighoff and Alexander M. Rush and Boaz Barak and Teven Le Scao and Aleksandra Piktus and Nouamane Tazi and Sampo Pyysalo and Thomas Wolf and Colin Raffel},

|

| 186 |

+

year={2023},

|

| 187 |

+

eprint={2305.16264},

|

| 188 |

+

archivePrefix={arXiv},

|

| 189 |

+

primaryClass={cs.CL}

|

| 190 |

+

}

|

| 191 |

+

@misc{hernandez2022scaling,

|

| 192 |

+

title={Scaling Laws and Interpretability of Learning from Repeated Data},

|

| 193 |

+

author={Danny Hernandez and Tom Brown and Tom Conerly and Nova DasSarma and Dawn Drain and Sheer El-Showk and Nelson Elhage and Zac Hatfield-Dodds and Tom Henighan and Tristan Hume and Scott Johnston and Ben Mann and Chris Olah and Catherine Olsson and Dario Amodei and Nicholas Joseph and Jared Kaplan and Sam McCandlish},

|

| 194 |

+

year={2022},

|

| 195 |

+

eprint={2205.10487},

|

| 196 |

+

archivePrefix={arXiv},

|

| 197 |

+

primaryClass={cs.LG}

|

| 198 |

+

}

|

| 199 |

+

@article{llama3modelcard,

|

| 200 |

+

|

| 201 |

+

title={Llama 3 Model Card},

|

| 202 |

+

|

| 203 |

+

author={AI@Meta},

|

| 204 |

+

|

| 205 |

+

year={2024},

|

| 206 |

+

|

| 207 |

+

url = {https://github.com/meta-llama/llama3/blob/main/MODEL_CARD.md}

|

| 208 |

+

|

| 209 |

+

}

|

| 210 |

+

@misc{jiang2024mixtral,

|

| 211 |

+

title={Mixtral of Experts},

|

| 212 |

+

author={Albert Q. Jiang and Alexandre Sablayrolles and Antoine Roux and Arthur Mensch and Blanche Savary and Chris Bamford and Devendra Singh Chaplot and Diego de las Casas and Emma Bou Hanna and Florian Bressand and Gianna Lengyel and Guillaume Bour and Guillaume Lample and Lélio Renard Lavaud and Lucile Saulnier and Marie-Anne Lachaux and Pierre Stock and Sandeep Subramanian and Sophia Yang and Szymon Antoniak and Teven Le Scao and Théophile Gervet and Thibaut Lavril and Thomas Wang and Timothée Lacroix and William El Sayed},

|

| 213 |

+

year={2024},

|

| 214 |

+

eprint={2401.04088},

|

| 215 |

+

archivePrefix={arXiv},

|

| 216 |

+

primaryClass={cs.LG}

|

| 217 |

+

}

|

| 218 |

+

@article{yuan2024self,

|

| 219 |

+

title={Self-rewarding language models},

|

| 220 |

+

author={Yuan, Weizhe and Pang, Richard Yuanzhe and Cho, Kyunghyun and Sukhbaatar, Sainbayar and Xu, Jing and Weston, Jason},

|

| 221 |

+

journal={arXiv preprint arXiv:2401.10020},

|

| 222 |

+

year={2024}

|

| 223 |

+

}

|

| 224 |

+

@article{verga2024replacing,

|

| 225 |

+

title={Replacing Judges with Juries: Evaluating LLM Generations with a Panel of Diverse Models},

|

| 226 |

+

author={Verga, Pat and Hofstatter, Sebastian and Althammer, Sophia and Su, Yixuan and Piktus, Aleksandra and Arkhangorodsky, Arkady and Xu, Minjie and White, Naomi and Lewis, Patrick},

|

| 227 |

+

journal={arXiv preprint arXiv:2404.18796},

|

| 228 |

+

year={2024}

|

| 229 |

+

}

|

| 230 |

+

@article{abdin2024phi,

|

| 231 |

+

title={Phi-3 technical report: A highly capable language model locally on your phone},

|

| 232 |

+

author={Abdin, Marah and Jacobs, Sam Ade and Awan, Ammar Ahmad and Aneja, Jyoti and Awadallah, Ahmed and Awadalla, Hany and Bach, Nguyen and Bahree, Amit and Bakhtiari, Arash and Behl, Harkirat and others},

|

| 233 |

+

journal={arXiv preprint arXiv:2404.14219},

|

| 234 |

+

year={2024}

|

| 235 |

+

}

|

| 236 |

+

@misc{meta2024responsible,

|

| 237 |

+

title = {Our responsible approach to Meta AI and Meta Llama 3},

|

| 238 |

+

author = {Meta},

|

| 239 |

+

year = {2024},

|

| 240 |

+

url = {https://ai.meta.com/blog/meta-llama-3-meta-ai-responsibility/},

|

| 241 |

+

note = {Accessed: 2024-05-31}

|

| 242 |

+

}

|

| 243 |

+

@inproceedings{talmor-etal-2019-commonsenseqa,

|

| 244 |

+

title = "CommonsenseQA: A Question Answering Challenge Targeting Commonsense Knowledge",

|

| 245 |

+

author = "Talmor, Alon and

|

| 246 |

+

Herzig, Jonathan and

|

| 247 |

+

Lourie, Nicholas and

|

| 248 |

+

Berant, Jonathan",

|

| 249 |

+

booktitle = "Proceedings of the 2019 Conference of the North {A}merican Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers)",

|

| 250 |

+

month = jun,

|

| 251 |

+

year = "2019",

|

| 252 |

+

address = "Minneapolis, Minnesota",

|

| 253 |

+

publisher = "Association for Computational Linguistics",

|

| 254 |

+

url = "https://aclanthology.org/N19-1421",

|

| 255 |

+

doi = "10.18653/v1/N19-1421",

|

| 256 |

+

pages = "4149--4158",

|

| 257 |

+

archivePrefix = "arXiv",

|

| 258 |

+

eprint = "1811.00937",

|

| 259 |

+

primaryClass = "cs",

|

| 260 |

+

}

|

| 261 |

+

@inproceedings{zellers-etal-2019-hellaswag,

|

| 262 |

+

title = "HellaSwag: Can a Machine Really Finish Your Sentence?",

|

| 263 |

+

author = "Zellers, Rowan and

|

| 264 |

+

Holtzman, Ari and

|

| 265 |

+

Bisk, Yonatan and

|

| 266 |

+

Farhadi, Ali and

|

| 267 |

+

Choi, Yejin",

|

| 268 |

+

editor = "Korhonen, Anna and

|

| 269 |

+

Traum, David and

|

| 270 |

+

M{\`a}rquez, Llu{\'\i}s",

|

| 271 |

+

booktitle = "Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics",

|

| 272 |

+

month = jul,

|

| 273 |

+

year = "2019",

|

| 274 |

+

address = "Florence, Italy",

|

| 275 |

+

publisher = "Association for Computational Linguistics",

|

| 276 |

+

url = "https://aclanthology.org/P19-1472",

|

| 277 |

+

doi = "10.18653/v1/P19-1472",

|

| 278 |

+

pages = "4791--4800",

|

| 279 |

+

abstract = "Recent work by Zellers et al. (2018) introduced a new task of commonsense natural language inference: given an event description such as {``}A woman sits at a piano,{''} a machine must select the most likely followup: {``}She sets her fingers on the keys.{''} With the introduction of BERT, near human-level performance was reached. Does this mean that machines can perform human level commonsense inference? In this paper, we show that commonsense inference still proves difficult for even state-of-the-art models, by presenting HellaSwag, a new challenge dataset. Though its questions are trivial for humans ({\textgreater}95{\%} accuracy), state-of-the-art models struggle ({\textless}48{\%}). We achieve this via Adversarial Filtering (AF), a data collection paradigm wherein a series of discriminators iteratively select an adversarial set of machine-generated wrong answers. AF proves to be surprisingly robust. The key insight is to scale up the length and complexity of the dataset examples towards a critical {`}Goldilocks{'} zone wherein generated text is ridiculous to humans, yet often misclassified by state-of-the-art models. Our construction of HellaSwag, and its resulting difficulty, sheds light on the inner workings of deep pretrained models. More broadly, it suggests a new path forward for NLP research, in which benchmarks co-evolve with the evolving state-of-the-art in an adversarial way, so as to present ever-harder challenges.",

|

| 280 |

+

}

|

| 281 |

+

@inproceedings{OpenBookQA2018,

|

| 282 |

+

title={Can a Suit of Armor Conduct Electricity? A New Dataset for Open Book Question Answering},

|

| 283 |

+

author={Todor Mihaylov and Peter Clark and Tushar Khot and Ashish Sabharwal},

|

| 284 |

+

booktitle={EMNLP},

|

| 285 |

+

year={2018}

|

| 286 |

+

}

|

| 287 |

+

@misc{bisk2019piqa,

|

| 288 |

+

title={PIQA: Reasoning about Physical Commonsense in Natural Language},

|

| 289 |

+

author={Yonatan Bisk and Rowan Zellers and Ronan Le Bras and Jianfeng Gao and Yejin Choi},

|

| 290 |

+

year={2019},

|

| 291 |

+

eprint={1911.11641},

|

| 292 |

+

archivePrefix={arXiv},

|

| 293 |

+

primaryClass={cs.CL}

|

| 294 |

+

}

|

| 295 |

+

@misc{sap2019socialiqa,

|

| 296 |

+

title={SocialIQA: Commonsense Reasoning about Social Interactions},

|

| 297 |

+

author={Maarten Sap and Hannah Rashkin and Derek Chen and Ronan LeBras and Yejin Choi},

|

| 298 |

+

year={2019},

|

| 299 |

+

eprint={1904.09728},

|

| 300 |

+

archivePrefix={arXiv},

|

| 301 |

+

primaryClass={cs.CL}

|

| 302 |

+

}

|

| 303 |

+

@misc{sakaguchi2019winogrande,

|

| 304 |

+

title={WinoGrande: An Adversarial Winograd Schema Challenge at Scale},

|

| 305 |

+

author={Keisuke Sakaguchi and Ronan Le Bras and Chandra Bhagavatula and Yejin Choi},

|

| 306 |

+

year={2019},

|

| 307 |

+

eprint={1907.10641},

|

| 308 |

+

archivePrefix={arXiv},

|

| 309 |

+

primaryClass={cs.CL}

|

| 310 |

+

}

|

| 311 |

+

@misc{clark2018think,

|

| 312 |

+

title={Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge},

|

| 313 |

+

author={Peter Clark and Isaac Cowhey and Oren Etzioni and Tushar Khot and Ashish Sabharwal and Carissa Schoenick and Oyvind Tafjord},

|

| 314 |

+

year={2018},

|

| 315 |

+

eprint={1803.05457},

|

| 316 |

+

archivePrefix={arXiv},

|

| 317 |

+

primaryClass={cs.AI}

|

| 318 |

+

}

|

| 319 |

+

@misc{hendrycks2021measuring,

|

| 320 |

+

title={Measuring Massive Multitask Language Understanding},

|

| 321 |

+

author={Dan Hendrycks and Collin Burns and Steven Basart and Andy Zou and Mantas Mazeika and Dawn Song and Jacob Steinhardt},

|

| 322 |

+

year={2021},

|

| 323 |

+

eprint={2009.03300},

|

| 324 |

+

archivePrefix={arXiv},

|

| 325 |

+

primaryClass={cs.CY}

|

| 326 |

+

}

|

| 327 |

+

@misc{mitchell2023measuring,

|

| 328 |

+

title={Measuring Data},

|

| 329 |

+

author={Margaret Mitchell and Alexandra Sasha Luccioni and Nathan Lambert and Marissa Gerchick and Angelina McMillan-Major and Ezinwanne Ozoani and Nazneen Rajani and Tristan Thrush and Yacine Jernite and Douwe Kiela},

|

| 330 |

+

year={2023},

|

| 331 |

+

eprint={2212.05129},

|

| 332 |

+

archivePrefix={arXiv},

|

| 333 |

+

primaryClass={cs.AI}

|

| 334 |

+

}

|

dist/distill.bundle.js

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

dist/distill.bundle.js.map

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

dist/index.html

ADDED

|

@@ -0,0 +1,166 @@

|

| 1 |

+

<!DOCTYPE html>

|

| 2 |

+

<html>

|

| 3 |

+

<head>

|

| 4 |

+

<script src="distill.bundle.js" type="module" fetchpriority="high" blocking></script>

|

| 5 |

+

<script src="main.bundle.js" type="module" fetchpriority="low" defer></script>

|

| 6 |

+

<meta name="viewport" content="width=device-width, initial-scale=1">

|

| 7 |

+

<meta charset="utf-8">

|

| 8 |

+

<base target="_blank">

|

| 9 |

+

<title>Open-LLM performances are plateauing, let’s make it steep again </title>

|

| 10 |

+

<link rel="stylesheet" href="style.css">

|

| 11 |

+

</head>

|

| 12 |

+

|

| 13 |

+

<body>

|

| 14 |

+

<d-front-matter>

|

| 15 |

+

<script id='distill-front-matter' type="text/json">{

|

| 16 |

+

"title": "Open-LLM performances are plateauing, let’s make it steep again ",

|

| 17 |

+

"description": "In this blog, we introduce the version 2 of the Open LLM Leaderboard, from the reasons for the change to the new evaluations and rankings.",

|

| 18 |

+

"published": "Jun 26, 2024",

|

| 19 |

+

"affiliation": {"name": "HuggingFace"},

|

| 20 |

+

"authors": [

|

| 21 |

+

{

|

| 22 |

+

"author":"Clementine Fourrier",

|

| 23 |

+

"authorURL":"https://huggingface.co/clefourrier"

|

| 24 |

+

},

|

| 25 |

+

{

|

| 26 |

+

"author":"Nathan Habib",

|

| 27 |

+

"authorURL":"https://huggingface.co/saylortwift"

|

| 28 |

+

},

|

| 29 |

+

{

|

| 30 |

+

"author":"Alina Lozovskaya",

|

| 31 |

+

"authorURL":"https://huggingface.co/alozowski"

|

| 32 |

+

},

|

| 33 |

+

{

|

| 34 |

+

"author":"Konrad Szafer",

|

| 35 |

+

"authorURL":"https://huggingface.co/KonradSzafer"

|

| 36 |

+

},

|

| 37 |

+

{

|

| 38 |

+

"author":"Thomas Wolf",

|

| 39 |

+

"authorURL":"https://huggingface.co/thomwolf"

|

| 40 |

+

}

|

| 41 |

+

],

|

| 42 |

+

"katex": {

|

| 43 |

+

"delimiters": [

|

| 44 |

+

{"left": "$$", "right": "$$", "display": false}

|

| 45 |

+

]

|

| 46 |

+

}

|

| 47 |

+

}

|

| 48 |

+

</script>

|

| 49 |

+

</d-front-matter>

|

| 50 |

+

<d-title>

|

| 51 |

+

<h1 class="l-page" style="text-align: center;">Open-LLM performances are plateauing, let’s make it steep again </h1>

|

| 52 |

+

<div id="title-plot" class="main-plot-container l-screen">

|

| 53 |

+

<figure>

|

| 54 |

+

<img src="assets/images/banner.png" alt="Banner">

|

| 55 |

+

</figure>

|

| 56 |

+

</div>

|

| 57 |

+

</d-title>

|

| 58 |

+

<d-byline></d-byline>

|

| 59 |

+

<d-article>

|

| 60 |

+

<d-contents>

|

| 61 |

+

</d-contents>

|

| 62 |

+

|

| 63 |

+

<p>Evaluating and comparing LLMs is hard. Our RLHF team realized this a year ago, when they wanted to reproduce and compare results from several published models.

|

| 64 |

+

It was a nearly impossible task: scores in papers or marketing releases were given without any reproducible code, sometimes doubtful but, in most cases,

|

| 65 |

+

just using optimized prompts or evaluation setups to give the models their best chance. They therefore decided to create a place where reference models would be

|

| 66 |

+

evaluated in the exact same setup (same questions, asked in the same order, …), to gather completely reproducible and comparable results; and that’s how the

|

| 67 |

+

Open LLM Leaderboard was born!</p>

|

| 68 |

+

|

| 69 |

+

<p> Following a series of highly visible model releases, it became a widely used resource in the ML community and beyond, visited by more than 2 million unique visitors over the last 10 months.</p>

|

| 70 |

+

|

| 71 |

+

<p> We estimate that around 300,000 community members use and collaborate on it monthly through submissions and discussions, usually to: </p>

|

| 72 |

+

<ul>

|

| 73 |

+

<li> Find state-of-the-art open source releases, as the leaderboard provides reproducible scores that separate marketing fluff from actual progress in the field.</li>

|

| 74 |

+

<li> Evaluate their own work, be it pretraining or finetuning, comparing methods in the open and to the best existing models, and earning public recognition for their work.</li>

|

| 75 |

+

</ul>

|

| 76 |

+

|

| 77 |

+

<p> However, success, both of the leaderboard and in the increasing performance of the models, brought challenges. After one intense year and a lot of community feedback, we thought it was time for an upgrade! Therefore, we’re introducing the Open LLM Leaderboard v2!</p>

|

| 78 |

+

|

| 79 |

+

<p>Here is why we think a new leaderboard was needed 👇</p>

|

| 80 |

+

|

| 81 |

+

<h2>Harder, better, faster, stronger: Introducing the Leaderboard v2</h2>

|

| 82 |

+

|

| 83 |

+

<h3>The need for a more challenging leaderboard</h3>

|

| 84 |

+

|

| 85 |

+

<p>

|

| 86 |

+

Over the past year, the benchmarks we were using got overused/saturated:

|

| 87 |

+

</p>

|

| 88 |

+

|

| 89 |

+

<ol>

|

| 90 |

+

<li>They became too easy for models. For instance on HellaSwag, MMLU and ARC, models are now reaching baseline human performance, a phenomenon called saturation.</li>

|

| 91 |

+

<li>Some newer models also showed signs of contamination. By this we mean that models were possibly trained on benchmark data or on data very similar to benchmark data. As such, some scores stopped reflecting the general performance of the model on the task being tested and instead reflected over-fitting to specific evaluation datasets. This was in particular the case for GSM8K and TruthfulQA, which were included in some instruction fine-tuning sets.</li>

|

| 92 |

+

<li>Some benchmarks contained errors: MMLU was recently investigated in depth by several groups, who surfaced mistakes in its responses and proposed new versions. Another example is that GSM8K used a specific end-of-generation token (`:`), which unfairly pushed down the performance of many verbose models.</li>

|

| 93 |

+

</ol>

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

</d-article>

|

| 97 |

+

|

| 98 |

+

<d-appendix>

|

| 99 |

+

<d-bibliography src="bibliography.bib"></d-bibliography>

|

| 100 |

+

</d-appendix>

|

| 101 |

+

|

| 102 |

+

<script>

|

| 103 |

+

const article = document.querySelector('d-article');

|

| 104 |

+

const toc = document.querySelector('d-contents');

|

| 105 |

+

if (toc) {

|

| 106 |

+

const headings = article.querySelectorAll('h2, h3, h4');

|

| 107 |

+

let ToC = `<nav role="navigation" class="l-text figcaption"><h3>Table of contents</h3>`;

|

| 108 |

+

let prevLevel = 0;

|

| 109 |

+

|

| 110 |

+

for (const el of headings) {

|

| 111 |

+

// should element be included in TOC?

|

| 112 |

+

const isInTitle = el.parentElement.tagName == 'D-TITLE';

|

| 113 |

+

const isException = el.getAttribute('no-toc');

|

| 114 |

+

if (isInTitle || isException) continue;

|

| 115 |

+

el.setAttribute('id', el.textContent.toLowerCase().replaceAll(" ", "_"))

|

| 116 |

+

const link = '<a target="_self" href="' + '#' + el.getAttribute('id') + '">' + el.textContent + '</a>';

|

| 117 |

+

|

| 118 |

+

const level = el.tagName === 'H2' ? 0 : (el.tagName === 'H3' ? 1 : 2);

|

| 119 |

+

while (prevLevel < level) {

|

| 120 |

+

ToC += '<ul>'

|

| 121 |

+

prevLevel++;

|

| 122 |

+

}

|

| 123 |

+

while (prevLevel > level) {

|

| 124 |

+

ToC += '</ul>'

|

| 125 |

+

prevLevel--;

|

| 126 |

+

}

|

| 127 |

+

if (level === 0)

|

| 128 |

+

ToC += '<div>' + link + '</div>';

|

| 129 |

+

else

|

| 130 |

+

ToC += '<li>' + link + '</li>';

|

| 131 |

+

}

|

| 132 |

+

|

| 133 |

+

while (prevLevel > 0) {

|

| 134 |

+

ToC += '</ul>'

|

| 135 |

+

prevLevel--;

|

| 136 |

+

}

|

| 137 |

+

ToC += '</nav>';

|

| 138 |

+

toc.innerHTML = ToC;

|

| 139 |

+

toc.setAttribute('prerendered', 'true');

|

| 140 |

+

const toc_links = document.querySelectorAll('d-contents > nav a');

|

| 141 |

+

|

| 142 |

+

window.addEventListener('scroll', (_event) => {

|

| 143 |

+

if (typeof (headings) != 'undefined' && headings != null && typeof (toc_links) != 'undefined' && toc_links != null) {

|

| 144 |

+

// Iterate backwards; on the first heading at or above the 50px mark from the top of the viewport, highlight its link and break

|

| 145 |

+

find_active: {

|

| 146 |

+

for (let i = headings.length - 1; i >= 0; i--) {

|

| 147 |

+

if (headings[i].getBoundingClientRect().top - 50 <= 0) {

|

| 148 |

+

if (!toc_links[i].classList.contains("active")) {

|

| 149 |

+

toc_links.forEach((link, _index) => {

|

| 150 |

+

link.classList.remove("active");

|

| 151 |

+

});

|

| 152 |

+

toc_links[i].classList.add('active');

|

| 153 |

+

}

|

| 154 |

+

break find_active;

|

| 155 |

+

}

|

| 156 |

+

}

|

| 157 |

+

toc_links.forEach((link, _index) => {

|

| 158 |

+

link.classList.remove("active");

|

| 159 |

+

});

|

| 160 |

+

}

|

| 161 |

+

}

|

| 162 |

+

});

|

| 163 |

+

}

|

| 164 |

+

</script>

|

| 165 |

+

</body>

|

| 166 |

+

</html>

|

dist/main.bundle.js

ADDED

|

@@ -0,0 +1,2 @@

|

| 1 |

+

document.addEventListener("DOMContentLoaded",(function(){console.log("DOMContentLoaded")}),{once:!0});

|

| 2 |

+

//# sourceMappingURL=main.bundle.js.map

|

dist/main.bundle.js.map

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"version":3,"file":"main.bundle.js","mappings":"AAAAA,SAASC,iBAAiB,oBAAoB,WAC1CC,QAAQC,IAAI,mBAChB,GAAG,CAAEC,MAAM","sources":["webpack://blogpost/./src/index.js"],"sourcesContent":["document.addEventListener(\"DOMContentLoaded\", () => {\n console.log(\"DOMContentLoaded\");\n}, { once: true });"],"names":["document","addEventListener","console","log","once"],"sourceRoot":""}

|

dist/style.css

ADDED

|

@@ -0,0 +1,259 @@

|

| 1 |

+

/* style.css */

|

| 2 |

+

/* Define colors */

|

| 3 |

+

:root {

|

| 4 |

+

--distill-gray: rgb(107, 114, 128);

|

| 5 |

+

--distill-gray-light: rgb(185, 185, 185);

|

| 6 |

+

--distill-gray-lighter: rgb(228, 228, 228);

|

| 7 |

+

--distill-gray-lightest: rgb(245, 245, 245);

|

| 8 |

+

--distill-blue: #007BFF;

|

| 9 |

+

}

|

| 10 |

+

|

| 11 |

+

/* Container for the controls */

|

| 12 |

+

[id^="plot-"] {

|

| 13 |

+

display: flex;

|

| 14 |

+

flex-direction: column;

|

| 15 |

+

align-items: center;

|

| 16 |

+

gap: 15px; /* Adjust the gap between controls as needed */

|

| 17 |

+

}

|

| 18 |

+

[id^="plot-"] figure {

|

| 19 |

+

margin-bottom: 0px;

|

| 20 |

+

margin-top: 0px;

|

| 21 |

+

padding: 0px;

|

| 22 |

+

}

|

| 23 |

+

|

| 24 |

+

.plotly_caption {

|

| 25 |

+

font-style: italic;

|

| 26 |

+

margin-top: 10px;

|

| 27 |

+

}

|

| 28 |

+

|

| 29 |

+

.plotly_controls {

|

| 30 |

+

display: flex;

|

| 31 |

+

flex-wrap: wrap;

|

| 32 |

+

flex-direction: row;

|

| 33 |

+

justify-content: center;

|

| 34 |

+

align-items: flex-start;

|

| 35 |

+

gap: 30px;

|

| 36 |

+

}

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

.plotly_input_container {

|

| 40 |

+

display: flex;

|

| 41 |

+

align-items: center;

|

| 42 |

+

flex-direction: column;

|

| 43 |

+

gap: 10px;

|

| 44 |

+

}

|

| 45 |

+

|

| 46 |

+

/* Style for the select dropdown */

|

| 47 |

+

.plotly_input_container > select {

|

| 48 |

+

padding: 2px 4px;

|

| 49 |

+

/* border: 1px solid #ccc; */

|

| 50 |

+

line-height: 1.5em;

|

| 51 |

+

text-align: center;

|

| 52 |

+

border-radius: 4px;

|

| 53 |

+

font-size: 12px;

|

| 54 |

+

background-color: var(--distill-gray-lightest);

|

| 55 |

+

outline: none;

|

| 56 |

+

}

|

| 57 |

+

|

| 58 |

+

/* Style for the range input */

|

| 59 |

+

|

| 60 |

+

.plotly_slider {

|

| 61 |

+

display: flex;

|

| 62 |

+

align-items: center;

|

| 63 |

+

gap: 10px;

|

| 64 |

+

}

|

| 65 |

+

|

| 66 |

+

.plotly_slider > input[type="range"] {

|

| 67 |

+

-webkit-appearance: none;

|

| 68 |

+

height: 2px;

|

| 69 |

+

background: var(--distill-gray-light);

|

| 70 |

+

border-radius: 5px;

|

| 71 |

+

outline: none;

|

| 72 |

+

}

|

| 73 |

+

|

| 74 |

+

.plotly_slider > span {

|

| 75 |

+

font-size: 14px;

|

| 76 |

+

line-height: 1.6em;

|

| 77 |

+

min-width: 16px;

|

| 78 |

+

}

|

| 79 |

+

|

| 80 |

+

.plotly_slider > input[type="range"]::-webkit-slider-thumb {

|

| 81 |

+

-webkit-appearance: none;

|

| 82 |

+

appearance: none;

|

| 83 |

+

width: 18px;

|

| 84 |

+

height: 18px;

|

| 85 |

+

border-radius: 50%;

|

| 86 |

+

background: var(--distill-blue);

|

| 87 |

+

cursor: pointer;

|

| 88 |

+

}

|

| 89 |

+

|

| 90 |

+

.plotly_slider > input[type="range"]::-moz-range-thumb {

|

| 91 |

+

width: 18px;

|

| 92 |