Upload 81 files

This view is limited to 50 files because it contains too many changes.


- .DS_Store +0 -0

- .gitattributes +24 -0

- .gitignore +170 -0

- LICENSE +21 -0

- OmniGen/__init__.py +4 -0

- OmniGen/__pycache__/__init__.cpython-310.pyc +0 -0

- OmniGen/__pycache__/model.cpython-310.pyc +0 -0

- OmniGen/__pycache__/pipeline.cpython-310.pyc +0 -0

- OmniGen/__pycache__/processor.cpython-310.pyc +0 -0

- OmniGen/__pycache__/scheduler.cpython-310.pyc +0 -0

- OmniGen/__pycache__/transformer.cpython-310.pyc +0 -0

- OmniGen/__pycache__/utils.cpython-310.pyc +0 -0

- OmniGen/model.py +468 -0

- OmniGen/pipeline.py +289 -0

- OmniGen/processor.py +335 -0

- OmniGen/scheduler.py +55 -0

- OmniGen/train_helper/__init__.py +2 -0

- OmniGen/train_helper/data.py +116 -0

- OmniGen/train_helper/loss.py +68 -0

- OmniGen/transformer.py +159 -0

- OmniGen/utils.py +110 -0

- README.md +93 -14

- app.py +359 -0

- docs/fine-tuning.md +172 -0

- docs/inference.md +96 -0

- imgs/.DS_Store +0 -0

- imgs/demo_cases.png +3 -0

- imgs/demo_cases/AI_Pioneers.jpg +0 -0

- imgs/demo_cases/edit.png +3 -0

- imgs/demo_cases/entity.png +3 -0

- imgs/demo_cases/reasoning.png +3 -0

- imgs/demo_cases/same_pose.png +3 -0

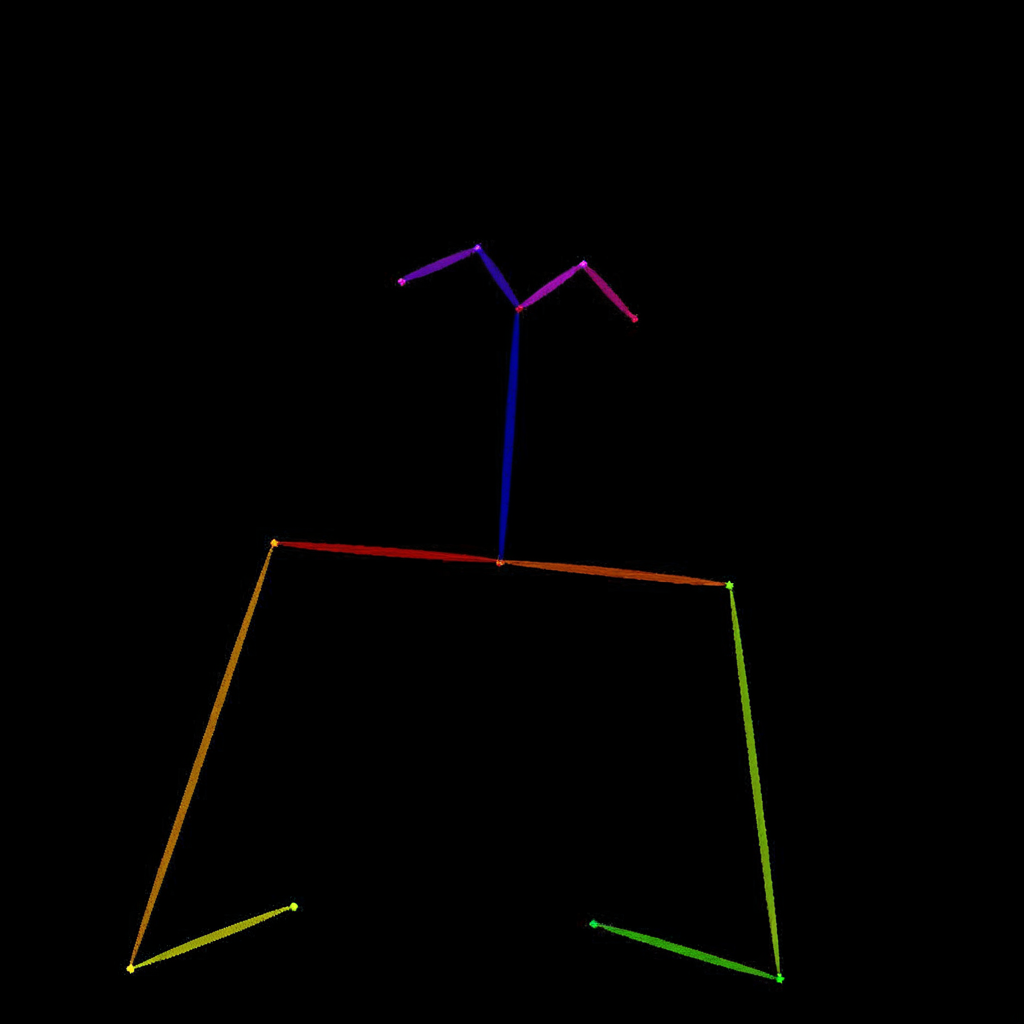

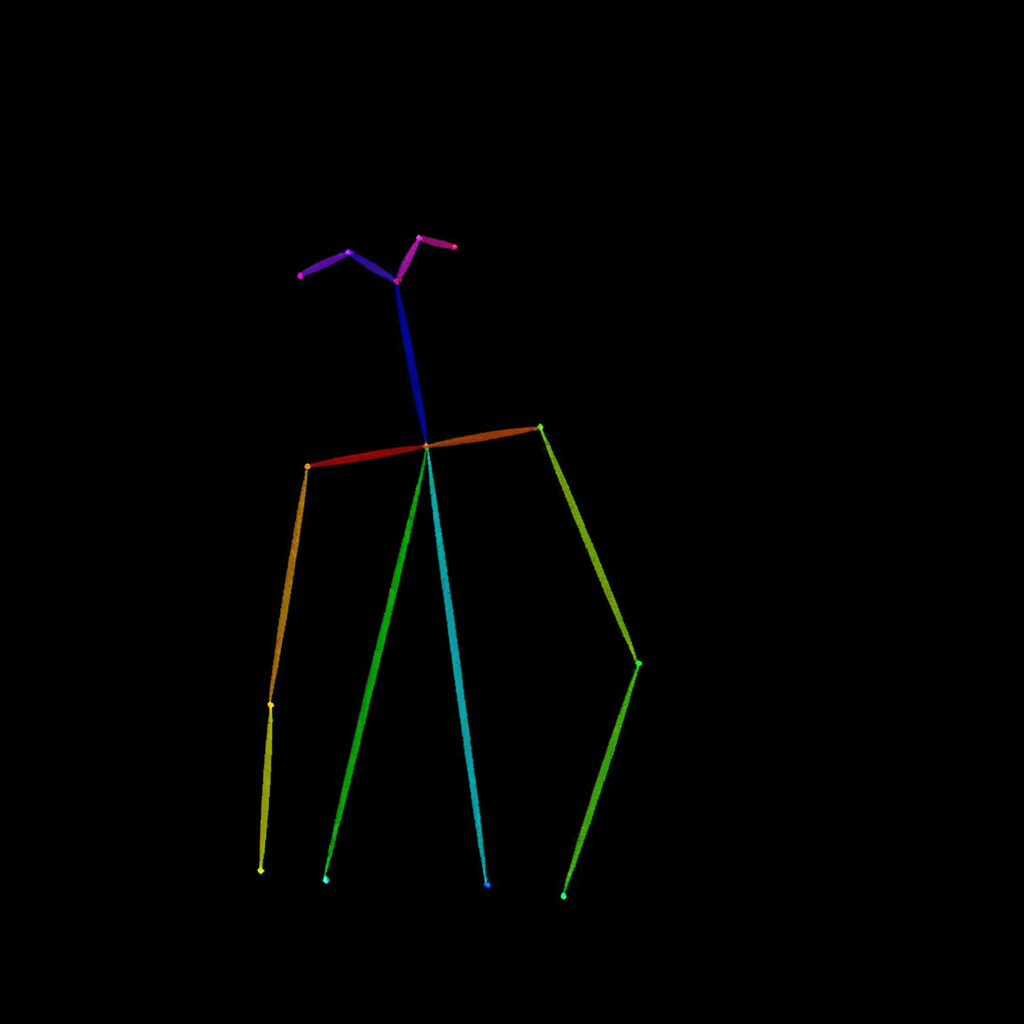

- imgs/demo_cases/skeletal.png +0 -0

- imgs/demo_cases/skeletal2img.png +3 -0

- imgs/demo_cases/t2i_woman_with_book.png +3 -0

- imgs/overall.jpg +3 -0

- imgs/referring.png +3 -0

- imgs/test_cases/1.jpg +3 -0

- imgs/test_cases/2.jpg +3 -0

- imgs/test_cases/3.jpg +3 -0

- imgs/test_cases/4.jpg +3 -0

- imgs/test_cases/Amanda.jpg +3 -0

- imgs/test_cases/control.jpg +3 -0

- imgs/test_cases/icl1.jpg +0 -0

- imgs/test_cases/icl2.jpg +0 -0

- imgs/test_cases/icl3.jpg +0 -0

- imgs/test_cases/lecun.png +0 -0

- imgs/test_cases/mckenna.jpg +3 -0

- imgs/test_cases/pose.png +0 -0

- imgs/test_cases/rose.jpg +0 -0

.DS_Store

ADDED

Binary file (6.15 kB).

.gitattributes

CHANGED

@@ -33,3 +33,27 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/edit.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/entity.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/reasoning.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/same_pose.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/skeletal2img.png filter=lfs diff=lfs merge=lfs -text
+imgs/demo_cases/t2i_woman_with_book.png filter=lfs diff=lfs merge=lfs -text
+imgs/overall.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/referring.png filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/1.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/2.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/3.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/4.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/Amanda.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/control.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/mckenna.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/two_man.jpg filter=lfs diff=lfs merge=lfs -text
+imgs/test_cases/woman.png filter=lfs diff=lfs merge=lfs -text
+toy_data/images/cat.png filter=lfs diff=lfs merge=lfs -text
+toy_data/images/dog2.jpeg filter=lfs diff=lfs merge=lfs -text
+toy_data/images/dog3.jpeg filter=lfs diff=lfs merge=lfs -text
+toy_data/images/dog4.jpeg filter=lfs diff=lfs merge=lfs -text
+toy_data/images/dog5.jpeg filter=lfs diff=lfs merge=lfs -text
+toy_data/images/walking.png filter=lfs diff=lfs merge=lfs -text

.gitignore

ADDED

@@ -0,0 +1,170 @@
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+share/python-wheels/
+*.egg-info/
+.installed.cfg
+*.egg
+MANIFEST
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.nox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+*.py,cover
+.hypothesis/
+.pytest_cache/
+cover/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+db.sqlite3
+db.sqlite3-journal
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+.pybuilder/
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# IPython
+profile_default/
+ipython_config.py
+
+# pyenv
+# For a library or package, you might want to ignore these files since the code is
+# intended to run in multiple environments; otherwise, check them in:
+# .python-version
+
+# pipenv
+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
+# However, in case of collaboration, if having platform-specific dependencies or dependencies
+# having no cross-platform support, pipenv may install dependencies that don't work, or not
+# install all needed dependencies.
+#Pipfile.lock
+
+# poetry
+# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.
+# This is especially recommended for binary packages to ensure reproducibility, and is more
+# commonly ignored for libraries.
+# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control
+#poetry.lock
+
+# pdm
+# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.
+#pdm.lock
+# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it
+# in version control.
+# https://pdm.fming.dev/latest/usage/project/#working-with-version-control
+.pdm.toml
+.pdm-python
+.pdm-build/
+
+# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm
+__pypackages__/
+
+# Celery stuff
+celerybeat-schedule
+celerybeat.pid
+
+# SageMath parsed files
+*.sage.py
+
+# Environments
+.env
+.venv
+env/
+venv/
+ENV/
+env.bak/
+venv.bak/
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+.dmypy.json
+dmypy.json
+
+# Pyre type checker
+.pyre/
+
+# pytype static type analyzer
+.pytype/
+
+# Cython debug symbols
+cython_debug/
+
+# PyCharm
+# JetBrains specific template is maintained in a separate JetBrains.gitignore that can
+# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore
+# and can be added to the global gitignore or merged into this file. For a more nuclear
+# option (not recommended) you can uncomment the following to ignore the entire idea folder.
+#.idea/
+
+# myfile
+.results/
+local.ipynb
+convert_to_safetensor.py
+ttt.ipynb
+imgs/ttt/
+*.bak

LICENSE

ADDED

@@ -0,0 +1,21 @@
+MIT License
+
+Copyright (c) 2024 VectorSpaceLab
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.

OmniGen/__init__.py

ADDED

@@ -0,0 +1,4 @@
+from .model import OmniGen
+from .processor import OmniGenProcessor
+from .scheduler import OmniGenScheduler
+from .pipeline import OmniGenPipeline

OmniGen/__pycache__/__init__.cpython-310.pyc

ADDED

Binary file (320 Bytes).

OmniGen/__pycache__/model.cpython-310.pyc

ADDED

Binary file (14.4 kB).

OmniGen/__pycache__/pipeline.cpython-310.pyc

ADDED

Binary file (8.89 kB).

OmniGen/__pycache__/processor.cpython-310.pyc

ADDED

Binary file (11.2 kB).

OmniGen/__pycache__/scheduler.cpython-310.pyc

ADDED

Binary file (2.74 kB).

OmniGen/__pycache__/transformer.cpython-310.pyc

ADDED

Binary file (3.94 kB).

OmniGen/__pycache__/utils.cpython-310.pyc

ADDED

Binary file (3.52 kB).

OmniGen/model.py

ADDED

@@ -0,0 +1,468 @@
+# The code is revised from DiT
+import os
+import torch
+import torch.nn as nn
+import numpy as np
+import math
+from typing import Dict
+import torch.nn.functional as F
+
+from diffusers.loaders import PeftAdapterMixin
+from timm.models.vision_transformer import PatchEmbed, Attention, Mlp
+from huggingface_hub import snapshot_download
+from safetensors.torch import load_file
+
+from OmniGen.transformer import Phi3Config, Phi3Transformer
+
+
+def modulate(x, shift, scale):
+    return x * (1 + scale.unsqueeze(1)) + shift.unsqueeze(1)
+
+
+class TimestepEmbedder(nn.Module):
+    """
+    Embeds scalar timesteps into vector representations.
+    """
+    def __init__(self, hidden_size, frequency_embedding_size=256):
+        super().__init__()
+        self.mlp = nn.Sequential(
+            nn.Linear(frequency_embedding_size, hidden_size, bias=True),
+            nn.SiLU(),
+            nn.Linear(hidden_size, hidden_size, bias=True),
+        )
+        self.frequency_embedding_size = frequency_embedding_size
+
+    @staticmethod
+    def timestep_embedding(t, dim, max_period=10000):
+        """
+        Create sinusoidal timestep embeddings.
+        :param t: a 1-D Tensor of N indices, one per batch element.
+                          These may be fractional.
+        :param dim: the dimension of the output.
+        :param max_period: controls the minimum frequency of the embeddings.
+        :return: an (N, D) Tensor of positional embeddings.
+        """
+        # https://github.com/openai/glide-text2im/blob/main/glide_text2im/nn.py
+        half = dim // 2
+        freqs = torch.exp(
+            -math.log(max_period) * torch.arange(start=0, end=half, dtype=torch.float32) / half
+        ).to(device=t.device)
+        args = t[:, None].float() * freqs[None]
+        embedding = torch.cat([torch.cos(args), torch.sin(args)], dim=-1)
+        if dim % 2:
+            embedding = torch.cat([embedding, torch.zeros_like(embedding[:, :1])], dim=-1)
+        return embedding
+
+    def forward(self, t, dtype=torch.float32):
+        t_freq = self.timestep_embedding(t, self.frequency_embedding_size).to(dtype)
+        t_emb = self.mlp(t_freq)
+        return t_emb
+
+
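`TimestepEmbedder.timestep_embedding` builds the sinusoidal features that the module's MLP then projects to `hidden_size`. The sketch below re-implements only the sinusoidal step, in plain Python and for a single scalar timestep, so the layout (cosines first, then sines, zero-padded for odd `dim`) is easy to inspect. It is an illustration under that simplification, not a drop-in replacement for the batched PyTorch code.

```python
import math
from typing import List


def timestep_embedding(t: float, dim: int, max_period: float = 10000.0) -> List[float]:
    """Single-timestep sketch of TimestepEmbedder.timestep_embedding (cosines first, then sines)."""
    half = dim // 2
    # Frequencies fall geometrically from 1 toward 1 / max_period across the `half` slots.
    freqs = [math.exp(-math.log(max_period) * i / half) for i in range(half)]
    args = [t * f for f in freqs]
    # Same order as torch.cat([torch.cos(args), torch.sin(args)], dim=-1).
    emb = [math.cos(a) for a in args] + [math.sin(a) for a in args]
    if dim % 2:
        emb.append(0.0)  # odd dims get one zero column, as in the original
    return emb


print(timestep_embedding(0.0, 6))  # [1.0, 1.0, 1.0, 0.0, 0.0, 0.0]
```

At t = 0 every cosine slot is 1 and every sine slot is 0. Because `t` may be fractional, the same formula also covers continuous timesteps.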

+class FinalLayer(nn.Module):
+    """
+    The final layer of DiT.
+    """
+    def __init__(self, hidden_size, patch_size, out_channels):
+        super().__init__()
+        self.norm_final = nn.LayerNorm(hidden_size, elementwise_affine=False, eps=1e-6)
+        self.linear = nn.Linear(hidden_size, patch_size * patch_size * out_channels, bias=True)
+        self.adaLN_modulation = nn.Sequential(
+            nn.SiLU(),
+            nn.Linear(hidden_size, 2 * hidden_size, bias=True)
+        )
+
+    def forward(self, x, c):
+        shift, scale = self.adaLN_modulation(c).chunk(2, dim=1)
+        x = modulate(self.norm_final(x), shift, scale)
+        x = self.linear(x)
+        return x
+
+
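`modulate` is the adaLN step that `FinalLayer` applies. A per-sample `shift` and `scale` of shape (N, D), computed from the conditioning vector `c`, is broadcast across all T tokens of `x`; that is the role of the `unsqueeze(1)` calls. The plain-Python sketch below handles one sample, given as a list of token vectors, to make the broadcasting explicit. It also shows why zeroing the last layer of `adaLN_modulation` (as `initialize_weights` does) makes the modulation start out as the identity.

```python
from typing import List

Matrix = List[List[float]]  # (tokens, hidden) for a single batch element


def modulate(x: Matrix, shift: List[float], scale: List[float]) -> Matrix:
    """Per-sample sketch of modulate(): the same shift/scale vector is applied to every token."""
    return [[xi * (1.0 + s) + b for xi, s, b in zip(token, scale, shift)] for token in x]


tokens = [[1.0, 2.0], [3.0, 4.0]]  # two tokens, hidden size 2
# shift = scale = 0, which is what a zero-initialized adaLN_modulation outputs: identity.
print(modulate(tokens, [0.0, 0.0], [0.0, 0.0]))  # [[1.0, 2.0], [3.0, 4.0]]
print(modulate(tokens, [0.5, -1.0], [1.0, 0.0]))  # [[2.5, 1.0], [6.5, 3.0]]
```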

+def get_2d_sincos_pos_embed(embed_dim, grid_size, cls_token=False, extra_tokens=0, interpolation_scale=1.0, base_size=1):
+    """
+    grid_size: int of the grid height and width return: pos_embed: [grid_size*grid_size, embed_dim] or
+    [1+grid_size*grid_size, embed_dim] (w/ or w/o cls_token)
+    """
+    if isinstance(grid_size, int):
+        grid_size = (grid_size, grid_size)
+
+    grid_h = np.arange(grid_size[0], dtype=np.float32) / (grid_size[0] / base_size) / interpolation_scale
+    grid_w = np.arange(grid_size[1], dtype=np.float32) / (grid_size[1] / base_size) / interpolation_scale
+    grid = np.meshgrid(grid_w, grid_h)  # here w goes first
+    grid = np.stack(grid, axis=0)
+
+    grid = grid.reshape([2, 1, grid_size[1], grid_size[0]])
+    pos_embed = get_2d_sincos_pos_embed_from_grid(embed_dim, grid)
+    if cls_token and extra_tokens > 0:
+        pos_embed = np.concatenate([np.zeros([extra_tokens, embed_dim]), pos_embed], axis=0)
+    return pos_embed
+
+
+def get_2d_sincos_pos_embed_from_grid(embed_dim, grid):
+    assert embed_dim % 2 == 0
+
+    # use half of dimensions to encode grid_h
+    emb_h = get_1d_sincos_pos_embed_from_grid(embed_dim // 2, grid[0])  # (H*W, D/2)
+    emb_w = get_1d_sincos_pos_embed_from_grid(embed_dim // 2, grid[1])  # (H*W, D/2)
+
+    emb = np.concatenate([emb_h, emb_w], axis=1)  # (H*W, D)
+    return emb
+
+
+def get_1d_sincos_pos_embed_from_grid(embed_dim, pos):
+    """
+    embed_dim: output dimension for each position
+    pos: a list of positions to be encoded: size (M,)
+    out: (M, D)
+    """
+    assert embed_dim % 2 == 0
+    omega = np.arange(embed_dim // 2, dtype=np.float64)
+    omega /= embed_dim / 2.
+    omega = 1. / 10000**omega  # (D/2,)
+
+    pos = pos.reshape(-1)  # (M,)
+    out = np.einsum('m,d->md', pos, omega)  # (M, D/2), outer product
+
+    emb_sin = np.sin(out)  # (M, D/2)
+    emb_cos = np.cos(out)  # (M, D/2)
+
+    emb = np.concatenate([emb_sin, emb_cos], axis=1)  # (M, D)
+    return emb
+
+
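The three functions above build a fixed 2D sine-cosine table. Each axis gets `embed_dim // 2` channels (sines, then cosines), and the two halves are concatenated per grid cell in row-major order. The sketch below reproduces that layout in plain Python for a square grid and ignores the float32 rounding of the NumPy version; the helper names are hypothetical. One detail is worth stating explicitly: `np.meshgrid(grid_w, grid_h)` puts w first, so `grid[0]` holds the column coordinate. The first half of each vector (named `emb_h` in the code) therefore encodes the column index.

```python
import math
from typing import List


def sincos_1d(embed_dim: int, pos: float) -> List[float]:
    """Mirror of get_1d_sincos_pos_embed_from_grid for one position: sines first, then cosines."""
    assert embed_dim % 2 == 0
    half = embed_dim // 2
    omega = [1.0 / 10000 ** (i / half) for i in range(half)]
    return [math.sin(pos * w) for w in omega] + [math.cos(pos * w) for w in omega]


def sincos_2d(embed_dim: int, grid_size: int, base_size: float = 1.0,
              interpolation_scale: float = 1.0) -> List[List[float]]:
    """Row-major (H*W, embed_dim) table: first half from the column coordinate, second from the row."""
    assert embed_dim % 2 == 0
    # Same scaling as get_2d_sincos_pos_embed: index / (grid_size / base_size) / interpolation_scale.
    coords = [i / (grid_size / base_size) / interpolation_scale for i in range(grid_size)]
    table = []
    for row in range(grid_size):
        for col in range(grid_size):
            # grid[0] comes from grid_w because np.meshgrid(grid_w, grid_h) puts w first.
            table.append(sincos_1d(embed_dim // 2, coords[col]) + sincos_1d(embed_dim // 2, coords[row]))
    return table


pe = sincos_2d(embed_dim=8, grid_size=4)
print(len(pe), len(pe[0]))  # 16 8
```

`OmniGen.__init__` passes `pos_embed_max_size=192`, `base_size=64` and `pe_interpolation=1.0`, so the coordinate for grid index i is i / 3.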

+class PatchEmbedMR(nn.Module):
+    """ 2D Image to Patch Embedding
+    """
+    def __init__(
+            self,
+            patch_size: int = 2,
+            in_chans: int = 4,
+            embed_dim: int = 768,
+            bias: bool = True,
+    ):
+        super().__init__()
+        self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=patch_size, bias=bias)
+
+    def forward(self, x):
+        x = self.proj(x)
+        x = x.flatten(2).transpose(1, 2)  # NCHW -> NLC
+        return x
+
+
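`PatchEmbedMR` turns an (N, C, H, W) input into a token sequence using a `Conv2d` whose `kernel_size` and `stride` both equal `patch_size`. The windows therefore never overlap, and each output channel is a dot product between one flattened patch and one flattened kernel. The plain-Python sketch below makes that equivalence concrete for a single sample and a single output channel. The helper names and the toy input are hypothetical and serve only as illustration.

```python
from typing import List

Image = List[List[List[float]]]  # (C, H, W)


def extract_patches(img: Image, p: int) -> List[List[float]]:
    """Split a (C, H, W) image into non-overlapping p x p patches, one flat vector per patch.

    Patches come out in row-major order over the (H/p, W/p) patch grid, the same token order
    x.flatten(2).transpose(1, 2) yields. Each vector is laid out (c, dy, dx), like a flattened
    Conv2d kernel for one output channel."""
    c, h, w = len(img), len(img[0]), len(img[0][0])
    assert h % p == 0 and w % p == 0, "H and W must be multiples of the patch size"
    patches = []
    for gy in range(h // p):
        for gx in range(w // p):
            patches.append([img[ch][gy * p + dy][gx * p + dx]
                            for ch in range(c) for dy in range(p) for dx in range(p)])
    return patches


def conv_as_linear(patches: List[List[float]], kernel: List[float], bias: float = 0.0) -> List[float]:
    """One output channel of the strided Conv2d: a dot product with the flattened kernel per patch."""
    return [sum(a * k for a, k in zip(patch, kernel)) + bias for patch in patches]


# A 1-channel 4x4 input with p = 2 yields (4/2) * (4/2) = 4 tokens.
img = [[[float(4 * r + c) for c in range(4)] for r in range(4)]]
tokens = extract_patches(img, 2)
print(len(tokens), tokens[0])  # 4 [0.0, 1.0, 4.0, 5.0]
print(conv_as_linear(tokens, [1.0, 1.0, 1.0, 1.0]))  # [10.0, 18.0, 42.0, 50.0]
```

With the default `patch_size=2`, an H x W input produces (H/2) * (W/2) tokens, each projected to `embed_dim` channels.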

+class Int8Quantized(nn.Module):
+    def __init__(self, tensor, scale_factor=None):
+        super().__init__()
+        if scale_factor is None:
+            max_val = torch.max(torch.abs(tensor))
+            scale_factor = max_val / 127.0
+        # Store quantized weights and scale factor
+        self.register_buffer('quantized_weight', torch.round(tensor / scale_factor).to(torch.int8))
+        self.register_buffer('scale_factor', torch.tensor(scale_factor))
+
+    def forward(self, dtype=None):
+        # Dequantize and convert to specified dtype
+        weight = self.quantized_weight.float() * self.scale_factor
+        if dtype is not None:
+            weight = weight.to(dtype)
+        return weight
+
+
+
+class QuantizedLinear(nn.Module):
+    def __init__(self, weight, bias=None):
+        super().__init__()
+        self.weight_quantized = Int8Quantized(weight)
+        if bias is not None:
+            self.register_buffer('bias', bias)
+        else:
+            self.bias = None
+
+    def forward(self, x):
+        # Dequantize weight to match input dtype
+        weight = self.weight_quantized(dtype=x.dtype)
+        return F.linear(x, weight, self.bias)
+
+
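`Int8Quantized` applies symmetric, per-tensor int8 quantization. A single scale `max|w| / 127` maps the largest-magnitude weight to ±127, the weights are stored as rounded int8 codes, and `forward` multiplies back by the scale. Because `QuantizedLinear` dequantizes on every call and then runs `F.linear` in the input dtype, this mainly reduces weight memory; the matmul itself is not done in int8. The plain-Python sketch below mirrors that arithmetic on a list of floats. The all-zero guard is an addition the original class does not have.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization, same recipe as Int8Quantized:
    scale = max|w| / 127, q = round(w / scale)."""
    max_val = max(abs(w) for w in weights)
    # Assumption: guard against an all-zero tensor (the original would divide by zero here).
    scale = max_val / 127.0 if max_val > 0 else 1.0
    # Python's round() and torch.round both round halves to the nearest even integer.
    q = [int(round(w / scale)) for w in weights]
    assert all(-127 <= v <= 127 for v in q)  # codes always fit in int8
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Inverse mapping used by Int8Quantized.forward: w_hat = q * scale."""
    return [v * scale for v in q]


w = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(w)
w_hat = dequantize_int8(q, scale)
print(q)  # [50, -127, 0, 100]
# Rounding error per element is at most half a quantization step.
print(max(abs(a - b) for a, b in zip(w, w_hat)) <= scale / 2)  # True
```

A single per-tensor scale keeps storage to one float per weight matrix. The trade-off is that small weights in a matrix with a large outlier lose precision, as `0.003 -> 0` shows above.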

+class OmniGen(nn.Module, PeftAdapterMixin):
+    """
+    Diffusion model with a Transformer backbone.
+    """
+    def __init__(
+        self,
+        transformer_config: Phi3Config,
+        patch_size=2,
+        in_channels=4,
+        pe_interpolation: float = 1.0,
+        pos_embed_max_size: int = 192,
+    ):
+        super().__init__()
+
+        self.in_channels = in_channels
+        self.out_channels = in_channels
+        self.patch_size = patch_size
+        self.pos_embed_max_size = pos_embed_max_size
+
+        hidden_size = transformer_config.hidden_size
+
+        self.x_embedder = PatchEmbedMR(patch_size, in_channels, hidden_size, bias=True)
+        self.input_x_embedder = PatchEmbedMR(patch_size, in_channels, hidden_size, bias=True)
+
+        self.time_token = TimestepEmbedder(hidden_size)
+        self.t_embedder = TimestepEmbedder(hidden_size)
+
+        self.pe_interpolation = pe_interpolation
+        pos_embed = get_2d_sincos_pos_embed(hidden_size, pos_embed_max_size, interpolation_scale=self.pe_interpolation, base_size=64)
+        self.register_buffer("pos_embed", torch.from_numpy(pos_embed).float().unsqueeze(0), persistent=True)
+
+        self.final_layer = FinalLayer(hidden_size, patch_size, self.out_channels)
+
+        self.initialize_weights()
+
+        self.llm = Phi3Transformer(config=transformer_config)
+        self.llm.config.use_cache = False
+
+    def _quantize_module(self, module):
+        """
+        Quantize a module to 8-bit precision
+        """
+        for name, child in module.named_children():
+            if isinstance(child, nn.Linear):
+                setattr(module, name, QuantizedLinear(child.weight.data, child.bias.data if child.bias is not None else None))
+            elif isinstance(child, nn.LayerNorm):
+                # Skip quantization for LayerNorm
+                continue
+            else:
+                self._quantize_module(child)
+
+    @classmethod
+    def from_pretrained(cls, model_name, quantize=False):  # Add quantize parameter
+        if not os.path.exists(model_name):
+            cache_folder = os.getenv('HF_HUB_CACHE')
+            model_name = snapshot_download(repo_id=model_name,
+                                           cache_dir=cache_folder,
+                                           ignore_patterns=['flax_model.msgpack', 'rust_model.ot', 'tf_model.h5'])
+        config = Phi3Config.from_pretrained(model_name)
+        model = cls(config)
+        if os.path.exists(os.path.join(model_name, 'model.safetensors')):
+            print("Loading safetensors")
+            ckpt = load_file(os.path.join(model_name, 'model.safetensors'))
+        else:
+            ckpt = torch.load(os.path.join(model_name, 'model.pt'), map_location='cpu')
+
+        # Load weights first
+        model.load_state_dict(ckpt)
+
+        # Only quantize if explicitly requested
+        if quantize:
+            print("Quantizing weights to 8-bit...")
+            model._quantize_module(model.llm)
+
+        return model
+    def initialize_weights(self):
+        assert not hasattr(self, "llama")
+
+        # Initialize transformer layers:
+        def _basic_init(module):
+            if isinstance(module, nn.Linear):
+                torch.nn.init.xavier_uniform_(module.weight)
+                if module.bias is not None:
+                    nn.init.constant_(module.bias, 0)
+        self.apply(_basic_init)
+
+        # Initialize patch_embed like nn.Linear (instead of nn.Conv2d):
+        w = self.x_embedder.proj.weight.data
+        nn.init.xavier_uniform_(w.view([w.shape[0], -1]))
+        nn.init.constant_(self.x_embedder.proj.bias, 0)
+
+        w = self.input_x_embedder.proj.weight.data
+        nn.init.xavier_uniform_(w.view([w.shape[0], -1]))

+        nn.init.constant_(self.input_x_embedder.proj.bias, 0)

| 281 |

+

|

| 282 |

+

|

| 283 |

+

# Initialize timestep embedding MLP:

|

| 284 |

+

nn.init.normal_(self.t_embedder.mlp[0].weight, std=0.02)

|

| 285 |

+

nn.init.normal_(self.t_embedder.mlp[2].weight, std=0.02)

|

| 286 |

+

nn.init.normal_(self.time_token.mlp[0].weight, std=0.02)

|

| 287 |

+

nn.init.normal_(self.time_token.mlp[2].weight, std=0.02)

|

| 288 |

+

|

| 289 |

+

# Zero-out output layers:

|

| 290 |

+

nn.init.constant_(self.final_layer.adaLN_modulation[-1].weight, 0)

|

| 291 |

+

nn.init.constant_(self.final_layer.adaLN_modulation[-1].bias, 0)

|

| 292 |

+

nn.init.constant_(self.final_layer.linear.weight, 0)

|

| 293 |

+

nn.init.constant_(self.final_layer.linear.bias, 0)

|

| 294 |

+

|

| 295 |

+
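The comment "like nn.Linear (instead of nn.Conv2d)" refers to how `xavier_uniform_` computes fan-in and fan-out. For a 4-D conv weight of shape (out, in, k, k), PyTorch multiplies both fans by the receptive field k*k. Viewing the same weight as a 2-D (out, in*k*k) matrix leaves fan_out = out. The plain-Python sketch below reproduces that arithmetic. The channel, patch, and hidden sizes are illustrative assumptions and are not read from the OmniGen config.

```python
import math

def xavier_bound(fan_in: int, fan_out: int, gain: float = 1.0) -> float:
    # Same formula as torch.nn.init.xavier_uniform_: U(-a, a), a = gain * sqrt(6 / (fan_in + fan_out)).
    return gain * math.sqrt(6.0 / (fan_in + fan_out))

def patch_embed_fans(out_ch: int, in_ch: int, k: int, as_linear: bool) -> tuple:
    receptive = k * k
    if as_linear:
        # weight.view(out, -1) is a 2-D (out, in*k*k) matrix: no receptive-field factor on fan_out.
        return in_ch * receptive, out_ch
    # 4-D conv weight (out, in, k, k): both fans are scaled by k*k.
    return in_ch * receptive, out_ch * receptive

# Illustrative sizes (assumed): 4 latent channels, 2x2 patches, hidden size 3072.
linear_fans = patch_embed_fans(3072, 4, 2, as_linear=True)
conv_fans = patch_embed_fans(3072, 4, 2, as_linear=False)
print(linear_fans, conv_fans)                                  # (16, 3072) (16, 12288)
print(xavier_bound(*linear_fans) > xavier_bound(*conv_fans))   # True
```

Initializing through the 2-D view therefore gives the patch embedding the same, somewhat wider, range that an `nn.Linear` over flattened patches would get.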

```python
    def unpatchify(self, x, h, w):
        """
        x: (N, T, patch_size**2 * C), with T = (h // patch_size) * (w // patch_size)
        h, w: spatial size of the latent to reconstruct
        imgs: (N, C, h, w)
        """
        c = self.out_channels

        x = x.reshape(shape=(x.shape[0], h//self.patch_size, w//self.patch_size, self.patch_size, self.patch_size, c))
        x = torch.einsum('nhwpqc->nchpwq', x)
        imgs = x.reshape(shape=(x.shape[0], c, h, w))
        return imgs
```
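`unpatchify` treats each token's `patch_size**2 * C` features as a (p, q, c) block and places that block back onto the latent grid. A NumPy round trip is a quick way to check the layout. Here `patchify` is a hypothetical inverse written only for this check. It is not OmniGen's `x_embedder`, which is a convolutional patch embedding. `np.einsum` accepts the same subscript strings as `torch.einsum`.

```python
import numpy as np

def patchify(imgs: np.ndarray, p: int) -> np.ndarray:
    """Hypothetical inverse of unpatchify: (N, C, H, W) -> (N, T, p*p*C), T = (H//p) * (W//p)."""
    n, c, h, w = imgs.shape
    x = imgs.reshape(n, c, h // p, p, w // p, p)
    x = np.einsum("nchpwq->nhwpqc", x)
    return x.reshape(n, (h // p) * (w // p), p * p * c)

def unpatchify(x: np.ndarray, h: int, w: int, p: int, c: int) -> np.ndarray:
    """Same steps as the method above: (N, T, p*p*C) -> (N, C, h, w)."""
    x = x.reshape(x.shape[0], h // p, w // p, p, p, c)
    x = np.einsum("nhwpqc->nchpwq", x)
    return x.reshape(x.shape[0], c, h, w)

imgs = np.arange(2 * 4 * 8 * 6).reshape(2, 4, 8, 6)   # N=2, C=4, H=8, W=6
tokens = patchify(imgs, p=2)
print(tokens.shape)                                                # (2, 12, 16)
print(np.array_equal(unpatchify(tokens, 8, 6, p=2, c=4), imgs))   # True
```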

```python
    def cropped_pos_embed(self, height, width):
        """Center-crops the square positional-embedding grid to the latent's patch grid (SD3-style cropping)."""
        if self.pos_embed_max_size is None:
            raise ValueError("`pos_embed_max_size` must be set for cropping.")

        height = height // self.patch_size
        width = width // self.patch_size
        if height > self.pos_embed_max_size:
            raise ValueError(
                f"Height ({height}) cannot be greater than `pos_embed_max_size`: {self.pos_embed_max_size}."
            )
        if width > self.pos_embed_max_size:
            raise ValueError(
                f"Width ({width}) cannot be greater than `pos_embed_max_size`: {self.pos_embed_max_size}."
            )

        top = (self.pos_embed_max_size - height) // 2
        left = (self.pos_embed_max_size - width) // 2
        spatial_pos_embed = self.pos_embed.reshape(1, self.pos_embed_max_size, self.pos_embed_max_size, -1)
        spatial_pos_embed = spatial_pos_embed[:, top : top + height, left : left + width, :]
        spatial_pos_embed = spatial_pos_embed.reshape(1, -1, spatial_pos_embed.shape[-1])
        return spatial_pos_embed
```
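The crop is centered. For an h x w patch grid it takes rows `top .. top+h-1` and columns `left .. left+w-1` of the square table, then flattens them row-major. The NumPy sketch below reproduces the method as a free function, and that standalone signature is an assumption. It uses a toy table whose entry for grid cell (r, c) is simply `[r, c]`, which makes the selected cells easy to read.

```python
import numpy as np

def cropped_pos_embed(pos_embed, max_size, height, width, patch_size):
    """Center-crop a (max_size*max_size, D) embedding table to the patch grid of a height x width latent."""
    h, w = height // patch_size, width // patch_size
    if h > max_size or w > max_size:
        raise ValueError(f"grid {h}x{w} exceeds pos_embed_max_size={max_size}")
    top = (max_size - h) // 2
    left = (max_size - w) // 2
    grid = pos_embed.reshape(1, max_size, max_size, -1)
    grid = grid[:, top:top + h, left:left + w, :]
    return grid.reshape(1, -1, grid.shape[-1])

max_size = 6
# Toy table: the embedding of grid cell (r, c) is [r, c].
table = np.array([[r, c] for r in range(max_size) for c in range(max_size)])
emb = cropped_pos_embed(table, max_size, height=8, width=4, patch_size=2)
print(emb.shape)               # (1, 8, 2)
print(emb[0, 0], emb[0, -1])   # [1 2] [4 3]
```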

```python
    def patch_multiple_resolutions(self, latents, padding_latent=None, is_input_images:bool=False):
        if isinstance(latents, list):
            # With `padding_latent`, each embedded latent is padded and the results are concatenated
            # into one batch tensor; without it, the per-latent tensors are returned as a list.
            return_list = False
            if padding_latent is None:
                padding_latent = [None] * len(latents)
                return_list = True
            patched_latents, num_tokens, shapes = [], [], []
            for latent, padding in zip(latents, padding_latent):
                height, width = latent.shape[-2:]
                if is_input_images:
                    latent = self.input_x_embedder(latent)
                else:
                    latent = self.x_embedder(latent)
                pos_embed = self.cropped_pos_embed(height, width)
                latent = latent + pos_embed
                if padding is not None:
                    latent = torch.cat([latent, padding], dim=-2)
                patched_latents.append(latent)

                num_tokens.append(pos_embed.size(1))  # real (unpadded) token count
                shapes.append([height, width])
            if not return_list:
                latents = torch.cat(patched_latents, dim=0)
            else:
                latents = patched_latents
        else:
            height, width = latents.shape[-2:]
            if is_input_images:
                latents = self.input_x_embedder(latents)
            else:
                latents = self.x_embedder(latents)
            pos_embed = self.cropped_pos_embed(height, width)
            latents = latents + pos_embed
            num_tokens = latents.size(1)
            shapes = [height, width]
        return latents, num_tokens, shapes
```
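When a batch mixes resolutions, each latent yields a different number of tokens. `padding_latent` lets the embedded latents be stacked, and `forward` later keeps only the first `num_tokens[i]` outputs of each sample. This section does not show how the pipeline builds the padding tensors. The NumPy sketch below therefore assumes zero padding on the right, only to illustrate the pad-then-trim bookkeeping. `pad_to_common_length` is a hypothetical helper.

```python
import numpy as np

def pad_to_common_length(token_seqs):
    """Right-pad (1, T_i, D) token arrays to the longest T and stack them into one batch."""
    max_len = max(t.shape[1] for t in token_seqs)
    padded, num_tokens = [], []
    for t in token_seqs:
        pad = np.zeros((1, max_len - t.shape[1], t.shape[2]), dtype=t.dtype)  # assumed zero padding
        padded.append(np.concatenate([t, pad], axis=-2))  # same axis as torch.cat(..., dim=-2)
        num_tokens.append(t.shape[1])
    return np.concatenate(padded, axis=0), num_tokens

p, d = 2, 5
# Latents of 8x8 and 8x4 give (8/2)*(8/2) = 16 and (8/2)*(4/2) = 8 tokens.
seqs = [np.ones((1, (8 // p) * (8 // p), d)), np.ones((1, (8 // p) * (4 // p), d))]
batch, num_tokens = pad_to_common_length(seqs)
print(batch.shape, num_tokens)       # (2, 16, 5) [16, 8]

# Keep only the real tokens of each sample, as forward() does with x[i:i+1, :num_tokens[i]].
trimmed = [batch[i:i + 1, :n] for i, n in enumerate(num_tokens)]
print([t.shape for t in trimmed])    # [(1, 16, 5), (1, 8, 5)]
```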

```python
    def forward(self, x, timestep, input_ids, input_img_latents, input_image_sizes, attention_mask, position_ids, padding_latent=None, past_key_values=None, return_past_key_values=True):
        """
        Runs the LLM backbone over [condition tokens, time token, image tokens] and maps the
        image tokens back to latent shape.

        x: noisy latent tensor, or a list of latents (with `padding_latent`) for mixed resolutions.
        input_img_latents / input_image_sizes: conditioning-image latents and, per batch index,
            the (start, end) token spans they overwrite in the embedded `input_ids`.
        Returns the predicted latents, plus the updated `past_key_values` if `return_past_key_values`.
        """
        input_is_list = isinstance(x, list)
        x, num_tokens, shapes = self.patch_multiple_resolutions(x, padding_latent)
        time_token = self.time_token(timestep, dtype=x[0].dtype).unsqueeze(1)

        if input_img_latents is not None:
            input_latents, _, _ = self.patch_multiple_resolutions(input_img_latents, is_input_images=True)
        if input_ids is not None:
            condition_embeds = self.llm.embed_tokens(input_ids).clone()
            input_img_inx = 0
            for b_inx in input_image_sizes.keys():
                for start_inx, end_inx in input_image_sizes[b_inx]:
                    condition_embeds[b_inx, start_inx: end_inx] = input_latents[input_img_inx]
                    input_img_inx += 1
            if input_img_latents is not None:
                assert input_img_inx == len(input_latents)

            input_emb = torch.cat([condition_embeds, time_token, x], dim=1)
        else:
            input_emb = torch.cat([time_token, x], dim=1)
        output = self.llm(inputs_embeds=input_emb, attention_mask=attention_mask, position_ids=position_ids, past_key_values=past_key_values)
        output, past_key_values = output.last_hidden_state, output.past_key_values
        if input_is_list:
            image_embedding = output[:, -max(num_tokens):]
            time_emb = self.t_embedder(timestep, dtype=x.dtype)
            x = self.final_layer(image_embedding, time_emb)
            latents = []
            for i in range(x.size(0)):
                latent = x[i:i+1, :num_tokens[i]]
                latent = self.unpatchify(latent, shapes[i][0], shapes[i][1])
                latents.append(latent)
        else:
            image_embedding = output[:, -num_tokens:]
            time_emb = self.t_embedder(timestep, dtype=x.dtype)
            x = self.final_layer(image_embedding, time_emb)
            latents = self.unpatchify(x, shapes[0], shapes[1])

        if return_past_key_values:
            return latents, past_key_values
        return latents
```
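When `input_ids` is given, `forward` embeds the text tokens and then overwrites placeholder spans with the conditioning-image tokens. `input_image_sizes` maps a batch index to a list of `(start, end)` spans, and those spans are consumed in the same order as the image latents. The NumPy sketch below shows that bookkeeping. `splice_image_tokens` is a hypothetical stand-in, and each image is given as a `(T_k, D)` array.

```python
import numpy as np

def splice_image_tokens(text_embeds, image_tokens, input_image_sizes):
    """Overwrite the (start, end) spans of each batch row with image tokens, in order."""
    out = text_embeds.copy()  # a copy, like the .clone() in forward, so the input array is left untouched
    k = 0
    for b in input_image_sizes.keys():
        for start, end in input_image_sizes[b]:
            out[b, start:end] = image_tokens[k]
            k += 1
    assert k == len(image_tokens), "every image latent must be consumed exactly once"
    return out

d = 4
text = np.zeros((2, 10, d))                  # batch of 2, 10 text positions each
images = [np.full((2, d), 1.0), np.full((3, d), 2.0), np.full((4, d), 3.0)]
sizes = {0: [(1, 3), (5, 8)], 1: [(2, 6)]}   # two images in sample 0, one in sample 1
embeds = splice_image_tokens(text, images, sizes)
print(embeds[0, :, 0])  # [0. 1. 1. 0. 0. 2. 2. 2. 0. 0.]
print(embeds[1, :, 0])  # [0. 0. 3. 3. 3. 3. 0. 0. 0. 0.]
```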

```python
    @torch.no_grad()
    def forward_with_cfg(self, x, timestep, input_ids, input_img_latents, input_image_sizes, attention_mask, position_ids, cfg_scale, use_img_cfg, img_cfg_scale, past_key_values, use_kv_cache):
        """
        Forward pass of DiT, but also batches the unconditional forward pass for classifier-free guidance.
        """
        self.llm.config.use_cache = use_kv_cache
        model_out, past_key_values = self.forward(x, timestep, input_ids, input_img_latents, input_image_sizes, attention_mask, position_ids, past_key_values=past_key_values, return_past_key_values=True)
        if use_img_cfg:
            cond, uncond, img_cond = torch.split(model_out, len(model_out) // 3, dim=0)
            cond = uncond + img_cfg_scale * (img_cond - uncond) + cfg_scale * (cond - img_cond)
            model_out = [cond, cond, cond]
        else:
            cond, uncond = torch.split(model_out, len(model_out) // 2, dim=0)
            cond = uncond + cfg_scale * (cond - uncond)
            model_out = [cond, cond]

        return torch.cat(model_out, dim=0), past_key_values
```
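Both CFG methods combine the branch outputs in the same way. With text guidance only, the result is `uncond + s * (cond - uncond)`. With image guidance as well, the image-conditioned prediction sits between the two: `uncond + s_img * (img_cond - uncond) + s * (cond - img_cond)`. The scalar sketch below shows that arithmetic. The function name is hypothetical, and the same expression works element-wise on NumPy arrays or tensors.

```python
def combine_cfg(cond, uncond, cfg_scale, img_cond=None, img_cfg_scale=1.0):
    """Classifier-free guidance as written in forward_with_cfg / forward_with_separate_cfg."""
    if img_cond is None:
        # Text guidance only: extrapolate from the unconditional prediction toward the conditional one.
        return uncond + cfg_scale * (cond - uncond)
    # Text + image guidance: step from uncond toward img_cond, then from img_cond toward cond.
    return uncond + img_cfg_scale * (img_cond - uncond) + cfg_scale * (cond - img_cond)

print(combine_cfg(2.0, 1.0, cfg_scale=3.0))                                    # 4.0
print(combine_cfg(2.0, 0.5, cfg_scale=1.0, img_cond=1.0, img_cfg_scale=1.0))  # 2.0 (both scales 1 -> cond)
```

Both methods then return the guided prediction once per branch (`[cond, cond]` or `[cond, cond, cond]`), so every branch in the stacked batch receives the same update. This is presumably done to keep the per-branch copies of the latent identical from step to step.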

```python
    @torch.no_grad()
    def forward_with_separate_cfg(self, x, timestep, input_ids, input_img_latents, input_image_sizes, attention_mask, position_ids, cfg_scale, use_img_cfg, img_cfg_scale, past_key_values, use_kv_cache, return_past_key_values=True):
        """
        Classifier-free guidance like `forward_with_cfg`, but each branch (conditional, unconditional and,
        with image guidance, image-conditional) runs as its own forward pass with its own KV cache
        instead of as one batch.
        """
        self.llm.config.use_cache = use_kv_cache
        if past_key_values is None:
            past_key_values = [None] * len(attention_mask)

        x = torch.split(x, len(x) // len(attention_mask), dim=0)
        timestep = timestep.to(x[0].dtype)
        timestep = torch.split(timestep, len(timestep) // len(input_ids), dim=0)

        model_out, new_past_key_values = [], []
        for i in range(len(input_ids)):
            # Pass the cache by keyword: the 8th positional parameter of `forward` is `padding_latent`.
            temp_out, temp_past_key_values = self.forward(x[i], timestep[i], input_ids[i], input_img_latents[i], input_image_sizes[i], attention_mask[i], position_ids[i], past_key_values=past_key_values[i])
            model_out.append(temp_out)
            new_past_key_values.append(temp_past_key_values)

        if len(model_out) == 3:
            cond, uncond, img_cond = model_out
            cond = uncond + img_cfg_scale * (img_cond - uncond) + cfg_scale * (cond - img_cond)
            model_out = [cond, cond, cond]
        elif len(model_out) == 2:
            cond, uncond = model_out
            cond = uncond + cfg_scale * (cond - uncond)
            model_out = [cond, cond]
        else:
            # Single branch (no guidance): only the prediction is returned, without the cache.
            return model_out[0]

        return torch.cat(model_out, dim=0), new_past_key_values
```

OmniGen/pipeline.py

ADDED


@@ -0,0 +1,289 @@


```python
import os
import inspect
from typing import Any, Callable, Dict, List, Optional, Union

from PIL import Image
import numpy as np
import torch
from huggingface_hub import snapshot_download
from peft import LoraConfig, PeftModel
from diffusers.models import AutoencoderKL
from diffusers.utils import (
    USE_PEFT_BACKEND,
    is_torch_xla_available,
    logging,
    replace_example_docstring,
    scale_lora_layers,
    unscale_lora_layers,
)
from safetensors.torch import load_file

from OmniGen import OmniGen, OmniGenProcessor, OmniGenScheduler

import gc  # For clearing unused objects

logger = logging.get_logger(__name__)
```

EXAMPLE_DOC_STRING = """

|

| 28 |

+

Examples:

|

| 29 |

+

```py

|

| 30 |

+

>>> from OmniGen import OmniGenPipeline

|

| 31 |

+

>>> pipe = FluxControlNetPipeline.from_pretrained(

|

| 32 |

+

... base_model

|

| 33 |

+

... )

|

| 34 |

+

>>> prompt = "A woman holds a bouquet of flowers and faces the camera"

|

| 35 |

+

>>> image = pipe(

|

| 36 |

+

... prompt,

|

| 37 |

+

... guidance_scale=3.0,

|

| 38 |

+

... num_inference_steps=50,

|

| 39 |

+

... ).images[0]

|

| 40 |

+

>>> image.save("t2i.png")

|

| 41 |

+

```

|

| 42 |

+