Update README.md

README.md

CHANGED

|

@@ -1,99 +1,99 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: mit

|

| 3 |

-

license_link: https://huggingface.co/microsoft/Florence-2-large/resolve/main/LICENSE

|

| 4 |

-

pipeline_tag: image-text-to-text

|

| 5 |

-

tags:

|

| 6 |

-

- vision

|

| 7 |

-

- ocr

|

| 8 |

-

- segmentation

|

| 9 |

-

---

|

| 10 |

-

|

| 11 |

-

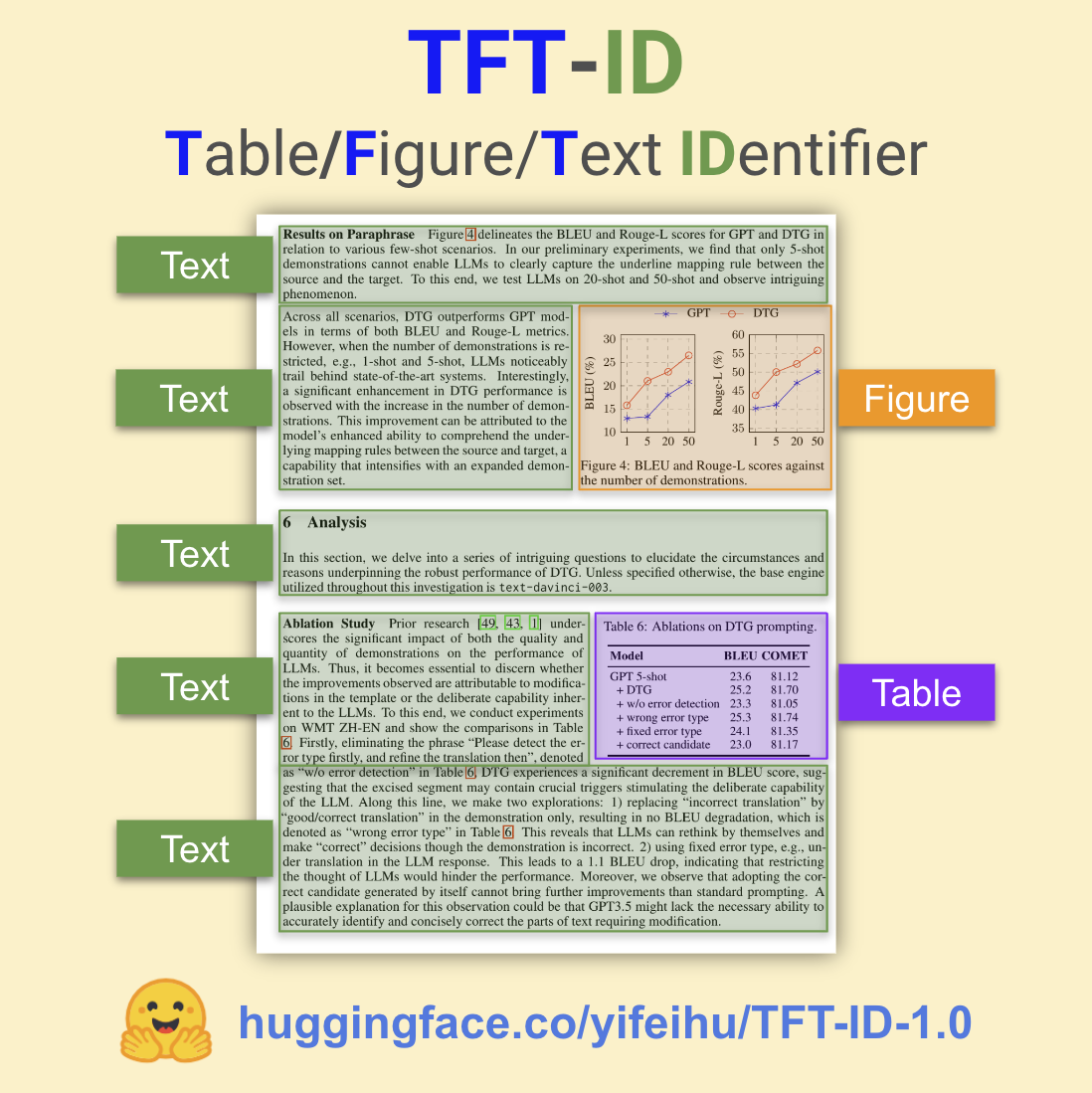

# TFT-ID: Table/Figure/Text IDentifier for academic papers

|

| 12 |

-

|

| 13 |

-

## Model Summary

|

| 14 |

-

|

| 15 |

-

TFT-ID (Table/Figure/Text IDentifier) is

|

| 16 |

-

|

| 17 |

-

checkpoints.

|

| 20 |

-

|

| 21 |

-

- The

|

| 22 |

-

- TFT-ID

|

| 23 |

-

- The text sections contain clean text content perfect for downstream OCR workflows. However, TFT-ID is not an OCR model.

|

| 24 |

-

|

| 25 |

-

Object Detection results format:

|

| 26 |

-

{'\<OD>': {'bboxes': [[x1, y1, x2, y2], ...],

|

| 27 |

-

'labels': ['label1', 'label2', ...]} }

|

| 28 |

-

|

| 29 |

-

## Training Code and Dataset

|

| 30 |

-

- Dataset: Coming soon.

|

| 31 |

-

- Code: [github.com/ai8hyf/TF-ID](https://github.com/ai8hyf/TF-ID)

|

| 32 |

-

|

| 33 |

-

## Benchmarks

|

| 34 |

-

|

| 35 |

-

|

| 36 |

-

|

| 37 |

-

Correct output - the model draws correct bounding boxes for every table/figure/text section in the given page and not missing any content

|

| 38 |

-

|

| 39 |

-

Task 1: Table, Figure, and Text Section Identification

|

| 40 |

-

| Model | Total Images | Correct Output | Success Rate |

|

| 41 |

-

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 42 |

-

| TFT-ID-1.0[[HF]](https://huggingface.co/yifeihu/TFT-ID-1.0) | 373 | 361 | 96.78% |

|

| 43 |

-

|

| 44 |

-

Task 2: Table and Figure Identification

|

| 45 |

-

| Model | Total Images | Correct Output | Success Rate |

|

| 46 |

-

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 47 |

-

| **TFT-ID-1.0**[[HF]](https://huggingface.co/yifeihu/TFT-ID-1.0) | 258 | 255 | **98.84%** |

|

| 48 |

-

| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) | 258 | 253 | 98.06% |

|

| 49 |

-

|

| 50 |

-

Depending on the use cases, some "incorrect" output could be totally usable. For example, the model draw two bounding boxes for one figure with two child components.

|

| 51 |

-

|

| 52 |

-

## How to Get Started with the Model

|

| 53 |

-

|

| 54 |

-

Use the code below to get started with the model.

|

| 55 |

-

|

| 56 |

-

```python

|

| 57 |

-

import requests

|

| 58 |

-

from PIL import Image

|

| 59 |

-

from transformers import AutoProcessor, AutoModelForCausalLM

|

| 60 |

-

|

| 61 |

-

model = AutoModelForCausalLM.from_pretrained("yifeihu/TFT-ID-1.0", trust_remote_code=True)

|

| 62 |

-

processor = AutoProcessor.from_pretrained("yifeihu/TFT-ID-1.0", trust_remote_code=True)

|

| 63 |

-

|

| 64 |

-

prompt = "<OD>"

|

| 65 |

-

|

| 66 |

-

url = "https://huggingface.co/yifeihu/TF-ID-base/resolve/main/arxiv_2305_10853_5.png?download=true"

|

| 67 |

-

image = Image.open(requests.get(url, stream=True).raw)

|

| 68 |

-

|

| 69 |

-

inputs = processor(text=prompt, images=image, return_tensors="pt")

|

| 70 |

-

|

| 71 |

-

generated_ids = model.generate(

|

| 72 |

-

input_ids=inputs["input_ids"],

|

| 73 |

-

pixel_values=inputs["pixel_values"],

|

| 74 |

-

max_new_tokens=1024,

|

| 75 |

-

do_sample=False,

|

| 76 |

-

num_beams=3

|

| 77 |

-

)

|

| 78 |

-

generated_text = processor.batch_decode(generated_ids, skip_special_tokens=False)[0]

|

| 79 |

-

|

| 80 |

-

parsed_answer = processor.post_process_generation(generated_text, task="<OD>", image_size=(image.width, image.height))

|

| 81 |

-

|

| 82 |

-

print(parsed_answer)

|

| 83 |

-

|

| 84 |

-

```

|

| 85 |

-

|

| 86 |

-

To visualize the results, see [this tutorial notebook](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/how-to-finetune-florence-2-on-detection-dataset.ipynb) for more details.

|

| 87 |

-

|

| 88 |

-

## BibTex and citation info

|

| 89 |

-

|

| 90 |

-

```

|

| 91 |

-

@misc{TF-ID,

|

| 92 |

-

author = {Yifei Hu},

|

| 93 |

-

title = {TF-ID: Table/Figure IDentifier for academic papers},

|

| 94 |

-

year = {2024},

|

| 95 |

-

publisher = {GitHub},

|

| 96 |

-

journal = {GitHub repository},

|

| 97 |

-

howpublished = {\url{https://github.com/ai8hyf/TF-ID}},

|

| 98 |

-

}

|

| 99 |

```

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: mit

|

| 3 |

+

license_link: https://huggingface.co/microsoft/Florence-2-large/resolve/main/LICENSE

|

| 4 |

+

pipeline_tag: image-text-to-text

|

| 5 |

+

tags:

|

| 6 |

+

- vision

|

| 7 |

+

- ocr

|

| 8 |

+

- segmentation

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

# TFT-ID: Table/Figure/Text IDentifier for academic papers

|

| 12 |

+

|

| 13 |

+

## Model Summary

|

| 14 |

+

|

| 15 |

+

TFT-ID (Table/Figure/Text IDentifier) is an object detection model created by [Yifei Hu](https://x.com/hu_yifei) and finetuned to extract tables, figures, and text sections from academic papers.

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

TFT-ID is finetuned from [microsoft/Florence-2](https://huggingface.co/microsoft/Florence-2-large) checkpoints.

|

| 20 |

+

|

| 21 |

+

- The model was finetuned with papers from Hugging Face Daily Papers. All 36,000+ bounding boxes are manually annotated and checked by [Yifei Hu](https://x.com/hu_yifei).

|

| 22 |

+

- The TFT-ID model takes an image of a single paper page as input and returns bounding boxes for all tables, figures, and text sections on that page.

|

| 23 |

+

- The text sections contain clean text content perfect for downstream OCR workflows. However, TFT-ID is not an OCR model.

|

| 24 |

+

|

| 25 |

+

Object Detection results format:

|

| 26 |

+

{'\<OD>': {'bboxes': [[x1, y1, x2, y2], ...],

|

| 27 |

+

'labels': ['label1', 'label2', ...]} }
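As a minimal sketch of consuming this format, the snippet below groups the boxes by label and converts each one to integer pixel coordinates clamped to the page, which is the tuple shape `PIL.Image.crop` accepts. The sample dict, the label strings, and the helper name `boxes_by_label` are illustrative assumptions, not actual model output.

```python
import math
from collections import defaultdict


def boxes_by_label(parsed_answer, image_size, task="<OD>"):
    """Group detections by label as integer (left, top, right, bottom) boxes."""
    width, height = image_size
    detections = parsed_answer[task]
    grouped = defaultdict(list)
    for (x1, y1, x2, y2), label in zip(detections["bboxes"], detections["labels"]):
        # Round outward so no content is cut off, then clamp to the page bounds.
        box = (
            max(0, math.floor(x1)),
            max(0, math.floor(y1)),
            min(width, math.ceil(x2)),
            min(height, math.ceil(y2)),
        )
        grouped[label].append(box)
    return dict(grouped)


# Hypothetical result for an 800x1000 page; real labels and coordinates will differ.
sample = {
    "<OD>": {
        "bboxes": [[50.5, 100.2, 750.0, 400.9], [60.0, 450.0, 740.3, 1000.6]],
        "labels": ["figure", "text"],
    }
}
regions = boxes_by_label(sample, image_size=(800, 1000))
```

Each tuple can then be passed to `image.crop(box)` to cut a region out for a downstream step such as OCR.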

|

| 28 |

+

|

| 29 |

+

## Training Code and Dataset

|

| 30 |

+

- Dataset: Coming soon.

|

| 31 |

+

- Code: [github.com/ai8hyf/TF-ID](https://github.com/ai8hyf/TF-ID)

|

| 32 |

+

|

| 33 |

+

## Benchmarks

|

| 34 |

+

|

| 35 |

+

The model was tested on paper pages outside the training dataset. The papers are a subset of Hugging Face Daily Papers.

|

| 36 |

+

|

| 37 |

+

Correct output - the model draws correct bounding boxes for every table/figure/text section in the given page and **does not miss any content**.

|

| 38 |

+

|

| 39 |

+

Task 1: Table, Figure, and Text Section Identification

|

| 40 |

+

| Model | Total Images | Correct Output | Success Rate |

|

| 41 |

+

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 42 |

+

| TFT-ID-1.0[[HF]](https://huggingface.co/yifeihu/TFT-ID-1.0) | 373 | 361 | 96.78% |

|

| 43 |

+

|

| 44 |

+

Task 2: Table and Figure Identification

|

| 45 |

+

| Model | Total Images | Correct Output | Success Rate |

|

| 46 |

+

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 47 |

+

| **TFT-ID-1.0**[[HF]](https://huggingface.co/yifeihu/TFT-ID-1.0) | 258 | 255 | **98.84%** |

|

| 48 |

+

| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) | 258 | 253 | 98.06% |
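The success rates in both tables appear to be the number of fully correct pages divided by the total number of pages. A small sketch that recomputes them from the reported counts (the helper name `success_rate` is illustrative):

```python
def success_rate(correct, total):
    """Return the share of fully correct pages as a percentage, rounded to 2 places."""
    return round(100 * correct / total, 2)


task1_tft_id = success_rate(361, 373)    # Task 1, TFT-ID-1.0
task2_tft_id = success_rate(255, 258)    # Task 2, TFT-ID-1.0
task2_tf_large = success_rate(253, 258)  # Task 2, TF-ID-large
```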

|

| 49 |

+

|

| 50 |

+

Note: Depending on the use case, some "incorrect" outputs may still be usable. For example, the model may draw two bounding boxes for one figure that has two child components.

|

| 51 |

+

|

| 52 |

+

## How to Get Started with the Model

|

| 53 |

+

|

| 54 |

+

Use the code below to get started with the model.

|

| 55 |

+

|

| 56 |

+

```python

|

| 57 |

+

import requests

|

| 58 |

+

from PIL import Image

|

| 59 |

+

from transformers import AutoProcessor, AutoModelForCausalLM

|

| 60 |

+

|

| 61 |

+

model = AutoModelForCausalLM.from_pretrained("yifeihu/TFT-ID-1.0", trust_remote_code=True)

|

| 62 |

+

processor = AutoProcessor.from_pretrained("yifeihu/TFT-ID-1.0", trust_remote_code=True)

|

| 63 |

+

|

| 64 |

+

prompt = "<OD>"

|

| 65 |

+

|

| 66 |

+

url = "https://huggingface.co/yifeihu/TF-ID-base/resolve/main/arxiv_2305_10853_5.png?download=true"

|

| 67 |

+

image = Image.open(requests.get(url, stream=True).raw)

|

| 68 |

+

|

| 69 |

+

inputs = processor(text=prompt, images=image, return_tensors="pt")

|

| 70 |

+

|

| 71 |

+

generated_ids = model.generate(

|

| 72 |

+

input_ids=inputs["input_ids"],

|

| 73 |

+

pixel_values=inputs["pixel_values"],

|

| 74 |

+

max_new_tokens=1024,

|

| 75 |

+

do_sample=False,

|

| 76 |

+

num_beams=3

|

| 77 |

+

)

|

| 78 |

+

generated_text = processor.batch_decode(generated_ids, skip_special_tokens=False)[0]

|

| 79 |

+

|

| 80 |

+

parsed_answer = processor.post_process_generation(generated_text, task="<OD>", image_size=(image.width, image.height))

|

| 81 |

+

|

| 82 |

+

print(parsed_answer)

|

| 83 |

+

|

| 84 |

+

```

|

| 85 |

+

|

| 86 |

+

To visualize the results, see [this tutorial notebook](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/how-to-finetune-florence-2-on-detection-dataset.ipynb).
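For a quick local check without the notebook, the boxes can also be drawn directly with Pillow. This is a minimal sketch rather than the notebook's method: the helper name `draw_detections`, the blank stand-in page, and the sample result are assumptions made for illustration.

```python
from PIL import Image, ImageDraw


def draw_detections(image, parsed_answer, task="<OD>", color="red"):
    """Return a copy of the page with each detected box outlined and labelled."""
    annotated = image.convert("RGB")  # convert() returns a new image; the input is untouched
    draw = ImageDraw.Draw(annotated)
    detections = parsed_answer[task]
    for (x1, y1, x2, y2), label in zip(detections["bboxes"], detections["labels"]):
        draw.rectangle([x1, y1, x2, y2], outline=color, width=3)
        draw.text((x1 + 4, y1 + 4), label, fill=color)
    return annotated


# Blank stand-in page and a hypothetical result; swap in `image` and `parsed_answer`.
page = Image.new("RGB", (200, 300), "white")
fake_result = {"<OD>": {"bboxes": [[20, 30, 180, 120]], "labels": ["table"]}}
annotated = draw_detections(page, fake_result)
```

With the variables from the example above, `draw_detections(image, parsed_answer).save("annotated.png")` would write the annotated page to disk.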

|

| 87 |

+

|

| 88 |

+

## BibTex and citation info

|

| 89 |

+

|

| 90 |

+

```

|

| 91 |

+

@misc{TF-ID,

|

| 92 |

+

author = {Yifei Hu},

|

| 93 |

+

title = {TF-ID: Table/Figure IDentifier for academic papers},

|

| 94 |

+

year = {2024},

|

| 95 |

+

publisher = {GitHub},

|

| 96 |

+

journal = {GitHub repository},

|

| 97 |

+

howpublished = {\url{https://github.com/ai8hyf/TF-ID}},

|

| 98 |

+

}

|

| 99 |

```

|