Model Card


📖 Technical report | 🏠 Code | 🐰 Demo

This is the GGUF format of Bunny-v1.0-4B.

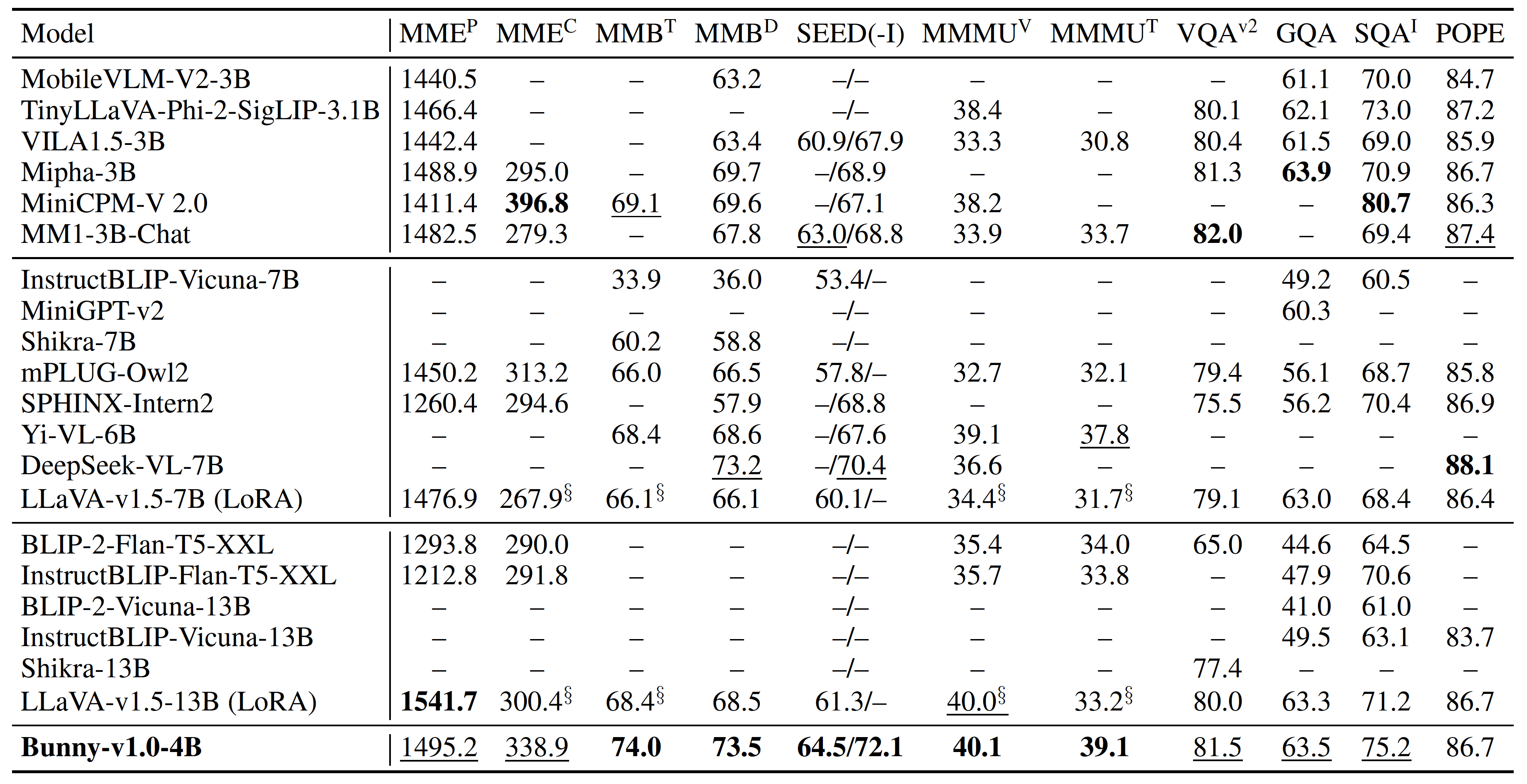

Bunny is a family of lightweight but powerful multimodal models. It offers multiple plug-and-play vision encoders, such as EVA-CLIP and SigLIP, and language backbones, including Phi-3-mini, Llama-3-8B, Phi-1.5, StableLM-2, Qwen1.5, MiniCPM and Phi-2. To compensate for the decrease in model size, we construct more informative training data by curated selection from a broader data source.

We provide Bunny-v1.0-4B, which is built upon SigLIP and Phi-3-Mini-4K-Instruct. More details about this model can be found on GitHub.

Quickstart

Chat with llama.cpp

# sample images can be found in images folder

# fp16
./llava-cli -m ggml-model-f16.gguf --mmproj mmproj-model-f16.gguf --image example_2.png -c 4096 -e \
    -p "A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: <image>\nWhy is the image funny? ASSISTANT:" \
    --temp 0.0

# int4
./llava-cli -m ggml-model-Q4_K_M.gguf --mmproj mmproj-model-f16.gguf --image example_2.png -c 4096 -e \
    -p "A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: <image>\nWhy is the image funny? ASSISTANT:" \
    --temp 0.0
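The fp16 and int4 invocations differ only in the model file, so they can be wrapped in a small helper. The following is a minimal sketch, not part of the Bunny repository: the function names `bunny_model_file`, `bunny_prompt` and `bunny_chat` are hypothetical, and it assumes `llava-cli` and the GGUF files sit in the current directory, as in the commands above. Only flags that already appear in those commands are used.

```shell
#!/bin/sh
# Hypothetical helpers (names are illustrative, not from the Bunny repo).

# System prompt copied verbatim from the commands above.
SYSTEM_PROMPT="A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions."

# Map a precision label to the GGUF file name used above.
bunny_model_file() {
  case "$1" in
    fp16) echo "ggml-model-f16.gguf" ;;
    int4) echo "ggml-model-Q4_K_M.gguf" ;;
    *) echo "unknown precision: $1" >&2; return 1 ;;
  esac
}

# Build the full prompt for a question. The backslash-n is emitted
# literally; llava-cli's -e flag turns it into a newline.
bunny_prompt() {
  printf '%s USER: <image>\\n%s ASSISTANT:' "$SYSTEM_PROMPT" "$1"
}

# Usage: bunny_chat <fp16|int4> <image> <question>
bunny_chat() {
  bunny_model="$(bunny_model_file "$1")" || return 1
  ./llava-cli -m "$bunny_model" --mmproj mmproj-model-f16.gguf --image "$2" -c 4096 -e \
    -p "$(bunny_prompt "$3")" \
    --temp 0.0
}

# Example call (assumes the files above exist):
#   bunny_chat int4 example_2.png "Why is the image funny?"
```

Keeping the prompt in one function means the fp16 and int4 runs use an identical prompt, so any difference in output comes from quantization alone.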

Chat with ollama

# sample images can be found in images folder

# fp16
ollama create Bunny-Llama-3-8B-V-fp16 -f ./ollama-f16
ollama run Bunny-Llama-3-8B-V-fp16 'example_2.png
Why is the image funny?'

# int4
ollama create Bunny-Llama-3-8B-V-int4 -f ./ollama-Q4_K_M
ollama run Bunny-Llama-3-8B-V-int4 'example_2.png
Why is the image funny?'
