---
license: other
license_name: flux-1-dev-non-commercial-license
license_link: https://huggingface.co/black-forest-labs/FLUX.1-dev/resolve/main/LICENSE.md
base_model:
- black-forest-labs/FLUX.1-dev
pipeline_tag: text-to-image
library_name: diffusers
tags:
- flux
- text-to-image
---

Flux.1 Lite

We are announcing the alpha version of Flux.1 Lite, a new 8B-parameter transformer model distilled from the original FLUX.1-dev model.

Our goal is to further reduce the number of parameters in the FLUX.1-dev transformer so that the model fits within 24 GB of GPU memory, making it compatible with most GPU cards.
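As a rough check of the memory target, the sketch below estimates the memory needed just to store the transformer weights in bfloat16 (2 bytes per parameter). The 12B parameter count for the FLUX.1-dev transformer is Black Forest Labs' published figure rather than something stated in this card, and the estimate deliberately ignores the text encoders, the VAE, and activations, all of which add to the real footprint.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed only to store the weights, in GB (1 GB = 1e9 bytes).

    bytes_per_param=2 assumes bfloat16/float16 weights.
    """
    return num_params * bytes_per_param / 1e9


flux_dev = weight_memory_gb(12e9)  # FLUX.1-dev transformer: ~12B parameters (assumption, see above)
flux_lite = weight_memory_gb(8e9)  # Flux.1 Lite transformer: 8B parameters
print(f"FLUX.1-dev: {flux_dev:.0f} GB, Flux.1 Lite: {flux_lite:.0f} GB")
```

This prints `FLUX.1-dev: 24 GB, Flux.1 Lite: 16 GB`, i.e. the distilled transformer saves roughly 8 GB of weight memory at the same precision.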

Motivation

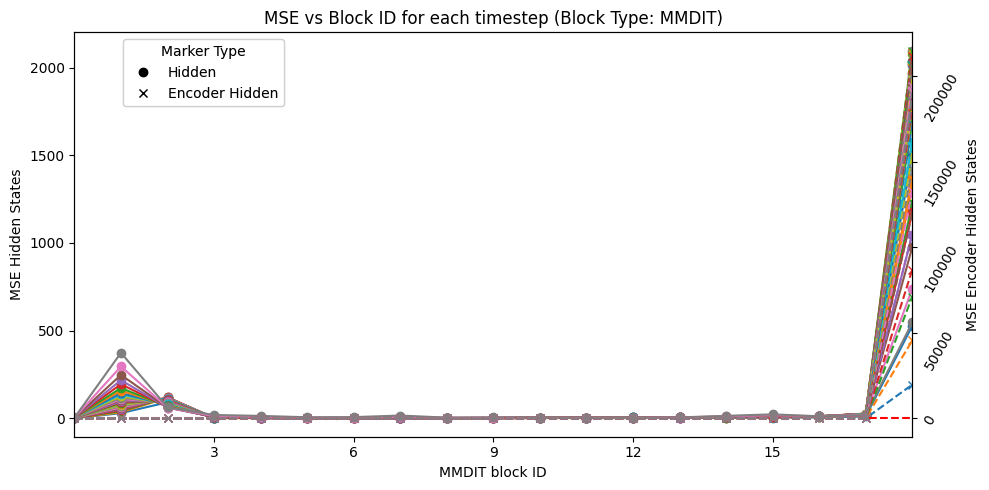

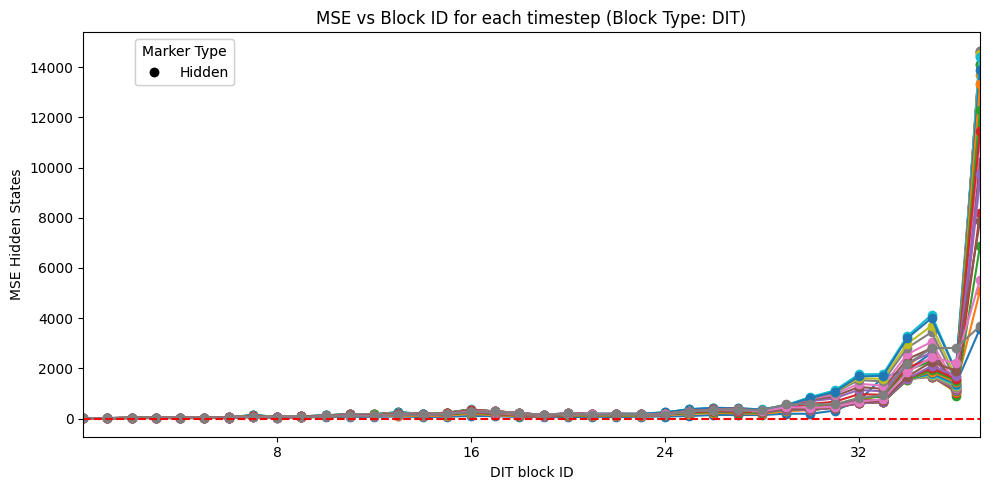

As other community members such as Ostris have pointed out, the blocks of the FLUX.1-dev transformer do not appear to contribute equally to the final image. To test this hypothesis, we measured the mean squared error (MSE) between the input and the output of each block. As the images show, the MSE varies considerably from block to block.
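The per-block measurement can be sketched with PyTorch forward hooks, which see each block's input and output. This is a minimal illustration on a hypothetical toy stack of residual blocks (`ToyBlock` and `per_block_mse` are illustrative names, not part of diffusers or of the authors' code). The real FLUX.1-dev blocks have different call signatures and outputs, so the hook would need to be adapted to pick out the hidden states.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ToyBlock(nn.Module):
    """Hypothetical residual block standing in for a transformer block."""

    def __init__(self, dim: int, scale: float):
        super().__init__()
        self.proj = nn.Linear(dim, dim)
        self.scale = scale  # controls how much the block changes its input

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + self.scale * self.proj(x)


def per_block_mse(blocks, x):
    """Run x through the blocks in order and record MSE(input, output) per block."""
    mses = []

    def hook(module, inputs, output):
        # inputs is the tuple of positional arguments; the hidden state is the first
        mses.append(F.mse_loss(output, inputs[0]).item())

    handles = [b.register_forward_hook(hook) for b in blocks]
    try:
        with torch.no_grad():
            for block in blocks:
                x = block(x)
    finally:
        for h in handles:
            h.remove()  # leave the model unmodified afterwards
    return mses


torch.manual_seed(0)
blocks = nn.ModuleList([ToyBlock(64, s) for s in (1.0, 0.5, 0.01, 0.2)])
scores = per_block_mse(blocks, torch.randn(8, 16, 64))
for i, s in enumerate(scores):
    print(f"block {i}: MSE = {s:.6f}")
```

Blocks with a low input/output MSE change the hidden state little and are natural candidates to inspect when deciding which blocks to remove.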

Furthermore, as the image shows, the model's performance is severely affected only when one of the first MMDiT blocks is skipped.
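A block-skipping ablation of this kind can be sketched on the same sort of hypothetical toy model: skip one block at a time (treating it as the identity) and measure how far the final output drifts from the full model. The toy blocks and the output-MSE metric are illustrative assumptions standing in for the image-quality comparison in the figures, not the authors' evaluation code.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ToyBlock(nn.Module):
    """Hypothetical residual block standing in for a transformer block."""

    def __init__(self, dim: int, scale: float):
        super().__init__()
        self.proj = nn.Linear(dim, dim)
        self.scale = scale

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + self.scale * self.proj(x)


def run(blocks, x, skip=None):
    """Apply the blocks in order; the block at index `skip` acts as the identity."""
    with torch.no_grad():
        for i, block in enumerate(blocks):
            if i == skip:
                continue
            x = block(x)
    return x


torch.manual_seed(0)
blocks = nn.ModuleList([ToyBlock(64, s) for s in (1.0, 0.5, 0.01, 0.2)])
x = torch.randn(8, 16, 64)
reference = run(blocks, x)

# Deviation of the final output from the full model when each block is skipped
impact = [F.mse_loss(run(blocks, x, skip=i), reference).item() for i in range(len(blocks))]
for i, v in enumerate(impact):
    print(f"skip block {i}: output MSE = {v:.6f}")
```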

Text-to-Image Usage

For best results, we recommend a guidance_scale of 3.5 and an n_steps value between 22 and 30.

```python
import torch
from diffusers import FluxPipeline

model_id = "Freepik/flux.1-lite-8B-alpha"
torch_dtype = torch.bfloat16
device = "cuda"

# Load the pipeline
pipe = FluxPipeline.from_pretrained(model_id, torch_dtype=torch_dtype).to(device)

# Inference
prompt = "A close-up image of a green alien with fluorescent skin in the middle of a dark purple forest"
guidance_scale = 3.5  # important: keep guidance_scale at 3.5
n_steps = 28  # recommended range: 22 to 30
seed = 11

with torch.inference_mode():
    image = pipe(
        prompt=prompt,
        generator=torch.Generator(device="cpu").manual_seed(seed),  # fixed seed for reproducibility
        num_inference_steps=n_steps,
        guidance_scale=guidance_scale,
        height=1024,
        width=1024,
    ).images[0]

image.save("output.png")
```

ComfyUI

We also provide a ComfyUI workflow in comfy/flux.1-lite_workflow.json.

Checkpoints

- flux.1-lite-8B-alpha.safetensors: transformer checkpoint in the original Flux format.
- transformers/: the distilled 8B transformer model in diffusers format.

Try our Hugging Face demo:

Flux.1 Lite demo hosted on 🤗: flux.1-lite

News 🔥🔥🔥

- Oct. 18, 2024: The alpha 8B checkpoint and a comparison demo 🤗 (Flux.1 Lite) are publicly available on Hugging Face.

Citation

If you find our work helpful, please cite it!

```bibtex
@article{flux1-lite,
  title={Flux.1 Lite: Distilling Flux1.dev for Efficient Text-to-Image Generation},
  author={Daniel Verdú and Javier Martín},
  year={2024},
}
```