license: other

license_name: bria-rmbg-1.4

license_link: https://bria.ai/bria-huggingface-model-license-agreement/

pipeline_tag: image-segmentation

tags:

- remove background

- background

- background-removal

- Pytorch

- vision

- legal liability

- transformers

extra_gated_prompt: >-

These model weights by BRIA AI can be obtained after a commercial license is

agreed upon. Fill in the form below and we will reach out to you.

extra_gated_fields:

Name: text

Company/Org name: text

Org Type (Early/Growth Startup, Enterprise, Academy): text

Role: text

Country: text

Email: text

By submitting this form, I agree to BRIA’s Privacy policy and Terms & conditions, see links below: checkbox

BRIA Background Removal v1.4 Model Card

RMBG v1.4 is our state-of-the-art background removal model, designed to effectively separate foreground from background across a range of categories and image types. The model was trained on a carefully selected dataset that includes general stock images, e-commerce, gaming, and advertising content, making it suitable for commercial use cases that power enterprise content creation at scale. Its accuracy, efficiency, and versatility currently rival those of leading source-available models. It is ideal where content safety, legally licensed datasets, and bias mitigation are paramount.

Developed by BRIA AI, RMBG v1.4 is released as a source-available model for non-commercial use.

Model Description

Developed by: BRIA AI

Model type: Background Removal

License: bria-rmbg-1.4

- The model is released under a Creative Commons license for non-commercial use.

- Commercial use is subject to a commercial agreement with BRIA. Contact Us for more information.

Model Description: BRIA RMBG 1.4 is a saliency segmentation model trained exclusively on a professional-grade dataset.

BRIA: Resources for more information: BRIA AI

Training data

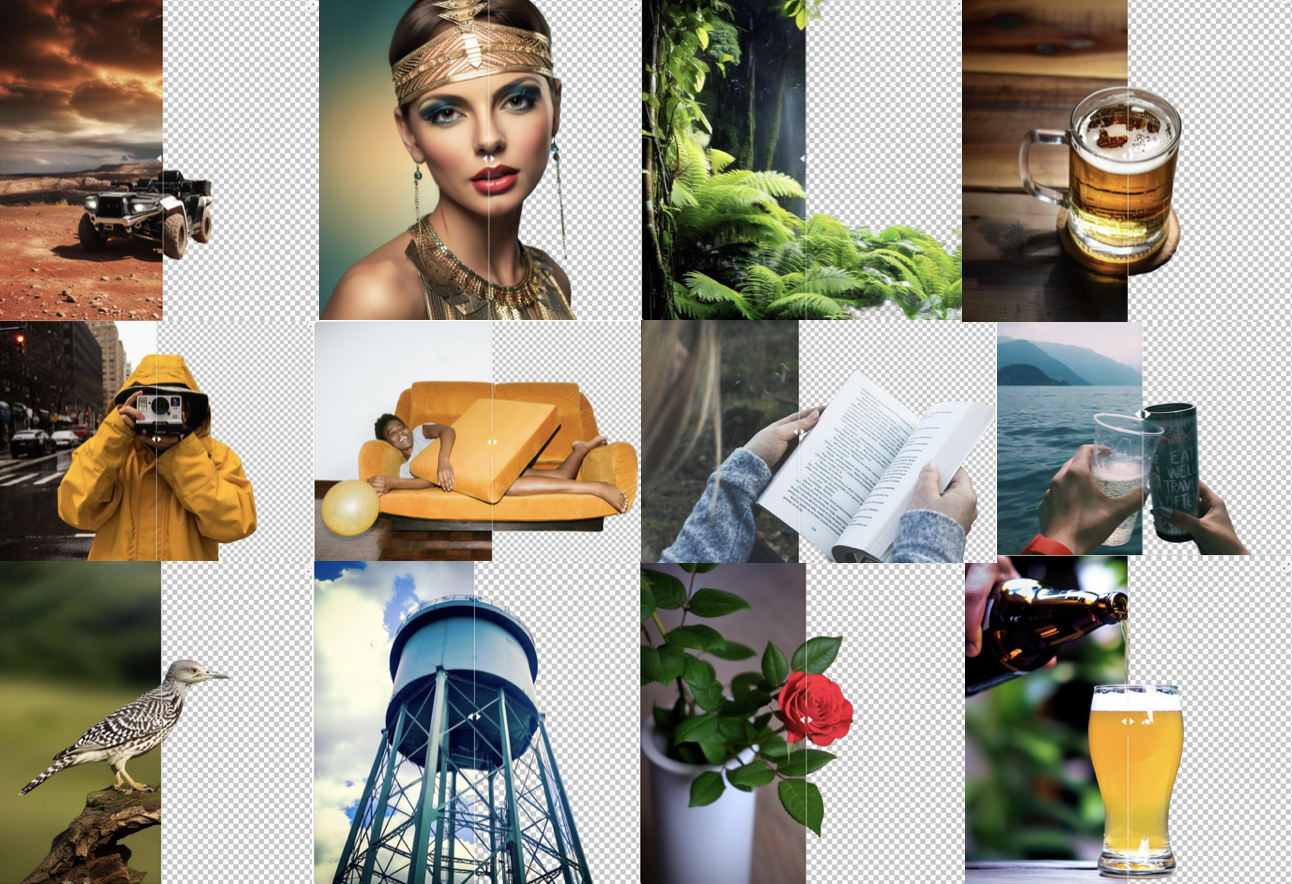

The Bria-RMBG model was trained on over 12,000 high-quality, high-resolution, manually labeled (pixel-wise accuracy), fully licensed images. Our benchmark included balanced gender, balanced ethnicity, and people with different types of disabilities. For clarity, we provide the distribution of our data across several categories, which demonstrates the model’s versatility.

Distribution of images:

| Category | Distribution |
|---|---|
| Objects only | 45.11% |
| People with objects/animals | 25.24% |
| People only | 17.35% |
| People/objects/animals with text | 8.52% |
| Text only | 2.52% |
| Animals only | 1.89% |

| Category | Distribution |
|---|---|
| Photorealistic | 87.70% |
| Non-Photorealistic | 12.30% |

| Category | Distribution |
|---|---|
| Non Solid Background | 52.05% |
| Solid Background | 47.95% |

| Category | Distribution |
|---|---|
| Single main foreground object | 51.42% |
| Multiple objects in the foreground | 48.58% |

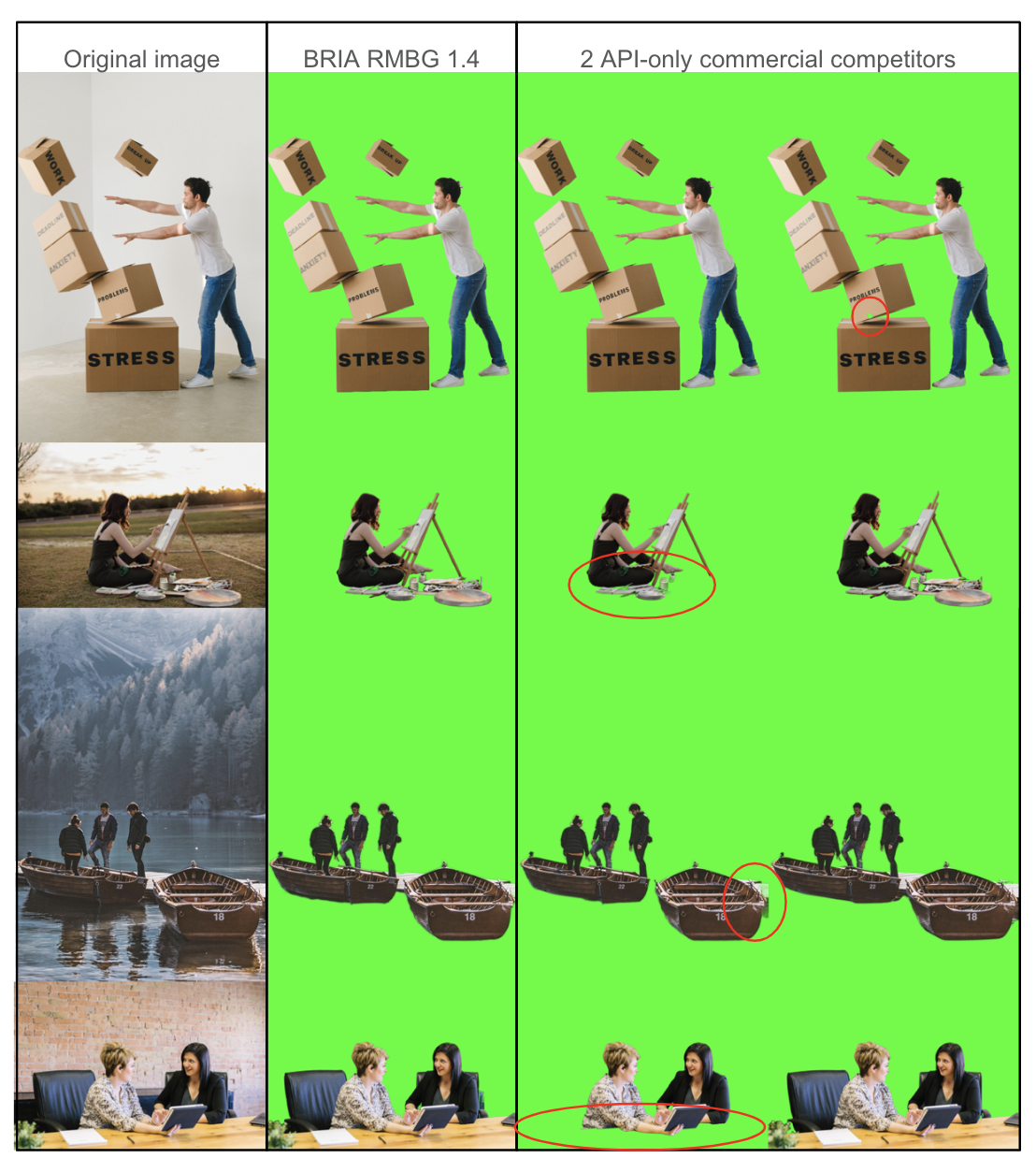

Qualitative Evaluation

Architecture

RMBG v1.4 is built on the IS-Net architecture, enhanced with our unique training scheme and proprietary dataset. These modifications significantly improve the model’s accuracy and effectiveness in diverse image-processing scenarios.

Installation

pip install -qr https://huggingface.co/briaai/RMBG-1.4/resolve/main/requirements.txt

Usage

Either load the pipeline

from transformers import pipeline

image_path = "https://farm5.staticflickr.com/4007/4322154488_997e69e4cf_z.jpg"
pipe = pipeline("image-segmentation", model="briaai/RMBG-1.4", trust_remote_code=True)
pillow_mask = pipe(image_path, return_mask=True)  # returns the mask as a Pillow image
pillow_image = pipe(image_path)  # applies the mask to the input and returns a Pillow image
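
Once a mask is available, a common next step is to place the cutout on a new background. The following is a minimal sketch of that step using only Pillow, not part of the RMBG API: `composite_on_background` is a hypothetical helper name, and the tiny synthetic image and mask stand in for a real input image and the `pillow_mask` returned by the pipeline.

```python
from PIL import Image


def composite_on_background(image, mask, color=(255, 255, 255)):
    """Paste `image` onto a solid `color` canvas, using `mask` as per-pixel opacity."""
    # Pillow expects a single-channel mask with the same size as the pasted image.
    mask = mask.convert("L").resize(image.size)
    canvas = Image.new("RGB", image.size, color)
    canvas.paste(image.convert("RGB"), (0, 0), mask)
    return canvas


# Synthetic stand-ins (assumption): a red 4x4 image and a mask
# whose left half is fully opaque and right half fully transparent.
image = Image.new("RGB", (4, 4), (255, 0, 0))
mask = Image.new("L", (4, 4), 0)
mask.paste(255, (0, 0, 2, 4))

result = composite_on_background(image, mask)
print(result.getpixel((0, 0)))  # foreground pixel keeps the image colour
print(result.getpixel((3, 0)))  # background pixel takes the canvas colour
```

With real data, the same helper could be called as `composite_on_background(Image.open("input.jpg"), pillow_mask)`, where `input.jpg` is an illustrative local file name.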

Or load the model

import numpy as np
import torch
import torch.nn.functional as F
from PIL import Image
from skimage import io
from torchvision.transforms.functional import normalize
from transformers import AutoModelForImageSegmentation

model = AutoModelForImageSegmentation.from_pretrained("briaai/RMBG-1.4", trust_remote_code=True)

def preprocess_image(im: np.ndarray, model_input_size: list) -> torch.Tensor:
    # give grayscale images an explicit channel axis
    if len(im.shape) < 3:
        im = im[:, :, np.newaxis]
    # HWC -> CHW, add a batch dimension, and resize to the model input size
    im_tensor = torch.tensor(im, dtype=torch.float32).permute(2, 0, 1)
    im_tensor = F.interpolate(torch.unsqueeze(im_tensor, 0), size=model_input_size, mode="bilinear")
    # scale to [0, 1], then shift to [-0.5, 0.5]
    image = torch.divide(im_tensor, 255.0)
    image = normalize(image, [0.5, 0.5, 0.5], [1.0, 1.0, 1.0])
    return image

def postprocess_image(result: torch.Tensor, im_size: list) -> np.ndarray:
    # resize the prediction back to the original image size
    result = torch.squeeze(F.interpolate(result, size=im_size, mode="bilinear"), 0)
    # min-max rescale to [0, 1], then convert to an 8-bit mask
    ma = torch.max(result)
    mi = torch.min(result)
    result = (result - mi) / (ma - mi)
    im_array = (result * 255).permute(1, 2, 0).cpu().data.numpy().astype(np.uint8)
    im_array = np.squeeze(im_array)
    return im_array

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model.to(device)

# prepare input
model_input_size = [1024, 1024]  # assumed input resolution; the original snippet left this undefined
image_path = "https://farm5.staticflickr.com/4007/4322154488_997e69e4cf_z.jpg"
orig_im = io.imread(image_path)
orig_im_size = orig_im.shape[0:2]
image = preprocess_image(orig_im, model_input_size).to(device)

# inference
result = model(image)

# post process
result_image = postprocess_image(result[0][0], orig_im_size)

# apply the mask: paste the original image onto a transparent canvas
pil_im = Image.fromarray(result_image)
no_bg_image = Image.new("RGBA", pil_im.size, (0, 0, 0, 0))
orig_image = Image.fromarray(orig_im)  # reuse the loaded array; Image.open cannot read a URL
no_bg_image.paste(orig_image, mask=pil_im)
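
The post-processing step above rescales the raw prediction with a min-max normalization, which divides by zero when the prediction is constant (for example, an entirely blank input). The sketch below illustrates the same rescaling in NumPy with a guard for that case. It is an illustration under stated assumptions, not the model's own code: `mask_to_uint8` and the `eps` threshold are hypothetical, and returning an all-zero mask for a constant prediction is one possible choice among several.

```python
import numpy as np


def mask_to_uint8(pred, eps=1e-8):
    """Min-max rescale a float prediction to an 8-bit mask in [0, 255]."""
    pred = np.asarray(pred, dtype=np.float32)
    lo, hi = pred.min(), pred.max()
    span = hi - lo
    if span < eps:
        # Constant prediction: there is no contrast to stretch.
        # Assumption: treat everything as background (all zeros).
        return np.zeros(pred.shape, dtype=np.uint8)
    scaled = (pred - lo) / span  # now in [0, 1]
    return (scaled * 255).astype(np.uint8)


# Toy prediction standing in for the model output
pred = np.array([[0.2, 0.4], [0.6, 1.0]], dtype=np.float32)
mask = mask_to_uint8(pred)
flat = mask_to_uint8(np.full((2, 2), 0.5))

print(mask.min(), mask.max())  # smallest value maps to 0, largest to 255
print(flat)                    # constant input yields an all-zero mask
```

The same guard could be added to `postprocess_image` by checking `ma - mi` before the division.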