url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | user | labels | state | locked | assignee | assignees | milestone | comments | created_at | updated_at | closed_at | author_association | active_lock_reason | draft | pull_request | body | reactions | timeline_url | performed_via_github_app | state_reason | is_pull_request |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3649 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3649/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3649/comments | https://api.github.com/repos/huggingface/datasets/issues/3649/events | https://github.com/huggingface/datasets/issues/3649 | 1,117,502,250 | I_kwDODunzps5Cm7sq | 3,649 | Add IGLUE dataset | {

"login": "lewtun",

"id": 26859204,

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lewtun",

"html_url": "https://github.com/lewtun",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"repos_url": "https://api.github.com/users/lewtun/repos",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

},

{

"id": 3608944167,

"node_id": "LA_kwDODunzps7XHB4n",

"url": "https://api.github.com/repos/huggingface/datasets/labels/multimodal",

"name": "multimodal",

"color": "19E633",

"default": false,

"description": "Multimodal datasets"

}

] | open | false | null | [] | null | [] | 2022-01-28T14:59:41 | 2022-01-28T15:02:35 | null | MEMBER | null | null | null | ## Adding a Dataset

- **Name:** IGLUE

- **Description:** IGLUE brings together 4 vision-and-language tasks across 20 languages (Twitter [thread](https://twitter.com/ebugliarello/status/1487045497583976455?s=20&t=SB4LZGDhhkUW83ugcX_m5w))

- **Paper:** https://arxiv.org/abs/2201.11732

- **Data:** https://github.com/e-bug/iglue

- **Motivation:** This dataset would provide a nice example of combining the text and image features of `datasets` together for multimodal applications.

Note: the data / code are not yet visible on the GitHub repo, so I've pinged the authors for more information.

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3649/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3649/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3648 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3648/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3648/comments | https://api.github.com/repos/huggingface/datasets/issues/3648/events | https://github.com/huggingface/datasets/pull/3648 | 1,117,465,505 | PR_kwDODunzps4xvXig | 3,648 | Fix Windows CI: bump python to 3.7 | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-01-28T14:24:54 | 2022-01-28T14:40:39 | 2022-01-28T14:40:39 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3648",

"html_url": "https://github.com/huggingface/datasets/pull/3648",

"diff_url": "https://github.com/huggingface/datasets/pull/3648.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3648.patch",

"merged_at": "2022-01-28T14:40:39"

} | Python>=3.7 is needed to install `tokenizers` 0.11 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3648/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3648/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3647 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3647/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3647/comments | https://api.github.com/repos/huggingface/datasets/issues/3647/events | https://github.com/huggingface/datasets/pull/3647 | 1,117,383,675 | PR_kwDODunzps4xvGDQ | 3,647 | Fix `add_column` on datasets with indices mapping | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Sure, let's include this in today's release.",

"Cool! The Windows CI should be fixed on master now, feel free to merge :)"

] | 2022-01-28T13:06:29 | 2022-01-28T15:35:58 | 2022-01-28T15:35:58 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3647",

"html_url": "https://github.com/huggingface/datasets/pull/3647",

"diff_url": "https://github.com/huggingface/datasets/pull/3647.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3647.patch",

"merged_at": "2022-01-28T15:35:57"

} | My initial idea was to avoid the `flatten_indices` call and reorder a new column instead, but in the end I decided to follow `concatenate_datasets` and use `flatten_indices` to avoid padding when `dataset._indices.num_rows != dataset._data.num_rows`.
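A pure-Python model of why flattening first avoids padding. Plain dicts of lists stand in for Arrow tables, and `flatten_indices` / `add_column` here are hypothetical stand-ins, not the `datasets` implementation. The new column must line up with the rows the user sees (the indices mapping), not with the underlying table, so the rows are materialized first and the column is attached afterwards:

```python
from typing import Any, Dict, List, Sequence

Table = Dict[str, List[Any]]


def flatten_indices(data: Table, indices: Sequence[int]) -> Table:
    # Materialize the rows the indices mapping points to, in mapping order.
    return {name: [column[i] for i in indices] for name, column in data.items()}


def add_column(data: Table, indices: Sequence[int], name: str, values: Sequence[Any]) -> Table:
    # The new column has one value per *visible* row (len(indices)), which can
    # differ from the underlying table length, so flatten first, then attach it.
    if len(values) != len(indices):
        raise ValueError(f"expected {len(indices)} values, got {len(values)}")
    flat = flatten_indices(data, indices)
    flat[name] = list(values)
    return flat


data = {"text": ["a", "b", "c", "d"]}  # underlying table: 4 rows
indices = [3, 1]                       # e.g. after a select/shuffle: 2 visible rows
print(add_column(data, indices, "label", [0, 1]))  # {'text': ['d', 'b'], 'label': [0, 1]}
```

Reordering the new column into the underlying table's order instead would require filler values for the rows that the mapping does not select.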

Fix #3599 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3647/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3647/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3646 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3646/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3646/comments | https://api.github.com/repos/huggingface/datasets/issues/3646/events | https://github.com/huggingface/datasets/pull/3646 | 1,116,544,627 | PR_kwDODunzps4xsX66 | 3,646 | Fix streaming datasets that are not reset correctly | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Works smoothly with the `transformers.Trainer` class now, thank you!"

] | 2022-01-27T17:21:02 | 2022-01-28T16:34:29 | 2022-01-28T16:34:28 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3646",

"html_url": "https://github.com/huggingface/datasets/pull/3646",

"diff_url": "https://github.com/huggingface/datasets/pull/3646.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3646.patch",

"merged_at": "2022-01-28T16:34:28"

} | Streaming datasets that use `StreamingDownloadManager.iter_archive` and `StreamingDownloadManager.iter_files` had an issue: if you iterate over such a dataset twice, the second iteration is empty.

This is because the two methods above are generator functions, so the iterator they return is exhausted after the first pass. I fixed this by making them return iterables that are reset properly each time they are iterated over.
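A minimal sketch of the difference, using a hypothetical `iter_items_generator` function and a hypothetical `ReusableIterable` wrapper (neither is the actual `datasets` code). A generator object can only be consumed once, while an iterable whose `__iter__` builds a fresh generator restarts on every `for` loop:

```python
from typing import Any, Callable, Iterator, List


def iter_items_generator(items: List[str]) -> Iterator[str]:
    # Generator function: each call returns a single-use iterator.
    for item in items:
        yield item


class ReusableIterable:
    """Wraps a generator function so that every iteration starts from scratch."""

    def __init__(self, generator_fn: Callable[..., Iterator[Any]], *args: Any, **kwargs: Any):
        self.generator_fn = generator_fn
        self.args = args
        self.kwargs = kwargs

    def __iter__(self) -> Iterator[Any]:
        # A new generator is created each time iteration begins.
        return self.generator_fn(*self.args, **self.kwargs)


files = ["a.txt", "b.txt", "c.txt"]  # hypothetical file names
gen = iter_items_generator(files)
print(len(list(gen)), len(list(gen)))  # 3 0

reusable = ReusableIterable(iter_items_generator, files)
print(len(list(reusable)), len(list(reusable)))  # 3 3
```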

Close https://github.com/huggingface/datasets/issues/3645

cc @anton-l | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3646/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3646/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3645 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3645/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3645/comments | https://api.github.com/repos/huggingface/datasets/issues/3645/events | https://github.com/huggingface/datasets/issues/3645 | 1,116,541,298 | I_kwDODunzps5CjRFy | 3,645 | Streaming dataset based on dl_manager.iter_archive/iter_files are not reset correctly | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

}

] | null | [] | 2022-01-27T17:17:41 | 2022-01-28T16:34:28 | 2022-01-28T16:34:28 | MEMBER | null | null | null | Hi! After iterating over a streaming dataset once, it is not reset correctly because of some issues with `dl_manager.iter_archive` and `dl_manager.iter_files`: they are generator functions, so the iterator they return can be exhausted. They should be iterables instead, so that they are reset every time we run a new for loop over them:

```python
from datasets import load_dataset

d = load_dataset("common_voice", "ab", split="test", streaming=True)

i = 0
for i, _ in enumerate(d):
    pass

print(i)  # 8

# let's do it again
i = 0
for i, _ in enumerate(d):
    pass

print(i)  # 0
``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3645/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3645/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3644 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3644/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3644/comments | https://api.github.com/repos/huggingface/datasets/issues/3644/events | https://github.com/huggingface/datasets/issues/3644 | 1,116,519,670 | I_kwDODunzps5CjLz2 | 3,644 | Add a GROUP BY operator | {

"login": "felix-schneider",

"id": 208336,

"node_id": "MDQ6VXNlcjIwODMzNg==",

"avatar_url": "https://avatars.githubusercontent.com/u/208336?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/felix-schneider",

"html_url": "https://github.com/felix-schneider",

"followers_url": "https://api.github.com/users/felix-schneider/followers",

"following_url": "https://api.github.com/users/felix-schneider/following{/other_user}",

"gists_url": "https://api.github.com/users/felix-schneider/gists{/gist_id}",

"starred_url": "https://api.github.com/users/felix-schneider/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/felix-schneider/subscriptions",

"organizations_url": "https://api.github.com/users/felix-schneider/orgs",

"repos_url": "https://api.github.com/users/felix-schneider/repos",

"events_url": "https://api.github.com/users/felix-schneider/events{/privacy}",

"received_events_url": "https://api.github.com/users/felix-schneider/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [

"Hi! At the moment you can use `to_pandas()` to get a pandas DataFrame that supports `groupby` operations (make sure your dataset fits in memory though).\r\n\r\nWe use Arrow as a back-end for `datasets`, and unfortunately it doesn't have a native group by (see https://github.com/apache/arrow/issues/2189).\r\n\r\nI just drafted what it could look like to have `group_by` in `datasets`:\r\n```python\r\nfrom datasets import concatenate_datasets\r\n\r\ndef group_by(d, col, join):\r\n    \"\"\"from: https://github.com/huggingface/datasets/issues/3644\"\"\"\r\n    # Get the indices of each group\r\n    groups = {key: [] for key in d.unique(col)}\r\n    def create_groups_indices(key, i):\r\n        groups[key].append(i)\r\n    d.map(create_groups_indices, with_indices=True, input_columns=col)\r\n    # Get one dataset object per group\r\n    groups = {key: d.select(indices) for key, indices in groups.items()}\r\n    # Apply join function\r\n    groups = {\r\n        key: dataset_group.map(join, batched=True, batch_size=len(dataset_group), remove_columns=d.column_names)\r\n        for key, dataset_group in groups.items()\r\n    }\r\n    # Return concatenation of all the joined groups\r\n    return concatenate_datasets(list(groups.values()))\r\n```\r\n\r\nexample of usage:\r\n```python\r\nfrom datasets import Dataset\r\n\r\ndef join(batch):\r\n    # take the batch of all the examples of a group, and return a batch with one aggregated example\r\n    # (we could aggregate examples into several rows instead of one, if you want)\r\n    return {\"total\": [batch[\"i\"]]}\r\n\r\nd = Dataset.from_dict({\r\n    \"i\": [i for i in range(50)],\r\n    \"group_key\": [i % 4 for i in range(50)],\r\n})\r\nprint(group_by(d, \"group_key\", join))\r\n# total\r\n# 0 [0, 4, 8, 12, 16, 20, 24, 28, 32, 36, 40, 44, 48]\r\n# 1 [1, 5, 9, 13, 17, 21, 25, 29, 33, 37, 41, 45, 49]\r\n# 2 [2, 6, 10, 14, 18, 22, 26, 30, 34, 38, 42, 46]\r\n# 3 [3, 7, 11, 15, 19, 23, 27, 31, 35, 39, 43, 47]\r\n```\r\n\r\nLet me know if that helps!\r\n\r\ncc @albertvillanova @mariosasko for visibility",

"@lhoestq As of PyArrow 7.0.0, `pa.Table` has the [`group_by` method](https://arrow.apache.org/docs/python/generated/pyarrow.Table.html#pyarrow.Table.group_by), so we should also consider using that function for grouping. ",

"Any update on this?",

"You can use https://github.com/mariosasko/datasets_sql by @mariosasko to do group by operations using SQL queries",

"Hi, I have a similar issue as OP but the suggested solutions do not work for my case. Basically, I process documents through a model to extract the last_hidden_state, using the \"map\" method on a Dataset object, but would like to average the result over a categorical column at the end (i.e. groupby this column).\r\n- A to_pandas() saturates the memory, although it gives me the desired result through a .groupby().apply(np.mean, axis=0) on a smaller use-case,\r\n- The solution posted on Feb 4 is much too slow,\r\n- datasets_sql seems to not like the fact that I'm averaging np.arrays.\r\nSo I'm kinda out of \"non brute force\" options... Any help appreciated",

"> Hi, I have a similar issue as OP but the suggested solutions do not work for my case. Basically, I process documents through a model to extract the last_hidden_state, using the \"map\" method on a Dataset object, but would like to average the result over a categorical column at the end (i.e. groupby this column).\r\n \r\nIf you haven't yet, you could explore using [Polars](https://www.pola.rs/) for this. It's a new DataFrame library written in Rust with Python bindings. It is Pandas-like in many ways, but does have some biggish differences in syntax/approach, so it's definitely not a drop-in replacement. \r\n\r\nPolars also uses Arrow as a backend but also supports out-of-memory operations; in this case, it's probably easiest to write out your dataset to parquet and then use Polars' `scan_parquet` method (this will lazily read from the parquet file). The thing you get back from that is a `LazyFrame`, i.e. nothing is loaded into memory until you specify a query and call the `collect` method. \r\n\r\nExample below of doing a groupby on a dataset which definitely wouldn't fit into memory on my machine:\r\n\r\n```python\r\nfrom datasets import load_dataset\r\nimport polars as pl\r\n\r\nds = load_dataset(\"blbooks\")\r\nds['train'].to_parquet(\"test.parquet\")\r\ndf = pl.scan_parquet(\"test.parquet\")\r\ndf.groupby('date').agg([pl.count()]).collect()\r\n```\r\n\r\n>datasets_sql seems to not like the fact that I'm averaging np.arrays.\r\n\r\nI am not certain how Polars will handle this either. It does have NumPy support (https://pola-rs.github.io/polars-book/user-guide/howcani/interop/numpy.html), but I assume Polars will need at least enough memory for each group you want to average over, so you may still end up needing more memory depending on the size of your dataset/groups. \r\n\r\n\r\n",

"Hi @davanstrien, thanks a lot, I didn't know about this library and the answer works! I need to try it on the full dataset now, but I'm hopeful. Here's what my code looks like:\r\n```python\r\nlist_size = 768\r\ndf.groupby(\"date\").agg(\r\n    pl.concat_list(\r\n        [\r\n            pl.col(\"hidden_state\")\r\n            .arr.slice(n, 1)\r\n            .arr.first()\r\n            .mean()\r\n            for n in range(0, list_size)\r\n        ]\r\n    )\r\n).collect()\r\n```\r\n\r\nFor some reason, the following code was giving me a \"mean() got unexpected argument 'axis'\" error:\r\n```python\r\ndf2 = df.groupby('date').agg(\r\n    pl.col(\"hidden_state\").map(np.mean).alias(\"average_hidden_state\")\r\n).collect()\r\n```\r\n\r\nEDIT: The solution works on my large dataset: it does not run out of memory and the runtime is reasonable. Thanks a lot again!",

"@jeremylhour glad this worked for you :) ",

"I find this functionality missing in my workflow as well and the workarounds with SQL and Polars unsatisfying. Since PyArrow has exposed this functionality, I hope this soon makes it into a release. (:"

] | 2022-01-27T16:57:54 | 2023-03-14T14:45:59 | null | NONE | null | null | null | **Is your feature request related to a problem? Please describe.**

Using batch mapping, we can easily split examples. However, we lack an appropriate option for merging them back together by some key. Consider this example:

```python
# features:
# {
#     "example_id": datasets.Value("int32"),
#     "text": datasets.Value("string")
# }
ds = ...  # placeholder: a datasets.Dataset with the features above

def split(examples):
    sentences = [text.split(".") for text in examples["text"]]
    return {
        "example_id": [
            example_id
            for example_id, sents in zip(examples["example_id"], sentences)
            for _ in sents
        ],
        "sentence": [sent for sents in sentences for sent in sents],
        "sentence_id": [i for sents in sentences for i in range(len(sents))],
    }

# the output has more rows than the input, so the original columns must be removed
split_ds = ds.map(split, batched=True, remove_columns=ds.column_names)

def process(examples):
    outputs = some_neural_network_that_works_on_sentences(examples["sentence"])
    return {"outputs": outputs}

split_ds = split_ds.map(process, batched=True)
```

I have a dataset consisting of texts that I would like to process sentence by sentence in a batched way. Afterwards, I would like to put it back together as it was, merging the outputs together.

**Describe the solution you'd like**

Ideally, it would look something like this:

```python
import numpy as np

def join(examples):
    order = np.argsort(examples["sentence_id"])
    text = ".".join(examples["sentence"][i] for i in order)
    outputs = [examples["outputs"][i] for i in order]
    return {"text": text, "outputs": outputs}

# proposed API: `group_by` does not exist in `datasets` yet
ds = split_ds.group_by("example_id", join)
```
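To make the requested semantics concrete, here is a minimal in-memory sketch. It assumes the data fits in memory as a plain columnar dict (the same shape as a batch passed to `map`); `group_by` here is a hypothetical helper, not a `datasets` API, and the `join` below uses `sorted` instead of `np.argsort` only to avoid a NumPy dependency. The toy values are made up for illustration:

```python
from typing import Any, Callable, Dict, List

Batch = Dict[str, List[Any]]


def group_by(batch: Batch, key: str, join: Callable[[Batch], Dict[str, Any]]) -> Batch:
    """Hypothetical in-memory group_by over a columnar batch.

    Rows sharing the same value in `key` are gathered into one sub-batch,
    `join` turns each sub-batch into a single row, and the rows are returned
    as a new columnar batch (groups in order of first appearance).
    """
    # Map each key value to the row indices that carry it.
    groups: Dict[Any, List[int]] = {}
    for row, value in enumerate(batch[key]):
        groups.setdefault(value, []).append(row)

    merged: Batch = {}
    for value, rows in groups.items():
        sub_batch = {name: [column[i] for i in rows] for name, column in batch.items()}
        joined = join(sub_batch)
        joined.setdefault(key, value)  # keep the grouping key in the output
        for name, item in joined.items():
            merged.setdefault(name, []).append(item)
    return merged


def join(examples: Batch) -> Dict[str, Any]:
    # Restore sentence order, then rebuild the text and collect the outputs.
    ids = examples["sentence_id"]
    order = sorted(range(len(ids)), key=ids.__getitem__)
    return {
        "text": ".".join(examples["sentence"][i] for i in order),
        "outputs": [examples["outputs"][i] for i in order],
    }


# Toy split dataset: texts "A.B" (example 0) and "C.D" (example 1), rows shuffled.
split_batch = {
    "example_id": [1, 0, 0, 1],
    "sentence_id": [1, 1, 0, 0],
    "sentence": ["D", "B", "A", "C"],
    "outputs": [4, 2, 1, 3],
}
print(group_by(split_batch, "example_id", join))
# {'text': ['C.D', 'A.B'], 'outputs': [[3, 4], [1, 2]], 'example_id': [1, 0]}
```

Because grouping happens after processing, the model can run over the flat sentence rows with any batch size, and each `join` call still sees every row of one group at once.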

**Describe alternatives you've considered**

Right now, we can do this:

```python
def merge(example):
    example_id = example["example_id"]
    # gather all sentences of this example and restore their order
    parts = split_ds.filter(lambda x: x["example_id"] == example_id).sort("sentence_id")
    return {"outputs": list(parts["outputs"])}

ds = ds.map(merge)
```

Of course, we could process the dataset like this:

```python
def process(example):
    # one example per call: its sentences form the model's batch
    outputs = some_neural_network_that_works_on_sentences(example["text"].split("."))
    return {"outputs": outputs}

ds = ds.map(process)
```

However, that ties the model's batch size to the number of sentences in each example, which can make very inefficient use of resources when the desired batch size is much larger than the number of sentences in one example.

I would very much appreciate some kind of group by operator to merge examples based on the value of one column.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3644/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3644/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3643 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3643/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3643/comments | https://api.github.com/repos/huggingface/datasets/issues/3643/events | https://github.com/huggingface/datasets/pull/3643 | 1,116,417,428 | PR_kwDODunzps4xr8mX | 3,643 | Fix sem_eval_2018_task_1 download location | {

"login": "maxpel",

"id": 31095360,

"node_id": "MDQ6VXNlcjMxMDk1MzYw",

"avatar_url": "https://avatars.githubusercontent.com/u/31095360?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/maxpel",

"html_url": "https://github.com/maxpel",

"followers_url": "https://api.github.com/users/maxpel/followers",

"following_url": "https://api.github.com/users/maxpel/following{/other_user}",

"gists_url": "https://api.github.com/users/maxpel/gists{/gist_id}",

"starred_url": "https://api.github.com/users/maxpel/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/maxpel/subscriptions",

"organizations_url": "https://api.github.com/users/maxpel/orgs",

"repos_url": "https://api.github.com/users/maxpel/repos",

"events_url": "https://api.github.com/users/maxpel/events{/privacy}",

"received_events_url": "https://api.github.com/users/maxpel/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"I fixed those two things; the two remaining failing checks seem to be due to a dependency missing in the tests."

] | 2022-01-27T15:45:00 | 2022-02-04T15:15:26 | 2022-02-04T15:15:26 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3643",

"html_url": "https://github.com/huggingface/datasets/pull/3643",

"diff_url": "https://github.com/huggingface/datasets/pull/3643.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3643.patch",

"merged_at": "2022-02-04T15:15:26"

} | As discussed with @lhoestq in https://github.com/huggingface/datasets/issues/3549#issuecomment-1020176931, this is the new pull request to fix the download location. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3643/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3643/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3642 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3642/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3642/comments | https://api.github.com/repos/huggingface/datasets/issues/3642/events | https://github.com/huggingface/datasets/pull/3642 | 1,116,306,986 | PR_kwDODunzps4xrj2S | 3,642 | Fix dataset slicing with negative bounds when indices mapping is not `None` | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-01-27T14:45:53 | 2022-01-27T18:16:23 | 2022-01-27T18:16:22 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3642",

"html_url": "https://github.com/huggingface/datasets/pull/3642",

"diff_url": "https://github.com/huggingface/datasets/pull/3642.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3642.patch",

"merged_at": "2022-01-27T18:16:22"

} | Fix #3611 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3642/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3642/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3641 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3641/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3641/comments | https://api.github.com/repos/huggingface/datasets/issues/3641/events | https://github.com/huggingface/datasets/pull/3641 | 1,116,284,268 | PR_kwDODunzps4xre7C | 3,641 | Fix numpy rngs when seed is None | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-01-27T14:29:09 | 2022-01-27T18:16:08 | 2022-01-27T18:16:07 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3641",

"html_url": "https://github.com/huggingface/datasets/pull/3641",

"diff_url": "https://github.com/huggingface/datasets/pull/3641.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3641.patch",

"merged_at": "2022-01-27T18:16:07"

} | Fixes the NumPy RNG when `seed` is `None`.

The problem becomes obvious after reading the NumPy notes on the MT19937 state (as returned by `np.random.get_state()`):

> The MT19937 state vector consists of a 624-element array of 32-bit unsigned integers plus a single integer value between 0 and 624 that indexes the current position within the main array.

`The MT19937 state vector`: this is the array we currently index to get the seed, but the indexed value stays the same across multiple calls.

`plus a single integer value`: this is the `pos` value used in this PR. It equals 624 right after the seed is set to a fixed value with `np.random.seed`, in which case we take the first value of the state array returned by `np.random.get_state()` (see https://stackoverflow.com/questions/32172054/how-can-i-retrieve-the-current-seed-of-numpys-random-number-generator).

NumPy notes: https://numpy.org/doc/stable/reference/random/bit_generators/mt19937.html
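The approach described above can be sketched as follows. This is a minimal illustration that assumes the seed is read from NumPy's legacy global `RandomState`; `seed_from_global_state` is a hypothetical name, not necessarily the helper used in this PR.

```python
import numpy as np

# The legacy MT19937 state vector holds 624 32-bit words
MT19937_STATE_LEN = 624


def seed_from_global_state():
    """Derive a fresh seed from NumPy's global RNG (hypothetical helper)."""
    # get_state() returns ('MT19937', keys, pos, has_gauss, cached_gaussian)
    _, keys, pos, *_ = np.random.get_state()
    # Right after np.random.seed(...), pos == 624, which is not a valid index,
    # so fall back to the first word of the state vector
    seed = int(keys[pos]) if pos < MT19937_STATE_LEN else int(keys[0])
    # Advance the global RNG so the next call reads a different word
    np.random.random()
    return seed
```

Reading `keys[0]` alone is not enough: that word only changes when all 624 words have been consumed and the state is regenerated, so consecutive calls would keep returning the same seed.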

Fix #3634 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3641/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3641/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3640 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3640/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3640/comments | https://api.github.com/repos/huggingface/datasets/issues/3640/events | https://github.com/huggingface/datasets/issues/3640 | 1,116,133,769 | I_kwDODunzps5ChtmJ | 3,640 | Issues with custom dataset in Wav2Vec2 | {

"login": "peregilk",

"id": 9079808,

"node_id": "MDQ6VXNlcjkwNzk4MDg=",

"avatar_url": "https://avatars.githubusercontent.com/u/9079808?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/peregilk",

"html_url": "https://github.com/peregilk",

"followers_url": "https://api.github.com/users/peregilk/followers",

"following_url": "https://api.github.com/users/peregilk/following{/other_user}",

"gists_url": "https://api.github.com/users/peregilk/gists{/gist_id}",

"starred_url": "https://api.github.com/users/peregilk/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/peregilk/subscriptions",

"organizations_url": "https://api.github.com/users/peregilk/orgs",

"repos_url": "https://api.github.com/users/peregilk/repos",

"events_url": "https://api.github.com/users/peregilk/events{/privacy}",

"received_events_url": "https://api.github.com/users/peregilk/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [] | null | [

"Closed and moved to transformers."

] | 2022-01-27T12:09:05 | 2022-01-27T12:29:48 | 2022-01-27T12:29:48 | NONE | null | null | null | We are training Vav2Vec using the run_speech_recognition_ctc_bnb.py-script.

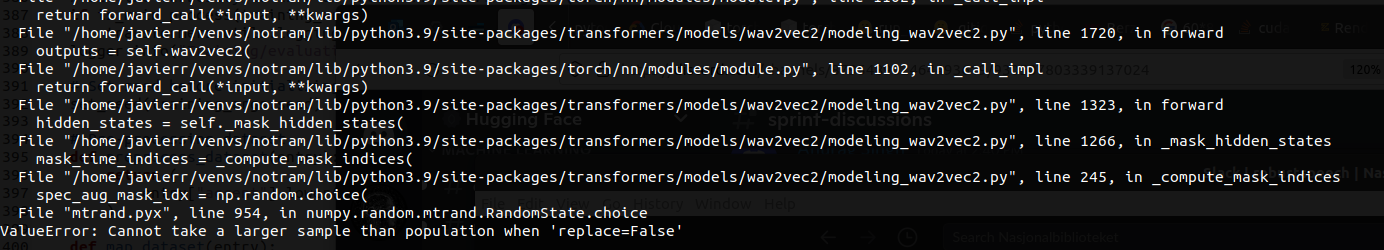

This is working fine with Common Voice; however, with our custom dataset and data loader at [NbAiLab/NPSC](https://huggingface.co/datasets/NbAiLab/NPSC), it crashes after roughly 1 epoch with the following stack trace:

We are able to work around the issue, for instance by adding this check at line 222 of `transformers/models/wav2vec2/modeling_wav2vec2.py`:

```python
# Never request more spans than there are valid start positions
if input_length - (mask_length - 1) < num_masked_span:
    num_masked_span = input_length - (mask_length - 1)
```

Interestingly, these are the variable values before the adjustment:

```
input_length=10
mask_length=10
num_masked_span=2
```

After adjusting `num_masked_span` to 1, the training script runs. The issue is also fixed by setting `replace=True` in the same function.

Do you have any idea what is causing this, and how to fix this error permanently? If you do not think this is a Datasets issue, feel free to move the issue.
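The `replace=True` observation suggests the crash comes from drawing span start positions without replacement: with `input_length=10` and `mask_length=10` there is only `10 - (10 - 1) = 1` valid start position, so two spans cannot be drawn. A minimal sketch of the clamping workaround, assuming the masking code samples start indices with NumPy's `choice(..., replace=False)`; `sample_span_starts` is a hypothetical helper, not the actual `transformers` function.

```python
import numpy as np


def sample_span_starts(input_length, mask_length, num_masked_span, rng=None):
    """Draw distinct start indices for masked spans (hypothetical helper)."""
    if rng is None:
        rng = np.random.default_rng()
    # Start positions at which a span of `mask_length` still fits in the input
    num_valid_starts = input_length - (mask_length - 1)
    if num_valid_starts <= 0:
        return np.array([], dtype=np.int64)
    # Sampling without replacement cannot draw more items than exist,
    # so clamp the span count first (the workaround from this issue)
    num_masked_span = min(num_masked_span, num_valid_starts)
    return rng.choice(num_valid_starts, size=num_masked_span, replace=False)
```

Without the clamp, the values reported above amount to `choice(1, size=2, replace=False)`, which NumPy rejects with a `ValueError`.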

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3640/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3640/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3639 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3639/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3639/comments | https://api.github.com/repos/huggingface/datasets/issues/3639/events | https://github.com/huggingface/datasets/issues/3639 | 1,116,021,420 | I_kwDODunzps5ChSKs | 3,639 | same value of precision, recall, f1 score at each epoch for classification task. | {

"login": "Dhanachandra",

"id": 10828657,

"node_id": "MDQ6VXNlcjEwODI4NjU3",

"avatar_url": "https://avatars.githubusercontent.com/u/10828657?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Dhanachandra",

"html_url": "https://github.com/Dhanachandra",

"followers_url": "https://api.github.com/users/Dhanachandra/followers",

"following_url": "https://api.github.com/users/Dhanachandra/following{/other_user}",

"gists_url": "https://api.github.com/users/Dhanachandra/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Dhanachandra/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Dhanachandra/subscriptions",

"organizations_url": "https://api.github.com/users/Dhanachandra/orgs",

"repos_url": "https://api.github.com/users/Dhanachandra/repos",

"events_url": "https://api.github.com/users/Dhanachandra/events{/privacy}",

"received_events_url": "https://api.github.com/users/Dhanachandra/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Hi @Dhanachandra, \r\n\r\nWe have tests for all our metrics and they work as expected: under the hood, we use scikit-learn implementations.\r\n\r\nMaybe the cause is somewhere else. For example:\r\n- Is it a binary or a multiclass or a multilabel classification? Default computation of these metrics is for binary classification; if you would like multiclass or multilabel, you should pass the corresponding parameters; see their documentation (e.g.: https://scikit-learn.org/stable/modules/generated/sklearn.metrics.precision_score.html) or code below:\r\n\r\nhttps://huggingface.co/docs/datasets/using_metrics.html#computing-the-metric-scores\r\n\r\n```python\r\nIn [1]: from datasets import load_metric\r\n\r\nIn [2]: precision = load_metric(\"precision\")\r\n\r\nIn [3]: print(precision.inputs_description)\r\n\r\nArgs:\r\n predictions: Predicted labels, as returned by a model.\r\n references: Ground truth labels.\r\n labels: The set of labels to include when average != 'binary', and\r\n their order if average is None. Labels present in the data can\r\n be excluded, for example to calculate a multiclass average ignoring\r\n a majority negative class, while labels not present in the data will\r\n result in 0 components in a macro average. For multilabel targets,\r\n labels are column indices. By default, all labels in y_true and\r\n y_pred are used in sorted order.\r\n average: This parameter is required for multiclass/multilabel targets.\r\n If None, the scores for each class are returned. 
Otherwise, this\r\n determines the type of averaging performed on the data:\r\n binary: Only report results for the class specified by pos_label.\r\n This is applicable only if targets (y_{true,pred}) are binary.\r\n micro: Calculate metrics globally by counting the total true positives,\r\n false negatives and false positives.\r\n macro: Calculate metrics for each label, and find their unweighted mean.\r\n This does not take label imbalance into account.\r\n weighted: Calculate metrics for each label, and find their average\r\n weighted by support (the number of true instances for each label).\r\n This alters ‘macro’ to account for label imbalance; it can result\r\n in an F-score that is not between precision and recall.\r\n samples: Calculate metrics for each instance, and find their average\r\n (only meaningful for multilabel classification).\r\n sample_weight: Sample weights.\r\n\r\nReturns:\r\n precision: Precision score.\r\n\r\nExamples:\r\n\r\n >>> precision_metric = datasets.load_metric(\"precision\")\r\n >>> results = precision_metric.compute(references=[0, 1], predictions=[0, 1])\r\n >>> print(results)\r\n {'precision': 1.0}\r\n\r\n >>> predictions = [0, 2, 1, 0, 0, 1]\r\n >>> references = [0, 1, 2, 0, 1, 2]\r\n >>> results = precision_metric.compute(predictions=predictions, references=references, average='macro')\r\n >>> print(results)\r\n {'precision': 0.2222222222222222}\r\n >>> results = precision_metric.compute(predictions=predictions, references=references, average='micro')\r\n >>> print(results)\r\n {'precision': 0.3333333333333333}\r\n >>> results = precision_metric.compute(predictions=predictions, references=references, average='weighted')\r\n >>> print(results)\r\n {'precision': 0.2222222222222222}\r\n >>> results = precision_metric.compute(predictions=predictions, references=references, average=None)\r\n >>> print(results)\r\n {'precision': array([0.66666667, 0. , 0. ])}\r\n```\r\n"

] | 2022-01-27T10:14:16 | 2022-02-24T09:02:18 | 2022-02-24T09:02:17 | NONE | null | null | null | **1st Epoch:**

01/27/2022 09:30:48 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/f1/default/default_experiment-1-0.arrow

01/27/2022 09:30:48 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/precision/default/default_experiment-1-0.arrow

01/27/2022 09:30:49 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/recall/default/default_experiment-1-0.arrow

PRECISION: {'precision': 0.7612903225806451}

RECALL: {'recall': 0.7612903225806451}

F1: {'f1': 0.7612903225806451}

{'eval_loss': 1.4658324718475342, 'eval_accuracy': 0.7612903118133545, 'eval_runtime': 30.0054, 'eval_samples_per_second': 46.492, 'eval_steps_per_second': 46.492, 'epoch': 3.0}

**4th Epoch:**

01/27/2022 09:56:55 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/f1/default/default_experiment-1-0.arrow

01/27/2022 09:56:56 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/precision/default/default_experiment-1-0.arrow

01/27/2022 09:56:56 - INFO - datasets.metric - Removing /home/ubuntu/.cache/huggingface/metrics/recall/default/default_experiment-1-0.arrow

PRECISION: {'precision': 0.7698924731182796}

RECALL: {'recall': 0.7698924731182796}

F1: {'f1': 0.7698924731182796}
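One likely explanation (an assumption, since the issue does not show how the metrics are computed) is micro averaging on a single-label multiclass task. Every misclassified example then counts once as a false positive (for the predicted class) and once as a false negative (for the true class), so micro precision, recall and F1 all reduce to accuracy; this would also match `eval_accuracy` in the first log up to float precision. A pure-Python sketch using the example inputs from the `precision` metric docstring quoted in the comment above; `micro_precision_recall_f1` is a hypothetical helper:

```python
def micro_precision_recall_f1(references, predictions):
    """Micro-averaged precision, recall and F1 for single-label multiclass data."""
    labels = set(references) | set(predictions)
    tp = fp = fn = 0
    # Sum one-vs-rest counts over every class
    for label in labels:
        for ref, pred in zip(references, predictions):
            if pred == label and ref == label:
                tp += 1
            elif pred == label:
                fp += 1  # predicted this class, but the true class differs
            elif ref == label:
                fn += 1  # true class is this one, but it was not predicted
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    return precision, recall, f1


predictions = [0, 2, 1, 0, 0, 1]
references = [0, 1, 2, 0, 1, 2]
precision, recall, f1 = micro_precision_recall_f1(references, predictions)
accuracy = sum(r == p for r, p in zip(references, predictions)) / len(references)
```

All four values come out as 2/6, the same figure the docstring reports for `average='micro'`. Per-class or class-weighted scores need `average='macro'`, `'weighted'` or `None` instead.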

## Environment info

!git clone https://github.com/huggingface/transformers

%cd transformers

!pip install .

!pip install -r /content/transformers/examples/pytorch/token-classification/requirements.txt

!pip install datasets | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3639/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3639/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3638 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3638/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3638/comments | https://api.github.com/repos/huggingface/datasets/issues/3638/events | https://github.com/huggingface/datasets/issues/3638 | 1,115,725,703 | I_kwDODunzps5CgJ-H | 3,638 | AutoTokenizer hash value got change after datasets.map | {

"login": "tshu-w",

"id": 13161779,

"node_id": "MDQ6VXNlcjEzMTYxNzc5",

"avatar_url": "https://avatars.githubusercontent.com/u/13161779?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/tshu-w",

"html_url": "https://github.com/tshu-w",

"followers_url": "https://api.github.com/users/tshu-w/followers",

"following_url": "https://api.github.com/users/tshu-w/following{/other_user}",

"gists_url": "https://api.github.com/users/tshu-w/gists{/gist_id}",

"starred_url": "https://api.github.com/users/tshu-w/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/tshu-w/subscriptions",

"organizations_url": "https://api.github.com/users/tshu-w/orgs",

"repos_url": "https://api.github.com/users/tshu-w/repos",

"events_url": "https://api.github.com/users/tshu-w/events{/privacy}",

"received_events_url": "https://api.github.com/users/tshu-w/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"This issue was original reported at https://github.com/huggingface/transformers/issues/14931 and It seems like this issue also occur with other AutoClass like AutoFeatureExtractor.",

"Thanks for moving the issue here !\r\n\r\nI wasn't able to reproduce the issue on my env (the hashes stay the same):\r\n```\r\n- `transformers` version: 1.15.0\r\n- `tokenizers` version: 0.10.3\r\n- `datasets` version: 1.18.1\r\n- `dill` version: 0.3.4\r\n- Platform: Linux-4.19.0-18-cloud-amd64-x86_64-with-debian-10.11\r\n- Python version: 3.7.10\r\n- PyArrow version: 6.0.1\r\n```\r\nHowever I was able to reproduce it on Google Colab (the hashes end up different):\r\n```\r\n- `transformers` version: 1.15.0\r\n- `tokenizers` version: 0.10.3\r\n- `datasets` version: 1.18.1\r\n- `dill` version: 0.3.4\r\n- Platform: Linux-5.4.144+-x86_64-with-Ubuntu-18.04-bionic\r\n- Python version: 3.7.12\r\n- PyArrow version: 3.0.0\r\n```\r\nI'll investigate why it doesn't work properly on Google Colab :)",

"I found the issue: the tokenizer has something inside it that changes.\r\n\r\nBefore the call, `tokenizer._tokenizer.truncation` is None, and after the call it changes to this for some reason:\r\n```\r\n{'max_length': 512, 'strategy': 'longest_first', 'stride': 0}\r\n```\r\n\r\nDoes anybody know why calling the tokenizer would change its state this way ? cc @Narsil @SaulLu maybe ?",

"`tokenizer.encode(..)` does not accept argument like max_length, strategy or stride.\r\n\r\nIn `tokenizers` you have to modify the tokenizer state by setting various `TruncationParams` (and/or `PaddingParams`).\r\nHowever, since this is modifying the state, you need to mutably borrow the tokenizer (a rust concept). The key principle is that there can ever be only 1 mutable borrow at a time during the span of the tokenizer lifecycle.\r\n\r\nBecause of this, if `transformers` blindly set `TruncationParams` and `PaddingParams` on every call, it would cause the tokenizer to crash (or make the various threads accessing it hang, which is not necessarily better).\r\n\r\nIn order to avoid that, we decided to handle it this way : https://github.com/huggingface/transformers/pull/12550 . \r\n\r\nWhich should explain the state of the tokenizer being modified (hence its hash).\r\n\r\nNow for a temporary solution, simply encoding once with the tokenizer should give it it's proper hash (since by default the tokenizer doesn't have this state, looks at the first encoding call, and creates it).\r\n\r\nWe could try and set these 2 dicts at initialization time, but it wouldn't work if a user modified the tokenizer state later\r\n```python\r\ntokenizer = AutoTokenizer.from_pretrained(..)\r\ntokenizer.truncation_side = \"left\"\r\n# Now we have a difference between `tokenizer._tokenizer.truncation` and `tokenizer.truncation_side`\r\n```\r\nIf we wanted to fix it correctly it would mean mapping every assignation to it's proper location on `tokenizer.{padding/truncation}`\r\n\r\nI think it's important to note that we cannot guarantee a tokenizer' hash remains the same if *any* of those parameters are modified through the `.map` function.\r\n\r\nEdit: Another option would be to override the default __hash__ function, but I don't know if there's a sound implementation that could fit.",

"Thanks a lot for the explanation !\r\nI think if we set these 2 dicts at initialization time it would be amazing already\r\n\r\nShall we open an issue in `transformers` to ask for these dictionaries to be set when the tokenizer is instantiated ?\r\n\r\n> Edit: Another option would be to override the default hash function, but I don't know if there's a sound implementation that could fit.\r\n\r\nIn `datasets` we can easily have custom hashing for objects of the other HF libraries if we want. For example we ignore the cache some tokenizers have. However in this specific case it touches parameters that may change the behavior of the tokenizer itself. I'm not sure the logic that determines how a tokenizer behaves should be in `datasets`",

"A hack we could have in the `datasets` lib would be to call the tokenizer before hashing it in order to set all its parameters correctly - but it sounds a lot like a hack and I'm not sure this can work in the long run",

"Fully agree with everything you said. \r\n\r\nI think the best course of action is creating an issue in `transformers`. I can start the work on this.\r\nI think the code changes are fairly simple. Making a sound test + not breaking other stuff might be different :D",

"It should be noted that this problem also occurs in other AutoClasses, such as AutoFeatureExtractor, so I don't think handling it in Datasets is a long-term practice either.",

"> I think the best course of action is creating an issue in `transformers`. I can start the work on this.\r\n\r\n@Narsil Hi, I reopen this issue in `transformers` https://github.com/huggingface/transformers/issues/14931",

"Here is @Narsil comment from https://github.com/huggingface/transformers/issues/14931#issuecomment-1074981569\r\n> # TL;DR\r\n> Call the function once on a dummy example beforehand will fix it.\r\n> \r\n> ```python\r\n> tokenizer(\"Some\", \"test\", truncation=True)\r\n> ```\r\n> \r\n> # Long answer\r\n> If I remember the last status, it's hard doing anything, since the call itself\r\n> \r\n> ```python\r\n> tokenizer(example[\"sentence1\"], example[\"sentence2\"], truncation=True)\r\n> ```\r\n> \r\n> will modify the tokenizer. It's the `truncation=True` that modifies the tokenizer to put it into truncation mode if you will. Calling the tokenizer once with that argument would fix the cache.\r\n> \r\n> Finding a fix that :\r\n> \r\n> * Doesn't imply a huge chunk of work on `tokenizers` (with potential loss of performance, and breaking backward compatibility)\r\n> * Doesn't imply `datasets` running a first pass of the loop\r\n> * Doesn't imply `datasets` looking at the map function itself\r\n> * Uses a sound `hash` for this object in `datasets`.\r\n> \r\n> is IIRC impossible for this use case.\r\n> \r\n> I can explain a bit more why the first option is not desirable.\r\n> \r\n> In order to \"fix\" this for tokenizers, we would need to make `tokenizer(..)` purely without side effects. This means that the \"options\" of tokenization (like `truncation` and `padding` at least) would have\r\n",

"For me this workaround only works if I don't pass the `num_proc=X` argument to `datasets.map`"

] | 2022-01-27T03:19:03 | 2022-08-26T07:47:56 | null | NONE | null | null | null | ## Describe the bug

The `AutoTokenizer` hash value changes after `datasets.map`.

## Steps to reproduce the bug

1. Delete the Hugging Face `datasets` cache.

2. Run the following code:

```python
from transformers import AutoTokenizer, BertTokenizer
from datasets import load_dataset
from datasets.fingerprint import Hasher

tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased')

def tokenize_function(example):
    return tokenizer(example["sentence1"], example["sentence2"], truncation=True)

raw_datasets = load_dataset("glue", "mrpc")

# Hashes before map
print(Hasher.hash(tokenize_function))
print(Hasher.hash(tokenizer))

tokenized_datasets = raw_datasets.map(tokenize_function, batched=True)

# Hashes after map: these differ from the ones above
print(Hasher.hash(tokenize_function))
print(Hasher.hash(tokenizer))
```

Output:

```

Reusing dataset glue (/home1/wts/.cache/huggingface/datasets/glue/mrpc/1.0.0/dacbe3125aa31d7f70367a07a8a9e72a5a0bfeb5fc42e75c9db75b96da6053ad)

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 3/3 [00:00<00:00, 1112.35it/s]

f4976bb4694ebc51

3fca35a1fd4a1251

100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 4/4 [00:00<00:00, 6.96ba/s]

100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 15.25ba/s]

100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 5.81ba/s]

d32837619b7d7d01

5fd925c82edd62b6

```

3. Run `raw_datasets.map(tokenize_function, batched=True)` again and observe that some of the splits are not loaded from the cache.
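The comments trace this to the fast tokenizer changing its internal truncation settings on the first call with `truncation=True`. The workaround suggested there is to call the tokenizer once on a dummy input before `map`, e.g. `tokenizer("Some", "test", truncation=True)`, although one commenter reports it does not help when `num_proc` is passed. The mechanism can be illustrated without `transformers`; in this sketch `LazyTruncationTokenizer` and `fingerprint` are hypothetical stand-ins for the real tokenizer and for `datasets`' `Hasher`.

```python
import hashlib
import json


def fingerprint(obj):
    # Stand-in for a content hash: digest of the object's attribute state
    state = json.dumps(vars(obj), sort_keys=True)
    return hashlib.sha256(state.encode("utf-8")).hexdigest()[:16]


class LazyTruncationTokenizer:
    """Hypothetical tokenizer that only sets up truncation on first use."""

    def __init__(self, max_length=512):
        self.max_length = max_length
        self.truncation = None  # like `_tokenizer.truncation` before any call

    def __call__(self, text, truncation=False):
        if truncation and self.truncation is None:
            # Side effect on first call: internal state (and thus the hash) changes
            self.truncation = {
                "max_length": self.max_length,
                "strategy": "longest_first",
                "stride": 0,
            }
        tokens = text.split()
        return tokens[: self.max_length] if truncation else tokens


# Without a warm-up call, the hash taken before "map" differs from the one after
tokenizer = LazyTruncationTokenizer()
before = fingerprint(tokenizer)
tokenizer("first example", truncation=True)
after = fingerprint(tokenizer)

# With a warm-up call on a dummy input, later calls leave the hash unchanged
warmed = LazyTruncationTokenizer()
warmed("Some test", truncation=True)
stable = fingerprint(warmed)
warmed("another example", truncation=True)
```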

## Expected results

`AutoTokenizer` should behave like a specific tokenizer class such as `BertTokenizer`, whose hash value does not change after `map`:

```python
from transformers import AutoTokenizer, BertTokenizer
from datasets import load_dataset
from datasets.fingerprint import Hasher

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

def tokenize_function(example):
    return tokenizer(example["sentence1"], example["sentence2"], truncation=True)

raw_datasets = load_dataset("glue", "mrpc")

# Hashes before map
print(Hasher.hash(tokenize_function))
print(Hasher.hash(tokenizer))

tokenized_datasets = raw_datasets.map(tokenize_function, batched=True)

# Hashes after map: identical to the ones above
print(Hasher.hash(tokenize_function))
print(Hasher.hash(tokenizer))
```

```

Reusing dataset glue (/home1/wts/.cache/huggingface/datasets/glue/mrpc/1.0.0/dacbe3125aa31d7f70367a07a8a9e72a5a0bfeb5fc42e75c9db75b96da6053ad)

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 3/3 [00:00<00:00, 1091.22it/s]

46d4b31f54153fc7

5b8771afd8d43888

Loading cached processed dataset at /home1/wts/.cache/huggingface/datasets/glue/mrpc/1.0.0/dacbe3125aa31d7f70367a07a8a9e72a5a0bfeb5fc42e75c9db75b96da6053ad/cache-6b07ff82ae9d5c51.arrow

Loading cached processed dataset at /home1/wts/.cache/huggingface/datasets/glue/mrpc/1.0.0/dacbe3125aa31d7f70367a07a8a9e72a5a0bfeb5fc42e75c9db75b96da6053ad/cache-af738a6d84f3864b.arrow

Loading cached processed dataset at /home1/wts/.cache/huggingface/datasets/glue/mrpc/1.0.0/dacbe3125aa31d7f70367a07a8a9e72a5a0bfeb5fc42e75c9db75b96da6053ad/cache-531d2a603ba713c1.arrow

46d4b31f54153fc7

5b8771afd8d43888

```

## Environment info

- `datasets` version: 1.18.0

- Platform: Linux-5.4.0-91-generic-x86_64-with-glibc2.27

- Python version: 3.9.7

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3638/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3638/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3637 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3637/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3637/comments | https://api.github.com/repos/huggingface/datasets/issues/3637/events | https://github.com/huggingface/datasets/issues/3637 | 1,115,526,438 | I_kwDODunzps5CfZUm | 3,637 | [TypeError: Couldn't cast array of type] Cannot load dataset in v1.18 | {

"login": "lewtun",

"id": 26859204,

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lewtun",

"html_url": "https://github.com/lewtun",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"repos_url": "https://api.github.com/users/lewtun/repos",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [] | null | [

"Hi @lewtun!\r\n \r\nThis one was tricky to debug. Initially, I tought there is a bug in the recently-added (by @lhoestq ) `cast_array_to_feature` function because `git bisect` points to the https://github.com/huggingface/datasets/commit/6ca96c707502e0689f9b58d94f46d871fa5a3c9c commit. Then, I noticed that the feature tpye of the `dialogue` field is `list`, which explains why you didn't get an error in earlier versions. Is there a specific reason why you use `list` instead of `Sequence` in the script? Maybe to avoid turning list of dicts to dicts of lists as it's done by `Sequence` for compatibility with TFDS or for performance reasons? If the field was `Sequence`, you would get an error in `encode_nested_example` because **the scripts yields some additional (nested) columns which are not specified in the `features` dictionary**. Previously, these additional columns would've been ignored by PyArrow (1), but now we have a check for them (2).\r\n(1) See PyArrow behavior:\r\n```\r\n>>> pa.array([{\"a\": 2, \"b\": 3}], type=pa.struct({\"a\": pa.int32()})) # pyarrow ignores the extra column\r\n-- is_valid: all not null\r\n-- child 0 type: int32\r\n [\r\n 2\r\n ]\r\n ```\r\n\r\n(2) Check:\r\nhttps://github.com/huggingface/datasets/blob/4c417d52def6e20359ca16c6723e0a2855e5c3fd/src/datasets/table.py#L1059\r\n\r\nThe fix is very simple: just add the missing columns to the _EMPTY_BELIEF_STATE list:\r\n```python\r\n_EMPTY_BELIEF_STATE.extend(['通用-产品类别', '火车-舱位档次', '通用-系列', '通用-价格区间', '通用-品牌'])\r\n```",

"Hey @mariosasko, thank you so much for figuring this one out - it certainly looks like a tricky bug 😱 ! I don't think there's a specific reason to use `list` instead of `Sequence` with the script, but I'll let the dataset creators know to see if your suggestion is acceptable.\r\n\r\nThank you again!",

"Thanks, this was indeed the fix! Would it make sense to produce a more informative error message in such cases? \r\n\r\nThe issue can be closed. \r\n\r\n"

] | 2022-01-26T21:38:02 | 2022-02-09T16:15:53 | 2022-02-09T16:15:53 | MEMBER | null | null | null | ## Describe the bug

I am trying to load the [`GEM/RiSAWOZ` dataset](https://huggingface.co/datasets/GEM/RiSAWOZ) in `datasets` v1.18.1 and am running into a `TypeError` when casting the features. The strange thing is that I can load the dataset with v1.17.0. The error also occurs when I install from `master`.

As far as I can tell, the dataset loading script is correct and the problematic features [here](https://huggingface.co/datasets/GEM/RiSAWOZ/blob/main/RiSAWOZ.py#L237) also look fine to me.
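One way to double-check that the features are consistent is to compare the keys each yielded example actually contains against the declared schema, and report any that are undeclared. Below is a minimal sketch under stated assumptions: the schema is a plain-Python stand-in (a dict for named fields, a one-element list for a list of items, anything else for a leaf), and `find_undeclared_keys` plus the field names `turn_id` and `belief_state` are hypothetical, not part of `datasets` or the RiSAWOZ script.

```python
from typing import Any, List


def find_undeclared_keys(example: Any, features: Any, path: str = "") -> List[str]:
    """Return dotted paths of keys present in `example` but absent from `features`.

    Hypothetical helper: `features` is a plain-Python stand-in for a schema,
    where a dict maps field names to sub-schemas, a one-element list describes
    a list of items, and any other value marks a leaf.
    """
    missing: List[str] = []
    if isinstance(features, dict) and isinstance(example, dict):
        for key, value in example.items():
            child_path = f"{path}.{key}" if path else key
            if key not in features:
                # Key yielded by the script but never declared in the schema.
                missing.append(child_path)
            else:
                missing.extend(find_undeclared_keys(value, features[key], child_path))
    elif isinstance(features, list) and isinstance(example, list) and features:
        # Every list item is checked against the single declared item schema.
        for i, item in enumerate(example):
            missing.extend(find_undeclared_keys(item, features[0], f"{path}[{i}]"))
    return missing


# Hypothetical schema and example: the example carries one undeclared nested key.
schema = {"dialogue": [{"turn_id": "int32", "belief_state": {"火车-舱位档次": "string"}}]}
example = {"dialogue": [{"turn_id": 0, "belief_state": {"火车-舱位档次": "", "通用-品牌": ""}}]}
print(find_undeclared_keys(example, schema))
# ['dialogue[0].belief_state.通用-品牌']
```

Running such a check over the examples a loading script yields would surface nested keys that a stricter cast rejects but older PyArrow-based writing silently dropped.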

## Steps to reproduce the bug

```python
from datasets import load_dataset

dset = load_dataset("GEM/RiSAWOZ")
```

## Expected results

I can load the dataset without error.

## Actual results

<details><summary>Traceback</summary>

```
---------------------------------------------------------------------------
TypeError Traceback (most recent call last)

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/builder.py in _prepare_split(self, split_generator)
1083 example = self.info.features.encode_example(record)
-> 1084 writer.write(example, key)
1085 finally:

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/arrow_writer.py in write(self, example, key, writer_batch_size)
445
--> 446 self.write_examples_on_file()
447

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/arrow_writer.py in write_examples_on_file(self)
403 batch_examples[col] = [row[0][col] for row in self.current_examples]
--> 404 self.write_batch(batch_examples=batch_examples)
405 self.current_examples = []

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/arrow_writer.py in write_batch(self, batch_examples, writer_batch_size)
496 typed_sequence = OptimizedTypedSequence(batch_examples[col], type=col_type, try_type=col_try_type, col=col)
--> 497 arrays.append(pa.array(typed_sequence))
498 inferred_features[col] = typed_sequence.get_inferred_type()

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/pyarrow/array.pxi in pyarrow.lib.array()

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/pyarrow/array.pxi in pyarrow.lib._handle_arrow_array_protocol()

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/arrow_writer.py in __arrow_array__(self, type)
204 # We only do it if trying_type is False - since this is what the user asks for.
--> 205 out = cast_array_to_feature(out, type, allow_number_to_str=not self.trying_type)
206 return out

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
943 array = _sanitize(array)
--> 944 return func(array, *args, **kwargs)
945

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
919 else:
--> 920 return func(array, *args, **kwargs)
921

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in cast_array_to_feature(array, feature, allow_number_to_str)
1064 if isinstance(feature, list):
-> 1065 return pa.ListArray.from_arrays(array.offsets, _c(array.values, feature[0]))
1066 elif isinstance(feature, Sequence):

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
943 array = _sanitize(array)
--> 944 return func(array, *args, **kwargs)
945

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
919 else:
--> 920 return func(array, *args, **kwargs)
921

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in cast_array_to_feature(array, feature, allow_number_to_str)
1059 if isinstance(feature, dict) and set(field.name for field in array.type) == set(feature):
-> 1060 arrays = [_c(array.field(name), subfeature) for name, subfeature in feature.items()]
1061 return pa.StructArray.from_arrays(arrays, names=list(feature))

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in <listcomp>(.0)
1059 if isinstance(feature, dict) and set(field.name for field in array.type) == set(feature):
-> 1060 arrays = [_c(array.field(name), subfeature) for name, subfeature in feature.items()]
1061 return pa.StructArray.from_arrays(arrays, names=list(feature))

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
943 array = _sanitize(array)
--> 944 return func(array, *args, **kwargs)
945

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
919 else:
--> 920 return func(array, *args, **kwargs)
921

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in cast_array_to_feature(array, feature, allow_number_to_str)
1059 if isinstance(feature, dict) and set(field.name for field in array.type) == set(feature):
-> 1060 arrays = [_c(array.field(name), subfeature) for name, subfeature in feature.items()]
1061 return pa.StructArray.from_arrays(arrays, names=list(feature))

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in <listcomp>(.0)
1059 if isinstance(feature, dict) and set(field.name for field in array.type) == set(feature):
-> 1060 arrays = [_c(array.field(name), subfeature) for name, subfeature in feature.items()]
1061 return pa.StructArray.from_arrays(arrays, names=list(feature))

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
943 array = _sanitize(array)
--> 944 return func(array, *args, **kwargs)
945

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in wrapper(array, *args, **kwargs)
919 else:
--> 920 return func(array, *args, **kwargs)
921

~/miniconda3/envs/huggingface/lib/python3.8/site-packages/datasets/table.py in cast_array_to_feature(array, feature, allow_number_to_str)
1086 return array_cast(array, feature(), allow_number_to_str=allow_number_to_str)
-> 1087 raise TypeError(f"Couldn't cast array of type\n{array.type}\nto\n{feature}")
1088

TypeError: Couldn't cast array of type

struct<医院-3.0T MRI: string, 医院-CT: string, 医院-DSA: string, 医院-公交线路: string, 医院-区域: string, 医院-名称: string, 医院-地址: string, 医院-地铁可达: string, 医院-地铁线路: string, 医院-性质: string, 医院-挂号时间: string, 医院-电话: string, 医院-等级: string, 医院-类别: string, 医院-重点科室: string, 医院-门诊时间: string, 天气-城市: string, 天气-天气: string, 天气-日期: string, 天气-温度: string, 天气-紫外线强度: string, 天气-风力风向: string, 旅游景点-区域: string, 旅游景点-名称: string, 旅游景点-地址: string, 旅游景点-开放时间: string, 旅游景点-是否地铁直达: string, 旅游景点-景点类型: string, 旅游景点-最适合人群: string, 旅游景点-消费: string, 旅游景点-特点: string, 旅游景点-电话号码: string, 旅游景点-评分: string, 旅游景点-门票价格: string, 汽车-价格(万元): string, 汽车-倒车影像: string, 汽车-动力水平: string, 汽车-厂商: string, 汽车-发动机排量(L): string, 汽车-发动机马力(Ps): string, 汽车-名称: string, 汽车-定速巡航: string, 汽车-巡航系统: string, 汽车-座位数: string, 汽车-座椅加热: string, 汽车-座椅通风: string, 汽车-所属价格区间: string, 汽车-油耗水平: string, 汽车-环保标准: string, 汽车-级别: string, 汽车-综合油耗(L/100km): string, 汽车-能源类型: string, 汽车-车型: string, 汽车-车系: string, 汽车-车身尺寸(mm): string, 汽车-驱动方式: string, 汽车-驾驶辅助影像: string, 火车-出发地: string, 火车-出发时间: string, 火车-到达时间: string, 火车-坐席: string, 火车-日期: string, 火车-时长: string, 火车-目的地: string, 火车-票价: string, 火车-舱位档次: string, 火车-车型: string, 火车-车次信息: string, 电影-主演: string, 电影-主演名单: string, 电影-具体上映时间: string, 电影-制片国家/地区: string, 电影-导演: string, 电影-年代: string, 电影-片名: string, 电影-片长: string, 电影-类型: string, 电影-豆瓣评分: string, 电脑-CPU: string, 电脑-CPU型号: string, 电脑-产品类别: string, 电脑-价格: string, 电脑-价格区间: string, 电脑-内存容量: string, 电脑-分类: string, 电脑-品牌: string, 电脑-商品名称: string, 电脑-屏幕尺寸: string, 电脑-待机时长: string, 电脑-显卡型号: string, 电脑-显卡类别: string, 电脑-游戏性能: string, 电脑-特性: string, 电脑-硬盘容量: string, 电脑-系列: string, 电脑-系统: string, 电脑-色系: string, 电脑-裸机重量: string, 电视剧-主演: string, 电视剧-主演名单: string, 电视剧-制片国家/地区: string, 电视剧-单集片长: string, 电视剧-导演: string, 电视剧-年代: string, 电视剧-片名: string, 电视剧-类型: string, 电视剧-豆瓣评分: string, 电视剧-集数: string, 电视剧-首播时间: string, 辅导班-上课方式: string, 辅导班-上课时间: string, 辅导班-下课时间: string, 辅导班-价格: string, 辅导班-区域: string, 辅导班-年级: string, 辅导班-开始日期: string, 辅导班-教室地点: string, 辅导班-教师: string, 
辅导班-教师网址: string, 辅导班-时段: string, 辅导班-校区: string, 辅导班-每周: string, 辅导班-班号: string, 辅导班-科目: string, 辅导班-结束日期: string, 辅导班-课时: string, 辅导班-课次: string, 辅导班-课程网址: string, 辅导班-难度: string, 通用-产品类别: string, 通用-价格区间: string, 通用-品牌: string, 通用-系列: string, 酒店-价位: string, 酒店-停车场: string, 酒店-区域: string, 酒店-名称: string, 酒店-地址: string, 酒店-房型: string, 酒店-房费: string, 酒店-星级: string, 酒店-电话号码: string, 酒店-评分: string, 酒店-酒店类型: string, 飞机-准点率: string, 飞机-出发地: string, 飞机-到达时间: string, 飞机-日期: string, 飞机-目的地: string, 飞机-票价: string, 飞机-航班信息: string, 飞机-舱位档次: string, 飞机-起飞时间: string, 餐厅-人均消费: string, 餐厅-价位: string, 餐厅-区域: string, 餐厅-名称: string, 餐厅-地址: string, 餐厅-推荐菜: string, 餐厅-是否地铁直达: string, 餐厅-电话号码: string, 餐厅-菜系: string, 餐厅-营业时间: string, 餐厅-评分: string>

to