url (string, length 58-61) | repository_url (string, 1 class) | labels_url (string, length 72-75) | comments_url (string, length 67-70) | events_url (string, length 65-68) | html_url (string, length 46-51) | id (int64, 599M-1.15B) | node_id (string, length 18-32) | number (int64, 1-3.78k) | title (string, length 1-276) | user (dict) | labels (list) | state (string, 2 classes) | locked (bool, 1 class) | assignee (dict) | assignees (list) | milestone (dict) | comments (sequence) | created_at (int64, 1,587B-1,646B) | updated_at (int64, 1,587B-1,646B) | closed_at (int64, 1,587B-1,646B, nullable) | author_association (string, 3 classes) | active_lock_reason (null) | body (string, length 0-228k, nullable) | reactions (dict) | timeline_url (string, length 67-70) | performed_via_github_app (null) | draft (bool, 2 classes) | pull_request (dict) | is_pull_request (bool, 2 classes) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3778 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3778/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3778/comments | https://api.github.com/repos/huggingface/datasets/issues/3778/events | https://github.com/huggingface/datasets/issues/3778 | 1,147,898,946 | I_kwDODunzps5Ea4xC | 3,778 | Not be able to download dataset - "Newroom" | {

"login": "Darshan2104",

"id": 61326242,

"node_id": "MDQ6VXNlcjYxMzI2MjQy",

"avatar_url": "https://avatars.githubusercontent.com/u/61326242?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Darshan2104",

"html_url": "https://github.com/Darshan2104",

"followers_url": "https://api.github.com/users/Darshan2104/followers",

"following_url": "https://api.github.com/users/Darshan2104/following{/other_user}",

"gists_url": "https://api.github.com/users/Darshan2104/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Darshan2104/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Darshan2104/subscriptions",

"organizations_url": "https://api.github.com/users/Darshan2104/orgs",

"repos_url": "https://api.github.com/users/Darshan2104/repos",

"events_url": "https://api.github.com/users/Darshan2104/events{/privacy}",

"received_events_url": "https://api.github.com/users/Darshan2104/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,611,350,000 | 1,645,611,350,000 | null | NONE | null | Hello,

I tried to download the **newsroom** dataset, but it didn't work for me. It told me to **download it manually**!

The link for the manual download didn't work either! It shows some ad or something!

If anybody has solved this issue, please help me out, or if somebody has this dataset, please share your Google Drive link; it would be a great help!

Thanks

Darshan Tank | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3778/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3778/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3777 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3777/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3777/comments | https://api.github.com/repos/huggingface/datasets/issues/3777/events | https://github.com/huggingface/datasets/pull/3777 | 1,147,232,875 | PR_kwDODunzps4zTVrz | 3,777 | Start removing canonical datasets logic | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"I'm not sure if the documentation explains why the dataset identifiers might have a namespace or not (the user/org): 'glue' vs 'severo/glue'. Do you think we should explain it, and relate it to the GitHub/Hub distinction?"

] | 1,645,554,210,000 | 1,645,604,646,000 | null | MEMBER | null | I updated the source code and the documentation to start removing the "canonical datasets" logic.

Indeed, this distinction makes the documentation confusing, and we don't want to keep it in the future. Ideally, users should share their datasets on the Hub directly.

### Changes

- the documentation about dataset loading mentions the datasets on the Hub (no difference between canonical and community, since they all have their own repository now)

- the documentation about adding a dataset doesn't explain the technical differences between canonical and community anymore, and only presents how to add a community dataset. There is still a small section at the bottom that mentions the datasets that are still on GitHub and redirects to the `ADD_NEW_DATASET.md` guide on GitHub about how to contribute a dataset to the `datasets` library

- the source code doesn't mention "canonical" anywhere anymore. A `GitHubDatasetModuleFactory` class still remains, but I updated its docstring to say that it will eventually be removed in favor of the `HubDatasetModuleFactory` classes that already exist

Would love to have your feedback on this!

cc @julien-c @thomwolf @SBrandeis | {
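
The review comment on this PR contrasts identifiers with and without a user/org namespace (`'glue'` vs `'severo/glue'`). As a minimal sketch of that distinction, the snippet below splits an identifier into its optional namespace and its name. `split_dataset_id` is a hypothetical helper written for illustration; it is not a function of the `datasets` library, and the library may resolve identifiers differently.

```python
from typing import Optional, Tuple


def split_dataset_id(dataset_id: str) -> Tuple[Optional[str], str]:
    """Split a dataset identifier into (namespace, name).

    Hypothetical helper for illustration only; not part of `datasets`.
    """
    # rpartition returns ('', '', dataset_id) when no "/" is present
    namespace, sep, name = dataset_id.rpartition("/")
    # An identifier without "/" has no user/org namespace
    return (namespace if sep else None), name


print(split_dataset_id("glue"))         # (None, 'glue')
print(split_dataset_id("severo/glue"))  # ('severo', 'glue')
```

Returning `None` for the missing namespace keeps the two cases easy to tell apart in calling code.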

"url": "https://api.github.com/repos/huggingface/datasets/issues/3777/reactions",

"total_count": 4,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 2,

"rocket": 2,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3777/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3777",

"html_url": "https://github.com/huggingface/datasets/pull/3777",

"diff_url": "https://github.com/huggingface/datasets/pull/3777.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3777.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3776 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3776/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3776/comments | https://api.github.com/repos/huggingface/datasets/issues/3776/events | https://github.com/huggingface/datasets/issues/3776 | 1,146,932,871 | I_kwDODunzps5EXM6H | 3,776 | Allow download only some files from the Wikipedia dataset | {

"login": "jvanz",

"id": 1514798,

"node_id": "MDQ6VXNlcjE1MTQ3OTg=",

"avatar_url": "https://avatars.githubusercontent.com/u/1514798?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jvanz",

"html_url": "https://github.com/jvanz",

"followers_url": "https://api.github.com/users/jvanz/followers",

"following_url": "https://api.github.com/users/jvanz/following{/other_user}",

"gists_url": "https://api.github.com/users/jvanz/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jvanz/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jvanz/subscriptions",

"organizations_url": "https://api.github.com/users/jvanz/orgs",

"repos_url": "https://api.github.com/users/jvanz/repos",

"events_url": "https://api.github.com/users/jvanz/events{/privacy}",

"received_events_url": "https://api.github.com/users/jvanz/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Hi @jvanz, thank you for your proposal.\r\n\r\nIn fact, we are aware that it is very common the problem you mention. Because of that, we are currently working in implementing a new version of wikipedia on the Hub, with all data preprocessed (no need to use Apache Beam), from where you will be able to use `data_files` to load only a specific subset of the data files.\r\n\r\nSee:\r\n- #3401 "

] | 1,645,537,601,000 | 1,645,541,402,000 | null | NONE | null | **Is your feature request related to a problem? Please describe.**

The Wikipedia dataset can be really big. This is a problem if you want to use it locally on a laptop with the Apache Beam `DirectRunner`, even if your laptop has a considerable amount of memory (e.g. 32 GB).

**Describe the solution you'd like**

I would like to use the `data_files` argument in the `load_dataset` function to define which files of the Wikipedia dataset to download. That way, I can work with the dataset on a smaller machine using the Apache Beam `DirectRunner`.

**Describe alternatives you've considered**

I've tried to use the `simple` Wikipedia dataset, but it's in English and I would like to use Portuguese texts in my model.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3776/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3776/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3775 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3775/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3775/comments | https://api.github.com/repos/huggingface/datasets/issues/3775/events | https://github.com/huggingface/datasets/pull/3775 | 1,146,849,454 | PR_kwDODunzps4zSEd4 | 3,775 | Fix gigaword download url | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,532,836,000 | 1,645,532,836,000 | null | CONTRIBUTOR | null | Reported on the forum: https://discuss.huggingface.co/t/error-loading-dataset/14999 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3775/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3775/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3775",

"html_url": "https://github.com/huggingface/datasets/pull/3775",

"diff_url": "https://github.com/huggingface/datasets/pull/3775.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3775.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3774 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3774/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3774/comments | https://api.github.com/repos/huggingface/datasets/issues/3774/events | https://github.com/huggingface/datasets/pull/3774 | 1,146,843,177 | PR_kwDODunzps4zSDHC | 3,774 | Fix reddit_tifu data URL | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,532,475,000 | 1,645,533,525,000 | 1,645,533,524,000 | MEMBER | null | Fix #3773. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3774/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3774/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3774",

"html_url": "https://github.com/huggingface/datasets/pull/3774",

"diff_url": "https://github.com/huggingface/datasets/pull/3774.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3774.patch",

"merged_at": 1645533524000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3773 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3773/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3773/comments | https://api.github.com/repos/huggingface/datasets/issues/3773/events | https://github.com/huggingface/datasets/issues/3773 | 1,146,758,335 | I_kwDODunzps5EWiS_ | 3,773 | Checksum mismatch for the reddit_tifu dataset | {

"login": "anna-kay",

"id": 56791604,

"node_id": "MDQ6VXNlcjU2NzkxNjA0",

"avatar_url": "https://avatars.githubusercontent.com/u/56791604?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/anna-kay",

"html_url": "https://github.com/anna-kay",

"followers_url": "https://api.github.com/users/anna-kay/followers",

"following_url": "https://api.github.com/users/anna-kay/following{/other_user}",

"gists_url": "https://api.github.com/users/anna-kay/gists{/gist_id}",

"starred_url": "https://api.github.com/users/anna-kay/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/anna-kay/subscriptions",

"organizations_url": "https://api.github.com/users/anna-kay/orgs",

"repos_url": "https://api.github.com/users/anna-kay/repos",

"events_url": "https://api.github.com/users/anna-kay/events{/privacy}",

"received_events_url": "https://api.github.com/users/anna-kay/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Thanks for reporting, @anna-kay. We are fixing it."

] | 1,645,527,427,000 | 1,645,533,524,000 | 1,645,533,524,000 | NONE | null | ## Describe the bug

A checksum mismatch occurs when downloading the reddit_tifu data (both long & short).

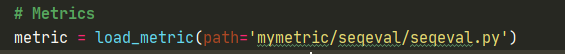

## Steps to reproduce the bug

from datasets import load_dataset

reddit_tifu_dataset = load_dataset('reddit_tifu', 'long')

## Expected results

The expected result is for the dataset to be downloaded and cached locally.

## Actual results

File "/.../lib/python3.9/site-packages/datasets/utils/info_utils.py", line 40, in verify_checksums

raise NonMatchingChecksumError(error_msg + str(bad_urls))

datasets.utils.info_utils.NonMatchingChecksumError: Checksums didn't match for dataset source files:

['https://drive.google.com/uc?export=download&id=1ffWfITKFMJeqjT8loC8aiCLRNJpc_XnF']

## Environment info

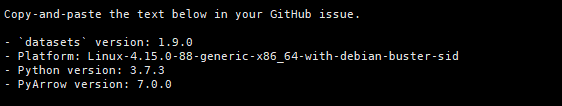

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.18.3

- Platform: Linux-5.13.0-30-generic-x86_64-with-glibc2.31

- Python version: 3.9.7

- PyArrow version: 7.0.0
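
To illustrate what a checksum mismatch of this kind means, here is a minimal sketch of comparing expected checksums against the checksums of the files that were actually downloaded, for example when the hosted file has changed since the checksum was recorded. This is not the library's `verify_checksums` implementation: `sha256_of`, `find_bad_urls`, the example URL, and the byte strings are all hypothetical and exist only for this example.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of some downloaded bytes."""
    return hashlib.sha256(data).hexdigest()


def find_bad_urls(expected: dict, recorded: dict) -> list:
    """Return URLs whose recorded checksum differs from the expected one.

    Hypothetical helper; a URL missing from `recorded` also counts as bad.
    """
    return [url for url, checksum in expected.items() if recorded.get(url) != checksum]


# Hypothetical data: the hosted file changed after its checksum was recorded
expected = {"https://example.com/data.json": sha256_of(b"original file")}
recorded = {"https://example.com/data.json": sha256_of(b"changed file")}

bad_urls = find_bad_urls(expected, recorded)
if bad_urls:
    print("Checksums didn't match for dataset source files:", bad_urls)
```

In this sketch, a single changed byte in the source file is enough to put its URL in `bad_urls`.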

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3773/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3773/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3772 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3772/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3772/comments | https://api.github.com/repos/huggingface/datasets/issues/3772/events | https://github.com/huggingface/datasets/pull/3772 | 1,146,718,630 | PR_kwDODunzps4zRor8 | 3,772 | Fix: dataset name is stored in keys | {

"login": "thomasw21",

"id": 24695242,

"node_id": "MDQ6VXNlcjI0Njk1MjQy",

"avatar_url": "https://avatars.githubusercontent.com/u/24695242?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/thomasw21",

"html_url": "https://github.com/thomasw21",

"followers_url": "https://api.github.com/users/thomasw21/followers",

"following_url": "https://api.github.com/users/thomasw21/following{/other_user}",

"gists_url": "https://api.github.com/users/thomasw21/gists{/gist_id}",

"starred_url": "https://api.github.com/users/thomasw21/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/thomasw21/subscriptions",

"organizations_url": "https://api.github.com/users/thomasw21/orgs",

"repos_url": "https://api.github.com/users/thomasw21/repos",

"events_url": "https://api.github.com/users/thomasw21/events{/privacy}",

"received_events_url": "https://api.github.com/users/thomasw21/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,525,237,000 | 1,645,528,114,000 | 1,645,528,113,000 | MEMBER | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3772/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3772/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3772",

"html_url": "https://github.com/huggingface/datasets/pull/3772",

"diff_url": "https://github.com/huggingface/datasets/pull/3772.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3772.patch",

"merged_at": 1645528113000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3771 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3771/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3771/comments | https://api.github.com/repos/huggingface/datasets/issues/3771/events | https://github.com/huggingface/datasets/pull/3771 | 1,146,561,140 | PR_kwDODunzps4zRHsd | 3,771 | Fix DuplicatedKeysError on msr_sqa dataset | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,515,864,000 | 1,645,517,560,000 | 1,645,517,559,000 | MEMBER | null | Fix #3770. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3771/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3771/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3771",

"html_url": "https://github.com/huggingface/datasets/pull/3771",

"diff_url": "https://github.com/huggingface/datasets/pull/3771.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3771.patch",

"merged_at": 1645517559000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3770 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3770/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3770/comments | https://api.github.com/repos/huggingface/datasets/issues/3770/events | https://github.com/huggingface/datasets/issues/3770 | 1,146,336,667 | I_kwDODunzps5EU7Wb | 3,770 | DuplicatedKeysError on msr_sqa dataset | {

"login": "kolk",

"id": 9049591,

"node_id": "MDQ6VXNlcjkwNDk1OTE=",

"avatar_url": "https://avatars.githubusercontent.com/u/9049591?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/kolk",

"html_url": "https://github.com/kolk",

"followers_url": "https://api.github.com/users/kolk/followers",

"following_url": "https://api.github.com/users/kolk/following{/other_user}",

"gists_url": "https://api.github.com/users/kolk/gists{/gist_id}",

"starred_url": "https://api.github.com/users/kolk/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/kolk/subscriptions",

"organizations_url": "https://api.github.com/users/kolk/orgs",

"repos_url": "https://api.github.com/users/kolk/repos",

"events_url": "https://api.github.com/users/kolk/events{/privacy}",

"received_events_url": "https://api.github.com/users/kolk/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Thanks for reporting, @kolk.\r\n\r\nWe are fixing it. "

] | 1,645,490,613,000 | 1,645,517,559,000 | 1,645,517,559,000 | NONE | null | ### Describe the bug

Failure to generate dataset msr_sqa because of duplicate keys.

### Steps to reproduce the bug

```

from datasets import load_dataset

load_dataset("msr_sqa")

```

### Expected results

The example keys should be unique.

### Actual results

```

>>> load_dataset("msr_sqa")

Downloading:

6.72k/? [00:00<00:00, 148kB/s]

Downloading:

2.93k/? [00:00<00:00, 53.8kB/s]

Using custom data configuration default

Downloading and preparing dataset msr_sqa/default (download: 4.57 MiB, generated: 26.25 MiB, post-processed: Unknown size, total: 30.83 MiB) to /root/.cache/huggingface/datasets/msr_sqa/default/0.0.0/70b2a497bd3cc8fc960a3557d2bad1eac5edde824505e15c9c8ebe4c260fd4d1...

Downloading: 100%

4.80M/4.80M [00:00<00:00, 7.49MB/s]

---------------------------------------------------------------------------

DuplicatedKeysError Traceback (most recent call last)

/usr/local/lib/python3.7/dist-packages/datasets/builder.py in _prepare_split(self, split_generator)

1080 example = self.info.features.encode_example(record)

-> 1081 writer.write(example, key)

1082 finally:

8 frames

DuplicatedKeysError: FAILURE TO GENERATE DATASET !

Found duplicate Key: nt-639

Keys should be unique and deterministic in nature

During handling of the above exception, another exception occurred:

DuplicatedKeysError Traceback (most recent call last)

[/usr/local/lib/python3.7/dist-packages/datasets/arrow_writer.py](https://localhost:8080/#) in check_duplicate_keys(self)

449 for hash, key in self.hkey_record:

450 if hash in tmp_record:

--> 451 raise DuplicatedKeysError(key)

452 else:

453 tmp_record.add(hash)

DuplicatedKeysError: FAILURE TO GENERATE DATASET !

Found duplicate Key: nt-639

Keys should be unique and deterministic in nature

```
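
For context, the check that fails here can be sketched in plain Python. This is only an illustration of the idea visible in the traceback (a set of already-seen keys), not the actual `datasets` writer code; `first_duplicate_key` is a hypothetical helper.

```python
from typing import Hashable, Iterable, Optional


def first_duplicate_key(keys: Iterable[Hashable]) -> Optional[Hashable]:
    """Return the first key that appears twice, or None if all keys are unique."""
    seen = set()  # keys encountered so far
    for key in keys:
        if key in seen:
            return key
        seen.add(key)
    return None
```

A loading script that yields the same key twice (here `nt-639`) would trip a check of this kind, while keys derived from something like a running row index would not.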

### Environment info

datasets version: 1.18.3

Platform: Google colab notebook

Python version: 3.7

PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3770/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3770/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3769 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3769/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3769/comments | https://api.github.com/repos/huggingface/datasets/issues/3769/events | https://github.com/huggingface/datasets/issues/3769 | 1,146,258,023 | I_kwDODunzps5EUoJn | 3,769 | `dataset = dataset.map()` causes faiss index lost | {

"login": "Oaklight",

"id": 13076552,

"node_id": "MDQ6VXNlcjEzMDc2NTUy",

"avatar_url": "https://avatars.githubusercontent.com/u/13076552?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Oaklight",

"html_url": "https://github.com/Oaklight",

"followers_url": "https://api.github.com/users/Oaklight/followers",

"following_url": "https://api.github.com/users/Oaklight/following{/other_user}",

"gists_url": "https://api.github.com/users/Oaklight/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Oaklight/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Oaklight/subscriptions",

"organizations_url": "https://api.github.com/users/Oaklight/orgs",

"repos_url": "https://api.github.com/users/Oaklight/repos",

"events_url": "https://api.github.com/users/Oaklight/events{/privacy}",

"received_events_url": "https://api.github.com/users/Oaklight/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,480,763,000 | 1,645,484,262,000 | null | NONE | null | ## Describe the bug

Assigning the result of `map` back to the original dataset variable causes the Faiss index to be lost.

## Steps to reproduce the bug

`my_dataset` is a regularly loaded dataset. It is part of a custom dataset structure.

```python

self.dataset.add_faiss_index('embeddings')

self.dataset.list_indexes()

# ['embeddings']

dataset2 = my_dataset.map(

lambda x: self._get_nearest_examples_batch(x['text']), batched=True

)

# the unexpected result:

dataset2.list_indexes()

# []

self.dataset.list_indexes()

# ['embeddings']

```

In case something is wrong with my `_get_nearest_examples_batch()`, here it is:

```python

def _get_nearest_examples_batch(self, examples, k=5):

queries = embed(examples)

scores_batch, retrievals_batch = self.dataset.get_nearest_examples_batch(self.faiss_column, queries, k)

return {

'neighbors': [batch['text'] for batch in retrievals_batch],

'scores': scores_batch

}

```

## Expected results

`map` shouldn't drop the indexes; in other words, the indexes should be carried over to the generated dataset.

## Actual results

`map` drops the indexes.

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.18.3

- Platform: Ubuntu 20.04.3 LTS

- Python version: 3.8.12

- PyArrow version: 7.0.0

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3769/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3769/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3768 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3768/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3768/comments | https://api.github.com/repos/huggingface/datasets/issues/3768/events | https://github.com/huggingface/datasets/pull/3768 | 1,146,102,442 | PR_kwDODunzps4zPobl | 3,768 | Fix HfFileSystem docstring | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,467,280,000 | 1,645,521,183,000 | 1,645,521,182,000 | MEMBER | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3768/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3768/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3768",

"html_url": "https://github.com/huggingface/datasets/pull/3768",

"diff_url": "https://github.com/huggingface/datasets/pull/3768.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3768.patch",

"merged_at": 1645521182000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3767 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3767/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3767/comments | https://api.github.com/repos/huggingface/datasets/issues/3767/events | https://github.com/huggingface/datasets/pull/3767 | 1,146,036,648 | PR_kwDODunzps4zPahh | 3,767 | Expose method and fix param | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,462,667,000 | 1,645,518,903,000 | 1,645,518,902,000 | CONTRIBUTOR | null | A fix + expose a new method, following https://github.com/huggingface/datasets/pull/3670 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3767/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3767/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3767",

"html_url": "https://github.com/huggingface/datasets/pull/3767",

"diff_url": "https://github.com/huggingface/datasets/pull/3767.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3767.patch",

"merged_at": 1645518902000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3766 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3766/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3766/comments | https://api.github.com/repos/huggingface/datasets/issues/3766/events | https://github.com/huggingface/datasets/pull/3766 | 1,145,829,289 | PR_kwDODunzps4zOujH | 3,766 | Fix head_qa data URL | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,451,570,000 | 1,645,454,360,000 | 1,645,454,359,000 | MEMBER | null | Fix #3758. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3766/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3766/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3766",

"html_url": "https://github.com/huggingface/datasets/pull/3766",

"diff_url": "https://github.com/huggingface/datasets/pull/3766.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3766.patch",

"merged_at": 1645454359000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3765 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3765/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3765/comments | https://api.github.com/repos/huggingface/datasets/issues/3765/events | https://github.com/huggingface/datasets/pull/3765 | 1,145,126,881 | PR_kwDODunzps4zMdIL | 3,765 | Update URL for tagging app | {

"login": "lewtun",

"id": 26859204,

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lewtun",

"html_url": "https://github.com/lewtun",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"repos_url": "https://api.github.com/users/lewtun/repos",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Oh, this URL shouldn't be updated to the tagging app as it's actually used for creating the README - closing this."

] | 1,645,389,271,000 | 1,645,389,370,000 | 1,645,389,366,000 | MEMBER | null | This PR updates the URL for the tagging app to be the one on Spaces. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3765/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3765/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3765",

"html_url": "https://github.com/huggingface/datasets/pull/3765",

"diff_url": "https://github.com/huggingface/datasets/pull/3765.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3765.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/3764 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3764/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3764/comments | https://api.github.com/repos/huggingface/datasets/issues/3764/events | https://github.com/huggingface/datasets/issues/3764 | 1,145,107,050 | I_kwDODunzps5EQPJq | 3,764 | ! | {

"login": "LesiaFedorenko",

"id": 77545307,

"node_id": "MDQ6VXNlcjc3NTQ1MzA3",

"avatar_url": "https://avatars.githubusercontent.com/u/77545307?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/LesiaFedorenko",

"html_url": "https://github.com/LesiaFedorenko",

"followers_url": "https://api.github.com/users/LesiaFedorenko/followers",

"following_url": "https://api.github.com/users/LesiaFedorenko/following{/other_user}",

"gists_url": "https://api.github.com/users/LesiaFedorenko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/LesiaFedorenko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/LesiaFedorenko/subscriptions",

"organizations_url": "https://api.github.com/users/LesiaFedorenko/orgs",

"repos_url": "https://api.github.com/users/LesiaFedorenko/repos",

"events_url": "https://api.github.com/users/LesiaFedorenko/events{/privacy}",

"received_events_url": "https://api.github.com/users/LesiaFedorenko/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [] | 1,645,383,943,000 | 1,645,433,758,000 | 1,645,433,758,000 | NONE | null | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3764/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3764/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3763 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3763/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3763/comments | https://api.github.com/repos/huggingface/datasets/issues/3763/events | https://github.com/huggingface/datasets/issues/3763 | 1,145,099,878 | I_kwDODunzps5EQNZm | 3,763 | It's not possible download `20200501.pt` dataset | {

"login": "jvanz",

"id": 1514798,

"node_id": "MDQ6VXNlcjE1MTQ3OTg=",

"avatar_url": "https://avatars.githubusercontent.com/u/1514798?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jvanz",

"html_url": "https://github.com/jvanz",

"followers_url": "https://api.github.com/users/jvanz/followers",

"following_url": "https://api.github.com/users/jvanz/following{/other_user}",

"gists_url": "https://api.github.com/users/jvanz/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jvanz/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jvanz/subscriptions",

"organizations_url": "https://api.github.com/users/jvanz/orgs",

"repos_url": "https://api.github.com/users/jvanz/repos",

"events_url": "https://api.github.com/users/jvanz/events{/privacy}",

"received_events_url": "https://api.github.com/users/jvanz/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Hi @jvanz, thanks for reporting.\r\n\r\nPlease note that Wikimedia website does not longer host Wikipedia dumps for so old dates.\r\n\r\nFor a list of accessible dump dates of `pt` Wikipedia, please see: https://dumps.wikimedia.org/ptwiki/\r\n\r\nYou can load for example `20220220` `pt` Wikipedia:\r\n```python\r\ndataset = load_dataset(\"wikipedia\", language=\"pt\", date=\"20220220\", beam_runner=\"DirectRunner\")\r\n```",

"> ```python\r\n> dataset = load_dataset(\"wikipedia\", language=\"pt\", date=\"20220220\", beam_runner=\"DirectRunner\")\r\n> ```\r\n\r\nThank you! I did not know that I can do this. I was following the example in the error message when I do not define which language dataset I'm trying to download.\r\n\r\nI've tried something similar changing the date in the `load_dataset` call that I've shared in the bug description. Obviously, it did not work. I need to read the docs more carefully next time. My bad!\r\n\r\nThanks again and sorry for the noise.\r\n\r\n"

] | 1,645,382,098,000 | 1,645,445,172,000 | 1,645,435,506,000 | NONE | null | ## Describe the bug

The dataset `20200501.pt` is broken.

The available datasets: https://dumps.wikimedia.org/ptwiki/

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("wikipedia", "20200501.pt", beam_runner='DirectRunner')

```

## Expected results

I expect to download the dataset locally.

## Actual results

```

>>> from datasets import load_dataset

>>> dataset = load_dataset("wikipedia", "20200501.pt", beam_runner='DirectRunner')

Downloading and preparing dataset wikipedia/20200501.pt to /home/jvanz/.cache/huggingface/datasets/wikipedia/20200501.pt/1.0.0/009f923d9b6dd00c00c8cdc7f408f2b47f45dd4f5fb7982a21f9448f4afbe475...

/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/apache_beam/__init__.py:79: UserWarning: This version of Apache Beam has not been sufficiently tested on Python 3.9. You may encounter bugs or missing features.

warnings.warn(

0%| | 0/1 [00:00<?, ?it/s]

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/load.py", line 1702, in load_dataset

builder_instance.download_and_prepare(

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/builder.py", line 594, in download_and_prepare

self._download_and_prepare(

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/builder.py", line 1245, in _download_and_prepare

super()._download_and_prepare(

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/builder.py", line 661, in _download_and_prepare

split_generators = self._split_generators(dl_manager, **split_generators_kwargs)

File "/home/jvanz/.cache/huggingface/modules/datasets_modules/datasets/wikipedia/009f923d9b6dd00c00c8cdc7f408f2b47f45dd4f5fb7982a21f9448f4afbe475/wikipedia.py", line 420, in _split_generators

downloaded_files = dl_manager.download_and_extract({"info": info_url})

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/download_manager.py", line 307, in download_and_extract

return self.extract(self.download(url_or_urls))

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/download_manager.py", line 195, in download

downloaded_path_or_paths = map_nested(

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/py_utils.py", line 260, in map_nested

mapped = [

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/py_utils.py", line 261, in <listcomp>

_single_map_nested((function, obj, types, None, True))

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/py_utils.py", line 196, in _single_map_nested

return function(data_struct)

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/download_manager.py", line 216, in _download

return cached_path(url_or_filename, download_config=download_config)

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/file_utils.py", line 298, in cached_path

output_path = get_from_cache(

File "/home/jvanz/anaconda3/envs/tf-gpu/lib/python3.9/site-packages/datasets/utils/file_utils.py", line 612, in get_from_cache

raise FileNotFoundError(f"Couldn't find file at {url}")

FileNotFoundError: Couldn't find file at https://dumps.wikimedia.org/ptwiki/20200501/dumpstatus.json

```
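
The URL in the last line of the traceback can be rebuilt from the config name, which makes it easy to check by hand whether a given dump still exists. A minimal sketch, assuming the `<lang>wiki/<date>/dumpstatus.json` pattern seen above holds for simple language codes; `dumpstatus_url` is a hypothetical helper, not part of `datasets`:

```python
def dumpstatus_url(config_name: str) -> str:
    """Build the dump status URL for a config name such as '20200501.pt'."""
    # The config name is assumed to have the form '<date>.<language>'.
    date, language = config_name.split(".", 1)
    return f"https://dumps.wikimedia.org/{language}wiki/{date}/dumpstatus.json"
```

Opening the resulting URL in a browser shows whether the dump for that date is still hosted.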

## Environment info

```

- `datasets` version: 1.18.3

- Platform: Linux-5.3.18-150300.59.49-default-x86_64-with-glibc2.31

- Python version: 3.9.7

- PyArrow version: 6.0.1

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3763/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3763/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3762 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3762/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3762/comments | https://api.github.com/repos/huggingface/datasets/issues/3762/events | https://github.com/huggingface/datasets/issues/3762 | 1,144,849,557 | I_kwDODunzps5EPQSV | 3,762 | `Dataset.class_encode` should support custom class names | {

"login": "Dref360",

"id": 8976546,

"node_id": "MDQ6VXNlcjg5NzY1NDY=",

"avatar_url": "https://avatars.githubusercontent.com/u/8976546?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Dref360",

"html_url": "https://github.com/Dref360",

"followers_url": "https://api.github.com/users/Dref360/followers",

"following_url": "https://api.github.com/users/Dref360/following{/other_user}",

"gists_url": "https://api.github.com/users/Dref360/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Dref360/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Dref360/subscriptions",

"organizations_url": "https://api.github.com/users/Dref360/orgs",

"repos_url": "https://api.github.com/users/Dref360/repos",

"events_url": "https://api.github.com/users/Dref360/events{/privacy}",

"received_events_url": "https://api.github.com/users/Dref360/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Hi @Dref360, thanks a lot for your proposal.\r\n\r\nIt totally makes sense to have more flexibility when class encoding, I agree.\r\n\r\nYou could even further customize the class encoding by passing an instance of `ClassLabel` itself (instead of replicating `ClassLabel` instantiation arguments as `Dataset.class_encode_column` arguments).\r\n\r\nAnd the latter made me think of `Dataset.cast_column`...\r\n\r\nMaybe better to have some others' opinions @lhoestq @mariosasko ",

"Hi @Dref360! You can use [`Dataset.align_labels_with_mapping`](https://huggingface.co/docs/datasets/master/package_reference/main_classes.html#datasets.Dataset.align_labels_with_mapping) after `Dataset.class_encode_column` to assign a different mapping of labels to ids.\r\n\r\n@albertvillanova I'd like to avoid adding more complexity to the API where it's not (absolutely) needed, so I don't think introducing a new param in `Dataset.class_encode_column` is a good idea.\r\n\r\n",

"I wasn't aware that it existed thank you for the link.\n\nClosing then! "

] | 1,645,305,705,000 | 1,645,445,795,000 | 1,645,445,795,000 | CONTRIBUTOR | null | I can make a PR, just wanted approval before starting.

**Is your feature request related to a problem? Please describe.**

It is often the case that classes are not in alphabetical order. The current `class_encode_column` sorts the classes before indexing.

https://github.com/huggingface/datasets/blob/master/src/datasets/arrow_dataset.py#L1235

**Describe the solution you'd like**

I would like to add an **optional** parameter `class_names` to `class_encode_column` that would be used for the mapping instead of sorting the unique values.

**Describe alternatives you've considered**

One can use `map` instead, but I find it harder to read.

```python

CLASS_NAMES = ['apple', 'orange', 'potato']

# `map` expects the function to return a dict of updated columns
ds = ds.map(lambda item: {label_column: CLASS_NAMES.index(item[label_column])})

# Proposition

ds = ds.class_encode_column(label_column, CLASS_NAMES)

```
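
To make the difference concrete, here is a plain-Python sketch of the two mappings. It does not use `datasets`; `encode_labels` is a hypothetical helper that only mimics sorting the unique values (the behaviour described above) versus using a caller-supplied order.

```python
from typing import List, Optional, Sequence


def encode_labels(values: Sequence[str], class_names: Optional[Sequence[str]] = None) -> List[int]:
    """Map string labels to integer ids.

    Without class_names the ids follow the sorted unique values;
    with class_names they follow the given order.
    """
    names = list(class_names) if class_names is not None else sorted(set(values))
    index = {name: i for i, name in enumerate(names)}
    return [index[value] for value in values]
```

With the labels `['potato', 'apple', 'orange']`, the sorted mapping assigns `apple` the id 0, whereas passing `class_names=['potato', 'orange', 'apple']` assigns `potato` the id 0.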

**Additional context**

I can make the PR if this feature is accepted.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3762/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3762/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3761 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3761/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3761/comments | https://api.github.com/repos/huggingface/datasets/issues/3761/events | https://github.com/huggingface/datasets/issues/3761 | 1,144,830,702 | I_kwDODunzps5EPLru | 3,761 | Know your data for HF hub | {

"login": "Muhtasham",

"id": 20128202,

"node_id": "MDQ6VXNlcjIwMTI4MjAy",

"avatar_url": "https://avatars.githubusercontent.com/u/20128202?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Muhtasham",

"html_url": "https://github.com/Muhtasham",

"followers_url": "https://api.github.com/users/Muhtasham/followers",

"following_url": "https://api.github.com/users/Muhtasham/following{/other_user}",

"gists_url": "https://api.github.com/users/Muhtasham/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Muhtasham/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Muhtasham/subscriptions",

"organizations_url": "https://api.github.com/users/Muhtasham/orgs",

"repos_url": "https://api.github.com/users/Muhtasham/repos",

"events_url": "https://api.github.com/users/Muhtasham/events{/privacy}",

"received_events_url": "https://api.github.com/users/Muhtasham/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Hi @Muhtasham you should take a look at https://huggingface.co/blog/data-measurements-tool and accompanying demo app at https://huggingface.co/spaces/huggingface/data-measurements-tool\r\n\r\nWe would be interested in your feedback. cc @meg-huggingface @sashavor @yjernite "

] | 1,645,300,127,000 | 1,645,452,923,000 | 1,645,452,923,000 | NONE | null | **Is your feature request related to a problem? Please describe.**

It would be great to be able to understand datasets, with the goal of improving data quality and helping mitigate fairness and bias issues.

**Describe the solution you'd like**

Something like https://knowyourdata.withgoogle.com/ for HF hub | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3761/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3761/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3760 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3760/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3760/comments | https://api.github.com/repos/huggingface/datasets/issues/3760/events | https://github.com/huggingface/datasets/issues/3760 | 1,144,804,558 | I_kwDODunzps5EPFTO | 3,760 | Unable to view the Gradio flagged call back dataset | {

"login": "kingabzpro",

"id": 36753484,

"node_id": "MDQ6VXNlcjM2NzUzNDg0",

"avatar_url": "https://avatars.githubusercontent.com/u/36753484?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/kingabzpro",

"html_url": "https://github.com/kingabzpro",

"followers_url": "https://api.github.com/users/kingabzpro/followers",

"following_url": "https://api.github.com/users/kingabzpro/following{/other_user}",

"gists_url": "https://api.github.com/users/kingabzpro/gists{/gist_id}",

"starred_url": "https://api.github.com/users/kingabzpro/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/kingabzpro/subscriptions",

"organizations_url": "https://api.github.com/users/kingabzpro/orgs",

"repos_url": "https://api.github.com/users/kingabzpro/repos",

"events_url": "https://api.github.com/users/kingabzpro/events{/privacy}",

"received_events_url": "https://api.github.com/users/kingabzpro/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | open | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Hi @kingabzpro.\r\n\r\nI think you need to create a loading script that creates the dataset from the CSV file and the image paths.\r\n\r\nAs an example, you could have a look at the Food-101 dataset: https://huggingface.co/datasets/food101\r\n- Loading script: https://huggingface.co/datasets/food101/blob/main/food101.py\r\n\r\nOnce the loading script is created, the viewer will show a preview of your dataset. ",

"@albertvillanova I don't think this is the issue. I have created another dataset with similar files and format and it works. https://huggingface.co/datasets/kingabzpro/savtadepth-flags-V2",

"Yes, you are right, that was not the issue.\r\n\r\nJust take into account that sometimes the viewer can take some time until it shows the preview of the dataset.\r\nAfter some time, yours is finally properly shown: https://huggingface.co/datasets/kingabzpro/savtadepth-flags",

"The problem was resolved by deleting the dataset, creating a new one with a similar name, and then clicking on the flag button.",

"I think if you make manual changes to the dataset, the whole system breaks. "

] | 1,645,292,708,000 | 1,645,513,342,000 | null | NONE | null | ## Dataset viewer issue for '*savtadepth-flags*'

**Link:** *[savtadepth-flags](https://huggingface.co/datasets/kingabzpro/savtadepth-flags)*

*With Gradio 2.8.1, the dataset viewer stopped working. I tried to add values manually, but it's not working. The dataset is also not showing the link to the app https://huggingface.co/spaces/kingabzpro/savtadepth.*

Am I the one who added this dataset ? Yes

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3760/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3760/timeline | null | null | {

"url": "",

"html_url": "",

"diff_url": "",

"patch_url": "",

"merged_at": 0

} | false |

https://api.github.com/repos/huggingface/datasets/issues/3759 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3759/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3759/comments | https://api.github.com/repos/huggingface/datasets/issues/3759/events | https://github.com/huggingface/datasets/pull/3759 | 1,143,400,770 | PR_kwDODunzps4zGhQu | 3,759 | Rename GenerateMode to DownloadMode | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "",

"id": 0,

"node_id": "",

"avatar_url": "",

"gravatar_id": "",

"url": "",

"html_url": "",

"followers_url": "",

"following_url": "",

"gists_url": "",

"starred_url": "",

"subscriptions_url": "",

"organizations_url": "",

"repos_url": "",

"events_url": "",

"received_events_url": "",

"type": "",

"site_admin": false

} | [] | null | [

"Thanks! Used here: https://github.com/huggingface/datasets-preview-backend/blob/main/src/datasets_preview_backend/models/dataset.py#L26 :) "

] | 1,645,203,233,000 | 1,645,538,244,000 | 1,645,532,572,000 | MEMBER | null | This PR:

- Renames `GenerateMode` to `DownloadMode`

- Implements `DeprecatedEnum`

- Deprecates `GenerateMode`

Close #769. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3759/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3759/timeline | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3759",

"html_url": "https://github.com/huggingface/datasets/pull/3759",

"diff_url": "https://github.com/huggingface/datasets/pull/3759.diff",