modelId (string, lengths 5 to 122) | author (string, lengths 2 to 42) | last_modified (unknown) | downloads (int64, 0 to 738M) | likes (int64, 0 to 11k) | library_name (string, 245 classes) | tags (sequence, lengths 1 to 4.05k) | pipeline_tag (string, 48 classes) | createdAt (unknown) | card (string, lengths 1 to 901k) |

|---|---|---|---|---|---|---|---|---|---|

akdeniz27/bert-base-turkish-cased-ner | akdeniz27 | "2024-06-18T09:42:03Z" | 718,164 | 16 | transformers | [

"transformers",

"pytorch",

"onnx",

"safetensors",

"bert",

"token-classification",

"tr",

"doi:10.57967/hf/0949",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | "2022-03-02T23:29:05Z" | ---

language: tr

license: mit

widget:

- text: "Mustafa Kemal Atatürk 19 Mayıs 1919'da Samsun'a çıktı."

---

# Turkish Named Entity Recognition (NER) Model

This model is a fine-tuned version of "dbmdz/bert-base-turkish-cased",

trained on a reviewed version of a well-known Turkish NER dataset

(https://github.com/stefan-it/turkish-bert/files/4558187/nerdata.txt).

# Fine-tuning parameters:

```

task = "ner"

model_checkpoint = "dbmdz/bert-base-turkish-cased"

batch_size = 8

label_list = ['O', 'B-PER', 'I-PER', 'B-ORG', 'I-ORG', 'B-LOC', 'I-LOC']

max_length = 512

learning_rate = 2e-5

num_train_epochs = 3

weight_decay = 0.01

```
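
The `label_list` above follows the BIO tagging scheme: `B-` marks the first token of an entity, `I-` a continuation, and `O` a token outside any entity. The sketch below shows one way to build the label/id mappings and to merge per-token tags into entity spans. `group_entities` is a hypothetical helper written for illustration; it is not part of this model or of Transformers, and it assumes one string label per whitespace-separated token.

```python
label_list = ['O', 'B-PER', 'I-PER', 'B-ORG', 'I-ORG', 'B-LOC', 'I-LOC']

# Map between label strings and the integer ids a token classifier predicts.
label2id = {label: i for i, label in enumerate(label_list)}
id2label = {i: label for label, i in label2id.items()}


def group_entities(tokens, labels):
    """Merge BIO-tagged tokens into (entity_type, text) spans (hypothetical helper)."""
    entities = []
    current_type, current_tokens = None, []
    for token, label in zip(tokens, labels):
        if label.startswith("B-") or (label.startswith("I-") and label[2:] != current_type):
            # A new entity starts here; flush the previous one, if any.
            if current_tokens:
                entities.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = label[2:], [token]
        elif label.startswith("I-"):
            # Continuation of the entity that is currently open.
            current_tokens.append(token)
        else:
            # 'O' closes any open entity.
            if current_tokens:
                entities.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
    if current_tokens:
        entities.append((current_type, " ".join(current_tokens)))
    return entities


tokens = ["Mustafa", "Kemal", "Atatürk", "Samsun'a", "çıktı"]
labels = ["B-PER", "I-PER", "I-PER", "B-LOC", "O"]
print(group_entities(tokens, labels))
```

In practice the pipeline's `aggregation_strategy` does this grouping for you; the sketch only makes the label scheme concrete.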

# How to use:

```

from transformers import AutoModelForTokenClassification, AutoTokenizer, pipeline

model = AutoModelForTokenClassification.from_pretrained("akdeniz27/bert-base-turkish-cased-ner")

tokenizer = AutoTokenizer.from_pretrained("akdeniz27/bert-base-turkish-cased-ner")

ner = pipeline('ner', model=model, tokenizer=tokenizer, aggregation_strategy="first")

ner("your text here")

```

Please refer to "https://huggingface.co/transformers/_modules/transformers/pipelines/token_classification.html" for details on entity grouping with the `aggregation_strategy` parameter.

# Reference test results:

* accuracy: 0.9933935699477056

* f1: 0.9592969472710453

* precision: 0.9543530277931161

* recall: 0.9642923563325274
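
As a quick consistency check, the reported F1 can be recomputed from the reported precision and recall. This assumes the usual definition of F1 as the harmonic mean of the two; the card does not state how the metrics were computed, so treat it as a sanity check rather than a description of the evaluation script.

```python
precision = 0.9543530277931161
recall = 0.9642923563325274
reported_f1 = 0.9592969472710453

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)

print(f"recomputed f1 = {f1:.6f}, reported f1 = {reported_f1:.6f}")
```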

Evaluation results on the test sets proposed in the paper ["Küçük, D., Küçük, D., Arıcı, N. 2016. Türkçe Varlık İsmi Tanıma için bir Veri Kümesi ("A Named Entity Recognition Dataset for Turkish"). IEEE Sinyal İşleme, İletişim ve Uygulamaları Kurultayı. Zonguldak, Türkiye."](https://ieeexplore.ieee.org/document/7495744):

* Test Set Acc. Prec. Rec. F1-Score

* 20010000 0.9946 0.9871 0.9463 0.9662

* 20020000 0.9928 0.9134 0.9206 0.9170

* 20030000 0.9942 0.9814 0.9186 0.9489

* 20040000 0.9943 0.9660 0.9522 0.9590

* 20050000 0.9971 0.9539 0.9932 0.9732

* 20060000 0.9993 0.9942 0.9942 0.9942

* 20070000 0.9970 0.9806 0.9439 0.9619

* 20080000 0.9988 0.9821 0.9649 0.9735

* 20090000 0.9977 0.9891 0.9479 0.9681

* 20100000 0.9961 0.9684 0.9293 0.9485

* Overall 0.9961 0.9720 0.9516 0.9617 |

facebook/dinov2-large | facebook | "2023-09-06T11:23:50Z" | 713,069 | 26 | transformers | [

"transformers",

"pytorch",

"safetensors",

"dinov2",

"image-feature-extraction",

"dino",

"vision",

"arxiv:2304.07193",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | image-feature-extraction | "2023-07-17T16:47:01Z" | ---

license: apache-2.0

tags:

- dino

- vision

---

# Vision Transformer (large-sized model) trained using DINOv2

Vision Transformer (ViT) model trained using the DINOv2 method. It was introduced in the paper [DINOv2: Learning Robust Visual Features without Supervision](https://arxiv.org/abs/2304.07193) by Oquab et al. and first released in [this repository](https://github.com/facebookresearch/dinov2).

Disclaimer: The team releasing DINOv2 did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

The Vision Transformer (ViT) is a transformer encoder model (BERT-like) pretrained on a large collection of images in a self-supervised fashion.

Images are presented to the model as a sequence of fixed-size patches, which are linearly embedded. A [CLS] token is added to the beginning of the sequence for use in classification tasks, and absolute position embeddings are added before the sequence is fed to the layers of the Transformer encoder.

Note that this model does not include any fine-tuned heads.

By pre-training the model, it learns an inner representation of images that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled images for instance, you can train a standard classifier by placing a linear layer on top of the pre-trained encoder. One typically places a linear layer on top of the [CLS] token, as the last hidden state of this token can be seen as a representation of an entire image.
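
To make the "sequence of patches plus a [CLS] token" description concrete, the sketch below computes the sequence length the encoder sees for a square image. The image size and patch size are assumed values chosen for illustration; check the checkpoint's configuration for the real ones. `num_tokens` is a hypothetical helper, not a Transformers function.

```python
def num_tokens(image_size: int, patch_size: int, add_cls: bool = True) -> int:
    """Sequence length seen by a ViT encoder for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side ** 2 + (1 if add_cls else 0)


# Assumed values for illustration: a 224x224 input split into 14x14 patches.
# 16 * 16 patches + 1 [CLS] token = 257
print(num_tokens(224, 14))
```

The hidden state at index 0 of that sequence is the [CLS] representation on which a linear classifier would typically be placed.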

## Intended uses & limitations

You can use the raw model for feature extraction. See the [model hub](https://huggingface.co/models?search=facebook/dinov2) to look for

fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model:

```python

from transformers import AutoImageProcessor, AutoModel

from PIL import Image

import requests

url = 'http://images.cocodataset.org/val2017/000000039769.jpg'

image = Image.open(requests.get(url, stream=True).raw)

processor = AutoImageProcessor.from_pretrained('facebook/dinov2-large')

model = AutoModel.from_pretrained('facebook/dinov2-large')

inputs = processor(images=image, return_tensors="pt")

outputs = model(**inputs)

last_hidden_states = outputs.last_hidden_state

```

### BibTeX entry and citation info

```bibtex

@misc{oquab2023dinov2,

title={DINOv2: Learning Robust Visual Features without Supervision},

author={Maxime Oquab and Timothée Darcet and Théo Moutakanni and Huy Vo and Marc Szafraniec and Vasil Khalidov and Pierre Fernandez and Daniel Haziza and Francisco Massa and Alaaeldin El-Nouby and Mahmoud Assran and Nicolas Ballas and Wojciech Galuba and Russell Howes and Po-Yao Huang and Shang-Wen Li and Ishan Misra and Michael Rabbat and Vasu Sharma and Gabriel Synnaeve and Hu Xu and Hervé Jegou and Julien Mairal and Patrick Labatut and Armand Joulin and Piotr Bojanowski},

year={2023},

eprint={2304.07193},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

``` |

facebook/mask2former-swin-base-coco-panoptic | facebook | "2023-09-06T19:14:44Z" | 709,470 | 12 | transformers | [

"transformers",

"pytorch",

"safetensors",

"mask2former",

"vision",

"image-segmentation",

"dataset:coco",

"arxiv:2112.01527",

"arxiv:2107.06278",

"license:other",

"endpoints_compatible",

"region:us"

] | image-segmentation | "2023-01-02T16:06:26Z" | ---

license: other

tags:

- vision

- image-segmentation

datasets:

- coco

widget:

- src: http://images.cocodataset.org/val2017/000000039769.jpg

example_title: Cats

- src: http://images.cocodataset.org/val2017/000000039770.jpg

example_title: Castle

---

# Mask2Former

Mask2Former model trained on COCO panoptic segmentation (base-sized version, Swin backbone). It was introduced in the paper [Masked-attention Mask Transformer for Universal Image Segmentation](https://arxiv.org/abs/2112.01527) and first released in [this repository](https://github.com/facebookresearch/Mask2Former/).

Disclaimer: The team releasing Mask2Former did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

Mask2Former addresses instance, semantic and panoptic segmentation with the same paradigm: by predicting a set of masks and corresponding labels. Hence, all 3 tasks are treated as if they were instance segmentation. Mask2Former outperforms the previous SOTA, [MaskFormer](https://arxiv.org/abs/2107.06278), in terms of both performance and efficiency by (i) replacing the pixel decoder with a more advanced multi-scale deformable attention Transformer, (ii) adopting a Transformer decoder with masked attention to boost performance without introducing additional computation and (iii) improving training efficiency by calculating the loss on subsampled points instead of whole masks.
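
Point (iii) can be illustrated with a small pure-Python sketch: instead of averaging a per-pixel loss over the whole mask, the loss is averaged over a handful of sampled locations. Uniform random sampling and binary cross-entropy are assumptions made here for brevity; this is not the exact sampling strategy or loss combination used by Mask2Former, and the function names are hypothetical.

```python
import math
import random


def bce_with_logits(logit: float, target: float) -> float:
    """Numerically stable binary cross-entropy for a single logit."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def sampled_mask_loss(mask_logits, target_mask, num_points, rng):
    """Average BCE over `num_points` randomly chosen pixels instead of all of them."""
    height, width = len(mask_logits), len(mask_logits[0])
    total = 0.0
    for _ in range(num_points):
        y, x = rng.randrange(height), rng.randrange(width)
        total += bce_with_logits(mask_logits[y][x], target_mask[y][x])
    return total / num_points


rng = random.Random(0)
# Toy 8x8 example: the left half of the image is foreground.
target = [[1.0 if x < 4 else 0.0 for x in range(8)] for _ in range(8)]
logits = [[3.0 if x < 4 else -3.0 for x in range(8)] for _ in range(8)]
loss = sampled_mask_loss(logits, target, num_points=16, rng=rng)
print(loss)
```

The cost of the loss now scales with the number of sampled points rather than with the mask resolution, which is where the training-efficiency gain comes from.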

## Intended uses & limitations

You can use this particular checkpoint for panoptic segmentation. See the [model hub](https://huggingface.co/models?search=mask2former) to look for other

fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model:

```python

import requests

import torch

from PIL import Image

from transformers import AutoImageProcessor, Mask2FormerForUniversalSegmentation

# load Mask2Former fine-tuned on COCO panoptic segmentation

processor = AutoImageProcessor.from_pretrained("facebook/mask2former-swin-base-coco-panoptic")

model = Mask2FormerForUniversalSegmentation.from_pretrained("facebook/mask2former-swin-base-coco-panoptic")

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

inputs = processor(images=image, return_tensors="pt")

with torch.no_grad():

    outputs = model(**inputs)

# model predicts class_queries_logits of shape `(batch_size, num_queries, num_labels + 1)`

# and masks_queries_logits of shape `(batch_size, num_queries, height, width)`

class_queries_logits = outputs.class_queries_logits

masks_queries_logits = outputs.masks_queries_logits

# you can pass them to processor for postprocessing

result = processor.post_process_panoptic_segmentation(outputs, target_sizes=[image.size[::-1]])[0]

# we refer to the demo notebooks for visualization (see "Resources" section in the Mask2Former docs)

predicted_panoptic_map = result["segmentation"]

```

For more code examples, we refer to the [documentation](https://huggingface.co/docs/transformers/master/en/model_doc/mask2former). |

mistralai/Mistral-7B-v0.1 | mistralai | "2023-12-11T19:27:24Z" | 706,916 | 3,285 | transformers | [

"transformers",

"pytorch",

"safetensors",

"mistral",

"text-generation",

"pretrained",

"en",

"arxiv:2310.06825",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2023-09-20T13:03:50Z" | ---

license: apache-2.0

pipeline_tag: text-generation

language:

- en

tags:

- pretrained

inference:

parameters:

temperature: 0.7

---

# Model Card for Mistral-7B-v0.1

The Mistral-7B-v0.1 Large Language Model (LLM) is a pretrained generative text model with 7 billion parameters.

Mistral-7B-v0.1 outperforms Llama 2 13B on all benchmarks we tested.

For full details of this model please read our [paper](https://arxiv.org/abs/2310.06825) and [release blog post](https://mistral.ai/news/announcing-mistral-7b/).

## Model Architecture

Mistral-7B-v0.1 is a transformer model, with the following architecture choices:

- Grouped-Query Attention

- Sliding-Window Attention

- Byte-fallback BPE tokenizer
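
The first two architecture choices can be sketched in a few lines of plain Python. The sizes below are toy values chosen for readability, not Mistral's real configuration, and both function names are hypothetical. The sketch assumes a causal sliding window (each position attends to itself and the preceding `window - 1` positions) and that consecutive query heads share one key/value head.

```python
def sliding_window_mask(seq_len: int, window: int):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        [(j <= i) and (i - j < window) for j in range(seq_len)]
        for i in range(seq_len)
    ]


def kv_head_for_query_head(query_head: int, num_query_heads: int, num_kv_heads: int) -> int:
    """Grouped-query attention: consecutive query heads share one key/value head."""
    group_size = num_query_heads // num_kv_heads
    return query_head // group_size


# Toy sizes chosen for readability, not Mistral's real configuration.
mask = sliding_window_mask(seq_len=6, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))

head_map = [kv_head_for_query_head(h, num_query_heads=8, num_kv_heads=2) for h in range(8)]
print(head_map)
```

Each row of the printed mask has at most `window` allowed positions, so attention cost per token stays bounded as the sequence grows, while the head mapping shows how fewer key/value heads reduce the size of the KV cache.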

## Troubleshooting

- If you see the following error:

```

KeyError: 'mistral'

```

- Or:

```

NotImplementedError: Cannot copy out of meta tensor; no data!

```

Ensure you are utilizing a stable version of Transformers, 4.34.0 or newer.
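
A small check like the following can confirm the installed version before loading the model. It is a sketch using only the standard library; `release_tuple` and `meets_minimum` are hypothetical helpers, and the comparison deliberately ignores pre-release suffixes such as `.dev0`, so a development build of the minimum version would also pass.

```python
import re
from importlib import metadata


def release_tuple(version: str):
    """Leading numeric components of a version string, e.g. '4.37.0.dev0' -> (4, 37, 0)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    return tuple(int(part) if part is not None else 0 for part in match.groups())


def meets_minimum(installed: str, minimum: str = "4.34.0") -> bool:
    """True when the installed release is at least the minimum release."""
    return release_tuple(installed) >= release_tuple(minimum)


try:
    installed = metadata.version("transformers")
    print(installed, "OK" if meets_minimum(installed) else "too old for Mistral")
except metadata.PackageNotFoundError:
    print("transformers is not installed")
```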

## Notice

Mistral 7B is a pretrained base model and therefore does not have any moderation mechanisms.

## The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lélio Renard Lavaud, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed. |

facebook/detr-resnet-101 | facebook | "2023-12-14T17:21:17Z" | 690,324 | 92 | transformers | [

"transformers",

"pytorch",

"safetensors",

"detr",

"object-detection",

"vision",

"dataset:coco",

"arxiv:2005.12872",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | object-detection | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

tags:

- object-detection

- vision

datasets:

- coco

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg

example_title: Savanna

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg

example_title: Football Match

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg

example_title: Airport

---

# DETR (End-to-End Object Detection) model with ResNet-101 backbone

DEtection TRansformer (DETR) model trained end-to-end on COCO 2017 object detection (118k annotated images). It was introduced in the paper [End-to-End Object Detection with Transformers](https://arxiv.org/abs/2005.12872) by Carion et al. and first released in [this repository](https://github.com/facebookresearch/detr).

Disclaimer: The team releasing DETR did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

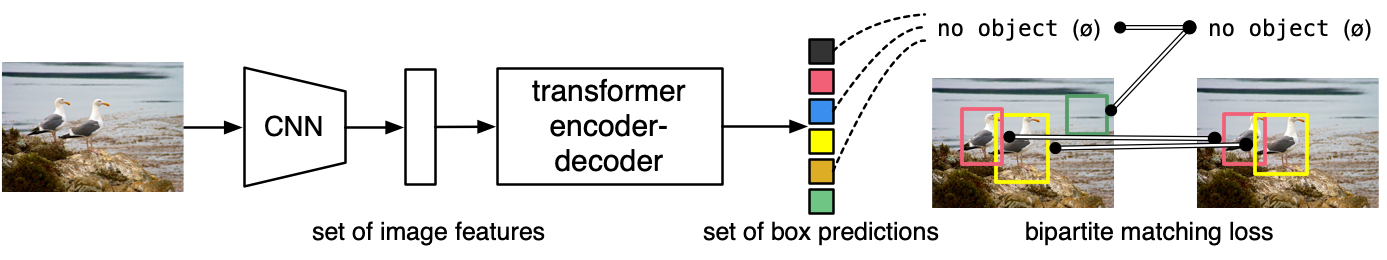

The DETR model is an encoder-decoder transformer with a convolutional backbone. Two heads are added on top of the decoder outputs in order to perform object detection: a linear layer for the class labels and an MLP (multi-layer perceptron) for the bounding boxes. The model uses so-called object queries to detect objects in an image. Each object query looks for a particular object in the image. For COCO, the number of object queries is set to 100.

The model is trained using a "bipartite matching loss": one compares the predicted classes + bounding boxes of each of the N = 100 object queries to the ground truth annotations, padded up to the same length N (so if an image only contains 4 objects, 96 annotations will just have a "no object" as class and "no bounding box" as bounding box). The Hungarian matching algorithm is used to create an optimal one-to-one mapping between each of the N queries and each of the N annotations. Next, standard cross-entropy (for the classes) and a linear combination of the L1 and generalized IoU loss (for the bounding boxes) are used to optimize the parameters of the model.
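
The one-to-one matching step can be illustrated on a toy cost matrix. The sketch below uses a brute-force search over permutations in place of the Hungarian algorithm: it finds the same optimal assignment but is only viable for very small N. The cost values are made up for illustration, and `best_assignment` is a hypothetical helper.

```python
from itertools import permutations


def best_assignment(cost):
    """Return (assignment, total_cost) minimising the sum of cost[query][target].

    Brute force over all permutations: only viable for tiny N, unlike the
    Hungarian algorithm, but it finds the same optimal one-to-one matching.
    """
    n = len(cost)
    best_perm, best_total = None, float("inf")
    for perm in permutations(range(n)):
        total = sum(cost[q][perm[q]] for q in range(n))
        if total < best_total:
            best_perm, best_total = perm, total
    return list(best_perm), best_total


# Toy cost matrix: rows are 3 object queries, columns are 3 (padded) annotations.
cost = [
    [0.9, 0.1, 0.5],
    [0.2, 0.8, 0.6],
    [0.7, 0.4, 0.3],
]
assignment, total = best_assignment(cost)
print(assignment, round(total, 2))
```

Here `assignment[q]` is the annotation matched to query `q`. In DETR the cost would combine class probability and box terms, and the losses described above are then computed on the matched pairs.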

## Intended uses & limitations

You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=facebook/detr) to look for all available DETR models.

### How to use

Here is how to use this model:

```python

from transformers import DetrImageProcessor, DetrForObjectDetection

import torch

from PIL import Image

import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

# you can specify the revision tag if you don't want the timm dependency

processor = DetrImageProcessor.from_pretrained("facebook/detr-resnet-101", revision="no_timm")

model = DetrForObjectDetection.from_pretrained("facebook/detr-resnet-101", revision="no_timm")

inputs = processor(images=image, return_tensors="pt")

outputs = model(**inputs)

# convert outputs (bounding boxes and class logits) to COCO API

# let's only keep detections with score > 0.9

target_sizes = torch.tensor([image.size[::-1]])

results = processor.post_process_object_detection(outputs, target_sizes=target_sizes, threshold=0.9)[0]

for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):

    box = [round(i, 2) for i in box.tolist()]

    print(

        f"Detected {model.config.id2label[label.item()]} with confidence "

        f"{round(score.item(), 3)} at location {box}"

    )

```

This should output (something along the lines of):

```

Detected cat with confidence 0.998 at location [344.06, 24.85, 640.34, 373.74]

Detected remote with confidence 0.997 at location [328.13, 75.93, 372.81, 187.66]

Detected remote with confidence 0.997 at location [39.34, 70.13, 175.56, 118.78]

Detected cat with confidence 0.998 at location [15.36, 51.75, 316.89, 471.16]

Detected couch with confidence 0.995 at location [-0.19, 0.71, 639.73, 474.17]

```

Currently, both the feature extractor and model support PyTorch.

## Training data

The DETR model was trained on [COCO 2017 object detection](https://cocodataset.org/#download), a dataset consisting of 118k/5k annotated images for training/validation respectively.

## Training procedure

### Preprocessing

The exact details of preprocessing of images during training/validation can be found [here](https://github.com/google-research/vision_transformer/blob/master/vit_jax/input_pipeline.py).

Images are resized/rescaled such that the shortest side is at least 800 pixels and the largest side at most 1333 pixels, and normalized across the RGB channels with the ImageNet mean (0.485, 0.456, 0.406) and standard deviation (0.229, 0.224, 0.225).
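
The resize rule and normalisation can be sketched as follows. This is an illustration, not the processor's actual implementation: it assumes the aspect ratio is preserved and that the cap on the longest side takes priority when both constraints cannot be met, which the card does not state. The helper names are hypothetical.

```python
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def resized_shape(height: int, width: int, short_side: int = 800, max_long_side: int = 1333):
    """Scale so the shorter side reaches `short_side`, unless that would push
    the longer side past `max_long_side`; aspect ratio is preserved."""
    scale = short_side / min(height, width)
    if max(height, width) * scale > max_long_side:
        scale = max_long_side / max(height, width)
    return int(round(height * scale)), int(round(width * scale))


def normalize_pixel(rgb):
    """Normalise one RGB pixel with values in [0, 1] using the ImageNet statistics."""
    return tuple((value - mean) / std for value, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


print(resized_shape(480, 640))    # shorter side scaled up to 800
print(resized_shape(400, 1000))   # longest-side cap of 1333 applies
print(normalize_pixel(IMAGENET_MEAN))
```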

### Training

The model was trained for 300 epochs on 16 V100 GPUs. This takes 3 days, with 4 images per GPU (hence a total batch size of 64).

## Evaluation results

This model achieves an AP (average precision) of **43.5** on COCO 2017 validation. For more details regarding evaluation results, we refer to table 1 of the original paper.

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2005-12872,

author = {Nicolas Carion and

Francisco Massa and

Gabriel Synnaeve and

Nicolas Usunier and

Alexander Kirillov and

Sergey Zagoruyko},

title = {End-to-End Object Detection with Transformers},

journal = {CoRR},

volume = {abs/2005.12872},

year = {2020},

url = {https://arxiv.org/abs/2005.12872},

archivePrefix = {arXiv},

eprint = {2005.12872},

timestamp = {Thu, 28 May 2020 17:38:09 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2005-12872.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` |

TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ | TheBloke | "2023-12-14T14:30:44Z" | 689,581 | 127 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"conversational",

"fr",

"it",

"de",

"es",

"en",

"base_model:mistralai/Mixtral-8x7B-Instruct-v0.1",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"4-bit",

"gptq",

"region:us"

] | text-generation | "2023-12-11T18:49:53Z" | ---

base_model: mistralai/Mixtral-8x7B-Instruct-v0.1

inference: false

language:

- fr

- it

- de

- es

- en

license: apache-2.0

model_creator: Mistral AI_

model_name: Mixtral 8X7B Instruct v0.1

model_type: mixtral

prompt_template: '[INST] {prompt} [/INST]

'

quantized_by: TheBloke

widget:

- output:

text: 'Arr, shiver me timbers! Ye have a llama on yer lawn, ye say? Well, that

be a new one for me! Here''s what I''d suggest, arr:

1. Firstly, ensure yer safety. Llamas may look gentle, but they can be protective

if they feel threatened.

2. Try to make the area less appealing to the llama. Remove any food sources

or water that might be attracting it.

3. Contact local animal control or a wildlife rescue organization. They be the

experts and can provide humane ways to remove the llama from yer property.

4. If ye have any experience with animals, you could try to gently herd the

llama towards a nearby field or open space. But be careful, arr!

Remember, arr, it be important to treat the llama with respect and care. It

be a creature just trying to survive, like the rest of us.'

text: '[INST] You are a pirate chatbot who always responds with Arr and pirate speak!

There''s a llama on my lawn, how can I get rid of him? [/INST]'

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Mixtral 8X7B Instruct v0.1 - GPTQ

- Model creator: [Mistral AI_](https://huggingface.co/mistralai)

- Original model: [Mixtral 8X7B Instruct v0.1](https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1)

<!-- description start -->

# Description

This repo contains GPTQ model files for [Mistral AI_'s Mixtral 8X7B Instruct v0.1](https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1).

Mixtral GPTQs currently require:

* Transformers 4.36.0 or later, and

* either AutoGPTQ 0.6 compiled from source, or

* Transformers 4.37.0.dev0 installed from GitHub with: `pip3 install git+https://github.com/huggingface/transformers`

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GGUF)

* [Mistral AI_'s original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Mistral

```

[INST] {prompt} [/INST]

```
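
A minimal sketch of applying this template in Python, for a single-turn prompt only. `build_prompt` is a hypothetical helper written for illustration.

```python
PROMPT_TEMPLATE = "[INST] {prompt} [/INST]"


def build_prompt(user_message: str) -> str:
    """Wrap a single user message in the Mistral instruction markers."""
    return PROMPT_TEMPLATE.format(prompt=user_message.strip())


print(build_prompt("Write a story about llamas"))
```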

<!-- prompt-template end -->

<!-- README_GPTQ.md-compatible clients start -->

## Known compatible clients / servers

GPTQ models are currently supported on Linux (NVIDIA/AMD) and Windows (NVIDIA only). macOS users: please use GGUF models.

Mixtral GPTQs currently have special requirements - see Description above.

<!-- README_GPTQ.md-compatible clients end -->

<!-- README_GPTQ.md-provided-files start -->

## Provided files, and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

Most GPTQ files are made with AutoGPTQ. Mistral models are currently made with Transformers.

<details>

<summary>Explanation of GPTQ parameters</summary>

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" (no grouping) gives the lowest VRAM usage.

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The calibration dataset used during quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ calibration dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama and Mistral models in 4-bit.

</details>
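
To see why a larger group size uses less memory, consider a rough estimate of storage per weight. The sketch assumes each group of weights stores one 16-bit scale and one zero point as wide as the quantised weights; real file layouts differ in detail, so the numbers are indicative only. `effective_bits_per_weight` is a hypothetical helper.

```python
def effective_bits_per_weight(bits: int, group_size=None, scale_bits: int = 16) -> float:
    """Rough storage cost per weight for grouped quantisation.

    Assumes each group of `group_size` weights stores one `scale_bits`-wide
    scale and one `bits`-wide zero point. With group_size=None the per-group
    overhead is treated as negligible.
    """
    if group_size is None:
        return float(bits)
    return bits + (scale_bits + bits) / group_size


for gs in (None, 128, 32):
    print(f"4-bit, GS={gs}: {effective_bits_per_weight(4, gs):.3f} bits/weight")
```

Smaller groups mean more scales and zero points per weight, which buys accuracy at the cost of size.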

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |

| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |

| [main](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/main) | 4 | None | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 23.81 GB | No | 4-bit, with Act Order. No group size, to lower VRAM requirements. |

| [gptq-4bit-128g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-4bit-128g-actorder_True) | 4 | 128 | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 24.70 GB | No | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |

| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 27.42 GB | No | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |

| [gptq-3bit--1g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-3bit--1g-actorder_True) | 3 | None | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 18.01 GB | No | 3-bit, with Act Order and no group size. Lowest possible VRAM requirements. May be lower quality than 3-bit 128g. |

| [gptq-3bit-128g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-3bit-128g-actorder_True) | 3 | 128 | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 18.85 GB | No | 3-bit, with group size 128g and act-order. Higher quality than 128g-False. |

| [gptq-8bit--1g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-8bit--1g-actorder_True) | 8 | None | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 47.04 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements. |

| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [VMware Open Instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 8192 | 48.10 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |
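
The branch names in the table encode the quantisation parameters, so they can be parsed programmatically. The pattern below is inferred from the names listed above and is an assumption about the naming scheme; the `main` branch does not follow it and raises an error in this sketch. `parse_branch` is a hypothetical helper.

```python
import re

BRANCH_PATTERN = re.compile(r"^gptq-(\d+)bit-(-?\d+)g-actorder_(True|False)$")


def parse_branch(name: str) -> dict:
    """Split a branch name such as 'gptq-4bit-128g-actorder_True' into its parameters."""
    match = BRANCH_PATTERN.match(name)
    if match is None:
        raise ValueError(f"unexpected branch name: {name!r}")
    bits, group_size, act_order = match.groups()
    return {
        "bits": int(bits),
        # '-1g' in a branch name corresponds to "None" in the GS column.
        "group_size": None if int(group_size) == -1 else int(group_size),
        "act_order": act_order == "True",
    }


print(parse_branch("gptq-4bit-128g-actorder_True"))
print(parse_branch("gptq-3bit--1g-actorder_True"))
```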

<!-- README_GPTQ.md-provided-files end -->

<!-- README_GPTQ.md-download-from-branches start -->

## How to download, including from branches

### In text-generation-webui

To download from the `main` branch, enter `TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ` in the "Download model" box.

To download from another branch, add `:branchname` to the end of the download name, eg `TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ:gptq-4bit-128g-actorder_True`

### From the command line

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

To download the `main` branch to a folder called `Mixtral-8x7B-Instruct-v0.1-GPTQ`:

```shell

mkdir Mixtral-8x7B-Instruct-v0.1-GPTQ

huggingface-cli download TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ --local-dir Mixtral-8x7B-Instruct-v0.1-GPTQ --local-dir-use-symlinks False

```

To download from a different branch, add the `--revision` parameter:

```shell

mkdir Mixtral-8x7B-Instruct-v0.1-GPTQ

huggingface-cli download TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ --revision gptq-4bit-128g-actorder_True --local-dir Mixtral-8x7B-Instruct-v0.1-GPTQ --local-dir-use-symlinks False

```
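
If you script downloads for several branches, the commands above can be generated rather than typed by hand. The sketch below only builds the command string using the flags already shown; it does not run anything, and `download_command` is a hypothetical helper.

```python
import shlex


def download_command(repo_id: str, local_dir: str, revision=None) -> str:
    """Build the `huggingface-cli download` invocation shown above as a shell string."""
    argv = ["huggingface-cli", "download", repo_id]
    if revision is not None:
        argv += ["--revision", revision]
    argv += ["--local-dir", local_dir, "--local-dir-use-symlinks", "False"]
    return shlex.join(argv)


repo = "TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ"
folder = "Mixtral-8x7B-Instruct-v0.1-GPTQ"
print(download_command(repo, folder))
print(download_command(repo, folder, revision="gptq-4bit-128g-actorder_True"))
```

Building the argument list first and quoting it with `shlex.join` keeps the command safe to paste into a shell even if a folder name contains spaces.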

<details>

<summary>More advanced huggingface-cli download usage</summary>

If you remove the `--local-dir-use-symlinks False` parameter, the files will instead be stored in the central Hugging Face cache directory (default location on Linux is: `~/.cache/huggingface`), and symlinks will be added to the specified `--local-dir`, pointing to their real location in the cache. This allows for interrupted downloads to be resumed, and allows you to quickly clone the repo to multiple places on disk without triggering a download again. The downside, and the reason why I don't list that as the default option, is that the files are then hidden away in a cache folder and it's harder to know where your disk space is being used, and to clear it up if/when you want to remove a downloaded model.

The cache location can be changed with the `HF_HOME` environment variable, and/or the `--cache-dir` parameter to `huggingface-cli`.

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

mkdir Mixtral-8x7B-Instruct-v0.1-GPTQ

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ --local-dir Mixtral-8x7B-Instruct-v0.1-GPTQ --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.

</details>

### With `git` (**not** recommended)

To clone a specific branch with `git`, use a command like this:

```shell

git clone --single-branch --branch gptq-4bit-128g-actorder_True https://huggingface.co/TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ

```

Note that using Git with HF repos is strongly discouraged. It will be much slower than using `huggingface-hub`, and will use twice as much disk space as it has to store the model files twice (it stores every byte both in the intended target folder, and again in the `.git` folder as a blob.)

<!-- README_GPTQ.md-download-from-branches end -->

<!-- README_GPTQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui)

**NOTE**: Requires:

* Transformers 4.36.0, or Transformers 4.37.0.dev0 from Github

* Either AutoGPTQ 0.6 compiled from source and `Loader: AutoGPTQ`,

* or, `Loader: Transformers`, if you installed Transformers from Github: `pip3 install git+https://github.com/huggingface/transformers`

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ`.

- To download from a specific branch, enter for example `TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ:gptq-4bit-128g-actorder_True`

- see Provided Files above for the list of branches for each option.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `Mixtral-8x7B-Instruct-v0.1-GPTQ`

7. The model will automatically load, and is now ready for use!

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

- Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

9. Once you're ready, click the **Text Generation** tab and enter a prompt to get started!

<!-- README_GPTQ.md-text-generation-webui end -->

<!-- README_GPTQ.md-use-from-tgi start -->

## Serving this model from Text Generation Inference (TGI)

Not currently supported for Mixtral models.

<!-- README_GPTQ.md-use-from-tgi end -->

<!-- README_GPTQ.md-use-from-python start -->

## Python code example: inference from this GPTQ model

### Install the necessary packages

Requires: Transformers 4.37.0.dev0 from Github, Optimum 1.16.0 or later, and AutoGPTQ 0.5.1 or later.

```shell

pip3 install --upgrade "git+https://github.com/huggingface/transformers" optimum

# If using PyTorch 2.1 + CUDA 12.x:

pip3 install --upgrade auto-gptq

# or, if using PyTorch 2.1 + CUDA 11.x:

pip3 install --upgrade auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/

```

If you are using PyTorch 2.0, you will need to install AutoGPTQ from source. Likewise if you have problems with the pre-built wheels, you should try building from source:

```shell

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

DISABLE_QIGEN=1 pip3 install .

```

### Example Python code

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/Mixtral-8x7B-Instruct-v0.1-GPTQ"

# To use a different branch, change revision

# For example: revision="gptq-4bit-128g-actorder_True"

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,

device_map="auto",

trust_remote_code=False,

revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Write a story about llamas"

system_message = "You are a story writing assistant"

prompt_template=f'''[INST] {prompt} [/INST]

'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()

output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)

print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_new_tokens=512,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1

)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_GPTQ.md-use-from-python end -->

<!-- README_GPTQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with AutoGPTQ 0.6 (compiled from source) and Transformers 4.37.0 (installed from Github).

<!-- README_GPTQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Michael Levine, 阿明, Trailburnt, Nikolai Manek, John Detwiler, Randy H, Will Dee, Sebastain Graf, NimbleBox.ai, Eugene Pentland, Emad Mostaque, Ai Maven, Jim Angel, Jeff Scroggin, Michael Davis, Manuel Alberto Morcote, Stephen Murray, Robert, Justin Joy, Luke @flexchar, Brandon Frisco, Elijah Stavena, S_X, Dan Guido, Undi ., Komninos Chatzipapas, Shadi, theTransient, Lone Striker, Raven Klaugh, jjj, Cap'n Zoog, Michel-Marie MAUDET (LINAGORA), Matthew Berman, David, Fen Risland, Omer Bin Jawed, Luke Pendergrass, Kalila, OG, Erik Bjäreholt, Rooh Singh, Joseph William Delisle, Dan Lewis, TL, John Villwock, AzureBlack, Brad, Pedro Madruga, Caitlyn Gatomon, K, jinyuan sun, Mano Prime, Alex, Jeffrey Morgan, Alicia Loh, Illia Dulskyi, Chadd, transmissions 11, fincy, Rainer Wilmers, ReadyPlayerEmma, knownsqashed, Mandus, biorpg, Deo Leter, Brandon Phillips, SuperWojo, Sean Connelly, Iucharbius, Jack West, Harry Royden McLaughlin, Nicholas, terasurfer, Vitor Caleffi, Duane Dunston, Johann-Peter Hartmann, David Ziegler, Olakabola, Ken Nordquist, Trenton Dambrowitz, Tom X Nguyen, Vadim, Ajan Kanaga, Leonard Tan, Clay Pascal, Alexandros Triantafyllidis, JM33133, Xule, vamX, ya boyyy, subjectnull, Talal Aujan, Alps Aficionado, wassieverse, Ari Malik, James Bentley, Woland, Spencer Kim, Michael Dempsey, Fred von Graf, Elle, zynix, William Richards, Stanislav Ovsiannikov, Edmond Seymore, Jonathan Leane, Martin Kemka, usrbinkat, Enrico Ros

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: Mistral AI's Mixtral 8X7B Instruct v0.1

# Model Card for Mixtral-8x7B

The Mixtral-8x7B Large Language Model (LLM) is a pretrained generative Sparse Mixture of Experts. The Mixtral-8x7B outperforms Llama 2 70B on most benchmarks we tested.

For full details of this model please read our [release blog post](https://mistral.ai/news/mixtral-of-experts/).

## Warning

This repo contains weights that are compatible with [vLLM](https://github.com/vllm-project/vllm) serving of the model as well as the Hugging Face [transformers](https://github.com/huggingface/transformers) library. It is based on the original Mixtral [torrent release](magnet:?xt=urn:btih:5546272da9065eddeb6fcd7ffddeef5b75be79a7&dn=mixtral-8x7b-32kseqlen&tr=udp%3A%2F%2Fopentracker.i2p.rocks%3A6969%2Fannounce&tr=http%3A%2F%2Ftracker.openbittorrent.com%3A80%2Fannounce), but the file format and parameter names are different. Please note that the model cannot (yet) be instantiated with HF.

## Instruction format

This format must be strictly respected, otherwise the model will generate sub-optimal outputs.

The template used to build a prompt for the Instruct model is defined as follows:

```

<s> [INST] Instruction [/INST] Model answer</s> [INST] Follow-up instruction [/INST]

```

Note that `<s>` and `</s>` are special tokens for beginning of string (BOS) and end of string (EOS) while [INST] and [/INST] are regular strings.

As reference, here is the pseudo-code used to tokenize instructions during fine-tuning:

```python

def tokenize(text):

    return tok.encode(text, add_special_tokens=False)

[BOS_ID] +

tokenize("[INST]") + tokenize(USER_MESSAGE_1) + tokenize("[/INST]") +

tokenize(BOT_MESSAGE_1) + [EOS_ID] +

…

tokenize("[INST]") + tokenize(USER_MESSAGE_N) + tokenize("[/INST]") +

tokenize(BOT_MESSAGE_N) + [EOS_ID]

```

In the pseudo-code above, note that the `tokenize` method should not add a BOS or EOS token automatically, but should add a prefix space.
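The template above can also be assembled at the string level. The following is a minimal sketch, not Mistral's reference implementation: the helper name `build_prompt` and the `(user, assistant)` tuple layout are assumptions made for illustration, and the spacing simply mirrors the template line shown earlier.

```python
# Hypothetical helper (not part of any library): builds the Instruct prompt string
# following the template "<s> [INST] ... [/INST] answer</s> [INST] ... [/INST]".
BOS, EOS = "<s>", "</s>"


def build_prompt(turns):
    """turns: list of (user_message, assistant_message_or_None) tuples."""
    prompt = BOS
    for user_msg, bot_msg in turns:
        # Each user message is wrapped in the plain-string [INST] ... [/INST] markers.
        prompt += f" [INST] {user_msg} [/INST]"
        if bot_msg is not None:
            # A completed assistant turn is closed with the EOS token.
            prompt += f" {bot_msg}{EOS}"
    return prompt


example = build_prompt([
    ("Instruction", "Model answer"),
    ("Follow-up instruction", None),  # last turn is left open for the model to answer
])
print(example)
```

If the resulting string is passed to a tokenizer that already prepends a BOS token, the literal `<s>` should probably be dropped to avoid adding it twice.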

## Run the model

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mixtral-8x7B-Instruct-v0.1"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id)

text = "Hello my name is"

inputs = tokenizer(text, return_tensors="pt")

outputs = model.generate(**inputs, max_new_tokens=20)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

By default, transformers loads the model in full precision. To reduce the memory required to run the model, you can use the optimizations available in the HF ecosystem:

### In half-precision

Note that `float16` precision only works on GPU devices.

<details>

<summary> Click to expand </summary>

```diff

+ import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mixtral-8x7B-Instruct-v0.1"

tokenizer = AutoTokenizer.from_pretrained(model_id)

+ model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=torch.float16).to(0)

text = "Hello my name is"

+ inputs = tokenizer(text, return_tensors="pt").to(0)

outputs = model.generate(**inputs, max_new_tokens=20)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

</details>

### Lower precision (8-bit & 4-bit) using `bitsandbytes`

<details>

<summary> Click to expand </summary>

```diff

+ import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mixtral-8x7B-Instruct-v0.1"

tokenizer = AutoTokenizer.from_pretrained(model_id)

+ model = AutoModelForCausalLM.from_pretrained(model_id, load_in_4bit=True)

text = "Hello my name is"

+ inputs = tokenizer(text, return_tensors="pt").to(0)

outputs = model.generate(**inputs, max_new_tokens=20)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

</details>
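As a rough guide to what the precision options above save, the sketch below estimates the memory needed for the weights alone. The parameter count of about 46.7 billion is an assumption (an approximate total for Mixtral-8x7B, not stated in this card), and the estimate ignores activations, the KV cache, and quantization overhead.

```python
def weight_memory_gib(n_params, bits_per_param):
    """Memory for the weights only, in GiB (1 GiB = 2**30 bytes)."""
    return n_params * bits_per_param / 8 / 2**30


# Assumption: approximate total parameter count for Mixtral-8x7B.
N_PARAMS = 46.7e9

for name, bits in [("float32", 32), ("float16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{name:>8}: {weight_memory_gib(N_PARAMS, bits):6.1f} GiB")
```

Halving the number of bits per parameter halves the weight footprint, which is why 4-bit loading can make the difference between needing several GPUs and fitting on one or two.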

### Load the model with Flash Attention 2

<details>

<summary> Click to expand </summary>

```diff

+ import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mixtral-8x7B-Instruct-v0.1"

tokenizer = AutoTokenizer.from_pretrained(model_id)

+ model = AutoModelForCausalLM.from_pretrained(model_id, use_flash_attention_2=True)

text = "Hello my name is"

+ inputs = tokenizer(text, return_tensors="pt").to(0)

outputs = model.generate(**inputs, max_new_tokens=20)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

</details>

## Limitations

The Mixtral-8x7B Instruct model is a quick demonstration that the base model can be easily fine-tuned to achieve compelling performance.

It does not have any moderation mechanisms. We're looking forward to engaging with the community on ways to make the model finely respect guardrails, allowing for deployment in environments requiring moderated outputs.

# The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Lélio Renard Lavaud, Louis Ternon, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Théophile Gervet, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed.

|

lmsys/fastchat-t5-3b-v1.0 | lmsys | "2023-06-29T22:39:04Z" | 680,695 | 351 | transformers | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | "2023-04-27T23:48:43Z" | ---

license: apache-2.0

inference: false

---

# FastChat-T5 Model Card

## Model details

**Model type:**

FastChat-T5 is an open-source chatbot trained by fine-tuning Flan-t5-xl (3B parameters) on user-shared conversations collected from ShareGPT.

It is based on an encoder-decoder transformer architecture, and can autoregressively generate responses to users' inputs.

**Model date:**

FastChat-T5 was trained in April 2023.

**Organizations developing the model:**

The FastChat developers, primarily Dacheng Li, Lianmin Zheng and Hao Zhang.

**Paper or resources for more information:**

https://github.com/lm-sys/FastChat#FastChat-T5

**License:**

Apache License 2.0

**Where to send questions or comments about the model:**

https://github.com/lm-sys/FastChat/issues

## Intended use

**Primary intended uses:**

The primary use of FastChat-T5 is the commercial usage of large language models and chatbots. It can also be used for research purposes.

**Primary intended users:**

The primary intended users of the model are entrepreneurs and researchers in natural language processing, machine learning, and artificial intelligence.

## Training dataset

70K conversations collected from ShareGPT.com.

## Training details

The ShareGPT data is processed in a question-answering format. Each ChatGPT response is treated as an answer, and the preceding conversation between the user and ChatGPT is treated as the question.
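The question-answer framing can be sketched as follows. This is an illustration only: the function name `to_qa_pairs`, the role labels, and the newline-joined history format are assumptions, since the card does not specify how FastChat serializes the preceding turns.

```python
def to_qa_pairs(conversation):
    """conversation: list of (speaker, text) with speaker in {"user", "assistant"}.

    Returns one (question, answer) pair per assistant turn, where the question
    is everything said before that turn.
    """
    pairs = []
    history = []
    for speaker, text in conversation:
        if speaker == "assistant":
            # Hypothetical serialization: one "speaker: text" line per earlier turn.
            question = "\n".join(f"{s}: {t}" for s, t in history)
            pairs.append((question, text))
        history.append((speaker, text))
    return pairs


conversation = [
    ("user", "Hi"),
    ("assistant", "Hello!"),
    ("user", "How are you?"),
    ("assistant", "Fine."),
]
pairs = to_qa_pairs(conversation)
for question, answer in pairs:
    print(repr(question), "->", repr(answer))
```

A conversation with N assistant turns therefore yields N training examples, with later examples carrying progressively longer questions.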

The encoder bi-directionally encodes a question into a hidden representation. The decoder uses cross-attention to attend to this representation while generating an answer uni-directionally from a start token.

The model was fine-tuned for 3 epochs with a maximum learning rate of 2e-5, a warmup ratio of 0.03, and a cosine learning rate schedule.
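To make the schedule concrete, here is a small sketch of a cosine learning-rate schedule with warmup using the values quoted above. The linear shape of the warmup and the decay to exactly zero are assumptions (they match common implementations, but the card does not state them), and `lr_at` is a hypothetical helper name.

```python
import math


def lr_at(step, total_steps, max_lr=2e-5, warmup_ratio=0.03):
    """Learning rate at a given optimizer step."""
    warmup_steps = int(total_steps * warmup_ratio)
    if step < warmup_steps:
        # Assumed linear warmup from 0 up to max_lr.
        return max_lr * step / max(1, warmup_steps)
    # Cosine decay from max_lr down to 0 over the remaining steps.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * max_lr * (1.0 + math.cos(math.pi * progress))


total = 1000  # hypothetical number of training steps
for step in (0, 15, 30, 515, 1000):
    print(step, lr_at(step, total))
```

With 1000 steps, the first 30 are warmup; the rate peaks at 2e-5 at step 30 and falls to half that value midway through the decay phase.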

## Evaluation dataset

A preliminary evaluation of the model quality is conducted by creating a set of 80 diverse questions and utilizing GPT-4 to judge the model outputs. See https://vicuna.lmsys.org/ for more details.

|

pyannote/speaker-diarization-3.0 | pyannote | "2024-05-10T19:37:39Z" | 677,144 | 150 | pyannote-audio | [

"pyannote-audio",

"pyannote",

"pyannote-audio-pipeline",

"audio",

"voice",

"speech",

"speaker",

"speaker-diarization",

"speaker-change-detection",

"voice-activity-detection",

"overlapped-speech-detection",

"automatic-speech-recognition",

"arxiv:2111.14448",

"arxiv:2012.01477",

"license:mit",

"region:us"

] | automatic-speech-recognition | "2023-09-22T13:40:36Z" | ---

tags:

- pyannote

- pyannote-audio

- pyannote-audio-pipeline

- audio

- voice

- speech

- speaker

- speaker-diarization

- speaker-change-detection

- voice-activity-detection

- overlapped-speech-detection

- automatic-speech-recognition

license: mit

extra_gated_prompt: "The collected information will help acquire a better knowledge of pyannote.audio userbase and help its maintainers improve it further. Though this pipeline uses MIT license and will always remain open-source, we will occasionally email you about premium pipelines and paid services around pyannote."

extra_gated_fields:

Company/university: text

Website: text

---

Using this open-source model in production?

Consider switching to [pyannoteAI](https://www.pyannote.ai) for better and faster options.

# 🎹 Speaker diarization 3.0

This pipeline has been trained by Séverin Baroudi with [pyannote.audio](https://github.com/pyannote/pyannote-audio) `3.0.0` using a combination of the training sets of AISHELL, AliMeeting, AMI, AVA-AVD, DIHARD, Ego4D, MSDWild, REPERE, and VoxConverse.

It ingests mono audio sampled at 16kHz and outputs speaker diarization as an [`Annotation`](http://pyannote.github.io/pyannote-core/structure.html#annotation) instance:

* stereo or multi-channel audio files are automatically downmixed to mono by averaging the channels.

* audio files sampled at a different rate are resampled to 16kHz automatically upon loading.

## Requirements

1. Install [`pyannote.audio`](https://github.com/pyannote/pyannote-audio) `3.0` with `pip install pyannote.audio`

2. Accept [`pyannote/segmentation-3.0`](https://hf.co/pyannote/segmentation-3.0) user conditions

3. Accept [`pyannote/speaker-diarization-3.0`](https://hf.co/pyannote/speaker-diarization-3.0) user conditions

4. Create access token at [`hf.co/settings/tokens`](https://hf.co/settings/tokens).

## Usage

```python

# instantiate the pipeline

from pyannote.audio import Pipeline

pipeline = Pipeline.from_pretrained(

"pyannote/speaker-diarization-3.0",

use_auth_token="HUGGINGFACE_ACCESS_TOKEN_GOES_HERE")

# run the pipeline on an audio file

diarization = pipeline("audio.wav")

# dump the diarization output to disk using RTTM format

with open("audio.rttm", "w") as rttm:

    diarization.write_rttm(rttm)

```
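Once the RTTM file is on disk, it can be post-processed without `pyannote.audio`. The sketch below totals speech time per speaker, assuming the usual RTTM field layout in which the fourth and fifth fields are onset and duration in seconds and the eighth is the speaker label. The function name and the sample lines are made up for illustration.

```python
from collections import defaultdict


def speech_time_per_speaker(rttm_lines):
    """Sum the duration field of every SPEAKER record, grouped by speaker label."""
    totals = defaultdict(float)
    for line in rttm_lines:
        fields = line.split()
        # Skip blank lines and any record that is not a SPEAKER entry.
        if len(fields) < 8 or fields[0] != "SPEAKER":
            continue
        duration = float(fields[4])  # seconds
        speaker = fields[7]
        totals[speaker] += duration
    return dict(totals)


# Hypothetical RTTM content, in the shape written by the pipeline above.
sample = [
    "SPEAKER audio 1 0.00 1.50 <NA> <NA> SPEAKER_00 <NA> <NA>",
    "SPEAKER audio 1 1.50 2.25 <NA> <NA> SPEAKER_01 <NA> <NA>",
    "SPEAKER audio 1 4.00 0.50 <NA> <NA> SPEAKER_00 <NA> <NA>",
]
totals = speech_time_per_speaker(sample)
print(totals)
```

To run it on real output, pass the lines of the file instead, for example `speech_time_per_speaker(open("audio.rttm"))`.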

### Processing on GPU

`pyannote.audio` pipelines run on CPU by default.

You can send them to GPU with the following lines:

```python

import torch

pipeline.to(torch.device("cuda"))

```

Real-time factor is around 2.5% using one Nvidia Tesla V100 SXM2 GPU (for the neural inference part) and one Intel Cascade Lake 6248 CPU (for the clustering part).

In other words, it takes approximately 1.5 minutes to process a one hour conversation.

### Processing from memory

Pre-loading audio files in memory may result in faster processing:

```python

import torchaudio

waveform, sample_rate = torchaudio.load("audio.wav")

diarization = pipeline({"waveform": waveform, "sample_rate": sample_rate})

```

### Monitoring progress

Hooks are available to monitor the progress of the pipeline:

```python

from pyannote.audio.pipelines.utils.hook import ProgressHook

with ProgressHook() as hook:

    diarization = pipeline("audio.wav", hook=hook)

```

### Controlling the number of speakers

In case the number of speakers is known in advance, one can use the `num_speakers` option:

```python

diarization = pipeline("audio.wav", num_speakers=2)

```

One can also provide lower and/or upper bounds on the number of speakers using `min_speakers` and `max_speakers` options:

```python

diarization = pipeline("audio.wav", min_speakers=2, max_speakers=5)

```

## Benchmark

This pipeline has been benchmarked on a large collection of datasets.

Processing is fully automatic:

* no manual voice activity detection (as is sometimes the case in the literature)

* no manual number of speakers (though it is possible to provide it to the pipeline)

* no fine-tuning of the internal models nor tuning of the pipeline hyper-parameters to each dataset

... with the least forgiving diarization error rate (DER) setup (named *"Full"* in [this paper](https://doi.org/10.1016/j.csl.2021.101254)):

* no forgiveness collar

* evaluation of overlapped speech

| Benchmark | [DER%](. "Diarization error rate") | [FA%](. "False alarm rate") | [Miss%](. "Missed detection rate") | [Conf%](. "Speaker confusion rate") | Expected output | File-level evaluation |

| ------------------------------------------------------------------------------------------------------------------------------------------- | ---------------------------------- | --------------------------- | ---------------------------------- | ----------------------------------- | ----------------------------------------------------------------------------------------------------------------------------- | ----------------------------------------------------------------------------------------------------------------------------- |

| [AISHELL-4](http://www.openslr.org/111/) | 12.3 | 3.8 | 4.4 | 4.1 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AISHELL.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AISHELL.SpeakerDiarization.Benchmark.test.eval) |

| [AliMeeting (*channel 1*)](https://www.openslr.org/119/) | 24.3 | 4.4 | 10.0 | 9.9 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AliMeeting.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AliMeeting.SpeakerDiarization.Benchmark.test.eval) |

| [AMI (*headset mix,*](https://groups.inf.ed.ac.uk/ami/corpus/) [*only_words*)](https://github.com/BUTSpeechFIT/AMI-diarization-setup) | 19.0 | 3.6 | 9.5 | 5.9 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AMI.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AMI.SpeakerDiarization.Benchmark.test.eval) |

| [AMI (*array1, channel 1,*](https://groups.inf.ed.ac.uk/ami/corpus/) [*only_words)*](https://github.com/BUTSpeechFIT/AMI-diarization-setup) | 22.2 | 3.8 | 11.2 | 7.3 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AMI-SDM.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AMI-SDM.SpeakerDiarization.Benchmark.test.eval) |

| [AVA-AVD](https://arxiv.org/abs/2111.14448) | 49.1 | 10.8 | 15.7| 22.5 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AVA-AVD.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/AVA-AVD.SpeakerDiarization.Benchmark.test.eval) |

| [DIHARD 3 (*Full*)](https://arxiv.org/abs/2012.01477) | 21.7 | 6.2 | 8.1 | 7.3 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/DIHARD.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/DIHARD.SpeakerDiarization.Benchmark.test.eval) |

| [MSDWild](https://x-lance.github.io/MSDWILD/) | 24.6 | 5.8 | 8.0 | 10.7 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/MSDWILD.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/MSDWILD.SpeakerDiarization.Benchmark.test.eval) |

| [REPERE (*phase 2*)](https://islrn.org/resources/360-758-359-485-0/) | 7.8 | 1.8 | 2.6 | 3.5 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/REPERE.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/REPERE.SpeakerDiarization.Benchmark.test.eval) |

| [VoxConverse (*v0.3*)](https://github.com/joonson/voxconverse) | 11.3 | 4.1 | 3.4 | 3.8 | [RTTM](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/VoxConverse.SpeakerDiarization.Benchmark.test.rttm) | [eval](https://huggingface.co/pyannote/speaker-diarization-3.0.0/blob/main/reproducible_research/VoxConverse.SpeakerDiarization.Benchmark.test.eval) |
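The diarization error rate is conventionally the sum of the false alarm, missed detection, and speaker confusion rates. The sketch below checks that the rows of the table are consistent with that definition. The values are copied from the table; the 0.15 tolerance is an assumption that allows for each column having been rounded to one decimal place.

```python
def der_from_components(false_alarm, miss, confusion):
    """DER as the sum of its three error components (all in percent)."""
    return false_alarm + miss + confusion


# (DER%, FA%, Miss%, Conf%) copied from the benchmark table.
rows = {
    "AISHELL-4": (12.3, 3.8, 4.4, 4.1),
    "AliMeeting (channel 1)": (24.3, 4.4, 10.0, 9.9),
    "AMI (headset mix)": (19.0, 3.6, 9.5, 5.9),
    "AMI (array1, channel 1)": (22.2, 3.8, 11.2, 7.3),
    "AVA-AVD": (49.1, 10.8, 15.7, 22.5),
    "DIHARD 3 (Full)": (21.7, 6.2, 8.1, 7.3),
    "MSDWild": (24.6, 5.8, 8.0, 10.7),
    "REPERE (phase 2)": (7.8, 1.8, 2.6, 3.5),
    "VoxConverse (v0.3)": (11.3, 4.1, 3.4, 3.8),
}

for name, (der, fa, miss, conf) in rows.items():
    total = der_from_components(fa, miss, conf)
    print(f"{name:<26} reported={der:5.1f}  summed={total:5.1f}")
```

Small differences of 0.1 between the reported and summed values are expected from rounding and do not indicate an inconsistency.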

## Citations

```bibtex

@inproceedings{Plaquet23,

author={Alexis Plaquet and Hervé Bredin},

title={{Powerset multi-class cross entropy loss for neural speaker diarization}},

year=2023,

booktitle={Proc. INTERSPEECH 2023},

}

```

```bibtex

@inproceedings{Bredin23,

author={Hervé Bredin},

title={{pyannote.audio 2.1 speaker diarization pipeline: principle, benchmark, and recipe}},

year=2023,

booktitle={Proc. INTERSPEECH 2023},

}

```

|

mistralai/Mistral-7B-Instruct-v0.2 | mistralai | "2024-06-24T08:24:10Z" | 676,997 | 2,398 | transformers | [

"transformers",

"pytorch",

"safetensors",

"mistral",

"text-generation",

"finetuned",

"conversational",

"arxiv:2310.06825",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2023-12-11T13:18:44Z" | ---

license: apache-2.0

pipeline_tag: text-generation

tags:

- finetuned

inference: true

widget:

- messages:

- role: user

content: What is your favorite condiment?

---

# Model Card for Mistral-7B-Instruct-v0.2


> [!CAUTION]

> ⚠️

> The `transformers` tokenizer might give incorrect results as it has not been tested by the Mistral team. To make sure that your encoding and decoding is correct, please use `mistral_common` as shown below:

## Encode and Decode with `mistral_common`

```py

from mistral_common.tokens.tokenizers.mistral import MistralTokenizer

from mistral_common.protocol.instruct.messages import UserMessage

from mistral_common.protocol.instruct.request import ChatCompletionRequest

mistral_models_path = "MISTRAL_MODELS_PATH"

tokenizer = MistralTokenizer.v1()

completion_request = ChatCompletionRequest(messages=[UserMessage(content="Explain Machine Learning to me in a nutshell.")])

tokens = tokenizer.encode_chat_completion(completion_request).tokens

```

## Inference with `mistral_inference`

```py

from mistral_inference.model import Transformer

from mistral_inference.generate import generate

model = Transformer.from_folder(mistral_models_path)

out_tokens, _ = generate([tokens], model, max_tokens=64, temperature=0.0, eos_id=tokenizer.instruct_tokenizer.tokenizer.eos_id)

result = tokenizer.decode(out_tokens[0])

print(result)

```

## Inference with Hugging Face `transformers`

```py

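import torch

from transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained("mistralai/Mistral-7B-Instruct-v0.2")

model.to("cuda")

# `tokens` is the list of token ids produced by the `mistral_common` tokenizer above;

# generate() expects a batched tensor on the same device as the model

input_ids = torch.tensor([tokens]).to("cuda")

generated_ids = model.generate(input_ids, max_new_tokens=1000, do_sample=True)

# decode with mistral tokenizer

result = tokenizer.decode(generated_ids[0].tolist())

print(result)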

```

> [!TIP]

> PRs to correct the `transformers` tokenizer so that it gives 1-to-1 the same results as the `mistral_common` reference implementation are very welcome!

---

The Mistral-7B-Instruct-v0.2 Large Language Model (LLM) is an instruct fine-tuned version of the Mistral-7B-v0.2.

Mistral-7B-v0.2 has the following changes compared to Mistral-7B-v0.1:

- 32k context window (vs 8k context in v0.1)

- Rope-theta = 1e6

- No Sliding-Window Attention

For full details of this model please read our [paper](https://arxiv.org/abs/2310.06825) and [release blog post](https://mistral.ai/news/la-plateforme/).

## Instruction format

In order to leverage instruction fine-tuning, your prompt should be surrounded by `[INST]` and `[/INST]` tokens. The very first instruction should begin with a beginning-of-sentence id. The next instructions should not. The assistant generation will be ended by the end-of-sentence token id.

E.g.

```

text = "<s>[INST] What is your favourite condiment? [/INST]"

"Well, I'm quite partial to a good squeeze of fresh lemon juice. It adds just the right amount of zesty flavour to whatever I'm cooking up in the kitchen!</s> "

"[INST] Do you have mayonnaise recipes? [/INST]"

```

This format is available as a [chat template](https://huggingface.co/docs/transformers/main/chat_templating) via the `apply_chat_template()` method:

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda" # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained("mistralai/Mistral-7B-Instruct-v0.2")

tokenizer = AutoTokenizer.from_pretrained("mistralai/Mistral-7B-Instruct-v0.2")

messages = [

{"role": "user", "content": "What is your favourite condiment?"},

{"role": "assistant", "content": "Well, I'm quite partial to a good squeeze of fresh lemon juice. It adds just the right amount of zesty flavour to whatever I'm cooking up in the kitchen!"},

{"role": "user", "content": "Do you have mayonnaise recipes?"}

]

encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")

model_inputs = encodeds.to(device)

model.to(device)

generated_ids = model.generate(model_inputs, max_new_tokens=1000, do_sample=True)

decoded = tokenizer.batch_decode(generated_ids)

print(decoded[0])

```

## Troubleshooting

- If you see the following error:

```

Traceback (most recent call last):

File "", line 1, in

File "/transformers/models/auto/auto_factory.py", line 482, in from_pretrained

config, kwargs = AutoConfig.from_pretrained(

File "/transformers/models/auto/configuration_auto.py", line 1022, in from_pretrained

config_class = CONFIG_MAPPING[config_dict["model_type"]]

File "/transformers/models/auto/configuration_auto.py", line 723, in getitem

raise KeyError(key)

KeyError: 'mistral'

```

Installing transformers from source should solve the issue:

`pip install git+https://github.com/huggingface/transformers`

This should not be required after transformers-v4.33.4.

## Limitations

The Mistral 7B Instruct model is a quick demonstration that the base model can be easily fine-tuned to achieve compelling performance.

It does not have any moderation mechanisms. We're looking forward to engaging with the community on ways to make the model finely respect guardrails, allowing for deployment in environments requiring moderated outputs.

## The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Lélio Renard Lavaud, Louis Ternon, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Théophile Gervet, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed. |

WhereIsAI/UAE-Large-V1 | WhereIsAI | "2024-05-26T04:38:54Z" | 675,913 | 194 | sentence-transformers | [

"sentence-transformers",

"onnx",

"safetensors",

"bert",

"feature-extraction",

"mteb",

"sentence_embedding",

"feature_extraction",

"transformers",

"transformers.js",

"en",

"arxiv:2309.12871",

"license:mit",

"model-index",

"endpoints_compatible",

"region:us"

] | feature-extraction | "2023-12-04T02:03:27Z" | ---

tags:

- mteb

- sentence_embedding

- feature_extraction

- sentence-transformers

- transformers

- transformers.js

model-index:

- name: UAE-Large-V1

results:

- task:

type: Classification

dataset:

type: mteb/amazon_counterfactual

name: MTEB AmazonCounterfactualClassification (en)

config: en

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

value: 75.55223880597015

- type: ap

value: 38.264070815317794

- type: f1

value: 69.40977934769845

- task:

type: Classification

dataset:

type: mteb/amazon_polarity

name: MTEB AmazonPolarityClassification

config: default

split: test

revision: e2d317d38cd51312af73b3d32a06d1a08b442046

metrics:

- type: accuracy

value: 92.84267499999999

- type: ap

value: 89.57568507997713

- type: f1

value: 92.82590734337774

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (en)

config: en

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 48.292

- type: f1

value: 47.90257816032778

- task:

type: Retrieval

dataset:

type: arguana

name: MTEB ArguAna

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 42.105

- type: map_at_10

value: 58.181000000000004

- type: map_at_100

value: 58.653999999999996

- type: map_at_1000

value: 58.657000000000004

- type: map_at_3

value: 54.386

- type: map_at_5

value: 56.757999999999996

- type: mrr_at_1

value: 42.745

- type: mrr_at_10

value: 58.437

- type: mrr_at_100

value: 58.894999999999996

- type: mrr_at_1000

value: 58.897999999999996

- type: mrr_at_3

value: 54.635

- type: mrr_at_5

value: 56.99999999999999

- type: ndcg_at_1

value: 42.105

- type: ndcg_at_10

value: 66.14999999999999

- type: ndcg_at_100

value: 68.048

- type: ndcg_at_1000

value: 68.11399999999999

- type: ndcg_at_3

value: 58.477000000000004

- type: ndcg_at_5

value: 62.768

- type: precision_at_1

value: 42.105

- type: precision_at_10

value: 9.110999999999999

- type: precision_at_100

value: 0.991

- type: precision_at_1000

value: 0.1

- type: precision_at_3

value: 23.447000000000003

- type: precision_at_5

value: 16.159000000000002

- type: recall_at_1

value: 42.105

- type: recall_at_10

value: 91.11

- type: recall_at_100

value: 99.14699999999999

- type: recall_at_1000

value: 99.644

- type: recall_at_3

value: 70.341

- type: recall_at_5

value: 80.797

- task:

type: Clustering

dataset:

type: mteb/arxiv-clustering-p2p

name: MTEB ArxivClusteringP2P

config: default

split: test

revision: a122ad7f3f0291bf49cc6f4d32aa80929df69d5d

metrics:

- type: v_measure

value: 49.02580759154173

- task:

type: Clustering

dataset:

type: mteb/arxiv-clustering-s2s

name: MTEB ArxivClusteringS2S

config: default

split: test

revision: f910caf1a6075f7329cdf8c1a6135696f37dbd53

metrics:

- type: v_measure

value: 43.093601280163554

- task:

type: Reranking

dataset:

type: mteb/askubuntudupquestions-reranking

name: MTEB AskUbuntuDupQuestions

config: default

split: test

revision: 2000358ca161889fa9c082cb41daa8dcfb161a54

metrics:

- type: map

value: 64.19590406875427

- type: mrr

value: 77.09547992788991

- task:

type: STS

dataset:

type: mteb/biosses-sts

name: MTEB BIOSSES

config: default

split: test

revision: d3fb88f8f02e40887cd149695127462bbcf29b4a

metrics:

- type: cos_sim_pearson

value: 87.86678362843676

- type: cos_sim_spearman

value: 86.1423242570783

- type: euclidean_pearson

value: 85.98994198511751

- type: euclidean_spearman

value: 86.48209103503942

- type: manhattan_pearson

value: 85.6446436316182

- type: manhattan_spearman

value: 86.21039809734357

- task:

type: Classification

dataset:

type: mteb/banking77

name: MTEB Banking77Classification

config: default

split: test

revision: 0fd18e25b25c072e09e0d92ab615fda904d66300

metrics:

- type: accuracy

value: 87.69155844155844

- type: f1

value: 87.68109381943547

- task:

type: Clustering

dataset:

type: mteb/biorxiv-clustering-p2p

name: MTEB BiorxivClusteringP2P

config: default

split: test

revision: 65b79d1d13f80053f67aca9498d9402c2d9f1f40

metrics:

- type: v_measure

value: 39.37501687500394

- task:

type: Clustering

dataset:

type: mteb/biorxiv-clustering-s2s

name: MTEB BiorxivClusteringS2S

config: default

split: test

revision: 258694dd0231531bc1fd9de6ceb52a0853c6d908

metrics:

- type: v_measure

value: 37.23401405155885

- task:

type: Retrieval

dataset:

type: BeIR/cqadupstack

name: MTEB CQADupstackAndroidRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 30.232

- type: map_at_10

value: 41.404999999999994

- type: map_at_100

value: 42.896

- type: map_at_1000

value: 43.028

- type: map_at_3

value: 37.925

- type: map_at_5

value: 39.865

- type: mrr_at_1

value: 36.338

- type: mrr_at_10

value: 46.969

- type: mrr_at_100

value: 47.684

- type: mrr_at_1000

value: 47.731

- type: mrr_at_3

value: 44.063

- type: mrr_at_5

value: 45.908

- type: ndcg_at_1

value: 36.338

- type: ndcg_at_10

value: 47.887

- type: ndcg_at_100

value: 53.357

- type: ndcg_at_1000

value: 55.376999999999995

- type: ndcg_at_3

value: 42.588

- type: ndcg_at_5

value: 45.132

- type: precision_at_1

value: 36.338

- type: precision_at_10

value: 9.17

- type: precision_at_100

value: 1.4909999999999999

- type: precision_at_1000

value: 0.196

- type: precision_at_3

value: 20.315

- type: precision_at_5

value: 14.793000000000001

- type: recall_at_1

value: 30.232

- type: recall_at_10

value: 60.67399999999999

- type: recall_at_100

value: 83.628

- type: recall_at_1000

value: 96.209

- type: recall_at_3

value: 45.48

- type: recall_at_5

value: 52.354

- task:

type: Retrieval

dataset:

type: BeIR/cqadupstack

name: MTEB CQADupstackEnglishRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 32.237

- type: map_at_10

value: 42.829

- type: map_at_100

value: 44.065

- type: map_at_1000

value: 44.199

- type: map_at_3

value: 39.885999999999996

- type: map_at_5

value: 41.55

- type: mrr_at_1

value: 40.064

- type: mrr_at_10

value: 48.611

- type: mrr_at_100

value: 49.245

- type: mrr_at_1000

value: 49.29

- type: mrr_at_3

value: 46.561

- type: mrr_at_5

value: 47.771

- type: ndcg_at_1

value: 40.064

- type: ndcg_at_10

value: 48.388

- type: ndcg_at_100

value: 52.666999999999994

- type: ndcg_at_1000

value: 54.67100000000001

- type: ndcg_at_3

value: 44.504

- type: ndcg_at_5

value: 46.303

- type: precision_at_1

value: 40.064

- type: precision_at_10

value: 9.051

- type: precision_at_100

value: 1.4500000000000002

- type: precision_at_1000

value: 0.193

- type: precision_at_3

value: 21.444

- type: precision_at_5

value: 15.045

- type: recall_at_1

value: 32.237

- type: recall_at_10

value: 57.943999999999996

- type: recall_at_100

value: 75.98700000000001

- type: recall_at_1000

value: 88.453

- type: recall_at_3

value: 46.268

- type: recall_at_5

value: 51.459999999999994

- task:

type: Retrieval

dataset:

type: BeIR/cqadupstack

name: MTEB CQADupstackGamingRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 38.797

- type: map_at_10

value: 51.263000000000005

- type: map_at_100

value: 52.333

- type: map_at_1000

value: 52.393

- type: map_at_3

value: 47.936

- type: map_at_5

value: 49.844

- type: mrr_at_1

value: 44.389

- type: mrr_at_10

value: 54.601

- type: mrr_at_100

value: 55.300000000000004

- type: mrr_at_1000

value: 55.333

- type: mrr_at_3

value: 52.068999999999996

- type: mrr_at_5

value: 53.627

- type: ndcg_at_1

value: 44.389

- type: ndcg_at_10

value: 57.193000000000005

- type: ndcg_at_100

value: 61.307

- type: ndcg_at_1000

value: 62.529

- type: ndcg_at_3

value: 51.607

- type: ndcg_at_5

value: 54.409

- type: precision_at_1

value: 44.389

- type: precision_at_10

value: 9.26

- type: precision_at_100

value: 1.222

- type: precision_at_1000

value: 0.13699999999999998

- type: precision_at_3

value: 23.03

- type: precision_at_5

value: 15.887

- type: recall_at_1

value: 38.797

- type: recall_at_10

value: 71.449

- type: recall_at_100

value: 88.881

- type: recall_at_1000

value: 97.52

- type: recall_at_3

value: 56.503

- type: recall_at_5

value: 63.392

- task:

type: Retrieval

dataset:

type: BeIR/cqadupstack

name: MTEB CQADupstackGisRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 27.291999999999998

- type: map_at_10

value: 35.65

- type: map_at_100

value: 36.689

- type: map_at_1000

value: 36.753

- type: map_at_3

value: 32.995000000000005

- type: map_at_5

value: 34.409

- type: mrr_at_1

value: 29.04

- type: mrr_at_10

value: 37.486000000000004

- type: mrr_at_100

value: 38.394

- type: mrr_at_1000

value: 38.445

- type: mrr_at_3

value: 35.028

- type: mrr_at_5

value: 36.305

- type: ndcg_at_1

value: 29.04

- type: ndcg_at_10

value: 40.613

- type: ndcg_at_100

value: 45.733000000000004

- type: ndcg_at_1000

value: 47.447

- type: ndcg_at_3

value: 35.339999999999996

- type: ndcg_at_5

value: 37.706

- type: precision_at_1

value: 29.04

- type: precision_at_10

value: 6.192

- type: precision_at_100

value: 0.9249999999999999

- type: precision_at_1000

value: 0.11

- type: precision_at_3

value: 14.802000000000001

- type: precision_at_5

value: 10.305

- type: recall_at_1

value: 27.291999999999998

- type: recall_at_10

value: 54.25299999999999

- type: recall_at_100

value: 77.773

- type: recall_at_1000

value: 90.795

- type: recall_at_3

value: 39.731

- type: recall_at_5

value: 45.403999999999996

- task:

type: Retrieval

dataset:

type: BeIR/cqadupstack

name: MTEB CQADupstackMathematicaRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 18.326

- type: map_at_10

value: 26.290999999999997

- type: map_at_100

value: 27.456999999999997

- type: map_at_1000

value: 27.583000000000002

- type: map_at_3

value: 23.578

- type: map_at_5

value: 25.113000000000003

- type: mrr_at_1

value: 22.637

- type: mrr_at_10

value: 31.139

- type: mrr_at_100

value: 32.074999999999996

- type: mrr_at_1000

value: 32.147

- type: mrr_at_3

value: 28.483000000000004

- type: mrr_at_5

value: 29.963

- type: ndcg_at_1

value: 22.637

- type: ndcg_at_10

value: 31.717000000000002

- type: ndcg_at_100

value: 37.201

- type: ndcg_at_1000

value: 40.088

- type: ndcg_at_3

value: 26.686

- type: ndcg_at_5

value: 29.076999999999998

- type: precision_at_1

value: 22.637

- type: precision_at_10

value: 5.7090000000000005

- type: precision_at_100

value: 0.979

- type: precision_at_1000

value: 0.13799999999999998

- type: precision_at_3

value: 12.894

- type: precision_at_5

value: 9.328

- type: recall_at_1

value: 18.326

- type: recall_at_10

value: 43.824999999999996

- type: recall_at_100

value: 67.316

- type: recall_at_1000

value: 87.481

- type: recall_at_3

value: 29.866999999999997

- type: recall_at_5