
---

title: NIST_MT

emoji: 🤗

colorFrom: purple

colorTo: red

sdk: gradio

sdk_version: 3.19.1

app_file: app.py

pinned: false

tags:

- evaluate

- metric

- machine-translation

description:

DARPA commissioned NIST to develop an MT evaluation facility based on the BLEU score.

---

# Metric Card for NIST's MT metric

## Metric Description

DARPA commissioned NIST to develop an MT evaluation facility based on the BLEU

score. The official script used by NIST to compute BLEU and NIST score is

mteval-14.pl. The main differences are:

- BLEU uses geometric mean of the ngram overlaps, NIST uses arithmetic mean.

- NIST has a different brevity penalty

- NIST score from mteval-14.pl has a self-contained tokenizer (in the Hugging Face implementation we rely on NLTK's

implementation of the NIST-specific tokenizer)
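To make the first two differences concrete, here is a small sketch in plain Python. It is not the `mteval-14.pl` or NLTK code. The precision values are made up, the real NIST score averages information-weighted n-gram matches rather than raw precisions, and the brevity penalty follows our reading of Doddington's formulation (calibrated so that a hypothesis two thirds as long as the reference gets a penalty of 0.5).

```python
import math

def geometric_mean(values):
    """BLEU-style averaging: a single zero wipes out the whole score."""
    if any(v == 0 for v in values):
        return 0.0
    return math.exp(sum(math.log(v) for v in values) / len(values))

def arithmetic_mean(values):
    """NIST-style averaging: a zero only lowers the score."""
    return sum(values) / len(values)

def bleu_brevity_penalty(hyp_len, ref_len):
    """BLEU: exp(1 - r/c) when the hypothesis is shorter than the reference."""
    return 1.0 if hyp_len >= ref_len else math.exp(1 - ref_len / hyp_len)

def nist_brevity_penalty(hyp_len, ref_len):
    """NIST: exp(beta * log^2(min(c/r, 1))), beta chosen so c/r = 2/3 gives 0.5."""
    beta = math.log(0.5) / math.log(1.5) ** 2
    ratio = min(hyp_len / ref_len, 1.0)
    return math.exp(beta * math.log(ratio) ** 2)

# Hypothetical 1- to 4-gram precisions with no matching 4-gram.
precisions = [0.8, 0.5, 0.25, 0.0]
print(geometric_mean(precisions))   # 0.0
print(arithmetic_mean(precisions))
print(bleu_brevity_penalty(12, 18), nist_brevity_penalty(12, 18))
```

With these toy numbers, the geometric mean collapses to zero as soon as one n-gram order has no match, while the arithmetic mean stays positive.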

## Intended Uses

NIST was developed for machine translation evaluation.

## How to Use

```python

import evaluate

nist_mt = evaluate.load("nist_mt")

hypothesis1 = "It is a guide to action which ensures that the military always obeys the commands of the party"

reference1 = "It is a guide to action that ensures that the military will forever heed Party commands"

reference2 = "It is the guiding principle which guarantees the military forces always being under the command of the Party"

nist_mt.compute(predictions=[hypothesis1], references=[[reference1, reference2]])

# {'nist_mt': 3.3709935957649324}

```

### Inputs

- **predictions**: tokenized predictions to score. For sentence-level NIST, a list of tokens (str);

for corpus-level NIST, a list (sentences) of lists of tokens (str)

- **references**: potentially multiple tokenized references for each prediction. For sentence-level NIST, a

list (multiple potential references) of list of tokens (str); for corpus-level NIST, a list (corpus) of lists

(multiple potential references) of lists of tokens (str)

- **n**: highest n-gram order

- **tokenize_kwargs**: arguments passed to the tokenizer (see: https://github.com/nltk/nltk/blob/90fa546ea600194f2799ee51eaf1b729c128711e/nltk/tokenize/nist.py#L139)

### Output Values

- **nist_mt** (`float`): NIST score

Output Example:

```python

{'nist_mt': 3.3709935957649324}

```

## Citation

```bibtex

@inproceedings{10.5555/1289189.1289273,

author = {Doddington, George},

title = {Automatic Evaluation of Machine Translation Quality Using N-Gram Co-Occurrence Statistics},

year = {2002},

publisher = {Morgan Kaufmann Publishers Inc.},

address = {San Francisco, CA, USA},

booktitle = {Proceedings of the Second International Conference on Human Language Technology Research},

pages = {138–145},

numpages = {8},

location = {San Diego, California},

series = {HLT '02}

}

```

## Further References

This Hugging Face implementation uses [the NLTK implementation](https://github.com/nltk/nltk/blob/develop/nltk/translate/nist_score.py)

| huggingface/evaluate/blob/main/metrics/nist_mt/README.md |

# SSL ResNet

**Residual Networks**, or **ResNets**, learn residual functions with reference to the layer inputs, instead of learning unreferenced functions. Instead of hoping each few stacked layers directly fit a desired underlying mapping, residual nets let these layers fit a residual mapping. They stack [residual blocks](https://paperswithcode.com/method/residual-block) on top of each other to form a network: e.g. a ResNet-50 has fifty layers using these blocks.
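The residual idea can be sketched in a few lines of plain Python. This is a toy illustration, not timm's implementation: `residual_fn` is a hypothetical stand-in for the stacked layers, and vectors are plain lists.

```python
def residual_block(x, residual_fn):
    """Return F(x) + x, where residual_fn plays the role of the stacked layers F."""
    fx = residual_fn(x)
    return [f + xi for f, xi in zip(fx, x)]

def zero_layers(x):
    """Stand-in for stacked layers whose weights are all zero."""
    return [0.0 for _ in x]

def small_correction(x):
    """Stand-in for layers that learned a small adjustment to their input."""
    return [0.1 * xi for xi in x]

x = [1.0, -2.0, 3.0]
print(residual_block(x, zero_layers))       # [1.0, -2.0, 3.0]: the block is the identity
print(residual_block(x, small_correction))  # the input plus a small learned correction
```

Because the skip connection carries `x` through unchanged, layers that learn nothing leave the block as an identity mapping, which is one reason deep stacks of such blocks remain trainable.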

The model in this collection utilises semi-supervised learning to improve the performance of the model. The approach brings important gains to standard architectures for image, video and fine-grained classification.

Please note the CC-BY-NC 4.0 license on these weights: non-commercial use only.

## How do I use this model on an image?

To load a pretrained model:

```py

>>> import timm

>>> model = timm.create_model('ssl_resnet18', pretrained=True)

>>> model.eval()

```

To load and preprocess the image:

```py

>>> import urllib.request

>>> from PIL import Image

>>> from timm.data import resolve_data_config

>>> from timm.data.transforms_factory import create_transform

>>> config = resolve_data_config({}, model=model)

>>> transform = create_transform(**config)

>>> url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")

>>> urllib.request.urlretrieve(url, filename)

>>> img = Image.open(filename).convert('RGB')

>>> tensor = transform(img).unsqueeze(0) # transform and add batch dimension

```

To get the model predictions:

```py

>>> import torch

>>> with torch.no_grad():

...     out = model(tensor)

>>> probabilities = torch.nn.functional.softmax(out[0], dim=0)

>>> print(probabilities.shape)

>>> # prints: torch.Size([1000])

```

To get the top-5 predictions class names:

```py

>>> # Get imagenet class mappings

>>> url, filename = ("https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt", "imagenet_classes.txt")

>>> urllib.request.urlretrieve(url, filename)

>>> with open("imagenet_classes.txt", "r") as f:
...     categories = [s.strip() for s in f.readlines()]

>>> # Print top categories per image

>>> top5_prob, top5_catid = torch.topk(probabilities, 5)

>>> for i in range(top5_prob.size(0)):
...     print(categories[top5_catid[i]], top5_prob[i].item())

>>> # prints class names and probabilities like:

>>> # [('Samoyed', 0.6425196528434753), ('Pomeranian', 0.04062102362513542), ('keeshond', 0.03186424449086189), ('white wolf', 0.01739676296710968), ('Eskimo dog', 0.011717947199940681)]

```
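For readers who want to see what the softmax and top-k steps compute, here is a dependency-free sketch. The logits and labels are hypothetical, and the helper names `softmax` and `top_k` are ours; in practice you would keep using `torch.nn.functional.softmax` and `torch.topk` as above.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(probs, k):
    """Return the k (index, probability) pairs with the highest probability."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [(i, probs[i]) for i in ranked]

logits = [2.0, 0.5, -1.0, 3.0]          # hypothetical 4-class logits
labels = ["cat", "dog", "fox", "owl"]   # hypothetical class names
probs = softmax(logits)
for idx, p in top_k(probs, 2):
    print(labels[idx], round(p, 4))
```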

Replace the model name with the variant you want to use, e.g. `ssl_resnet18`. You can find the IDs in the model summaries at the top of this page.

To extract image features with this model, follow the [timm feature extraction examples](../feature_extraction) and change the model name to the one you want to use.

## How do I finetune this model?

You can finetune any of the pre-trained models just by changing the classifier (the last layer).

```py

>>> model = timm.create_model('ssl_resnet18', pretrained=True, num_classes=NUM_FINETUNE_CLASSES)

```

To finetune on your own dataset, you have to write a training loop or adapt [timm's training

script](https://github.com/rwightman/pytorch-image-models/blob/master/train.py) to use your dataset.

## How do I train this model?

You can follow the [timm recipe scripts](../scripts) for training a new model afresh.

## Citation

```BibTeX

@article{DBLP:journals/corr/abs-1905-00546,

author = {I. Zeki Yalniz and

Herv{\'{e}} J{\'{e}}gou and

Kan Chen and

Manohar Paluri and

Dhruv Mahajan},

title = {Billion-scale semi-supervised learning for image classification},

journal = {CoRR},

volume = {abs/1905.00546},

year = {2019},

url = {http://arxiv.org/abs/1905.00546},

archivePrefix = {arXiv},

eprint = {1905.00546},

timestamp = {Mon, 28 Sep 2020 08:19:37 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-1905-00546.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<!--

Type: model-index

Collections:

- Name: SSL ResNet

Paper:

Title: Billion-scale semi-supervised learning for image classification

URL: https://paperswithcode.com/paper/billion-scale-semi-supervised-learning-for

Models:

- Name: ssl_resnet18

In Collection: SSL ResNet

Metadata:

FLOPs: 2337073152

Parameters: 11690000

File Size: 46811375

Architecture:

- 1x1 Convolution

- Batch Normalization

- Bottleneck Residual Block

- Convolution

- Global Average Pooling

- Max Pooling

- ReLU

- Residual Block

- Residual Connection

- Softmax

Tasks:

- Image Classification

Training Techniques:

- SGD with Momentum

- Weight Decay

Training Data:

- ImageNet

- YFCC-100M

Training Resources: 64x GPUs

ID: ssl_resnet18

LR: 0.0015

Epochs: 30

Layers: 18

Crop Pct: '0.875'

Batch Size: 1536

Image Size: '224'

Weight Decay: 0.0001

Interpolation: bilinear

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/resnet.py#L894

Weights: https://dl.fbaipublicfiles.com/semiweaksupervision/model_files/semi_supervised_resnet18-d92f0530.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 72.62%

Top 5 Accuracy: 91.42%

- Name: ssl_resnet50

In Collection: SSL ResNet

Metadata:

FLOPs: 5282531328

Parameters: 25560000

File Size: 102480594

Architecture:

- 1x1 Convolution

- Batch Normalization

- Bottleneck Residual Block

- Convolution

- Global Average Pooling

- Max Pooling

- ReLU

- Residual Block

- Residual Connection

- Softmax

Tasks:

- Image Classification

Training Techniques:

- SGD with Momentum

- Weight Decay

Training Data:

- ImageNet

- YFCC-100M

Training Resources: 64x GPUs

ID: ssl_resnet50

LR: 0.0015

Epochs: 30

Layers: 50

Crop Pct: '0.875'

Batch Size: 1536

Image Size: '224'

Weight Decay: 0.0001

Interpolation: bilinear

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/resnet.py#L904

Weights: https://dl.fbaipublicfiles.com/semiweaksupervision/model_files/semi_supervised_resnet50-08389792.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 79.24%

Top 5 Accuracy: 94.83%

--> | huggingface/pytorch-image-models/blob/main/hfdocs/source/models/ssl-resnet.mdx |

---

title: What's new in Diffusers? 🎨

thumbnail: /blog/assets/102_diffusers_2nd_month/inpainting.png

authors:

- user: osanseviero

---

# What's new in Diffusers? 🎨

A month and a half ago we released `diffusers`, a library that provides a modular toolbox for diffusion models across modalities. A couple of weeks later, we released support for Stable Diffusion, a high quality text-to-image model, with a free demo for anyone to try out. Apart from burning lots of GPUs, in the last three weeks the team has decided to add one or two new features to the library that we hope the community enjoys! This blog post gives a high-level overview of the new features in `diffusers` version 0.3! Remember to give a ⭐ to the [GitHub repository](https://github.com/huggingface/diffusers).

- [Image to Image pipelines](#image-to-image-pipeline)

- [Textual Inversion](#textual-inversion)

- [Inpainting](#experimental-inpainting-pipeline)

- [Optimizations for Smaller GPUs](#optimizations-for-smaller-gpus)

- [Run on Mac](#diffusers-in-mac-os)

- [ONNX Exporter](#experimental-onnx-exporter-and-pipeline)

- [New docs](#new-docs)

- [Community](#community)

- [Generate videos with SD latent space](#stable-diffusion-videos)

- [Model Explainability](#diffusers-interpret)

- [Japanese Stable Diffusion](#japanese-stable-diffusion)

- [High quality fine-tuned model](#waifu-diffusion)

- [Cross Attention Control with Stable Diffusion](#cross-attention-control)

- [Reusable seeds](#reusable-seeds)

## Image to Image pipeline

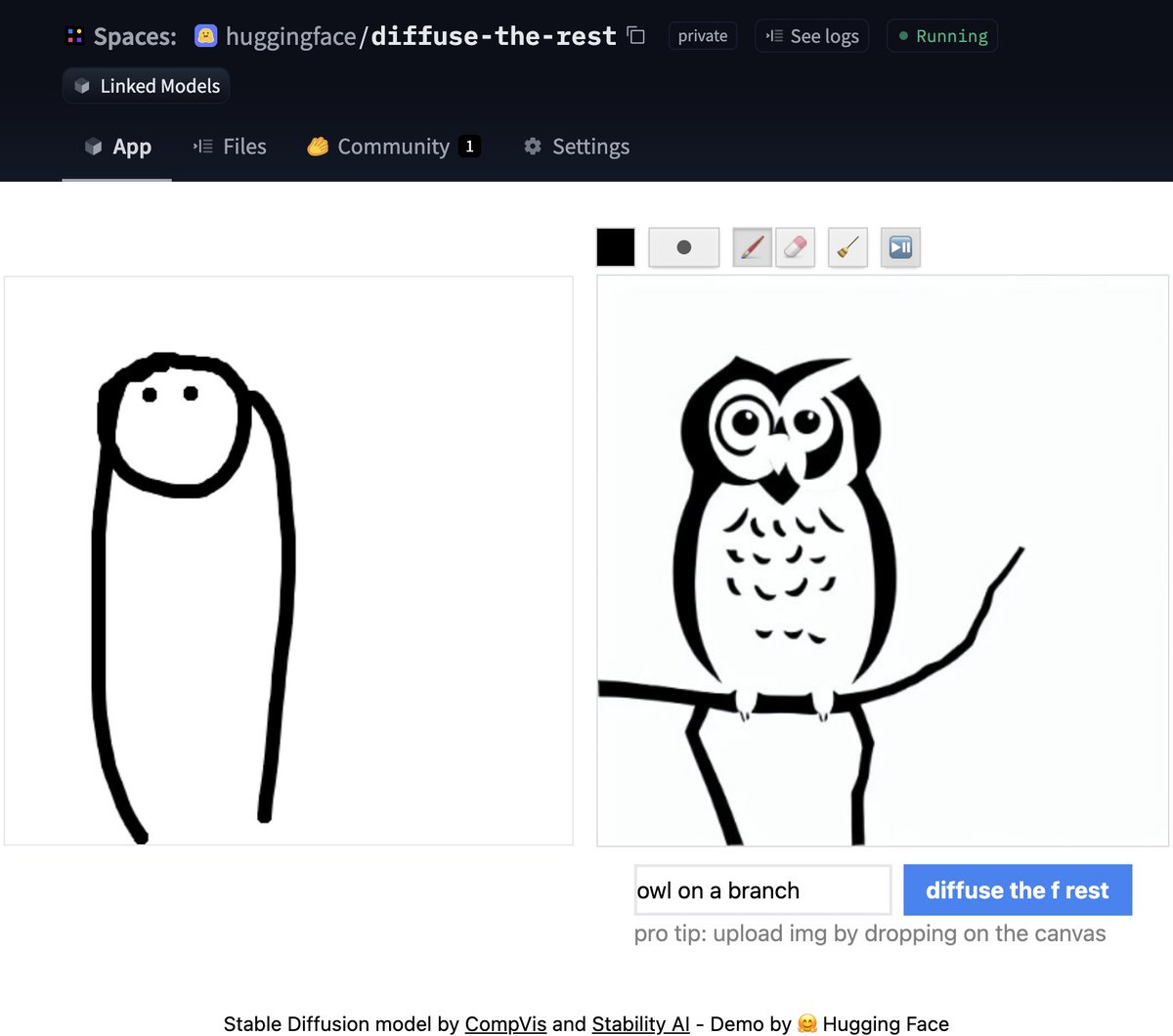

One of the most requested features was to have image to image generation. This pipeline allows you to input an image and a prompt, and it will generate an image based on that!

Let's see some code based on the official Colab [notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb).

```python

import torch
from diffusers import StableDiffusionImg2ImgPipeline

pipe = StableDiffusionImg2ImgPipeline.from_pretrained(

"CompVis/stable-diffusion-v1-4",

revision="fp16",

torch_dtype=torch.float16,

use_auth_token=True

)

# Download an initial image
# ...
# `init_img`, `preprocess`, and `generator` are not defined here; see the Colab notebook linked above.

init_image = preprocess(init_img)

prompt = "A fantasy landscape, trending on artstation"

images = pipe(prompt=prompt, init_image=init_image, strength=0.75, guidance_scale=7.5, generator=generator).images

```

Don't have time for code? No worries, we also created a [Space demo](https://huggingface.co/spaces/huggingface/diffuse-the-rest) where you can try it out directly.

## Textual Inversion

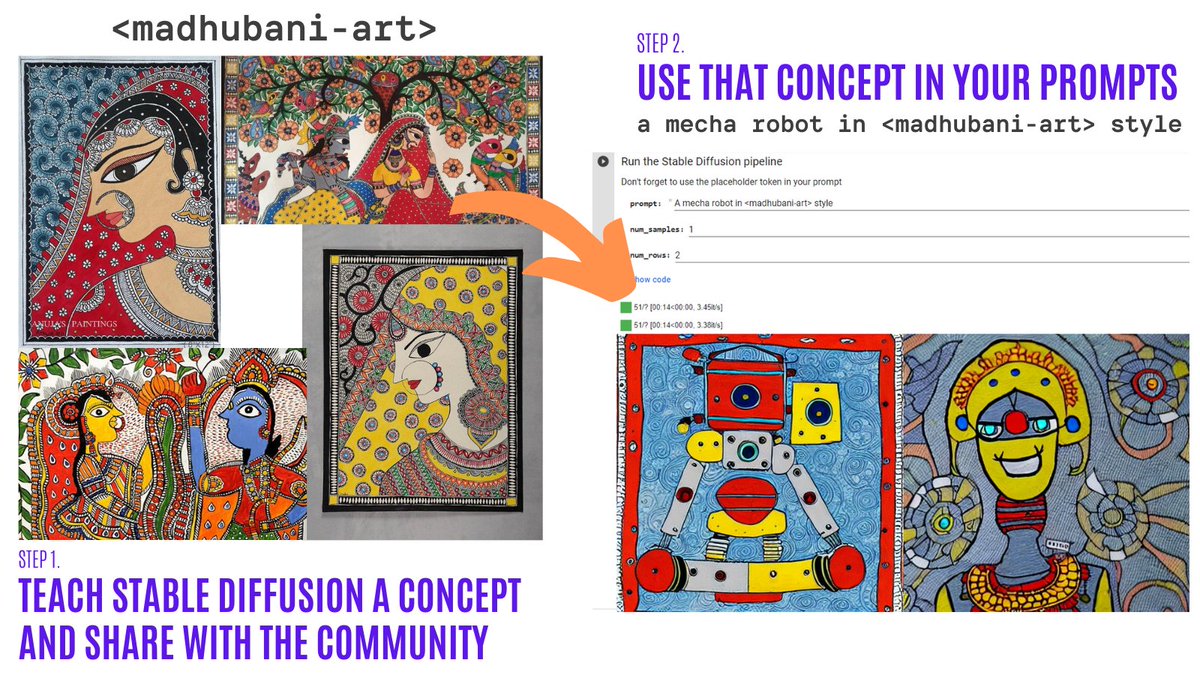

Textual Inversion lets you personalize a Stable Diffusion model on your own images with just 3-5 samples. With this tool, you can train a model on a concept, and then share the concept with the rest of the community!

In just a couple of days, the community shared over 200 concepts! Check them out!

* [Organization](https://huggingface.co/sd-concepts-library) with the concepts.

* [Navigator Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_diffusion_textual_inversion_library_navigator.ipynb): Browse visually and use over 150 concepts created by the community.

* [Training Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb): Teach Stable Diffusion a new concept and share it with the rest of the community.

* [Inference Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb): Run Stable Diffusion with the learned concepts.

## Experimental inpainting pipeline

Inpainting lets you provide an image, select an area in it (or provide a mask), and use Stable Diffusion to replace the masked area. Here is an example:

<figure class="image table text-center m-0 w-full">

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/diffusers-2nd-month/inpainting.png" alt="Example inpaint of owl being generated from an initial image and a prompt"/>

</figure>

You can try out a minimal Colab [notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/in_painting_with_stable_diffusion_using_diffusers.ipynb) or check out the code below. A demo is coming soon!

```python

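import torch
from diffusers import StableDiffusionInpaintPipeline

# `device`, `init_image`, and `mask_image` are assumed to be defined beforehand (see the Colab notebook).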

pipe = StableDiffusionInpaintPipeline.from_pretrained(

"CompVis/stable-diffusion-v1-4",

revision="fp16",

torch_dtype=torch.float16,

use_auth_token=True

).to(device)

images = pipe(

prompt=["a cat sitting on a bench"] * 3,

init_image=init_image,

mask_image=mask_image,

strength=0.75,

guidance_scale=7.5,

generator=None

).images

```

Please note this is experimental, so there is room for improvement.

## Optimizations for smaller GPUs

After some improvements, the diffusion models can take much less VRAM. 🔥 For example, Stable Diffusion only takes 3.2GB! This yields the exact same results at the cost of roughly 10% in speed. Here is how to use these optimizations:

```python

import torch
from diffusers import StableDiffusionPipeline

pipe = StableDiffusionPipeline.from_pretrained(

"CompVis/stable-diffusion-v1-4",

revision="fp16",

torch_dtype=torch.float16,

use_auth_token=True

)

pipe = pipe.to("cuda")

pipe.enable_attention_slicing()

```
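As a rough intuition for why computing attention in slices saves memory, the back-of-the-envelope sketch below compares the size of the attention score tensor when all heads are processed at once versus one head per step. The numbers (8 heads, a 64x64 latent, float16) are assumptions chosen for illustration, not measurements of the library, and the actual slice size used by `enable_attention_slicing()` may differ.

```python
def attention_scores_bytes(num_heads, seq_len, bytes_per_value=2):
    """Size in bytes of a (heads, seq_len, seq_len) attention score tensor."""
    return num_heads * seq_len * seq_len * bytes_per_value

# Assumed values for illustration: 8 heads, 64x64 latent (4096 positions), float16.
num_heads, seq_len = 8, 64 * 64
all_at_once = attention_scores_bytes(num_heads, seq_len)
one_head_per_step = attention_scores_bytes(1, seq_len)

print(f"all heads at once: {all_at_once / 2**20:.0f} MiB")
print(f"one head per step: {one_head_per_step / 2**20:.0f} MiB")
```

The slices are processed one after another, which is consistent with the small speed penalty mentioned above.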

This is super exciting, as it lowers the barrier to using these models even further!

## Diffusers in Mac OS

🍎 That's right! Another widely requested feature was just released! Read the full instructions in the [official docs](https://huggingface.co/docs/diffusers/optimization/mps) (including performance comparisons, specs, and more).

Using the PyTorch mps device, people with M1/M2 hardware can run inference with Stable Diffusion. 🤯 This requires minimal setup for users, try it out!

```python

from diffusers import StableDiffusionPipeline

pipe = StableDiffusionPipeline.from_pretrained("CompVis/stable-diffusion-v1-4", use_auth_token=True)

pipe = pipe.to("mps")

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

```

## Experimental ONNX exporter and pipeline

The new experimental pipeline allows users to run Stable Diffusion on any hardware that supports ONNX. Here is an example of how to use it (note that the `onnx` revision is being used):

```python

from diffusers import StableDiffusionOnnxPipeline

pipe = StableDiffusionOnnxPipeline.from_pretrained(

"CompVis/stable-diffusion-v1-4",

revision="onnx",

provider="CPUExecutionProvider",

use_auth_token=True,

)

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

```

Alternatively, you can also convert your SD checkpoints to ONNX directly with the exporter script.

```bash

python scripts/convert_stable_diffusion_checkpoint_to_onnx.py --model_path="CompVis/stable-diffusion-v1-4" --output_path="./stable_diffusion_onnx"

```

## New docs

All of the previous features are very cool. As maintainers of open-source libraries, we know about the importance of high quality documentation to make it as easy as possible for anyone to try out the library.

💅 Because of this, we did a Docs sprint and we're very excited to do a first release of our [documentation](https://huggingface.co/docs/diffusers/v0.3.0/en/index). This is a first version, so there are many things we plan to add (and contributions are always welcome!).

Some highlights of the docs:

* Techniques for [optimization](https://huggingface.co/docs/diffusers/optimization/fp16)

* The [training overview](https://huggingface.co/docs/diffusers/training/overview)

* A [contributing guide](https://huggingface.co/docs/diffusers/conceptual/contribution)

* In-depth API docs for [schedulers](https://huggingface.co/docs/diffusers/api/schedulers)

* In-depth API docs for [pipelines](https://huggingface.co/docs/diffusers/api/pipelines/overview)

## Community

And while we were doing all of the above, the community did not stay idle! Here are some highlights (although not exhaustive) of what has been done out there.

### Stable Diffusion Videos

Create 🔥 videos with Stable Diffusion by exploring the latent space and morphing between text prompts. You can:

* Dream different versions of the same prompt

* Morph between different prompts

The [Stable Diffusion Videos](https://github.com/nateraw/stable-diffusion-videos) tool is pip-installable, comes with a Colab notebook and a Gradio notebook, and is super easy to use!

Here is an example:

```python

from stable_diffusion_videos import walk

video_path = walk(['a cat', 'a dog'], [42, 1337], num_steps=3, make_video=True)

```
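Morphing between two prompts boils down to interpolating between two vectors (embeddings or latents). One common choice for this kind of interpolation is spherical linear interpolation (slerp). Whether the tool uses exactly this formula is an assumption on our part, and the helper below is a plain-Python sketch rather than code from the repository.

```python
import math

def slerp(t, a, b):
    """Spherical linear interpolation between vectors a and b for t in [0, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    omega = math.acos(cos_omega)
    if omega < 1e-8:  # nearly parallel vectors: fall back to linear interpolation
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    weight_a = math.sin((1 - t) * omega) / math.sin(omega)
    weight_b = math.sin(t * omega) / math.sin(omega)
    return [weight_a * x + weight_b * y for x, y in zip(a, b)]

start, end = [1.0, 0.0], [0.0, 1.0]  # toy 2-D stand-ins for two prompt vectors
for step in range(3):
    print(slerp(step / 2, start, end))
```

Each intermediate vector would then be decoded into one frame of the video.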

### Diffusers Interpret

[Diffusers interpret](https://github.com/JoaoLages/diffusers-interpret) is an explainability tool built on top of `diffusers`. It has cool features such as:

* See all the images in the diffusion process

* Analyze how each token in the prompt influences the generation

* Analyze within specified bounding boxes if you want to understand a part of the image

(Image from the tool repository)

```python

from diffusers_interpret import StableDiffusionPipelineExplainer

# pass pipeline to the explainer class

explainer = StableDiffusionPipelineExplainer(pipe)

# generate an image with `explainer`

prompt = "Corgi with the Eiffel Tower"

output = explainer(

prompt,

num_inference_steps=15

)

output.normalized_token_attributions # (token, attribution_percentage)

#[('corgi', 40),

# ('with', 5),

# ('the', 5),

# ('eiffel', 25),

# ('tower', 25)]

```

### Japanese Stable Diffusion

The name says it all! The goal of JSD was to train a model that also captures information about Japanese culture, identity, and unique expressions. It was trained with 100 million images with Japanese captions. You can read more about how the model was trained in the [model card](https://huggingface.co/rinna/japanese-stable-diffusion).

### Waifu Diffusion

[Waifu Diffusion](https://huggingface.co/hakurei/waifu-diffusion) is a fine-tuned SD model for generating high-quality anime images.

<figure class="image table text-center m-0 w-full">

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/diffusers-2nd-month/waifu.png" alt="Images of high quality anime"/>

</figure>

(Image from the tool repository)

### Cross Attention Control

[Cross Attention Control](https://github.com/bloc97/CrossAttentionControl) allows fine control of the prompts by modifying the attention maps of the diffusion models. Some cool things you can do:

* Replace a target in the prompt (e.g. replace cat by dog)

* Reduce or increase the importance of words in the prompt (e.g. if you want less attention to be given to "rocks")

* Easily inject styles

And much more! Check out the repo.

### Reusable Seeds

One of the most impressive early demos of Stable Diffusion was the reuse of seeds to tweak images. The idea is to use the seed of an image of interest to generate a new image, with a different prompt. This yields some cool results! Check out the [Colab](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb).
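The reason seed reuse works is that the seed fully determines the initial noise. The sketch below shows this with Python's standard `random` module; `initial_noise` is a hypothetical helper, and with `diffusers` you would instead pass a seeded `torch.Generator` as the `generator` argument.

```python
import random

def initial_noise(seed, size=4):
    """Draw a reproducible vector of Gaussian noise from a seed."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]

same_a = initial_noise(1234)
same_b = initial_noise(1234)
other = initial_noise(4321)

print(same_a == same_b)  # True: same seed, same starting noise
print(same_a == other)   # False: a different seed gives different noise
```

Keeping the noise fixed while changing the prompt is what lets the new image keep the overall composition of the original.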

## Thanks for reading!

I hope you enjoyed reading this! Remember to give a Star to our [GitHub Repository](https://github.com/huggingface/diffusers) and join the [Hugging Face Discord Server](https://hf.co/join/discord), where we have a category of channels just for Diffusion models. The latest news about the library is shared there!

Feel free to open issues with feature requests and bug reports! Everything that has been achieved couldn't have been done without such an amazing community.

| huggingface/blog/blob/main/diffusers-2nd-month.md |

# Adversarial Inception v3

**Inception v3** is a convolutional neural network architecture from the Inception family that makes several improvements including using [Label Smoothing](https://paperswithcode.com/method/label-smoothing), Factorized 7 x 7 convolutions, and the use of an [auxiliary classifier](https://paperswithcode.com/method/auxiliary-classifier) to propagate label information lower down the network (along with the use of batch normalization for layers in the sidehead). The key building block is an [Inception Module](https://paperswithcode.com/method/inception-v3-module).
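Label smoothing, one of the improvements listed above, replaces the one-hot training target with a slightly softened distribution. The following is a minimal sketch of the standard formula; the function name and the default `epsilon=0.1` are assumptions for illustration, not values read from this model's training setup.

```python
def smooth_labels(true_class, num_classes, epsilon=0.1):
    """Mix a one-hot target with a uniform distribution over all classes."""
    uniform = epsilon / num_classes
    return [
        (1.0 - epsilon) + uniform if i == true_class else uniform
        for i in range(num_classes)
    ]

target = smooth_labels(true_class=2, num_classes=5)
print(target)
print(sum(target))
```

The true class still gets most of the probability mass, but the model is no longer pushed toward fully confident predictions.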

This particular model was trained for study of adversarial examples (adversarial training).

The weights from this model were ported from [Tensorflow/Models](https://github.com/tensorflow/models).

## How do I use this model on an image?

To load a pretrained model:

```python

import timm

model = timm.create_model('adv_inception_v3', pretrained=True)

model.eval()

```

To load and preprocess the image:

```python

import urllib.request

from PIL import Image

from timm.data import resolve_data_config

from timm.data.transforms_factory import create_transform

config = resolve_data_config({}, model=model)

transform = create_transform(**config)

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")

urllib.request.urlretrieve(url, filename)

img = Image.open(filename).convert('RGB')

tensor = transform(img).unsqueeze(0) # transform and add batch dimension

```

To get the model predictions:

```python

import torch

with torch.no_grad():
    out = model(tensor)

probabilities = torch.nn.functional.softmax(out[0], dim=0)

print(probabilities.shape)

# prints: torch.Size([1000])

```

To get the top-5 predictions class names:

```python

# Get imagenet class mappings

url, filename = ("https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt", "imagenet_classes.txt")

urllib.request.urlretrieve(url, filename)

with open("imagenet_classes.txt", "r") as f:
    categories = [s.strip() for s in f.readlines()]

# Print top categories per image

top5_prob, top5_catid = torch.topk(probabilities, 5)

for i in range(top5_prob.size(0)):
    print(categories[top5_catid[i]], top5_prob[i].item())

# prints class names and probabilities like:

# [('Samoyed', 0.6425196528434753), ('Pomeranian', 0.04062102362513542), ('keeshond', 0.03186424449086189), ('white wolf', 0.01739676296710968), ('Eskimo dog', 0.011717947199940681)]

```

Replace the model name with the variant you want to use, e.g. `adv_inception_v3`. You can find the IDs in the model summaries at the top of this page.

To extract image features with this model, follow the [timm feature extraction examples](https://rwightman.github.io/pytorch-image-models/feature_extraction/) and change the model name to the one you want to use.

## How do I finetune this model?

You can finetune any of the pre-trained models just by changing the classifier (the last layer).

```python

model = timm.create_model('adv_inception_v3', pretrained=True, num_classes=NUM_FINETUNE_CLASSES)

```

To finetune on your own dataset, you have to write a training loop or adapt [timm's training

script](https://github.com/rwightman/pytorch-image-models/blob/master/train.py) to use your dataset.

## How do I train this model?

You can follow the [timm recipe scripts](https://rwightman.github.io/pytorch-image-models/scripts/) for training a new model afresh.

## Citation

```BibTeX

@article{DBLP:journals/corr/abs-1804-00097,

author = {Alexey Kurakin and

Ian J. Goodfellow and

Samy Bengio and

Yinpeng Dong and

Fangzhou Liao and

Ming Liang and

Tianyu Pang and

Jun Zhu and

Xiaolin Hu and

Cihang Xie and

Jianyu Wang and

Zhishuai Zhang and

Zhou Ren and

Alan L. Yuille and

Sangxia Huang and

Yao Zhao and

Yuzhe Zhao and

Zhonglin Han and

Junjiajia Long and

Yerkebulan Berdibekov and

Takuya Akiba and

Seiya Tokui and

Motoki Abe},

title = {Adversarial Attacks and Defences Competition},

journal = {CoRR},

volume = {abs/1804.00097},

year = {2018},

url = {http://arxiv.org/abs/1804.00097},

archivePrefix = {arXiv},

eprint = {1804.00097},

timestamp = {Thu, 31 Oct 2019 16:31:22 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1804-00097.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<!--

Type: model-index

Collections:

- Name: Adversarial Inception v3

Paper:

Title: Adversarial Attacks and Defences Competition

URL: https://paperswithcode.com/paper/adversarial-attacks-and-defences-competition

Models:

- Name: adv_inception_v3

In Collection: Adversarial Inception v3

Metadata:

FLOPs: 7352418880

Parameters: 23830000

File Size: 95549439

Architecture:

- 1x1 Convolution

- Auxiliary Classifier

- Average Pooling

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inception-v3 Module

- Max Pooling

- ReLU

- Softmax

Tasks:

- Image Classification

Training Data:

- ImageNet

ID: adv_inception_v3

Crop Pct: '0.875'

Image Size: '299'

Interpolation: bicubic

Code: https://github.com/rwightman/pytorch-image-models/blob/d8e69206be253892b2956341fea09fdebfaae4e3/timm/models/inception_v3.py#L456

Weights: https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-weights/adv_inception_v3-9e27bd63.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 77.58%

Top 5 Accuracy: 93.74%

--> | huggingface/pytorch-image-models/blob/main/docs/models/adversarial-inception-v3.md |

# `@gradio/code`

```html

<script>

import { BaseCode, BaseCopy, BaseDownload, BaseWidget, BaseExample } from "@gradio/code";

</script>

```

BaseCode

```javascript

export let classNames = "";

export let value = "";

export let dark_mode: boolean;

export let basic = true;

export let language: string;

export let lines = 5;

export let extensions: Extension[] = [];

export let useTab = true;

export let readonly = false;

export let placeholder: string | HTMLElement | null | undefined = undefined;

```

BaseCopy

```javascript

export let value: string;

```

BaseDownload

```javascript

export let value: string;

export let language: string;

```

BaseWidget

```javascript

export let value: string;

export let language: string;

```

BaseExample

```javascript

export let value: string;

export let type: "gallery" | "table";

export let selected = false;

``` | gradio-app/gradio/blob/main/js/code/README.md |

<!--⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

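# Installation

Before you start, you need to set up your environment by installing the appropriate packages.

`huggingface_hub` is tested on Python 3.8 and above and is expected to work on those versions. If you use Python 3.7 or earlier, you may run into compatibility issues.

## Install with pip

We recommend installing `huggingface_hub` in a [virtual environment](https://docs.python.org/3/library/venv.html).

If you are unfamiliar with Python virtual environments, take a look at this [guide](https://packaging.python.org/en/latest/guides/installing-using-pip-and-virtual-environments/).

A virtual environment makes it easier to manage different projects and avoids compatibility issues between dependencies.

Start by creating a virtual environment in your project directory: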

```bash

python -m venv .env

```

On Linux and macOS, activate the virtual environment with:

```bash

source .env/bin/activate

```

On Windows, activate the virtual environment with:

```bash

.env/Scripts/activate

```

Now you can install `huggingface_hub` from the [PyPI registry](https://pypi.org/project/huggingface-hub/):

```bash

pip install --upgrade huggingface_hub

```

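Once done, [check that the installation](#check-installation) is working correctly.

### Install optional dependencies

Some dependencies of `huggingface_hub` are [optional](https://setuptools.pypa.io/en/latest/userguide/dependency_management.html#optional-dependencies) because they are not required to run its core features. However, some features of `huggingface_hub` may not be available if the optional dependencies are not installed.

You can install optional dependencies with `pip`: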

```bash

# Install dependencies for TensorFlow-specific features
# /!\ Warning: this is not equivalent to `pip install tensorflow`
pip install 'huggingface_hub[tensorflow]'

# Install dependencies for both torch-specific and CLI-specific features
pip install 'huggingface_hub[cli,torch]'

```

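Here is the list of optional dependencies in `huggingface_hub`:

- `cli`: provides a more convenient command-line interface for `huggingface_hub`.

- `fastai`, `torch`, `tensorflow`: dependencies needed to run framework-specific features.

- `dev`: dependencies for contributing to the library. Includes `testing` (to run tests), `typing` (to run the type checker), and `quality` (to run linters).

### Install from source

In some cases, it is useful to install `huggingface_hub` directly from source. This lets you use the latest `main` version rather than the latest stable version.

The `main` version is useful for keeping up with the latest developments, for instance when a bug has been fixed since the last official release but a new release has not been rolled out yet.

However, this means the `main` version may not always be stable. We strive to keep it working, and most issues are usually resolved within a few hours or a day. If you run into a problem, please open an issue so we can fix it even sooner!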

```bash

pip install git+https://github.com/huggingface/huggingface_hub  # install the Hugging Face Hub library from the GitHub repository with pip

```

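When installing from source, you can also specify a particular branch. This is useful if you want to test a new feature or bug fix that has not been merged yet: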

```bash

pip install git+https://github.com/huggingface/huggingface_hub@my-feature-branch  # install the Hugging Face Hub library from the given GitHub branch (my-feature-branch) with pip

```

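Once done, [check that the installation](#check-installation) is working correctly.

### Editable install

Installing from source lets you set up an [editable install](https://pip.pypa.io/en/stable/topics/local-project-installs/#editable-installs). This is a more advanced setup, intended for when you plan to contribute to `huggingface_hub` and need to test changes to the code. You need to clone a local copy of `huggingface_hub` on your machine: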

```bash

# First, clone the repository
git clone https://github.com/huggingface/huggingface_hub.git

# Then, install it in editable mode
cd huggingface_hub
pip install -e .

```

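These commands link the folder you cloned the repository to with your Python library paths. Python will now look inside the folder you cloned to in addition to the normal library paths. For example, if your Python packages are typically installed in `./.venv/lib/python3.11/site-packages/`, Python will also search the folder you cloned, `./huggingface_hub/`.

## Install with conda

If you are more familiar with it, you can install `huggingface_hub` using the [conda-forge channel](https://anaconda.org/conda-forge/huggingface_hub):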

```bash

conda install -c conda-forge huggingface_hub

```

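Once done, [check that the installation](#check-installation) is working correctly.

## Check installation

Once installed, check that `huggingface_hub` works properly by running the following command: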

```bash

python -c "from huggingface_hub import model_info; print(model_info('gpt2'))"

```

This command fetches information from the Hub about the [gpt2](https://huggingface.co/gpt2) model.

The output should look like this:

```text

Model Name: gpt2

Tags: ['pytorch', 'tf', 'jax', 'tflite', 'rust', 'safetensors', 'gpt2', 'text-generation', 'en', 'doi:10.57967/hf/0039', 'transformers', 'exbert', 'license:mit', 'has_space']

Task: text-generation

```
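If you want to confirm which version is installed without contacting the Hub, a standard-library check along these lines can help. This is a sketch: `installed_version` is a hypothetical helper name, and it only inspects the metadata of the current Python environment.

```python
from importlib import metadata

def installed_version(package_name):
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None

# Prints a version string if the package is installed, otherwise None.
print(installed_version("huggingface_hub"))
```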

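## Windows limitations

With our goal of democratizing good machine learning everywhere, we built `huggingface_hub` to be a cross-platform library and, in particular, to work correctly on both Unix-based and Windows systems. However, there are a few cases where `huggingface_hub` has some limitations when run on Windows. Here is an exhaustive list of known issues. If you encounter any undocumented problem, please let us know by opening [an issue on GitHub](https://github.com/huggingface/huggingface_hub/issues/new/choose).

- The cache system of `huggingface_hub` relies on symlinks to efficiently cache files downloaded from the Hub. On Windows, you must activate Developer Mode or run your script as an administrator to enable symlinks. If they are not enabled, the cache system still works, but less efficiently. For more details, read the [cache limitations](./guides/manage-cache#limitations) section.

- File paths on the Hub may contain special characters (e.g. `path/to?/my/file`). Windows is more restrictive about [special characters](https://learn.microsoft.com/en-us/windows/win32/intl/character-sets-used-in-file-names), which makes it impossible to download those files on Windows. Hopefully this is a rare case. If you think this is a mistake, please reach out to the repository owner or to us to work out a solution.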
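To find out whether your account can create symlinks at all, you can try to create one in a temporary directory. The sketch below uses only the standard library; `symlinks_supported` is a hypothetical helper, not a function of `huggingface_hub`.

```python
import os
import tempfile

def symlinks_supported(directory=None):
    """Try to create a symlink in a temporary directory and report whether it worked."""
    with tempfile.TemporaryDirectory(dir=directory) as tmp:
        target = os.path.join(tmp, "target.txt")
        link = os.path.join(tmp, "link.txt")
        with open(target, "w") as f:
            f.write("test")
        try:
            os.symlink(target, link)
        except (OSError, NotImplementedError):
            # Typical on Windows without Developer Mode or administrator rights.
            return False
        return os.path.islink(link)

print(symlinks_supported())
```

Pass the directory you care about as `directory`, since symlink support can depend on the file system where the files are stored.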

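## Next steps

Once `huggingface_hub` is properly installed on your machine, you might want to [configure environment variables](package_reference/environment_variables) or [check one of our guides](guides/overview) to get started. | huggingface/huggingface_hub/blob/main/docs/source/cn/installation.md |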

<p align="center">

<picture>

<source media="(prefers-color-scheme: dark)" srcset="https://huggingface.co/datasets/safetensors/assets/raw/main/banner-dark.svg">

<source media="(prefers-color-scheme: light)" srcset="https://huggingface.co/datasets/safetensors/assets/raw/main/banner-light.svg">

<img alt="Hugging Face Safetensors Library" src="https://huggingface.co/datasets/safetensors/assets/raw/main/banner-light.svg" style="max-width: 100%;">

</picture>

<br/>

<br/>

</p>

# safetensors

## Safetensors

This repository implements a new simple format for storing tensors

safely (as opposed to pickle) and that is still fast (zero-copy).

### Installation

#### Pip

You can install safetensors via the pip manager:

```bash

pip install safetensors

```

#### From source

For the sources, you need Rust

```bash

# Install Rust

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

# Make sure it's up to date and using stable channel

rustup update

git clone https://github.com/huggingface/safetensors

cd safetensors/bindings/python

pip install setuptools_rust

pip install -e .

```

### Getting started

```python

import torch

from safetensors import safe_open

from safetensors.torch import save_file

tensors = {
    "weight1": torch.zeros((1024, 1024)),
    "weight2": torch.zeros((1024, 1024))
}

# Write both tensors to a single safetensors file
save_file(tensors, "model.safetensors")

# Read them back one by one, on CPU
tensors = {}
with safe_open("model.safetensors", framework="pt", device="cpu") as f:
    for key in f.keys():
        tensors[key] = f.get_tensor(key)

```

[Python documentation](https://huggingface.co/docs/safetensors/index)

### Format

- 8 bytes: `N`, an unsigned little-endian 64-bit integer, containing the size of the header
- N bytes: a JSON UTF-8 string representing the header.
  - The header data MUST begin with a `{` character (0x7B).
  - The header data MAY be trailing padded with whitespace (0x20).
  - The header is a dict like `{"TENSOR_NAME": {"dtype": "F16", "shape": [1, 16, 256], "data_offsets": [BEGIN, END]}, "NEXT_TENSOR_NAME": {...}, ...}`,
    - `data_offsets` point to the tensor data relative to the beginning of the byte buffer (i.e. not an absolute position in the file),
      with `BEGIN` as the starting offset and `END` as the one-past offset (so total tensor byte size = `END - BEGIN`).
    - A special key `__metadata__` is allowed to contain free form string-to-string map. Arbitrary JSON is not allowed, all values must be strings.
- Rest of the file: byte-buffer.
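The layout above can be sketched with the Python standard library alone. This is a minimal illustration, not the library's implementation: the helper names `build_safetensors_bytes`, `read_header` and `tensor_bytes` are hypothetical, and the sketch adds no header padding (which the format allows but does not require).

```python
import json
import struct


def build_safetensors_bytes(tensors):
    """Serialize {name: (dtype, shape, raw_bytes)} following the layout above."""
    header = {}
    chunks = []
    offset = 0
    for name, (dtype, shape, raw) in tensors.items():
        # Offsets are relative to the start of the byte buffer, END is one-past.
        header[name] = {"dtype": dtype, "shape": shape, "data_offsets": [offset, offset + len(raw)]}
        offset += len(raw)
        chunks.append(raw)
    header_bytes = json.dumps(header, separators=(",", ":")).encode("utf-8")
    # 8 bytes of little-endian u64 header size, then the header, then the buffer.
    return struct.pack("<Q", len(header_bytes)) + header_bytes + b"".join(chunks)


def read_header(blob):
    """Return (header_size, header_dict) parsed from the first 8 + N bytes."""
    (n,) = struct.unpack("<Q", blob[:8])
    return n, json.loads(blob[8:8 + n].decode("utf-8"))


def tensor_bytes(blob, name):
    """Slice one tensor's raw bytes out of the byte buffer."""
    n, header = read_header(blob)
    begin, end = header[name]["data_offsets"]
    data_start = 8 + n  # the byte buffer starts right after the header
    return blob[data_start + begin:data_start + end]


raw_a = struct.pack("<2f", 1.0, 2.0)  # two F32 values, 8 bytes
raw_b = struct.pack("<3h", 1, 2, 3)   # three I16 values, 6 bytes
blob = build_safetensors_bytes({"a": ("F32", [2], raw_a), "b": ("I16", [3], raw_b)})
n, header = read_header(blob)
print(header["b"]["data_offsets"])
print(struct.unpack("<3h", tensor_bytes(blob, "b")))
```

Because the header carries every offset, a reader can fetch a single tensor by reading the first `8 + N` bytes and then only the slice it needs.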

Notes:

- Duplicate keys are disallowed. Not all parsers may respect this.

- In general the subset of JSON is implicitly decided by `serde_json` for
this library. Anything obscure might be modified at a later time, such as odd ways
to represent integers, newlines and escapes in UTF-8 strings. This would only
be done for safety concerns.

- Tensor values are not checked; in particular, NaN and +/-Inf could
be in the file.

- Empty tensors (tensors with one dimension being 0) are allowed.
They store no data in the data buffer, yet retain their size in the header.
They don't really bring a lot of value but are accepted since they are valid tensors
from the perspective of traditional tensor libraries (torch, tensorflow, numpy, ...).

- 0-rank Tensors (tensors with shape `[]`) are allowed, they are merely a scalar.

- The byte buffer needs to be entirely indexed, and cannot contain holes. This prevents

the creation of polyglot files.

- Endianness: Little-endian.

- Order: 'C' or row-major.
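The "entirely indexed, no holes" rule from the notes above can be checked in a few lines. The sketch below is an assumption about how such a check could look, not the validation code used by this library; `check_offsets` is a hypothetical helper that takes an already parsed header and the length of the byte buffer.

```python
def check_offsets(header, buffer_len):
    """Return True when the tensors tile the byte buffer exactly: no gaps, no overlaps."""
    spans = sorted(
        tuple(info["data_offsets"])
        for name, info in header.items()
        if name != "__metadata__"  # metadata carries no tensor data
    )
    cursor = 0
    for begin, end in spans:
        # Each tensor must start where the previous one ended.
        if begin != cursor or end < begin:
            return False
        cursor = end
    # The last tensor must end exactly at the end of the buffer.
    return cursor == buffer_len


good = {
    "__metadata__": {"format": "pt"},
    "a": {"dtype": "F32", "shape": [2], "data_offsets": [0, 8]},
    "b": {"dtype": "I16", "shape": [3], "data_offsets": [8, 14]},
}
hole = {
    "a": {"dtype": "F32", "shape": [2], "data_offsets": [0, 8]},
    "b": {"dtype": "I16", "shape": [3], "data_offsets": [10, 16]},
}
print(check_offsets(good, 14), check_offsets(hole, 16))
```

An empty tensor has `BEGIN == END`, so it passes the check without consuming any bytes.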

### Yet another format ?

The main rationale for this crate is to remove the need to use

`pickle` on `PyTorch` which is used by default.

There are other formats out there used by machine learning and more general

formats.

Let's take a look at alternatives and why this format is deemed interesting.

This is my very personal and probably biased view:

| Format | Safe | Zero-copy | Lazy loading | No file size limit | Layout control | Flexibility | Bfloat16/Fp8 |
| ----------------------- | --- | --- | --- | --- | --- | --- | --- |
| pickle (PyTorch) | ✗ | ✗ | ✗ | 🗸 | ✗ | 🗸 | 🗸 |
| H5 (Tensorflow) | 🗸 | ✗ | 🗸 | 🗸 | ~ | ~ | ✗ |
| SavedModel (Tensorflow) | 🗸 | ✗ | ✗ | 🗸 | 🗸 | ✗ | 🗸 |
| MsgPack (flax) | 🗸 | 🗸 | ✗ | 🗸 | ✗ | ✗ | 🗸 |
| Protobuf (ONNX) | 🗸 | ✗ | ✗ | ✗ | ✗ | ✗ | 🗸 |
| Cap'n'Proto | 🗸 | 🗸 | ~ | 🗸 | 🗸 | ~ | ✗ |
| Arrow | ? | ? | ? | ? | ? | ? | ✗ |
| Numpy (npy,npz) | 🗸 | ? | ? | ✗ | 🗸 | ✗ | ✗ |
| pdparams (Paddle) | ✗ | ✗ | ✗ | 🗸 | ✗ | 🗸 | 🗸 |
| SafeTensors | 🗸 | 🗸 | 🗸 | 🗸 | 🗸 | ✗ | 🗸 |

- Safe: Can I use a file randomly downloaded and expect not to run arbitrary code ?

- Zero-copy: Does reading the file require more memory than the original file ?

- Lazy loading: Can I inspect the file without loading everything ? And loading only

some tensors in it without scanning the whole file (distributed setting) ?

- Layout control: Lazy loading is not necessarily enough: if the information about tensors is spread out in your file, then even if it is lazily accessible you might have to access most of the file to read the available tensors (incurring many DISK -> RAM copies). Controlling the layout to keep fast access to single tensors is important.

- No file size limit: Is there a limit to the file size ?

- Flexibility: Can I save custom code in the format and be able to use it later with zero extra code ? (~ means we can store more than pure tensors, but no custom code)

- Bfloat16/Fp8: Does the format support native bfloat16/fp8 (meaning no weird workarounds are

necessary)? This is becoming increasingly important in the ML world.

### Main oppositions

- Pickle: Unsafe, runs arbitrary code

- H5: Apparently now discouraged for TF/Keras. Seems like a great fit otherwise actually. Some classic use after free issues: <https://www.cvedetails.com/vulnerability-list/vendor_id-15991/product_id-35054/Hdfgroup-Hdf5.html>. On a very different level than pickle security-wise. Also 210k lines of code vs ~400 lines for this lib currently.

- SavedModel: Tensorflow specific (it contains TF graph information).

- MsgPack: No layout control to enable lazy loading (important for loading specific parts in distributed setting)

- Protobuf: Hard 2GB max file size limit

- Cap'n'proto: Float16 support is not present [link](https://capnproto.org/language.html#built-in-types) so using a manual wrapper over a byte-buffer would be necessary. Layout control seems possible but not trivial as buffers have limitations [link](https://stackoverflow.com/questions/48458839/capnproto-maximum-filesize).

- Numpy (npz): No `bfloat16` support. Vulnerable to zip bombs (DOS). Not zero-copy.

- Arrow: No `bfloat16` support. Seems to require decoding [link](https://arrow.apache.org/docs/python/parquet.html#reading-parquet-and-memory-mapping)

### Notes

- Zero-copy: No format is really zero-copy in ML, it needs to go from disk to RAM/GPU RAM (that takes time). On CPU, if the file is already in cache, then it can
truly be zero-copy, whereas on GPU there is no such disk cache, so a copy is always required,
but you can bypass allocating all the tensors on CPU at any given point.
SafeTensors is not zero-copy for the header. The choice of JSON is pretty arbitrary, but since deserialization takes far less time than loading the actual tensor data, and JSON is readable, I went that way (the header is also far smaller than the tensor data).

- Endianness: Little-endian. This can be modified later, but it feels really unnecessary at the

moment.

- Order: 'C' or row-major. This seems to have won. We can add that information later if needed.

- Stride: No striding, all tensors need to be packed before being serialized. I have yet to see a case where it seems useful to have a strided tensor stored in serialized format.

### Benefits

Since we can invent a new format we can propose additional benefits:

- Prevent DOS attacks: We can craft the format in such a way that it's almost

impossible to use malicious files to DOS attack a user. Currently, there's a limit

on the size of the header of 100MB to prevent parsing extremely large JSON.

Also when reading the file, there's a guarantee that addresses in the file

do not overlap in any way, meaning when you're loading a file you should never

exceed the size of the file in memory

- Faster load: PyTorch seems to be the fastest to load among the major

ML formats. However, it does seem to have an extra copy on CPU, which we

can bypass in this lib by using `torch.UntypedStorage.from_file`.

Currently, CPU loading times are extremely fast with this lib compared to pickle.

GPU loading times are as fast or faster than PyTorch equivalent.

Loading first on CPU with memmapping with torch, and then moving all tensors to GPU seems

to be faster too somehow (similar behavior in torch pickle)

- Lazy loading: in distributed (multi-node or multi-gpu) settings, it's nice to be able to

load only part of the tensors on the various models. For

[BLOOM](https://huggingface.co/bigscience/bloom), using this format made it possible

to load the model on 8 GPUs in 45s, down from 10 minutes with regular PyTorch weights.

This really speeds up feedback loops when developing on the model. For instance

you don't have to have separate copies of the weights when changing the distribution

strategy (for instance Pipeline Parallelism vs Tensor Parallelism).

License: Apache-2.0

| huggingface/safetensors/blob/main/README.md |

<!--Copyright 2020 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# Auto Classes

In many cases, the architecture you want to use can be guessed from the name or the path of the pretrained model you

are supplying to the `from_pretrained()` method. AutoClasses are here to do this job for you so that you

automatically retrieve the relevant model given the name/path to the pretrained weights/config/vocabulary.

Instantiating one of [`AutoConfig`], [`AutoModel`], and

[`AutoTokenizer`] will directly create a class of the relevant architecture. For instance

```python

model = AutoModel.from_pretrained("bert-base-cased")

```

will create a model that is an instance of [`BertModel`].

There is one class of `AutoModel` for each task, and for each backend (PyTorch, TensorFlow, or Flax).

## Extending the Auto Classes

Each of the auto classes has a method to be extended with your custom classes. For instance, if you have defined a

custom class of model `NewModel`, make sure you have a `NewModelConfig` then you can add those to the auto

classes like this:

```python

from transformers import AutoConfig, AutoModel

AutoConfig.register("new-model", NewModelConfig)

AutoModel.register(NewModelConfig, NewModel)

```

You will then be able to use the auto classes like you would usually do!

<Tip warning={true}>

If your `NewModelConfig` is a subclass of [`~transformers.PretrainedConfig`], make sure its

`model_type` attribute is set to the same key you use when registering the config (here `"new-model"`).

Likewise, if your `NewModel` is a subclass of [`PreTrainedModel`], make sure its

`config_class` attribute is set to the same class you use when registering the model (here

`NewModelConfig`).

</Tip>
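To make the two consistency requirements in the tip concrete, here is a toy stand-in for the registration mechanism, written without `transformers` so it runs anywhere. `ToyAutoConfig` and `ToyAutoModel` are hypothetical classes invented for this sketch, and raising `ValueError` on a mismatch is an assumption of the sketch rather than a statement about the library's behavior.

```python
class ToyAutoConfig:
    """Toy registry keyed by model type string (not the transformers class)."""
    _registry = {}

    @classmethod
    def register(cls, model_type, config_class):
        # The config's model_type must equal the key it is registered under.
        if getattr(config_class, "model_type", None) != model_type:
            raise ValueError(f"{config_class.__name__}.model_type must be {model_type!r}")
        cls._registry[model_type] = config_class


class ToyAutoModel:
    """Toy registry keyed by config class (not the transformers class)."""
    _registry = {}

    @classmethod
    def register(cls, config_class, model_class):
        # The model's config_class must be the class it is registered with.
        if getattr(model_class, "config_class", None) is not config_class:
            raise ValueError(f"{model_class.__name__}.config_class must be {config_class.__name__}")
        cls._registry[config_class] = model_class

    @classmethod
    def from_config(cls, config):
        # Look up the model class from the type of the config instance.
        return cls._registry[type(config)](config)


class NewModelConfig:
    model_type = "new-model"


class NewModel:
    config_class = NewModelConfig

    def __init__(self, config):
        self.config = config


ToyAutoConfig.register("new-model", NewModelConfig)
ToyAutoModel.register(NewModelConfig, NewModel)
model = ToyAutoModel.from_config(NewModelConfig())
print(type(model).__name__)
```

The point of the sketch is the lookup chain: a string key resolves to a config class, and the config class resolves to a model class, so both links have to agree with what was registered.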

## AutoConfig

[[autodoc]] AutoConfig

## AutoTokenizer

[[autodoc]] AutoTokenizer

## AutoFeatureExtractor

[[autodoc]] AutoFeatureExtractor

## AutoImageProcessor

[[autodoc]] AutoImageProcessor

## AutoProcessor

[[autodoc]] AutoProcessor

## Generic model classes

The following auto classes are available for instantiating a base model class without a specific head.

### AutoModel

[[autodoc]] AutoModel

### TFAutoModel

[[autodoc]] TFAutoModel

### FlaxAutoModel

[[autodoc]] FlaxAutoModel

## Generic pretraining classes

The following auto classes are available for instantiating a model with a pretraining head.

### AutoModelForPreTraining

[[autodoc]] AutoModelForPreTraining

### TFAutoModelForPreTraining

[[autodoc]] TFAutoModelForPreTraining

### FlaxAutoModelForPreTraining

[[autodoc]] FlaxAutoModelForPreTraining

## Natural Language Processing

The following auto classes are available for the following natural language processing tasks.

### AutoModelForCausalLM

[[autodoc]] AutoModelForCausalLM

### TFAutoModelForCausalLM

[[autodoc]] TFAutoModelForCausalLM

### FlaxAutoModelForCausalLM

[[autodoc]] FlaxAutoModelForCausalLM

### AutoModelForMaskedLM

[[autodoc]] AutoModelForMaskedLM

### TFAutoModelForMaskedLM

[[autodoc]] TFAutoModelForMaskedLM

### FlaxAutoModelForMaskedLM

[[autodoc]] FlaxAutoModelForMaskedLM

### AutoModelForMaskGeneration

[[autodoc]] AutoModelForMaskGeneration

### TFAutoModelForMaskGeneration

[[autodoc]] TFAutoModelForMaskGeneration

### AutoModelForSeq2SeqLM

[[autodoc]] AutoModelForSeq2SeqLM

### TFAutoModelForSeq2SeqLM

[[autodoc]] TFAutoModelForSeq2SeqLM

### FlaxAutoModelForSeq2SeqLM

[[autodoc]] FlaxAutoModelForSeq2SeqLM

### AutoModelForSequenceClassification

[[autodoc]] AutoModelForSequenceClassification

### TFAutoModelForSequenceClassification

[[autodoc]] TFAutoModelForSequenceClassification

### FlaxAutoModelForSequenceClassification

[[autodoc]] FlaxAutoModelForSequenceClassification

### AutoModelForMultipleChoice

[[autodoc]] AutoModelForMultipleChoice

### TFAutoModelForMultipleChoice

[[autodoc]] TFAutoModelForMultipleChoice

### FlaxAutoModelForMultipleChoice

[[autodoc]] FlaxAutoModelForMultipleChoice

### AutoModelForNextSentencePrediction

[[autodoc]] AutoModelForNextSentencePrediction

### TFAutoModelForNextSentencePrediction

[[autodoc]] TFAutoModelForNextSentencePrediction

### FlaxAutoModelForNextSentencePrediction

[[autodoc]] FlaxAutoModelForNextSentencePrediction

### AutoModelForTokenClassification

[[autodoc]] AutoModelForTokenClassification

### TFAutoModelForTokenClassification

[[autodoc]] TFAutoModelForTokenClassification

### FlaxAutoModelForTokenClassification

[[autodoc]] FlaxAutoModelForTokenClassification

### AutoModelForQuestionAnswering

[[autodoc]] AutoModelForQuestionAnswering

### TFAutoModelForQuestionAnswering

[[autodoc]] TFAutoModelForQuestionAnswering

### FlaxAutoModelForQuestionAnswering

[[autodoc]] FlaxAutoModelForQuestionAnswering

### AutoModelForTextEncoding

[[autodoc]] AutoModelForTextEncoding

### TFAutoModelForTextEncoding

[[autodoc]] TFAutoModelForTextEncoding

## Computer vision

The following auto classes are available for the following computer vision tasks.

### AutoModelForDepthEstimation

[[autodoc]] AutoModelForDepthEstimation

### AutoModelForImageClassification

[[autodoc]] AutoModelForImageClassification

### TFAutoModelForImageClassification

[[autodoc]] TFAutoModelForImageClassification

### FlaxAutoModelForImageClassification

[[autodoc]] FlaxAutoModelForImageClassification

### AutoModelForVideoClassification

[[autodoc]] AutoModelForVideoClassification

### AutoModelForMaskedImageModeling

[[autodoc]] AutoModelForMaskedImageModeling

### TFAutoModelForMaskedImageModeling

[[autodoc]] TFAutoModelForMaskedImageModeling

### AutoModelForObjectDetection

[[autodoc]] AutoModelForObjectDetection

### AutoModelForImageSegmentation

[[autodoc]] AutoModelForImageSegmentation

### AutoModelForImageToImage

[[autodoc]] AutoModelForImageToImage

### AutoModelForSemanticSegmentation

[[autodoc]] AutoModelForSemanticSegmentation

### TFAutoModelForSemanticSegmentation

[[autodoc]] TFAutoModelForSemanticSegmentation

### AutoModelForInstanceSegmentation

[[autodoc]] AutoModelForInstanceSegmentation

### AutoModelForUniversalSegmentation

[[autodoc]] AutoModelForUniversalSegmentation

### AutoModelForZeroShotImageClassification

[[autodoc]] AutoModelForZeroShotImageClassification

### TFAutoModelForZeroShotImageClassification

[[autodoc]] TFAutoModelForZeroShotImageClassification

### AutoModelForZeroShotObjectDetection

[[autodoc]] AutoModelForZeroShotObjectDetection

## Audio

The following auto classes are available for the following audio tasks.

### AutoModelForAudioClassification

[[autodoc]] AutoModelForAudioClassification

### TFAutoModelForAudioClassification

[[autodoc]] TFAutoModelForAudioClassification

### AutoModelForAudioFrameClassification

[[autodoc]] AutoModelForAudioFrameClassification

### AutoModelForCTC

[[autodoc]] AutoModelForCTC

### AutoModelForSpeechSeq2Seq

[[autodoc]] AutoModelForSpeechSeq2Seq

### TFAutoModelForSpeechSeq2Seq

[[autodoc]] TFAutoModelForSpeechSeq2Seq

### FlaxAutoModelForSpeechSeq2Seq

[[autodoc]] FlaxAutoModelForSpeechSeq2Seq

### AutoModelForAudioXVector

[[autodoc]] AutoModelForAudioXVector

### AutoModelForTextToSpectrogram

[[autodoc]] AutoModelForTextToSpectrogram

### AutoModelForTextToWaveform

[[autodoc]] AutoModelForTextToWaveform

## Multimodal

The following auto classes are available for the following multimodal tasks.

### AutoModelForTableQuestionAnswering

[[autodoc]] AutoModelForTableQuestionAnswering

### TFAutoModelForTableQuestionAnswering

[[autodoc]] TFAutoModelForTableQuestionAnswering

### AutoModelForDocumentQuestionAnswering

[[autodoc]] AutoModelForDocumentQuestionAnswering

### TFAutoModelForDocumentQuestionAnswering

[[autodoc]] TFAutoModelForDocumentQuestionAnswering

### AutoModelForVisualQuestionAnswering

[[autodoc]] AutoModelForVisualQuestionAnswering

### AutoModelForVision2Seq

[[autodoc]] AutoModelForVision2Seq

### TFAutoModelForVision2Seq

[[autodoc]] TFAutoModelForVision2Seq

### FlaxAutoModelForVision2Seq

[[autodoc]] FlaxAutoModelForVision2Seq

| huggingface/transformers/blob/main/docs/source/en/model_doc/auto.md |

# Gradio Demo: gif_maker

```

!pip install -q gradio opencv-python

```

```

import cv2
import gradio as gr


def gif_maker(img_files):
    # Read every uploaded image; the last image's dimensions set the video size.
    img_array = []
    for filename in img_files:
        img = cv2.imread(filename.name)
        height, width, _ = img.shape
        size = (width, height)
        img_array.append(img)

    # Write the frames to a video file at 15 frames per second.
    output_file = "test.mp4"
    out = cv2.VideoWriter(output_file, cv2.VideoWriter_fourcc(*'h264'), 15, size)
    for i in range(len(img_array)):
        out.write(img_array[i])
    out.release()
    return output_file


demo = gr.Interface(gif_maker, inputs=gr.File(file_count="multiple"), outputs=gr.Video())

if __name__ == "__main__":
    demo.launch()

```

| gradio-app/gradio/blob/main/demo/gif_maker/run.ipynb |

# SWSL ResNet

**Residual Networks**, or **ResNets**, learn residual functions with reference to the layer inputs, instead of learning unreferenced functions. Instead of hoping each few stacked layers directly fit a desired underlying mapping, residual nets let these layers fit a residual mapping. They stack [residual blocks](https://paperswithcode.com/method/residual-block) on top of each other to form a network: e.g. a ResNet-50 has fifty layers using these blocks.
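As a rough illustration of what "fitting a residual mapping" means, the sketch below computes `x + f(x)` on plain Python lists. It is a toy, not timm's block: `residual_block` and `zero_branch` are hypothetical names, and `f` stands in for the stacked layers.

```python
def residual_block(x, f):
    """Return x + f(x) element-wise, where f plays the role of the stacked layers."""
    fx = f(x)
    return [xi + fi for xi, fi in zip(x, fx)]


def zero_branch(v):
    """A branch that has learned nothing yet: it outputs zeros."""
    return [0.0 for _ in v]


# With a zero residual branch the block is exactly the identity mapping.
print(residual_block([1.0, -2.0, 3.0], zero_branch))  # [1.0, -2.0, 3.0]
```

This is why the identity is easy for such a block to represent: the layers only have to output zero, rather than reproduce their input.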

The models in this collection utilise semi-weakly supervised learning to improve the performance of the model. The approach brings important gains to standard architectures for image, video and fine-grained classification.

Please note the CC-BY-NC 4.0 license on these weights, non-commercial use only.

## How do I use this model on an image?

To load a pretrained model:

```python

import timm

model = timm.create_model('swsl_resnet18', pretrained=True)

model.eval()

```

To load and preprocess the image:

```python

import urllib.request

from PIL import Image

from timm.data import resolve_data_config

from timm.data.transforms_factory import create_transform

config = resolve_data_config({}, model=model)

transform = create_transform(**config)

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")

urllib.request.urlretrieve(url, filename)

img = Image.open(filename).convert('RGB')

tensor = transform(img).unsqueeze(0) # transform and add batch dimension

```

To get the model predictions:

```python

import torch

with torch.no_grad():
    out = model(tensor)

probabilities = torch.nn.functional.softmax(out[0], dim=0)

print(probabilities.shape)

# prints: torch.Size([1000])

```

To get the top-5 predictions class names:

```python

# Get imagenet class mappings

url, filename = ("https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt", "imagenet_classes.txt")

urllib.request.urlretrieve(url, filename)

with open("imagenet_classes.txt", "r") as f:
    categories = [s.strip() for s in f.readlines()]

# Print top categories per image

top5_prob, top5_catid = torch.topk(probabilities, 5)

for i in range(top5_prob.size(0)):
    print(categories[top5_catid[i]], top5_prob[i].item())

# prints class names and probabilities like:

# [('Samoyed', 0.6425196528434753), ('Pomeranian', 0.04062102362513542), ('keeshond', 0.03186424449086189), ('white wolf', 0.01739676296710968), ('Eskimo dog', 0.011717947199940681)]

```

Replace the model name with the variant you want to use, e.g. `swsl_resnet18`. You can find the IDs in the model summaries at the top of this page.

To extract image features with this model, follow the [timm feature extraction examples](https://rwightman.github.io/pytorch-image-models/feature_extraction/), just change the name of the model you want to use.

## How do I finetune this model?

You can finetune any of the pre-trained models just by changing the classifier (the last layer).

```python

model = timm.create_model('swsl_resnet18', pretrained=True, num_classes=NUM_FINETUNE_CLASSES)

```

To finetune on your own dataset, you have to write a training loop or adapt [timm's training

script](https://github.com/rwightman/pytorch-image-models/blob/master/train.py) to use your dataset.

## How do I train this model?

You can follow the [timm recipe scripts](https://rwightman.github.io/pytorch-image-models/scripts/) for training a new model afresh.

## Citation

```BibTeX

@article{DBLP:journals/corr/abs-1905-00546,

author = {I. Zeki Yalniz and

Herv{\'{e}} J{\'{e}}gou and

Kan Chen and

Manohar Paluri and

Dhruv Mahajan},

title = {Billion-scale semi-supervised learning for image classification},

journal = {CoRR},

volume = {abs/1905.00546},

year = {2019},

url = {http://arxiv.org/abs/1905.00546},

archivePrefix = {arXiv},

eprint = {1905.00546},

timestamp = {Mon, 28 Sep 2020 08:19:37 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-1905-00546.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<!--

Type: model-index

Collections:

- Name: SWSL ResNet

Paper:

Title: Billion-scale semi-supervised learning for image classification

URL: https://paperswithcode.com/paper/billion-scale-semi-supervised-learning-for

Models:

- Name: swsl_resnet18

In Collection: SWSL ResNet

Metadata:

FLOPs: 2337073152

Parameters: 11690000

File Size: 46811375

Architecture:

- 1x1 Convolution

- Batch Normalization

- Bottleneck Residual Block

- Convolution

- Global Average Pooling

- Max Pooling

- ReLU

- Residual Block

- Residual Connection

- Softmax

Tasks:

- Image Classification

Training Techniques:

- SGD with Momentum

- Weight Decay

Training Data:

- IG-1B-Targeted

- ImageNet

Training Resources: 64x GPUs

ID: swsl_resnet18

LR: 0.0015

Epochs: 30

Layers: 18

Crop Pct: '0.875'

Batch Size: 1536

Image Size: '224'

Weight Decay: 0.0001

Interpolation: bilinear

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/resnet.py#L954

Weights: https://dl.fbaipublicfiles.com/semiweaksupervision/model_files/semi_weakly_supervised_resnet18-118f1556.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 73.28%

Top 5 Accuracy: 91.76%

- Name: swsl_resnet50

In Collection: SWSL ResNet

Metadata:

FLOPs: 5282531328

Parameters: 25560000

File Size: 102480594

Architecture:

- 1x1 Convolution

- Batch Normalization

- Bottleneck Residual Block

- Convolution

- Global Average Pooling

- Max Pooling

- ReLU

- Residual Block

- Residual Connection

- Softmax

Tasks:

- Image Classification

Training Techniques:

- SGD with Momentum

- Weight Decay

Training Data:

- IG-1B-Targeted

- ImageNet

Training Resources: 64x GPUs

ID: swsl_resnet50

LR: 0.0015

Epochs: 30

Layers: 50

Crop Pct: '0.875'

Batch Size: 1536

Image Size: '224'

Weight Decay: 0.0001

Interpolation: bilinear

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/resnet.py#L965

Weights: https://dl.fbaipublicfiles.com/semiweaksupervision/model_files/semi_weakly_supervised_resnet50-16a12f1b.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 81.14%

Top 5 Accuracy: 95.97%

--> | huggingface/pytorch-image-models/blob/main/docs/models/swsl-resnet.md |

<!--Copyright 2020 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# CamemBERT

## Overview

The CamemBERT model was proposed in [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by

Louis Martin, Benjamin Muller, Pedro Javier Ortiz Suárez, Yoann Dupont, Laurent Romary, Éric Villemonte de la

Clergerie, Djamé Seddah, and Benoît Sagot. It is based on Facebook's RoBERTa model released in 2019. It is a model

trained on 138GB of French text.

The abstract from the paper is the following:

*Pretrained language models are now ubiquitous in Natural Language Processing. Despite their success, most available

models have either been trained on English data or on the concatenation of data in multiple languages. This makes

practical use of such models --in all languages except English-- very limited. Aiming to address this issue for French,

we release CamemBERT, a French version of the Bi-directional Encoders for Transformers (BERT). We measure the

performance of CamemBERT compared to multilingual models in multiple downstream tasks, namely part-of-speech tagging,

dependency parsing, named-entity recognition, and natural language inference. CamemBERT improves the state of the art

for most of the tasks considered. We release the pretrained model for CamemBERT hoping to foster research and

downstream applications for French NLP.*

This model was contributed by [camembert](https://huggingface.co/camembert). The original code can be found [here](https://camembert-model.fr/).

<Tip>

This implementation is the same as RoBERTa. Refer to the [documentation of RoBERTa](roberta) for usage examples as well

as the information relative to the inputs and outputs.

</Tip>

## Resources

- [Text classification task guide](../tasks/sequence_classification)

- [Token classification task guide](../tasks/token_classification)

- [Question answering task guide](../tasks/question_answering)

- [Causal language modeling task guide](../tasks/language_modeling)

- [Masked language modeling task guide](../tasks/masked_language_modeling)

- [Multiple choice task guide](../tasks/multiple_choice)

## CamembertConfig

[[autodoc]] CamembertConfig

## CamembertTokenizer

[[autodoc]] CamembertTokenizer

- build_inputs_with_special_tokens

- get_special_tokens_mask

- create_token_type_ids_from_sequences

- save_vocabulary

## CamembertTokenizerFast

[[autodoc]] CamembertTokenizerFast

<frameworkcontent>

<pt>

## CamembertModel

[[autodoc]] CamembertModel

## CamembertForCausalLM

[[autodoc]] CamembertForCausalLM

## CamembertForMaskedLM

[[autodoc]] CamembertForMaskedLM

## CamembertForSequenceClassification

[[autodoc]] CamembertForSequenceClassification

## CamembertForMultipleChoice

[[autodoc]] CamembertForMultipleChoice

## CamembertForTokenClassification

[[autodoc]] CamembertForTokenClassification

## CamembertForQuestionAnswering

[[autodoc]] CamembertForQuestionAnswering

</pt>

<tf>

## TFCamembertModel

[[autodoc]] TFCamembertModel

## TFCamembertForCausalLM

[[autodoc]] TFCamembertForCausalLM

## TFCamembertForMaskedLM

[[autodoc]] TFCamembertForMaskedLM

## TFCamembertForSequenceClassification

[[autodoc]] TFCamembertForSequenceClassification

## TFCamembertForMultipleChoice

[[autodoc]] TFCamembertForMultipleChoice

## TFCamembertForTokenClassification

[[autodoc]] TFCamembertForTokenClassification

## TFCamembertForQuestionAnswering

[[autodoc]] TFCamembertForQuestionAnswering

</tf>

</frameworkcontent>

| huggingface/transformers/blob/main/docs/source/en/model_doc/camembert.md |

# (Tensorflow) EfficientNet

**EfficientNet** is a convolutional neural network architecture and scaling method that uniformly scales all dimensions of depth/width/resolution using a *compound coefficient*. Unlike conventional practice that arbitrarily scales these factors, the EfficientNet scaling method uniformly scales network width, depth, and resolution with a set of fixed scaling coefficients. For example, if we want to use $2^N$ times more computational resources, then we can simply increase the network depth by $\alpha ^ N$, width by $\beta ^ N$, and image size by $\gamma ^ N$, where $\alpha, \beta, \gamma$ are constant coefficients determined by a small grid search on the original small model. EfficientNet uses a compound coefficient $\phi$ to uniformly scale network width, depth, and resolution in a principled way.

The compound scaling method is justified by the intuition that if the input image is bigger, then the network needs more layers to increase the receptive field and more channels to capture more fine-grained patterns on the bigger image.
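The scaling rule can be worked through numerically. The sketch below assumes the coefficients reported in the EfficientNet paper ($\alpha = 1.2$, $\beta = 1.1$, $\gamma = 1.15$) and the paper's approximation that FLOPs grow with depth times width squared times resolution squared; neither is stated on this page, and `compound_scale` is a hypothetical helper.

```python
# Coefficients from the EfficientNet paper's grid search (assumption: not given on this page).
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_scale(phi):
    """Return (depth, width, resolution, flops) multipliers for compound coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = GAMMA ** phi
    # FLOPs are assumed proportional to depth * width^2 * resolution^2.
    flops = depth * width ** 2 * resolution ** 2
    return depth, width, resolution, flops


for phi in range(4):
    d, w, r, f = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, resolution x{r:.2f}, FLOPs x{f:.2f}")
```

Since $\alpha \cdot \beta^2 \cdot \gamma^2 \approx 1.92$, each unit increase of $\phi$ roughly doubles the compute, which matches the $2^N$ budget described above.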

The base EfficientNet-B0 network is based on the inverted bottleneck residual blocks of [MobileNetV2](https://paperswithcode.com/method/mobilenetv2), in addition to [squeeze-and-excitation blocks](https://paperswithcode.com/method/squeeze-and-excitation-block).

The weights from this model were ported from [Tensorflow/TPU](https://github.com/tensorflow/tpu).

## How do I use this model on an image?

To load a pretrained model:

```python

import timm

model = timm.create_model('tf_efficientnet_b0', pretrained=True)

model.eval()

```

To load and preprocess the image:

```python

import urllib.request

from PIL import Image

from timm.data import resolve_data_config

from timm.data.transforms_factory import create_transform

config = resolve_data_config({}, model=model)

transform = create_transform(**config)

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")

urllib.request.urlretrieve(url, filename)

img = Image.open(filename).convert('RGB')

tensor = transform(img).unsqueeze(0) # transform and add batch dimension

```

To get the model predictions:

```python

import torch

with torch.no_grad():
    out = model(tensor)

probabilities = torch.nn.functional.softmax(out[0], dim=0)

print(probabilities.shape)

# prints: torch.Size([1000])

```

To get the top-5 predictions class names:

```python

# Get imagenet class mappings

url, filename = ("https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt", "imagenet_classes.txt")

urllib.request.urlretrieve(url, filename)

with open("imagenet_classes.txt", "r") as f:
    categories = [s.strip() for s in f.readlines()]

# Print top categories per image

top5_prob, top5_catid = torch.topk(probabilities, 5)

for i in range(top5_prob.size(0)):
    print(categories[top5_catid[i]], top5_prob[i].item())

# prints one class name and probability per line, like:
# Samoyed 0.6425196528434753
# Pomeranian 0.04062102362513542
# keeshond 0.03186424449086189
# white wolf 0.01739676296710968
# Eskimo dog 0.011717947199940681

```

Replace the model name with the variant you want to use, e.g. `tf_efficientnet_b0`. You can find the IDs in the model summaries at the top of this page.

To extract image features with this model, follow the [timm feature extraction examples](https://rwightman.github.io/pytorch-image-models/feature_extraction/), changing only the model name to the one you want to use.

## How do I finetune this model?

You can finetune any of the pre-trained models just by changing the classifier (the last layer).

```python

model = timm.create_model('tf_efficientnet_b0', pretrained=True, num_classes=NUM_FINETUNE_CLASSES)

```

To finetune on your own dataset, you have to write a training loop or adapt [timm's training script](https://github.com/rwightman/pytorch-image-models/blob/master/train.py) to use your dataset.

## How do I train this model?

You can follow the [timm recipe scripts](https://rwightman.github.io/pytorch-image-models/scripts/) for training a new model afresh.

## Citation

```BibTeX

@misc{tan2020efficientnet,

title={EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks},

author={Mingxing Tan and Quoc V. Le},

year={2020},

eprint={1905.11946},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

```

<!--

Type: model-index

Collections:

- Name: TF EfficientNet

Paper:

Title: 'EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks'

URL: https://paperswithcode.com/paper/efficientnet-rethinking-model-scaling-for

Models:

- Name: tf_efficientnet_b0

In Collection: TF EfficientNet

Metadata:

FLOPs: 488688572

Parameters: 5290000

File Size: 21383997

Architecture:

- 1x1 Convolution

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inverted Residual Block

- Squeeze-and-Excitation Block

- Swish

Tasks:

- Image Classification

Training Techniques:

- AutoAugment

- Label Smoothing

- RMSProp

- Stochastic Depth

- Weight Decay

Training Data:

- ImageNet

Training Resources: TPUv3 Cloud TPU

ID: tf_efficientnet_b0

LR: 0.256

Epochs: 350

Crop Pct: '0.875'

Momentum: 0.9

Batch Size: 2048

Image Size: '224'

Weight Decay: 1.0e-05

Interpolation: bicubic

RMSProp Decay: 0.9

Label Smoothing: 0.1

BatchNorm Momentum: 0.99

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/efficientnet.py#L1241

Weights: https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-weights/tf_efficientnet_b0_aa-827b6e33.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 76.85%

Top 5 Accuracy: 93.23%

- Name: tf_efficientnet_b1

In Collection: TF EfficientNet

Metadata:

FLOPs: 883633200

Parameters: 7790000

File Size: 31512534

Architecture:

- 1x1 Convolution

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inverted Residual Block

- Squeeze-and-Excitation Block

- Swish

Tasks:

- Image Classification

Training Techniques:

- AutoAugment

- Label Smoothing

- RMSProp

- Stochastic Depth

- Weight Decay

Training Data:

- ImageNet

ID: tf_efficientnet_b1

LR: 0.256

Epochs: 350

Crop Pct: '0.882'

Momentum: 0.9

Batch Size: 2048

Image Size: '240'

Weight Decay: 1.0e-05

Interpolation: bicubic

RMSProp Decay: 0.9

Label Smoothing: 0.1

BatchNorm Momentum: 0.99

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/efficientnet.py#L1251

Weights: https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-weights/tf_efficientnet_b1_aa-ea7a6ee0.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 78.84%

Top 5 Accuracy: 94.2%

- Name: tf_efficientnet_b2

In Collection: TF EfficientNet

Metadata:

FLOPs: 1234321170

Parameters: 9110000

File Size: 36797929

Architecture:

- 1x1 Convolution

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inverted Residual Block

- Squeeze-and-Excitation Block

- Swish

Tasks:

- Image Classification

Training Techniques:

- AutoAugment

- Label Smoothing

- RMSProp

- Stochastic Depth

- Weight Decay

Training Data:

- ImageNet

ID: tf_efficientnet_b2

LR: 0.256

Epochs: 350

Crop Pct: '0.89'

Momentum: 0.9

Batch Size: 2048

Image Size: '260'

Weight Decay: 1.0e-05

Interpolation: bicubic

RMSProp Decay: 0.9

Label Smoothing: 0.1

BatchNorm Momentum: 0.99

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/efficientnet.py#L1261

Weights: https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-weights/tf_efficientnet_b2_aa-60c94f97.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 80.07%

Top 5 Accuracy: 94.9%

- Name: tf_efficientnet_b3

In Collection: TF EfficientNet

Metadata:

FLOPs: 2275247568

Parameters: 12230000

File Size: 49381362

Architecture:

- 1x1 Convolution

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inverted Residual Block

- Squeeze-and-Excitation Block

- Swish

Tasks:

- Image Classification

Training Techniques:

- AutoAugment

- Label Smoothing

- RMSProp

- Stochastic Depth

- Weight Decay

Training Data:

- ImageNet

ID: tf_efficientnet_b3

LR: 0.256

Epochs: 350

Crop Pct: '0.904'

Momentum: 0.9

Batch Size: 2048

Image Size: '300'

Weight Decay: 1.0e-05

Interpolation: bicubic

RMSProp Decay: 0.9

Label Smoothing: 0.1

BatchNorm Momentum: 0.99

Code: https://github.com/rwightman/pytorch-image-models/blob/9a25fdf3ad0414b4d66da443fe60ae0aa14edc84/timm/models/efficientnet.py#L1271

Weights: https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-weights/tf_efficientnet_b3_aa-84b4657e.pth

Results:

- Task: Image Classification

Dataset: ImageNet

Metrics:

Top 1 Accuracy: 81.65%

Top 5 Accuracy: 95.72%

- Name: tf_efficientnet_b4

In Collection: TF EfficientNet

Metadata:

FLOPs: 5749638672

Parameters: 19340000

File Size: 77989689

Architecture:

- 1x1 Convolution

- Average Pooling

- Batch Normalization

- Convolution

- Dense Connections

- Dropout

- Inverted Residual Block

- Squeeze-and-Excitation Block

- Swish

Tasks:

- Image Classification

Training Techniques:

- AutoAugment

- Label Smoothing

- RMSProp

- Stochastic Depth

- Weight Decay

Training Data:

- ImageNet

Training Resources: TPUv3 Cloud TPU

ID: tf_efficientnet_b4

LR: 0.256

Epochs: 350

Crop Pct: '0.922'

Momentum: 0.9

Batch Size: 2048

Image Size: '380'

Weight Decay: 1.0e-05

Interpolation: bicubic

RMSProp Decay: 0.9

Label Smoothing: 0.1

BatchNorm Momentum: 0.99