data-intermediate

If you are looking for our test-ready version, please refer to mango-ttic/data.

Find more about us at mango.ttic.edu

Folder Structure

Each folder inside data-intermediate contains all intermediate files we used during data annotation and generation. Here is the tree structure for the game night, under data-intermediate/night.

data-intermediate/night/

├── night.all2all.json # all simple paths between any 2 nodes

├── night.all_pairs.json # all connectivity between any 2 nodes

├── night.anno2code.json # annotation to codename mapping

├── night.code2anno.json # codename to annotation mapping

├── night.edges.json # list of all edges

├── night.map.human # human map derived from human annotation

├── night.map.machine # machine map derived from exported action sequences

├── night.map.reversed # reverse map derived from human annotation map

├── night.moves # list of mentioned actions

├── night.nodes.json # list of all nodes

├── night.valid_moves.csv # human annotation

├── night.walkthrough # enriched walkthrough exported from Jericho simulator

└── night.walkthrough_acts # action sequences exported from Jericho simulator
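
As a quick sanity check after extraction, the listing above can be turned into a small completeness test. This is only a sketch: the function name check_game_files is hypothetical, and it assumes every game folder follows the same <game>.<suffix> naming shown for night.

```shell
#!/bin/sh
# File suffixes copied from the tree listing for data-intermediate/night.
SUFFIXES="all2all.json all_pairs.json anno2code.json code2anno.json edges.json map.human map.machine map.reversed moves nodes.json valid_moves.csv walkthrough walkthrough_acts"

# check_game_files ROOT GAME  (hypothetical helper)
# Prints one line per missing file; returns non-zero if anything is missing.
check_game_files() {
    root=$1
    game=$2
    missing=0
    for suffix in $SUFFIXES; do
        path="$root/$game/$game.$suffix"
        if [ ! -f "$path" ]; then
            echo "missing: $path"
            missing=1
        fi
    done
    return "$missing"
}

# Example: check_game_files data-intermediate night
```

The function stays silent when all thirteen files are present, so it can be looped over every game folder without producing noise.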

Variations

70-step vs all-step version

In our paper, we benchmark using the first 70 steps of the walkthrough from each game. We also provide all-step versions of both the data and data-intermediate collections.

70-step

data-intermediate-70steps.tar.zst: contains the first 70 steps of each walkthrough. If the complete walkthrough is shorter than 70 steps, then all steps are used.

All-step

data-intermediate.tar.zst: contains all steps of each walkthrough.

Word-only & Word+ID

Word-only

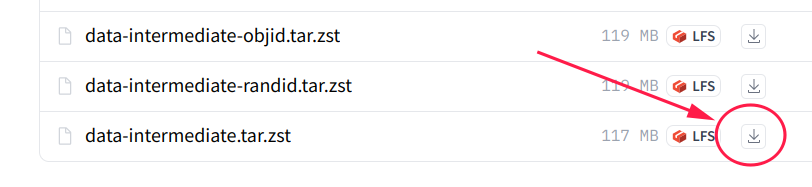

data-intermediate.tar.zst: nodes are annotated with additional descriptive text to distinguish different locations with similar names.

Word + Object ID

data-intermediate-objid.tar.zst: variation of the word-only version, where nodes are labeled using minimally fixed names with the object ID from the Jericho simulator.

Word + Random ID

data-intermediate-randid.tar.zst: variation of the Jericho ID version, where the Jericho object ID is replaced with a randomly generated integer.

We primarily rely on the word-only version as the benchmark, and provide the word+ID versions for more diverse benchmark settings.

How to use

We use data-intermediate.tar.zst as an example here.

1. download from Hugging Face

by direct download

You can selectively download only the variation of your choice.
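
For a scripted direct download, something like the following may work. It is a sketch under assumptions: it relies on the usual Hugging Face URL layout for dataset files (https://huggingface.co/datasets/<repo>/resolve/main/<file>) and on the archives sitting at the top level of the main branch. The helper names hf_file_url and download_variation are hypothetical.

```shell
#!/bin/sh
REPO="mango-ttic/data-intermediate"

# hf_file_url FILE  (hypothetical helper)
# Prints the assumed direct-download URL for FILE in the dataset repo.
hf_file_url() {
    echo "https://huggingface.co/datasets/$REPO/resolve/main/$1"
}

# download_variation FILE  (hypothetical helper)
# Follows redirects (-L) and fails on HTTP errors (--fail)
# instead of saving an error page as the archive.
download_variation() {
    curl -L --fail -o "$1" "$(hf_file_url "$1")"
}

# Example: download_variation data-intermediate-70steps.tar.zst
```

If the download fails, check the file name and branch on the dataset page before retrying, since the URL layout here is assumed rather than taken from this README.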

by git

Make sure you have git-lfs installed

git lfs install

git clone https://huggingface.co/datasets/mango-ttic/data-intermediate

# or, use hf-mirror if your connection to huggingface.co is slow

# git clone https://hf-mirror.com/datasets/mango-ttic/data-intermediate

If you want to clone without the large files (just their pointers)

GIT_LFS_SKIP_SMUDGE=1 git clone https://huggingface.co/datasets/mango-ttic/data-intermediate

# or, use hf-mirror if your connection to huggingface.co is slow

# GIT_LFS_SKIP_SMUDGE=1 git clone https://hf-mirror.com/datasets/mango-ttic/data-intermediate
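
After a pointer-only clone, a single archive can be fetched later with git lfs pull --include. The sketch below also checks whether a file is still a pointer by looking for the Git LFS pointer header. The helper names is_lfs_pointer and fetch_lfs_file are hypothetical, and the example assumes the archive sits at the top level of the clone.

```shell
#!/bin/sh
# is_lfs_pointer FILE  (hypothetical helper)
# Succeeds if FILE is still a Git LFS pointer, i.e. it starts with
# the pointer header line rather than real archive data.
is_lfs_pointer() {
    head -c 64 "$1" | grep -q '^version https://git-lfs.github.com/spec/v1'
}

# fetch_lfs_file REPO_DIR FILE  (hypothetical helper)
# Downloads the real content of FILE only, leaving other pointers untouched.
fetch_lfs_file() {
    git -C "$1" lfs pull --include="$2"
}

# Example:
#   is_lfs_pointer data-intermediate/data-intermediate.tar.zst \
#       && fetch_lfs_file data-intermediate data-intermediate.tar.zst
```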

2. decompress

Because some JSON files are huge, we use tar.zst to package the data efficiently.

silently decompress

tar -I 'zstd -d' -xf data-intermediate.tar.zst

or, verbosely decompress

zstd -d -c data-intermediate.tar.zst | tar -xvf -
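
If only one game is needed, the archive can be listed first and then partially extracted. This is a sketch that assumes members are stored under data-intermediate/<game>/, which the listing should confirm before extracting. The helper names list_archive and extract_game are hypothetical.

```shell
#!/bin/sh
# list_archive ARCHIVE  (hypothetical helper)
# Prints the member paths without extracting anything.
list_archive() {
    zstd -d -c "$1" | tar -tf -
}

# extract_game ARCHIVE GAME  (hypothetical helper)
# Extracts only the folder for GAME, assuming the member prefix
# data-intermediate/<game> matches what list_archive reports.
extract_game() {
    zstd -d -c "$1" | tar -xf - "data-intermediate/$2"
}

# Example:
#   list_archive data-intermediate.tar.zst | head
#   extract_game data-intermediate.tar.zst night
```

Because tar matches member names literally, a different prefix in the listing (for example a leading ./) means the path passed to tar has to be adjusted accordingly.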