---
library_name: transformers
license: mit
base_model: openai-community/gpt2
tags:
- generated_from_trainer
model-index:
- name: arabic-nano-gpt-v2
  results: []
datasets:
- wikimedia/wikipedia
language:
- ar
---

arabic-nano-gpt-v2

This model is a fine-tuned version of openai-community/gpt2 on the Arabic subset of the wikimedia/wikipedia dataset.

Repository on GitHub: e-hossam96/arabic-nano-gpt

The model achieves the following results on the held-out test set:

- Loss: 3.25564
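The loss can be converted to perplexity by exponentiating it. This assumes the reported loss is the mean per-token cross-entropy in nats, which is the natural-log cross-entropy that the GPT-2 language-modeling head in transformers computes. A minimal sketch:

```python
import math

# Held-out test loss reported above. Assumed to be the mean per-token
# cross-entropy in nats (natural log), as logged by the Trainer.
test_loss = 3.25564

# Perplexity is the exponential of the mean per-token cross-entropy.
perplexity = math.exp(test_loss)
print(f"perplexity: {perplexity:.2f}")  # prints "perplexity: 25.94"
```

Perplexity is computed per token, so this figure is only directly comparable to models that use the same tokenizer.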

How to Use

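```python
import torch
from transformers import pipeline

model_ckpt = "e-hossam96/arabic-nano-gpt-v2"

# Use a GPU if one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

lm = pipeline(task="text-generation", model=model_ckpt, device=device)

# Arabic prompt (English gloss): "A jet engine is an engine that ejects fluids
# (water or air) at very high speed to produce thrust, based on Newton's third
# law of motion. This broad definition of jet engines also includes"
# The trailing backslashes join the lines into a single string.
prompt = """المحرك النفاث هو محرك ينفث الموائع (الماء أو الهواء) بسرعة فائقة \
لينتج قوة دافعة اعتمادا على مبدأ قانون نيوتن الثالث للحركة. \
هذا التعريف الواسع للمحركات النفاثة يتضمن أيضا"""

# Generate up to 128 new tokens. By default, the returned text includes the prompt.
output = lm(prompt, max_new_tokens=128)

print(output[0]["generated_text"])
```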

Model description

- Embedding Size: 384

- Attention Heads: 6

- Attention Layers: 8
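As a rough check on model size, the sketch below derives the per-head dimension and counts the parameters in the transformer blocks. It assumes the default GPT-2 block layout in transformers: an MLP inner size of 4 × embedding size, biased linear layers, two LayerNorms per block, and a final LayerNorm. The helper `gpt2_block_params` is hypothetical. Token and position embeddings are excluded because this card does not state the vocabulary size or the context length.

```python
def gpt2_block_params(n_embd: int) -> int:
    """Parameters in one GPT-2-style block (assumes MLP inner size = 4 * n_embd)."""
    d = n_embd
    attn = (d * 3 * d + 3 * d) + (d * d + d)     # fused QKV projection + output projection
    mlp = (d * 4 * d + 4 * d) + (4 * d * d + d)  # expand to 4d, then project back to d
    layer_norms = 2 * (2 * d)                    # ln_1 and ln_2, each with weight + bias
    return attn + mlp + layer_norms              # equals 12*d^2 + 13*d


# Values from the model description above.
n_embd, n_head, n_layer = 384, 6, 8
assert n_embd % n_head == 0, "embedding size must split evenly across heads"
head_dim = n_embd // n_head  # dimensions per attention head

# All blocks plus the final LayerNorm (ln_f), excluding embeddings.
non_embedding = n_layer * gpt2_block_params(n_embd) + 2 * n_embd
print(head_dim, non_embedding)  # prints "64 14196480"
```

Under these assumptions, the blocks contribute about 14.2M parameters. The embedding matrices add vocabulary size × 384 plus context length × 384 on top of that.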

Training and evaluation data

The entire Arabic Wikipedia dataset was split into training, validation, and test sets using a 90/5/5 ratio.
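The card does not say how the split was performed. The following is a minimal, hypothetical sketch of a seeded 90/5/5 split over example indices. The helper name `split_90_5_5` and the use of seed 42 (borrowed from the training hyperparameters) are assumptions. In practice, the `datasets` library's `Dataset.train_test_split` can achieve the same result by being applied twice.

```python
import random


def split_90_5_5(n_examples: int, seed: int = 42):
    """Hypothetical helper: shuffle indices and cut them into 90/5/5 train/val/test parts."""
    indices = list(range(n_examples))
    random.Random(seed).shuffle(indices)  # seeded shuffle, so the split is reproducible
    n_train = n_examples * 90 // 100      # integer arithmetic avoids float rounding
    n_val = n_examples * 5 // 100
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]      # any remainder goes to the test split
    return train, val, test


train, val, test = split_90_5_5(1000)
print(len(train), len(val), len(test))  # prints "900 50 50"
```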

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.01

- num_epochs: 8
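The total batch size follows from the per-device batch size and the gradient accumulation steps. The learning-rate schedule is a linear warmup to the peak rate followed by a linear decay to zero. The sketch below re-implements that shape in plain Python, mirroring the shape of `get_linear_schedule_with_warmup` in transformers. The number of training steps (10,000) is a hypothetical value for illustration, and a single device is assumed because 32 × 8 already equals 256.

```python
import math

# Values from the hyperparameter list above.
learning_rate = 1e-4
train_batch_size = 32
gradient_accumulation_steps = 8
warmup_ratio = 0.01
num_devices = 1  # assumption: 32 * 8 = 256 implies a single device

total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

# Hypothetical step count; the real value depends on dataset size and epochs.
num_training_steps = 10_000
num_warmup_steps = math.ceil(warmup_ratio * num_training_steps)


def linear_lr(step: int) -> float:
    """Linear warmup from 0 to the peak rate, then linear decay to 0."""
    if step < num_warmup_steps:
        return learning_rate * step / max(1, num_warmup_steps)
    remaining = num_training_steps - step
    decay_span = max(1, num_training_steps - num_warmup_steps)
    return learning_rate * max(0.0, remaining / decay_span)


print(total_train_batch_size, num_warmup_steps)  # prints "256 100"
print(linear_lr(0), linear_lr(num_warmup_steps), linear_lr(num_training_steps))
```

In `TrainingArguments`, these settings correspond to `learning_rate`, `per_device_train_batch_size`, `gradient_accumulation_steps`, `lr_scheduler_type`, `warmup_ratio`, and `num_train_epochs`.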

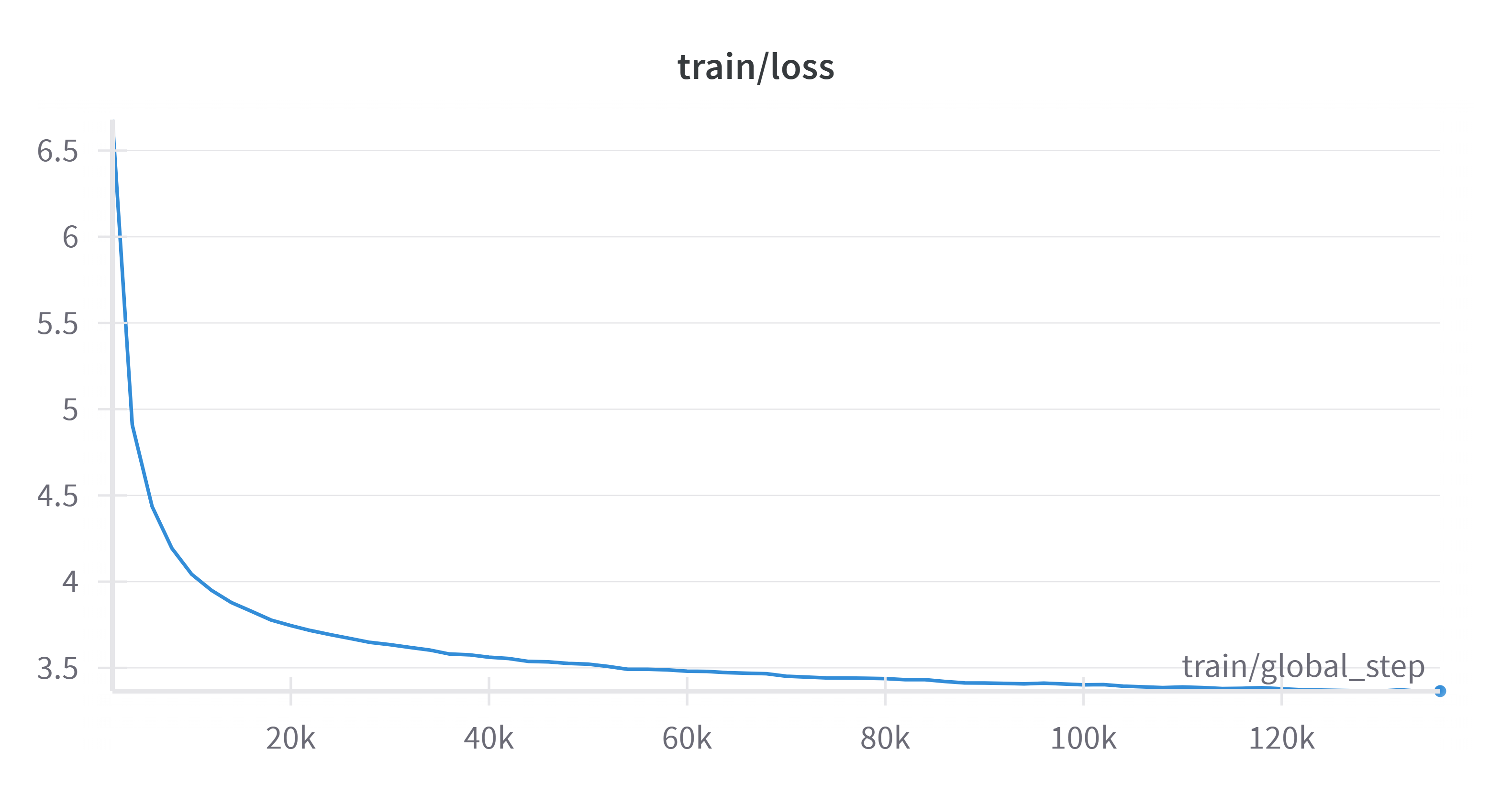

Training Loss

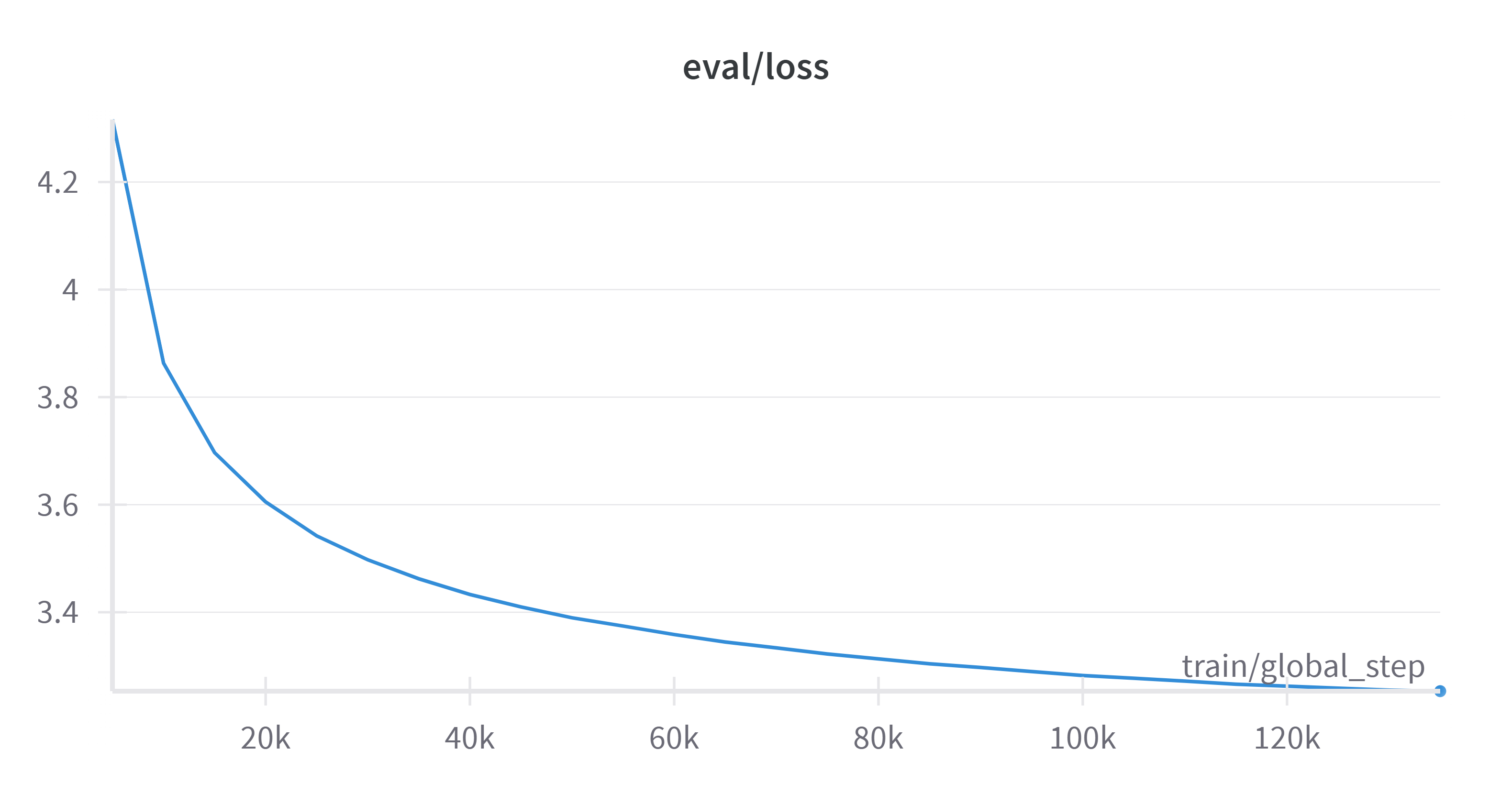

Validation Loss

Framework versions

- Transformers 4.45.2

- PyTorch 2.5.0

- Datasets 3.0.1

- Tokenizers 0.20.1