stable-diffusion-xl-base-1.0-GGUF

!!! Experimental: supported only by gpustack/llama-box v0.0.75+ !!!

Model creator: Stability AI

Original model: stable-diffusion-xl-base-1.0

GGUF quantization: based on stable-diffusion.cpp ac54e, as patched by llama-box.

VAE from: madebyollin/sdxl-vae-fp16-fix.

| Quantization | OpenAI CLIP ViT-L/14 Quantization | OpenCLIP ViT-G/14 Quantization | VAE Quantization |
|---|---|---|---|
| FP16 | FP16 | FP16 | FP16 |
| Q8_0 | FP16 | FP16 | FP16 |
| Q4_1 | FP16 | FP16 | FP16 |
| Q4_0 | FP16 | FP16 | FP16 |
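
As a rough guide to what the first column means for file size, the sketch below derives bits per weight from the ggml block layouts: Q8_0, Q4_0 and Q4_1 each pack 32 weights per block, with a 16-bit scale and, for Q4_1, an additional 16-bit minimum. The 2.6-billion-parameter count for the diffusion model is an assumption taken from the SDXL report, not a value read from these files, and real file sizes will differ because the text encoders and VAE stay in FP16 and some tensors may not be quantized.

```python
# Bytes used to store one block of 32 weights in each format.
# FP16 has no block structure; 32 weights * 2 bytes is used for comparison.
BLOCK_WEIGHTS = 32
BLOCK_BYTES = {
    "FP16": 64,  # 32 half-precision values
    "Q8_0": 34,  # 32 int8 values + one fp16 scale
    "Q4_1": 20,  # 32 4-bit values + fp16 scale + fp16 minimum
    "Q4_0": 18,  # 32 4-bit values + one fp16 scale
}


def bits_per_weight(quant: str) -> float:
    """Average storage cost of one weight, including block metadata."""
    return BLOCK_BYTES[quant] * 8 / BLOCK_WEIGHTS


def estimated_gib(quant: str, n_params: float) -> float:
    """Approximate size in GiB if every one of n_params weights used `quant`."""
    return n_params * bits_per_weight(quant) / 8 / 2**30


# Assumed parameter count (illustrative input, not read from a GGUF file).
ASSUMED_PARAMS = 2.6e9

for name in BLOCK_BYTES:
    print(f"{name}: {bits_per_weight(name):.1f} bits/weight, "
          f"~{estimated_gib(name, ASSUMED_PARAMS):.2f} GiB")
```

The point of the calculation is the ratio between rows rather than the absolute numbers: Q4_0 needs a little over a quarter of the FP16 storage for the quantized tensors.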

SD-XL 1.0-base Model Card

Model

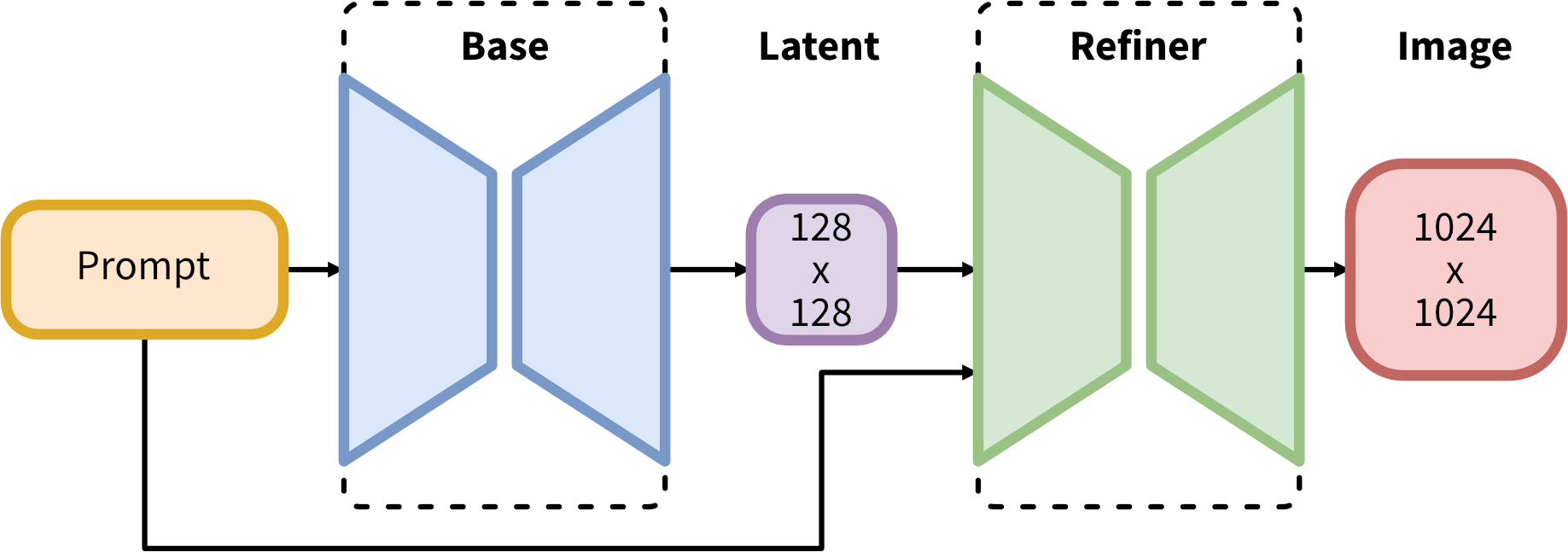

SDXL consists of an ensemble of experts pipeline for latent diffusion: In a first step, the base model is used to generate (noisy) latents, which are then further processed with a refinement model (available here: https://huggingface.co/stabilityai/stable-diffusion-xl-refiner-1.0/) specialized for the final denoising steps. Note that the base model can be used as a standalone module.

Alternatively, we can use a two-stage pipeline as follows: First, the base model is used to generate latents of the desired output size. In the second step, we use a specialized high-resolution model and apply a technique called SDEdit (https://arxiv.org/abs/2108.01073, also known as "img2img") to the latents generated in the first step, using the same prompt. This technique is slightly slower than the first one, as it requires more function evaluations.
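
To make the cost comparison concrete, the sketch below counts denoising function evaluations for the two approaches. The step count and the SDEdit strength are illustrative assumptions, and the rule that SDEdit runs about `strength * n_steps` evaluations is a simplification of how img2img pipelines typically truncate the schedule; the function names are hypothetical.

```python
def ensemble_evaluations(n_steps: int) -> int:
    """Base and refiner split one shared schedule, so the total is n_steps."""
    return n_steps


def two_stage_evaluations(n_steps: int, strength: float) -> int:
    """Base runs the full schedule; SDEdit then re-noises the result and
    denoises only the last `strength` fraction of a second schedule."""
    refine_steps = min(int(n_steps * strength), n_steps)
    return n_steps + refine_steps


n_steps = 40    # assumed number of inference steps
strength = 0.3  # assumed SDEdit / img2img strength

print("ensemble of experts:", ensemble_evaluations(n_steps))
print("two-stage (SDEdit): ", two_stage_evaluations(n_steps, strength))
```

Under these assumptions the two-stage pipeline always costs at least as many evaluations as the ensemble, which matches the "slightly slower" remark above.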

Source code is available at https://github.com/Stability-AI/generative-models .

Model Description

- Developed by: Stability AI

- Model type: Diffusion-based text-to-image generative model

- License: CreativeML Open RAIL++-M License

- Model Description: This is a model that can be used to generate and modify images based on text prompts. It is a Latent Diffusion Model that uses two fixed, pretrained text encoders (OpenCLIP-ViT/G and CLIP-ViT/L).

- Resources for more information: Check out our GitHub Repository and the SDXL report on arXiv.
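
The model card does not say how the two text encoders are combined. According to the SDXL report, their per-token outputs are concatenated along the channel axis before being passed to the diffusion model. The sketch below illustrates only that shape arithmetic with plain lists; the hidden sizes (768 and 1280) and the 77-token context length are assumptions based on the standard CLIP ViT-L and OpenCLIP ViT-bigG configurations, and the helper name is hypothetical.

```python
def concat_token_embeddings(clip_l, open_clip_g):
    """Concatenate two per-token embedding sequences along the feature axis."""
    if len(clip_l) != len(open_clip_g):
        raise ValueError("both encoders must produce the same number of tokens")
    return [a + b for a, b in zip(clip_l, open_clip_g)]


TOKENS = 77            # assumed CLIP context length
DIM_CLIP_L = 768       # assumed CLIP ViT-L hidden size
DIM_OPENCLIP_G = 1280  # assumed OpenCLIP ViT-bigG hidden size

# Dummy embeddings stand in for real encoder outputs.
clip_l = [[0.0] * DIM_CLIP_L for _ in range(TOKENS)]
open_clip_g = [[1.0] * DIM_OPENCLIP_G for _ in range(TOKENS)]

context = concat_token_embeddings(clip_l, open_clip_g)
print(len(context), len(context[0]))
```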

Model Sources

For research purposes, we recommend our generative-models Github repository (https://github.com/Stability-AI/generative-models), which implements the most popular diffusion frameworks (both training and inference) and for which new functionalities like distillation will be added over time.

Clipdrop provides free SDXL inference.

- Repository: https://github.com/Stability-AI/generative-models

- Demo: https://clipdrop.co/stable-diffusion

Evaluation

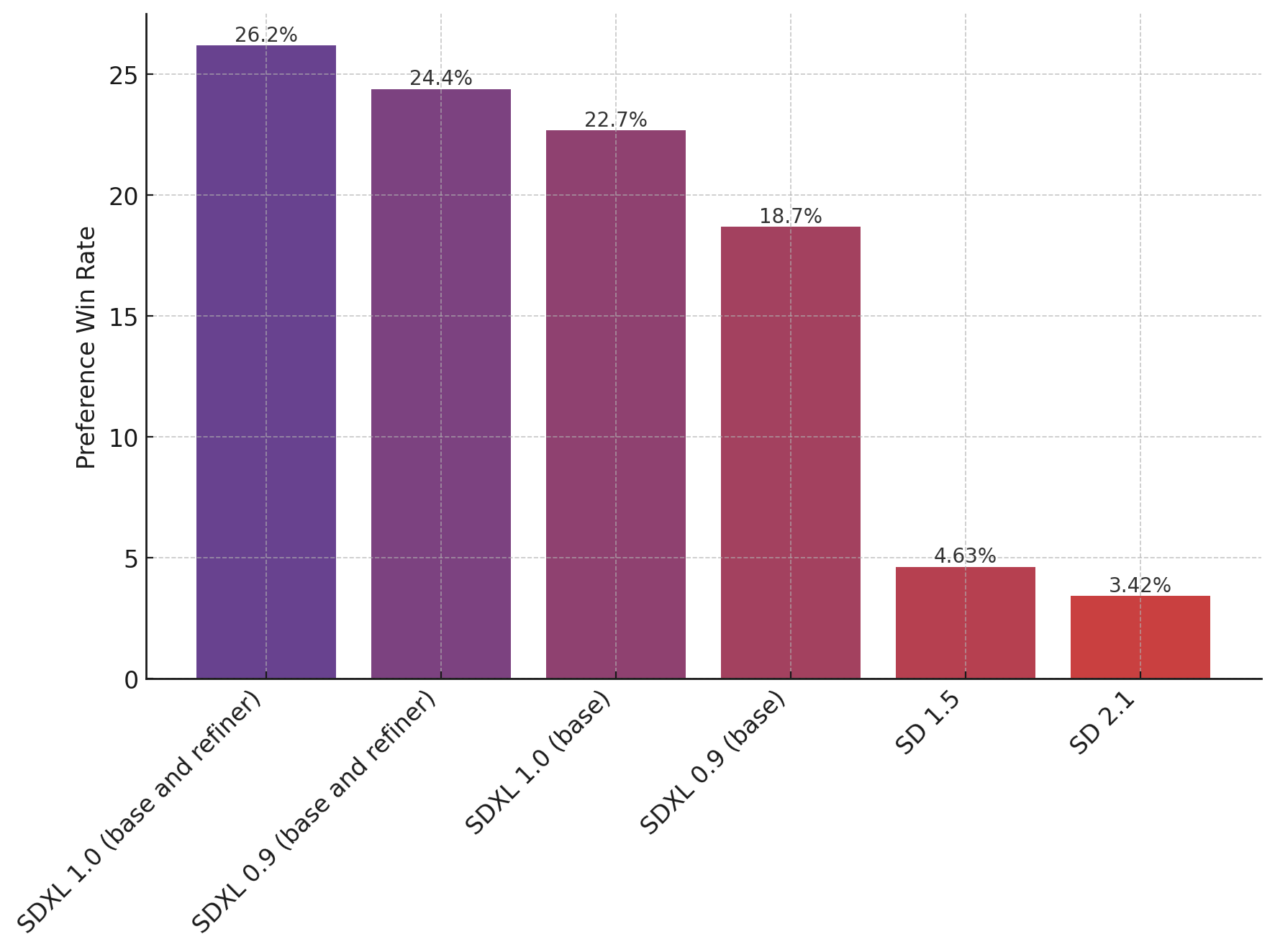

The chart above evaluates user preference for SDXL (with and without refinement) over SDXL 0.9 and Stable Diffusion 1.5 and 2.1.

The SDXL base model performs significantly better than the previous variants, and the model combined with the refinement module achieves the best overall performance.

🧨 Diffusers

Make sure to upgrade diffusers to >= 0.19.0:

pip install diffusers --upgrade
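
If you want to confirm the installed version from Python rather than from pip, a minimal check could look like the sketch below. It uses only the standard library; `parse_version` and `meets_minimum` are hypothetical helpers, and the comparison deliberately ignores pre-release suffixes such as `.dev0`, so treat it as a convenience rather than a full version parser.

```python
from importlib import metadata


def parse_version(text: str) -> tuple:
    """Turn '0.19.0' or '0.20.0.dev0' into a tuple of its leading integers."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first non-numeric component, e.g. 'dev0'
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str = "0.19.0") -> bool:
    """Compare numeric components, padding the shorter version with zeros."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


try:
    installed = metadata.version("diffusers")
    print(installed, "ok" if meets_minimum(installed) else "too old, please upgrade")
except metadata.PackageNotFoundError:
    print("diffusers is not installed")
```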

In addition, make sure to install transformers, safetensors, and accelerate, as well as the invisible watermark package:

pip install invisible_watermark transformers accelerate safetensors

To just use the base model, you can run:

from diffusers import DiffusionPipeline
import torch

# Load the base pipeline in half precision.
pipe = DiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16, use_safetensors=True, variant="fp16")
pipe.to("cuda")

# if using torch < 2.0
# pipe.enable_xformers_memory_efficient_attention()

prompt = "An astronaut riding a green horse"

# .images is a list; take the first (and only) generated image.
image = pipe(prompt=prompt).images[0]

To use the whole base + refiner pipeline as an ensemble of experts you can run:

from diffusers import DiffusionPipeline
import torch

# Load both base and refiner.
base = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16, variant="fp16", use_safetensors=True
)
base.to("cuda")

# The refiner reuses the base model's second text encoder and VAE.
refiner = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-refiner-1.0",
    text_encoder_2=base.text_encoder_2,
    vae=base.vae,
    torch_dtype=torch.float16,
    use_safetensors=True,
    variant="fp16",
)
refiner.to("cuda")

# Define the total number of steps and the fraction of steps run by each expert (80/20 here).
n_steps = 40
high_noise_frac = 0.8

prompt = "A majestic lion jumping from a big stone at night"

# Run both experts: the base stops early and hands its latents to the refiner.
image = base(
    prompt=prompt,
    num_inference_steps=n_steps,
    denoising_end=high_noise_frac,
    output_type="latent",
).images
image = refiner(
    prompt=prompt,
    num_inference_steps=n_steps,
    denoising_start=high_noise_frac,
    image=image,
).images[0]
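
The effect of n_steps and high_noise_frac above can be sketched with plain arithmetic. This is a simplification: the exact hand-off point depends on the scheduler's timestep spacing, and the helper name `split_steps` is hypothetical rather than part of diffusers.

```python
def split_steps(n_steps: int, high_noise_frac: float) -> tuple:
    """Return (base_steps, refiner_steps) for one shared schedule.

    The base handles the first `high_noise_frac` of the schedule (the
    high-noise part); the refiner finishes the remaining low-noise steps.
    """
    if not 0.0 <= high_noise_frac <= 1.0:
        raise ValueError("high_noise_frac must be between 0 and 1")
    base_steps = round(n_steps * high_noise_frac)
    return base_steps, n_steps - base_steps


base_steps, refiner_steps = split_steps(40, 0.8)
print(f"base: {base_steps} steps, refiner: {refiner_steps} steps")
```

Because both experts walk the same schedule, the total number of evaluations stays at n_steps regardless of where the split falls.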

When using torch >= 2.0, you can improve the inference speed by 20-30% with torch.compile. Simply wrap the unet with torch.compile before running the pipeline:

pipe.unet = torch.compile(pipe.unet, mode="reduce-overhead", fullgraph=True)

If you are limited by GPU VRAM, you can enable CPU offloading by calling pipe.enable_model_cpu_offload() instead of pipe.to("cuda"):

- pipe.to("cuda")
+ pipe.enable_model_cpu_offload()

For more information on how to use Stable Diffusion XL with diffusers, please have a look at the Stable Diffusion XL Docs.

Optimum

Optimum provides a Stable Diffusion pipeline compatible with both OpenVINO and ONNX Runtime.

OpenVINO

To install Optimum with the dependencies required for OpenVINO:

pip install optimum[openvino]

To load an OpenVINO model and run inference with OpenVINO Runtime, replace StableDiffusionXLPipeline with Optimum's OVStableDiffusionXLPipeline. If you want to load a PyTorch model and convert it to the OpenVINO format on the fly, set export=True.

- from diffusers import StableDiffusionXLPipeline
+ from optimum.intel import OVStableDiffusionXLPipeline

model_id = "stabilityai/stable-diffusion-xl-base-1.0"
- pipeline = StableDiffusionXLPipeline.from_pretrained(model_id)
+ pipeline = OVStableDiffusionXLPipeline.from_pretrained(model_id)
prompt = "A majestic lion jumping from a big stone at night"
image = pipeline(prompt).images[0]

You can find more examples (such as static reshaping and model compilation) in the Optimum documentation.

ONNX

To install Optimum with the dependencies required for ONNX Runtime inference:

pip install optimum[onnxruntime]

To load an ONNX model and run inference with ONNX Runtime, replace StableDiffusionXLPipeline with Optimum's ORTStableDiffusionXLPipeline. If you want to load a PyTorch model and convert it to the ONNX format on the fly, set export=True.

- from diffusers import StableDiffusionXLPipeline
+ from optimum.onnxruntime import ORTStableDiffusionXLPipeline

model_id = "stabilityai/stable-diffusion-xl-base-1.0"
- pipeline = StableDiffusionXLPipeline.from_pretrained(model_id)
+ pipeline = ORTStableDiffusionXLPipeline.from_pretrained(model_id)
prompt = "A majestic lion jumping from a big stone at night"
image = pipeline(prompt).images[0]

You can find more examples in the Optimum documentation.

Uses

Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

Excluded uses are described below.

Out-of-Scope Use

The model was not trained to produce factual or true representations of people or events, so using it to generate such content is out of scope for this model's abilities.

Limitations and Bias

Limitations

- The model does not achieve perfect photorealism.

- The model cannot render legible text.

- The model struggles with more difficult tasks that involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”.

- Faces and people in general may not be generated properly.

- The autoencoding part of the model is lossy.

Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.
