---
license: llama2
datasets:
- tatsu-lab/alpaca
- OpenAssistant/oasst1
language:
- zh
- en
library_name: transformers
tags:
- baichuan
- lora
pipeline_tag: text-generation
inference: false
---

A bilingual (Chinese and English) instruction-tuned LoRA model based on https://huggingface.co/meta-llama/Llama-2-13b-hf

- Instruction-following datasets used: alpaca, alpaca-zh, OpenAssistant (oasst1)

- Training framework: https://github.com/hiyouga/LLaMA-Efficient-Tuning

Usage:

from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer

tokenizer = AutoTokenizer.from_pretrained("hiyouga/Llama-2-Chinese-13b-chat")

model = AutoModelForCausalLM.from_pretrained("hiyouga/Llama-2-Chinese-13b-chat").cuda()

streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)

query = "晚上睡不着怎么办"

template = (

"A chat between a curious user and an artificial intelligence assistant. "

"The assistant gives helpful, detailed, and polite answers to the user's questions.\n"

"Human: {}\nAssistant: "

)

inputs = tokenizer([template.format(query)], return_tensors="pt")

inputs = inputs.to("cuda")

generate_ids = model.generate(**inputs, max_new_tokens=256, streamer=streamer)
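The prompt format used above can be wrapped in a small helper so that every query is formatted the same way. This is only a minimal sketch: `SYSTEM_PROMPT` and `build_prompt` are hypothetical names introduced here, not part of the model repository or of transformers, and the helper covers the single-turn format shown above only, since a multi-turn format is not documented here.

```python
# Hypothetical helper (not part of the model repo): reproduces the
# single-turn prompt template from the usage example above.
SYSTEM_PROMPT = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions.\n"
)


def build_prompt(query: str) -> str:
    """Return the system prompt followed by one Human/Assistant turn."""
    return f"{SYSTEM_PROMPT}Human: {query}\nAssistant: "


if __name__ == "__main__":
    # Print the full prompt for the example query used above.
    print(build_prompt("晚上睡不着怎么办"))
```

The result can be passed to the tokenizer in place of `template.format(query)`, for example `tokenizer([build_prompt(query)], return_tensors="pt")`.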

Alternatively, you can launch a CLI demo using the script in https://github.com/hiyouga/LLaMA-Efficient-Tuning:

python src/cli_demo.py --model_name_or_path hiyouga/Llama-2-Chinese-13b-chat

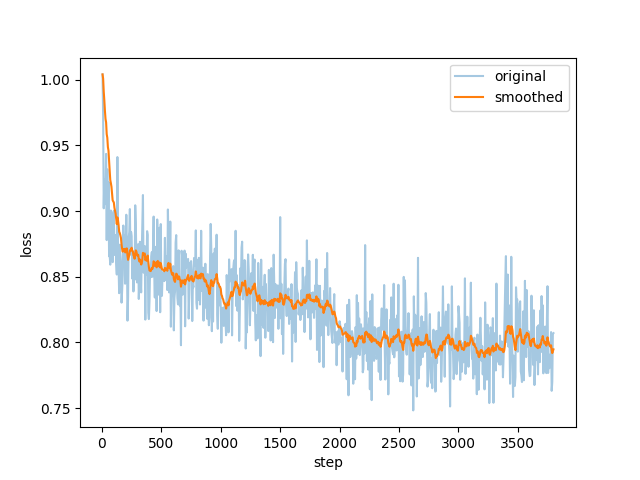

Loss curve: