---
license: apache-2.0
tags:
- Pytorch
- Geospatial
- Temporal ViT
- Vit
---

Model and Inputs

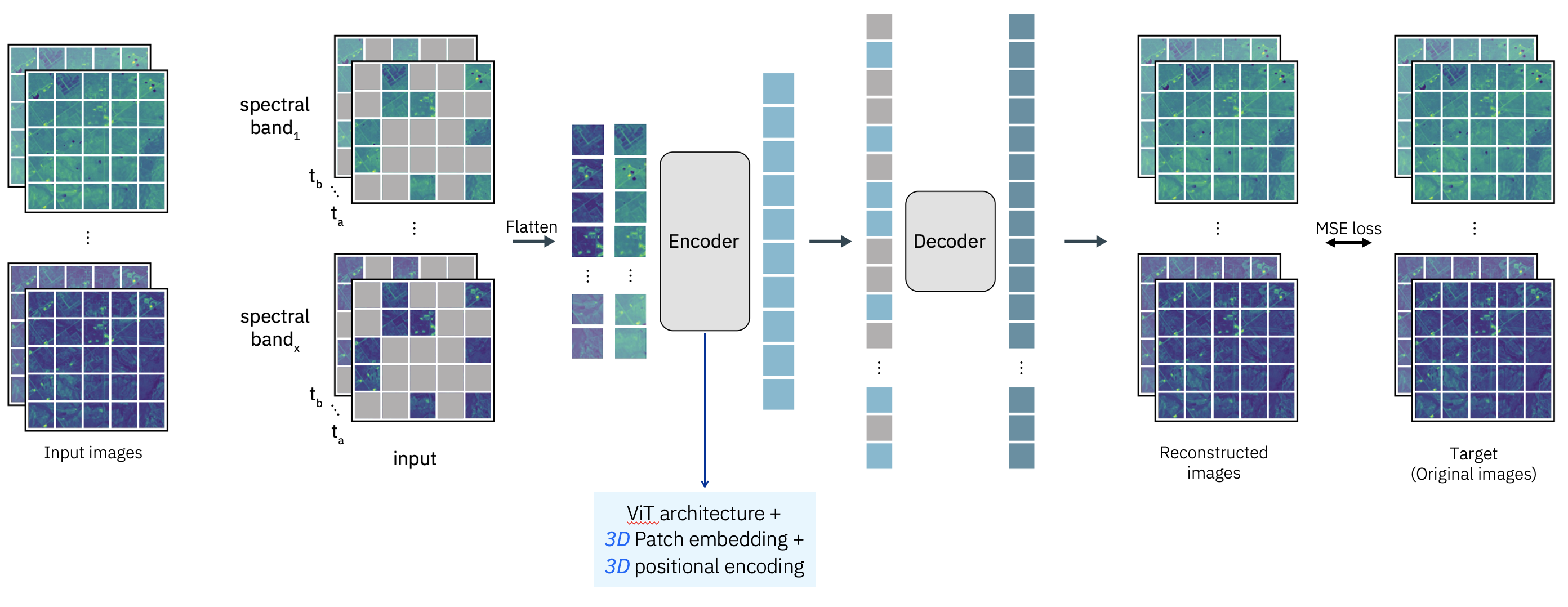

Prithvi is a first-of-its-kind temporal Vision Transformer pre-trained by the IBM and NASA team on Harmonized Landsat Sentinel-2 (HLS) data covering the continental US. The model uses a self-supervised encoder with a ViT architecture, trained with a Masked AutoEncoder (MAE) learning strategy and an L1 loss function. It applies spatial attention across multiple patches as well as temporal attention for each patch.
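The MAE objective with an L1 loss can be illustrated with a small sketch. It assumes a single sample already split into patch vectors, a mask ratio of 0.75 (the value used in the original MAE paper; Prithvi's actual ratio is not stated here), and a loss computed only on masked patches as in MAE. The function names `random_masking` and `masked_l1_loss` are hypothetical, not the repository's API.

```python
import numpy as np


def random_masking(num_patches, mask_ratio, rng):
    """Return a boolean mask of shape (num_patches,); True marks a masked (hidden) patch."""
    num_masked = int(round(num_patches * mask_ratio))
    order = rng.permutation(num_patches)  # random shuffle of patch indices
    mask = np.zeros(num_patches, dtype=bool)
    mask[order[:num_masked]] = True       # hide the first num_masked shuffled patches
    return mask


def masked_l1_loss(pred, target, mask):
    """Mean absolute error over masked patches only.

    pred, target: (num_patches, patch_dim) arrays; mask: (num_patches,) bool.
    Visible patches do not contribute, so the encoder is rewarded only for
    reconstructing content it did not see.
    """
    per_patch = np.abs(pred - target).mean(axis=-1)  # L1 error per patch
    return per_patch[mask].mean()


rng = np.random.default_rng(0)
mask = random_masking(num_patches=8, mask_ratio=0.75, rng=rng)
target = np.zeros((8, 4))
pred = np.ones((8, 4))
print(mask.sum(), masked_l1_loss(pred, target, mask))
```

Swapping `np.abs(...)` for a squared difference would turn this into the MSE variant used by the original MAE code.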

The model expects remote sensing data in a video format with shape (B, C, T, H, W): batch size, channels (spectral bands), time steps, height, and width. The temporal dimension is central here and is absent from most other work on remote sensing modeling; handling a time series of images can benefit a variety of downstream tasks. The model also handles static images, which can be fed in with T=1.
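A minimal sketch of building the (B, C, T, H, W) layout from per-date images, assuming each image is already a (C, H, W) array in time order. NumPy is used to stay framework-neutral; `torch.from_numpy` converts the result to a PyTorch tensor. The helper name `to_model_layout` is hypothetical.

```python
import numpy as np


def to_model_layout(frames):
    """Stack per-timestep images into a single-sample (B, C, T, H, W) array.

    frames: sequence of T arrays, each shaped (C, H, W), ordered in time.
    """
    clip = np.stack(frames, axis=1)  # (C, T, H, W): time becomes axis 1
    return clip[np.newaxis]          # (1, C, T, H, W): add the batch axis


# Time series: three dates, six bands, 4x4 pixels.
series = to_model_layout([np.full((6, 4, 4), t, dtype=np.float32) for t in range(3)])
print(series.shape)  # (1, 6, 3, 4, 4)

# Static image: a single frame gives T=1.
static = to_model_layout([np.zeros((6, 4, 4), dtype=np.float32)])
print(static.shape)  # (1, 6, 1, 4, 4)
```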

Pre-training

The model was pre-trained on NASA's HLS2 L30 product (30 m resolution) over the continental United States, using the following bands:

- Blue

- Green

- Red

- Narrow NIR

- SWIR 1

- SWIR 2
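The six bands correspond to the following HLS L30 band IDs (B02 to B07 in the standard HLS L30 naming). The sketch below selects them from a dictionary of single-band arrays and stacks them in the order listed above. It assumes the model's channel order follows that list; `PRITHVI_BANDS` and `select_bands` are hypothetical names, not part of the released code.

```python
import numpy as np

# Assumed channel order (as listed above) and the matching HLS L30 band IDs.
PRITHVI_BANDS = [
    ("Blue", "B02"),
    ("Green", "B03"),
    ("Red", "B04"),
    ("Narrow NIR", "B05"),
    ("SWIR 1", "B06"),
    ("SWIR 2", "B07"),
]


def select_bands(bands_by_id):
    """Stack the six model bands into a (6, H, W) array.

    bands_by_id: dict mapping an HLS L30 band ID (e.g. "B02") to an (H, W) array.
    Extra bands such as B01 (coastal aerosol) are ignored.
    """
    missing = [band_id for _, band_id in PRITHVI_BANDS if band_id not in bands_by_id]
    if missing:
        raise KeyError(f"missing HLS L30 bands: {missing}")
    return np.stack([bands_by_id[band_id] for _, band_id in PRITHVI_BANDS], axis=0)
```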

Code

The code follows the original MAE repository, with modifications including:

- replacing the 2D patch embedding with a 3D patch embedding;

- replacing the 2D positional embedding with a 3D positional embedding;

- replacing the 2D patchify and unpatchify functions with 3D versions;

- adding infrared bands in addition to RGB.
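A sketch of what 3D patchify and unpatchify can look like: the video is cut into tubelets of `t` frames by `p x p` pixels, and each tubelet is flattened into one token. It mirrors the 2D MAE layout (pixels first, channels last within a patch). The tubelet size `t`, patch size `p`, and function names are assumptions for illustration, not the repository's exact code.

```python
import numpy as np


def patchify_3d(x, p, t):
    """(B, C, T, H, W) -> (B, L, t*p*p*C) with L = (T//t) * (H//p) * (W//p)."""
    B, C, T, H, W = x.shape
    assert T % t == 0 and H % p == 0 and W % p == 0, "dims must divide evenly"
    gt, gh, gw = T // t, H // p, W // p
    x = x.reshape(B, C, gt, t, gh, p, gw, p)
    # Axes B C gt t gh p gw p -> B gt gh gw t p p C (grid first, channels last).
    x = x.transpose(0, 2, 4, 6, 3, 5, 7, 1)
    return x.reshape(B, gt * gh * gw, t * p * p * C)


def unpatchify_3d(x, C, T, H, W, p, t):
    """Inverse of patchify_3d: (B, L, t*p*p*C) -> (B, C, T, H, W)."""
    B = x.shape[0]
    gt, gh, gw = T // t, H // p, W // p
    x = x.reshape(B, gt, gh, gw, t, p, p, C)
    # Axes B gt gh gw t p p C -> B C gt t gh p gw p (undo the transpose above).
    x = x.transpose(0, 7, 1, 4, 2, 5, 3, 6)
    return x.reshape(B, C, T, H, W)


video = np.arange(1 * 2 * 2 * 4 * 4, dtype=float).reshape(1, 2, 2, 4, 4)
tokens = patchify_3d(video, p=2, t=1)
print(tokens.shape)  # (1, 8, 8)
restored = unpatchify_3d(tokens, C=2, T=2, H=4, W=4, p=2, t=1)
print(np.array_equal(restored, video))  # True
```

With `t=1`, each frame is patched independently and time only shows up as more tokens; a larger `t` merges consecutive frames into a single token.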

Inference and demo

The inference script `Prithvi_run_inference.py` runs image reconstruction on a set of three HLS images. The images must be in GeoTIFF format and contain the channels described above (Blue, Green, Red, Narrow NIR, SWIR 1, SWIR 2) in reflectance units. A demo built on the same code is also available.
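Before running reconstruction, it can help to check that the inputs match what the script expects. The sketch below assumes the three GeoTIFFs have already been read into (6, H, W) arrays (for example with a raster library) and validates the image count, band count, and shared shape before stacking them to (1, 6, 3, H, W). The helper name and the float32 cast are assumptions, not part of `Prithvi_run_inference.py`.

```python
import numpy as np

EXPECTED_BANDS = 6  # Blue, Green, Red, Narrow NIR, SWIR 1, SWIR 2
EXPECTED_STEPS = 3  # the inference script reconstructs a set of three HLS images


def stack_inference_inputs(images):
    """Validate three (6, H, W) reflectance arrays and stack them to (1, 6, 3, H, W)."""
    if len(images) != EXPECTED_STEPS:
        raise ValueError(f"expected {EXPECTED_STEPS} images, got {len(images)}")
    shapes = {img.shape for img in images}
    if len(shapes) != 1:
        raise ValueError(f"images must share one shape, got {sorted(shapes)}")
    (shape,) = shapes
    if len(shape) != 3 or shape[0] != EXPECTED_BANDS:
        raise ValueError(f"each image must be ({EXPECTED_BANDS}, H, W), got {shape}")
    # Time becomes axis 1, then a batch axis is added; float32 is an assumed model dtype.
    return np.stack(images, axis=1)[np.newaxis].astype(np.float32)
```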

Finetuning examples

Two examples of fine-tuning the model for image segmentation (flood detection and burn scar detection) with the mmsegmentation library are available on GitHub.