Update: 🔥 Merlinite-7B: Lab on Mistral-7b

Model Card for Labradorite 13b 🔥 Paper

Overview

Performance

| Model | Alignment | Base | Teacher | MTBench (Avg) | MMLU (5-shot) | ARC-C (25-shot) | HellaSwag (10-shot) | Winogrande (5-shot) | GSM8K (5-shot, strict) |
|---|---|---|---|---|---|---|---|---|---|
| Llama-2-13b-Chat | RLHF | Llama-2-13b | Human Annotators | 6.65 ** | 54.58 | 59.81 | 82.52 | 75.93 | 34.80 |
| Orca-2 | Progressive Training | Llama-2-13b | GPT-4 | 6.15 ** | 60.37 ** | 59.73 | 79.86 | 78.22 | 48.22 |
| WizardLM-13B-V1.2 | Evol-Instruct | Llama-2-13b | GPT-4 | 7.20 ** | 54.83 | 60.24 | 82.62 | 76.40 | 43.75 |
| Labradorite-13b | Large-scale Alignment for chatBots (LAB) | Llama-2-13b | Mixtral-8x7B-Instruct | 7.23 ^ | 58.89 | 61.69 | 83.15 | 79.56 | 40.11 |

[**] Numbers taken from lmsys/chatbot-arena-leaderboard

[^] Average across 4 runs

Method

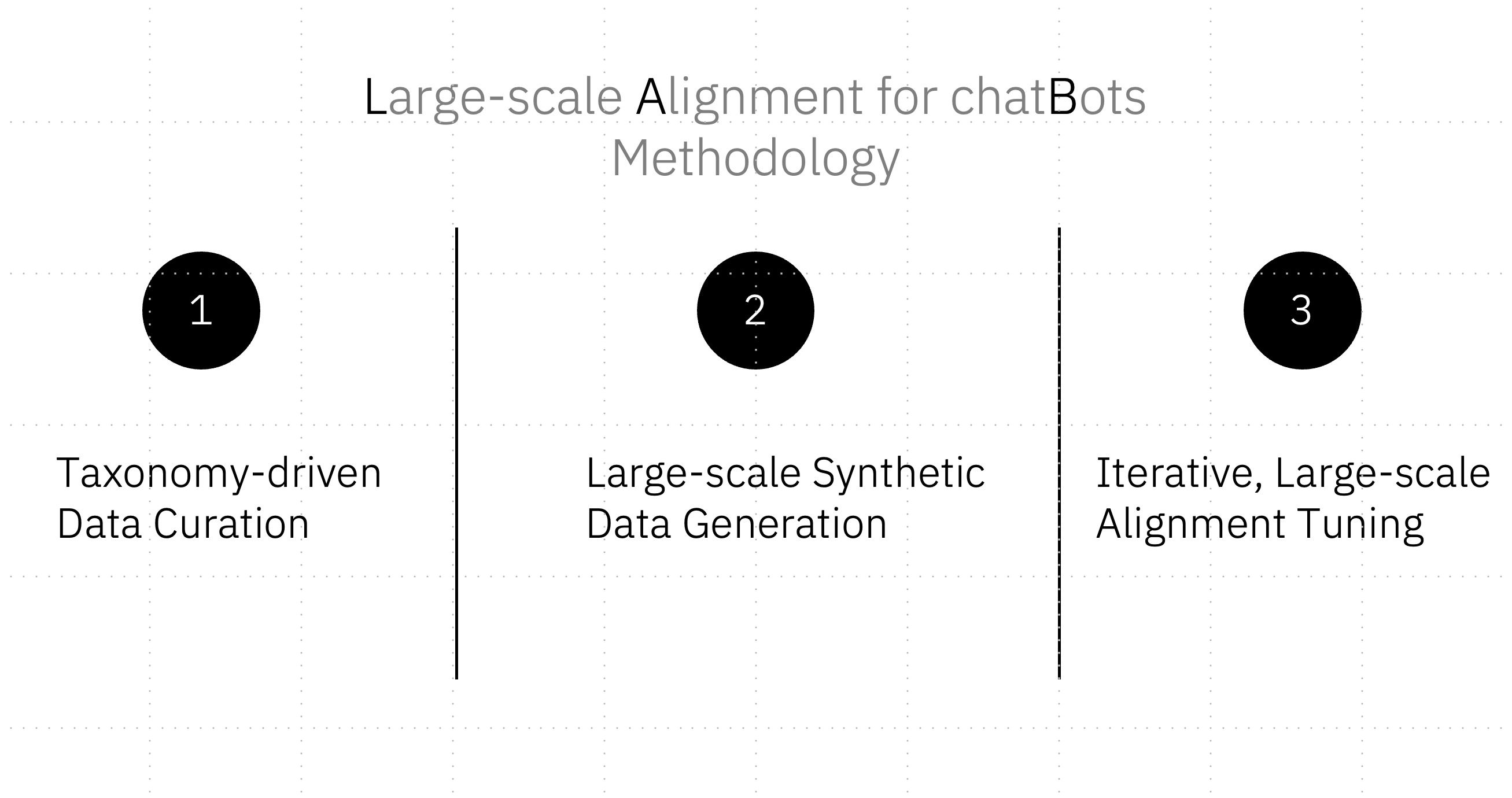

LAB: Large-scale Alignment for chatBots is a novel synthetic data-based alignment tuning method for LLMs from IBM Research. Labradorite-13b is a LLaMA-2-13b-derivative model trained with the LAB methodology, using Mixtral-8x7b-Instruct as a teacher model.

LAB consists of three key components:

- Taxonomy-driven data curation process

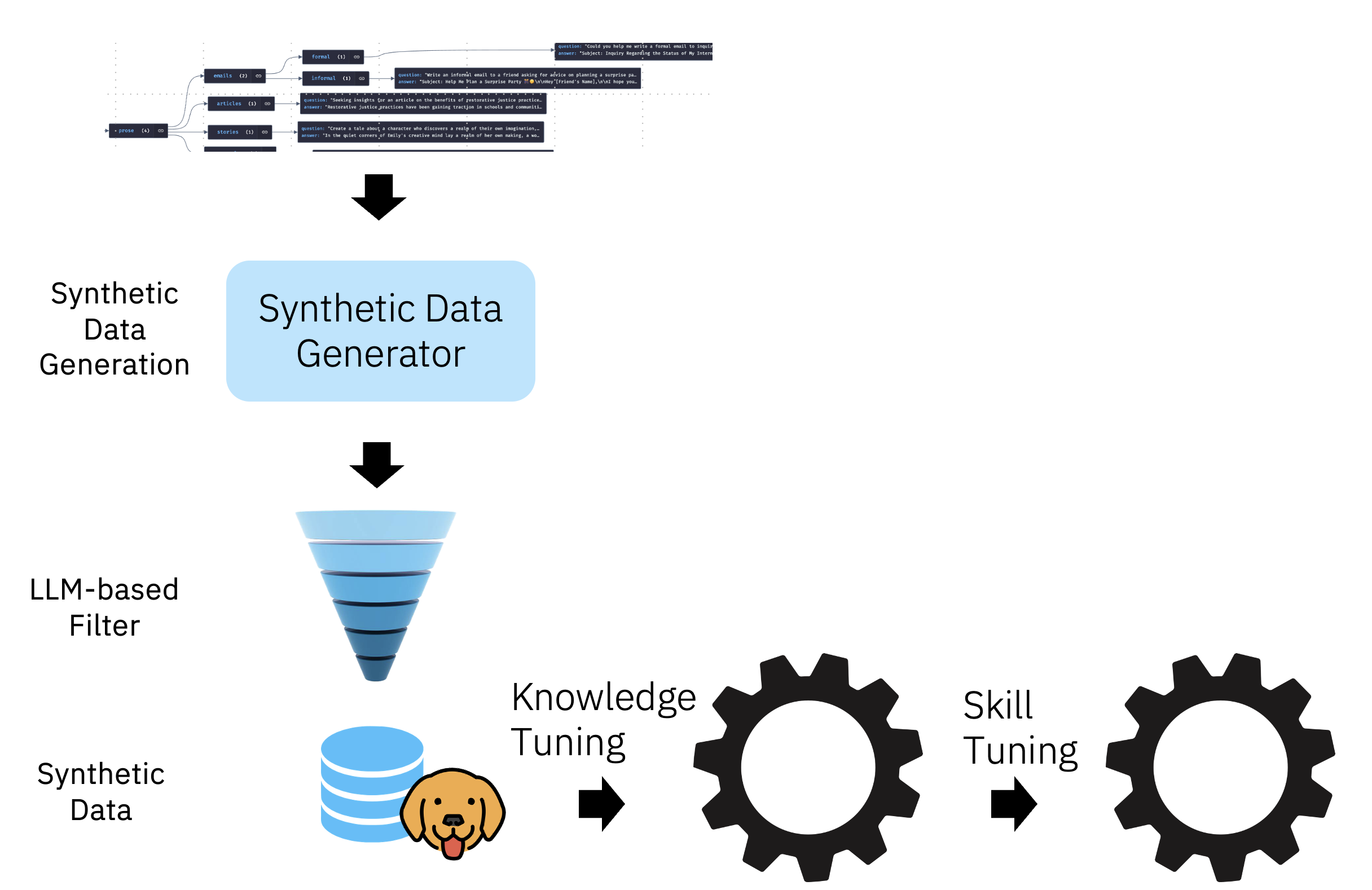

- Large-scale synthetic data generator

- Two-phased training with replay buffers

The LAB approach allows new knowledge and skills to be added incrementally to an already pre-trained model without suffering from catastrophic forgetting.

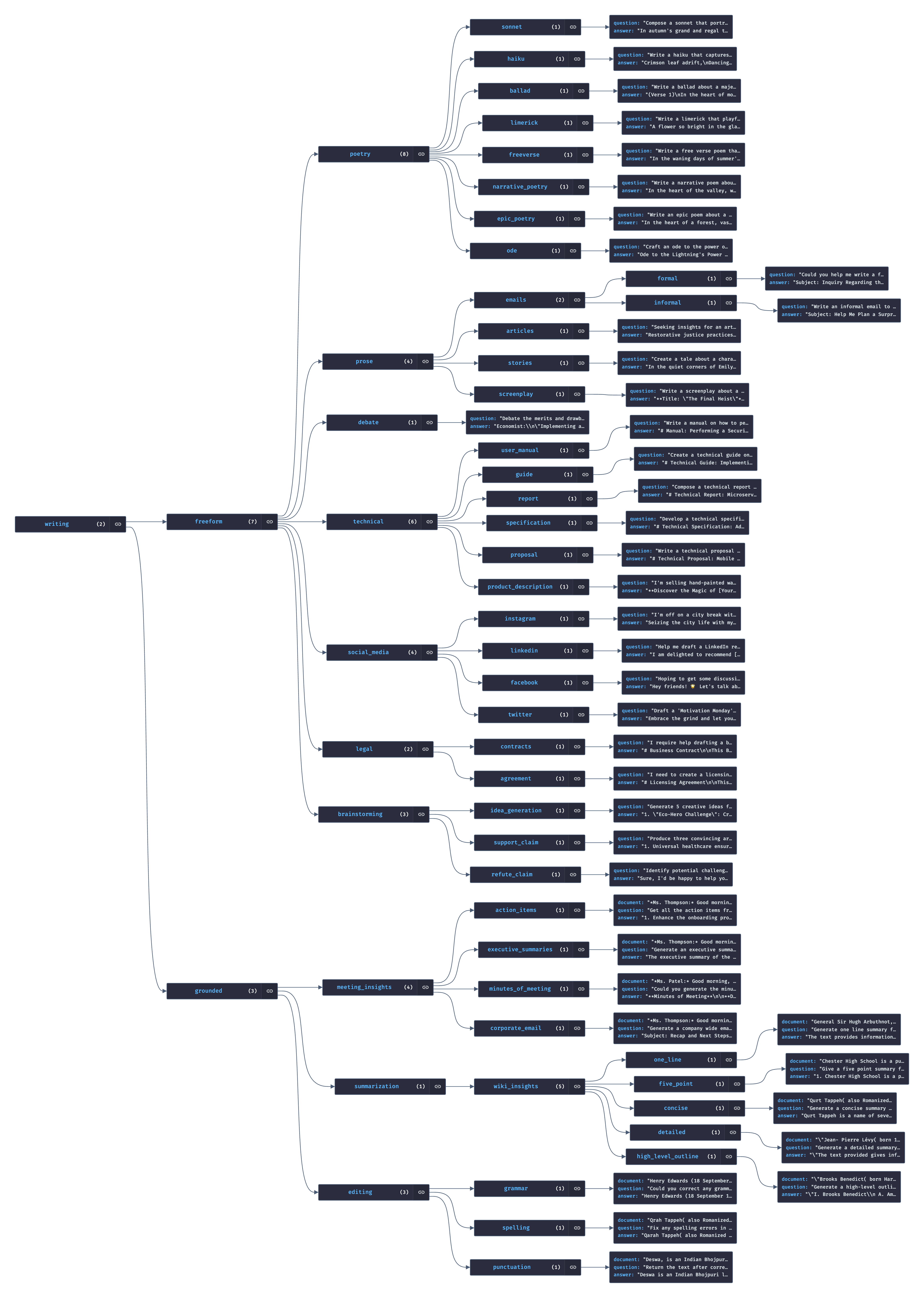

The taxonomy is a tree of seed examples that are used to prompt a teacher model to generate synthetic data; the sub-tree for the skill of “writing” is illustrated in the figure below.

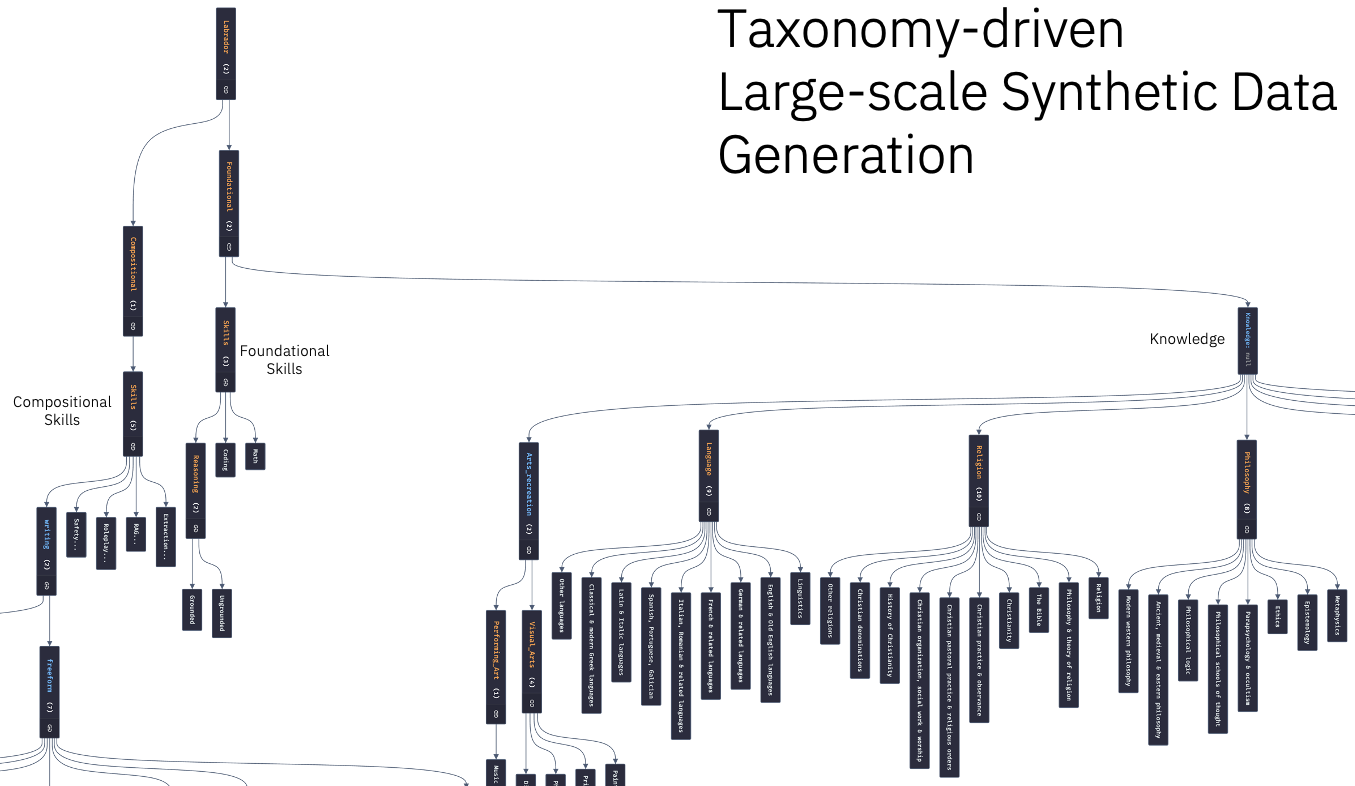

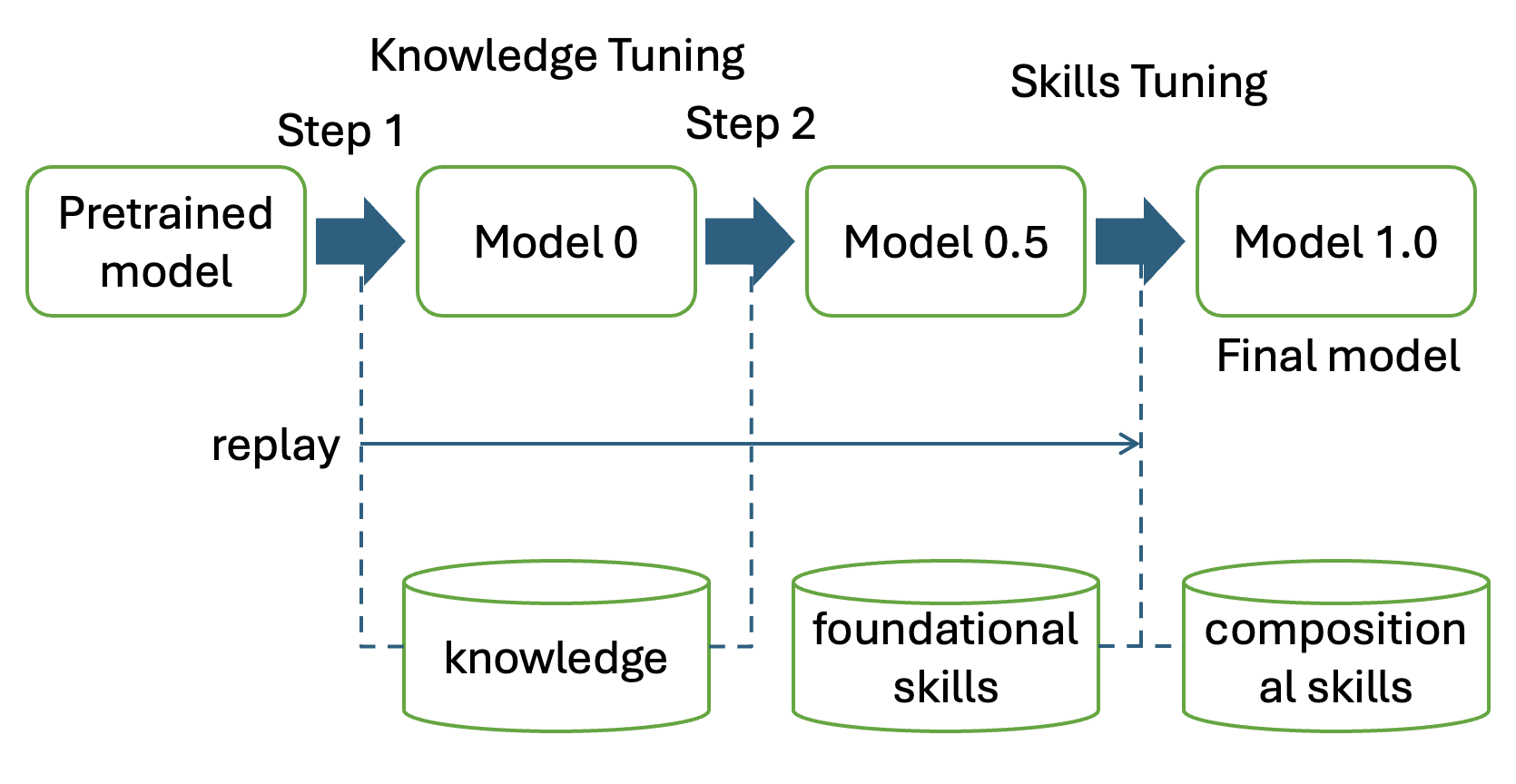

The taxonomy allows the data curator or the model designer to easily specify a diverse set of knowledge domains and skills that they would like to include in their LLM. At a high level, these fall into three bins: knowledge, foundational skills, and compositional skills. The leaf nodes of the taxonomy are tasks associated with one or more seed examples.

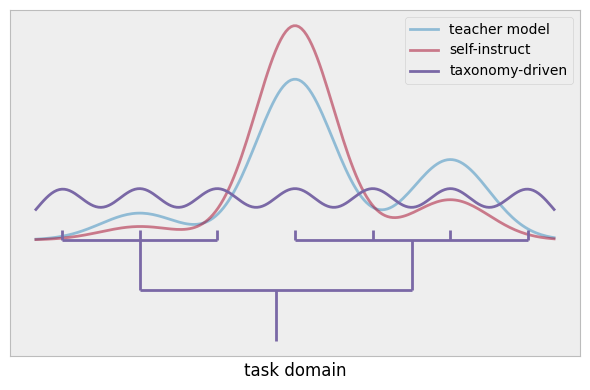

During synthetic data generation, unlike previous approaches where seed examples are drawn uniformly from the entire pool (i.e., self-instruct), we use the taxonomy to drive the sampling process: for each knowledge domain or skill, we use only the local examples within the leaf node as seeds to prompt the teacher model. This lets the teacher model better exploit the task distribution defined by the local examples of each node, while the diversity of the taxonomy itself ensures that the generated data as a whole covers a wide range of tasks, as illustrated below. In turn, this allows Mixtral 8x7B to serve as the teacher model for generation while the resulting model performs very competitively with models such as Orca-2 and WizardLM, which rely on synthetic data generated by much larger and more capable models like GPT-4.
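The contrast between taxonomy-driven and pooled sampling can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `TaxonomyNode` class, the function names, and the example seeds are hypothetical and are not taken from the LAB implementation.

```python
import random
from dataclasses import dataclass, field


@dataclass
class TaxonomyNode:
    """Hypothetical taxonomy node; only leaf nodes carry seed examples."""
    name: str
    children: list["TaxonomyNode"] = field(default_factory=list)
    seeds: list[str] = field(default_factory=list)

    def leaves(self):
        """Yield (path, leaf) pairs for every leaf under this node."""
        if not self.children:
            yield self.name, self
            return
        for child in self.children:
            for path, leaf in child.leaves():
                yield f"{self.name}/{path}", leaf


def local_seed_batches(root, k, rng):
    """Taxonomy-driven sampling: each leaf draws seeds from its own examples only."""
    return {path: rng.sample(leaf.seeds, min(k, len(leaf.seeds)))
            for path, leaf in root.leaves()}


def pooled_seed_batch(root, k, rng):
    """Baseline for contrast: draw k seeds uniformly from all seeds pooled together."""
    pool = [seed for _, leaf in root.leaves() for seed in leaf.seeds]
    return rng.sample(pool, min(k, len(pool)))


# Toy taxonomy (hypothetical content) with the three high-level bins reduced to two.
taxonomy = TaxonomyNode("root", children=[
    TaxonomyNode("knowledge", children=[
        TaxonomyNode("finance", seeds=["What is compound interest?", "Define a bond."]),
    ]),
    TaxonomyNode("compositional_skills", children=[
        TaxonomyNode("writing", children=[
            TaxonomyNode("email", seeds=["Write a polite follow-up email.",
                                         "Draft a meeting invite."]),
            TaxonomyNode("poetry", seeds=["Write a haiku about rain."]),
        ]),
    ]),
])

batches = local_seed_batches(taxonomy, k=2, rng=random.Random(0))
for path, seeds in batches.items():
    print(path, seeds)
```

Each batch would then be turned into one prompt for the teacher model, so every generated sample stays close to the task its leaf defines, while coverage comes from iterating over all leaves.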

For adding new domain-specific knowledge, we provide an external knowledge source (document) and prompt the model to generate questions and answers based on the document. Foundational skills such as reasoning and compositional skills such as creative writing are generated through in-context learning using the seed examples from the taxonomy.

Additionally, to keep the data high-quality and safe, we check that the generated questions and answers are grounded and safe. These checks use the same teacher model that generated the data.
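A rough sketch of the generate-then-filter loop described above might look as follows. Everything here is an assumption made for illustration: the `teacher` argument stands for any callable that maps a prompt string to a completion string, the prompt wording is invented, and `stub_teacher` is a stand-in so the example runs without a model.

```python
def generate_qa(teacher, document, n_pairs):
    """Ask the teacher for question/answer pairs based on `document`."""
    pairs = []
    for i in range(n_pairs):
        question = teacher(
            f"Document:\n{document}\n\nWrite question #{i + 1} answerable from the document."
        )
        answer = teacher(
            f"Document:\n{document}\n\nQuestion: {question}\nAnswer using only the document."
        )
        pairs.append((question, answer))
    return pairs


def keep_grounded_and_safe(teacher, document, pairs):
    """Keep only the pairs that the same teacher judges grounded and safe."""
    kept = []
    for question, answer in pairs:
        verdict = teacher(
            f"Document:\n{document}\n\nQuestion: {question}\nAnswer: {answer}\n"
            "Is the answer supported by the document and safe? Reply YES or NO."
        )
        if verdict.strip().upper().startswith("YES"):
            kept.append((question, answer))
    return kept


def stub_teacher(prompt):
    """Deterministic stand-in for a real teacher model (hypothetical behavior)."""
    if "Reply YES or NO" in prompt:
        return "NO" if "unsupported" in prompt else "YES"
    if "Question:" in prompt:
        return "42"
    return "What is the answer?"


generated = generate_qa(stub_teacher, "The answer is 42.", n_pairs=2)
candidates = generated + [("What is the answer?", "an unsupported claim")]
filtered = keep_grounded_and_safe(stub_teacher, "The answer is 42.", candidates)
print(len(candidates), "candidates,", len(filtered), "kept")
```

Using one callable for both generation and judging mirrors the statement that the same teacher model performs the checks; a real pipeline would swap `stub_teacher` for a call to the teacher model.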

Our training consists of two major phases: knowledge tuning and skills tuning. Knowledge tuning has two steps: the first step learns simple knowledge (short samples) and the second step learns complicated knowledge (longer samples). The second step uses a replay buffer with data from the first step. Both foundational skills and compositional skills are learned during the skills tuning phase, where a replay buffer of data from the knowledge phase is used. Importantly, we use a set of hyper-parameters for training that differs substantially from standard small-scale supervised fine-tuning: a larger batch size and a carefully optimized learning rate and scheduler.
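The staging of data across the phases can be sketched as below. This is a minimal illustration, not the actual training recipe: the source does not say how the replay buffer is sized or sampled, or how short and long samples are separated, so `replay_fraction`, uniform random replay, and the character-length threshold are all assumptions, as are the function names.

```python
import random


def with_replay(new_data, earlier_data, replay_fraction, rng):
    """Mix new samples with a random replay subset of earlier samples.

    Assumption: the replay buffer is a uniform random subset sized as a
    fraction of the new data; the source does not specify this.
    """
    n_replay = min(len(earlier_data), int(len(new_data) * replay_fraction))
    mixed = list(new_data) + rng.sample(list(earlier_data), n_replay)
    rng.shuffle(mixed)
    return mixed


def build_schedule(knowledge, skills, length_threshold, replay_fraction, rng):
    """Return (stage_name, samples) pairs in training order."""
    # Assumption: "simple" vs. "complicated" knowledge is split by character length.
    short = [s for s in knowledge if len(s) <= length_threshold]
    long_ = [s for s in knowledge if len(s) > length_threshold]
    return [
        ("knowledge_step_1", short),
        ("knowledge_step_2", with_replay(long_, short, replay_fraction, rng)),
        ("skills", with_replay(skills, short + long_, replay_fraction, rng)),
    ]


knowledge = ["short fact A", "short fact B",
             "a much longer passage " * 5, "another long passage " * 5]
skills = ["reasoning 1", "reasoning 2", "writing 1", "writing 2"]
schedule = build_schedule(knowledge, skills, length_threshold=20,
                          replay_fraction=0.5, rng=random.Random(0))
for name, samples in schedule:
    print(name, len(samples))
```

Each stage after the first sees its own new data plus a sample of what came before, which is the mechanism the text credits with avoiding catastrophic forgetting.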

Model description

- Language(s): Primarily English

- License: Labradorite-13b is a LLaMA 2 derivative and is licensed under the LLAMA 2 Community License

- Base model: meta-llama/Llama-2-13b-hf

- Teacher Model: mistralai/Mixtral-8x7B-Instruct-v0.1

Prompt Template

```python
sys_prompt = """You are Labrador, an AI language model developed by IBM DMF (Data Model Factory) Alignment Team. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior."""

prompt = f'<|system|>\n{sys_prompt}\n<|user|>\n{inputs}\n<|assistant|>\n'

stop_token = '<|endoftext|>'
```

We recommend using the system prompt employed during the model's training for optimal inference performance, as performance can vary with the instructions provided.
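As a small convenience, the template above can be wrapped in helper functions. The names `build_prompt` and `trim_at_stop` are hypothetical, and the sketch covers only the single-turn format shown in this card; it does not call any model.

```python
SYS_PROMPT = (
    "You are Labrador, an AI language model developed by IBM DMF (Data Model Factory) "
    "Alignment Team. You are a cautious assistant. You carefully follow instructions. "
    "You are helpful and harmless and you follow ethical guidelines and promote "
    "positive behavior."
)
STOP_TOKEN = "<|endoftext|>"


def build_prompt(user_input, sys_prompt=SYS_PROMPT):
    """Format a single-turn prompt using the template from this model card."""
    return f"<|system|>\n{sys_prompt}\n<|user|>\n{user_input}\n<|assistant|>\n"


def trim_at_stop(generated, stop_token=STOP_TOKEN):
    """Cut generated text at the first stop token, if one is present."""
    return generated.split(stop_token, 1)[0]


prompt = build_prompt("Summarize the LAB method in one sentence.")
print(prompt)
print(trim_at_stop("LAB is a synthetic-data alignment method.<|endoftext|>extra text"))
```

The string returned by `build_prompt` is what would be passed to the model, and `trim_at_stop` is useful when the generation backend does not stop on `<|endoftext|>` by itself.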

For chatbot use cases, we recommend testing the following system prompt:

```python
sys_prompt = """You are Labrador, an AI language model developed by IBM DMF (Data Model Factory) Alignment Team. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior. You always respond to greetings (for example, hi, hello, g'day, morning, afternoon, evening, night, what's up, nice to meet you, sup, etc) with "Hello! I am Labrador, created by the IBM DMF Alignment Team. How can I help you today?". Please do not say anything else and do not start a conversation."""
```

Bias, Risks, and Limitations

Labradorite-13b has not been aligned to human preferences, so the model might produce problematic outputs. The model might also maintain the limitations and constraints that arise from the base model and other members of the Llama 2 model family.

The model is trained on synthetic data, so it may inherit both the advantages and the limitations of the underlying teacher models and data generation methods. The incorporation of safety measures during Labradorite-13b's training process is considered beneficial. However, a nuanced understanding of the associated risks requires detailed studies for more accurate quantification.

In the absence of adequate safeguards and RLHF, there exists a risk of malicious utilization of these models for generating disinformation or harmful content. Caution is urged against complete reliance on a specific language model for crucial decisions or impactful information, as preventing these models from fabricating content is not straightforward. Additionally, it remains uncertain whether smaller models might exhibit increased susceptibility to hallucination in ungrounded generation scenarios due to their reduced sizes and memorization capacities. This aspect is currently an active area of research, and we anticipate more rigorous exploration, comprehension, and mitigations in this domain.
