pipeline_tag: visual-question-answering

MiniCPM-V 2.0

MiniCPM-V 2.8B is an efficient version with promising performance for deployment. The model is built on SigLip-400M and MiniCPM-2.4B, connected by a perceiver resampler. Our latest version, MiniCPM-V 2.0, has several notable features.

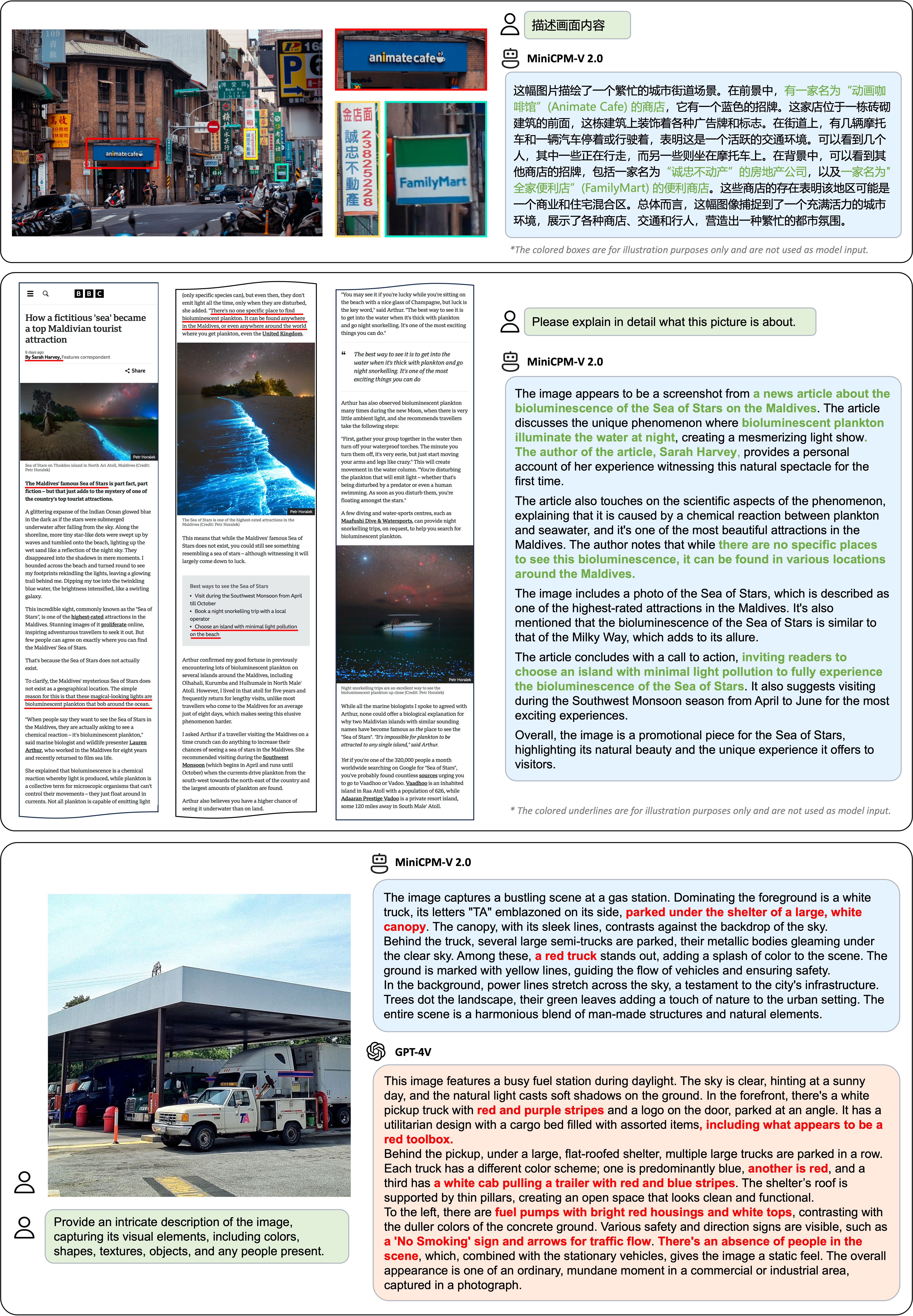

🔥 State-of-the-art Performance.

MiniCPM-V 2.0 achieves state-of-the-art performance on multiple benchmarks (including OCRBench, TextVQA, MME, MMB, MathVista, etc.) among models under 7B parameters. It even outperforms the strong Qwen-VL-Chat 9.6B, CogVLM-Chat 17.4B, and Yi-VL 34B on OpenCompass, a comprehensive evaluation over 11 popular benchmarks. Notably, MiniCPM-V 2.0 shows strong OCR capability, achieving performance comparable to Gemini Pro in scene-text understanding and state-of-the-art performance on OCRBench among open-source models.

🏆 Trustworthy Behavior.

LMMs are known for suffering from hallucination, often generating text not factually grounded in images. MiniCPM-V 2.0 is the first end-side LMM aligned via multimodal RLHF for trustworthy behavior (using the recent RLHF-V [CVPR'24] series technique). This allows the model to match GPT-4V in preventing hallucinations on Object HalBench.

🌟 High-Resolution Images at Any Aspect Ratio.

MiniCPM-V 2.0 can accept 1.8 million pixel (e.g., 1344x1344) images at any aspect ratio. This enables better perception of fine-grained visual information such as small objects and optical characters, which is achieved via a recent technique from LLaVA-UHD.
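
As a rough illustration of the pixel budget mentioned above, the sketch below confirms that 1344x1344 is about 1.8 million pixels and computes a downscaled size for an image that exceeds that budget while keeping its aspect ratio. The helper name `fit_to_pixel_budget` and the decision to downscale are assumptions made for illustration only; this is not the model's actual preprocessing, which follows LLaVA-UHD.

```python
import math

# Assumed budget: the 1344x1344 example from the text (1,806,336 pixels).
MAX_PIXELS = 1344 * 1344


def fit_to_pixel_budget(width, height, max_pixels=MAX_PIXELS):
    """Return (width, height), scaled down if needed to fit max_pixels.

    Hypothetical helper: keeps the aspect ratio and never upscales.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if width * height <= max_pixels:
        return width, height
    # Scale both sides by the same factor so the area shrinks to the budget.
    scale = math.sqrt(max_pixels / (width * height))
    new_w = max(1, math.floor(width * scale))
    new_h = max(1, math.floor(height * scale))
    return new_w, new_h
```

An image already inside the budget is returned unchanged, so a 1344x1344 input passes through as is, while a wide 4000x1000 input is shrunk to roughly the same 4:1 shape.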

⚡️ High Efficiency.

MiniCPM-V 2.0 can be efficiently deployed on most GPU cards and personal computers, and even on end devices such as mobile phones. For visual encoding, we compress the image representations into much fewer tokens via a perceiver resampler. This allows MiniCPM-V 2.0 to operate with favorable memory cost and speed during inference even when dealing with high-resolution images.

🙌 Bilingual Support.

MiniCPM-V 2.0 supports strong bilingual multimodal capabilities in both English and Chinese. This is enabled by generalizing multimodal capabilities across languages, a technique from VisCPM [ICLR'24 Spotlight].

Evaluation

Results on TextVQA, DocVQA, OCRBench, OpenCompass, MME, MMBench, MMMU, MathVista, LLaVA Bench, and Object HalBench:

| Model | Size | TextVQA val | DocVQA test | OCRBench | OpenCompass | MME | MMB dev(en) | MMB dev(zh) | MMMU val | MathVista | LLaVA Bench | Object HalBench |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Proprietary models | | | | | | | | | | | | |
| Gemini Pro Vision | - | 74.6 | 88.1 | 680 | 63.8 | 2148.9 | 75.2 | 74.0 | 48.9 | 45.8 | 79.9 | - |
| GPT-4V | - | 78.0 | 88.4 | 516 | 63.2 | 1771.5 | 75.1 | 75.0 | 53.8 | 47.8 | 93.1 | 86.4 / 92.7 |
| Open-source models 6B~34B | | | | | | | | | | | | |
| Yi-VL-6B | 6.7B | 45.5* | 17.1* | 290 | 49.3 | 1915.1 | 68.6 | 68.3 | 40.3 | 28.8 | 51.9 | - |
| Qwen-VL-Chat | 9.6B | 61.5 | 62.6 | 488 | 52.1 | 1860.0 | 60.6 | 56.7 | 37.0 | 33.8 | 67.7 | 56.2 / 80.0 |
| Yi-VL-34B | 34B | 43.4* | 16.9* | 290 | 52.6 | 2050.2 | 71.1 | 71.4 | 45.1 | 30.7 | 62.3 | - |
| DeepSeek-VL-7B | 7.3B | 64.7* | 47.0* | 435 | 55.6 | 1765.4 | 74.1 | 72.8 | 38.3 | 36.8 | 77.8 | - |
| TextMonkey | 9.7B | 64.3 | 66.7 | 558 | - | - | - | - | - | - | - | - |
| CogVLM-Chat | 17.4B | 70.4 | 33.3* | 590 | 52.5 | 1736.6 | 63.7 | 53.8 | 37.3 | 34.7 | 73.9 | 73.6 / 87.4 |
| Open-source models 1B~3B | | | | | | | | | | | | |
| DeepSeek-VL-1.3B | 1.7B | 58.4* | 37.9* | 413 | 46.0 | 1531.6 | 64.0 | 61.2 | 33.8 | 29.4 | 51.1 | - |
| MobileVLM V2 | 3.1B | 57.5 | 19.4* | - | - | 1440.5(P) | 63.2 | - | - | - | - | - |
| Mini-Gemini | 2.2B | 56.2 | 34.2* | - | - | 1653.0 | 59.8 | - | 31.7 | - | - | - |
| MiniCPM-V | 2.8B | 60.6 | 38.2 | 366 | 47.6 | 1650.2 | 67.9 | 65.3 | 38.3 | 28.9 | 51.3 | 78.4 / 88.5 |
| MiniCPM-V 2.0 | 2.8B | 74.1 | 71.9 | 605 | 55.0 | 1808.6 | 69.6 | 68.1 | 38.2 | 38.7 | 69.2 | 85.5 / 92.2 |

Examples

We deploy MiniCPM-V 2.0 on end devices. The demo video is a raw, unedited screen recording on a Xiaomi 14 Pro.

Demo

Click here to try out the Demo of MiniCPM-V-2.0.

Deployment on Mobile Phone

MiniCPM-V-2.0 can be deployed on mobile phones running the Android and Harmony operating systems. 🚀 Try it out here.

Usage

Inference using Hugging Face Transformers on NVIDIA GPUs or on Mac with MPS (Apple silicon or AMD GPUs). Requirements, tested on Python 3.10:

    Pillow==10.1.0
    timm==0.9.10
    torch==2.1.2
    torchvision==0.16.2
    transformers==4.36.0
    sentencepiece==0.1.99
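
Before running inference, it can help to confirm that the installed packages match the pins listed above. The following is a minimal sketch using only the standard library; the function name `find_mismatches` is hypothetical, and the comparison is a plain string match, which is an assumption on our part rather than part of the official instructions.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the requirements list above.
PINNED = {
    "Pillow": "10.1.0",
    "timm": "0.9.10",
    "torch": "2.1.2",
    "torchvision": "0.16.2",
    "transformers": "4.36.0",
    "sentencepiece": "0.1.99",
}


def find_mismatches(pins):
    """Return {package: (expected, installed_or_None)} for every unmet pin."""
    mismatches = {}
    for name, expected in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            installed = None  # package is not installed at all
        if installed != expected:
            mismatches[name] = (expected, installed)
    return mismatches


if __name__ == "__main__":
    for name, (expected, installed) in find_mismatches(PINNED).items():
        print(f"{name}: expected {expected}, found {installed or 'not installed'}")
```

Because the check is an exact string comparison, a build with a local version suffix (for example a CUDA-specific torch wheel) would be reported as a mismatch even if it is otherwise compatible.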

    # test.py
    import torch
    from PIL import Image
    from transformers import AutoModel, AutoTokenizer

    model = AutoModel.from_pretrained('openbmb/MiniCPM-V-2.0', trust_remote_code=True, torch_dtype=torch.bfloat16)

    # For NVIDIA GPUs that support BF16 (e.g., A100, H100, RTX 3090)
    model = model.to(device='cuda', dtype=torch.bfloat16)
    # For NVIDIA GPUs that do NOT support BF16 (e.g., V100, T4, RTX 2080)
    #model = model.to(device='cuda', dtype=torch.float16)
    # For Mac with MPS (Apple silicon or AMD GPUs).
    # Run with `PYTORCH_ENABLE_MPS_FALLBACK=1 python test.py`
    #model = model.to(device='mps', dtype=torch.float16)

    # Load the tokenizer from the same repository as the model
    tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-2.0', trust_remote_code=True)
    model.eval()

    # Load the input image and build a single-turn conversation
    image = Image.open('xx.jpg').convert('RGB')
    question = 'What is in the image?'
    msgs = [{'role': 'user', 'content': question}]

    res, context, _ = model.chat(
        image=image,
        msgs=msgs,
        context=None,
        tokenizer=tokenizer,
        sampling=True,
        temperature=0.7
    )
    print(res)
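
The script above asks the reader to comment lines in or out depending on the hardware. As a sketch of how that choice could be automated, the hypothetical helper below maps three capability flags to the device and dtype named in those comments. The function name and its error behavior are assumptions for illustration; the torch calls shown in the trailing comments (`torch.cuda.is_available`, `torch.cuda.is_bf16_supported`, `torch.backends.mps.is_available`) are one plausible way to obtain the flags.

```python
def choose_device_and_dtype(has_cuda, cuda_bf16, has_mps):
    """Map hardware capabilities to (device, dtype name).

    Hypothetical helper mirroring the three cases in the script above:
    CUDA with BF16, CUDA without BF16, and MPS.
    """
    if has_cuda:
        return ("cuda", "bfloat16") if cuda_bf16 else ("cuda", "float16")
    if has_mps:
        return ("mps", "float16")
    raise RuntimeError("No CUDA or MPS device found")


# Possible wiring with torch (not executed here):
#   import torch
#   has_cuda = torch.cuda.is_available()
#   device, dtype_name = choose_device_and_dtype(
#       has_cuda,
#       has_cuda and torch.cuda.is_bf16_supported(),
#       torch.backends.mps.is_available(),
#   )
#   model = model.to(device=device, dtype=getattr(torch, dtype_name))
```

Keeping the decision in a pure function makes it easy to check without a GPU; on MPS the script would still need to be launched with `PYTORCH_ENABLE_MPS_FALLBACK=1` as noted above.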

Please see GitHub for more details about usage.

MiniCPM-V 1.0

Please see the info about MiniCPM-V 1.0 here.

License

Model License

- The code in this repo is released under the Apache-2.0 license

- The usage of MiniCPM-V's parameters is subject to "General Model License Agreement - Source Notes - Publicity Restrictions - Commercial License"

- The parameters are fully open to academic research

- Please contact [email protected] to obtain a written authorization for commercial uses. Free commercial use is also allowed after registration.

Statement

- As an LLM, MiniCPM-V generates content by learning from a large amount of text, but it cannot comprehend, express personal opinions, or make value judgements. Anything generated by MiniCPM-V does not represent the views and positions of the model developers

- We will not be liable for any problems arising from the use of the MiniCPM-V open-source model, including but not limited to data security issues, risk of public opinion, or any risks and problems arising from the misdirection, misuse, or dissemination of the model.