Model: PRIMO ⭐

Lang: IT

Model description

This model is a causal language model for the Italian language, based on a GPT-like [1] architecture (more specifically, the model has been obtained by modifying Meta's XGLM architecture [2] and exploiting its 7.5B pre-trained checkpoint as a starting point).

The model has ~6.6B parameters and a vocabulary of 50.335 tokens. It's an instruction-based model, trained with low-rank adaptation, and it's mainly suitable for general purpose prompt-based tasks involving natural language inputs and outputs.

The current release is a first prototype of this model, and it will be upgraded over time.

Example

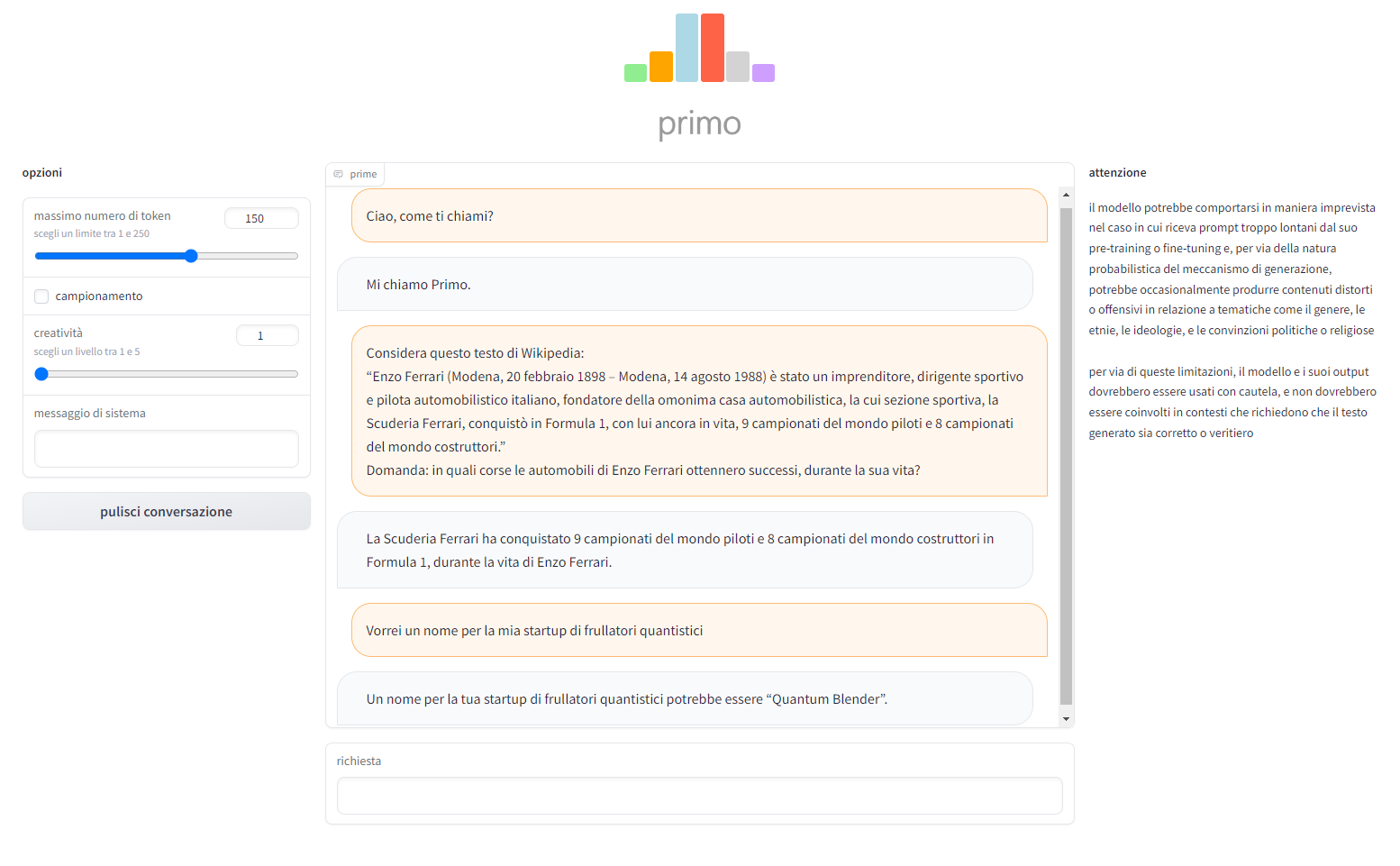

This is an example of intended use of the model:

Quantization

The released checkpoint is quantized in 8-bit, so that it can easily be loaded and used for training and inference on ordinary hardware like consumer GPUs, and it requires the installation of the transformers library version >= 4.30.1 and the bitsandbytes library, version >= 0.37.2

On Windows operating systems, the bitsandbytes-windows module also needs to be installed on top. However, it appears that the module is not yet updated with some recent features, like the possibility to save the 8-bit quantized models. In order to include this, you can install the fork in this repo, using:

pip install git+https://github.com/francesco-russo-githubber/bitsandbytes-windows.git

Training

The model has been fine-tuned on a dataset of ~100K Italian prompt-response pairs, using low-rank adaptation, which took about 3 days on a NVIDIA GeForce RTX 3060 GPU. The training has been carried out with a batch size of 10 and a constant learning rate of 1e-5, exploiting gradient accumulation and gradient checkpointing in order to manage the considerable memory consumption.

Quick usage

In order to use the model for inference, it's strongly recommended to build a Gradio interface using the code provided in this script, or this script (if you want an Italian interface).

Limitations

The model might behave erratically when presented with prompts which are too far away from its pre-training or fine-tuning and, because of the probabilistic nature of its generation mechanism, it might occasionally produce biased or offensive content with respect to gender, race, ideologies, and political or religious beliefs. These limitations imply that the model and its outputs should be used with caution, and should not be involved in situations that require the generated text to be fair or true.

References

[1] https://arxiv.org/abs/2005.14165

[2] https://arxiv.org/abs/2112.10668

License

The model is released under CC-BY-NC-SA-3.0 license, which means it is NOT available for commercial use. This is due to the experimental nature of the model and the license of the training data.

- Downloads last month

- 47