working plots

- __pycache__/app.cpython-310.pyc +0 -0

- app.py +159 -219

- data.csv +65 -0

- plt.png +0 -0

__pycache__/app.cpython-310.pyc

ADDED

|

Binary file (6.36 kB).

|

|

|

app.py

CHANGED

|

@@ -2,280 +2,220 @@ import matplotlib

|

|

| 2 |

matplotlib.use('Agg')

|

| 3 |

|

| 4 |

import functools

|

| 5 |

-

|

| 6 |

import gradio as gr

|

| 7 |

import matplotlib.pyplot as plt

|

| 8 |

import seaborn as sns

|

| 9 |

import pandas as pd

|

| 10 |

|

| 11 |

|

| 12 |

-

# benchmark order: pytorch, tf eager, tf xla; units = ms

|

| 13 |

-

BENCHMARK_DATA = {

|

| 14 |

-

"Greedy Decoding": {

|

| 15 |

-

"DistilGPT2": {

|

| 16 |

-

"T4": [336.22, 3976.23, 115.84],

|

| 17 |

-

"3090": [158.38, 1835.82, 46.56],

|

| 18 |

-

"A100": [371.49, 4073.84, 60.94],

|

| 19 |

-

},

|

| 20 |

-

"GPT2": {

|

| 21 |

-

"T4": [607.31, 7140.23, 185.12],

|

| 22 |

-

"3090": [297.03, 3308.31, 76.68],

|

| 23 |

-

"A100": [691.75, 7323.60, 110.72],

|

| 24 |

-

},

|

| 25 |

-

"OPT-1.3B": {

|

| 26 |

-

"T4": [1303.41, 15939.07, 1488.15],

|

| 27 |

-

"3090": [428.33, 7259.43, 468.37],

|

| 28 |

-

"A100": [1125.00, 16713.63, 384.52],

|

| 29 |

-

},

|

| 30 |

-

"GPTJ-6B": {

|

| 31 |

-

"T4": [0, 0, 0],

|

| 32 |

-

"3090": [0, 0, 0],

|

| 33 |

-

"A100": [2664.28, 32783.09, 1440.06],

|

| 34 |

-

},

|

| 35 |

-

"T5 Small": {

|

| 36 |

-

"T4": [99.88, 1527.73, 18.78],

|

| 37 |

-

"3090": [55.09, 665.70, 9.25],

|

| 38 |

-

"A100": [124.91, 1642.07, 13.72],

|

| 39 |

-

},

|

| 40 |

-

"T5 Base": {

|

| 41 |

-

"T4": [416.56, 6095.05, 106.12],

|

| 42 |

-

"3090": [223.00, 2503.28, 46.67],

|

| 43 |

-

"A100": [550.76, 6504.11, 64.57],

|

| 44 |

-

},

|

| 45 |

-

"T5 Large": {

|

| 46 |

-

"T4": [645.05, 9587.67, 225.17],

|

| 47 |

-

"3090": [377.74, 4216.41, 97.92],

|

| 48 |

-

"A100": [944.17, 10572.43, 116.52],

|

| 49 |

-

},

|

| 50 |

-

"T5 3B": {

|

| 51 |

-

"T4": [1493.61, 13629.80, 1494.80],

|

| 52 |

-

"3090": [694.75, 6316.79, 489.33],

|

| 53 |

-

"A100": [1801.68, 16707.71, 411.93],

|

| 54 |

-

},

|

| 55 |

-

},

|

| 56 |

-

"Sampling": {

|

| 57 |

-

"DistilGPT2": {

|

| 58 |

-

"T4": [617.40, 6078.81, 221.65],

|

| 59 |

-

"3090": [310.37, 2843.73, 85.44],

|

| 60 |

-

"A100": [729.05, 7140.05, 121.83],

|

| 61 |

-

},

|

| 62 |

-

"GPT2": {

|

| 63 |

-

"T4": [1205.34, 12256.98, 378.69],

|

| 64 |

-

"3090": [577.12, 5637.11, 160.02],

|

| 65 |

-

"A100": [1377.68, 15605.72, 234.47],

|

| 66 |

-

},

|

| 67 |

-

"OPT-1.3B": {

|

| 68 |

-

"T4": [2166.72, 19126.25, 2341.32],

|

| 69 |

-

"3090": [706.50, 9616.97, 731.58],

|

| 70 |

-

"A100": [2019.70, 28621.09, 690.36],

|

| 71 |

-

},

|

| 72 |

-

"GPTJ-6B": {

|

| 73 |

-

"T4": [0, 0, 0],

|

| 74 |

-

"3090": [0, 0, 0],

|

| 75 |

-

"A100": [5150.35, 70554.07, 2744.49],

|

| 76 |

-

},

|

| 77 |

-

"T5 Small": {

|

| 78 |

-

"T4": [235.93, 3599.47, 41.07],

|

| 79 |

-

"3090": [100.41, 1093.33, 23.24],

|

| 80 |

-

"A100": [267.42, 3366.73, 28.53],

|

| 81 |

-

},

|

| 82 |

-

"T5 Base": {

|

| 83 |

-

"T4": [812.59, 7966.73, 196.85],

|

| 84 |

-

"3090": [407.81, 4904.54, 97.56],

|

| 85 |

-

"A100": [1033.05, 11521.97, 123.93],

|

| 86 |

-

},

|

| 87 |

-

"T5 Large": {

|

| 88 |

-

"T4": [1114.22, 16433.31, 424.91],

|

| 89 |

-

"3090": [647.61, 7184.71, 160.97],

|

| 90 |

-

"A100": [1668.73, 19962.78, 200.75],

|

| 91 |

-

},

|

| 92 |

-

"T5 3B": {

|

| 93 |

-

"T4": [2282.56, 20891.22, 2196.02],

|

| 94 |

-

"3090": [1011.32, 9735.97, 734.40],

|

| 95 |

-

"A100": [2769.64, 26440.65, 612.98],

|

| 96 |

-

},

|

| 97 |

-

},

|

| 98 |

-

"Beam Search": {

|

| 99 |

-

"DistilGPT2": {

|

| 100 |

-

"T4": [2407.89, 19442.60, 3313.92],

|

| 101 |

-

"3090": [998.52, 8286.03, 900.28],

|

| 102 |

-

"A100": [2237.41, 21771.40, 760.47],

|

| 103 |

-

},

|

| 104 |

-

"GPT2": {

|

| 105 |

-

"T4": [3767.43, 34813.93, 5559.42],

|

| 106 |

-

"3090": [1633.04, 14606.93, 1533.55],

|

| 107 |

-

"A100": [3705.43, 34586.23, 1295.87],

|

| 108 |

-

},

|

| 109 |

-

"OPT-1.3B": {

|

| 110 |

-

"T4": [16649.82, 78500.33, 21894.31],

|

| 111 |

-

"3090": [508518, 32822.81, 5762.46],

|

| 112 |

-

"A100": [5967.32, 78334.56, 4096.38],

|

| 113 |

-

},

|

| 114 |

-

"GPTJ-6B": {

|

| 115 |

-

"T4": [0, 0, 0],

|

| 116 |

-

"3090": [0, 0, 0],

|

| 117 |

-

"A100": [15119.10, 134000.40, 10214.17],

|

| 118 |

-

},

|

| 119 |

-

"T5 Small": {

|

| 120 |

-

"T4": [283.64, 25089.12, 1391.66],

|

| 121 |

-

"3090": [137.38, 10680.28, 486.96],

|

| 122 |

-

"A100": [329.28, 24747.38, 513.99],

|

| 123 |

-

},

|

| 124 |

-

"T5 Base": {

|

| 125 |

-

"T4": [1383.21, 44809.14, 3920.40],

|

| 126 |

-

"3090": [723.11, 18657.48, 1258.60],

|

| 127 |

-

"A100": [2360.85, 45085.07, 1107.58],

|

| 128 |

-

},

|

| 129 |

-

"T5 Large": {

|

| 130 |

-

"T4": [1663.50, 81902.41, 9551.29],

|

| 131 |

-

"3090": [922.53, 35524.30, 2838.86],

|

| 132 |

-

"A100": [2168.22, 86890.00, 2373.04],

|

| 133 |

-

},

|

| 134 |

-

"T5 3B": {

|

| 135 |

-

"T4": [0, 0, 0],

|

| 136 |

-

"3090": [1521.05, 35337.30, 8282.09],

|

| 137 |

-

"A100": [3162.54, 88453.65, 5585.20],

|

| 138 |

-

},

|

| 139 |

-

},

|

| 140 |

-

}

|

| 141 |

FIGURE_PATH = "plt.png"

|

| 142 |

FIG_DPI = 300

|

| 143 |

|

| 144 |

|

| 145 |

-

def get_plot(

|

| 146 |

-

|

| 147 |

-

df

|

| 148 |

-

df =

|

| 149 |

-

|

| 150 |

-

|

| 151 |

|

| 152 |

g = sns.catplot(

|

| 153 |

data=df,

|

| 154 |

kind="bar",

|

| 155 |

-

x="

|

| 156 |

-

y="

|

| 157 |

-

hue="

|

| 158 |

-

palette={"

|

| 159 |

alpha=.9,

|

| 160 |

)

|

| 161 |

g.despine(left=True)

|

| 162 |

-

g.set_axis_labels("

|

| 163 |

-

g.

|

| 164 |

|

| 165 |

# Add the number to the top of each bar

|

| 166 |

ax = g.facet_axis(0, 0)

|

| 167 |

for i in ax.containers:

|

| 168 |

-

ax.bar_label(i,)

|

| 169 |

|

| 170 |

plt.savefig(FIGURE_PATH, dpi=FIG_DPI)

|

| 171 |

return FIGURE_PATH

|

| 172 |

|

|

|

|

| 173 |

demo = gr.Blocks()

|

| 174 |

|

| 175 |

with demo:

|

| 176 |

gr.Markdown(

|

| 177 |

"""

|

| 178 |

-

#

|

| 179 |

-

Instructions:

|

| 180 |

-

1. Pick a tab for the type of generation (or for benchmark information);

|

| 181 |

-

2. Select a model from the dropdown menu;

|

| 182 |

-

3. Optionally omit results from TensorFlow Eager Execution, if you wish to better compare the performance of

|

| 183 |

-

PyTorch to TensorFlow with XLA.

|

| 184 |

"""

|

| 185 |

)

|

| 186 |

with gr.Tabs():

|

| 187 |

-

with gr.TabItem("

|

| 188 |

-

plot_fn = functools.partial(get_plot,

|

| 189 |

with gr.Row():

|

| 190 |

-

with gr.Column():

|

| 191 |

-

|

| 192 |

-

|

| 193 |

-

value="T5 Small",

|

| 194 |

-

label="Model",

|

| 195 |

-

interactive=True,

|

| 196 |

-

)

|

| 197 |

-

eager_enabler = gr.Radio(

|

| 198 |

-

["Yes", "No"],

|

| 199 |

-

value="Yes",

|

| 200 |

-

label="Plot TF Eager Execution?",

|

| 201 |

-

interactive=True

|

| 202 |

-

)

|

| 203 |

gr.Markdown(

|

| 204 |

"""

|

| 205 |

-

###

|

| 206 |

-

- `

|

| 207 |

-

|

| 208 |

"""

|

| 209 |

)

|

| 210 |

-

|

| 211 |

-

|

| 212 |

-

|

| 213 |

-

|

| 214 |

-

plot_fn =

|

| 215 |

with gr.Row():

|

| 216 |

-

with gr.Column():

|

| 217 |

-

|

| 218 |

-

|

| 219 |

-

value="T5 Small",

|

| 220 |

-

label="Model",

|

| 221 |

-

interactive=True,

|

| 222 |

-

)

|

| 223 |

-

eager_enabler = gr.Radio(

|

| 224 |

-

["Yes", "No"],

|

| 225 |

-

value="Yes",

|

| 226 |

-

label="Plot TF Eager Execution?",

|

| 227 |

-

interactive=True

|

| 228 |

-

)

|

| 229 |

gr.Markdown(

|

| 230 |

"""

|

| 231 |

-

###

|

| 232 |

-

- `

|

| 233 |

-

|

| 234 |

-

|

| 235 |

-

-

|

| 236 |

"""

|

| 237 |

)

|

| 238 |

-

|

| 239 |

-

|

| 240 |

-

|

| 241 |

-

|

| 242 |

-

plot_fn =

|

| 243 |

with gr.Row():

|

| 244 |

-

with gr.Column():

|

| 245 |

-

|

| 246 |

-

|

| 247 |

-

|

| 248 |

-

|

| 249 |

-

|

| 250 |

)

|

| 251 |

-

|

| 252 |

-

|

| 253 |

-

|

| 254 |

-

|

| 255 |

-

|

| 256 |

)

|

| 257 |

gr.Markdown(

|

| 258 |

"""

|

| 259 |

-

###

|

| 260 |

-

- `

|

| 261 |

-

|

| 262 |

-

|

| 263 |

"""

|

| 264 |

)

|

| 265 |

-

|

| 266 |

-

|

| 267 |

-

|

| 268 |

with gr.TabItem("Benchmark Information"):

|

| 269 |

gr.Dataframe(

|

| 270 |

headers=["Parameter", "Value"],

|

| 271 |

value=[

|

| 272 |

-

["Transformers Version", "4.

|

| 273 |

-

["

|

| 274 |

-

["Pytorch Version", "1.11.0"],

|

| 275 |

["OS", "22.04 LTS (3090) / Debian 10 (other GPUs)"],

|

| 276 |

-

["CUDA", "11.

|

| 277 |

-

["Number of

|

| 278 |

-

["Is there code to reproduce?", "Yes -- https://

|

| 279 |

],

|

| 280 |

)

|

| 281 |

|

|

|

|

| 2 |

matplotlib.use('Agg')

|

| 3 |

|

| 4 |

import functools

|

|

|

|

| 5 |

import gradio as gr

|

| 6 |

import matplotlib.pyplot as plt

|

| 7 |

import seaborn as sns

|

| 8 |

import pandas as pd

|

| 9 |

|

| 10 |

|

| 11 |

FIGURE_PATH = "plt.png"

|

| 12 |

FIG_DPI = 300

|

| 13 |

|

| 14 |

|

| 15 |

+

def get_plot(task, gpu, omit_offload):

|

| 16 |

+

# slice the dataframe according to the inputs

|

| 17 |

+

df = pd.read_csv("data.csv")

|

| 18 |

+

df = df[df["task"] == task]

|

| 19 |

+

df = df[df["gpu"] == gpu]

|

| 20 |

+

if omit_offload == "Yes":

|

| 21 |

+

df = df[df["offload"] == 0]

|

| 22 |

+

|

| 23 |

+

# combine model name and dtype

|

| 24 |

+

df["model and dtype"] = df['model_name'].str.cat(df[['dtype']], sep=', ')

|

| 25 |

+

|

| 26 |

+

# fuse the two columns to be compared (original and assisted generation)

|

| 27 |

+

df = df.melt(

|

| 28 |

+

id_vars=["task", "gpu", "model and dtype", "offload"],

|

| 29 |

+

value_vars=["Greedy", "Assisted"],

|

| 30 |

+

var_name="generation_type",

|

| 31 |

+

value_name="generation_time",

|

| 32 |

+

)

|

| 33 |

|

| 34 |

g = sns.catplot(

|

| 35 |

data=df,

|

| 36 |

kind="bar",

|

| 37 |

+

x="model and dtype",

|

| 38 |

+

y="generation_time",

|

| 39 |

+

hue="generation_type",

|

| 40 |

+

palette={"Greedy": "blue", "Assisted": "orange"},

|

| 41 |

alpha=.9,

|

| 42 |

)

|

| 43 |

g.despine(left=True)

|

| 44 |

+

g.set_axis_labels("Model size and dtype", "Latency (ms/token)")

|

| 45 |

+

g.set_xticklabels(fontsize=7)

|

| 46 |

+

g.set_yticklabels(fontsize=7)

|

| 47 |

+

g.legend.set_title("Generation Type")

|

| 48 |

+

plt.setp(g._legend.get_texts(), fontsize='7') # for legend text

|

| 49 |

|

| 50 |

# Add the number to the top of each bar

|

| 51 |

ax = g.facet_axis(0, 0)

|

| 52 |

for i in ax.containers:

|

| 53 |

+

ax.bar_label(i, fontsize=7)

|

| 54 |

|

| 55 |

plt.savefig(FIGURE_PATH, dpi=FIG_DPI)

|

| 56 |

return FIGURE_PATH

|
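The `df.melt(...)` call in `get_plot` turns the two timing columns (`Greedy`, `Assisted`) into one `generation_time` column plus a `generation_type` label, which is the long layout that `sns.catplot(..., hue="generation_type")` expects. The sketch below illustrates that reshape in plain Python on two rows copied from `data.csv`. The helper `melt_rows` is a hypothetical stand-in written for illustration, not pandas' implementation.

```python
# Two rows copied from data.csv (gpu=3090, task="OPT: Open Text Generation"),
# with model name and dtype already fused as in get_plot.
wide_rows = [
    {"model and dtype": "1.3B, FP32", "Greedy": 11.64, "Assisted": 10.01},
    {"model and dtype": "6.7B, FP16", "Greedy": 19.62, "Assisted": 12.44},
]


def melt_rows(rows, id_vars, value_vars, var_name, value_name):
    """Hypothetical stand-in for DataFrame.melt.

    Emits one output row per (value column, input row) pair, keeping the
    id columns and moving the column name into `var_name`.
    """
    long_rows = []
    for column in value_vars:  # stack one value column after the other
        for row in rows:
            out = {key: row[key] for key in id_vars}
            out[var_name] = column
            out[value_name] = row[column]
            long_rows.append(out)
    return long_rows


long_rows = melt_rows(
    wide_rows,
    id_vars=["model and dtype"],
    value_vars=["Greedy", "Assisted"],
    var_name="generation_type",
    value_name="generation_time",
)

for row in long_rows:
    print(row)
```

Each model now appears twice, once per generation type, so the bar plot can draw the two bars side by side for every x-axis category.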

| 57 |

|

| 58 |

+

|

| 59 |

demo = gr.Blocks()

|

| 60 |

|

| 61 |

with demo:

|

| 62 |

gr.Markdown(

|

| 63 |

"""

|

| 64 |

+

# Assisted Generation Benchmark

|

| 65 |

"""

|

| 66 |

)

|

| 67 |

+

# components shared across tabs

|

| 68 |

+

omit_offload_fn = functools.partial(

|

| 69 |

+

gr.Radio, ["Yes", "No"], value="No", label="Omit cases with memory offload?", interactive=True

|

| 70 |

+

)

|

| 71 |

+

|

| 72 |

+

def gpu_selector_fn(gpu_list):

|

| 73 |

+

return gr.Dropdown(

|

| 74 |

+

gpu_list, value=gpu_list[-1], label="GPU", interactive=True

|

| 75 |

+

)

|

| 76 |

+

|

| 77 |

with gr.Tabs():

|

| 78 |

+

with gr.TabItem("OPT: Open Text Generation"):

|

| 79 |

+

plot_fn = functools.partial(get_plot, "OPT: Open Text Generation")

|

| 80 |

with gr.Row():

|

| 81 |

+

with gr.Column(scale=0.3):

|

| 82 |

+

gpu_selector = gpu_selector_fn(["3090", "T4", "T4 *2", "A100 (80GB)"])

|

| 83 |

+

omit_offload = omit_offload_fn()

|

| 84 |

gr.Markdown(

|

| 85 |

"""

|

| 86 |

+

### Assistant Model

|

| 87 |

+

- `facebook/opt-125m`

|

| 88 |

+

|

| 89 |

+

### Model Names:

|

| 90 |

+

- 1.3B: `facebook/opt-1.3b`

|

| 91 |

+

- 6.7B: `facebook/opt-6.7b`

|

| 92 |

+

- 30B: `facebook/opt-30b`

|

| 93 |

+

- 66B: `facebook/opt-66b`

|

| 94 |

+

|

| 95 |

+

### Dataset used as input prompt:

|

| 96 |

+

- C4 (en, validation set)

|

| 97 |

"""

|

| 98 |

)

|

| 99 |

+

# Show plot when the gradio app is initialized

|

| 100 |

+

plot = gr.Image(value=plot_fn("A100 (80GB)", "No"))

|

| 101 |

+

# Update plot when any of the inputs change

|

| 102 |

+

plot_inputs = [gpu_selector, omit_offload]

|

| 103 |

+

gpu_selector.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 104 |

+

omit_offload.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 105 |

+

with gr.TabItem("OPT: Summarization"):

|

| 106 |

+

plot_fn = functools.partial(get_plot, "OPT: Summarization")

|

| 107 |

with gr.Row():

|

| 108 |

+

with gr.Column(scale=0.3):

|

| 109 |

+

gpu_selector = gpu_selector_fn(["3090", "T4", "T4 *2", "A100 (80GB)"])

|

| 110 |

+

omit_offload = omit_offload_fn()

|

| 111 |

gr.Markdown(

|

| 112 |

"""

|

| 113 |

+

### Assistant Model

|

| 114 |

+

- `facebook/opt-125m`

|

| 115 |

+

|

| 116 |

+

### Model Names:

|

| 117 |

+

- 1.3B: `facebook/opt-1.3b`

|

| 118 |

+

- 6.7B: `facebook/opt-6.7b`

|

| 119 |

+

- 30B: `facebook/opt-30b`

|

| 120 |

+

- 66B: `facebook/opt-66b`

|

| 121 |

+

|

| 122 |

+

### Dataset used as input prompt:

|

| 123 |

+

- CNN Dailymail (3.0.0, validation set)

|

| 124 |

"""

|

| 125 |

)

|

| 126 |

+

# Show plot when the gradio app is initialized

|

| 127 |

+

plot = gr.Image(value=plot_fn("A100 (80GB)", "No"))

|

| 128 |

+

# Update plot when any of the inputs change

|

| 129 |

+

plot_inputs = [gpu_selector, omit_offload]

|

| 130 |

+

gpu_selector.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 131 |

+

omit_offload.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 132 |

+

with gr.TabItem("Whisper: ASR"):

|

| 133 |

+

plot_fn = functools.partial(get_plot, "Whisper: ARS")

|

| 134 |

with gr.Row():

|

| 135 |

+

with gr.Column(scale=0.3):

|

| 136 |

+

gpu_selector = gpu_selector_fn(["3090", "T4"])

|

| 137 |

+

omit_offload = omit_offload_fn()

|

| 138 |

+

gr.Markdown(

|

| 139 |

+

"""

|

| 140 |

+

### Assistant Model

|

| 141 |

+

- `openai/whisper-tiny`

|

| 142 |

+

|

| 143 |

+

### Model Names:

|

| 144 |

+

- large-v2: `openai/whisper-large-v2`

|

| 145 |

+

|

| 146 |

+

### Dataset used as input prompt:

|

| 147 |

+

- Librispeech ASR (clean, validation set)

|

| 148 |

+

"""

|

| 149 |

)

|

| 150 |

+

# Show plot when the gradio app is initialized

|

| 151 |

+

plot = gr.Image(value=plot_fn("T4", "No"))

|

| 152 |

+

# Update plot when any of the inputs change

|

| 153 |

+

plot_inputs = [gpu_selector, omit_offload]

|

| 154 |

+

gpu_selector.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 155 |

+

omit_offload.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 156 |

+

with gr.TabItem("CodeGen: Code Generation"):

|

| 157 |

+

plot_fn = functools.partial(get_plot, "CodeGen: Code Generation")

|

| 158 |

+

with gr.Row():

|

| 159 |

+

with gr.Column(scale=0.3):

|

| 160 |

+

gpu_selector = gpu_selector_fn(["3090", "T4", "T4 *2", "A100 (80GB)"])

|

| 161 |

+

omit_offload = omit_offload_fn()

|

| 162 |

+

gr.Markdown(

|

| 163 |

+

"""

|

| 164 |

+

### Assistant Model

|

| 165 |

+

- `Salesforce/codegen-350M-mono`

|

| 166 |

+

|

| 167 |

+

### Model Names:

|

| 168 |

+

- 2B: `Salesforce/codegen-2B-mono`

|

| 169 |

+

- 6B: `Salesforce/codegen-6B-mono`

|

| 170 |

+

- 16B: `Salesforce/codegen-16B-mono`

|

| 171 |

+

|

| 172 |

+

### Dataset used as input prompt:

|

| 173 |

+

- The Stack (python)

|

| 174 |

+

"""

|

| 175 |

)

|

| 176 |

+

# Show plot when the gradio app is initialized

|

| 177 |

+

plot = gr.Image(value=plot_fn("A100 (80GB)", "No"))

|

| 178 |

+

# Update plot when any of the inputs change

|

| 179 |

+

plot_inputs = [gpu_selector, omit_offload]

|

| 180 |

+

gpu_selector.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 181 |

+

omit_offload.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 182 |

+

with gr.TabItem("Flan-T5: Summarization"):

|

| 183 |

+

plot_fn = functools.partial(get_plot, "Flan-T5: Summarization")

|

| 184 |

+

with gr.Row():

|

| 185 |

+

with gr.Column(scale=0.3):

|

| 186 |

+

gpu_selector = gpu_selector_fn(["3090", "T4", "T4 *2", "A100 (80GB)"])

|

| 187 |

+

omit_offload = omit_offload_fn()

|

| 188 |

gr.Markdown(

|

| 189 |

"""

|

| 190 |

+

### Assistant Model

|

| 191 |

+

- `google/flan-t5-small`

|

| 192 |

+

|

| 193 |

+

### Model Names:

|

| 194 |

+

- large: `google/flan-t5-large`

|

| 195 |

+

- xl: `google/flan-t5-xl`

|

| 196 |

+

- xxl: `google/flan-t5-xxl`

|

| 197 |

+

- ul2: `google/flan-ul2`

|

| 198 |

+

|

| 199 |

+

### Dataset used as input prompt:

|

| 200 |

+

- CNN Dailymail (3.0.0, validation set)

|

| 201 |

"""

|

| 202 |

)

|

| 203 |

+

# Show plot when the gradio app is initialized

|

| 204 |

+

plot = gr.Image(value=plot_fn("A100 (80GB)", "No"))

|

| 205 |

+

# Update plot when any of the inputs change

|

| 206 |

+

plot_inputs = [gpu_selector, omit_offload]

|

| 207 |

+

gpu_selector.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 208 |

+

omit_offload.change(fn=plot_fn, inputs=plot_inputs, outputs=plot)

|

| 209 |

with gr.TabItem("Benchmark Information"):

|

| 210 |

gr.Dataframe(

|

| 211 |

headers=["Parameter", "Value"],

|

| 212 |

value=[

|

| 213 |

+

["Transformers Version", "4.29dev0"],

|

| 214 |

+

["PyTorch Version", "2.0.0"],

|

|

|

|

| 215 |

["OS", "22.04 LTS (3090) / Debian 10 (other GPUs)"],

|

| 216 |

+

["CUDA", "11.8 (3090) / 11.3 (other GPUs)"],

|

| 217 |

+

["Number of input samples", "20-100 (depending on the model size)"],

|

| 218 |

+

["Is there code to reproduce?", "Yes -- https://github.com/gante/huggingface-demos/tree/main/experiments/faster_generation"],

|

| 219 |

],

|

| 220 |

)

|

| 221 |

|
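Each tab in the new `app.py` binds its task name with `functools.partial(get_plot, "<task>")`, so the Gradio `.change` callbacks only need to supply the two widget values (`gpu`, `omit_offload`). A minimal sketch of that binding follows. `get_plot_stub` is a hypothetical stand-in that returns its arguments instead of drawing a figure, so the example runs without Gradio or seaborn.

```python
import functools


def get_plot_stub(task, gpu, omit_offload):
    """Hypothetical stand-in for get_plot: echoes its arguments back."""
    return (task, gpu, omit_offload)


# Bind the first positional argument (the task), as each tab does.
plot_fn = functools.partial(get_plot_stub, "OPT: Summarization")

# The callback is later invoked with the remaining two inputs only.
result = plot_fn("A100 (80GB)", "No")
print(result)
```

Because `plot_fn` is reassigned inside every `with gr.TabItem(...)` block, each pair of `.change` handlers captures the partial that was current when it was registered, which keeps the tabs independent.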

data.csv

ADDED

|

@@ -0,0 +1,65 @@

|

| 1 |

+

gpu,task,model_name,dtype,offload,Greedy,Assisted

|

| 2 |

+

3090,OPT: Open Text Generation,1.3B,FP32,0,11.64,10.01

|

| 3 |

+

3090,OPT: Open Text Generation,6.7B,FP32,1,428.47,114.99

|

| 4 |

+

3090,OPT: Open Text Generation,6.7B,FP16,0,19.62,12.44

|

| 5 |

+

3090,OPT: Open Text Generation,6.7B,INT8,0,104.43,40.33

|

| 6 |

+

3090,OPT: Open Text Generation,30B,FP16,1,2616,1099

|

| 7 |

+

3090,OPT: Summarization,1.3B,FP32,0,13.16,10.89

|

| 8 |

+

3090,OPT: Summarization,6.7B,FP32,1,587.8,114.53

|

| 9 |

+

3090,OPT: Summarization,6.7B,FP16,0,25.14,14.56

|

| 10 |

+

3090,OPT: Summarization,30B,FP16,1,2732,331.2

|

| 11 |

+

3090,Whisper: ARS,large-v2,FP32,0,24.81,12.55

|

| 12 |

+

3090,CodeGen: Code Generation,2B,FP32,0,28.90,28.36

|

| 13 |

+

3090,CodeGen: Code Generation,6B,FP32,1,544.11,110.42

|

| 14 |

+

3090,CodeGen: Code Generation,6B,FP16,0,34.36,31.84

|

| 15 |

+

3090,CodeGen: Code Generation,16B,FP16,1,808.69,161.50

|

| 16 |

+

3090,CodeGen: Code Generation,16B,INT8,0,66.69,41.47

|

| 17 |

+

3090,Flan-T5: Summarization,large,FP32,0,21.27,15.76

|

| 18 |

+

3090,Flan-T5: Summarization,xl,FP32,0,25.60,18.94

|

| 19 |

+

3090,Flan-T5: Summarization,xxl,FP32,1,1326.22,580.10

|

| 20 |

+

3090,Flan-T5: Summarization,xxl,FP16,1,52.52,36.07

|

| 21 |

+

3090,Flan-T5: Summarization,xxl,INT8,0,67.13,38.92

|

| 22 |

+

3090,Flan-T5: Summarization,ul2,FP16,1,1185.25,480.11

|

| 23 |

+

|

| 24 |

+

T4,OPT: Open Text Generation,1.3B,FP32,0,24.74,22.37

|

| 25 |

+

T4,OPT: Open Text Generation,6.7B,FP32,1,2863.57,733.32

|

| 26 |

+

T4,OPT: Open Text Generation,6.7B,FP16,0,62.04,29.67

|

| 27 |

+

T4,OPT: Open Text Generation,6.7B,INT8,0,180.59,66.12

|

| 28 |

+

T4,OPT: Summarization,1.3B,FP32,0,32.50,26.58

|

| 29 |

+

T4,OPT: Summarization,6.7B,FP16,1,499.00,67.33

|

| 30 |

+

T4,OPT: Summarization,6.7B,INT8,0,182.98,37.89

|

| 31 |

+

T4,Whisper: ARS,large-v2,FP32,0,62.68,40.74

|

| 32 |

+

T4,CodeGen: Code Generation,2B,FP32,0,73.88,67.62

|

| 33 |

+

T4,CodeGen: Code Generation,6B,FP16,1,682.94,135.99

|

| 34 |

+

T4,CodeGen: Code Generation,6B,INT8,0,117.91,72.40

|

| 35 |

+

T4,Flan-T5: Summarization,large,FP32,0,43.67,36.26

|

| 36 |

+

T4,Flan-T5: Summarization,xl,FP16,0,53.54,42.27

|

| 37 |

+

T4,Flan-T5: Summarization,xxl,FP16,1,2814,1177

|

| 38 |

+

|

| 39 |

+

T4 *2,OPT: Open Text Generation,6.7B,FP32,0,118.42,55.42

|

| 40 |

+

T4 *2,OPT: Open Text Generation,6.7B,FP16,0,61.30,34.76

|

| 41 |

+

T4 *2,OPT: Summarization,6.7B,FP32,1,1238.59,339.34

|

| 42 |

+

T4 *2,OPT: Summarization,6.7B,FP16,0,94.62,34.37

|

| 43 |

+

T4 *2,CodeGen: Code Generation,6B,FP16,0,116.34,72.09

|

| 44 |

+

T4 *2,CodeGen: Code Generation,6B,INT8,0,119.14,79.01

|

| 45 |

+

T4 *2,CodeGen: Code Generation,16B,FP16,1,1509.05,693.01

|

| 46 |

+

T4 *2,CodeGen: Code Generation,16B,INT8,0,200.79,99.00

|

| 47 |

+

T4 *2,Flan-T5: Summarization,xl,FP32,0,59.27,68.70

|

| 48 |

+

T4 *2,Flan-T5: Summarization,xl,FP16,0,51.59,50.56

|

| 49 |

+

T4 *2,Flan-T5: Summarization,xxl,FP16,1,797.7,534.3

|

| 50 |

+

T4 *2,Flan-T5: Summarization,xxl,INT8,0,243.3,143.38

|

| 51 |

+

|

| 52 |

+

A100 (80GB),OPT: Open Text Generation,6.7B,FP32,0,35.34,30.00

|

| 53 |

+

A100 (80GB),OPT: Open Text Generation,30B,FP16,0,54.57,38.27

|

| 54 |

+

A100 (80GB),OPT: Open Text Generation,30B,INT8,0,290.82,135.77

|

| 55 |

+

A100 (80GB),OPT: Open Text Generation,66B,INT8,0,398.49,146.04

|

| 56 |

+

A100 (80GB),OPT: Summarization,6.7B,FP32,0,43.64,27.03

|

| 57 |

+

A100 (80GB),OPT: Summarization,30B,FP16,0,54.94,28.87

|

| 58 |

+

A100 (80GB),OPT: Summarization,30B,INT8,0,291.57,49.42

|

| 59 |

+

A100 (80GB),OPT: Summarization,66B,INT8,0,392.34,82.29

|

| 60 |

+

A100 (80GB),CodeGen: Code Generation,16B,FP32,0,75.56,80.44

|

| 61 |

+

A100 (80GB),CodeGen: Code Generation,16B,FP16,0,70.51,74.79

|

| 62 |

+

A100 (80GB),CodeGen: Code Generation,16B,INT8,0,130.77,90.28

|

| 63 |

+

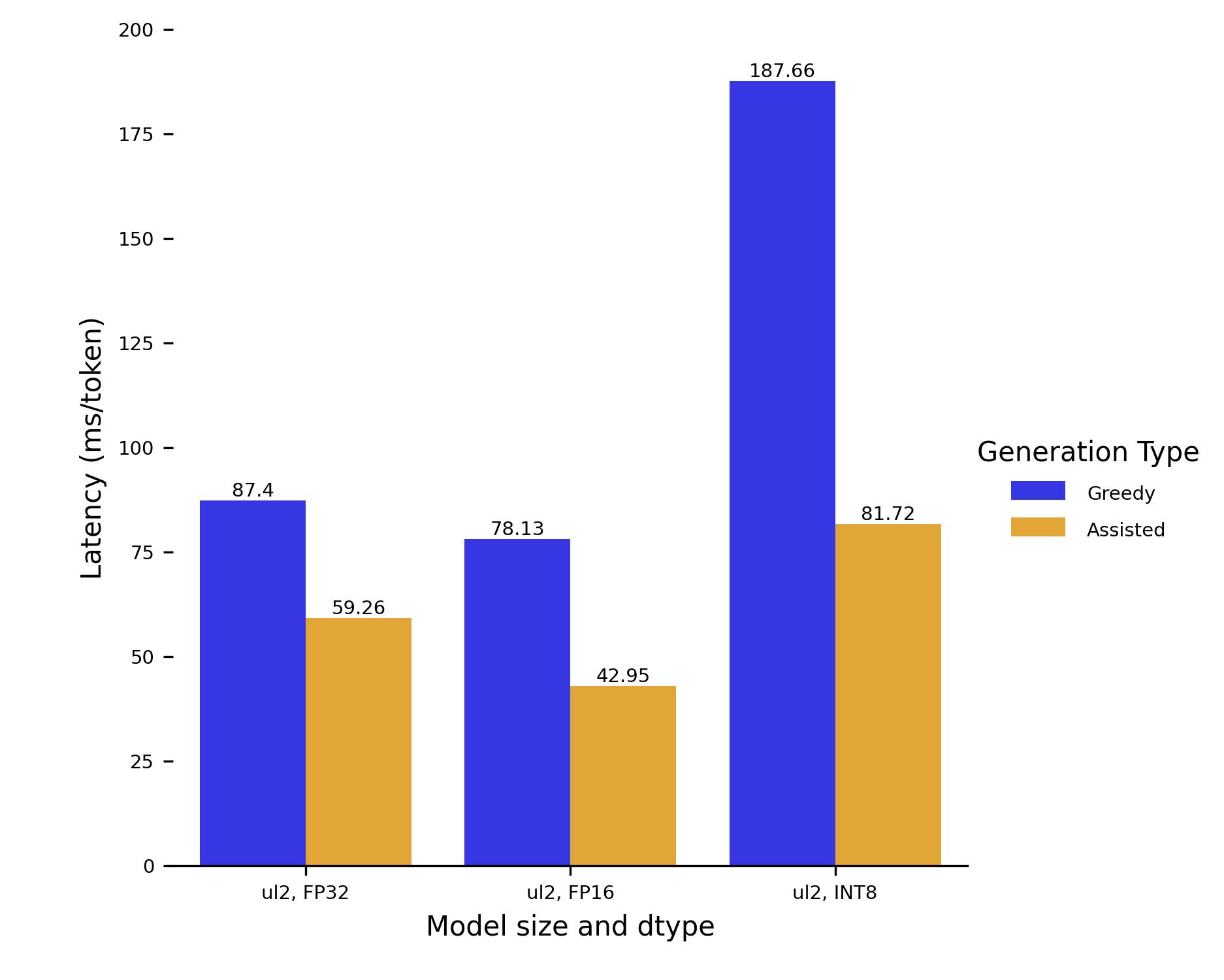

A100 (80GB),Flan-T5: Summarization,ul2,FP32,0,87.40,59.26

|

| 64 |

+

A100 (80GB),Flan-T5: Summarization,ul2,FP16,0,78.13,42.95

|

| 65 |

+

A100 (80GB),Flan-T5: Summarization,ul2,INT8,0,187.66,81.72

|
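The `Greedy` and `Assisted` columns hold per-token latency (the plot labels the axis "Latency (ms/token)"), so dividing one by the other gives the speedup from assisted generation. The sketch below does this with the standard library on two rows copied from `data.csv`. The function name `speedups` and the inline `CSV_TEXT` sample are assumptions made for illustration.

```python
import csv
import io

# Header and two rows copied verbatim from data.csv.
CSV_TEXT = """gpu,task,model_name,dtype,offload,Greedy,Assisted
3090,OPT: Open Text Generation,6.7B,FP16,0,19.62,12.44
A100 (80GB),CodeGen: Code Generation,16B,FP16,0,70.51,74.79
"""


def speedups(csv_text):
    """Return (gpu, model_name, dtype, greedy/assisted ratio) per row.

    A ratio above 1 means assisted generation was faster than greedy.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        (
            row["gpu"],
            row["model_name"],
            row["dtype"],
            float(row["Greedy"]) / float(row["Assisted"]),
        )
        for row in reader
    ]


results = speedups(CSV_TEXT)
for gpu, model, dtype, ratio in results:
    print(f"{gpu} {model} {dtype}: {ratio:.2f}x")
```

In this sample the ratio is above 1 for the OPT 6.7B FP16 row on the 3090 and below 1 for the CodeGen 16B FP16 row on the A100, so assisted generation is not a speedup in every configuration.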

plt.png

ADDED

|