Do we fully leverage image encoders in vision language models? 👀

A new paper built a dense connector that does it better! Let's dig in 🧶

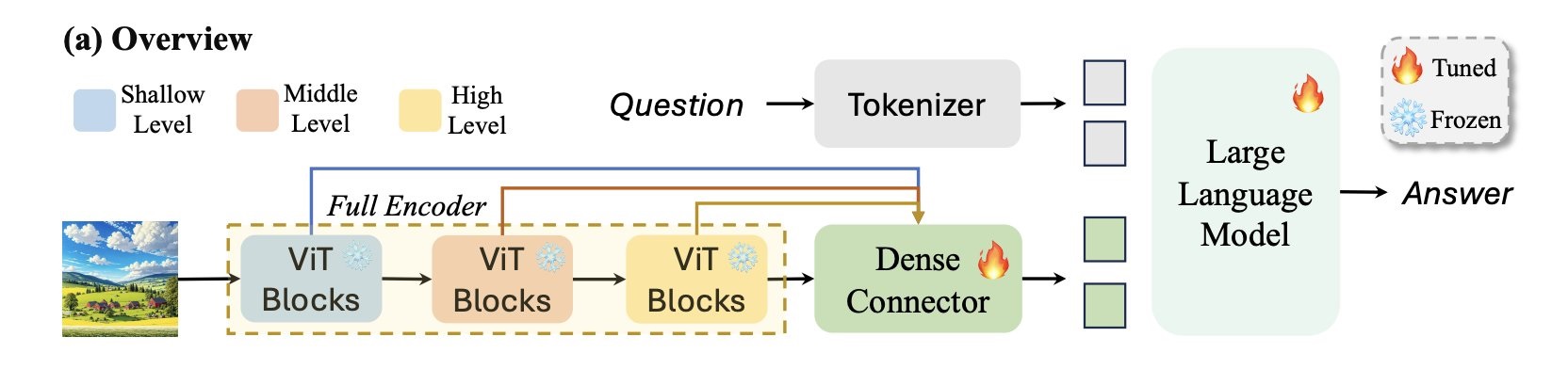

VLMs consist of three sequentially connected parts: an image encoder, a projection layer that maps image embeddings into the text embedding space, and a text decoder 📖
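To make the data flow concrete, here is a minimal NumPy sketch of that pipeline up to the decoder input. Everything in it is an illustrative assumption: the function name `vlm_decoder_inputs`, the toy shapes, the random arrays standing in for real encoder outputs, and the single linear map standing in for the projection layer. Placing image tokens before text tokens is also just one common arrangement.

```python
import numpy as np


def vlm_decoder_inputs(image_features, projection, text_embeddings):
    """Project image tokens into the text embedding space and prepend them to the text tokens.

    image_features:  (num_image_tokens, vision_dim), stand-in for the image encoder output
    projection:      (vision_dim, text_dim), stand-in for the projection layer
    text_embeddings: (num_text_tokens, text_dim)
    """
    image_tokens = image_features @ projection  # (num_image_tokens, text_dim)
    # The text decoder would consume this combined sequence.
    return np.concatenate([image_tokens, text_embeddings], axis=0)


rng = np.random.default_rng(0)
image_features = rng.normal(size=(16, 8))   # 16 image tokens of width 8 (toy sizes)
projection = rng.normal(size=(8, 12))       # maps vision width 8 to text width 12
text_embeddings = rng.normal(size=(5, 12))  # 5 text tokens

decoder_inputs = vlm_decoder_inputs(image_features, projection, text_embeddings)
print(decoder_inputs.shape)  # (21, 12)
```

The point to notice is that only one set of image features, the encoder's final output, ever reaches the decoder in this setup.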

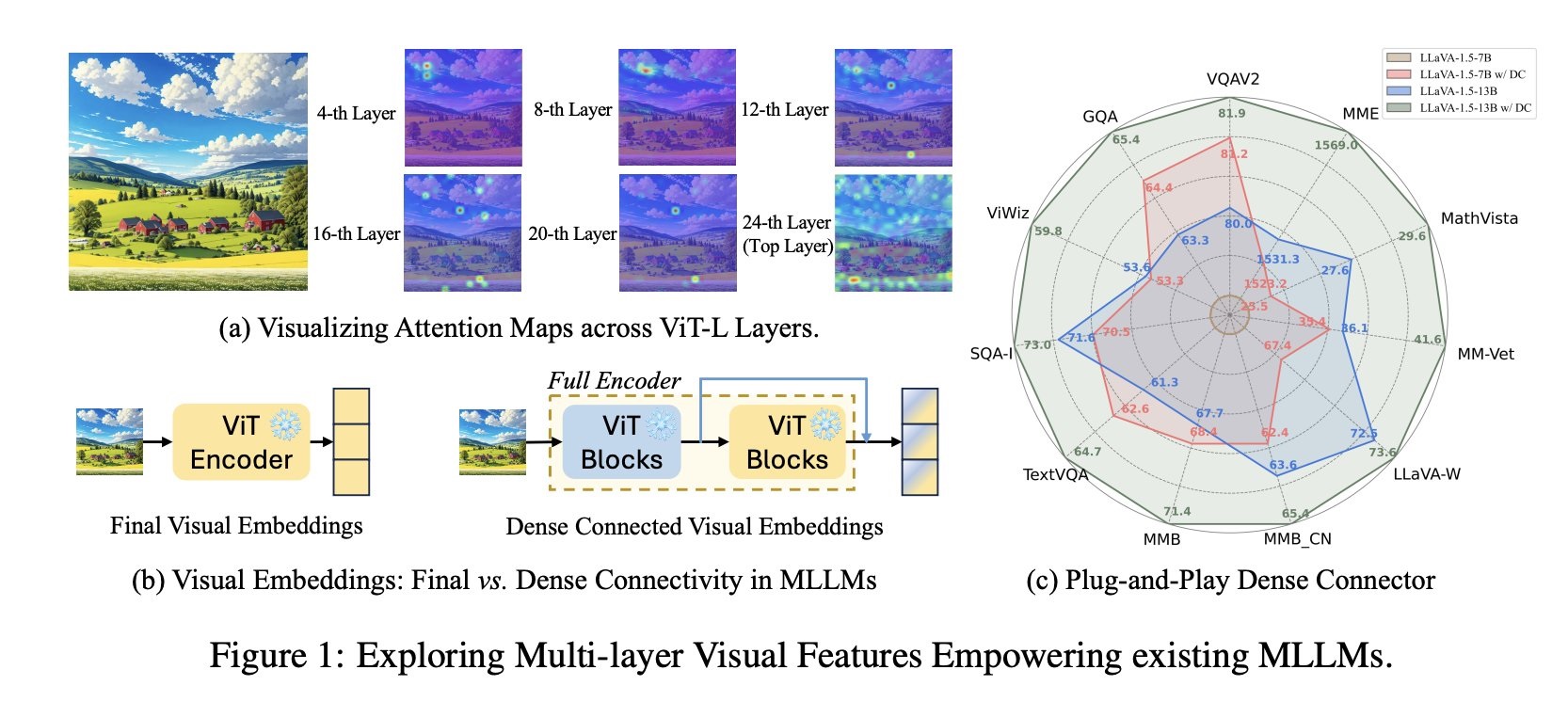

This paper explores using the intermediate states of the image encoder rather than only its final output 🤩

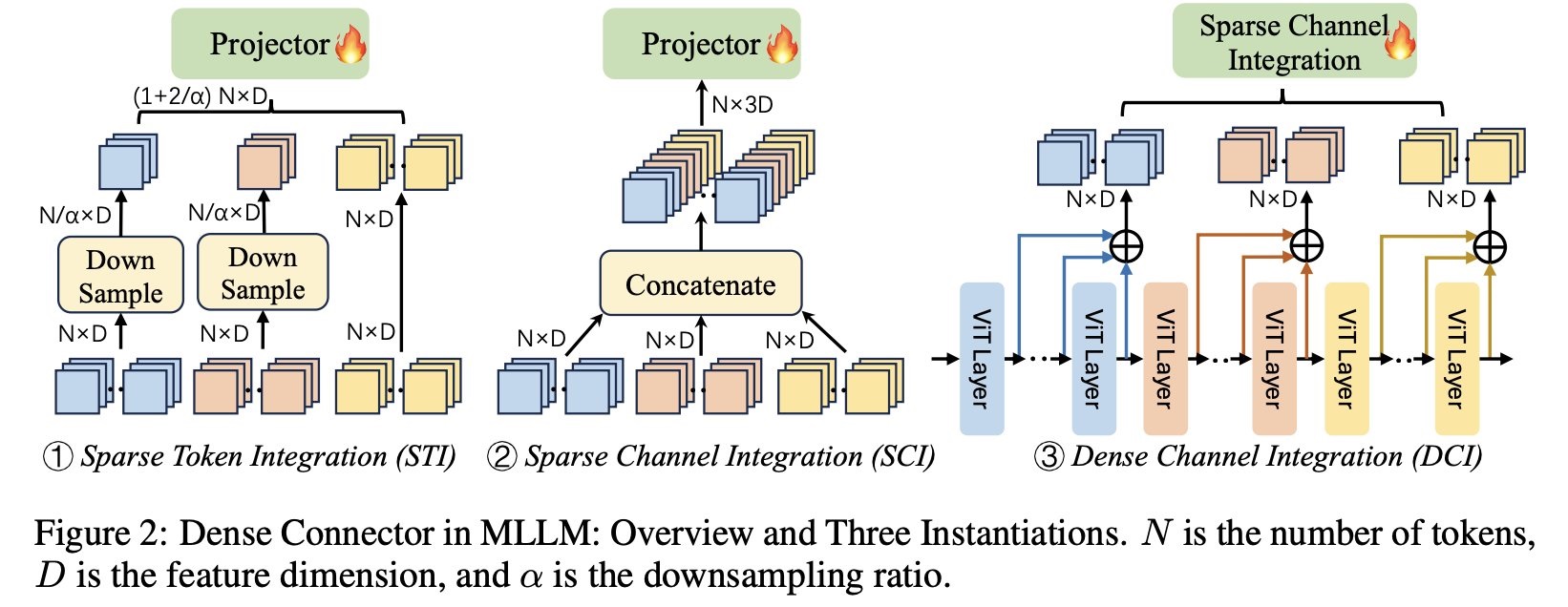

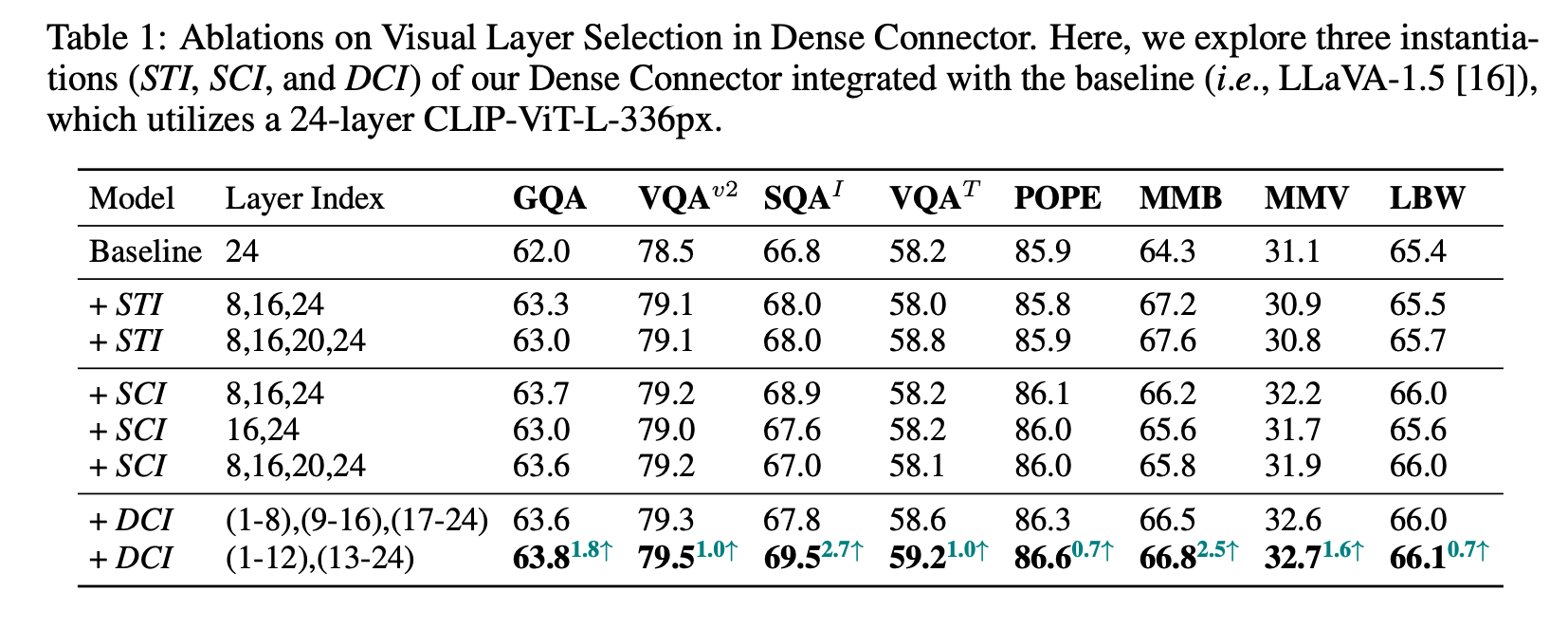

The authors explore three different ways of instantiating the dense connector: sparse token integration, sparse channel integration and dense channel integration. Each of them simply takes intermediate outputs of the image encoder and combines them in a different way, as the figures illustrate.
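Here is a rough NumPy sketch of how the three variants could combine per-layer features. It is my reading of the names, not the authors' code: I assume "token" integration concatenates along the token axis (after downsampling the intermediate layers), "channel" integration concatenates along the feature axis, and "dense" means averaging groups that cover all layers instead of picking a few. The function names, layer indices, pooling factor and group count are all hypothetical, and the 1D average pooling is a simplification.

```python
import numpy as np


def sparse_token_integration(hidden_states, layer_ids, pool=4):
    """Assumed reading: pool a few intermediate layers along the token axis,
    then append them to the final layer's tokens."""
    final = hidden_states[-1]
    num_tokens, dim = final.shape
    pooled = [
        hidden_states[i].reshape(num_tokens // pool, pool, dim).mean(axis=1)
        for i in layer_ids
    ]
    return np.concatenate(pooled + [final], axis=0)  # more tokens, same width


def sparse_channel_integration(hidden_states, layer_ids):
    """Assumed reading: concatenate a few intermediate layers with the final
    layer along the channel axis."""
    selected = [hidden_states[i] for i in layer_ids]
    return np.concatenate(selected + [hidden_states[-1]], axis=-1)  # same tokens, wider


def dense_channel_integration(hidden_states, num_groups=2):
    """Assumed reading: average all layers within each group, then concatenate
    the group averages with the final layer along the channel axis."""
    stacked = np.stack(hidden_states)  # (num_layers, num_tokens, dim)
    groups = np.array_split(stacked, num_groups, axis=0)
    group_means = [g.mean(axis=0) for g in groups]
    return np.concatenate(group_means + [hidden_states[-1]], axis=-1)


rng = np.random.default_rng(0)
# Toy stand-in for an encoder with 24 layers, 16 tokens, width 8.
hidden_states = [rng.normal(size=(16, 8)) for _ in range(24)]

sti = sparse_token_integration(hidden_states, layer_ids=[7, 15], pool=4)
sci = sparse_channel_integration(hidden_states, layer_ids=[7, 15])
dci = dense_channel_integration(hidden_states, num_groups=2)
print(sti.shape, sci.shape, dci.shape)  # (24, 8) (16, 24) (16, 24)
```

In each case the combined features would then go through the projection layer, so under this reading the token variant lengthens the visual sequence while the channel variants widen the projector's input instead.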

They integrate all three into LLaVA 1.5 and find that each of the resulting models outperforms the original LLaVA 1.5.

I tried the model and it seems to work very well 🥹

The authors have released various checkpoints based on different decoders (Vicuna 7B/13B and Llama 3 8B).

Resources:

Dense Connector for MLLMs by Huanjin Yao, Wenhao Wu, Taojiannan Yang, YuXin Song, Mengxi Zhang, Haocheng Feng, Yifan Sun, Zhiheng Li, Wanli Ouyang, Jingdong Wang (2024) GitHub

Original tweet (May 30, 2024)