
I love Depth Anything V2 😍 It's Depth Anything, but scaled up with both a larger teacher model and a gigantic dataset! Let's unpack 🤓🧶!

The authors compared Marigold, a diffusion-based model, against Depth Anything and dug into what happens when you use synthetic versus real images for monocular depth estimation (MDE) 🔖

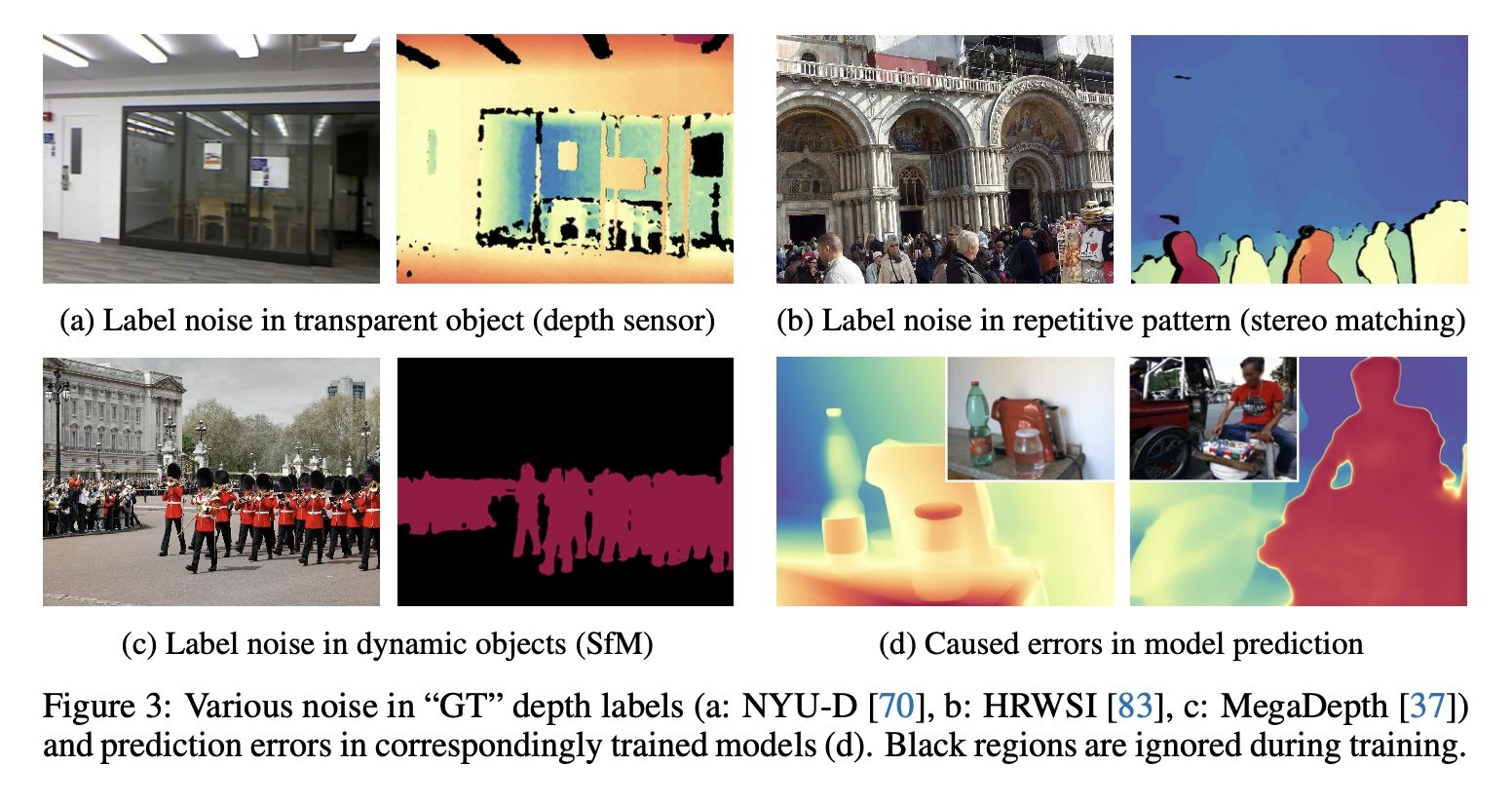

Real data has a lot of label noise: depth maps are often inaccurate, for example because depth sensors miss transparent objects.

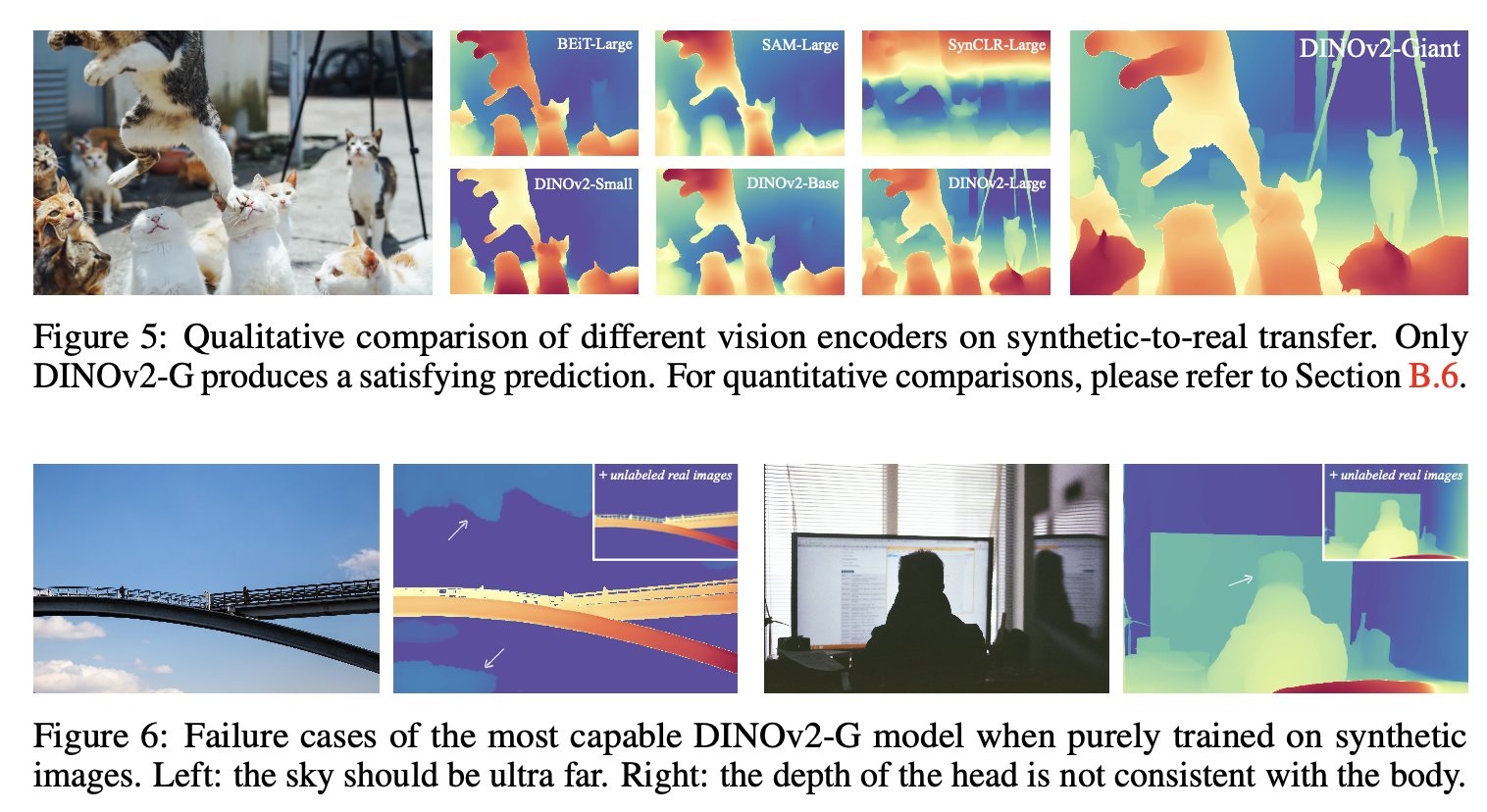

The authors train different image encoders only on synthetic images and find that, unless the encoder is very large, the model can't generalize well (though large models tend to generalize well anyway) 🧐 Even so, these models still fail on real images, which have a much wider label distribution.

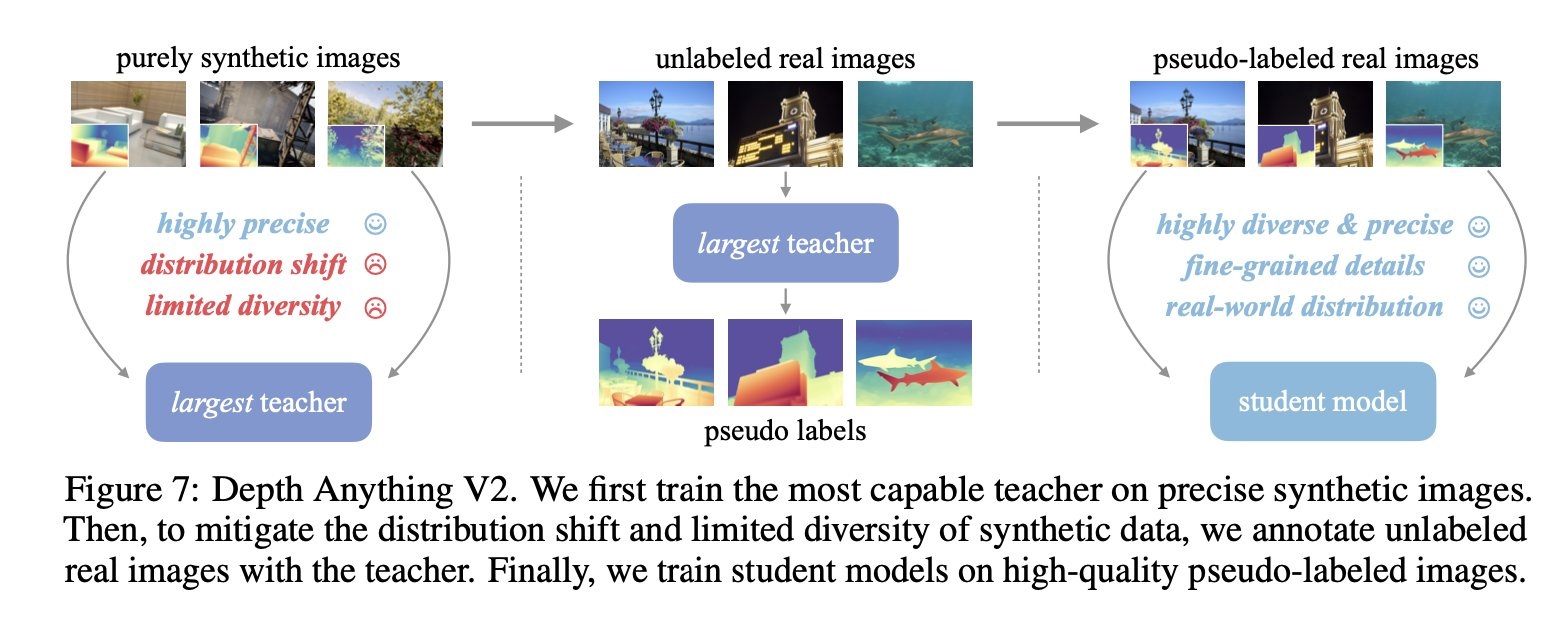

The Depth Anything V2 framework is to...

🦖 Train a teacher model based on DINOv2-G on 595K synthetic images

🏷️ Label 62M real images using the teacher model

🦕 Train a student model using the real images labelled by the teacher
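To make the data flow of these three steps concrete, here is a toy sketch of teacher-student pseudo-labelling. It is not the authors' code. Everything in it is a hypothetical stand-in: an "image" is a 2-feature vector, a "depth map" is a single float, `true_depth` plays the role of perfect synthetic labels, and both teacher and student are tiny linear models fit by gradient descent instead of DINOv2-based networks.

```python
import random

def fit_linear(xs, ys, lr=0.05, epochs=500):
    """Fit y ≈ w·x + b by gradient descent on mean squared error (toy model)."""
    dim, n = len(xs[0]), len(xs)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(xs, ys):
            err = sum(wi * xi for wi, xi in zip(w, x)) + b - y
            for i in range(dim):
                grad_w[i] += 2 * err * x[i] / n
            grad_b += 2 * err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b

    def predict(x):
        return sum(wi * xi for wi, xi in zip(w, x)) + b

    return predict

def true_depth(x):
    # Hypothetical "perfect" label function, standing in for clean synthetic depth.
    return 2.0 * x[0] - 1.0 * x[1] + 0.5

rng = random.Random(0)

# Step 1: train the teacher on a small synthetic set with exact labels.
synthetic = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(50)]
teacher = fit_linear(synthetic, [true_depth(x) for x in synthetic])

# Step 2: pseudo-label a much larger set of unlabelled "real" images with the teacher.
real = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(500)]
pseudo_labels = [teacher(x) for x in real]

# Step 3: train the student only on the pseudo-labelled real images.
student = fit_linear(real, pseudo_labels)

print(round(student([0.3, -0.2]), 3))
```

The point of the design is that the student never sees the noisy real ground truth. It only learns from the teacher's labels on real images, so it gets real-image diversity without real-sensor label noise.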

Result: 10x faster and more accurate than Marigold!

The authors also construct a new benchmark called DA-2K that is less noisy, highly detailed and more diverse!
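Here is a small sketch of how a benchmark like DA-2K could score a model. It assumes DA-2K uses sparse point-pair annotations, where each pair marks which of two pixels is closer, and that the score is pairwise ordering accuracy. That is our reading of the paper, not something stated in this post. The function name `pairwise_accuracy` and the pair format are hypothetical. It also assumes the prediction is depth, where larger means farther. If your model outputs relative inverse depth (disparity), flip the comparison.

```python
def pairwise_accuracy(depth, pairs):
    """Fraction of annotated point pairs whose closer point the prediction gets right.

    depth: 2-D list of predicted depths (assumed: larger value = farther away).
    pairs: list of ((row_a, col_a), (row_b, col_b), closer) with closer in {"a", "b"}.
    Ties are counted as predicting "b".
    """
    correct = 0
    for (ra, ca), (rb, cb), closer in pairs:
        predicted = "a" if depth[ra][ca] < depth[rb][cb] else "b"
        correct += predicted == closer
    return correct / len(pairs)

depth = [
    [1.0, 2.0, 3.0],
    [1.5, 2.5, 3.5],
]
pairs = [
    ((0, 0), (0, 2), "a"),  # 1.0 < 3.0, predicts "a": correct
    ((1, 2), (0, 1), "b"),  # 3.5 > 2.0, predicts "b": correct
    ((0, 1), (1, 0), "a"),  # 2.0 > 1.5, predicts "b": wrong
]
acc = pairwise_accuracy(depth, pairs)
print(acc)
```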

I have created a collection that has the models, the dataset, the demo, and a CoreML-converted model 😚

Resources:

Depth Anything V2

by Lihe Yang, Bingyi Kang, Zilong Huang, Zhen Zhao, Xiaogang Xu, Jiashi Feng, Hengshuang Zhao (2024). GitHub, Hugging Face documentation

Original tweet (June 18, 2024)