---
license: other
license_name: sacla
license_link: >-
  https://huggingface.co/stabilityai/stable-diffusion-3.5-large/blob/main/LICENSE.md
base_model:
- stabilityai/stable-diffusion-3.5-large
base_model_relation: quantized
---

Overview

These models are made to work with stable-diffusion.cpp release master-ac54e00 onwards. Support for other inference backends is not guaranteed.

Quantized using this PR https://github.com/leejet/stable-diffusion.cpp/pull/447

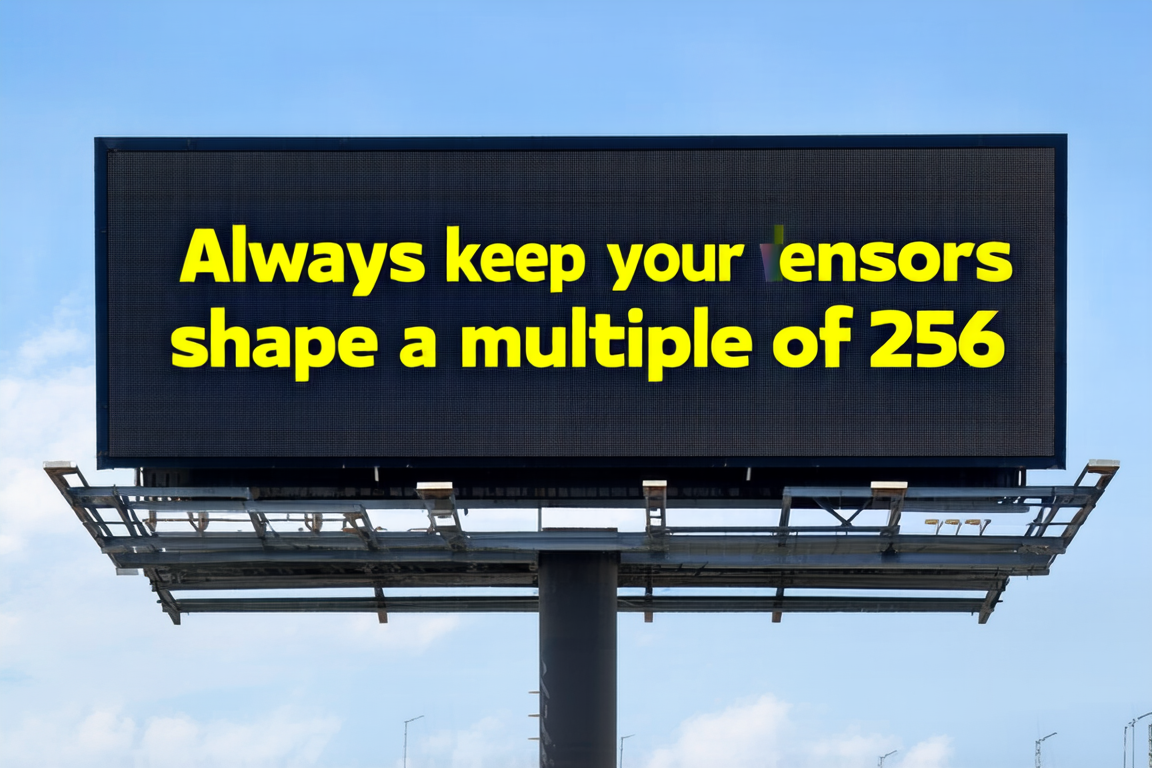

Plain K-quants do not work properly with SD3.5-Large models: around 90% of the weights are in tensors whose shape doesn't match the 256-weight superblock size of K-quants, so those tensors can't be quantized this way. Mixing quantization types lets us take advantage of the better fidelity of K-quants to some extent while keeping the model file size relatively small.
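The idea of mixing types can be sketched in a few lines of Python. This is only an illustration, not the logic of the PR itself: the function name `pick_quant_type`, the f16 fallback, and the example row lengths are assumptions made for the sketch. The block sizes (256 weights per K-quant superblock, 32 per legacy block) are the standard ggml values.

```python
# Illustrative sketch only: the selection logic in the actual PR may differ.
K_SUPERBLOCK = 256  # weights per K-quant superblock
LEGACY_BLOCK = 32   # weights per legacy block (q4_0, q4_1, q5_0, ...)


def pick_quant_type(row_len: int, k_type: str = "q4_k", fallback: str = "q4_0") -> str:
    """Pick a quant type for a tensor whose rows hold `row_len` weights."""
    if row_len % K_SUPERBLOCK == 0:
        return k_type      # rows split evenly into superblocks
    if row_len % LEGACY_BLOCK == 0:
        return fallback    # rows only fit the smaller legacy blocks
    return "f16"           # assumed behaviour: leave the tensor unquantized


# Hypothetical row lengths, chosen only to exercise each branch.
for row_len in (4096, 2432, 100):
    print(row_len, "->", pick_quant_type(row_len))
```

With a K-quant as the preferred type and a legacy type as the fallback, only the tensors that divide evenly into superblocks get the K-quant, and everything else falls back.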

Files:

Mixed Types:

- sd3.5_large-q2_k_4_0.gguf: Smallest quantization yet. Use this if you can't afford anything bigger

- sd3.5_large-q3_k_4_0.gguf

- sd3.5_large-q4_k_4_0.gguf: Exactly the same size as q4_0, but with slightly less degradation. Recommended

- sd3.5_large_turbo-q4_k_4_1.gguf: Smaller than q4_1, and with comparable degradation. Recommended

- sd3.5_large_turbo-q4_k_5_0.gguf: Smaller than q5_0, and with comparable degradation. Very close to the original f16 already. Recommended

Legacy types:

- sd3.5_large_turbo-q4_0.gguf: Same size as q4_k_4_0. Not recommended (use q4_k_4_0 instead)

- sd3.5_large_turbo-q4_1.gguf: Not recommended (q4_k_4_1 is better and smaller)

- sd3.5_large_turbo-q5_0.gguf: Barely better than q4_k_5_0, and bigger

- sd3.5_large_turbo-q5_1.gguf: Better and bigger than q5_0

- sd3.5_large_turbo-q8_0.gguf: Basically indistinguishable from the original f16, but much smaller. Recommended for best quality
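The size relationships in the lists above can be checked with a rough estimate based on ggml block layouts. The sketch below assumes that a name like q4_k_4_0 means "q4_k where possible, q4_0 elsewhere" and that about 10% of the weights end up in the K-quant (the complement of the roughly 90% figure quoted earlier). Both are assumptions made for illustration, and tensors kept at higher precision are ignored.

```python
# (weights per block, bytes per block) for the ggml formats named in this card
BLOCK_LAYOUT = {
    "q4_0": (32, 18),
    "q4_1": (32, 20),
    "q5_0": (32, 22),
    "q5_1": (32, 24),
    "q8_0": (32, 34),
    "q2_k": (256, 84),
    "q3_k": (256, 110),
    "q4_k": (256, 144),
}


def bits_per_weight(qtype: str) -> float:
    """Storage cost of one weight, in bits, for a single quant type."""
    weights, nbytes = BLOCK_LAYOUT[qtype]
    return 8 * nbytes / weights


def mixed_bits_per_weight(k_type: str, fallback: str, k_fraction: float = 0.10) -> float:
    """Average bits per weight when `k_fraction` of the weights use `k_type`."""
    return (k_fraction * bits_per_weight(k_type)
            + (1 - k_fraction) * bits_per_weight(fallback))


# Assumed pairings, read off the file names in the lists above.
for k_type, fallback in [("q2_k", "q4_0"), ("q3_k", "q4_0"), ("q4_k", "q4_0"),
                         ("q4_k", "q4_1"), ("q4_k", "q5_0")]:
    mixed = mixed_bits_per_weight(k_type, fallback)
    print(f"{k_type} + {fallback}: {mixed:.3f} bpw "
          f"(pure {fallback}: {bits_per_weight(fallback):.3f} bpw)")
```

Under these assumptions, q4_k and q4_0 both cost 4.5 bits per weight, which is consistent with q4_k_4_0 and q4_0 being the same size, while the q4_k_4_1 and q4_k_5_0 mixes come out slightly below pure q4_1 and q5_0.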

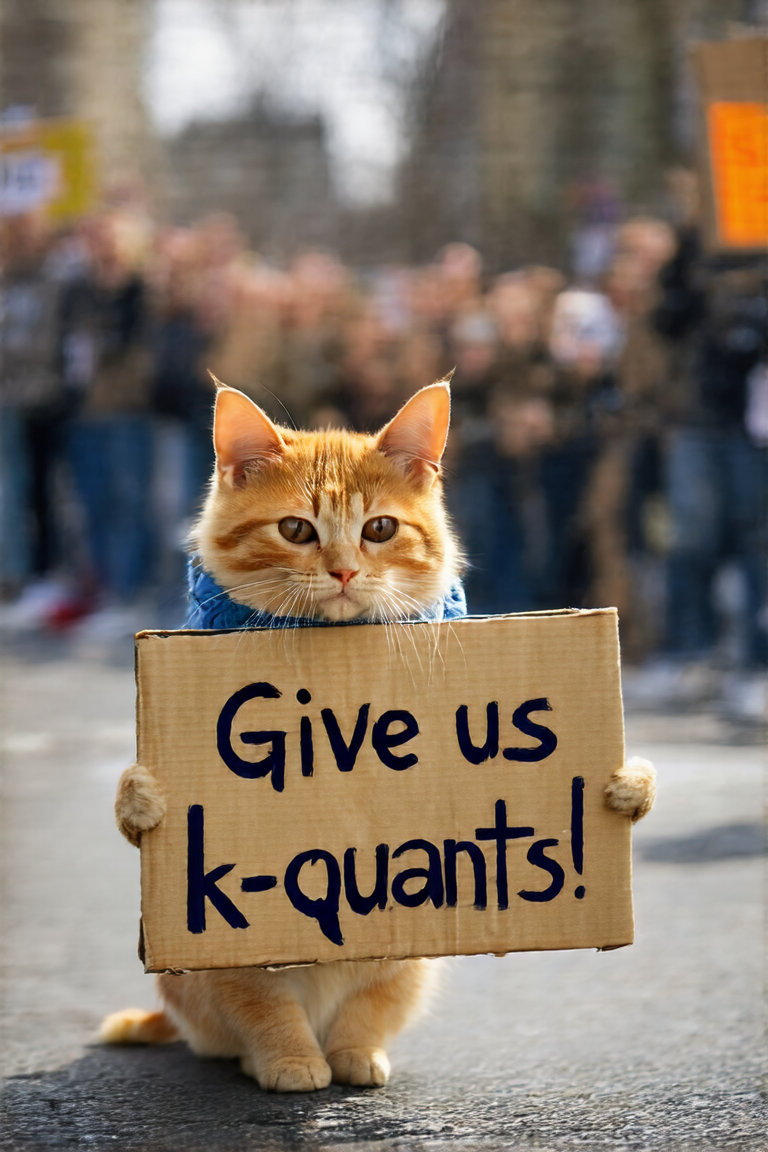

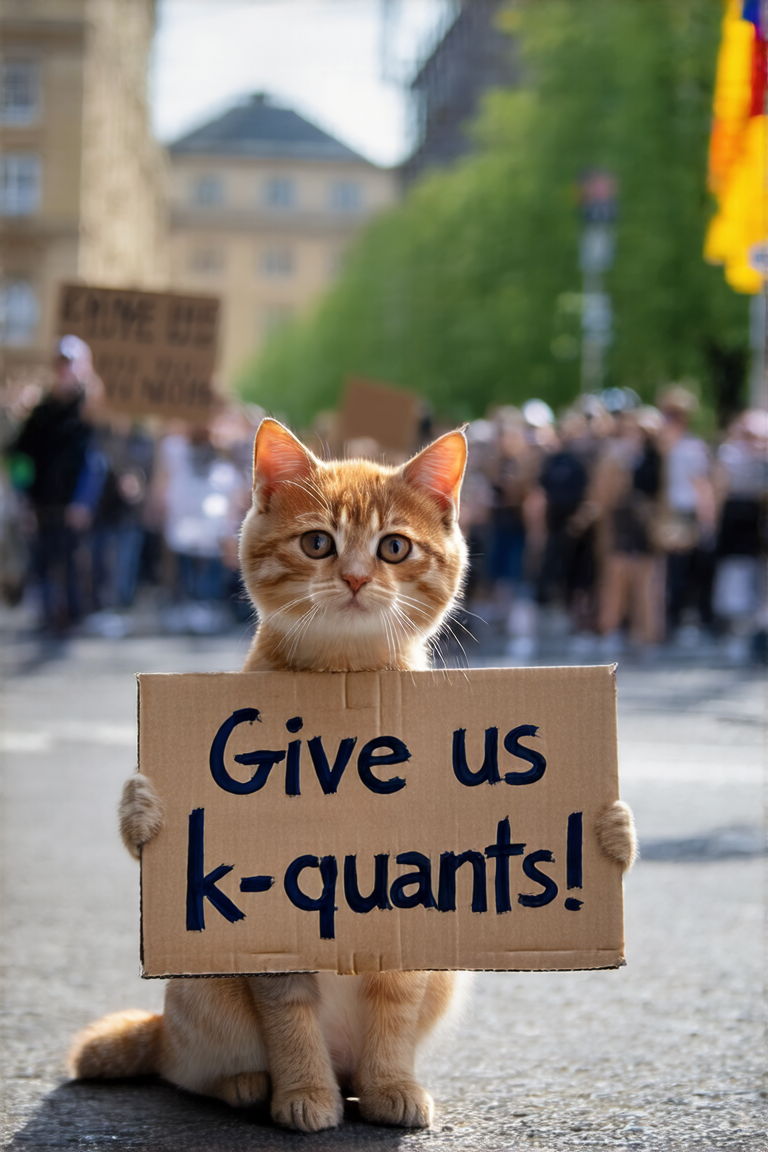

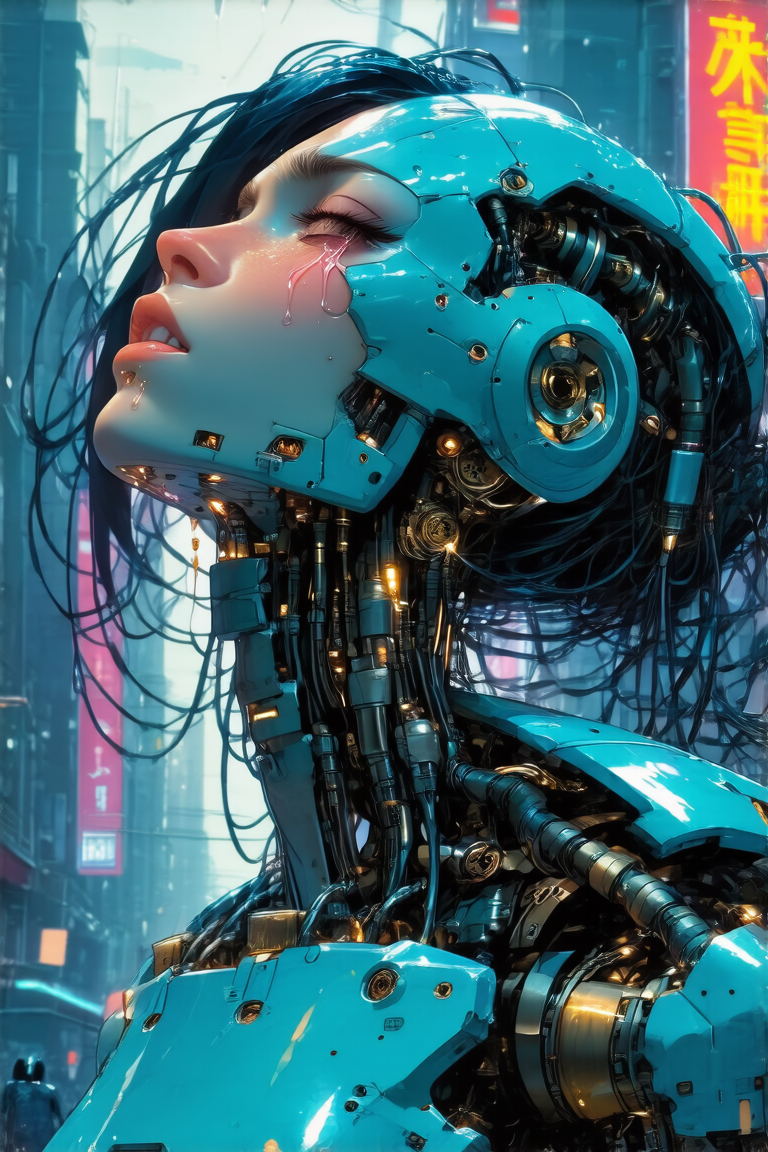

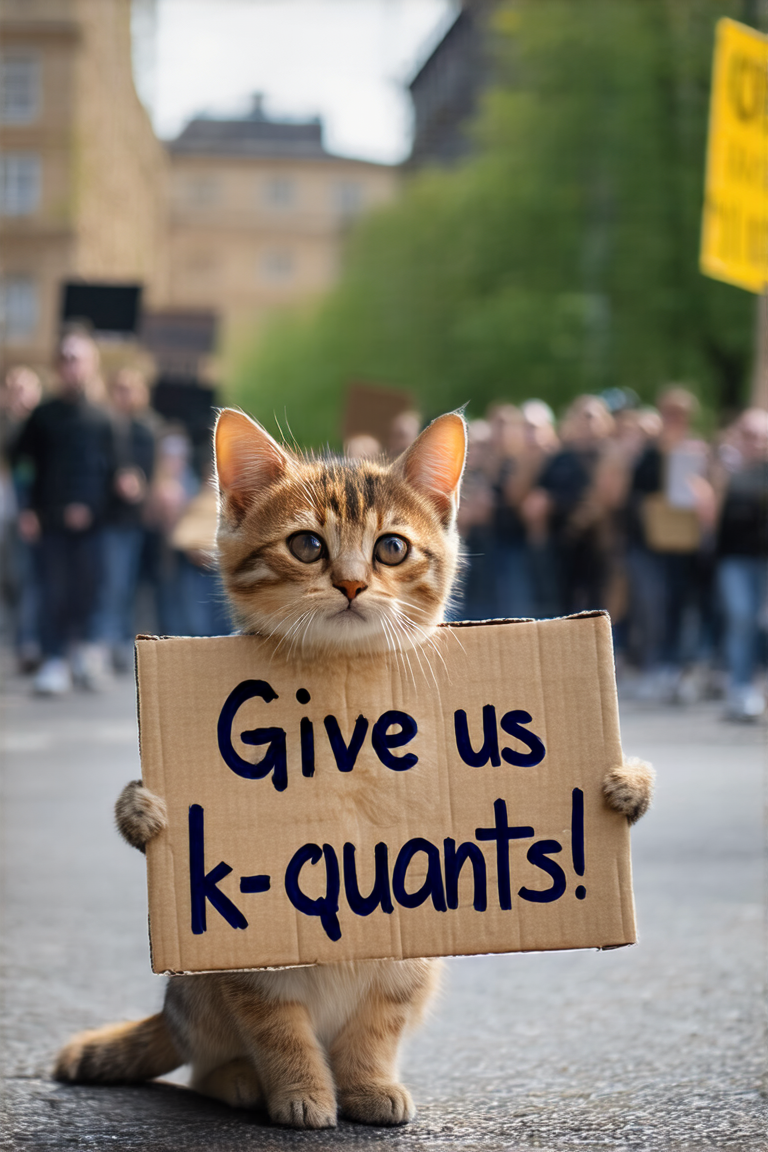

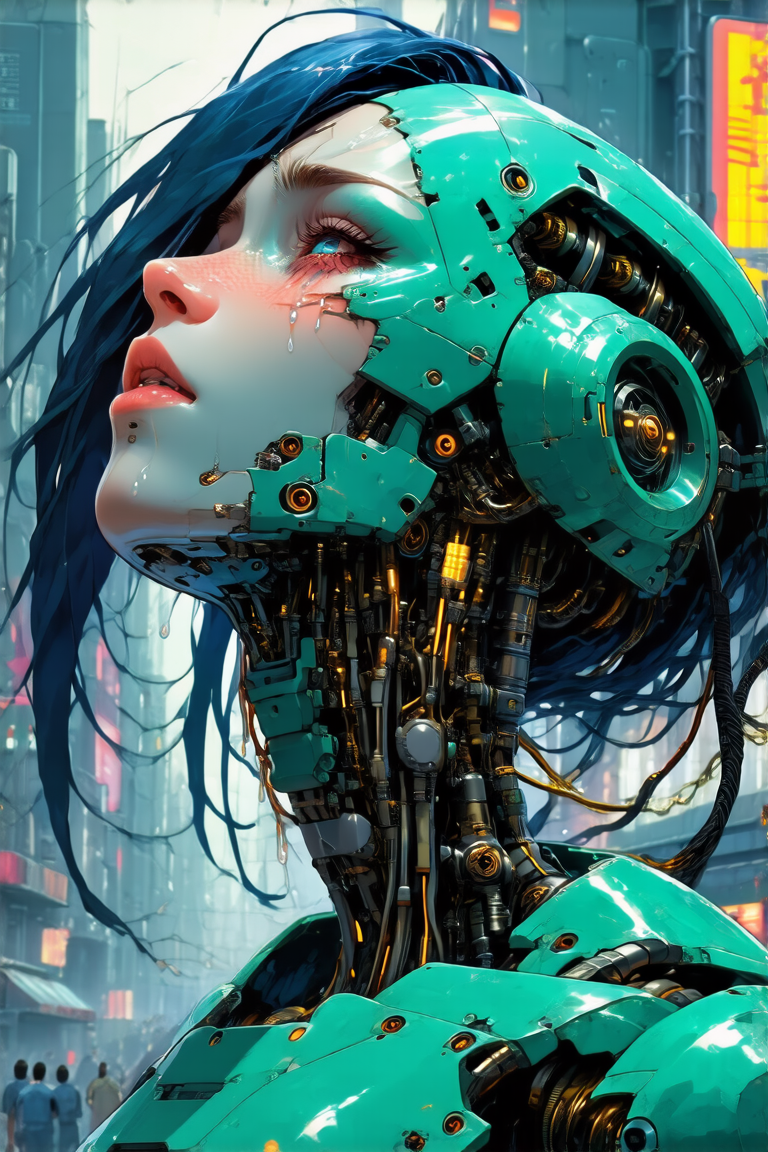

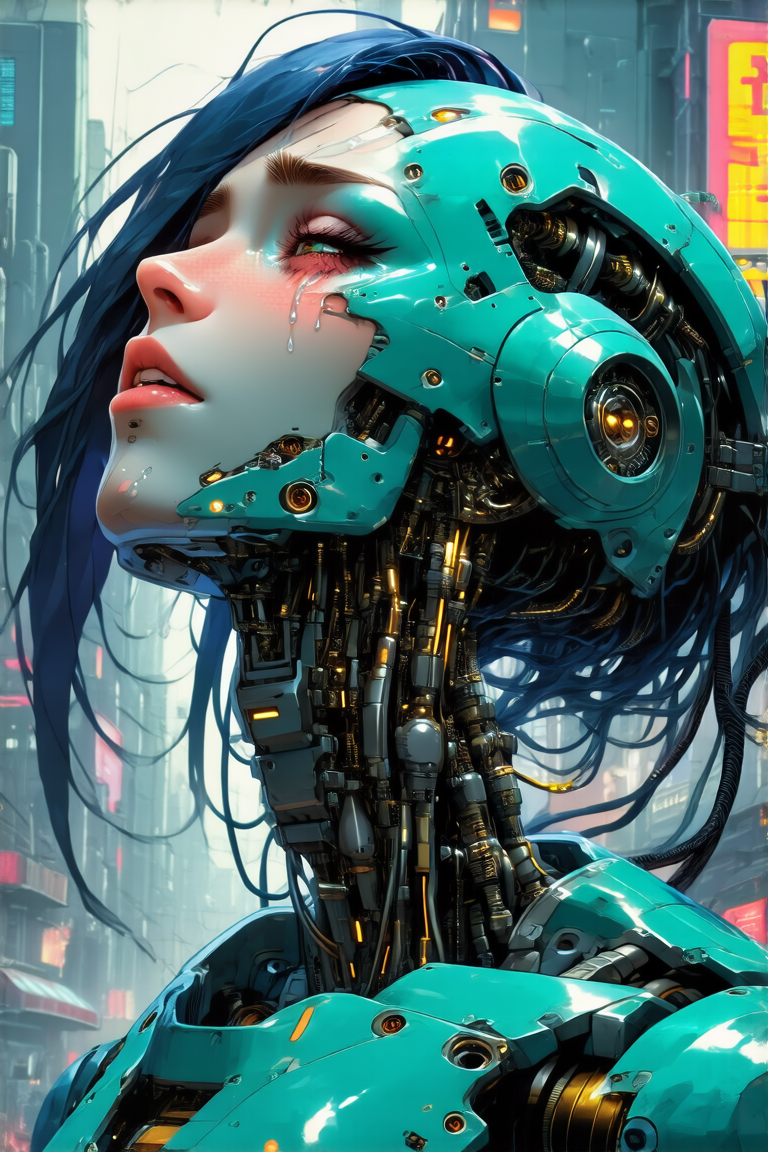

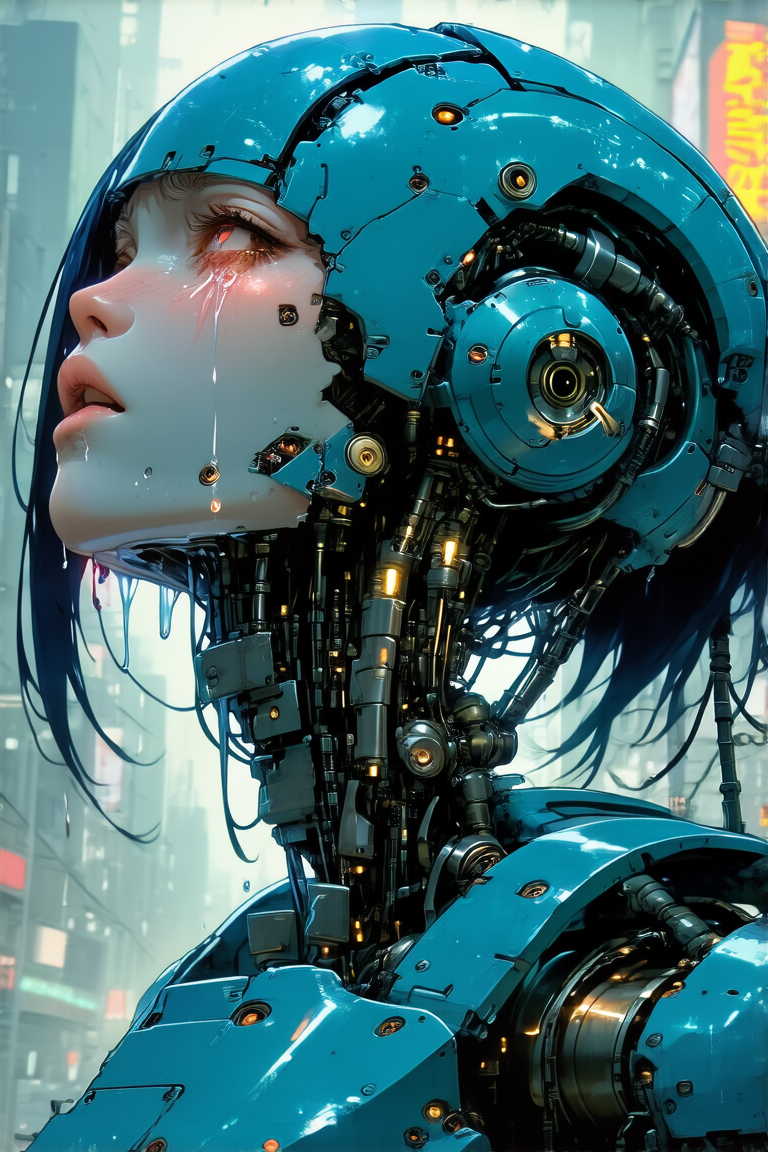

Outputs:

Sorted by model size (note that q4_0 and q4_k_4_0 are exactly the same size).

All images were generated with only 28 steps at a cfg scale of 4.5.

Generated with a modified version of sdcpp with this PR applied to enable clip timestep embeddings support.

Text encoders used: q4_k quant of t5xxl, full precision clip_g, and q8 quant of ViT-L-14-TEXT-detail-improved-hiT-GmP-TE-only-HF in place of clip_l.

Full prompts and settings are stored in the PNG metadata.
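As a rough starting point, the settings above can be collected into a stable-diffusion.cpp command line. The sketch below only builds and prints the command; it does not run it. The binary path, the text encoder file names, the prompt, and the output name are placeholders, not the files used for the images above. The actual prompts are in the PNG metadata.

```python
import shlex

# Only the diffusion model name, step count, and cfg scale come from this card;
# every other path or value is a placeholder to be replaced with your own.
cmd = [
    "./sd",                              # hypothetical path to the sd.cpp CLI binary
    "-m", "sd3.5_large-q4_k_4_0.gguf",   # one of the mixed-type files listed above
    "--clip_l", "clip_l-q8_0.gguf",      # placeholder file name
    "--clip_g", "clip_g.safetensors",    # placeholder file name
    "--t5xxl", "t5xxl-q4_k.gguf",        # placeholder file name
    "-p", "a photo of a cat",            # placeholder prompt
    "--steps", "28",
    "--cfg-scale", "4.5",
    "-o", "output.png",
]

# Print a shell-safe version of the command for copy and paste.
print(shlex.join(cmd))
```

Note that an unmodified build may not reproduce the images exactly, since they were generated with a modified version that adds clip timestep embeddings support.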