---
language:
- en
thumbnail: url to a thumbnail used in social sharing
tags:
- macroeconomics
- automated summary evaluation
- content
license: apache-2.0
metrics:
- mse
---

Content Model

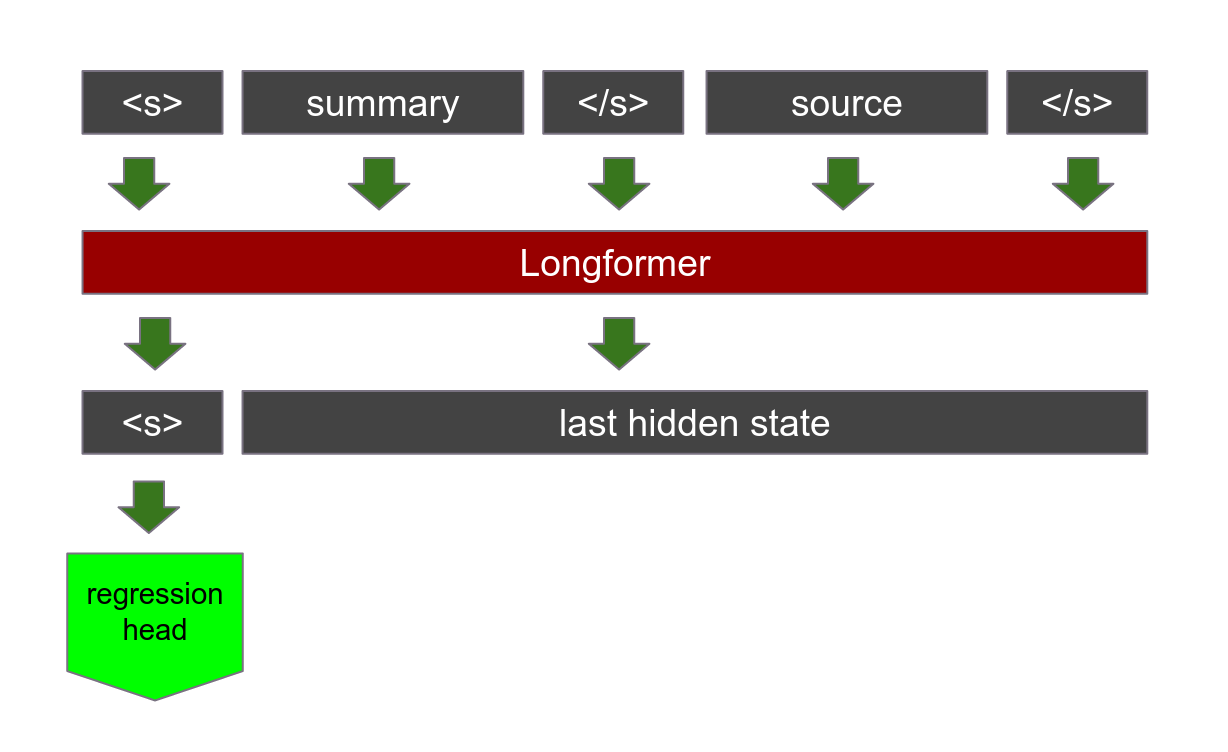

This is a Longformer model with a regression head designed to predict the Content score of a summary. By default, Longformer assigns global attention only to the classification token, while a sliding attention window moves across the rest of the text. This model, however, is trained to assign global attention to the entire summary, with a reduced sliding window. When performing inference with this model, make sure to assign a custom global attention mask as follows:

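    import torch

    # Assumes `tokenizer` is the Longformer tokenizer matching `model`, defined at module level.
    def inference(summary, source, model):
        # Concatenate summary and source with the separator token (</s>)
        combined = summary + tokenizer.sep_token + source
        context = tokenizer(combined)
        # Index of the first separator token, which marks the end of the summary
        sep_index = context['input_ids'].index(tokenizer.sep_token_id)
        # Global attention (1) on the summary through the separator, local attention (0) on the source
        context['global_attention_mask'] = [1]*(sep_index + 1) + [0]*(len(context['input_ids'])-(sep_index + 1))
        # Add a batch dimension and convert each field to a tensor
        inputs = {}
        for key in context:
            inputs[key] = torch.tensor([context[key]])
        return float(model(**inputs)['logits'][0][0])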
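The mask construction can be checked in isolation, without loading the model. The following is a minimal sketch on made-up token IDs. The helper name `build_global_attention_mask` and the toy IDs are illustrative assumptions, not part of the released code, and the default separator ID of 2 is taken from the inference function above.

```python
def build_global_attention_mask(input_ids, sep_token_id=2):
    """Return 1 for every token up to and including the first separator, else 0."""
    # The first separator marks the end of the summary segment.
    sep_index = input_ids.index(sep_token_id)
    summary_len = sep_index + 1
    return [1] * summary_len + [0] * (len(input_ids) - summary_len)


# Toy IDs (hypothetical): <s>=0, three summary tokens, </s>=2, two source tokens, </s>=2
toy_ids = [0, 11, 12, 13, 2, 21, 22, 2]
mask = build_global_attention_mask(toy_ids)
print(mask)  # [1, 1, 1, 1, 1, 0, 0, 0]
```

The summary tokens and the first separator receive global attention, while the source tokens are covered only by the sliding window.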

Corpus

The model was trained on a corpus of 4,233 summaries of 101 sources compiled by Botarleanu et al. (2022). The summaries were graded by expert raters on 6 criteria: Details, Main Point, Cohesion, Paraphrasing, Objective Language, and Language Beyond the Text. A principal component analysis was used to reduce the dimensionality of the outcome variables to two.

- Content includes Details, Main Point, Paraphrasing, and Cohesion

- Wording includes Objective Language and Language Beyond the Text

Score

This model predicts the Content score. The model to predict the Wording score can be found here. The following diagram illustrates the model architecture:

When providing input to the model, the summary and the source should be concatenated using the seperator token </s>.

All summaries for 15 sources were withheld from the training set to use for testing.

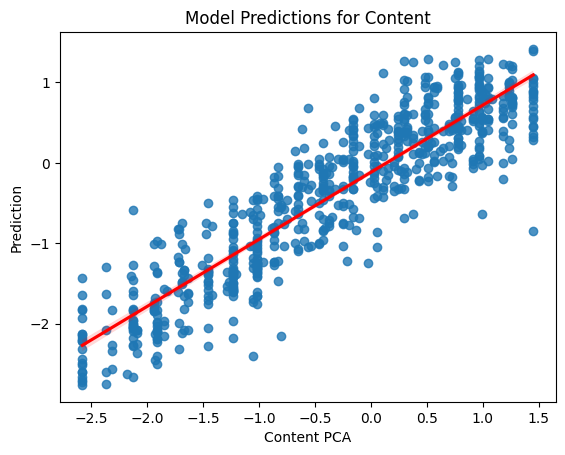

This allows the model to have access to both the summary and the source to provide more accurate scores. The model reported an R2 of 0.82 on the test set of summaries.
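A source-level hold-out and the R² metric can be sketched as follows. This is an illustrative example in plain Python, not the authors' evaluation code: the function names `split_by_source` and `r_squared`, the record layout, and the toy values are all assumptions.

```python
import random


def split_by_source(records, n_test_sources, seed=0):
    """Hold out every record belonging to n_test_sources randomly chosen sources."""
    sources = sorted({r["source_id"] for r in records})
    rng = random.Random(seed)
    test_sources = set(rng.sample(sources, n_test_sources))
    train = [r for r in records if r["source_id"] not in test_sources]
    test = [r for r in records if r["source_id"] in test_sources]
    return train, test


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy records (made up): 5 sources with 3 summaries each.
records = [{"source_id": s, "summary_id": i} for s in range(5) for i in range(3)]
train, test = split_by_source(records, n_test_sources=2)

# No source appears on both sides of the split.
assert not {r["source_id"] for r in train} & {r["source_id"] for r in test}

print(r_squared([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))  # 1.0 for perfect predictions
```

Splitting by source rather than by individual summary means the test score reflects performance on source texts the model did not see during training.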