metadata

base_model: []
library_name: transformers
tags:
- mergekit
- merge

boreas-10_7b-step1

NB: THIS MODEL NEEDS CONTINUED (PRE)TRAINING

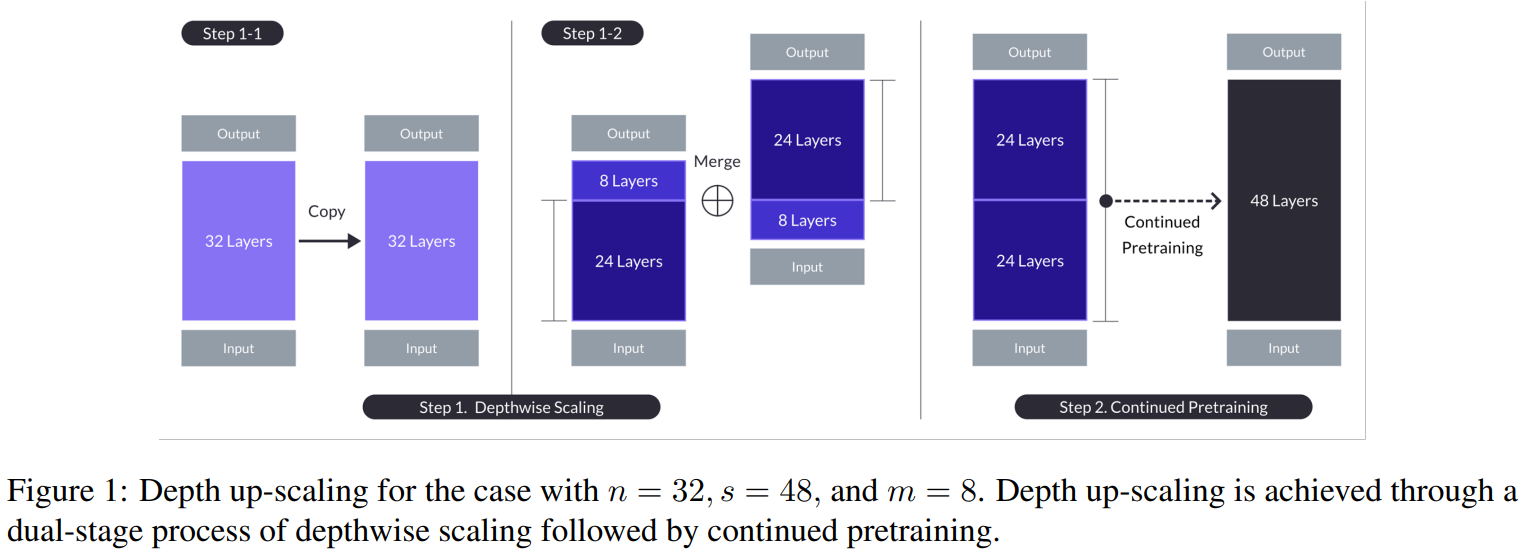

This model is the result of step 1 of upscaling Boreas-7B with mergekit. It attempts to reproduce the depth up-scaling described in the paper "SOLAR 10.7B: Scaling Large Language Models with Simple yet Effective Depth Up-Scaling". It corresponds to the output of step 1 in the figure above.

Step 2, continued training, is in progress. Its result will be a separate model.

Merge Details

Merge Method

This model was merged using the passthrough merge method.

Models Merged

The following models were included in the merge:

- boreas-7b-8-16-24-32

- boreas-7b-16-32

- boreas-7b-0-8-16-24

- boreas-7b-0-16

Configuration

The following YAML configuration was used to produce this model:

slices:
  - sources:
      - model: boreas-7b-0-16
        layer_range: [0, 16]
  - sources:
      - model: boreas-7b-0-8-16-24
        layer_range: [0, 8]
  - sources:
      - model: boreas-7b-8-16-24-32
        layer_range: [0, 8]
  - sources:
      - model: boreas-7b-16-32
        layer_range: [0, 16]
merge_method: passthrough
dtype: bfloat16
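
The passthrough configuration above stacks four slices end to end. As a rough sanity check, the sketch below adds up the slice lengths and prints which output layers come from which intermediate model. It assumes that `layer_range` is half-open (`[start, end)`), which is how mergekit configurations are usually read. The helper `stacked_layout` is a hypothetical name used only for this illustration and is not part of mergekit.

```python
# Slices copied from the passthrough configuration above.
slices = [
    ("boreas-7b-0-16", (0, 16)),
    ("boreas-7b-0-8-16-24", (0, 8)),
    ("boreas-7b-8-16-24-32", (0, 8)),
    ("boreas-7b-16-32", (0, 16)),
]


def stacked_layout(slices):
    """Return (total_layers, [(model, first_layer, end_layer_exclusive), ...]).

    Hypothetical helper: it only does arithmetic on the config values.
    """
    layout = []
    offset = 0
    for model, (start, end) in slices:
        n = end - start  # assumption: layer_range is half-open, [start, end)
        layout.append((model, offset, offset + n))
        offset += n
    return offset, layout


total, layout = stacked_layout(slices)
print("total layers:", total)
for model, lo, hi in layout:
    print(f"output layers {lo:2d}..{hi - 1:2d} <- {model}")
```

Under that assumption the merged model has 16 + 8 + 8 + 16 = 48 layers, compared with the 32 layers of Boreas-7B implied by the `[16, 32]` range used in the source configurations.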

The four models were created with the following configurations:

slices:
  - sources:
      - model: yhavinga/Boreas-7B
        layer_range: [0, 16]
merge_method: passthrough
dtype: bfloat16

---

slices:
  - sources:
      - model: yhavinga/Boreas-7B
        layer_range: [0, 8]
      - model: yhavinga/Boreas-7B
        layer_range: [16, 24]
merge_method: slerp
base_model: yhavinga/Boreas-7B
parameters:
  t:
    - filter: self_attn
      value: [0, 0.5, 0.3, 0.7, 1]
    - filter: mlp
      value: [1, 0.5, 0.7, 0.3, 0]
    - value: 0.5
dtype: bfloat16

---

slices:
  - sources:
      - model: yhavinga/Boreas-7B
        layer_range: [8, 16]
      - model: yhavinga/Boreas-7B
        layer_range: [24, 32]
merge_method: slerp
base_model: yhavinga/Boreas-7B
parameters:
  t:
    - filter: self_attn
      value: [0, 0.5, 0.3, 0.7, 1]
    - filter: mlp
      value: [1, 0.5, 0.7, 0.3, 0]
    - value: 0.5
dtype: bfloat16

---

slices:
  - sources:
      - model: yhavinga/Boreas-7B
        layer_range: [16, 32]
merge_method: passthrough
dtype: bfloat16
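
Two of the four configurations above use `merge_method: slerp` with a list-valued `t` per filter. The sketch below is a minimal, standard-library illustration of the idea and is not mergekit's actual code. It makes two assumptions: that a list such as `[0, 0.5, 0.3, 0.7, 1]` is treated as a gradient that is linearly interpolated across the layers of the slice, and that `t = 0` returns the first tensor while `t = 1` returns the second. The function names `slerp` and `expand_gradient` are hypothetical.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two equal-length vectors."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    # Cosine of the angle between the two directions, clamped for acos.
    dot = sum(a * b for a, b in zip(v0, v1)) / max(norm0 * norm1, eps)
    dot = max(-1.0, min(1.0, dot))
    theta = math.acos(dot)
    if math.sin(theta) < eps:
        # Nearly colinear vectors: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    w0 = math.sin((1 - t) * theta) / math.sin(theta)
    w1 = math.sin(t * theta) / math.sin(theta)
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


def expand_gradient(anchors, num_layers):
    """Linearly interpolate anchor values across num_layers layers.

    Assumption: this mirrors how a list-valued parameter is spread
    over the layers of a slice.
    """
    if num_layers == 1:
        return [float(anchors[0])]
    values = []
    for i in range(num_layers):
        pos = i / (num_layers - 1) * (len(anchors) - 1)
        lo = int(math.floor(pos))
        hi = min(lo + 1, len(anchors) - 1)
        frac = pos - lo
        values.append((1 - frac) * anchors[lo] + frac * anchors[hi])
    return values


# Per-layer interpolation factors for an 8-layer slice, using the
# anchor lists from the slerp configurations above.
attn_t = expand_gradient([0, 0.5, 0.3, 0.7, 1], 8)
mlp_t = expand_gradient([1, 0.5, 0.7, 0.3, 0], 8)
for layer, (a, m) in enumerate(zip(attn_t, mlp_t)):
    print(f"layer {layer}: self_attn t={a:.3f}, mlp t={m:.3f}")

# Toy example: interpolate halfway between two orthogonal unit vectors.
halfway = slerp(0.5, [1.0, 0.0], [0.0, 1.0])
```

Because the two anchor lists mirror each other, the attention and MLP factors in this sketch sum to 1 at every layer, so wherever the attention weights lean toward one slice, the MLP weights lean toward the other. Tensors that match neither filter use the fallback `value: 0.5`.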