metadata

license: creativeml-openrail-m

language:

- en

tags:

- art

- Stable Diffusion

Model Card for lyraSD

We consider Diffusers the more extensible framework for the SD ecosystem. We have therefore pivoted to Diffusers, which led to a complete update of lyraSD.

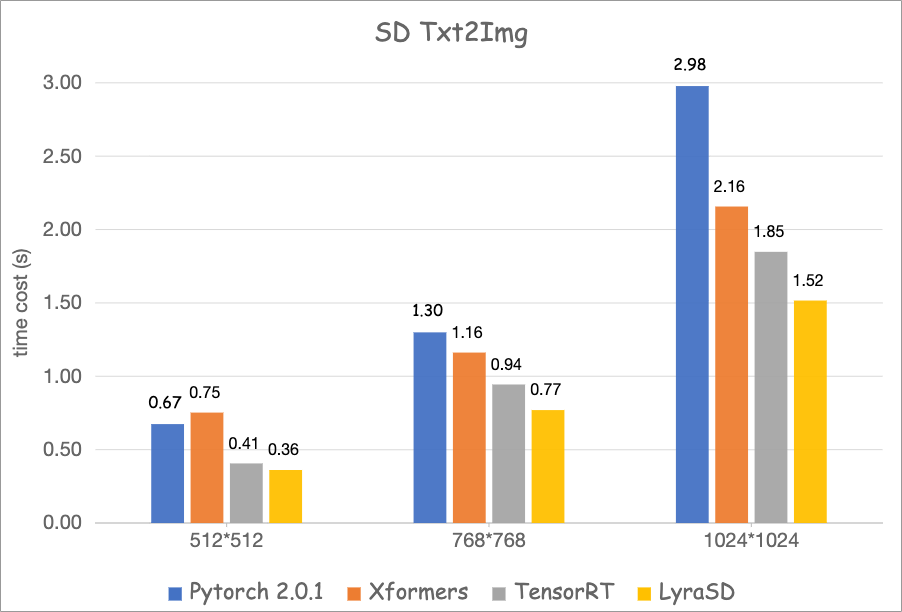

lyraSD is currently the fastest available Stable Diffusion implementation whose outputs align 100% with those of Diffusers. It generates a 512x512 image in only 0.36 seconds, up to 50% faster than the original version.

Among its main features are:

- All commonly used SD1.5 and SDXL pipelines

  - Text2Img

  - Img2Img

  - Inpainting

  - ControlNetText2Img

  - ControlNetImg2Img

  - IpAdapterText2Img

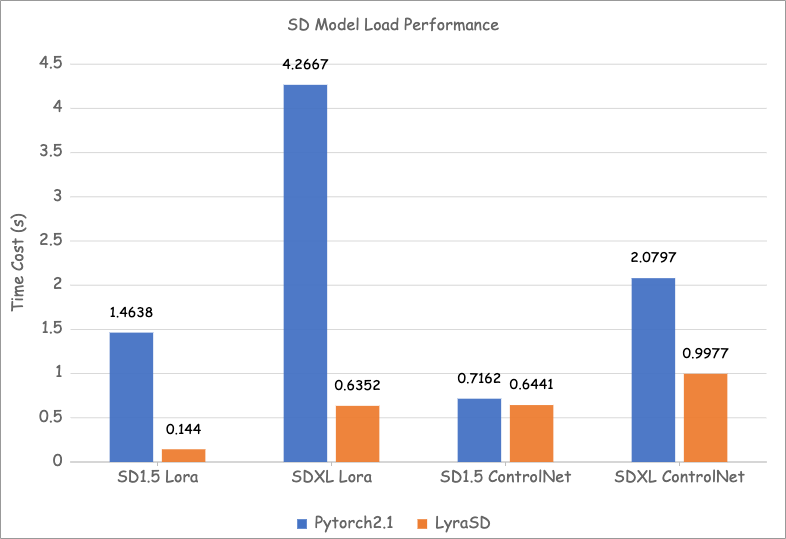

- Fast ControlNet hot swap: ControlNet model weights can be hot-swapped within 0.6s

- Fast LoRA hot swap: a LoRA can be hot-swapped within 0.14s

- 100% likeness to Diffusers output

- Supported devices: any GPU with SM version >= 80, for example the Nvidia Ampere architecture (A2, A10, A16, A30, A40, A100), RTX 4090, RTX 3080, etc.
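The SM >= 80 requirement can be checked before the lyraSD library is loaded. The following is a minimal sketch, not part of lyraSD: `sm_version`, `is_supported`, and `current_gpu_supported` are hypothetical helper names, and the only external call is PyTorch's `torch.cuda.get_device_capability`, which returns a `(major, minor)` tuple.

```python
MIN_SM = 80  # minimum SM version stated in the feature list above


def sm_version(capability):
    """Convert a (major, minor) compute capability into an SM number, e.g. (8, 6) -> 86."""
    major, minor = capability
    return major * 10 + minor


def is_supported(capability, min_sm=MIN_SM):
    """Return True if the compute capability meets the minimum SM version."""
    return sm_version(capability) >= min_sm


def current_gpu_supported(device_index=0):
    """Check the local GPU; requires PyTorch built with CUDA support."""
    import torch  # imported lazily so the pure helpers work without PyTorch

    if not torch.cuda.is_available():
        return False
    return is_supported(torch.cuda.get_device_capability(device_index))
```

Under this check an A100 (compute capability 8.0), an RTX 3080 (8.6), and an RTX 4090 (8.9) pass, while a V100 (7.0) does not.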

Speed

Test environment

- Device: Nvidia A100 40G

- Nvidia driver version: 525.105.17

- Nvidia CUDA version: 12.0

- Precision: fp16

- Steps: 20

- Sampler: EulerA
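Latency figures like the ones in this section are usually taken after a few warm-up runs, so that one-time costs do not distort the result. The sketch below shows one way to do that. It is an illustration, not the harness used to produce the numbers in this card, and `benchmark` is a hypothetical helper name.

```python
import statistics
import time


def benchmark(fn, warmup=2, runs=5):
    """Call fn() `warmup` times untimed, then `runs` times timed.

    Returns (mean, best) latency in seconds.
    """
    for _ in range(warmup):
        fn()  # warm-up calls absorb one-time costs such as CUDA context creation
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return statistics.mean(timings), min(timings)
```

A pipeline call can be wrapped in a zero-argument function or lambda and passed as `fn`. If the timed call could return before the GPU work has finished, calling `torch.cuda.synchronize()` at the end of `fn` keeps the measurement honest.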

SD1.5 Text2Img Performance

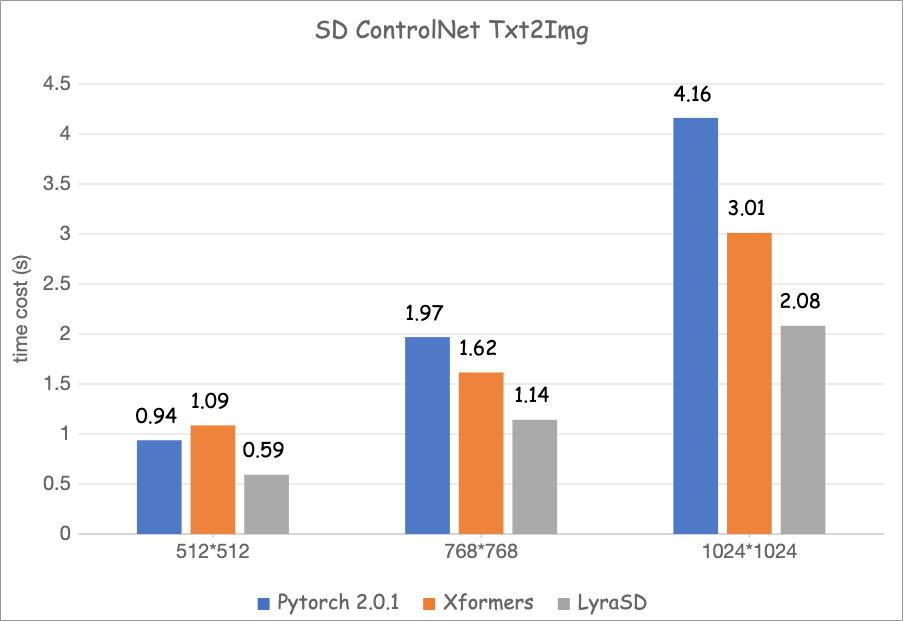

SD1.5 ControlNet-Text2Img Performance

SDXL Text2Img Performance

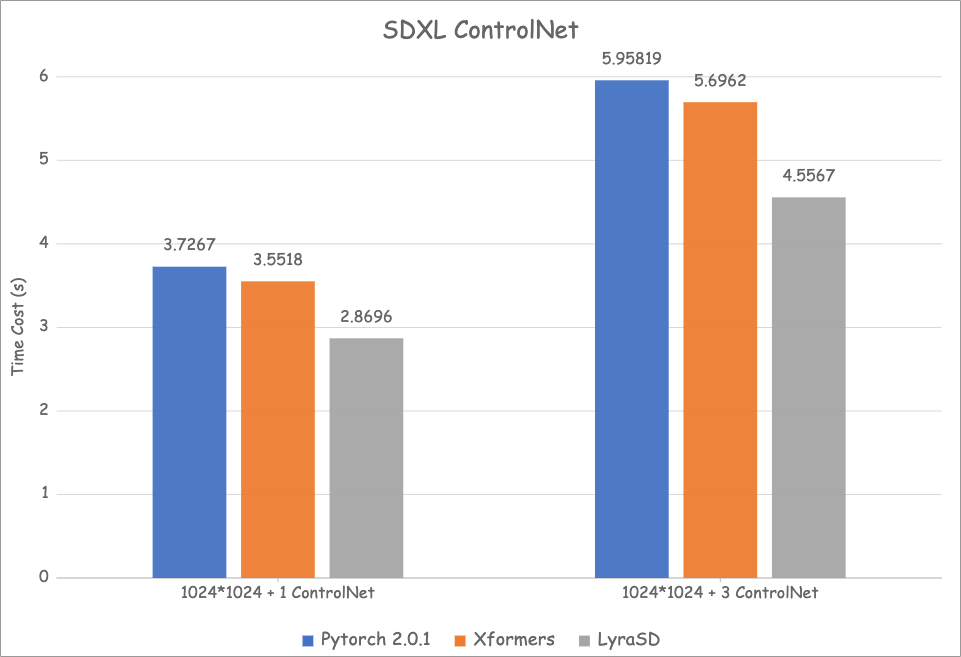

SDXL ControlNet-Text2Img Performance

SD Model Load Performance

Model Sources

SD1.5

- Checkpoint: https://civitai.com/models/7371/rev-animated

- ControlNet: https://huggingface.co/lllyasviel/sd-controlnet-canny

- LoRA: https://civitai.com/models/18323?modelVersionId=46846

SDXL

- Checkpoint: https://civitai.com/models/43977?modelVersionId=227916

- ControlNet: https://huggingface.co/diffusers/controlnet-canny-sdxl-1.0

- LoRA: https://civitai.com/models/18323?modelVersionId=46846

SD1.5 Text2Img Uses

import torch

import time

from lyrasd_model import LyraSdTxt2ImgPipeline

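# Path to the model files; it should contain the following structure (same as diffusers):

# 1. clip model

# 2. the converted, optimized unet model, placed in the unet_bins folder inside it

# 3. vae model

# 4. scheduler config

# LyraSD's compiled C++ shared library, which contains the C++ CUDA computation details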

lib_path = "./lyrasd_model/lyrasd_lib/libth_lyrasd_cu11_sm80.so"

model_path = "./models/lyrasd_rev_animated"

lora_path = "./models/lyrasd_xiaorenshu_lora"

# Build the Txt2Img pipeline

model = LyraSdTxt2ImgPipeline(model_path, lib_path)

# load lora

# arguments: LoRA model path, name, LoRA strength

model.load_lora_v2(lora_path, "xiaorenshu", 0.4)

# Prepare the inputs and hyperparameters

prompt = "a cat, cute, cartoon, concise, traditional, chinese painting, Tang and Song Dynasties, masterpiece, 4k, 8k, UHD, best quality"

negative_prompt = "(((horrible))), (((scary))), (((naked))), (((large breasts))), high saturation, colorful, human:2, body:2, low quality, bad quality, lowres, out of frame, duplicate, watermark, signature, text, frames, cut, cropped, malformed limbs, extra limbs, (((missing arms))), (((missing legs)))"

height, width = 512, 512

steps = 30

guidance_scale = 7

generator = torch.Generator().manual_seed(123)

num_images = 1

start = time.perf_counter()

# Run inference

images = model(prompt, height, width, steps,

               guidance_scale, negative_prompt, num_images,

               generator=generator)

print("image gen cost: ", time.perf_counter() - start)

# Save the generated images

for i, image in enumerate(images):

    image.save(f"outputs/res_txt2img_lora_{i}.png")

# unload the LoRA; arguments: LoRA name, whether to clear the LoRA cache

model.unload_lora_v2("xiaorenshu", True)
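The load/generate/unload sequence above can be wrapped so that the LoRA is unloaded even if generation raises. The sketch below assumes only the two calls shown in the script, `load_lora_v2(path, name, strength)` and `unload_lora_v2(name, clear_cache)`. The `lora_applied` helper itself is hypothetical and not part of lyraSD.

```python
from contextlib import contextmanager


@contextmanager
def lora_applied(model, lora_path, name, strength, clear_cache=True):
    """Load a LoRA for the duration of a with-block, then unload it."""
    model.load_lora_v2(lora_path, name, strength)
    try:
        yield model
    finally:
        # Runs even if the body raises, so the LoRA is always unloaded.
        model.unload_lora_v2(name, clear_cache)
```

With this helper, the script's body would become `with lora_applied(model, lora_path, "xiaorenshu", 0.4):` followed by the indented generation call.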

SDXL Text2Img Uses

import torch

import time

from lyrasd_model import LyraSdXLTxt2ImgPipeline

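# Path to the model files; it should contain the following structure:

# 1. clip model

# 2. the converted, optimized unet model, placed in the unet_bins folder inside it

# 3. vae model

# 4. scheduler config

# LyraSD's compiled C++ shared library, which contains the C++ CUDA computation details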

lib_path = "./lyrasd_model/lyrasd_lib/libth_lyrasd_cu11_sm80.so"

model_path = "./models/lyrasd_helloworldSDXL20Fp16"

lora_path = "./models/lyrasd_xiaorenshu_lora"

# Build the Txt2Img pipeline

model = LyraSdXLTxt2ImgPipeline(model_path, lib_path)

# load lora

# arguments: LoRA model path, name, LoRA strength

model.load_lora_v2(lora_path, "xiaorenshu", 0.4)

# Prepare the inputs and hyperparameters

prompt = "a cat, cute, cartoon, concise, traditional, chinese painting, Tang and Song Dynasties, masterpiece, 4k, 8k, UHD, best quality"

negative_prompt = "(((horrible))), (((scary))), (((naked))), (((large breasts))), high saturation, colorful, human:2, body:2, low quality, bad quality, lowres, out of frame, duplicate, watermark, signature, text, frames, cut, cropped, malformed limbs, extra limbs, (((missing arms))), (((missing legs)))"

height, width = 512, 512

steps = 30

guidance_scale = 7

generator = torch.Generator().manual_seed(123)

num_images = 1

start = time.perf_counter()

# Run inference

images = model(

    prompt,

    height=height,

    width=width,

    num_inference_steps=steps,

    num_images_per_prompt=num_images,

    guidance_scale=guidance_scale,

    negative_prompt=negative_prompt,

    generator=generator,

)

print("image gen cost: ", time.perf_counter() - start)

# Save the generated images

for i, image in enumerate(images):

    image.save(f"outputs/res_txt2img_xl_lora_{i}.png")

# unload the LoRA; arguments: LoRA name, whether to clear the LoRA cache

model.unload_lora_v2("xiaorenshu", True)
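Both usage scripts write into an `outputs/` directory. If the returned images are PIL images, which the `image.save(...)` calls suggest but this card does not state, saving fails when that directory does not exist. The hypothetical helper below, not part of lyraSD, creates the directory first and returns the written paths.

```python
import os


def save_images(images, out_dir="outputs", prefix="res_txt2img"):
    """Save each image as <out_dir>/<prefix>_<i>.png, creating out_dir if needed."""
    os.makedirs(out_dir, exist_ok=True)
    paths = []
    for i, image in enumerate(images):
        path = os.path.join(out_dir, f"{prefix}_{i}.png")
        image.save(path)  # assumes PIL-style objects exposing .save(path)
        paths.append(path)
    return paths
```

For the SDXL script this could be called as `save_images(images, prefix="res_txt2img_xl_lora")`, which produces the same file names as the loop above.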

Demo output

Text2Img

SD1.5 Text2Img

SD1.5 Text2Img with Lora

SDXL Text2Img

SDXL Text2Img with Lora

ControlNet Text2Img

Control Image

SD1.5 ControlNet Text2Img Output

SDXL ControlNet Text2Img Output

Docker Environment Recommendation

- For CUDA 11.X: we recommend nvcr.io/nvidia/pytorch:22.12-py3

- For CUDA 12.0: we recommend nvcr.io/nvidia/pytorch:23.02-py3

docker pull nvcr.io/nvidia/pytorch:23.02-py3

docker run --rm -it --gpus all -v ./:/lyraSD nvcr.io/nvidia/pytorch:23.02-py3

pip install -r requirements.txt

python txt2img_demo.py

Citation

@Misc{lyraSD_2023,

author = {Kangjian Wu and Zhengtao Wang and Yibo Lu and Haoxiong Su and Bin Wu},

title = {lyraSD: Accelerating Stable Diffusion with best flexibility},

howpublished = {\url{https://huggingface.co/TMElyralab/lyraSD}},

year = {2024}

}

Report bug

- Start a discussion to report any bugs: https://huggingface.co/TMElyralab/lyraSD/discussions

- Mark the report with [bug] in the title.