PIE Dataset Card for "SciDTB Argmin"

This is a PyTorch-IE wrapper for the SciDTB ArgMin Huggingface dataset loading script.

Usage

from pie_datasets import load_dataset

from pytorch_ie.documents import TextDocumentWithLabeledSpansAndBinaryRelations

# load the dataset

dataset = load_dataset("pie/scidtb_argmin")

# if required, normalize the document type (see section Document Converters below)

dataset_converted = dataset.to_document_type(TextDocumentWithLabeledSpansAndBinaryRelations)

assert isinstance(dataset_converted["train"][0], TextDocumentWithLabeledSpansAndBinaryRelations)

# get first relation in the first document

doc = dataset_converted["train"][0]

print(doc.binary_relations[0])

# BinaryRelation(head=LabeledSpan(start=251, end=454, label='means', score=1.0), tail=LabeledSpan(start=455, end=712, label='proposal', score=1.0), label='detail', score=1.0)

print(doc.binary_relations[0].resolve())

# ('detail', (('means', 'We observe , identify , and detect naturally occurring signals of interestingness in click transitions on the Web between source and target documents , which we collect from commercial Web browser logs .'), ('proposal', 'The DSSM is trained on millions of Web transitions , and maps source-target document pairs to feature vectors in a latent space in such a way that the distance between source documents and their corresponding interesting targets in that space is minimized .')))

Data Schema

The document type for this dataset is SciDTBArgminDocument which defines the following data fields:

- tokens (tuple of string)
- id (str, optional)
- metadata (dictionary, optional)

and the following annotation layers:

- units (annotation type: LabeledSpan, target: tokens)
- relations (annotation type: BinaryRelation, target: units)

See here for the annotation type definitions.

Document Converters

The dataset provides document converters for the following target document types:

- pytorch_ie.documents.TextDocumentWithLabeledSpansAndBinaryRelations
  - labeled_spans: LabeledSpan annotations, converted from SciDTBArgminDocument's units
    - labels: proposal, assertion, result, observation, means, description
    - tuples of tokens are joined with a whitespace to create text for LabeledSpans
  - binary_relations: BinaryRelation annotations, converted from SciDTBArgminDocument's relations
    - labels: support, attack, additional, detail, sequence
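The whitespace join described above can be illustrated in plain Python. This is a minimal sketch, not the converter's actual code: the helper name `tokens_to_text_and_spans`, the `(start, end, label)` tuple layout with exclusive end indices, and the sample tokens are all assumptions made for illustration.

```python
from typing import List, Sequence, Tuple

# Assumed layout: (start token index, end token index (exclusive), label)
TokenSpan = Tuple[int, int, str]
# Assumed layout: (start character offset, end character offset (exclusive), label)
CharSpan = Tuple[int, int, str]


def tokens_to_text_and_spans(
    tokens: Sequence[str], units: Sequence[TokenSpan]
) -> Tuple[str, List[CharSpan]]:
    """Join tokens with single spaces and map token-level spans to character offsets."""
    starts: List[int] = []
    ends: List[int] = []
    offset = 0
    for token in tokens:
        starts.append(offset)
        offset += len(token)
        ends.append(offset)
        offset += 1  # account for the joining whitespace
    text = " ".join(tokens)
    char_spans = [(starts[s], ends[e - 1], label) for s, e, label in units]
    return text, char_spans


# Hypothetical example document with two adjacent units
tokens = ("We", "propose", "a", "model", ".", "It", "works", "well", ".")
units = [(0, 5, "proposal"), (5, 9, "result")]
text, spans = tokens_to_text_and_spans(tokens, units)
for start, end, label in spans:
    print(label, repr(text[start:end]))
```

Under this scheme, adjacent units are separated by exactly one character, which is consistent with the offsets in the usage example (head ends at 454, tail starts at 455).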

See here for the document type definitions.

Collected Statistics after Document Conversion

We use the script evaluate_documents.py from PyTorch-IE-Hydra-Template to generate these statistics.

After checking out that code, the statistics and plots can be generated by the command:

python src/evaluate_documents.py dataset=scidtb_argmin_base metric=METRIC

where METRIC is the name of one of the available metric configs in config/metric/METRIC (see metrics).

This also requires the following dataset config in configs/dataset/scidtb_argmin_base.yaml within the repo directory:

_target_: src.utils.execute_pipeline
input:
  _target_: pie_datasets.DatasetDict.load_dataset
  path: pie/scidtb_argmin
  revision: 335a8e6168919d7f204c6920eceb96745dbd161b

For token-based metrics, this uses bert-base-uncased from transformers.AutoTokenizer (see AutoTokenizer and bert-base-uncased) to tokenize the text in TextDocumentWithLabeledSpansAndBinaryRelations (see document type).

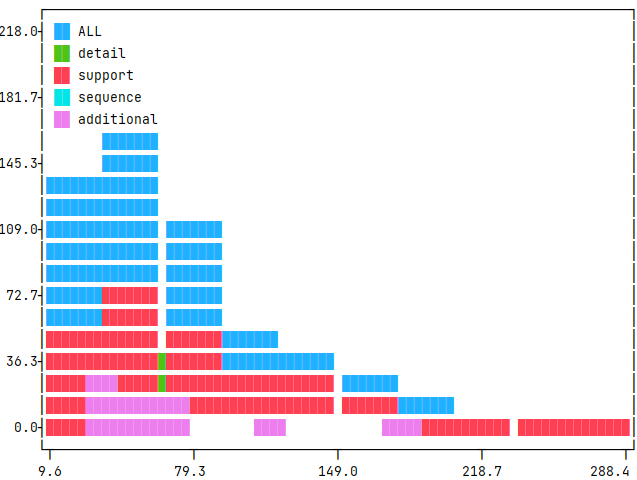

Relation argument (outer) token distance per label

The distance is measured from the first token of the first argumentative unit to the last token of the last unit, a.k.a. outer distance.

We collect the following statistics: number of documents in the split (no. doc), number of relations (len), mean token distance (mean), standard deviation of the distance (std), minimum outer distance (min), and maximum outer distance (max). We also present histograms showing the distribution of these relation distances (x-axis: distance, y-axis: unit counts).
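The outer distance defined above can be sketched as follows. This is only an illustration under stated assumptions, not the code of the metric itself: the function name `outer_distance` and the `(start, end)` token-offset layout with exclusive end are hypothetical, and the example offsets are made up.

```python
from typing import Tuple

# Assumed layout: (start token index, end token index (exclusive))
Span = Tuple[int, int]


def outer_distance(head: Span, tail: Span) -> int:
    """Tokens from the start of the earlier argument to the end of the later one."""
    return max(head[1], tail[1]) - min(head[0], tail[0])


# Hypothetical relation whose head follows its tail in the text
head = (40, 75)
tail = (0, 40)
print(outer_distance(head, tail))
```

Because the function takes the minimum start and the maximum end, the result does not depend on whether the head precedes or follows the tail.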

Command

python src/evaluate_documents.py dataset=scidtb_argmin_base metric=relation_argument_token_distances

| | len | max | mean | min | std |
|---|---|---|---|---|---|
| ALL | 586 | 277 | 75.239 | 21 | 40.312 |
| additional | 54 | 180 | 59.593 | 36 | 29.306 |
| detail | 258 | 163 | 65.62 | 22 | 29.21 |
| sequence | 22 | 93 | 59.727 | 38 | 17.205 |
| support | 252 | 277 | 89.794 | 21 | 48.118 |

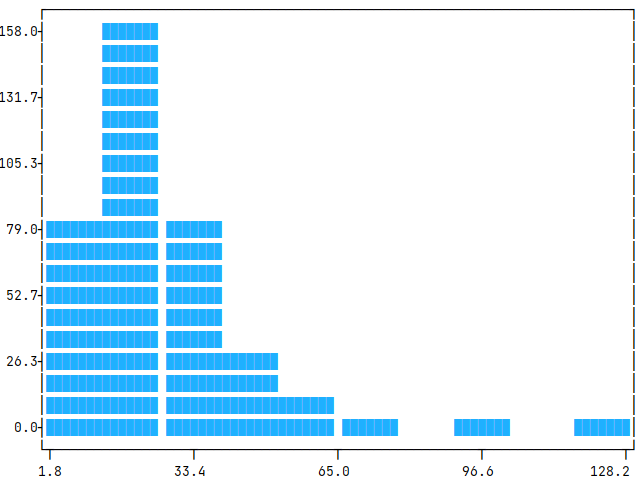

Span lengths (tokens)

The span length is measured from the first token of an argumentative unit to the last token of that unit.

We collect the following statistics: number of documents in the split (no. doc), number of spans (len), mean number of tokens in a span (mean), standard deviation of the number of tokens (std), minimum number of tokens in a span (min), and maximum number of tokens in a span (max). We also present histograms showing the distribution of these token counts (x-axis: tokens per span, y-axis: unit counts).
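The summary statistics listed above can be reproduced for any list of span lengths with the standard library. The sketch below is not taken from evaluate_documents.py: the helper name `summarize` is hypothetical, the input values are made up, and the population standard deviation is an assumption, since the source does not say which variant the script reports.

```python
import statistics
from typing import Dict, Sequence


def summarize(values: Sequence[int]) -> Dict[str, float]:
    """Collect len, mean, std, min, and max for a sequence of token counts."""
    return {
        "len": len(values),
        "mean": statistics.mean(values),
        # Assumption: population standard deviation; the actual script may differ.
        "std": statistics.pstdev(values),
        "min": min(values),
        "max": max(values),
    }


# Made-up span lengths, not taken from the dataset
span_lengths = [7, 21, 28, 35, 49]
stats = summarize(span_lengths)
for name, value in stats.items():
    print(name, value)
```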

Command

python src/evaluate_documents.py dataset=scidtb_argmin_base metric=span_lengths_tokens

| statistics | train |
|---|---|
| no. doc | 60 |
| len | 353 |
| mean | 27.946 |
| std | 13.054 |
| min | 7 |
| max | 123 |

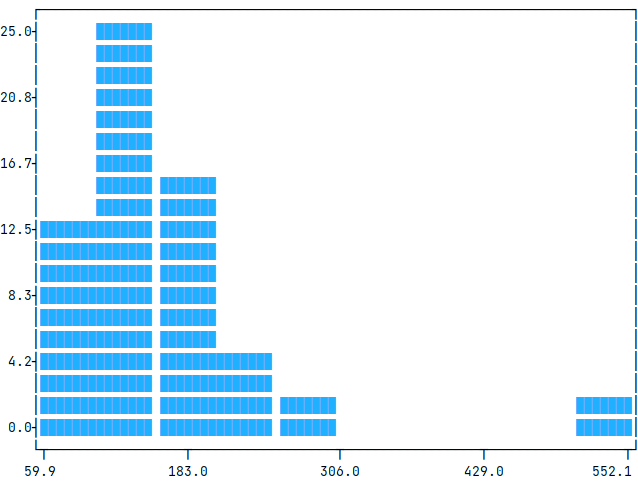

Token length (tokens)

The token length is measured from the first token of the document to the last one.

We collect the following statistics: number of documents in the split (no. doc), mean document length in tokens (mean), standard deviation of the length (std), minimum number of tokens in a document (min), and maximum number of tokens in a document (max). We also present histograms showing the distribution of these token lengths (x-axis: tokens per document, y-axis: unit counts).

Command

python src/evaluate_documents.py dataset=scidtb_argmin_base metric=count_text_tokens

| statistics | train |
|---|---|
| no. doc | 60 |
| mean | 164.417 |
| std | 64.572 |
| min | 80 |
| max | 532 |