OpenAI GPT2

Overview

The OpenAI GPT-2 model was proposed in Language Models are Unsupervised Multitask Learners by Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei and Ilya Sutskever from OpenAI. It is a causal (unidirectional) transformer pretrained with a language modeling objective on a very large corpus of roughly 40 GB of text data.

The abstract from the paper is the following:

GPT-2 is a large transformer-based language model with 1.5 billion parameters, trained on a dataset[1] of 8 million web pages. GPT-2 is trained with a simple objective: predict the next word, given all of the previous words within some text. The diversity of the dataset causes this simple goal to contain naturally occurring demonstrations of many tasks across diverse domains. GPT-2 is a direct scale-up of GPT, with more than 10X the parameters and trained on more than 10X the amount of data.

Write With Transformer is a webapp created and hosted by Hugging Face showcasing the generative capabilities of several models. GPT-2 is one of them and is available in five different sizes: small, medium, large, xl and a distilled version of the small checkpoint: distilgpt-2.

This model was contributed by thomwolf. The original code can be found here.

Usage tips

- GPT-2 is a model with absolute position embeddings so it’s usually advised to pad the inputs on the right rather than the left.

- GPT-2 was trained with a causal language modeling (CLM) objective and is therefore powerful at predicting the next token in a sequence. This allows GPT-2 to generate syntactically coherent text, as can be observed in the run_generation.py example script.

- The model can take past_key_values (for PyTorch) or past (for TF) as input; these are the previously computed key/value attention pairs. Passing them prevents the model from re-computing values it has already computed during text generation. For more information on their usage, see the past_key_values argument of the GPT2Model.forward() method for PyTorch, or the past argument of the TFGPT2Model.call() method for TF.

- Enabling the scale_attn_by_inverse_layer_idx and reorder_and_upcast_attn flags will apply the training stability improvements from Mistral (for PyTorch only).
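The advice to pad on the right can be checked without downloading any weights. The sketch below is illustrative only: it builds a tiny, randomly initialised GPT2Model (every size, token id, and the pad id 0 are arbitrary assumptions; GPT-2 ships without a dedicated pad token) and compares the hidden states of a short sequence run on its own, right-padded, and moved to later positions, which is what left padding does to the real tokens when position_ids are not passed explicitly.

```python
import torch
from transformers import GPT2Config, GPT2Model

torch.manual_seed(0)
# Tiny random model (illustrative sizes) so nothing is downloaded.
config = GPT2Config(vocab_size=100, n_positions=32, n_embd=32, n_layer=2, n_head=2)
model = GPT2Model(config).eval()  # eval() disables dropout

short = torch.tensor([[11, 12, 13]])
n_real, n_pad, pad_id = short.shape[1], 2, 0  # pad_id is an arbitrary assumption


def hidden(input_ids, **kwargs):
    """Return the last hidden state without tracking gradients."""
    with torch.no_grad():
        return model(input_ids=input_ids, **kwargs).last_hidden_state


# Reference: the sequence on its own, at positions 0..2.
reference = hidden(short)

# Right padding: real tokens keep positions 0..2; pads come after them and are masked.
right_ids = torch.cat([short, torch.full((1, n_pad), pad_id, dtype=torch.long)], dim=1)
right_mask = torch.cat(
    [torch.ones(1, n_real, dtype=torch.long), torch.zeros(1, n_pad, dtype=torch.long)], dim=1
)
right_out = hidden(right_ids, attention_mask=right_mask)[:, :n_real]

# Left padding without explicit position_ids would put the real tokens at positions 2..4.
shifted_positions = torch.arange(n_pad, n_pad + n_real).unsqueeze(0)
shifted_out = hidden(short, position_ids=shifted_positions)

print(torch.allclose(reference, right_out, atol=1e-5))    # True
print(torch.allclose(reference, shifted_out, atol=1e-5))  # False
```

With a real tokenizer, the equivalent setup is usually tokenizer.padding_side = "right" (the default) plus a pad token such as tokenizer.pad_token = tokenizer.eos_token, passing the returned attention_mask to the model.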

Usage example

The generate() method can be used to generate text with the GPT-2 model.

>>> from transformers import AutoModelForCausalLM, AutoTokenizer
>>> model = AutoModelForCausalLM.from_pretrained("gpt2")
>>> tokenizer = AutoTokenizer.from_pretrained("gpt2")
>>> prompt = "GPT2 is a model developed by OpenAI."
>>> input_ids = tokenizer(prompt, return_tensors="pt").input_ids
>>> gen_tokens = model.generate(
...     input_ids,
...     do_sample=True,
...     temperature=0.9,
...     max_length=100,
... )
>>> gen_text = tokenizer.batch_decode(gen_tokens)[0]

Using Flash Attention 2

Flash Attention 2 is a faster, optimized version of the attention score computation that relies on CUDA kernels.

Installation

First, check whether your hardware is compatible with Flash Attention 2. The latest list of compatible hardware can be found in the official documentation. If your hardware is not compatible with Flash Attention 2, you can still benefit from attention kernel optimizations through Better Transformer support.

Next, install the latest version of Flash Attention 2:

pip install -U flash-attn --no-build-isolation

Usage

To load a model using Flash Attention 2, pass the argument attn_implementation="flash_attention_2" to .from_pretrained. We’ll also load the model in half-precision (e.g. torch.float16), since it results in almost no degradation to generation quality but significantly lower memory usage and faster inference:

>>> import torch
>>> from transformers import AutoModelForCausalLM, AutoTokenizer
>>> device = "cuda"  # the device to load the model onto
>>> model = AutoModelForCausalLM.from_pretrained("gpt2", torch_dtype=torch.float16, attn_implementation="flash_attention_2")
>>> tokenizer = AutoTokenizer.from_pretrained("gpt2")
>>> prompt = "def hello_world():"
>>> model_inputs = tokenizer([prompt], return_tensors="pt").to(device)
>>> model.to(device)
>>> generated_ids = model.generate(**model_inputs, max_new_tokens=100, do_sample=True)
>>> tokenizer.batch_decode(generated_ids)[0]

Expected speedups

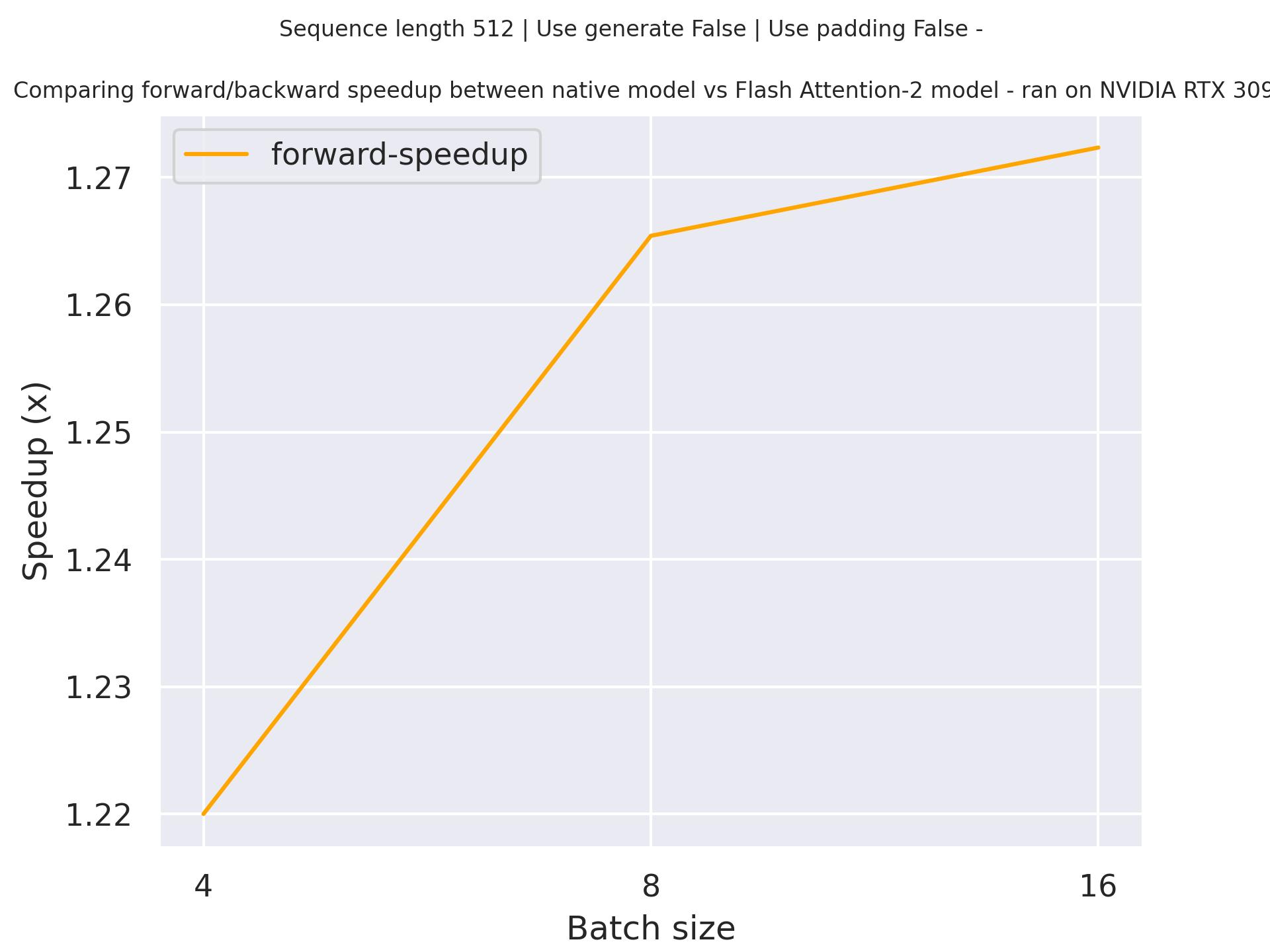

Below is an expected speedup diagram that compares pure inference time between the native implementation in transformers using gpt2 checkpoint and the Flash Attention 2 version of the model using a sequence length of 512.

Using Scaled Dot Product Attention (SDPA)

PyTorch includes a native scaled dot-product attention (SDPA) operator as part of torch.nn.functional. This function encompasses several implementations that can be applied depending on the inputs and the hardware in use. See the official documentation or the GPU Inference page for more information.
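As a sanity check of what the operator computes, the sketch below compares torch.nn.functional.scaled_dot_product_attention with is_causal=True against an explicit softmax(Q K^T / sqrt(d)) V with a lower-triangular mask. The tensor sizes are arbitrary assumptions, and this exercises the generic PyTorch operator rather than GPT-2's own attention module.

```python
import math

import torch
import torch.nn.functional as F

torch.manual_seed(0)
batch, heads, seq, head_dim = 2, 4, 6, 8  # arbitrary illustrative sizes
q = torch.randn(batch, heads, seq, head_dim)
k = torch.randn(batch, heads, seq, head_dim)
v = torch.randn(batch, heads, seq, head_dim)

# Fused operator with a built-in causal mask (no dropout by default).
fused = F.scaled_dot_product_attention(q, k, v, is_causal=True)

# Explicit reference: scaled scores, causal mask, softmax, weighted sum of values.
scores = q @ k.transpose(-2, -1) / math.sqrt(head_dim)
causal = torch.ones(seq, seq, dtype=torch.bool).tril()
scores = scores.masked_fill(~causal, float("-inf"))
eager = torch.softmax(scores, dim=-1) @ v

print(torch.allclose(fused, eager, atol=1e-5))  # True
```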

SDPA is used by default for torch>=2.1.1 when an implementation is available, but you may also set attn_implementation="sdpa" in from_pretrained() to explicitly request SDPA to be used.

import torch
from transformers import AutoModelForCausalLM
model = AutoModelForCausalLM.from_pretrained("gpt2", torch_dtype=torch.float16, attn_implementation="sdpa")
...

For the best speedups, we recommend loading the model in half-precision (e.g. torch.float16 or torch.bfloat16).

On a local benchmark (rtx3080ti-16GB, PyTorch 2.2.1, OS Ubuntu 22.04) using float16 with gpt2-large, we saw the following speedups during training and inference.

Training

| Batch size | Seq len | Time per batch, eager (s) | Time per batch, SDPA (s) | Speedup (%) | Peak mem, eager (MB) | Peak mem, SDPA (MB) | Mem saving (%) |
|---|---|---|---|---|---|---|---|
| 1 | 128 | 0.039 | 0.032 | 23.042 | 3482.32 | 3494.62 | -0.352 |
| 1 | 256 | 0.073 | 0.059 | 25.15 | 3546.66 | 3552.6 | -0.167 |
| 1 | 512 | 0.155 | 0.118 | 30.96 | 4230.1 | 3665.59 | 15.4 |
| 1 | 1024 | 0.316 | 0.209 | 50.839 | 8682.26 | 4881.09 | 77.875 |
| 2 | 128 | 0.07 | 0.06 | 15.324 | 3557.8 | 3545.91 | 0.335 |
| 2 | 256 | 0.143 | 0.122 | 16.53 | 3901.5 | 3657.68 | 6.666 |
| 2 | 512 | 0.267 | 0.213 | 25.626 | 7062.21 | 4876.47 | 44.822 |
| 2 | 1024 | OOM | 0.404 | / | OOM | 8096.35 | SDPA does not OOM |
| 4 | 128 | 0.134 | 0.128 | 4.412 | 3675.79 | 3648.72 | 0.742 |
| 4 | 256 | 0.243 | 0.217 | 12.292 | 6129.76 | 4871.12 | 25.839 |
| 4 | 512 | 0.494 | 0.406 | 21.687 | 12466.6 | 8102.64 | 53.858 |
| 4 | 1024 | OOM | 0.795 | / | OOM | 14568.2 | SDPA does not OOM |

Inference

| Batch size | Seq len | Per-token latency, eager (ms) | Per-token latency, SDPA (ms) | Speedup (%) | Mem, eager (MB) | Mem, SDPA (MB) | Mem saving (%) |
|---|---|---|---|---|---|---|---|
| 1 | 128 | 7.991 | 6.968 | 14.681 | 1685.2 | 1701.32 | -0.947 |
| 1 | 256 | 8.462 | 7.199 | 17.536 | 1745.49 | 1770.78 | -1.428 |
| 1 | 512 | 8.68 | 7.853 | 10.529 | 1907.69 | 1921.29 | -0.708 |
| 1 | 768 | 9.101 | 8.365 | 8.791 | 2032.93 | 2068.12 | -1.701 |
| 2 | 128 | 9.169 | 9.001 | 1.861 | 1803.84 | 1811.4 | -0.418 |
| 2 | 256 | 9.907 | 9.78 | 1.294 | 1907.72 | 1921.44 | -0.714 |
| 2 | 512 | 11.519 | 11.644 | -1.071 | 2176.86 | 2197.75 | -0.951 |
| 2 | 768 | 13.022 | 13.407 | -2.873 | 2464.3 | 2491.06 | -1.074 |
| 4 | 128 | 10.097 | 9.831 | 2.709 | 1942.25 | 1985.13 | -2.16 |
| 4 | 256 | 11.599 | 11.398 | 1.764 | 2177.28 | 2197.86 | -0.937 |
| 4 | 512 | 14.653 | 14.45 | 1.411 | 2753.16 | 2772.57 | -0.7 |
| 4 | 768 | 17.846 | 17.617 | 1.299 | 3327.04 | 3343.97 | -0.506 |
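The percentage columns in both tables appear to follow (eager / SDPA - 1) x 100, so they are expressed relative to the SDPA figure rather than the eager one. This is inferred from the numbers themselves, not stated alongside the benchmark; the sketch below (relative_gain is a hypothetical helper) reproduces two reported entries. Because the time columns are rounded to a few digits, recomputing a speedup from them can differ slightly from the reported value.

```python
def relative_gain(eager: float, sdpa: float) -> float:
    """Percentage by which the eager measurement exceeds the SDPA measurement."""
    return (eager / sdpa - 1.0) * 100.0


# Training, batch size 1, sequence length 1024: peak memory in MB.
print(round(relative_gain(8682.26, 4881.09), 3))  # 77.875
# Inference, batch size 1, sequence length 128: per-token latency in ms.
print(round(relative_gain(7.991, 6.968), 3))  # 14.681
```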

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with GPT2. If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we’ll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.

- A blog on how to Finetune a non-English GPT-2 Model with Hugging Face.

- A blog on How to generate text: using different decoding methods for language generation with Transformers with GPT-2.

- A blog on Training CodeParrot 🦜 from Scratch, a large GPT-2 model.

- A blog on Faster Text Generation with TensorFlow and XLA with GPT-2.

- A blog on How to train a Language Model with Megatron-LM with a GPT-2 model.

- A notebook on how to finetune GPT2 to generate lyrics in the style of your favorite artist. 🌎

- A notebook on how to finetune GPT2 to generate tweets in the style of your favorite Twitter user. 🌎

- Causal language modeling chapter of the 🤗 Hugging Face Course.

- GPT2LMHeadModel is supported by this causal language modeling example script, text generation example script, and notebook.

- TFGPT2LMHeadModel is supported by this causal language modeling example script and notebook.

- FlaxGPT2LMHeadModel is supported by this causal language modeling example script and notebook.

- Text classification task guide

- Token classification task guide

- Causal language modeling task guide

GPT2Config

class transformers.GPT2Config

(vocab_size=50257, n_positions=1024, n_embd=768, n_layer=12, n_head=12, n_inner=None, activation_function='gelu_new', resid_pdrop=0.1, embd_pdrop=0.1, attn_pdrop=0.1, layer_norm_epsilon=1e-05, initializer_range=0.02, summary_type='cls_index', summary_use_proj=True, summary_activation=None, summary_proj_to_labels=True, summary_first_dropout=0.1, scale_attn_weights=True, use_cache=True, bos_token_id=50256, eos_token_id=50256, scale_attn_by_inverse_layer_idx=False, reorder_and_upcast_attn=False, **kwargs)

Parameters

- vocab_size (int, optional, defaults to 50257) — Vocabulary size of the GPT-2 model. Defines the number of different tokens that can be represented by the input_ids passed when calling GPT2Model or TFGPT2Model.

- n_positions (int, optional, defaults to 1024) — The maximum sequence length that this model might ever be used with. Typically set this to something large just in case (e.g., 512 or 1024 or 2048).

- n_embd (int, optional, defaults to 768) — Dimensionality of the embeddings and hidden states.

- n_layer (int, optional, defaults to 12) — Number of hidden layers in the Transformer.

- n_head (int, optional, defaults to 12) — Number of attention heads for each attention layer in the Transformer.

- n_inner (int, optional) — Dimensionality of the inner feed-forward layers. None will set it to 4 times n_embd.

- activation_function (str, optional, defaults to "gelu_new") — Activation function, to be selected in the list ["relu", "silu", "gelu", "tanh", "gelu_new"].

- resid_pdrop (float, optional, defaults to 0.1) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

- embd_pdrop (float, optional, defaults to 0.1) — The dropout ratio for the embeddings.

- attn_pdrop (float, optional, defaults to 0.1) — The dropout ratio for the attention.

- layer_norm_epsilon (float, optional, defaults to 1e-05) — The epsilon to use in the layer normalization layers.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- summary_type (string, optional, defaults to "cls_index") — Argument used when doing sequence summary, used in the models GPT2DoubleHeadsModel and TFGPT2DoubleHeadsModel. Has to be one of the following options:

  - "last": Take the last token hidden state (like XLNet).

  - "first": Take the first token hidden state (like BERT).

  - "mean": Take the mean of all tokens hidden states.

  - "cls_index": Supply a Tensor of classification token position (like GPT/GPT-2).

  - "attn": Not implemented now, use multi-head attention.

- summary_use_proj (bool, optional, defaults to True) — Argument used when doing sequence summary, used in the models GPT2DoubleHeadsModel and TFGPT2DoubleHeadsModel. Whether or not to add a projection after the vector extraction.

- summary_activation (str, optional) — Argument used when doing sequence summary. Used for the multiple choice head in GPT2DoubleHeadsModel. Pass "tanh" for a tanh activation to the output; any other value will result in no activation.

- summary_proj_to_labels (bool, optional, defaults to True) — Argument used when doing sequence summary, used in the models GPT2DoubleHeadsModel and TFGPT2DoubleHeadsModel. Whether the projection outputs should have config.num_labels or config.hidden_size classes.

- summary_first_dropout (float, optional, defaults to 0.1) — Argument used when doing sequence summary, used in the models GPT2DoubleHeadsModel and TFGPT2DoubleHeadsModel. The dropout ratio to be used after the projection and activation.

- scale_attn_weights (bool, optional, defaults to True) — Scale attention weights by dividing by the square root of the per-head dimension (n_embd / n_head).

- use_cache (bool, optional, defaults to True) — Whether or not the model should return the last key/values attentions (not used by all models).

- bos_token_id (int, optional, defaults to 50256) — Id of the beginning of sentence token in the vocabulary.

- eos_token_id (int, optional, defaults to 50256) — Id of the end of sentence token in the vocabulary.

- scale_attn_by_inverse_layer_idx (bool, optional, defaults to False) — Whether to additionally scale attention weights by 1 / (layer_idx + 1).

- reorder_and_upcast_attn (bool, optional, defaults to False) — Whether to scale keys (K) prior to computing attention (dot-product) and upcast attention dot-product/softmax to float() when training with mixed precision.

This is the configuration class to store the configuration of a GPT2Model or a TFGPT2Model. It is used to instantiate a GPT-2 model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the GPT-2 openai-community/gpt2 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
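A smaller configuration is handy for quick, download-free experiments. The sketch below uses arbitrary sizes (not a published checkpoint) and shows that the generic attribute names map onto GPT-2's n_* fields and that n_inner=None yields a feed-forward width of 4 x n_embd. The check on model.h[0].mlp.c_fc relies on internal module names, which we assume here and which may change between versions.

```python
from transformers import GPT2Config, GPT2Model

# Illustrative small sizes, not a published checkpoint.
config = GPT2Config(n_embd=64, n_layer=2, n_head=4, n_positions=128)

# Generic aliases resolve to the GPT-2-specific attribute names.
print(config.hidden_size, config.num_hidden_layers, config.num_attention_heads)  # 64 2 4
print(config.n_inner)  # None, so the feed-forward width defaults to 4 * n_embd

model = GPT2Model(config)  # randomly initialised weights
# c_fc is the first feed-forward projection of block 0 (internal name, may change).
print(tuple(model.h[0].mlp.c_fc.weight.shape))  # (64, 256)
```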

Example:

>>> from transformers import GPT2Config, GPT2Model
>>> # Initializing a GPT2 configuration
>>> configuration = GPT2Config()
>>> # Initializing a model (with random weights) from the configuration
>>> model = GPT2Model(configuration)
>>> # Accessing the model configuration
>>> configuration = model.config

GPT2Tokenizer

class transformers.GPT2Tokenizer

(vocab_file, merges_file, errors='replace', unk_token='<|endoftext|>', bos_token='<|endoftext|>', eos_token='<|endoftext|>', pad_token=None, add_prefix_space=False, add_bos_token=False, **kwargs)

Parameters

- vocab_file (str) — Path to the vocabulary file.

- merges_file (str) — Path to the merges file.

- errors (str, optional, defaults to "replace") — Paradigm to follow when decoding bytes to UTF-8. See bytes.decode for more information.

- unk_token (str, optional, defaults to "<|endoftext|>") — The unknown token. A token that is not in the vocabulary cannot be converted to an ID and is set to be this token instead.

- bos_token (str, optional, defaults to "<|endoftext|>") — The beginning of sequence token.

- eos_token (str, optional, defaults to "<|endoftext|>") — The end of sequence token.

- pad_token (str, optional) — The token used for padding, for example when batching sequences of different lengths.

- add_prefix_space (bool, optional, defaults to False) — Whether or not to add an initial space to the input. This allows the leading word to be treated just like any other word. (The GPT-2 tokenizer detects the beginning of words by the preceding space.)

- add_bos_token (bool, optional, defaults to False) — Whether or not to add an initial beginning of sentence token to the input. This allows the leading word to be treated just like any other word.

Construct a GPT-2 tokenizer. Based on byte-level Byte-Pair-Encoding.

This tokenizer has been trained to treat spaces like parts of the tokens (a bit like sentencepiece) so a word will be encoded differently depending on whether it is at the beginning of the sentence (without space) or not:

>>> from transformers import GPT2Tokenizer
>>> tokenizer = GPT2Tokenizer.from_pretrained("openai-community/gpt2")
>>> tokenizer("Hello world")["input_ids"]
[15496, 995]
>>> tokenizer(" Hello world")["input_ids"]
[18435, 995]

You can get around that behavior by passing add_prefix_space=True when instantiating this tokenizer or when you call it on some text, but since the model was not pretrained this way, it might yield a decrease in performance.

When used with is_split_into_words=True, this tokenizer will add a space before each word (even the first one).

This tokenizer inherits from PreTrainedTokenizer which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.
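Byte-level BPE first maps every byte to a printable Unicode character, which is why GPT-2 vocabulary entries contain symbols such as "Ġ" standing for a leading space. The sketch below is a standalone re-implementation of the bytes_to_unicode table from OpenAI's original GPT-2 encoder (transformers ships a similar helper in its GPT-2 tokenizer module); to_byte_symbols is a hypothetical name used only for this illustration.

```python
def bytes_to_unicode() -> dict[int, str]:
    """Map each of the 256 byte values to a printable Unicode character."""
    # Printable Latin-1 ranges map to themselves.
    bs = (
        list(range(ord("!"), ord("~") + 1))
        + list(range(ord("¡"), ord("¬") + 1))
        + list(range(ord("®"), ord("ÿ") + 1))
    )
    cs = bs[:]
    n = 0
    # Remaining bytes (controls, space, etc.) are shifted to code points 256 and up.
    for b in range(256):
        if b not in bs:
            bs.append(b)
            cs.append(256 + n)
            n += 1
    return dict(zip(bs, (chr(c) for c in cs)))


byte_encoder = bytes_to_unicode()


def to_byte_symbols(text: str) -> str:
    """Hypothetical helper: show the byte-level symbols BPE merges operate on."""
    return "".join(byte_encoder[b] for b in text.encode("utf-8"))


print(to_byte_symbols("Hello world"))   # HelloĠworld
print(to_byte_symbols(" Hello world"))  # ĠHelloĠworld
```

This covers only the character mapping. The full tokenizer also pre-splits text with a regular expression and applies the learned merges, which is how " Hello" ends up as a different vocabulary entry from "Hello" in the IDs shown earlier.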

GPT2TokenizerFast

class transformers.GPT2TokenizerFast

(vocab_file=None, merges_file=None, tokenizer_file=None, unk_token='<|endoftext|>', bos_token='<|endoftext|>', eos_token='<|endoftext|>', add_prefix_space=False, **kwargs)

Parameters

- vocab_file (str, optional) — Path to the vocabulary file.

- merges_file (str, optional) — Path to the merges file.

- tokenizer_file (str, optional) — Path to a tokenizers file (generally with a .json extension) that contains everything needed to load the tokenizer.

- unk_token (str, optional, defaults to "<|endoftext|>") — The unknown token. A token that is not in the vocabulary cannot be converted to an ID and is set to be this token instead.

- bos_token (str, optional, defaults to "<|endoftext|>") — The beginning of sequence token.

- eos_token (str, optional, defaults to "<|endoftext|>") — The end of sequence token.

- add_prefix_space (bool, optional, defaults to False) — Whether or not to add an initial space to the input. This allows the leading word to be treated just like any other word. (The GPT-2 tokenizer detects the beginning of words by the preceding space.)

Construct a “fast” GPT-2 tokenizer (backed by HuggingFace’s tokenizers library). Based on byte-level Byte-Pair-Encoding.

This tokenizer has been trained to treat spaces like parts of the tokens (a bit like sentencepiece) so a word will be encoded differently depending on whether it is at the beginning of the sentence (without space) or not:

>>> from transformers import GPT2TokenizerFast
>>> tokenizer = GPT2TokenizerFast.from_pretrained("openai-community/gpt2")
>>> tokenizer("Hello world")["input_ids"]
[15496, 995]
>>> tokenizer(" Hello world")["input_ids"]
[18435, 995]

You can get around that behavior by passing add_prefix_space=True when instantiating this tokenizer, but since the model was not pretrained this way, it might yield a decrease in performance.

When used with is_split_into_words=True, this tokenizer needs to be instantiated with add_prefix_space=True.

This tokenizer inherits from PreTrainedTokenizerFast which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.

GPT2 specific outputs

class transformers.models.gpt2.modeling_gpt2.GPT2DoubleHeadsModelOutput

(loss: Optional = None, mc_loss: Optional = None, logits: FloatTensor = None, mc_logits: FloatTensor = None, past_key_values: Optional = None, hidden_states: Optional = None, attentions: Optional = None)

Parameters

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Language modeling loss.

- mc_loss (torch.FloatTensor of shape (1,), optional, returned when mc_labels is provided) — Multiple choice classification loss.

- logits (torch.FloatTensor of shape (batch_size, num_choices, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

- mc_logits (torch.FloatTensor of shape (batch_size, num_choices)) — Prediction scores of the multiple choice classification head (scores for each choice before SoftMax).

- past_key_values (Tuple[Tuple[torch.Tensor]], optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of length config.n_layers, containing tuples of tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head).

  Contains pre-computed hidden-states (key and values in the attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

Base class for outputs of models predicting if two sentences are consecutive or not.
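The output shapes above can be inspected on a tiny, randomly initialised GPT2DoubleHeadsModel; the sizes, token ids, and labels below are arbitrary assumptions for illustration. Each example holds num_choices candidate sequences, and mc_token_ids gives the position whose hidden state feeds the multiple-choice head (the last token here), matching the default summary_type="cls_index".

```python
import torch
from transformers import GPT2Config, GPT2DoubleHeadsModel

torch.manual_seed(0)
config = GPT2Config(vocab_size=100, n_positions=32, n_embd=32, n_layer=2, n_head=2)
model = GPT2DoubleHeadsModel(config).eval()

batch, num_choices, seq = 2, 3, 5
input_ids = torch.randint(0, config.vocab_size, (batch, num_choices, seq))
# Classify each choice from the hidden state of its last token.
mc_token_ids = torch.full((batch, num_choices), seq - 1, dtype=torch.long)
mc_labels = torch.tensor([0, 2])  # index of the "correct" choice per example

with torch.no_grad():
    out = model(input_ids=input_ids, mc_token_ids=mc_token_ids, mc_labels=mc_labels)

print(out.logits.shape)     # torch.Size([2, 3, 5, 100])
print(out.mc_logits.shape)  # torch.Size([2, 3])
print(out.mc_loss)          # scalar multiple-choice loss
```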

class transformers.models.gpt2.modeling_tf_gpt2.TFGPT2DoubleHeadsModelOutput

(logits: tf.Tensor = None, mc_logits: tf.Tensor = None, past_key_values: List[tf.Tensor] | None = None, hidden_states: Tuple[tf.Tensor] | None = None, attentions: Tuple[tf.Tensor] | None = None)

Parameters

- logits (tf.Tensor of shape (batch_size, num_choices, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

- mc_logits (tf.Tensor of shape (batch_size, num_choices)) — Prediction scores of the multiple choice classification head (scores for each choice before SoftMax).

- past_key_values (List[tf.Tensor], optional, returned when use_cache=True is passed or when config.use_cache=True) — List of tf.Tensor of length config.n_layers, with each tensor of shape (2, batch_size, num_heads, sequence_length, embed_size_per_head).

  Contains pre-computed hidden-states (key and values in the attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(tf.Tensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of tf.Tensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(tf.Tensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tf.Tensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

Base class for outputs of models predicting if two sentences are consecutive or not.

GPT2Model

class transformers.GPT2Model

(config)

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare GPT2 Model transformer outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

(input_ids: Optional = None, past_key_values: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, encoder_hidden_states: Optional = None, encoder_attention_mask: Optional = None, use_cache: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None) → transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)) — input_ids_length = sequence_length if past_key_values is None else past_key_values[0][0].shape[-2] (sequence_length of input past key value states). Indices of input sequence tokens in the vocabulary.

  If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids.

  Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,

  - 0 for tokens that are masked.

  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, the attention_mask always has to have the length: len(past_key_values) + len(input_ids).

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:

  - 0 corresponds to a sentence A token,

  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:

  - 1 indicates the head is not masked,

  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.

  If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
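The past_key_values and attention_mask rules above can be exercised on a tiny, randomly initialised GPT2Model (sizes and token ids are arbitrary assumptions). In this sketch, the final hidden state from one full pass should match an incremental pass that feeds only the last token together with the cache from the prefix, provided attention_mask covers both the cached and the new positions.

```python
import torch
from transformers import GPT2Config, GPT2Model

torch.manual_seed(0)
config = GPT2Config(vocab_size=100, n_positions=32, n_embd=32, n_layer=2, n_head=2)
model = GPT2Model(config).eval()  # eval() disables dropout

input_ids = torch.randint(0, config.vocab_size, (1, 6))
attention_mask = torch.ones_like(input_ids)

with torch.no_grad():
    # One pass over the whole sequence.
    full = model(input_ids=input_ids, attention_mask=attention_mask).last_hidden_state

    # Prefix pass (all but the last token) that returns the key/value cache.
    prefix = model(input_ids=input_ids[:, :-1], attention_mask=attention_mask[:, :-1], use_cache=True)

    # Incremental pass: only the new token, with a mask spanning past + new tokens.
    step = model(
        input_ids=input_ids[:, -1:],
        past_key_values=prefix.past_key_values,
        attention_mask=attention_mask,
        use_cache=True,
    ).last_hidden_state

print(step.shape)  # torch.Size([1, 1, 32])
print(torch.allclose(full[:, -1:], step, atol=1e-5))  # True
```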

Returns

transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

  If past_key_values is used, only the last hidden-state of the sequences of shape (batch_size, 1, hidden_size) is output.

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of tuple(torch.FloatTensor) of length config.n_layers, with each tuple having 2 tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head) and optionally, if config.is_encoder_decoder=True, 2 additional tensors of shape (batch_size, num_heads, encoder_sequence_length, embed_size_per_head).

  Contains pre-computed hidden-states (key and values in the self-attention blocks and optionally if config.is_encoder_decoder=True in the cross-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True and config.add_cross_attention=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attention weights of the decoder’s cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.
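A quick way to confirm the return shapes is a tiny, randomly initialised GPT2Model (sizes are arbitrary assumptions). With output_hidden_states=True, hidden_states holds n_layer + 1 tensors: the embedding output followed by one per layer. In GPT-2 the last entry is taken after the final layer norm, so in the implementation we assume here it equals last_hidden_state.

```python
import torch
from transformers import GPT2Config, GPT2Model

torch.manual_seed(0)
config = GPT2Config(vocab_size=100, n_positions=32, n_embd=32, n_layer=3, n_head=2)
model = GPT2Model(config).eval()

input_ids = torch.randint(0, config.vocab_size, (2, 5))
with torch.no_grad():
    out = model(input_ids=input_ids, output_hidden_states=True)

print(out.last_hidden_state.shape)  # torch.Size([2, 5, 32])
print(len(out.hidden_states))       # 4: embeddings + one per layer
```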

The GPT2Model forward method overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Example:

>>> from transformers import AutoTokenizer, GPT2Model
>>> import torch
>>> tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")
>>> model = GPT2Model.from_pretrained("openai-community/gpt2")
>>> inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")
>>> outputs = model(**inputs)
>>> last_hidden_states = outputs.last_hidden_state

GPT2LMHeadModel

class transformers.GPT2LMHeadModel

(config)

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The GPT2 Model transformer with a language modeling head on top (linear layer with weights tied to the input embeddings).

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.
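Two properties of this head can be checked on a tiny, randomly initialised model (sizes are arbitrary assumptions): the LM head shares its weight with the token embedding (wte), and passing labels=input_ids yields the usual causal-LM loss because the model shifts the labels internally, so position t is scored against token t + 1. The manual recomputation below is a sketch of that shift, not the library's own loss code.

```python
import torch
import torch.nn.functional as F
from transformers import GPT2Config, GPT2LMHeadModel

torch.manual_seed(0)
config = GPT2Config(vocab_size=100, n_positions=32, n_embd=32, n_layer=2, n_head=2)
model = GPT2LMHeadModel(config).eval()

# Weight tying: the LM head and the token embedding share the same storage.
tied = model.lm_head.weight.data_ptr() == model.transformer.wte.weight.data_ptr()

input_ids = torch.randint(0, config.vocab_size, (2, 7))
with torch.no_grad():
    out = model(input_ids=input_ids, labels=input_ids)

# Drop the last prediction and the first label so position t predicts token t + 1.
shift_logits = out.logits[:, :-1, :].reshape(-1, config.vocab_size)
shift_labels = input_ids[:, 1:].reshape(-1)
manual_loss = F.cross_entropy(shift_logits, shift_labels)

print(tied)  # True
print(torch.allclose(out.loss, manual_loss, atol=1e-5))  # True
```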

forward

(input_ids: Optional = None, past_key_values: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, encoder_hidden_states: Optional = None, encoder_attention_mask: Optional = None, labels: Optional = None, use_cache: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None) → transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)) — Indices of input sequence tokens in the vocabulary. input_ids_length is sequence_length when past_key_values is None; otherwise it is the number of tokens whose past has not been computed yet. If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids, as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, the attention_mask always has to have the length of the cached sequence (past_key_values[0][0].shape[-2]) plus len(input_ids).

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix. If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size, sequence_length), optional) — Labels for language modeling. Note that the labels are shifted inside the model, i.e. you can set labels = input_ids. Indices are selected in [-100, 0, ..., config.vocab_size - 1]. All labels set to -100 are ignored (masked); the loss is only computed for labels in [0, ..., config.vocab_size - 1].
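The sketch below shows what "the labels are shifted inside the model" means in plain torch. causal_lm_loss is a hypothetical helper, not a transformers function. It applies the same shift-and-ignore rule: the logits at position t are scored against the token at t + 1, and targets equal to -100 are skipped.

```python
import math

import torch
import torch.nn.functional as F


def causal_lm_loss(logits: torch.Tensor, labels: torch.Tensor) -> torch.Tensor:
    """Next-token loss with the shift applied inside the function.

    logits: (batch_size, sequence_length, vocab_size); labels: (batch_size, sequence_length).
    The last logit and the first label are dropped so position t predicts token t + 1.
    """
    shift_logits = logits[:, :-1, :]
    shift_labels = labels[:, 1:]
    return F.cross_entropy(
        shift_logits.reshape(-1, shift_logits.size(-1)),
        shift_labels.reshape(-1),
        ignore_index=-100,  # masked targets do not contribute
    )


vocab_size = 5
input_ids = torch.tensor([[1, 3, 2, 4]])
labels = input_ids.clone()  # labels = input_ids, as allowed above
labels[0, 2] = -100  # this target is excluded from the loss
logits = torch.zeros(1, 4, vocab_size)  # uniform predictions

loss = causal_lm_loss(logits, labels)
print(round(loss.item(), 4), round(math.log(vocab_size), 4))  # 1.6094 1.6094
```

With uniform logits, every scored target costs log(vocab_size), so the loss equals log(5) however many targets are masked.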

Returns

transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or tuple(torch.FloatTensor)

A transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Language modeling loss (for next-token prediction).

- logits (torch.FloatTensor of shape (batch_size, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Cross-attention weights after the attention softmax, used to compute the weighted average in the cross-attention heads.

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of torch.FloatTensor tuples of length config.n_layers, with each tuple containing the cached key and value states of the self-attention and the cross-attention layers if the model is used in an encoder-decoder setting. Only relevant if config.is_decoder = True. Contains pre-computed hidden-states (key and values in the attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.
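The past_key_values input and output, together with the attention-mask length rule, can be exercised in a small greedy decoding loop. This is a sketch with a tiny randomly initialized model whose sizes are arbitrary. In practice, model.generate() handles this bookkeeping. Each step feeds only the newest token, extends the attention mask by one position, and reuses the cache. The final logits are then compared with a full, uncached forward pass.

```python
import torch
from transformers import GPT2Config, GPT2LMHeadModel

torch.manual_seed(0)
# Tiny random model (hypothetical sizes); no checkpoint is downloaded.
config = GPT2Config(vocab_size=50, n_positions=32, n_embd=16, n_layer=2, n_head=2)
model = GPT2LMHeadModel(config).eval()

input_ids = torch.tensor([[7, 3, 9]])
attention_mask = torch.ones_like(input_ids)

with torch.no_grad():
    # First pass: process the whole prompt and keep the key/value cache.
    out = model(input_ids=input_ids, attention_mask=attention_mask, use_cache=True)
    generated = input_ids
    for _ in range(4):
        next_token = out.logits[:, -1, :].argmax(dim=-1, keepdim=True)  # greedy choice
        generated = torch.cat([generated, next_token], dim=-1)
        # The mask covers the cached tokens plus the new one.
        attention_mask = torch.cat([attention_mask, torch.ones_like(next_token)], dim=-1)
        # Only the new token is passed; earlier tokens come from past_key_values.
        out = model(
            input_ids=next_token,
            attention_mask=attention_mask,
            past_key_values=out.past_key_values,
            use_cache=True,
        )
    # Reference: recompute the whole sequence without a cache.
    full = model(input_ids=generated, attention_mask=attention_mask)

print(generated.shape)  # torch.Size([1, 7])
print(torch.allclose(out.logits[:, -1], full.logits[:, -1], atol=1e-4))
```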

The GPT2LMHeadModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Example:

>>> import torch
>>> from transformers import AutoTokenizer, GPT2LMHeadModel

>>> tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")
>>> model = GPT2LMHeadModel.from_pretrained("openai-community/gpt2")

>>> inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")
>>> outputs = model(**inputs, labels=inputs["input_ids"])
>>> loss = outputs.loss
>>> logits = outputs.logits

GPT2DoubleHeadsModel

class transformers.GPT2DoubleHeadsModel

( config )

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The GPT2 Model transformer with a language modeling head and a multiple-choice classification head on top, e.g. for RocStories/SWAG tasks. The two heads are two linear layers. The language modeling head has its weights tied to the input embeddings; the classification head takes as input the hidden state at a specified classification token index in the input sequence.
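The idea of "the hidden state at a specified classification token index" is sketched below with torch.gather. For every choice, one hidden vector is picked at the position given by mc_token_ids. gather_cls_states is a hypothetical helper, and the single nn.Linear stands in for the classification head. This is not the transformers implementation.

```python
import torch


def gather_cls_states(hidden_states: torch.Tensor, mc_token_ids: torch.Tensor) -> torch.Tensor:
    """Pick one hidden vector per choice.

    hidden_states: (batch_size, num_choices, sequence_length, hidden_size)
    mc_token_ids:  (batch_size, num_choices), index of the classification token in each choice
    returns:       (batch_size, num_choices, hidden_size)
    """
    hidden_size = hidden_states.size(-1)
    index = mc_token_ids[..., None, None].expand(-1, -1, 1, hidden_size)
    return hidden_states.gather(dim=2, index=index).squeeze(2)


batch_size, num_choices, seq_len, hidden_size = 1, 2, 4, 3
hidden = torch.arange(batch_size * num_choices * seq_len * hidden_size, dtype=torch.float32)
hidden = hidden.view(batch_size, num_choices, seq_len, hidden_size)
mc_token_ids = torch.tensor([[3, 1]])  # last token of choice 0, second token of choice 1

cls_states = gather_cls_states(hidden, mc_token_ids)
mc_logits = torch.nn.Linear(hidden_size, 1)(cls_states).squeeze(-1)  # one score per choice
print(cls_states.shape, mc_logits.shape)  # torch.Size([1, 2, 3]) torch.Size([1, 2])
```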

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids: Optional = None, past_key_values: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, mc_token_ids: Optional = None, labels: Optional = None, mc_labels: Optional = None, use_cache: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None, **kwargs ) → transformers.models.gpt2.modeling_gpt2.GPT2DoubleHeadsModelOutput or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)) — Indices of input sequence tokens in the vocabulary. input_ids_length is sequence_length when past_key_values is None; otherwise it is the number of tokens whose past has not been computed yet. If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids, as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, the attention_mask always has to have the length of the cached sequence (past_key_values[0][0].shape[-2]) plus len(input_ids).

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix. If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- mc_token_ids (torch.LongTensor of shape (batch_size, num_choices), optional, defaults to the index of the last token of the input) — Index of the classification token in each input sequence. Selected in the range [0, input_ids.size(-1) - 1].

- labels (torch.LongTensor of shape (batch_size, sequence_length), optional) — Labels for language modeling. Note that the labels are shifted inside the model, i.e. you can set labels = input_ids. Indices are selected in [-100, 0, ..., config.vocab_size - 1]. All labels set to -100 are ignored (masked); the loss is only computed for labels in [0, ..., config.vocab_size - 1].

- mc_labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the multiple choice classification loss. Indices should be in [0, ..., num_choices - 1], where num_choices is the size of the second dimension of the input tensors (see input_ids above).

Returns

transformers.models.gpt2.modeling_gpt2.GPT2DoubleHeadsModelOutput or tuple(torch.FloatTensor)

A transformers.models.gpt2.modeling_gpt2.GPT2DoubleHeadsModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Language modeling loss.

- mc_loss (torch.FloatTensor of shape (1,), optional, returned when mc_labels is provided) — Multiple choice classification loss.

- logits (torch.FloatTensor of shape (batch_size, num_choices, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

- mc_logits (torch.FloatTensor of shape (batch_size, num_choices)) — Prediction scores of the multiple choice classification head (scores for each choice before SoftMax).

- past_key_values (Tuple[Tuple[torch.Tensor]], optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of length config.n_layers, containing tuples of tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head). Contains pre-computed hidden-states (key and values in the attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.
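The output shapes listed above can be checked on a tiny, randomly initialized GPT2DoubleHeadsModel. The configuration sizes are arbitrary assumptions, and nothing is downloaded. The inputs carry an explicit num_choices dimension, and both heads receive labels, so both losses are returned.

```python
import torch
from transformers import GPT2Config, GPT2DoubleHeadsModel

torch.manual_seed(0)
# Tiny random model (hypothetical sizes); no checkpoint is downloaded.
config = GPT2Config(vocab_size=50, n_positions=32, n_embd=16, n_layer=2, n_head=2)
model = GPT2DoubleHeadsModel(config).eval()

batch_size, num_choices, seq_len = 2, 3, 5
input_ids = torch.randint(0, config.vocab_size, (batch_size, num_choices, seq_len))
# Classify each choice on its last token.
mc_token_ids = torch.full((batch_size, num_choices), seq_len - 1, dtype=torch.long)
mc_labels = torch.tensor([0, 2])  # correct choice per example, in [0, num_choices - 1]

with torch.no_grad():
    out = model(
        input_ids,
        mc_token_ids=mc_token_ids,
        labels=input_ids,
        mc_labels=mc_labels,
        output_hidden_states=True,
    )

print(out.logits.shape)     # torch.Size([2, 3, 5, 50])
print(out.mc_logits.shape)  # torch.Size([2, 3])
print(len(out.hidden_states) == config.n_layer + 1)  # True
```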

The GPT2DoubleHeadsModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Example:

>>> import torch
>>> from transformers import AutoTokenizer, GPT2DoubleHeadsModel

>>> tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")
>>> model = GPT2DoubleHeadsModel.from_pretrained("openai-community/gpt2")

>>> # Add a [CLS] to the vocabulary (we should train it also!)
>>> num_added_tokens = tokenizer.add_special_tokens({"cls_token": "[CLS]"})
>>> # Update the model embeddings with the new vocabulary size
>>> embedding_layer = model.resize_token_embeddings(len(tokenizer))

>>> choices = ["Hello, my dog is cute [CLS]", "Hello, my cat is cute [CLS]"]
>>> encoded_choices = [tokenizer.encode(s) for s in choices]
>>> cls_token_location = [tokens.index(tokenizer.cls_token_id) for tokens in encoded_choices]

>>> input_ids = torch.tensor(encoded_choices).unsqueeze(0)  # Batch size: 1, number of choices: 2
>>> mc_token_ids = torch.tensor([cls_token_location])  # Batch size: 1

>>> outputs = model(input_ids, mc_token_ids=mc_token_ids)
>>> lm_logits = outputs.logits
>>> mc_logits = outputs.mc_logits

GPT2ForQuestionAnswering

class transformers.GPT2ForQuestionAnswering

( config )

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The GPT-2 Model transformer with a span classification head on top for extractive question-answering tasks like SQuAD (a linear layer on top of the hidden-states output to compute span start logits and span end logits).
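Taking separate argmaxes of start_logits and end_logits, as the forward example in this section does, can produce an end index that comes before the start index. A common post-processing step instead scores every valid span (start <= end, up to a maximum length) as start_logits[i] + end_logits[j]. best_span is a hypothetical helper, and max_answer_len=30 is an arbitrary default:

```python
import torch


def best_span(start_logits: torch.Tensor, end_logits: torch.Tensor, max_answer_len: int = 30):
    """Return (start, end) maximizing start_logits[start] + end_logits[end].

    Both inputs have shape (sequence_length,) for a single example. Only spans with
    start <= end and at most max_answer_len tokens are considered.
    """
    seq_len = start_logits.size(0)
    scores = start_logits[:, None] + end_logits[None, :]  # scores[i, j] for span i..j
    i = torch.arange(seq_len)[:, None]
    j = torch.arange(seq_len)[None, :]
    valid = (j >= i) & (j - i < max_answer_len)
    scores = scores.masked_fill(~valid, float("-inf"))
    flat = int(scores.argmax())
    return flat // seq_len, flat % seq_len


start = torch.tensor([0.1, 0.2, 3.0, 0.5, 0.0])
end = torch.tensor([2.5, 0.1, 0.3, 2.0, 0.0])
# Independent argmaxes would give start=2, end=0, which is not a valid span.
print(best_span(start, end))  # (2, 3)
```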

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, start_positions: Optional = None, end_positions: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None ) → transformers.modeling_outputs.QuestionAnsweringModelOutput or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)) — Indices of input sequence tokens in the vocabulary. input_ids_length is sequence_length when past_key_values is None; otherwise it is the number of tokens whose past has not been computed yet. If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids, as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, the attention_mask always has to have the length of the cached sequence (past_key_values[0][0].shape[-2]) plus len(input_ids).

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix. If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- start_positions (torch.LongTensor of shape (batch_size,), optional) — Labels for position (index) of the start of the labelled span for computing the token classification loss. Positions are clamped to the length of the sequence (sequence_length). Positions outside of the sequence are not taken into account for computing the loss.

- end_positions (torch.LongTensor of shape (batch_size,), optional) — Labels for position (index) of the end of the labelled span for computing the token classification loss. Positions are clamped to the length of the sequence (sequence_length). Positions outside of the sequence are not taken into account for computing the loss.
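The clamping rule for start_positions and end_positions can be sketched in plain torch. Targets are clamped to sequence_length, and that same value serves as the cross-entropy ignore_index, so out-of-range positions drop out of the loss. span_loss is a hypothetical helper, and it combines the two terms by averaging them:

```python
import torch
import torch.nn.functional as F


def span_loss(start_logits, end_logits, start_positions, end_positions):
    """Span-extraction loss with out-of-range targets ignored.

    start_logits, end_logits: (batch_size, sequence_length); positions: (batch_size,).
    """
    ignored_index = start_logits.size(1)  # == sequence_length
    start_positions = start_positions.clamp(0, ignored_index)
    end_positions = end_positions.clamp(0, ignored_index)
    start_loss = F.cross_entropy(start_logits, start_positions, ignore_index=ignored_index)
    end_loss = F.cross_entropy(end_logits, end_positions, ignore_index=ignored_index)
    return (start_loss + end_loss) / 2


start_logits = torch.zeros(2, 4)  # uniform scores over 4 positions
end_logits = torch.zeros(2, 4)
# The second start position (10) lies outside the sequence and is ignored.
loss = span_loss(start_logits, end_logits, torch.tensor([1, 10]), torch.tensor([2, 3]))
print(round(loss.item(), 4))  # 1.3863
```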

Returns

transformers.modeling_outputs.QuestionAnsweringModelOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.QuestionAnsweringModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when start_positions and end_positions are provided) — Total span extraction loss: the average of the cross-entropy losses for the start and end positions.

- start_logits (torch.FloatTensor of shape (batch_size, sequence_length)) — Span-start scores (before SoftMax).

- end_logits (torch.FloatTensor of shape (batch_size, sequence_length)) — Span-end scores (before SoftMax).

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The GPT2ForQuestionAnswering forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

The openai-community/gpt2 checkpoint does not include weights for the span classification head. In this example that head is therefore randomly initialized, and the predicted answer span is not meaningful until the model is fine-tuned on a question-answering dataset. If you run out of memory when loading a larger checkpoint, you can try adding device_map="auto" to the from_pretrained call.

Example:

>>> from transformers import AutoTokenizer, GPT2ForQuestionAnswering
>>> import torch

>>> tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")
>>> model = GPT2ForQuestionAnswering.from_pretrained("openai-community/gpt2")

>>> question, text = "Who was Jim Henson?", "Jim Henson was a nice puppet"
>>> inputs = tokenizer(question, text, return_tensors="pt")
>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> answer_start_index = outputs.start_logits.argmax()
>>> answer_end_index = outputs.end_logits.argmax()
>>> predict_answer_tokens = inputs.input_ids[0, answer_start_index : answer_end_index + 1]

>>> # target is "nice puppet"
>>> target_start_index = torch.tensor([14])
>>> target_end_index = torch.tensor([15])

>>> outputs = model(**inputs, start_positions=target_start_index, end_positions=target_end_index)
>>> loss = outputs.loss

GPT2ForSequenceClassification

class transformers.GPT2ForSequenceClassification

( config )

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The GPT2 Model transformer with a sequence classification head on top (linear layer).

GPT2ForSequenceClassification uses the last token in order to do the classification, as other causal models (e.g. GPT-1) do.

Since it does classification on the last token, it needs to know the position of the last token. If a pad_token_id is defined in the configuration, it finds the last token that is not a padding token in each row. If no pad_token_id is defined, it simply takes the last value in each row of the batch. Since it cannot guess the padding tokens when inputs_embeds are passed instead of input_ids, it does the same (takes the last value in each row of the batch).
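One way to locate "the last token that is not a padding token in each row" is sketched below. It is not necessarily the exact transformers code. Padding positions get weight 0, every other position keeps its index, and argmax picks the largest remaining index. last_non_pad_index is a hypothetical helper, and pad id 0 is an arbitrary choice for the toy batch. GPT-2 itself defines no padding token by default.

```python
import torch


def last_non_pad_index(input_ids: torch.Tensor, pad_token_id: int) -> torch.Tensor:
    """Index of the last non-padding token in each row of input_ids (batch_size, sequence_length)."""
    non_pad = (input_ids != pad_token_id).long()
    positions = torch.arange(input_ids.size(-1), device=input_ids.device)
    # Padding positions become 0; argmax returns the largest surviving index.
    return (positions * non_pad).argmax(dim=-1)


pad_token_id = 0
input_ids = torch.tensor([[5, 6, 7, 0, 0],
                          [1, 2, 3, 4, 5]])
last = last_non_pad_index(input_ids, pad_token_id)
print(last.tolist())  # [2, 4]

# Per-token classification scores (batch_size, sequence_length, num_labels), random here.
token_logits = torch.randn(2, 5, 3)
pooled = token_logits[torch.arange(input_ids.size(0)), last]  # (batch_size, num_labels)
print(pooled.shape)  # torch.Size([2, 3])
```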

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids: Optional = None, past_key_values: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, labels: Optional = None, use_cache: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None ) → transformers.modeling_outputs.SequenceClassifierOutputWithPast or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)) — Indices of input sequence tokens in the vocabulary. input_ids_length is sequence_length when past_key_values is None; otherwise it is the number of tokens whose past has not been computed yet. If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids, as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, the attention_mask always has to have the length of the cached sequence (past_key_values[0][0].shape[-2]) plus len(input_ids).

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix. If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the sequence classification/regression loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels == 1, a regression loss is computed (mean-square loss); if config.num_labels > 1, a classification loss is computed (cross-entropy).
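The loss selection described for labels is sketched below as a hypothetical helper, sequence_classification_loss. The multi_label flag is an assumption added to mirror the problem_type="multi_label_classification" setting used in the examples further down, where each label gets its own sigmoid and binary cross-entropy term.

```python
import torch
import torch.nn.functional as F


def sequence_classification_loss(
    logits: torch.Tensor, labels: torch.Tensor, num_labels: int, multi_label: bool = False
) -> torch.Tensor:
    """Choose a loss from num_labels.

    logits: (batch_size, num_labels)
    labels: (batch_size,) for regression or single-label, (batch_size, num_labels) multi-hot for multi-label
    """
    if multi_label:
        # One independent sigmoid + binary cross-entropy per label.
        return F.binary_cross_entropy_with_logits(logits, labels.float())
    if num_labels == 1:
        # Regression: mean-square error between the single score and the float target.
        return F.mse_loss(logits.squeeze(-1), labels.float())
    # Classification: cross-entropy over num_labels classes, labels in [0, num_labels - 1].
    return F.cross_entropy(logits, labels)


reg = sequence_classification_loss(torch.tensor([[0.5], [1.5]]), torch.tensor([1.0, 1.0]), num_labels=1)
clf = sequence_classification_loss(torch.zeros(2, 3), torch.tensor([0, 2]), num_labels=3)
multi = sequence_classification_loss(
    torch.zeros(1, 3), torch.tensor([[1, 0, 1]]), num_labels=3, multi_label=True
)
print(round(reg.item(), 4), round(clf.item(), 4), round(multi.item(), 4))  # 0.25 1.0986 0.6931
```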

Returns

transformers.modeling_outputs.SequenceClassifierOutputWithPast or tuple(torch.FloatTensor)

A transformers.modeling_outputs.SequenceClassifierOutputWithPast or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.

- logits (torch.FloatTensor of shape (batch_size, config.num_labels)) — Classification (or regression if config.num_labels==1) scores (before SoftMax).

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of tuple(torch.FloatTensor) of length config.n_layers, with each tuple having 2 tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head). Contains pre-computed hidden-states (key and values in the self-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.
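The last-token behavior can be checked on a tiny, randomly initialized GPT2ForSequenceClassification. The sizes are arbitrary assumptions, and pad id 0 is an assumption for the toy vocabulary. pad_token_id is set in the config so that batches with more than one row can be classified. A right-padded copy of a sequence is expected to score the same as the unpadded sequence, because the score is read at the last non-padding token and causal attention never looks ahead to the padding.

```python
import torch
from transformers import GPT2Config, GPT2ForSequenceClassification

torch.manual_seed(0)
# Tiny random model (hypothetical sizes); pad_token_id=0 is an assumption for this toy vocabulary.
config = GPT2Config(
    vocab_size=50, n_positions=32, n_embd=16, n_layer=2, n_head=2, num_labels=3, pad_token_id=0
)
model = GPT2ForSequenceClassification(config).eval()

short = torch.tensor([[5, 6, 7]])
padded = torch.tensor([[5, 6, 7, 0, 0],   # right-padded copy of `short`
                       [1, 2, 3, 4, 5]])
attention_mask = (padded != config.pad_token_id).long()

with torch.no_grad():
    alone = model(input_ids=short).logits
    batched = model(input_ids=padded, attention_mask=attention_mask).logits

print(batched.shape)  # torch.Size([2, 3])
print(torch.allclose(alone[0], batched[0], atol=1e-4))
```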

The GPT2ForSequenceClassification forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Example of single-label classification:

>>> import torch
>>> from transformers import AutoTokenizer, GPT2ForSequenceClassification

>>> tokenizer = AutoTokenizer.from_pretrained("microsoft/DialogRPT-updown")
>>> model = GPT2ForSequenceClassification.from_pretrained("microsoft/DialogRPT-updown")

>>> inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")
>>> with torch.no_grad():
...     logits = model(**inputs).logits

>>> predicted_class_id = logits.argmax().item()

>>> # To train a model on `num_labels` classes, you can pass `num_labels=num_labels` to `.from_pretrained(...)`
>>> num_labels = len(model.config.id2label)
>>> model = GPT2ForSequenceClassification.from_pretrained("microsoft/DialogRPT-updown", num_labels=num_labels)

>>> labels = torch.tensor([1])
>>> loss = model(**inputs, labels=labels).loss

Example of multi-label classification:

>>> import torch
>>> from transformers import AutoTokenizer, GPT2ForSequenceClassification

>>> tokenizer = AutoTokenizer.from_pretrained("microsoft/DialogRPT-updown")
>>> model = GPT2ForSequenceClassification.from_pretrained("microsoft/DialogRPT-updown", problem_type="multi_label_classification")

>>> inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")

>>> with torch.no_grad():
...     logits = model(**inputs).logits

>>> predicted_class_ids = torch.arange(0, logits.shape[-1])[torch.sigmoid(logits).squeeze(dim=0) > 0.5]

>>> # To train a model on `num_labels` classes, you can pass `num_labels=num_labels` to `.from_pretrained(...)`
>>> num_labels = len(model.config.id2label)
>>> model = GPT2ForSequenceClassification.from_pretrained(
...     "microsoft/DialogRPT-updown", num_labels=num_labels, problem_type="multi_label_classification"
... )

>>> labels = torch.sum(
...     torch.nn.functional.one_hot(predicted_class_ids[None, :].clone(), num_classes=num_labels), dim=1
... ).to(torch.float)
>>> loss = model(**inputs, labels=labels).loss

GPT2ForTokenClassification

class transformers.GPT2ForTokenClassification

( config )

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

GPT2 Model with a token classification head on top (a linear layer on top of the hidden-states output), e.g. for Named-Entity Recognition (NER) tasks.
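The head described above amounts to applying one linear map to every token's hidden state. The sketch below shows that shape bookkeeping with plain lists, assuming weights laid out like torch.nn.Linear.weight, i.e. (num_labels, hidden_size). The function name is hypothetical, and the library module may also apply dropout before the linear layer during training, which is omitted here.

```python
from typing import List, Sequence


def token_classification_head(
    hidden_states: Sequence[Sequence[float]],
    weight: Sequence[Sequence[float]],
    bias: Sequence[float],
) -> List[List[float]]:
    """Apply the same linear layer independently to each token (hypothetical helper).

    hidden_states: (sequence_length, hidden_size)
    weight:        (num_labels, hidden_size)
    bias:          (num_labels,)
    returns:       (sequence_length, num_labels) logits
    """
    return [
        # One dot product per label, plus that label's bias.
        [sum(h * w for h, w in zip(token, row)) + b for row, b in zip(weight, bias)]
        for token in hidden_states
    ]
```

The batched model output has the same layout with a leading batch dimension: (batch_size, sequence_length, config.num_labels).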

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

(input_ids: Optional = None, past_key_values: Optional = None, attention_mask: Optional = None, token_type_ids: Optional = None, position_ids: Optional = None, head_mask: Optional = None, inputs_embeds: Optional = None, labels: Optional = None, use_cache: Optional = None, output_attentions: Optional = None, output_hidden_states: Optional = None, return_dict: Optional = None) → transformers.modeling_outputs.TokenClassifierOutput or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, input_ids_length)): input_ids_length = sequence_length if past_key_values is None else past_key_values[0][0].shape[-2] (sequence_length of input past key value states). Indices of input sequence tokens in the vocabulary. If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layer): Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids, as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional): Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
  If past_key_values is used, attention_mask needs to contain the masking strategy that was used for past_key_values. In other words, attention_mask always has to cover the cached tokens plus the new ones, i.e. have length past_key_values[0][0].shape[-2] + input_ids.shape[-1].

- token_type_ids (torch.LongTensor of shape (batch_size, input_ids_length), optional): Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional): Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional): Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional): Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix provides. If past_key_values is used, optionally only the last inputs_embeds need to be passed (see past_key_values).

- use_cache (bool, optional): If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional): Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional): Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional): Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size, sequence_length), optional): Labels for computing the token classification loss. Indices should be in [0, ..., config.num_labels - 1]; a cross-entropy classification loss is computed over the token positions.
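The past_key_values, attention_mask and position_ids descriptions above combine into simple bookkeeping for one cached decoding step. A minimal sketch, assuming a single unpadded sequence and a 1024-position limit (the GPT2Config default for n_positions); the helper name is hypothetical, and it only computes the plain-list values you would turn into tensors.

```python
from typing import List, Tuple

GPT2_DEFAULT_N_POSITIONS = 1024  # GPT2Config default for n_positions


def cached_step_inputs(
    past_length: int, num_new_tokens: int, max_positions: int = GPT2_DEFAULT_N_POSITIONS
) -> Tuple[List[int], List[int]]:
    """Mask and position ids for one cached decoding step (hypothetical helper).

    past_length: tokens already stored in past_key_values,
        i.e. past_key_values[0][0].shape[-2].
    num_new_tokens: uncached tokens passed as input_ids in this step.
    Assumes a single sequence with no padding.
    """
    total = past_length + num_new_tokens
    if total > max_positions:
        # position_ids must stay in [0, max_positions - 1]
        raise ValueError(f"{total} tokens exceed {max_positions} positions")
    # The mask covers cached and new tokens, not only the new input_ids.
    attention_mask = [1] * total
    # New tokens continue numbering where the cache stopped.
    position_ids = list(range(past_length, total))
    return attention_mask, position_ids
```

For example, after a 5-token prompt has been cached, a step that feeds one new token needs a 6-entry mask and position id 5.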

Returns

transformers.modeling_outputs.TokenClassifierOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.TokenClassifierOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (GPT2Config) and inputs.
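Because the tuple form has no field names, positions matter. The sketch below assumes (from our reading of the GPT2ForTokenClassification source, not from this page) that the loss is prepended only when labels are given, so logits move from index 0 to index 1. The helper name is hypothetical.

```python
from typing import Any, Optional, Sequence, Tuple


def as_output_tuple(
    loss: Optional[Any], logits: Any, extras: Sequence[Any] = ()
) -> Tuple[Any, ...]:
    """Hypothetical sketch of the return_dict=False layout.

    extras stands for optional entries such as hidden_states or attentions,
    present only when requested.
    """
    output = (logits,) + tuple(extras)
    # The loss slot exists only when labels were provided.
    return ((loss,) + output) if loss is not None else output
```

Reading fields by name on the returned TokenClassifierOutput (for example outputs.logits) sidesteps this index shift.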

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided): Classification loss.

- logits (torch.FloatTensor of shape (batch_size, sequence_length, config.num_labels)): Classification scores (before SoftMax).

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True): Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True): Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.
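Since the logits here are per token, and GPT-2's byte-level BPE can split one word into several tokens, a common post-processing step maps predictions back to words. The sketch keeps the first sub-token's label for each word, which is one strategy among several. It assumes a word_ids list like the one returned by BatchEncoding.word_ids() on a fast tokenizer; the function name is hypothetical.

```python
from typing import List, Optional, Sequence


def word_level_labels(
    token_labels: Sequence[str], word_ids: Sequence[Optional[int]]
) -> List[str]:
    """Collapse per-token predictions to one label per word (hypothetical helper).

    word_ids maps each token to the index of its source word, or None for
    special tokens.
    """
    labels: List[str] = []
    previous: Optional[int] = None
    for label, word_id in zip(token_labels, word_ids):
        if word_id is None or word_id == previous:
            continue  # skip special tokens and non-initial sub-tokens
        labels.append(label)
        previous = word_id
    return labels
```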

The GPT2ForTokenClassification forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Example:

>>> from transformers import AutoTokenizer, GPT2ForTokenClassification
>>> import torch

>>> tokenizer = AutoTokenizer.from_pretrained("brad1141/gpt2-finetuned-comp2")
>>> model = GPT2ForTokenClassification.from_pretrained("brad1141/gpt2-finetuned-comp2")

>>> inputs = tokenizer(
...     "HuggingFace is a company based in Paris and New York", add_special_tokens=False, return_tensors="pt"
... )

>>> with torch.no_grad():
...     logits = model(**inputs).logits

>>> predicted_token_class_ids = logits.argmax(-1)

>>> # Note that tokens are classified rather than input words, which means that
>>> # there might be more predicted token classes than words.
>>> # Multiple token classes might account for the same word.
>>> predicted_tokens_classes = [model.config.id2label[t.item()] for t in predicted_token_class_ids[0]]
>>> predicted_tokens_classes
['Lead', 'Lead', 'Lead', 'Position', 'Lead', 'Lead', 'Lead', 'Lead', 'Lead', 'Lead', 'Lead', 'Lead']

>>> labels = predicted_token_class_ids
>>> loss = model(**inputs, labels=labels).loss
>>> round(loss.item(), 2)
0.25

TFGPT2Model

class transformers.TFGPT2Model

( config, *inputs, **kwargs )

Parameters

- config (GPT2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare GPT2 Model transformer outputting raw hidden-states without any specific head on top.

This model inherits from TFPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a keras.Model subclass. Use it as a regular TF 2.0 Keras Model and refer to the TF 2.0 documentation for all matters related to general usage and behavior.

TensorFlow models and layers in transformers accept two formats as input:

- having all inputs as keyword arguments (like PyTorch models), or

- having all inputs as a list, tuple or dict in the first positional argument.

The reason the second format is supported is that Keras methods prefer this format when passing inputs to models and layers. Because of this support, when using methods like model.fit() things should “just work” for you: just pass your inputs and labels in any format that model.fit() supports! If, however, you want to use the second format outside of Keras methods like fit() and predict(), such as when creating your own layers or models with the Keras Functional API, there are three possibilities you can use to gather all the input Tensors in the first positional argument:

- a single Tensor with input_ids only and nothing else: model(input_ids)

- a list of varying length with one or several input Tensors IN THE ORDER given in the docstring: model([input_ids, attention_mask]) or model([input_ids, attention_mask, token_type_ids])

- a dictionary with one or several input Tensors associated with the input names given in the docstring: model({"input_ids": input_ids, "token_type_ids": token_type_ids})

Note that when creating models and layers with subclassing, you don’t need to worry about any of this, as you can just pass inputs like you would to any other Python function!
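The three first-argument formats listed above can be summarized by a small normalizing function. This is a hypothetical helper written for illustration, not the library's internal unpacking. ordered_names stands in for the argument order of the relevant call() signature, which you should check before relying on positional lists; the names used in the test are examples only.

```python
from typing import Any, Dict, Sequence


def gather_inputs(first_arg: Any, ordered_names: Sequence[str]) -> Dict[str, Any]:
    """Normalize the three first-argument formats into a name -> value dict."""
    if isinstance(first_arg, dict):
        # Dictionary: names are given explicitly.
        return dict(first_arg)
    if isinstance(first_arg, (list, tuple)):
        if len(first_arg) > len(ordered_names):
            raise ValueError("more inputs than documented arguments")
        # List or tuple: the i-th tensor is bound to the i-th documented name.
        return dict(zip(ordered_names, first_arg))
    # Anything else is treated as a single input_ids tensor.
    return {"input_ids": first_arg}
```

Strings stand in for tensors in the checks, since only the dispatch logic is being illustrated.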

call